Deep Learning: Classifying the CIFAR-10 Dataset with ResNet-18, Explained

Introduction:

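First, some background. ResNet was proposed by Kaiming He et al. in 2015 and caused an immediate stir. In a conventional convolutional neural network, making the network deeper leads to problems such as vanishing or exploding gradients. Beyond a certain depth, accuracy drops instead of improving. Even training accuracy gets worse, which the ResNet paper calls the degradation problem.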

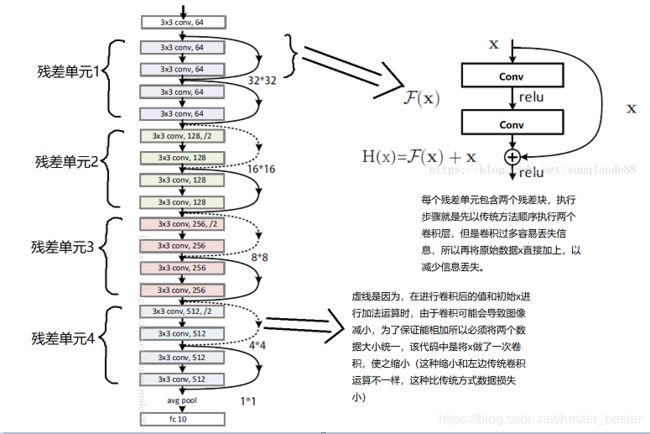

Understanding the structure:

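The short path in a residual block is called the shortcut connection. The desired output of a single residual block is H(x). It is obtained by adding the output F(x) of the ordinary convolutional layers to the original input x carried along the shortcut: H(x) = F(x) + x. The convolutional layers therefore only have to learn the residual F(x) = H(x) - x. If the ideal mapping is close to the identity, they can simply push F(x) toward zero.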
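The same idea in equation form follows, using the notation of the paragraph above. $W_s$ stands for the projection on the shortcut that is used when the shapes differ; in the code below, this is a 1×1 convolution followed by BatchNorm. The gradient of the loss $L$ with respect to the block input picks up an identity term:

$$
H(x) = F(x) + x \qquad \text{(identity shortcut)}
$$

$$
H(x) = F(x) + W_s x \qquad \text{(projection shortcut, used when stride} \neq 1 \text{ or the channel counts differ)}
$$

$$
\frac{\partial L}{\partial x}
= \frac{\partial L}{\partial H}\left(\frac{\partial F}{\partial x} + I\right)
= \frac{\partial L}{\partial H}\frac{\partial F}{\partial x} + \frac{\partial L}{\partial H}
\qquad \text{(identity shortcut)}
$$

The second term passes the upstream gradient straight through the shortcut. This is one way to see why a deep stack of residual blocks is easier to train than a deep stack of plain layers. The block in the code below applies a ReLU after the addition, so this identity path describes $H(x)$ before that final ReLU.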

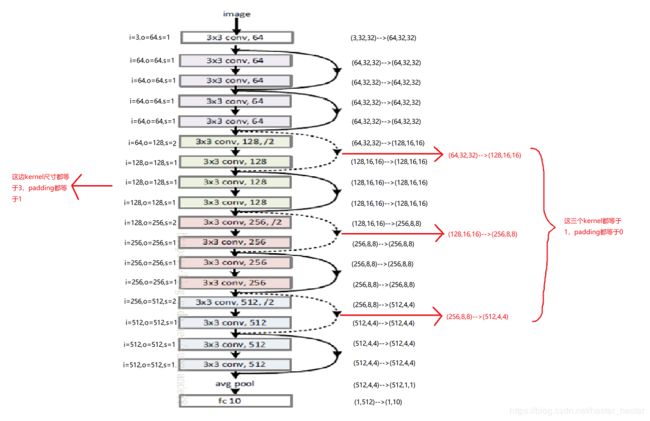

Feature map transformations:

To show more directly how the feature map size changes as data flows through the network, the shapes are listed in detail here.
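The shapes can also be recomputed from the layer settings in the resnet.py code below. Here is a minimal sketch in plain Python (no PyTorch needed). It assumes 32×32 CIFAR-10 inputs. `conv_out` and `trace_resnet18_shapes` are hypothetical helper names, not part of the original code.

```python
# Minimal sketch: trace feature-map shapes through the CIFAR-10 ResNet-18
# with the standard conv output-size formula (pure Python, no PyTorch).

def conv_out(size, kernel, stride, padding):
    """Output size of a conv/pool layer: floor((size + 2*padding - kernel) / stride) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_resnet18_shapes(h=32, w=32, num_classes=10):
    """Return (stage name, shape without the batch dimension) pairs."""
    shapes = [("input", (3, h, w))]
    # conv1: 3x3, stride 1, padding 1 keeps the spatial size; 3 -> 64 channels
    h, w = conv_out(h, 3, 1, 1), conv_out(w, 3, 1, 1)
    shapes.append(("conv1", (64, h, w)))
    # Four stages of 2 residual blocks; only the first block of a stage may use stride 2
    for name, channels, stride in [("layer1", 64, 1), ("layer2", 128, 2),
                                   ("layer3", 256, 2), ("layer4", 512, 2)]:
        for s in [stride] + [1] * (2 - 1):  # same stride list that make_layer builds
            h, w = conv_out(h, 3, s, 1), conv_out(w, 3, s, 1)  # first 3x3 conv of the block
            h, w = conv_out(h, 3, 1, 1), conv_out(w, 3, 1, 1)  # second 3x3 conv, stride 1
        shapes.append((name, (channels, h, w)))
    # F.avg_pool2d(out, 4): kernel 4, stride defaults to the kernel size
    h, w = conv_out(h, 4, 4, 0), conv_out(w, 4, 4, 0)
    shapes.append(("avg_pool2d", (512, h, w)))
    shapes.append(("view", (512 * h * w,)))  # flatten before the fully connected layer
    shapes.append(("fc", (num_classes,)))
    return shapes


if __name__ == "__main__":
    for name, shape in trace_resnet18_shapes():
        print(f"{name:>10}: {shape}")
```

Run as a script, it prints one stage per line. The shapes go from `(3, 32, 32)` at the input, through `(512, 4, 4)` after layer4, to `(10,)` after the fully connected layer. The batch dimension N is omitted because no layer changes it.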

Code implementation:

resnet.py:

```python
'''ResNet-18 image classification for CIFAR-10 with PyTorch

Author 'Sun-qian'.
'''
import torch
import torch.nn as nn
import torch.nn.functional as F


class ResidualBlock(nn.Module):  # inherits from nn.Module
    """A single residual block."""

    def __init__(self, inchannel, outchannel, stride=1):
        # Learnable layers must be defined in __init__()
        super(ResidualBlock, self).__init__()  # call nn.Module's constructor
        # "left" branch: the ordinary convolutions executed in sequence inside the block, i.e. F(x)
        self.left = nn.Sequential(
            nn.Conv2d(inchannel, outchannel, kernel_size=3, stride=stride, padding=1, bias=False),
            nn.BatchNorm2d(outchannel),  # standard in CNNs; stabilizes and speeds up training
            nn.ReLU(inplace=True),  # inplace=True writes the output over the input to save memory
            nn.Conv2d(outchannel, outchannel, kernel_size=3, stride=1, padding=1, bias=False),
            nn.BatchNorm2d(outchannel)
        )
        # Identity shortcut by default (an empty Sequential returns its input unchanged)
        self.shortcut = nn.Sequential()
        # F(x) has the same shape as x only when stride == 1 and the channel counts match;
        # otherwise x is projected with a 1x1 conv so the two can be added
        if stride != 1 or inchannel != outchannel:
            self.shortcut = nn.Sequential(
                nn.Conv2d(inchannel, outchannel, kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(outchannel)
            )

    def forward(self, x):  # forward pass
        out = self.left(x)  # first run the ordinary convolutional branch F(x)
        out += self.shortcut(x)  # then add the (possibly projected) input x
        out = F.relu(out)
        return out


class ResNet(nn.Module):
    """The whole network: a stem convolution followed by several residual blocks."""

    def __init__(self, ResidualBlock, num_classes=10):
        super(ResNet, self).__init__()
        self.inchannel = 64
        self.conv1 = nn.Sequential(
            nn.Conv2d(3, 64, kernel_size=3, stride=1, padding=1, bias=False),
            nn.BatchNorm2d(64),  # argument = number of output channels of the conv
            nn.ReLU(),
        )
        # Four residual stages, each containing 2 residual blocks
        self.layer1 = self.make_layer(ResidualBlock, 64, 2, stride=1)
        self.layer2 = self.make_layer(ResidualBlock, 128, 2, stride=2)
        self.layer3 = self.make_layer(ResidualBlock, 256, 2, stride=2)
        self.layer4 = self.make_layer(ResidualBlock, 512, 2, stride=2)
        self.fc = nn.Linear(512, num_classes)  # fully connected layer: (N, 512) --> (N, 10)

    def make_layer(self, block, channels, num_blocks, stride):
        # Strides of all blocks in this stage: the first block uses the stride passed in,
        # the remaining num_blocks - 1 blocks use 1. So layer1 gets [1, 1] and
        # layer2 to layer4 get [2, 1]
        strides = [stride] + [1] * (num_blocks - 1)
        layers = []
        for stride in strides:  # build one residual block per stride
            layers.append(block(self.inchannel, channels, stride))  # collect the block modules in a list
            self.inchannel = channels  # the next block takes this block's output channels as input
        return nn.Sequential(*layers)  # * unpacks the list into separate arguments

    def forward(self, x):
        out = self.conv1(x)
        out = self.layer1(out)
        out = self.layer2(out)
        out = self.layer3(out)
        out = self.layer4(out)
        out = F.avg_pool2d(out, 4)  # average each 4x4 map, giving (N, 512, 1, 1); assumes 32x32 input
        out = out.view(out.size(0), -1)  # flatten to (N, 512)
        out = self.fc(out)
        return out


def ResNet18():
    return ResNet(ResidualBlock)
```
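As a cross-check on the architecture, the number of trainable parameters can be counted by hand from the same layer list. Here is a minimal sketch in plain Python. It assumes the exact layer configuration of resnet.py above. The helper names are hypothetical and not part of the original code.

```python
# Minimal sketch: count the trainable parameters of the CIFAR-10 ResNet-18
# by mirroring its layer list (pure Python, no PyTorch required).

def conv_params(in_ch, out_ch, k):
    return in_ch * out_ch * k * k  # bias=False, so weights only


def bn_params(ch):
    return 2 * ch  # affine weight and bias; running mean/var are buffers, not parameters


def block_params(in_ch, out_ch, stride):
    n = conv_params(in_ch, out_ch, 3) + bn_params(out_ch)   # first 3x3 conv + BN
    n += conv_params(out_ch, out_ch, 3) + bn_params(out_ch)  # second 3x3 conv + BN
    if stride != 1 or in_ch != out_ch:  # projection shortcut: 1x1 conv + BN
        n += conv_params(in_ch, out_ch, 1) + bn_params(out_ch)
    return n


def resnet18_params(num_classes=10):
    total = conv_params(3, 64, 3) + bn_params(64)  # conv1 stem
    in_ch = 64
    for channels, stride in [(64, 1), (128, 2), (256, 2), (512, 2)]:
        for s in [stride] + [1] * (2 - 1):  # same stride list as make_layer
            total += block_params(in_ch, channels, s)
            in_ch = channels
    total += 512 * num_classes + num_classes  # fc weight + bias
    return total


if __name__ == "__main__":
    print(resnet18_params())  # 11173962
```

With PyTorch installed, `sum(p.numel() for p in ResNet18().parameters())` should report the same 11,173,962. About 8.4 million of these parameters sit in layer4, where most 3×3 convolutions map 512 channels to 512 channels.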

CIFAR_Test.py:

```python
import os
import argparse

import torch
import torch.nn as nn
import torch.optim as optim
import torchvision
import torchvision.transforms as transforms

from resnet import ResNet18

# Use the GPU if one is available
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Command-line arguments, so options can be passed in the usual Linux command-line style
parser = argparse.ArgumentParser(description='PyTorch CIFAR10 Training')
parser.add_argument('--outf', default='./model/', help='folder to output images and model checkpoints')  # where results are saved
parser.add_argument('--net', default='./model/Resnet18.pth', help="path to net (to continue training)")  # checkpoint for resuming; not loaded by this script
args = parser.parse_args()

# Hyperparameters
EPOCH = 135       # number of passes over the training set
pre_epoch = 0     # number of epochs already completed (starting epoch)
BATCH_SIZE = 128  # mini-batch size
LR = 0.1          # learning rate

# Prepare the datasets and preprocessing
transform_train = transforms.Compose([
    transforms.RandomCrop(32, padding=4),  # zero-pad 4 pixels on every side, then randomly crop back to 32x32
    transforms.RandomHorizontalFlip(),     # flip horizontally with probability 0.5
    transforms.ToTensor(),
    transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010)),  # per-channel (R, G, B) mean and std
])

transform_test = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010)),
])

# download=True fetches CIFAR-10 into ./data on the first run and reuses the local copy afterwards
trainset = torchvision.datasets.CIFAR10(root='./data', train=True, download=True, transform=transform_train)  # training set
# Yields shuffled mini-batches for training
trainloader = torch.utils.data.DataLoader(trainset, batch_size=BATCH_SIZE, shuffle=True, num_workers=2)
testset = torchvision.datasets.CIFAR10(root='./data', train=False, download=True, transform=transform_test)
testloader = torch.utils.data.DataLoader(testset, batch_size=100, shuffle=False, num_workers=2)

# CIFAR-10 class labels
classes = ('plane', 'car', 'bird', 'cat', 'deer', 'dog', 'frog', 'horse', 'ship', 'truck')

# Model: ResNet-18
net = ResNet18().to(device)

# Loss function and optimizer
criterion = nn.CrossEntropyLoss()  # cross-entropy, the usual loss for multi-class classification
# Mini-batch SGD with momentum, plus L2 regularization (weight decay)
optimizer = optim.SGD(net.parameters(), lr=LR, momentum=0.9, weight_decay=5e-4)

# Training
if __name__ == "__main__":
    os.makedirs(args.outf, exist_ok=True)  # make sure the checkpoint folder exists
    best_acc = 85  # initial best test accuracy (%); best_acc.txt is written only once this is exceeded
    print("Start Training, Resnet-18!")
    length = len(trainloader)  # number of batches per epoch
    with open("acc.txt", "w") as f:
        with open("log.txt", "w") as f2:
            for epoch in range(pre_epoch, EPOCH):
                print('\nEpoch: %d' % (epoch + 1))
                net.train()  # training mode: BatchNorm uses batch statistics (Dropout, if any, is active)
                sum_loss = 0.0
                correct = 0
                total = 0
                for i, data in enumerate(trainloader, 0):
                    # Prepare the data
                    inputs, labels = data
                    inputs, labels = inputs.to(device), labels.to(device)
                    optimizer.zero_grad()

                    # forward + backward
                    outputs = net(inputs)
                    loss = criterion(outputs, labels)
                    loss.backward()
                    optimizer.step()

                    # Print the loss and accuracy after every batch
                    sum_loss += loss.item()  # running total; sum_loss / (i + 1) is the average loss so far
                    _, predicted = torch.max(outputs.data, 1)  # predicted class of each image in the batch of 128
                    total += labels.size(0)
                    correct += predicted.eq(labels).sum().item()  # correct predictions in this batch, accumulated
                    print('[epoch:%d, iter:%d] Loss: %.03f | Acc: %.3f%% '
                          % (epoch + 1, (i + 1 + epoch * length), sum_loss / (i + 1), 100. * correct / total))
                    f2.write('%03d %05d |Loss: %.03f | Acc: %.3f%% '
                             % (epoch + 1, (i + 1 + epoch * length), sum_loss / (i + 1), 100. * correct / total))
                    f2.write('\n')
                    f2.flush()

                # Measure test accuracy after every epoch
                print("Waiting Test!")
                net.eval()  # evaluation mode: BatchNorm uses the running mean/variance collected during training
                with torch.no_grad():  # no gradients needed, since there is no backward pass
                    correct = 0
                    total = 0
                    for data in testloader:
                        images, labels = data
                        images, labels = images.to(device), labels.to(device)
                        outputs = net(images)
                        # Take the highest-scoring class (its index in outputs.data)
                        _, predicted = torch.max(outputs.data, 1)
                        total += labels.size(0)
                        correct += (predicted == labels).sum().item()
                    acc = 100. * correct / total
                    print('Test accuracy: %.3f%%' % acc)

                    print('Saving model......')
                    torch.save(net.state_dict(), os.path.join(args.outf, 'net_%03d.pth' % (epoch + 1)))
                    # Write each epoch's test result to acc.txt as it happens
                    f.write("EPOCH=%03d,Accuracy= %.3f%%" % (epoch + 1, acc))
                    f.write('\n')
                    f.flush()
                    # Record the best test accuracy in best_acc.txt
                    if acc > best_acc:
                        with open("best_acc.txt", "w") as f3:
                            f3.write("EPOCH=%d,best_acc= %.3f%%" % (epoch + 1, acc))
                        best_acc = acc
    print("Training Finished, TotalEPOCH=%d" % EPOCH)
```
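The `Normalize` constants used above are the per-channel means and standard deviations of the CIFAR-10 training images. Here is a minimal sketch for recomputing such values with NumPy. It assumes a uint8 image array of shape (N, H, W, C). `channel_mean_std` is a hypothetical helper name, not part of the original code.

```python
# Minimal sketch: per-channel mean and std for transforms.Normalize,
# computed from a uint8 image array of shape (N, H, W, C).
import numpy as np


def channel_mean_std(images):
    means, stds = [], []
    for c in range(images.shape[-1]):  # one channel at a time to limit memory use
        x = images[..., c].astype(np.float64) / 255.0  # same [0, 1] scale that ToTensor() produces
        means.append(round(float(x.mean()), 4))
        stds.append(round(float(x.std()), 4))  # population std over all pixels of this channel
    return tuple(means), tuple(stds)
```

With torchvision, `trainset.data` holds the raw training images as a uint8 NumPy array of shape (50000, 32, 32, 3). Calling `channel_mean_std(trainset.data)` therefore returns values that can be passed to `transforms.Normalize`. The standard deviations computed this way over all pixels may not exactly match the widely copied (0.2023, 0.1994, 0.2010) in the script, so check them before reusing them.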

Results:

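The run results have been removed. I only found out yesterday that the code I had modified was actually wrong, so I replaced my modified version with the original, unmodified code. A classmate running it on a server got roughly 91% accuracy.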