Learning the Residual Neural Network (ResNet): A Code Walkthrough Using ResNet18

1. First, import the required packages

    import torch
    import torch.nn as nn
    import torch.nn.functional as F

I am using PyTorch, so these three imports are all that is needed.

2. Defining the residual block

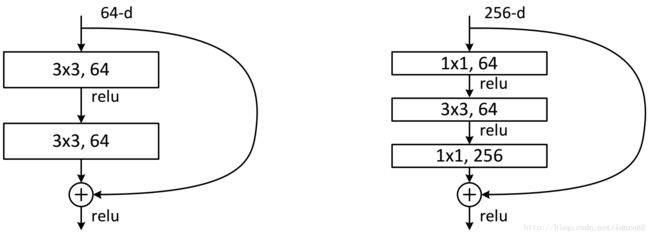

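There are two residual block designs. The one on the left is used in the 18- and 34-layer networks and has more parameters. The one on the right (the bottleneck design) has fewer parameters and is suited to deeper networks.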
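To make the "more parameters versus fewer parameters" point concrete, here is a rough count of convolution weights for the two designs. It is only a sketch under my own assumptions: both blocks take and return 256 channels, the bottleneck squeezes to 64 channels in the usual 1x1 -> 3x3 -> 1x1 pattern, and BatchNorm and bias terms are ignored. The helper name `conv_weights` is made up for this illustration.

```python
def conv_weights(c_in, c_out, k):
    """Number of weights in a bias-free k x k convolution."""
    return c_in * c_out * k * k

# Left design (basic block): two 3x3 convolutions at full width.
basic = 2 * conv_weights(256, 256, 3)

# Right design (bottleneck, assumed layout): 1x1 reduce, 3x3, 1x1 expand.
bottleneck = (
    conv_weights(256, 64, 1)
    + conv_weights(64, 64, 3)
    + conv_weights(64, 256, 1)
)

print("basic block weights:     ", basic)
print("bottleneck block weights:", bottleneck)
```

At the same input and output width, the bottleneck needs far fewer weights, which is why it is the one used when the network gets much deeper.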

    class ResidualBlock(nn.Module):
        def __init__(self, inchannel, outchannel, stride):
            super(ResidualBlock, self).__init__()
            # Main path: two 3x3 convolutions, each followed by batch norm.
            self.left = nn.Sequential(
                nn.Conv2d(inchannel, outchannel, kernel_size=3, stride=stride, padding=1, bias=False),
                nn.BatchNorm2d(outchannel),
                nn.ReLU(inplace=True),
                nn.Conv2d(outchannel, outchannel, kernel_size=3, stride=1, padding=1, bias=False),
                nn.BatchNorm2d(outchannel)
            )
            # Shortcut path: identity by default.
            self.shortcut = nn.Sequential()
            # If the main path changes the spatial size or the channel count,
            # project the input with a 1x1 convolution so the shapes match.
            if stride != 1 or inchannel != outchannel:
                self.shortcut = nn.Sequential(
                    nn.Conv2d(inchannel, outchannel, kernel_size=1, stride=stride, bias=False),
                    nn.BatchNorm2d(outchannel)
                )

        def forward(self, x):
            out = self.left(x)
            out += self.shortcut(x)  # the residual addition
            out = F.relu(out)
            return out

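This code has three parts:

(1) self.left defines the main path with its two convolution layers, in the order Conv2d -> BatchNorm2d -> ReLU -> Conv2d -> BatchNorm2d.

(2) self.shortcut has two cases. If the block changes the shape of its input, that is, if the stride is not 1 or the input and output channel counts differ (in this ResNet18, the first residual block of layer2, layer3 and layer4), the output of the previous block is projected with a 1x1 convolution that uses the same stride (2 here), so that it matches the shape of the self.left output. Otherwise the output of the previous block is passed through unchanged.

(3) forward adds the outputs of the two paths and applies ReLU. That completes the residual block, and this addition is the essence of residual learning.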
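The two shortcut cases are easiest to see by pushing a tensor through the block and looking at the shapes. The sketch below repeats the block from this article so that it runs on its own (the forward pass is written as a single expression, which should be equivalent). The input size of 1x64x32x32 is just an assumption for illustration.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class ResidualBlock(nn.Module):
    """Same basic block as in the article, repeated so the sketch runs alone."""

    def __init__(self, inchannel, outchannel, stride):
        super().__init__()
        self.left = nn.Sequential(
            nn.Conv2d(inchannel, outchannel, kernel_size=3, stride=stride, padding=1, bias=False),
            nn.BatchNorm2d(outchannel),
            nn.ReLU(inplace=True),
            nn.Conv2d(outchannel, outchannel, kernel_size=3, stride=1, padding=1, bias=False),
            nn.BatchNorm2d(outchannel),
        )
        self.shortcut = nn.Sequential()
        if stride != 1 or inchannel != outchannel:
            self.shortcut = nn.Sequential(
                nn.Conv2d(inchannel, outchannel, kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(outchannel),
            )

    def forward(self, x):
        return F.relu(self.left(x) + self.shortcut(x))


x = torch.randn(1, 64, 32, 32)  # assumed example input

same = ResidualBlock(64, 64, stride=1)    # shape preserved: identity shortcut
down = ResidualBlock(64, 128, stride=2)   # shape changes: 1x1 projection shortcut

with torch.no_grad():
    same_shape = tuple(same(x).shape)
    down_shape = tuple(down(x).shape)

print(same_shape, "shortcut modules:", len(same.shortcut))
print(down_shape, "shortcut modules:", len(down.shortcut))
```

The first block keeps an empty `nn.Sequential()` as its shortcut, while the second one gets the 1x1 convolution plus batch norm, because otherwise the addition in forward would fail on mismatched shapes.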

3. Implementing the main body of ResNet

class ResNet(nn.Module):

def __init__(self, ResidualBlock, num_classes=10):

super(ResNet, self).__init__()

self.inchannel = 64

self.conv1 = nn.Sequential(

nn.Conv2d(3, 64, kernel_size=3, stride=1, padding=1, bias=False),

nn.BatchNorm2d(64),

nn.ReLU(),

)

self.layer1 = self.make_layer(ResidualBlock, 64, 2, stride=1)

self.layer2 = self.make_layer(ResidualBlock, 128, 2, stride=2)

self.layer3 = self.make_layer(ResidualBlock, 256, 2, stride=2)

self.layer4 = self.make_layer(ResidualBlock, 512, 2, stride=2)

self.fc = nn.Linear(512, num_classes)

def make_layer(self, block, channels, num_blocks, stride):

strides = [stride] + [1] * (num_blocks - 1) #strides=[1,1]

print(strides)

layers = []

for stride in strides:

layers.append(block(self.inchannel, channels, stride))

self.inchannel = channels

return nn.Sequential(*layers)

def forward(self, x):

out = self.conv1(x)

out = self.layer1(out)

out = self.layer2(out)

out = self.layer3(out)

out = self.layer4(out)

out = F.avg_pool2d(out,4)

out = out.view(out.size(0), -1)

out = self.fc(out)

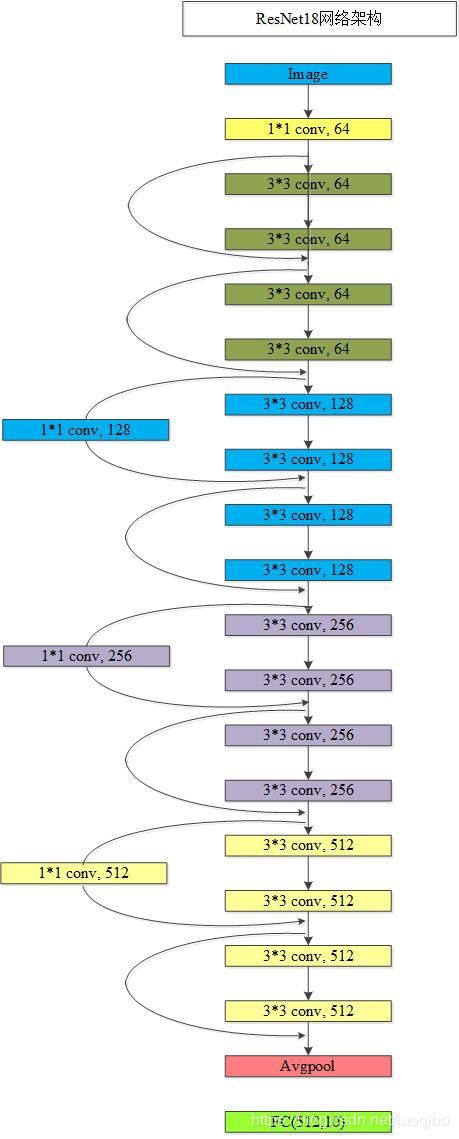

return out这段代码也分为4个部分,

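(1) self.conv1 first applies one convolution to the input image.

(2) self.layer1 to self.layer4 define four large layers, each containing two residual blocks. This is ResNet18, so every layer uses 2 blocks. Together with conv1 above, these can be viewed as five stages.

(3) make_layer first builds the list of strides, because only the first convolution of the first residual block in each layer uses the layer's stride and every later block uses stride 1. It then chains all the residual blocks in layers together in order.

(4) forward connects the layers one after another, then applies average pooling, flattens the result and passes it to the fully connected layer.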
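The stride logic in make_layer and the resulting feature-map sizes can be checked with plain arithmetic, without building the network. This is a small sketch assuming a 32x32 input; `make_strides` and `conv_out_size` are helper names I made up here, and the size formula is the standard one for a convolution or pooling window.

```python
def make_strides(stride, num_blocks):
    """Same rule as make_layer: only the first block uses the layer's stride."""
    return [stride] + [1] * (num_blocks - 1)


def conv_out_size(size, kernel, stride, padding):
    """Output side length of a convolution or pooling window."""
    return (size + 2 * padding - kernel) // stride + 1


size = conv_out_size(32, kernel=3, stride=1, padding=1)  # conv1 keeps 32x32
trace = [("conv1", 64, size)]

for name, channels, stride in [
    ("layer1", 64, 1),
    ("layer2", 128, 2),
    ("layer3", 256, 2),
    ("layer4", 512, 2),
]:
    for s in make_strides(stride, 2):
        # Only the first 3x3 convolution of a block can change the size;
        # the second one has stride 1 and padding 1.
        size = conv_out_size(size, kernel=3, stride=s, padding=1)
    trace.append((name, channels, size))

pooled = conv_out_size(size, kernel=4, stride=4, padding=0)  # F.avg_pool2d(out, 4)
features = 512 * pooled * pooled

# One stem convolution, 4 layers x 2 blocks x 2 convolutions, one linear layer.
weighted_layers = 1 + 4 * 2 * 2 + 1

for name, channels, side in trace:
    print(f"{name}: {channels} channels, {side}x{side}")
print("features into fc:", features)
print("weighted layers:", weighted_layers)
```

This also shows why the fully connected layer takes 512 inputs and where the "18" in the name comes from: the 1x1 shortcut convolutions are not counted.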

4. Defining the ResNet18 network and inspecting the model

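    def ResNet18():
        return ResNet(ResidualBlock)

    if __name__ == '__main__':
        model = ResNet18()
        print(model)
        # One random CIFAR-sized image; named x to avoid shadowing the built-in input().
        x = torch.randn(1, 3, 32, 32)
        out = model(x)
        print(out.shape)

When analyzing a residual network, printing the model makes it easier to understand.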
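Besides printing the model, it can help to tally the learnable parameters by hand and see where they sit. The sketch below does this in plain Python for the architecture described in this article (3x3 stem, four layers of two basic blocks, 10 classes). The helper names are my own, and the count assumes bias-free convolutions and two learnable values per BatchNorm channel, as in the code above.

```python
def conv_params(c_in, c_out, k):
    """Weights of a bias-free k x k convolution."""
    return c_in * c_out * k * k


def bn_params(c):
    """BatchNorm2d learns one scale and one shift per channel."""
    return 2 * c


def block_params(c_in, c_out, stride):
    """Parameters of one basic residual block, including its shortcut."""
    total = conv_params(c_in, c_out, 3) + bn_params(c_out)
    total += conv_params(c_out, c_out, 3) + bn_params(c_out)
    if stride != 1 or c_in != c_out:
        # 1x1 projection shortcut plus its batch norm
        total += conv_params(c_in, c_out, 1) + bn_params(c_out)
    return total


def resnet18_params(num_classes=10):
    total = conv_params(3, 64, 3) + bn_params(64)  # stem
    c_in = 64
    for c_out, stride in [(64, 1), (128, 2), (256, 2), (512, 2)]:
        for s in [stride, 1]:  # two blocks per layer
            total += block_params(c_in, c_out, s)
            c_in = c_out
    total += 512 * num_classes + num_classes  # fully connected layer
    return total


total_params = resnet18_params()
print("learnable parameters:", total_params)
```

If PyTorch is available, the same number should come out of `sum(p.numel() for p in model.parameters())` on the model built above. Most of the parameters sit in layer4, where the channel count is largest.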

Below is a diagram of the ResNet18 architecture that I drew. I ran it on CIFAR-10, so the input is 32x32 and the output has 10 classes.