C++ Yolov4 Object Detection in Practice

Introduction

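In February this year, Joseph Redmon, the creator of Yolo, announced that he was leaving computer vision research in protest against Yolo being used for military applications and privacy intrusion. Just when we thought the Yolo series had come to an end, Yolov4 burst onto the scene on April 24 with a new torchbearer: its first author is the well-known AlexeyAB.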

paper github

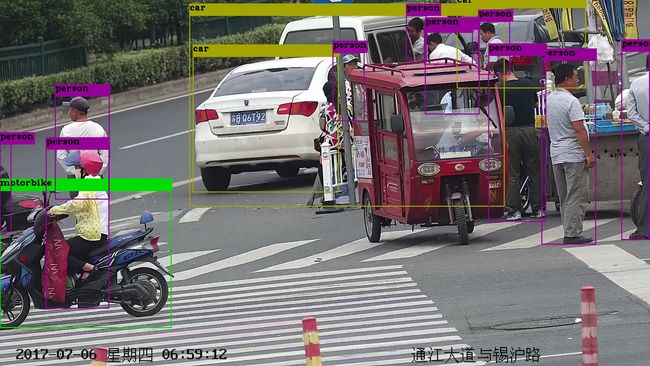

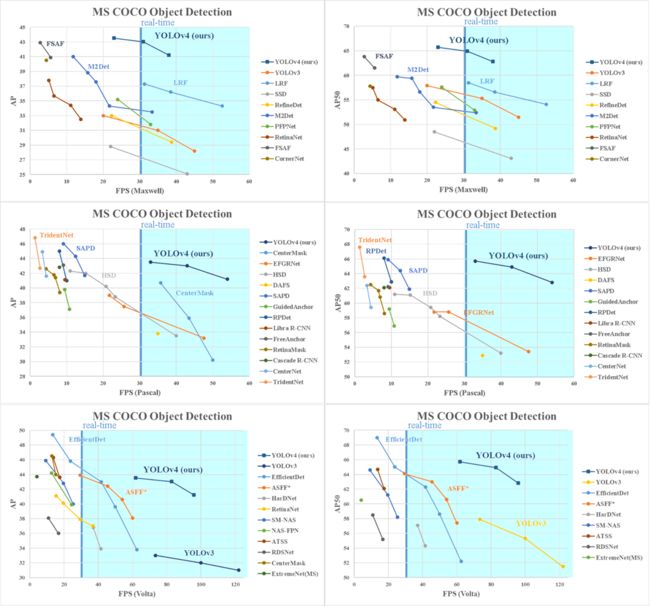

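In this article we will not dig into how Yolov4 works. Instead, we approach it from an engineering angle and simply use it. First, let's see how strong Yolov4 is: the figures show the performance of mainstream object detectors on different GPUs, and they make clear that Yolov4 is considerably stronger than its predecessor Yolov3.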

Official darknet

darknetAB

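How to use the official project is explained very clearly on AlexeyAB's GitHub page; it supports training Yolov4, running detection, and more. However, it is hard to build a customized application directly on top of the darknet project itself, so this article uses the C++ interface provided by the official project (yolo_v2_class.hpp plus the libdarknet.so shared library) for hands-on object detection.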

Yolov4 in Practice

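First, download yolov4.weights from GitHub. For readers who cannot reach sites outside China the download may be very slow, so I have uploaded the file to Baidu Netdisk for convenience: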

Link: https://pan.baidu.com/s/14U9pkxJE3MHYj7KCWHnkNw  Extraction code: 54v4
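Before running the detector, it can be worth checking that the downloaded yolov4.weights is a real darknet weights file rather than a truncated download or a saved error page. The sketch below reads only the file header. It assumes the layout that darknet's weight loader reads: three 32-bit integers (major, minor, revision) followed by a `seen` counter that is 64-bit when major * 10 + minor >= 2 and 32-bit otherwise, stored in the native byte order of the machine that wrote it (little-endian on x86). `weights_header` and `read_weights_header` are hypothetical names, not part of darknet or yolo_v2_class.hpp.

```cpp
#include <cstdint>
#include <fstream>
#include <string>

// Header fields at the start of a darknet .weights file (assumed layout, see above)
struct weights_header {
    std::int32_t major_version = 0, minor_version = 0, revision = 0;
    std::uint64_t seen = 0;  // number of training images the weights have seen
};

// Hypothetical helper: read the header of a darknet .weights file.
// Returns false if the file cannot be opened or is shorter than the header,
// which is what an interrupted download typically looks like.
bool read_weights_header(const std::string &path, weights_header &h) {
    std::ifstream in(path, std::ios::binary);
    if (!in) return false;
    in.read(reinterpret_cast<char *>(&h.major_version), sizeof(h.major_version));
    in.read(reinterpret_cast<char *>(&h.minor_version), sizeof(h.minor_version));
    in.read(reinterpret_cast<char *>(&h.revision), sizeof(h.revision));
    if (!in) return false;
    if (h.major_version * 10 + h.minor_version >= 2) {
        // newer files store "seen" as a 64-bit counter
        in.read(reinterpret_cast<char *>(&h.seen), sizeof(h.seen));
    } else {
        // older files store "seen" as a 32-bit counter
        std::uint32_t seen32 = 0;
        in.read(reinterpret_cast<char *>(&seen32), sizeof(seen32));
        h.seen = seen32;
    }
    return !in.fail();
}
```

For example, `weights_header h; if (read_weights_header("yolov4.weights", h)) std::cout << h.major_version << "." << h.minor_version << "." << h.revision;`. Small version numbers and a non-zero `seen` suggest a genuine weights file; the check says nothing about the layer data that follows the header.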

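The project consists of two files, main.cpp and yolo_v2_class.hpp. I have uploaded the whole project to GitHub, so you can simply git clone it; if you find it useful, please give it a star. Thanks!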

main.cpp

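#include <iostream>
#include <iomanip>
#include <sstream>
#include <string>
#include <vector>
#include <queue>
#include <fstream>
#include <memory>
#include <algorithm>
#include <chrono>
#include <thread>
#include <future>
#include <atomic>
#include <mutex>         // std::mutex, std::unique_lock
#include <cmath>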

// It makes sense only for video-Camera (not for video-File)

// To use - uncomment the following line. Optical-flow is supported only by OpenCV 3.x - 4.x

//#define TRACK_OPTFLOW

//#define GPU

// To use 3D-stereo camera ZED - uncomment the following line. ZED_SDK should be installed.

//#define ZED_STEREO

#include "yolo_v2_class.hpp" // imported functions from DLL

#ifdef OPENCV

#ifdef ZED_STEREO

#include <sl/Camera.hpp>

#if ZED_SDK_MAJOR_VERSION == 2

#define ZED_STEREO_2_COMPAT_MODE

#endif

#undef GPU // avoid conflict with sl::MEM::GPU

#ifdef ZED_STEREO_2_COMPAT_MODE

#pragma comment(lib, "sl_core64.lib")

#pragma comment(lib, "sl_input64.lib")

#endif

#pragma comment(lib, "sl_zed64.lib")

float getMedian(std::vector<float> &v) {

size_t n = v.size() / 2;

std::nth_element(v.begin(), v.begin() + n, v.end());

return v[n];

}

std::vector<bbox_t> get_3d_coordinates(std::vector<bbox_t> bbox_vect, cv::Mat xyzrgba)

{

bool valid_measure;

int i, j;

const unsigned int R_max_global = 10;

std::vector<bbox_t> bbox3d_vect;

for (auto &cur_box : bbox_vect) {

const unsigned int obj_size = std::min(cur_box.w, cur_box.h);

const unsigned int R_max = std::min(R_max_global, obj_size / 2);

int center_i = cur_box.x + cur_box.w * 0.5f, center_j = cur_box.y + cur_box.h * 0.5f;

std::vector<float> x_vect, y_vect, z_vect;

for (int R = 0; R < R_max; R++) {

for (int y = -R; y <= R; y++) {

for (int x = -R; x <= R; x++) {

i = center_i + x;

j = center_j + y;

sl::float4 out(NAN, NAN, NAN, NAN);

if (i >= 0 && i < xyzrgba.cols && j >= 0 && j < xyzrgba.rows) {

cv::Vec4f &elem = xyzrgba.at<cv::Vec4f>(j, i); // x,y,z,w

out.x = elem[0];

out.y = elem[1];

out.z = elem[2];

out.w = elem[3];

}

valid_measure = std::isfinite(out.z);

if (valid_measure)

{

x_vect.push_back(out.x);

y_vect.push_back(out.y);

z_vect.push_back(out.z);

}

}

}

}

if (x_vect.size() * y_vect.size() * z_vect.size() > 0)

{

cur_box.x_3d = getMedian(x_vect);

cur_box.y_3d = getMedian(y_vect);

cur_box.z_3d = getMedian(z_vect);

}

else {

cur_box.x_3d = NAN;

cur_box.y_3d = NAN;

cur_box.z_3d = NAN;

}

bbox3d_vect.emplace_back(cur_box);

}

return bbox3d_vect;

}

cv::Mat slMat2cvMat(sl::Mat &input) {

int cv_type = -1; // Mapping between MAT_TYPE and CV_TYPE

if(input.getDataType() ==

#ifdef ZED_STEREO_2_COMPAT_MODE

sl::MAT_TYPE_32F_C4

#else

sl::MAT_TYPE::F32_C4

#endif

) {

cv_type = CV_32FC4;

} else cv_type = CV_8UC4; // sl::Mat used are either RGBA images or XYZ (4C) point clouds

return cv::Mat(input.getHeight(), input.getWidth(), cv_type, input.getPtr(

#ifdef ZED_STEREO_2_COMPAT_MODE

sl::MEM::MEM_CPU

#else

sl::MEM::CPU

#endif

));

}

cv::Mat zed_capture_rgb(sl::Camera &zed) {

sl::Mat left;

zed.retrieveImage(left);

cv::Mat left_rgb;

cv::cvtColor(slMat2cvMat(left), left_rgb, CV_RGBA2RGB);

return left_rgb;

}

cv::Mat zed_capture_3d(sl::Camera &zed) {

sl::Mat cur_cloud;

zed.retrieveMeasure(cur_cloud,

#ifdef ZED_STEREO_2_COMPAT_MODE

sl::MEASURE_XYZ

#else

sl::MEASURE::XYZ

#endif

);

return slMat2cvMat(cur_cloud).clone();

}

static sl::Camera zed; // ZED-camera

#else // ZED_STEREO

std::vector<bbox_t> get_3d_coordinates(std::vector<bbox_t> bbox_vect, cv::Mat xyzrgba) {

return bbox_vect;

}

#endif // ZED_STEREO

#include <opencv2/opencv.hpp>            // C++

#include <opencv2/core/version.hpp>

#ifndef CV_VERSION_EPOCH // OpenCV 3.x and 4.x

#include <opencv2/videoio/videoio.hpp>

#define OPENCV_VERSION CVAUX_STR(CV_VERSION_MAJOR)"" CVAUX_STR(CV_VERSION_MINOR)"" CVAUX_STR(CV_VERSION_REVISION)

#ifndef USE_CMAKE_LIBS

#pragma comment(lib, "opencv_world" OPENCV_VERSION ".lib")

#ifdef TRACK_OPTFLOW

/*

#pragma comment(lib, "opencv_cudaoptflow" OPENCV_VERSION ".lib")

#pragma comment(lib, "opencv_cudaimgproc" OPENCV_VERSION ".lib")

#pragma comment(lib, "opencv_core" OPENCV_VERSION ".lib")

#pragma comment(lib, "opencv_imgproc" OPENCV_VERSION ".lib")

#pragma comment(lib, "opencv_highgui" OPENCV_VERSION ".lib")

*/

#endif // TRACK_OPTFLOW

#endif // USE_CMAKE_LIBS

#else // OpenCV 2.x

#define OPENCV_VERSION CVAUX_STR(CV_VERSION_EPOCH)"" CVAUX_STR(CV_VERSION_MAJOR)"" CVAUX_STR(CV_VERSION_MINOR)

#ifndef USE_CMAKE_LIBS

#pragma comment(lib, "opencv_core" OPENCV_VERSION ".lib")

#pragma comment(lib, "opencv_imgproc" OPENCV_VERSION ".lib")

#pragma comment(lib, "opencv_highgui" OPENCV_VERSION ".lib")

#pragma comment(lib, "opencv_video" OPENCV_VERSION ".lib")

#endif // USE_CMAKE_LIBS

#endif // CV_VERSION_EPOCH

using namespace std;

vector<string> split(const string &s, char separator)
{
    // Split s at every occurrence of separator; empty fields are kept
    vector<string> split_vector;
    size_t subinit = 0;
    for (size_t id = 0; id != s.length(); id++) {
        if (s[id] == separator) {
            split_vector.push_back(s.substr(subinit, id - subinit));
            subinit = id + 1;
        }
    }
    split_vector.push_back(s.substr(subinit));  // last field
    return split_vector;
}

void draw_boxes(cv::Mat mat_img, std::vector<bbox_t> result_vec, std::vector<std::string> obj_names,

int current_det_fps = -1, int current_cap_fps = -1)

{

int const colors[6][3] = { { 1,0,1 },{ 0,0,1 },{ 0,1,1 },{ 0,1,0 },{ 1,1,0 },{ 1,0,0 } };

for (auto &i : result_vec) {

cv::Scalar color = obj_id_to_color(i.obj_id);

cv::rectangle(mat_img, cv::Rect(i.x, i.y, i.w, i.h), color, 2);

if (obj_names.size() > i.obj_id) {

std::string obj_name = obj_names[i.obj_id];

if (i.track_id > 0) obj_name += " - " + std::to_string(i.track_id);

cv::Size const text_size = getTextSize(obj_name, cv::FONT_HERSHEY_COMPLEX_SMALL, 1.2, 2, 0);

int max_width = (text_size.width > i.w + 2) ? text_size.width : (i.w + 2);

max_width = std::max(max_width, (int)i.w + 2);

//max_width = std::max(max_width, 283);

std::string coords_3d;

if (!std::isnan(i.z_3d)) {

std::stringstream ss;

ss << std::fixed << std::setprecision(2) << "x:" << i.x_3d << "m y:" << i.y_3d << "m z:" << i.z_3d << "m ";

coords_3d = ss.str();

cv::Size const text_size_3d = getTextSize(ss.str(), cv::FONT_HERSHEY_COMPLEX_SMALL, 0.8, 1, 0);

int const max_width_3d = (text_size_3d.width > i.w + 2) ? text_size_3d.width : (i.w + 2);

if (max_width_3d > max_width) max_width = max_width_3d;

}

cv::rectangle(mat_img, cv::Point2f(std::max((int)i.x - 1, 0), std::max((int)i.y - 35, 0)),

cv::Point2f(std::min((int)i.x + max_width, mat_img.cols - 1), std::min((int)i.y, mat_img.rows - 1)),

color, CV_FILLED, 8, 0);

putText(mat_img, obj_name, cv::Point2f(i.x, i.y - 16), cv::FONT_HERSHEY_COMPLEX_SMALL, 1.2, cv::Scalar(0, 0, 0), 2);

if(!coords_3d.empty()) putText(mat_img, coords_3d, cv::Point2f(i.x, i.y-1), cv::FONT_HERSHEY_COMPLEX_SMALL, 0.8, cv::Scalar(0, 0, 0), 1);

}

}

if (current_det_fps >= 0 && current_cap_fps >= 0) {

std::string fps_str = "FPS detection: " + std::to_string(current_det_fps) + " FPS capture: " + std::to_string(current_cap_fps);

putText(mat_img, fps_str, cv::Point2f(10, 20), cv::FONT_HERSHEY_COMPLEX_SMALL, 1.2, cv::Scalar(50, 255, 0), 2);

}

}

#endif // OPENCV

void show_console_result(std::vector<bbox_t> const result_vec, std::vector<std::string> const obj_names, int frame_id = -1) {

if (frame_id >= 0) std::cout << " Frame: " << frame_id << std::endl;

for (auto &i : result_vec) {

if (obj_names.size() > i.obj_id) std::cout << obj_names[i.obj_id] << " - ";

std::cout << "obj_id = " << i.obj_id << ", x = " << i.x << ", y = " << i.y

<< ", w = " << i.w << ", h = " << i.h

<< std::setprecision(3) << ", prob = " << i.prob << std::endl;

}

}

std::vector<std::string> objects_names_from_file(std::string const filename) {

std::ifstream file(filename);

std::vector<std::string> file_lines;

if (!file.is_open()) return file_lines;

for(std::string line; getline(file, line);) file_lines.push_back(line);

std::cout << "object names loaded \n";

return file_lines;

}

template<typename T>

class send_one_replaceable_object_t {

const bool sync;

std::atomic<T *> a_ptr;

public:

void send(T const& _obj) {

T *new_ptr = new T;

*new_ptr = _obj;

if (sync) {

while (a_ptr.load()) std::this_thread::sleep_for(std::chrono::milliseconds(3));

}

std::unique_ptr<T> old_ptr(a_ptr.exchange(new_ptr));

}

T receive() {

std::unique_ptr<T> ptr;

do {

while(!a_ptr.load()) std::this_thread::sleep_for(std::chrono::milliseconds(3));

ptr.reset(a_ptr.exchange(NULL));

} while (!ptr);

T obj = *ptr;

return obj;

}

bool is_object_present() {

return (a_ptr.load() != NULL);

}

send_one_replaceable_object_t(bool _sync) : sync(_sync), a_ptr(NULL)

{}

};

int main(int argc, char *argv[])

{

std::string names_file = "coco.names";

std::string cfg_file = "cfg/yolov4.cfg";

std::string weights_file = "yolov4.weights";

std::string filename;

if (argc > 4) { //voc.names yolo-voc.cfg yolo-voc.weights test.mp4

names_file = argv[1];

cfg_file = argv[2];

weights_file = argv[3];

filename = argv[4];

}

else if (argc > 1) filename = argv[1];

float const thresh = (argc > 5) ? std::stof(argv[5]) : 0.2;

Detector detector(cfg_file, weights_file);

auto obj_names = objects_names_from_file(names_file);

std::string out_videofile = "result.avi";

bool const save_output_videofile = false; // true - for history

bool const send_network = false; // true - for remote detection

bool const use_kalman_filter = false; // true - for stationary camera

bool detection_sync = true; // true - for video-file

#ifdef TRACK_OPTFLOW // for slow GPU

detection_sync = false;

Tracker_optflow tracker_flow;

//detector.wait_stream = true;

#endif // TRACK_OPTFLOW

while (true)

{

std::cout << "input image or video filename: ";

if(filename.size() == 0) std::cin >> filename;

if (filename.size() == 0) break;

try {

#ifdef OPENCV

preview_boxes_t large_preview(100, 150, false), small_preview(50, 50, true);

bool show_small_boxes = false;

std::string const file_ext = filename.substr(filename.find_last_of(".") + 1);

std::string const protocol = filename.substr(0, 7);

if (file_ext == "avi" || file_ext == "mp4" || file_ext == "mjpg" || file_ext == "mov" || // video file

protocol == "rtmp://" || protocol == "rtsp://" || protocol == "http://" || protocol == "https:/" || // video network stream

filename == "zed_camera" || file_ext == "svo" || filename == "web_camera") // ZED stereo camera

{

if (protocol == "rtsp://" || protocol == "http://" || protocol == "https:/" || filename == "zed_camera" || filename == "web_camera")

detection_sync = false;

cv::Mat cur_frame;

std::atomic<int> fps_cap_counter(0), fps_det_counter(0);

std::atomic<int> current_fps_cap(0), current_fps_det(0);

std::atomic<bool> exit_flag(false);

std::chrono::steady_clock::time_point steady_start, steady_end;

int video_fps = 25;

bool use_zed_camera = false;

track_kalman_t track_kalman;

#ifdef ZED_STEREO

sl::InitParameters init_params;

init_params.depth_minimum_distance = 0.5;

#ifdef ZED_STEREO_2_COMPAT_MODE

init_params.depth_mode = sl::DEPTH_MODE_ULTRA;

init_params.camera_resolution = sl::RESOLUTION_HD720;// sl::RESOLUTION_HD1080, sl::RESOLUTION_HD720

init_params.coordinate_units = sl::UNIT_METER;

init_params.camera_buffer_count_linux = 2;

if (file_ext == "svo") init_params.svo_input_filename.set(filename.c_str());

#else

init_params.depth_mode = sl::DEPTH_MODE::ULTRA;

init_params.camera_resolution = sl::RESOLUTION::HD720;// sl::RESOLUTION::HD1080, sl::RESOLUTION::HD720

init_params.coordinate_units = sl::UNIT::METER;

if (file_ext == "svo") init_params.input.setFromSVOFile(filename.c_str());

#endif

//init_params.sdk_cuda_ctx = (CUcontext)detector.get_cuda_context();

init_params.sdk_gpu_id = detector.cur_gpu_id;

if (filename == "zed_camera" || file_ext == "svo") {

std::cout << "ZED 3D Camera " << zed.open(init_params) << std::endl;

if (!zed.isOpened()) {

std::cout << " Error: ZED Camera should be connected to USB 3.0. And ZED_SDK should be installed. \n";

getchar();

return 0;

}

cur_frame = zed_capture_rgb(zed);

use_zed_camera = true;

}

#endif // ZED_STEREO

cv::VideoCapture cap;

if (filename == "web_camera") {

cap.open(0);

cap >> cur_frame;

} else if (!use_zed_camera) {

cap.open(filename);

cap >> cur_frame;

}

#ifdef CV_VERSION_EPOCH // OpenCV 2.x

video_fps = cap.get(CV_CAP_PROP_FPS);

#else

video_fps = cap.get(cv::CAP_PROP_FPS);

#endif

cv::Size const frame_size = cur_frame.size();

//cv::Size const frame_size(cap.get(CV_CAP_PROP_FRAME_WIDTH), cap.get(CV_CAP_PROP_FRAME_HEIGHT));

std::cout << "\n Video size: " << frame_size << std::endl;

cv::VideoWriter output_video;

if (save_output_videofile)

#ifdef CV_VERSION_EPOCH // OpenCV 2.x

output_video.open(out_videofile, CV_FOURCC('D', 'I', 'V', 'X'), std::max(35, video_fps), frame_size, true);

#else

output_video.open(out_videofile, cv::VideoWriter::fourcc('D', 'I', 'V', 'X'), std::max(35, video_fps), frame_size, true);

#endif

struct detection_data_t {

cv::Mat cap_frame;

std::shared_ptr<image_t> det_image;

std::vector<bbox_t> result_vec;

cv::Mat draw_frame;

bool new_detection;

uint64_t frame_id;

bool exit_flag;

cv::Mat zed_cloud;

std::queue<cv::Mat> track_optflow_queue;

detection_data_t() : exit_flag(false), new_detection(false) {}

};

const bool sync = detection_sync; // sync data exchange

send_one_replaceable_object_t<detection_data_t> cap2prepare(sync), cap2draw(sync),

prepare2detect(sync), detect2draw(sync), draw2show(sync), draw2write(sync), draw2net(sync);

std::thread t_cap, t_prepare, t_detect, t_post, t_draw, t_write, t_network;

// capture new video-frame

if (t_cap.joinable()) t_cap.join();

t_cap = std::thread([&]()

{

uint64_t frame_id = 0;

detection_data_t detection_data;

do {

detection_data = detection_data_t();

#ifdef ZED_STEREO

if (use_zed_camera) {

while (zed.grab() !=

#ifdef ZED_STEREO_2_COMPAT_MODE

sl::SUCCESS

#else

sl::ERROR_CODE::SUCCESS

#endif

) std::this_thread::sleep_for(std::chrono::milliseconds(2));

detection_data.cap_frame = zed_capture_rgb(zed);

detection_data.zed_cloud = zed_capture_3d(zed);

}

else

#endif // ZED_STEREO

{

cap >> detection_data.cap_frame;

}

fps_cap_counter++;

detection_data.frame_id = frame_id++;

if (detection_data.cap_frame.empty() || exit_flag) {

std::cout << " exit_flag: detection_data.cap_frame.size = " << detection_data.cap_frame.size() << std::endl;

detection_data.exit_flag = true;

detection_data.cap_frame = cv::Mat(frame_size, CV_8UC3);

}

if (!detection_sync) {

cap2draw.send(detection_data); // skip detection

}

cap2prepare.send(detection_data);

} while (!detection_data.exit_flag);

std::cout << " t_cap exit \n";

});

// pre-processing video frame (resize, conversion)

t_prepare = std::thread([&]()

{

std::shared_ptr<image_t> det_image;

detection_data_t detection_data;

do {

detection_data = cap2prepare.receive();

det_image = detector.mat_to_image_resize(detection_data.cap_frame);

detection_data.det_image = det_image;

prepare2detect.send(detection_data); // detection

} while (!detection_data.exit_flag);

std::cout << " t_prepare exit \n";

});

// detection by Yolo

if (t_detect.joinable()) t_detect.join();

t_detect = std::thread([&]()

{

std::shared_ptr<image_t> det_image;

detection_data_t detection_data;

do {

detection_data = prepare2detect.receive();

det_image = detection_data.det_image;

std::vector<bbox_t> result_vec;

if(det_image)

result_vec = detector.detect_resized(*det_image, frame_size.width, frame_size.height, thresh, true); // true

fps_det_counter++;

//std::this_thread::sleep_for(std::chrono::milliseconds(150));

detection_data.new_detection = true;

detection_data.result_vec = result_vec;

detect2draw.send(detection_data);

} while (!detection_data.exit_flag);

std::cout << " t_detect exit \n";

});

// draw rectangles (and track objects)

t_draw = std::thread([&]()

{

std::queue<cv::Mat> track_optflow_queue;

detection_data_t detection_data;

do {

// for Video-file

if (detection_sync) {

detection_data = detect2draw.receive();

}

// for Video-camera

else

{

// get new Detection result if present

if (detect2draw.is_object_present()) {

cv::Mat old_cap_frame = detection_data.cap_frame; // use old captured frame

detection_data = detect2draw.receive();

if (!old_cap_frame.empty()) detection_data.cap_frame = old_cap_frame;

}

// get new Captured frame

else {

std::vector<bbox_t> old_result_vec = detection_data.result_vec; // use old detections

detection_data = cap2draw.receive();

detection_data.result_vec = old_result_vec;

}

}

cv::Mat cap_frame = detection_data.cap_frame;

cv::Mat draw_frame = detection_data.cap_frame.clone();

std::vector<bbox_t> result_vec = detection_data.result_vec;

#ifdef TRACK_OPTFLOW

if (detection_data.new_detection) {

tracker_flow.update_tracking_flow(detection_data.cap_frame, detection_data.result_vec);

while (track_optflow_queue.size() > 0) {

draw_frame = track_optflow_queue.back();

result_vec = tracker_flow.tracking_flow(track_optflow_queue.front(), false);

track_optflow_queue.pop();

}

}

else {

track_optflow_queue.push(cap_frame);

result_vec = tracker_flow.tracking_flow(cap_frame, false);

}

detection_data.new_detection = true; // to correct kalman filter

#endif //TRACK_OPTFLOW

// track ID by using kalman filter

if (use_kalman_filter) {

if (detection_data.new_detection) {

result_vec = track_kalman.correct(result_vec);

}

else {

result_vec = track_kalman.predict();

}

}

// track ID by using custom function

else {

int frame_story = std::max(5, current_fps_cap.load());

result_vec = detector.tracking_id(result_vec, true, frame_story, 40);

}

if (use_zed_camera && !detection_data.zed_cloud.empty()) {

result_vec = get_3d_coordinates(result_vec, detection_data.zed_cloud);

}

//small_preview.set(draw_frame, result_vec);

//large_preview.set(draw_frame, result_vec);

draw_boxes(draw_frame, result_vec, obj_names, current_fps_det, current_fps_cap);

//show_console_result(result_vec, obj_names, detection_data.frame_id);

//large_preview.draw(draw_frame);

//small_preview.draw(draw_frame, true);

detection_data.result_vec = result_vec;

detection_data.draw_frame = draw_frame;

draw2show.send(detection_data);

if (send_network) draw2net.send(detection_data);

if (output_video.isOpened()) draw2write.send(detection_data);

} while (!detection_data.exit_flag);

std::cout << " t_draw exit \n";

});

// write frame to videofile

t_write = std::thread([&]()

{

if (output_video.isOpened()) {

detection_data_t detection_data;

cv::Mat output_frame;

do {

detection_data = draw2write.receive();

if(detection_data.draw_frame.channels() == 4) cv::cvtColor(detection_data.draw_frame, output_frame, CV_RGBA2RGB);

else output_frame = detection_data.draw_frame;

output_video << output_frame;

} while (!detection_data.exit_flag);

output_video.release();

}

std::cout << " t_write exit \n";

});

// send detection to the network

t_network = std::thread([&]()

{

if (send_network) {

detection_data_t detection_data;

do {

detection_data = draw2net.receive();

detector.send_json_http(detection_data.result_vec, obj_names, detection_data.frame_id, filename);

} while (!detection_data.exit_flag);

}

std::cout << " t_network exit \n";

});

// show detection

detection_data_t detection_data;

do {

steady_end = std::chrono::steady_clock::now();

float time_sec = std::chrono::duration<double>(steady_end - steady_start).count();

if (time_sec >= 1) {

current_fps_det = fps_det_counter.load() / time_sec;

current_fps_cap = fps_cap_counter.load() / time_sec;

steady_start = steady_end;

fps_det_counter = 0;

fps_cap_counter = 0;

}

detection_data = draw2show.receive();

cv::Mat draw_frame = detection_data.draw_frame;

//if (extrapolate_flag) {

// cv::putText(draw_frame, "extrapolate", cv::Point2f(10, 40), cv::FONT_HERSHEY_COMPLEX_SMALL, 1.0, cv::Scalar(50, 50, 0), 2);

//}

cv::imshow("window name", draw_frame);


int key = cv::waitKey(3); // 3 or 16ms

if (key == 'f') show_small_boxes = !show_small_boxes;

if (key == 'p') while (true) if (cv::waitKey(100) == 'p') break;

//if (key == 'e') extrapolate_flag = !extrapolate_flag;

if (key == 27) { exit_flag = true;}

//std::cout << " current_fps_det = " << current_fps_det << ", current_fps_cap = " << current_fps_cap << std::endl;

} while (!detection_data.exit_flag);

std::cout << " show detection exit \n";

cv::destroyWindow("window name");

// wait for all threads

if (t_cap.joinable()) t_cap.join();

if (t_prepare.joinable()) t_prepare.join();

if (t_detect.joinable()) t_detect.join();

if (t_post.joinable()) t_post.join();

if (t_draw.joinable()) t_draw.join();

if (t_write.joinable()) t_write.join();

if (t_network.joinable()) t_network.join();

break;

}

else if (file_ext == "txt") { // list of image files

std::ifstream file(filename);

if (!file.is_open()) std::cout << "File not found! \n";

else

for (std::string line; file >> line;) {

std::cout << line << std::endl;

cv::Mat mat_img = cv::imread(line);

std::vector<bbox_t> result_vec = detector.detect(mat_img);

show_console_result(result_vec, obj_names);

//draw_boxes(mat_img, result_vec, obj_names);

//cv::imwrite("res_" + line, mat_img);

}

}

else { // image file

// to achieve high performance for multiple images do these 2 lines in another thread

cv::Mat mat_img = cv::imread(filename);

auto det_image = detector.mat_to_image_resize(mat_img);

auto start = std::chrono::steady_clock::now();

std::vector<bbox_t> result_vec = detector.detect_resized(*det_image, mat_img.size().width, mat_img.size().height);

auto end = std::chrono::steady_clock::now();

std::chrono::duration<double> spent = end - start;

std::cout << " Time: " << spent.count() << " sec \n";

//result_vec = detector.tracking_id(result_vec); // comment it - if track_id is not required

draw_boxes(mat_img, result_vec, obj_names);

cv::imshow("window name", mat_img);

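vector<string> filenamesplit = split(filename, '/');
string endname = filenamesplit.back();
// replace the extension (if any) with "_yolov4_out.jpg", e.g. kite.jpg -> kite_yolov4_out.jpg
size_t const dot_pos = endname.find_last_of('.');
if (dot_pos != string::npos) endname.erase(dot_pos);
endname += "_yolov4_out.jpg";
// the detect_result/ directory must already exist, otherwise the image is not saved
std::string outputfile = "detect_result/" + endname;
if (!cv::imwrite(outputfile, mat_img)) std::cerr << "could not write " << outputfile << "\n";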

show_console_result(result_vec, obj_names);

cv::waitKey(0);

}

#else // OPENCV

//std::vector<bbox_t> result_vec = detector.detect(filename);

auto img = detector.load_image(filename);

std::vector<bbox_t> result_vec = detector.detect(img);

detector.free_image(img);

show_console_result(result_vec, obj_names);

#endif // OPENCV

}

catch (std::exception &e) { std::cerr << "exception: " << e.what() << "\n"; getchar(); }

catch (...) { std::cerr << "unknown exception \n"; getchar(); }

filename.clear();

}

return 0;

}
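main.cpp runs capture, pre-processing, detection, drawing, video writing and network output in separate threads and passes `detection_data_t` between them through `send_one_replaceable_object_t`, a single-slot mailbox. In sync mode (video files) `send()` waits until the previous object has been taken, so no frame is dropped; in async mode (webcam, ZED camera, rtsp/http streams) it overwrites the pending object, so downstream threads always work on the latest frame. `receive()` polls every 3 ms until an object appears. The sketch below shows the same single-slot semantics with a `std::mutex` and two `std::condition_variable`s instead of an atomic pointer with sleep-based polling. It is an alternative design for illustration, not darknet's code, and `replaceable_slot` is a hypothetical name.

```cpp
#include <condition_variable>
#include <memory>
#include <mutex>
#include <utility>

// Hypothetical condition-variable variant of send_one_replaceable_object_t (C++14).
template <typename T>
class replaceable_slot {
public:
    explicit replaceable_slot(bool sync) : sync_(sync) {}

    // sync == true: block until the previous object has been received.
    // sync == false: overwrite (drop) a pending object nobody has received yet.
    void send(T const &obj) {
        std::unique_lock<std::mutex> lock(mutex_);
        if (sync_) emptied_.wait(lock, [this] { return slot_ == nullptr; });
        slot_ = std::make_unique<T>(obj);
        filled_.notify_one();
    }

    // Block until an object is present, then take it out of the slot.
    T receive() {
        std::unique_lock<std::mutex> lock(mutex_);
        filled_.wait(lock, [this] { return slot_ != nullptr; });
        T obj = std::move(*slot_);
        slot_.reset();
        emptied_.notify_one();
        return obj;
    }

    bool is_object_present() {
        std::lock_guard<std::mutex> lock(mutex_);
        return slot_ != nullptr;
    }

private:
    const bool sync_;
    std::mutex mutex_;
    std::condition_variable filled_;   // signalled after send()
    std::condition_variable emptied_;  // signalled after receive()
    std::unique_ptr<T> slot_;          // nullptr means the slot is empty
};
```

With condition variables the receiving thread wakes as soon as an object arrives instead of after the next 3 ms sleep; the trade-off is that every `send()` and `receive()` takes a lock.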

For yolo_v2_class.hpp, use the file from the darknet GitHub repository as-is (include/yolo_v2_class.hpp).

The CMakeLists.txt is as follows:

cmake_minimum_required(VERSION 3.5)

project(yolov4)

find_package( OpenCV 3 REQUIRED )

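set(CMAKE_CXX_STANDARD 14)

# main.cpp and yolo_v2_class.hpp only compile their OpenCV code paths when OPENCV is defined
add_definitions(-DOPENCV)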

include_directories(${OpenCV_INCLUDE_DIRS})

add_executable(yolov4 main.cpp yolo_v2_class.hpp)

target_link_libraries(yolov4 ${OpenCV_LIBS} libdarknet.so libpthread.so.0)

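Note: change yolov4 in project() to the name of your own project. Two shared libraries also have to be linked: libdarknet.so, which contains the implementation of all darknet network layers (and of the Detector class declared in yolo_v2_class.hpp), and libpthread, the Linux threading library. Copy libdarknet.so into /usr/lib, which is on the linker's default search path, so it is found without specifying an extra library directory.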

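libpthread.so.0 lives in /lib/x86_64-linux-gnu by default, which the linker also searches automatically, so it only needs to be listed in CMakeLists.txt. If it is left out, linking fails with an error like this: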

/usr/bin/ld: CMakeFiles/yolov4.dir/main.cpp.o: undefined reference to symbol 'pthread_create@@GLIBC_2.2.5'

//lib/x86_64-linux-gnu/libpthread.so.0: error adding symbols: DSO missing from command line

collect2: error: ld returned 1 exit status

CMakeFiles/yolov4.dir/build.make:111: recipe for target 'yolov4' failed

make[2]: *** [yolov4] Error 1

CMakeFiles/Makefile2:67: recipe for target 'CMakeFiles/yolov4.dir/all' failed

make[1]: *** [CMakeFiles/yolov4.dir/all] Error 2

Makefile:83: recipe for target 'all' failed

make: *** [all] Error 2

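libdarknet.so is obtained by building AlexeyAB's darknet project. Set the options at the top of its Makefile as follows (GPU=0 and CUDNN=0 give a CPU-only build; LIBSO=1 is what produces the shared library):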

GPU=0

CUDNN=0

CUDNN_HALF=0

OPENCV=1

AVX=0

OPENMP=1

LIBSO=1

ZED_CAMERA=0 # ZED SDK 3.0 and above

ZED_CAMERA_v2_8=0 # ZED SDK 2.X

Then run make. When the build succeeds, libdarknet.so appears in the darknet directory; copy it to /usr/lib.

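Switch to our Yolov4 project folder and build it (cmake, then make). Once it compiles, run the executable from the directory that contains coco.names, cfg/yolov4.cfg and yolov4.weights, because main.cpp loads them by relative path. For single images the annotated result is also saved to detect_result/, which must already exist.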

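./yolov4 image/kite.jpg     # detect objects in an image

./yolov4 test.mp4           # detect objects in a video

./yolov4 web_camera         # detect objects from the webcam

./yolov4 coco.names cfg/yolov4.cfg yolov4.weights test.mp4 0.3   # explicit names/cfg/weights files; the 5th argument is the detection threshold used for video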
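The network summary that darknet prints at startup is reproduced below; it was produced with a 576 x 576 network input. Its numbers can be checked by hand: every stride-2 convolution halves the feature map (576, 288, 144, 72, 36, 18); Yolov4's three [yolo] heads predict on grids of input/8, input/16 and input/32; the 1x1 convolution in front of each [yolo] layer needs anchors x (classes + 5) filters (4 box coordinates, 1 objectness score and one score per class), i.e. 3 x (80 + 5) = 255 for the 80 classes in coco.names; and the BF column is the convolution cost in billions of floating-point operations, 2 x k x k x c_in x c_out x out_h x out_w / 10^9. The helpers below are a hypothetical sketch that reproduces these values, assuming 3 anchors per head.

```cpp
// Stride of each Yolov4 [yolo] head relative to the network input
constexpr int kHeadStrides[3] = {8, 16, 32};

// Grid size of a [yolo] head; the input side is expected to be a multiple of 32
constexpr int grid_size(int input_side, int stride) { return input_side / stride; }

// Filters of the 1x1 conv feeding a [yolo] layer:
// each anchor predicts 4 box values + 1 objectness + one score per class
constexpr int yolo_filters(int classes, int anchors_per_head) {
    return anchors_per_head * (classes + 5);
}

// Cost of a conv layer in billions of floating-point operations,
// counting each multiply-add as 2 operations (the "BF" column)
double conv_bflops(int k, int c_in, int c_out, int out_h, int out_w) {
    return 2.0 * k * k * c_in * c_out * out_h * out_w / 1e9;
}
```

For instance, `conv_bflops(3, 3, 32, 576, 576)` gives about 0.573, matching layer 0, and `grid_size(576, 8)` gives 72, the grid of the first [yolo] layer (layer 139). Changing width and height in the .cfg file (to multiples of 32) changes the grid sizes and the BF column accordingly.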

mini_batch = 1, batch = 1, time_steps = 1, train = 0

layer filters size/strd(dil) input output

0 conv 32 3 x 3/ 1 576 x 576 x 3 -> 576 x 576 x 32 0.573 BF

1 conv 64 3 x 3/ 2 576 x 576 x 32 -> 288 x 288 x 64 3.058 BF

2 conv 64 1 x 1/ 1 288 x 288 x 64 -> 288 x 288 x 64 0.679 BF

3 route 1 -> 288 x 288 x 64

4 conv 64 1 x 1/ 1 288 x 288 x 64 -> 288 x 288 x 64 0.679 BF

5 conv 32 1 x 1/ 1 288 x 288 x 64 -> 288 x 288 x 32 0.340 BF

6 conv 64 3 x 3/ 1 288 x 288 x 32 -> 288 x 288 x 64 3.058 BF

7 Shortcut Layer: 4, wt = 0, wn = 0, outputs: 288 x 288 x 64 0.005 BF

8 conv 64 1 x 1/ 1 288 x 288 x 64 -> 288 x 288 x 64 0.679 BF

9 route 8 2 -> 288 x 288 x 128

10 conv 64 1 x 1/ 1 288 x 288 x 128 -> 288 x 288 x 64 1.359 BF

11 conv 128 3 x 3/ 2 288 x 288 x 64 -> 144 x 144 x 128 3.058 BF

12 conv 64 1 x 1/ 1 144 x 144 x 128 -> 144 x 144 x 64 0.340 BF

13 route 11 -> 144 x 144 x 128

14 conv 64 1 x 1/ 1 144 x 144 x 128 -> 144 x 144 x 64 0.340 BF

15 conv 64 1 x 1/ 1 144 x 144 x 64 -> 144 x 144 x 64 0.170 BF

16 conv 64 3 x 3/ 1 144 x 144 x 64 -> 144 x 144 x 64 1.529 BF

17 Shortcut Layer: 14, wt = 0, wn = 0, outputs: 144 x 144 x 64 0.001 BF

18 conv 64 1 x 1/ 1 144 x 144 x 64 -> 144 x 144 x 64 0.170 BF

19 conv 64 3 x 3/ 1 144 x 144 x 64 -> 144 x 144 x 64 1.529 BF

20 Shortcut Layer: 17, wt = 0, wn = 0, outputs: 144 x 144 x 64 0.001 BF

21 conv 64 1 x 1/ 1 144 x 144 x 64 -> 144 x 144 x 64 0.170 BF

22 route 21 12 -> 144 x 144 x 128

23 conv 128 1 x 1/ 1 144 x 144 x 128 -> 144 x 144 x 128 0.679 BF

24 conv 256 3 x 3/ 2 144 x 144 x 128 -> 72 x 72 x 256 3.058 BF

25 conv 128 1 x 1/ 1 72 x 72 x 256 -> 72 x 72 x 128 0.340 BF

26 route 24 -> 72 x 72 x 256

27 conv 128 1 x 1/ 1 72 x 72 x 256 -> 72 x 72 x 128 0.340 BF

28 conv 128 1 x 1/ 1 72 x 72 x 128 -> 72 x 72 x 128 0.170 BF

29 conv 128 3 x 3/ 1 72 x 72 x 128 -> 72 x 72 x 128 1.529 BF

30 Shortcut Layer: 27, wt = 0, wn = 0, outputs: 72 x 72 x 128 0.001 BF

31 conv 128 1 x 1/ 1 72 x 72 x 128 -> 72 x 72 x 128 0.170 BF

32 conv 128 3 x 3/ 1 72 x 72 x 128 -> 72 x 72 x 128 1.529 BF

33 Shortcut Layer: 30, wt = 0, wn = 0, outputs: 72 x 72 x 128 0.001 BF

34 conv 128 1 x 1/ 1 72 x 72 x 128 -> 72 x 72 x 128 0.170 BF

35 conv 128 3 x 3/ 1 72 x 72 x 128 -> 72 x 72 x 128 1.529 BF

36 Shortcut Layer: 33, wt = 0, wn = 0, outputs: 72 x 72 x 128 0.001 BF

37 conv 128 1 x 1/ 1 72 x 72 x 128 -> 72 x 72 x 128 0.170 BF

38 conv 128 3 x 3/ 1 72 x 72 x 128 -> 72 x 72 x 128 1.529 BF

39 Shortcut Layer: 36, wt = 0, wn = 0, outputs: 72 x 72 x 128 0.001 BF

40 conv 128 1 x 1/ 1 72 x 72 x 128 -> 72 x 72 x 128 0.170 BF

41 conv 128 3 x 3/ 1 72 x 72 x 128 -> 72 x 72 x 128 1.529 BF

42 Shortcut Layer: 39, wt = 0, wn = 0, outputs: 72 x 72 x 128 0.001 BF

43 conv 128 1 x 1/ 1 72 x 72 x 128 -> 72 x 72 x 128 0.170 BF

44 conv 128 3 x 3/ 1 72 x 72 x 128 -> 72 x 72 x 128 1.529 BF

45 Shortcut Layer: 42, wt = 0, wn = 0, outputs: 72 x 72 x 128 0.001 BF

46 conv 128 1 x 1/ 1 72 x 72 x 128 -> 72 x 72 x 128 0.170 BF

47 conv 128 3 x 3/ 1 72 x 72 x 128 -> 72 x 72 x 128 1.529 BF

48 Shortcut Layer: 45, wt = 0, wn = 0, outputs: 72 x 72 x 128 0.001 BF

49 conv 128 1 x 1/ 1 72 x 72 x 128 -> 72 x 72 x 128 0.170 BF

50 conv 128 3 x 3/ 1 72 x 72 x 128 -> 72 x 72 x 128 1.529 BF

51 Shortcut Layer: 48, wt = 0, wn = 0, outputs: 72 x 72 x 128 0.001 BF

52 conv 128 1 x 1/ 1 72 x 72 x 128 -> 72 x 72 x 128 0.170 BF

53 route 52 25 -> 72 x 72 x 256

54 conv 256 1 x 1/ 1 72 x 72 x 256 -> 72 x 72 x 256 0.679 BF

55 conv 512 3 x 3/ 2 72 x 72 x 256 -> 36 x 36 x 512 3.058 BF

56 conv 256 1 x 1/ 1 36 x 36 x 512 -> 36 x 36 x 256 0.340 BF

57 route 55 -> 36 x 36 x 512

58 conv 256 1 x 1/ 1 36 x 36 x 512 -> 36 x 36 x 256 0.340 BF

59 conv 256 1 x 1/ 1 36 x 36 x 256 -> 36 x 36 x 256 0.170 BF

60 conv 256 3 x 3/ 1 36 x 36 x 256 -> 36 x 36 x 256 1.529 BF

61 Shortcut Layer: 58, wt = 0, wn = 0, outputs: 36 x 36 x 256 0.000 BF

62 conv 256 1 x 1/ 1 36 x 36 x 256 -> 36 x 36 x 256 0.170 BF

63 conv 256 3 x 3/ 1 36 x 36 x 256 -> 36 x 36 x 256 1.529 BF

64 Shortcut Layer: 61, wt = 0, wn = 0, outputs: 36 x 36 x 256 0.000 BF

65 conv 256 1 x 1/ 1 36 x 36 x 256 -> 36 x 36 x 256 0.170 BF

66 conv 256 3 x 3/ 1 36 x 36 x 256 -> 36 x 36 x 256 1.529 BF

67 Shortcut Layer: 64, wt = 0, wn = 0, outputs: 36 x 36 x 256 0.000 BF

68 conv 256 1 x 1/ 1 36 x 36 x 256 -> 36 x 36 x 256 0.170 BF

69 conv 256 3 x 3/ 1 36 x 36 x 256 -> 36 x 36 x 256 1.529 BF

70 Shortcut Layer: 67, wt = 0, wn = 0, outputs: 36 x 36 x 256 0.000 BF

71 conv 256 1 x 1/ 1 36 x 36 x 256 -> 36 x 36 x 256 0.170 BF

72 conv 256 3 x 3/ 1 36 x 36 x 256 -> 36 x 36 x 256 1.529 BF

73 Shortcut Layer: 70, wt = 0, wn = 0, outputs: 36 x 36 x 256 0.000 BF

74 conv 256 1 x 1/ 1 36 x 36 x 256 -> 36 x 36 x 256 0.170 BF

75 conv 256 3 x 3/ 1 36 x 36 x 256 -> 36 x 36 x 256 1.529 BF

76 Shortcut Layer: 73, wt = 0, wn = 0, outputs: 36 x 36 x 256 0.000 BF

77 conv 256 1 x 1/ 1 36 x 36 x 256 -> 36 x 36 x 256 0.170 BF

78 conv 256 3 x 3/ 1 36 x 36 x 256 -> 36 x 36 x 256 1.529 BF

79 Shortcut Layer: 76, wt = 0, wn = 0, outputs: 36 x 36 x 256 0.000 BF

80 conv 256 1 x 1/ 1 36 x 36 x 256 -> 36 x 36 x 256 0.170 BF

81 conv 256 3 x 3/ 1 36 x 36 x 256 -> 36 x 36 x 256 1.529 BF

82 Shortcut Layer: 79, wt = 0, wn = 0, outputs: 36 x 36 x 256 0.000 BF

83 conv 256 1 x 1/ 1 36 x 36 x 256 -> 36 x 36 x 256 0.170 BF

84 route 83 56 -> 36 x 36 x 512

85 conv 512 1 x 1/ 1 36 x 36 x 512 -> 36 x 36 x 512 0.679 BF

86 conv 1024 3 x 3/ 2 36 x 36 x 512 -> 18 x 18 x1024 3.058 BF

87 conv 512 1 x 1/ 1 18 x 18 x1024 -> 18 x 18 x 512 0.340 BF

88 route 86 -> 18 x 18 x1024

89 conv 512 1 x 1/ 1 18 x 18 x1024 -> 18 x 18 x 512 0.340 BF

90 conv 512 1 x 1/ 1 18 x 18 x 512 -> 18 x 18 x 512 0.170 BF

91 conv 512 3 x 3/ 1 18 x 18 x 512 -> 18 x 18 x 512 1.529 BF

92 Shortcut Layer: 89, wt = 0, wn = 0, outputs: 18 x 18 x 512 0.000 BF

93 conv 512 1 x 1/ 1 18 x 18 x 512 -> 18 x 18 x 512 0.170 BF

94 conv 512 3 x 3/ 1 18 x 18 x 512 -> 18 x 18 x 512 1.529 BF

95 Shortcut Layer: 92, wt = 0, wn = 0, outputs: 18 x 18 x 512 0.000 BF

96 conv 512 1 x 1/ 1 18 x 18 x 512 -> 18 x 18 x 512 0.170 BF

97 conv 512 3 x 3/ 1 18 x 18 x 512 -> 18 x 18 x 512 1.529 BF

98 Shortcut Layer: 95, wt = 0, wn = 0, outputs: 18 x 18 x 512 0.000 BF

99 conv 512 1 x 1/ 1 18 x 18 x 512 -> 18 x 18 x 512 0.170 BF

100 conv 512 3 x 3/ 1 18 x 18 x 512 -> 18 x 18 x 512 1.529 BF

101 Shortcut Layer: 98, wt = 0, wn = 0, outputs: 18 x 18 x 512 0.000 BF

102 conv 512 1 x 1/ 1 18 x 18 x 512 -> 18 x 18 x 512 0.170 BF

103 route 102 87 -> 18 x 18 x1024

104 conv 1024 1 x 1/ 1 18 x 18 x1024 -> 18 x 18 x1024 0.679 BF

105 conv 512 1 x 1/ 1 18 x 18 x1024 -> 18 x 18 x 512 0.340 BF

106 conv 1024 3 x 3/ 1 18 x 18 x 512 -> 18 x 18 x1024 3.058 BF

107 conv 512 1 x 1/ 1 18 x 18 x1024 -> 18 x 18 x 512 0.340 BF

108 max 5x 5/ 1 18 x 18 x 512 -> 18 x 18 x 512 0.004 BF

109 route 107 -> 18 x 18 x 512

110 max 9x 9/ 1 18 x 18 x 512 -> 18 x 18 x 512 0.013 BF

111 route 107 -> 18 x 18 x 512

112 max 13x13/ 1 18 x 18 x 512 -> 18 x 18 x 512 0.028 BF

113 route 112 110 108 107 -> 18 x 18 x2048

114 conv 512 1 x 1/ 1 18 x 18 x2048 -> 18 x 18 x 512 0.679 BF

115 conv 1024 3 x 3/ 1 18 x 18 x 512 -> 18 x 18 x1024 3.058 BF

116 conv 512 1 x 1/ 1 18 x 18 x1024 -> 18 x 18 x 512 0.340 BF

117 conv 256 1 x 1/ 1 18 x 18 x 512 -> 18 x 18 x 256 0.085 BF

118 upsample 2x 18 x 18 x 256 -> 36 x 36 x 256

119 route 85 -> 36 x 36 x 512

120 conv 256 1 x 1/ 1 36 x 36 x 512 -> 36 x 36 x 256 0.340 BF

121 route 120 118 -> 36 x 36 x 512

122 conv 256 1 x 1/ 1 36 x 36 x 512 -> 36 x 36 x 256 0.340 BF

123 conv 512 3 x 3/ 1 36 x 36 x 256 -> 36 x 36 x 512 3.058 BF

124 conv 256 1 x 1/ 1 36 x 36 x 512 -> 36 x 36 x 256 0.340 BF

125 conv 512 3 x 3/ 1 36 x 36 x 256 -> 36 x 36 x 512 3.058 BF

126 conv 256 1 x 1/ 1 36 x 36 x 512 -> 36 x 36 x 256 0.340 BF

127 conv 128 1 x 1/ 1 36 x 36 x 256 -> 36 x 36 x 128 0.085 BF

128 upsample 2x 36 x 36 x 128 -> 72 x 72 x 128

129 route 54 -> 72 x 72 x 256

130 conv 128 1 x 1/ 1 72 x 72 x 256 -> 72 x 72 x 128 0.340 BF

131 route 130 128 -> 72 x 72 x 256

132 conv 128 1 x 1/ 1 72 x 72 x 256 -> 72 x 72 x 128 0.340 BF

133 conv 256 3 x 3/ 1 72 x 72 x 128 -> 72 x 72 x 256 3.058 BF

134 conv 128 1 x 1/ 1 72 x 72 x 256 -> 72 x 72 x 128 0.340 BF

135 conv 256 3 x 3/ 1 72 x 72 x 128 -> 72 x 72 x 256 3.058 BF

136 conv 128 1 x 1/ 1 72 x 72 x 256 -> 72 x 72 x 128 0.340 BF

137 conv 256 3 x 3/ 1 72 x 72 x 128 -> 72 x 72 x 256 3.058 BF

138 conv 255 1 x 1/ 1 72 x 72 x 256 -> 72 x 72 x 255 0.677 BF

139 yolo

[yolo] params: iou loss: ciou (4), iou_norm: 0.07, cls_norm: 1.00, scale_x_y: 1.20

nms_kind: greedynms (1), beta = 0.600000

140 route 136 -> 72 x 72 x 128

141 conv 256 3 x 3/ 2 72 x 72 x 128 -> 36 x 36 x 256 0.764 BF

142 route 141 126 -> 36 x 36 x 512

143 conv 256 1 x 1/ 1 36 x 36 x 512 -> 36 x 36 x 256 0.340 BF

144 conv 512 3 x 3/ 1 36 x 36 x 256 -> 36 x 36 x 512 3.058 BF

145 conv 256 1 x 1/ 1 36 x 36 x 512 -> 36 x 36 x 256 0.340 BF

146 conv 512 3 x 3/ 1 36 x 36 x 256 -> 36 x 36 x 512 3.058 BF

147 conv 256 1 x 1/ 1 36 x 36 x 512 -> 36 x 36 x 256 0.340 BF

148 conv 512 3 x 3/ 1 36 x 36 x 256 -> 36 x 36 x 512 3.058 BF

149 conv 255 1 x 1/ 1 36 x 36 x 512 -> 36 x 36 x 255 0.338 BF

150 yolo

[yolo] params: iou loss: ciou (4), iou_norm: 0.07, cls_norm: 1.00, scale_x_y: 1.10

nms_kind: greedynms (1), beta = 0.600000

151 route 147 -> 36 x 36 x 256

152 conv 512 3 x 3/ 2 36 x 36 x 256 -> 18 x 18 x 512 0.764 BF

153 route 152 116 -> 18 x 18 x1024

154 conv 512 1 x 1/ 1 18 x 18 x1024 -> 18 x 18 x 512 0.340 BF

155 conv 1024 3 x 3/ 1 18 x 18 x 512 -> 18 x 18 x1024 3.058 BF

156 conv 512 1 x 1/ 1 18 x 18 x1024 -> 18 x 18 x 512 0.340 BF

157 conv 1024 3 x 3/ 1 18 x 18 x 512 -> 18 x 18 x1024 3.058 BF

158 conv 512 1 x 1/ 1 18 x 18 x1024 -> 18 x 18 x 512 0.340 BF

159 conv 1024 3 x 3/ 1 18 x 18 x 512 -> 18 x 18 x1024 3.058 BF

160 conv 255 1 x 1/ 1 18 x 18 x1024 -> 18 x 18 x 255 0.169 BF

161 yolo

[yolo] params: iou loss: ciou (4), iou_norm: 0.07, cls_norm: 1.00, scale_x_y: 1.05

nms_kind: greedynms (1), beta = 0.600000

Total BFLOPS 115.293

avg_outputs = 958892

Loading weights from yolov4.weights...

seen 64, trained: 32032 K-images (500 Kilo-batches_64)

Done! Loaded 162 layers from weights-file

object names loaded

input image or video filename: Time: 7.29136 sec

surfboard - obj_id = 37, x = 521, y = 517, w = 32, h = 15, prob = 0.393

surfboard - obj_id = 37, x = 497, y = 521, w = 39, h = 10, prob = 0.255

surfboard - obj_id = 37, x = 814, y = 567, w = 30, h = 10, prob = 0.248

kite - obj_id = 33, x = 591, y = 79, w = 75, h = 71, prob = 0.994

kite - obj_id = 33, x = 279, y = 235, w = 23, h = 45, prob = 0.979

kite - obj_id = 33, x = 575, y = 343, w = 25, h = 25, prob = 0.951

kite - obj_id = 33, x = 1082, y = 393, w = 14, h = 28, prob = 0.943

kite - obj_id = 33, x = 464, y = 339, w = 16, h = 18, prob = 0.855

kite - obj_id = 33, x = 300, y = 375, w = 23, h = 32, prob = 0.68

kite - obj_id = 33, x = 760, y = 379, w = 7, h = 9, prob = 0.634

person - obj_id = 0, x = 110, y = 610, w = 51, h = 151, prob = 0.994

person - obj_id = 0, x = 213, y = 698, w = 53, h = 159, prob = 0.993

person - obj_id = 0, x = 1204, y = 450, w = 9, h = 12, prob = 0.872

person - obj_id = 0, x = 37, y = 509, w = 16, h = 51, prob = 0.871

person - obj_id = 0, x = 345, y = 487, w = 9, h = 14, prob = 0.866

person - obj_id = 0, x = 176, y = 539, w = 11, h = 32, prob = 0.832

person - obj_id = 0, x = 21, y = 529, w = 14, h = 26, prob = 0.801

person - obj_id = 0, x = 82, y = 506, w = 25, h = 57, prob = 0.697

person - obj_id = 0, x = 518, y = 506, w = 16, h = 18, prob = 0.606

person - obj_id = 0, x = 692, y = 462, w = 7, h = 6, prob = 0.552

person - obj_id = 0, x = 460, y = 471, w = 7, h = 6, prob = 0.394

person - obj_id = 0, x = 537, y = 514, w = 14, h = 17, prob = 0.381

Summary

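In this article, we used C++ to load the author's weights trained on the COCO dataset and ran detection on an image. The same program can also run on videos and on a webcam (my laptop's GPU is too weak to run the video test). To train on your own dataset, follow the instructions in the GitHub repository to obtain a weights file, then test it as described in this article.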