TensorFlow 1.0 Systematic Study (5): Using TensorBoard (Displaying the Network Structure and Various Data, Visualizing the Training Process)

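Table of Contents

- I. Using TensorBoard to Display the Network Structure

- II. Using TensorBoard to Display Runtime Data of the Network

- III. Using TensorBoard to Visualize the Training Process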

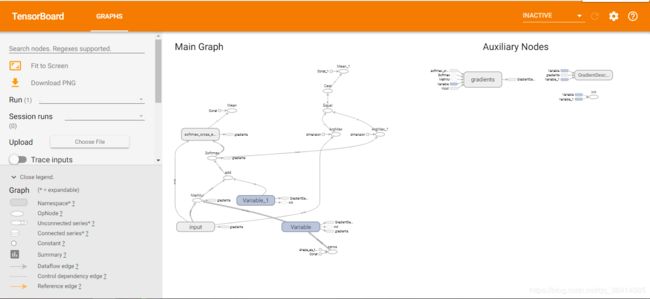

I. Using TensorBoard to Display the Network Structure

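Since the goal here is only to display the network structure, training for a single epoch is enough; there is no need to waste time.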

First, define a name scope:

# Name scope
with tf.name_scope('input'):
    # Define two placeholders
    x = tf.placeholder(tf.float32, [None, 784], name='x-input')
    y = tf.placeholder(tf.float32, [None, 10], name='y-input')

Then add the following line inside the session; its first argument is the directory where the log file is saved:

writer = tf.summary.FileWriter('logs/', sess.graph)

Complete code:

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

# Load the dataset
mnist = input_data.read_data_sets("MNIST_data", one_hot=True)
# Size of each batch
batch_size = 100
# Compute the total number of batches
n_batch = mnist.train.num_examples // batch_size

# Name scope
with tf.name_scope('input'):
    # Define two placeholders
    x = tf.placeholder(tf.float32, [None, 784], name='x-input')
    y = tf.placeholder(tf.float32, [None, 10], name='y-input')

# Create a simple neural network
W = tf.Variable(tf.zeros([784, 10]))
b = tf.Variable(tf.zeros([10]))
logits = tf.matmul(x, W) + b
prediction = tf.nn.softmax(logits)

# Cross-entropy loss paired with softmax; the op applies softmax itself,
# so it must receive the unnormalized logits, not prediction
loss = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y, logits=logits))
# Use gradient descent
train_step = tf.train.GradientDescentOptimizer(0.2).minimize(loss)

init = tf.global_variables_initializer()

# Store the results in a list of booleans
correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(prediction, 1))  # argmax returns the index of the largest value along axis 1
# Compute the accuracy
accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))  # cast converts booleans to floats: True -> 1.0, False -> 0.0

with tf.Session() as sess:
    sess.run(init)
    writer = tf.summary.FileWriter('logs/', sess.graph)
    for epoch in range(1):  # Train for 1 epoch
        for batch in range(n_batch):  # Go through all training images once
            batch_xs, batch_ys = mnist.train.next_batch(batch_size)  # Fetch batch_size images
            sess.run(train_step, feed_dict={x: batch_xs, y: batch_ys})

        test_acc = sess.run(accuracy, feed_dict={x: mnist.test.images, y: mnist.test.labels})
        train_acc = sess.run(accuracy, feed_dict={x: mnist.train.images, y: mnist.train.labels})
        print("epoch: " + str(epoch) + ", Training Accuracy: " + str(train_acc) + ", Testing Accuracy: " + str(test_acc))
    writer.close()  # flush the graph to the event file
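tf.nn.softmax_cross_entropy_with_logits applies softmax internally, so its logits argument should receive the raw scores tf.matmul(x, W) + b. Passing prediction, which has already gone through softmax, applies softmax twice. The plain-Python sketch below re-implements the op's math (softmax, then -sum(label * log p)) to compare the two cases; the sample logits are made up for illustration:

```python
import math


def softmax(z):
    # Subtract the max before exponentiating for numerical stability
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]


def softmax_cross_entropy_with_logits(labels, logits):
    # Same math as the TF op: softmax over the logits, then -sum(label * log p)
    probs = softmax(logits)
    return -sum(t * math.log(p) for t, p in zip(labels, probs))


labels = [0.0, 1.0, 0.0]
logits = [1.0, 4.0, 0.5]        # hypothetical unnormalized scores (wx + b)
prediction = softmax(logits)    # what the network outputs after softmax

correct = softmax_cross_entropy_with_logits(labels, logits)      # intended usage
double = softmax_cross_entropy_with_logits(labels, prediction)   # softmax applied twice
print(round(correct, 4), round(double, 4))
```

With a double softmax the loss can never reach 0: even a perfect one-hot prediction only gets probability e/(e+K-1) after the second softmax, so for MNIST's K = 10 classes the loss stays at or above ln(1 + 9/e) ≈ 1.46, which also flattens the gradients.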

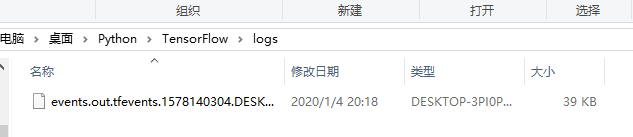

After training, an event file appears in the logs/ directory.

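On Windows, open a command prompt in the directory that contains the logs folder (Shift + right-click in File Explorer) and enter the following command: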

tensorboard --logdir logs --host=127.0.0.1

http://127.0.0.1:6006

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

# 载入数据集

mnist = input_data.read_data_sets("MNIST_data", one_hot=True)

# 每个批次的大小

batch_size = 100

# 计算一共有多少个批次

n_batch = mnist.train.num_examples // batch_size

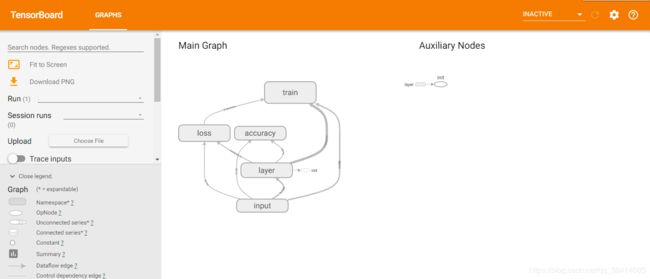

# Name scope
with tf.name_scope('input'):
    # Define two placeholders
    x = tf.placeholder(tf.float32, [None, 784], name='x-input')
    y = tf.placeholder(tf.float32, [None, 10], name='y-input')

with tf.name_scope('layer'):
    # Create a simple neural network
    with tf.name_scope('weights'):
        W = tf.Variable(tf.zeros([784, 10]))
    with tf.name_scope('biases'):
        b = tf.Variable(tf.zeros([10]))
    with tf.name_scope('wx_plus_b'):
        wx_plus_b = tf.matmul(x, W) + b
    with tf.name_scope('softmax'):
        prediction = tf.nn.softmax(wx_plus_b)

with tf.name_scope('loss'):
    # Cross-entropy loss paired with softmax; pass the pre-softmax wx_plus_b as logits
    loss = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y, logits=wx_plus_b))

with tf.name_scope('train'):
    # Use gradient descent
    train_step = tf.train.GradientDescentOptimizer(0.2).minimize(loss)

init = tf.global_variables_initializer()

with tf.name_scope('accuracy'):
    with tf.name_scope('correct_prediction'):
        # Store the results in a list of booleans
        correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(prediction, 1))  # argmax returns the index of the largest value along axis 1
    with tf.name_scope('accuracy'):
        # Compute the accuracy
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))  # cast converts booleans to floats: True -> 1.0, False -> 0.0

with tf.Session() as sess:
    sess.run(init)
    writer = tf.summary.FileWriter('logs/', sess.graph)
    for epoch in range(1):  # Train for 1 epoch
        for batch in range(n_batch):  # Go through all training images once
            batch_xs, batch_ys = mnist.train.next_batch(batch_size)  # Fetch batch_size images
            sess.run(train_step, feed_dict={x: batch_xs, y: batch_ys})

        test_acc = sess.run(accuracy, feed_dict={x: mnist.test.images, y: mnist.test.labels})
        train_acc = sess.run(accuracy, feed_dict={x: mnist.train.images, y: mnist.train.labels})
        print("epoch: " + str(epoch) + ", Training Accuracy: " + str(train_acc) + ", Testing Accuracy: " + str(test_acc))
    writer.close()  # flush the graph to the event file
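The accuracy scope computes two things: correct_prediction compares the index of the largest value in each label row with the one in each prediction row, and accuracy averages those booleans after casting them to 1.0/0.0. A plain-Python sketch of the same computation, with made-up labels and predictions:

```python
def argmax(row):
    # Index of the largest value, like tf.argmax(..., 1) applied to one row
    return max(range(len(row)), key=lambda i: row[i])


def accuracy(labels, predictions):
    # One boolean per sample: is the predicted class the true class?
    correct = [argmax(t) == argmax(p) for t, p in zip(labels, predictions)]
    # Cast True/False to 1.0/0.0 and take the mean
    return sum(float(c) for c in correct) / len(correct)


labels = [[0, 1, 0], [1, 0, 0], [0, 0, 1], [0, 1, 0]]   # one-hot ground truth
predictions = [[0.1, 0.8, 0.1], [0.6, 0.3, 0.1], [0.5, 0.2, 0.3], [0.2, 0.7, 0.1]]
print(accuracy(labels, predictions))  # 0.75: the third sample is misclassified
```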

II. Using TensorBoard to Display Runtime Data of the Network

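First, define a helper function that computes summary statistics for the weights W and the biases b so they can be viewed in TensorBoard: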

def variable_summaries(var):
    with tf.name_scope('summaries'):
        mean = tf.reduce_mean(var)
        tf.summary.scalar('mean', mean)  # mean
        with tf.name_scope('stddev'):
            stddev = tf.sqrt(tf.reduce_mean(tf.square(var - mean)))
        tf.summary.scalar('stddev', stddev)  # standard deviation
        tf.summary.scalar('max', tf.reduce_max(var))  # maximum
        tf.summary.scalar('min', tf.reduce_min(var))  # minimum
        tf.summary.histogram('histogram', var)  # histogram
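To see exactly which numbers variable_summaries records, here is the same set of statistics in plain Python. The standard deviation is the population one, sqrt(mean((v - mean)^2)), matching the TF code. The equal-width histogram is only an illustration of the idea; summary_stats is a hypothetical helper, and TensorBoard's own bucketing is different:

```python
import math


def summary_stats(values, bins=5):
    # Same quantities variable_summaries records, computed in plain Python
    mean = sum(values) / len(values)
    # Population standard deviation, as in tf.sqrt(tf.reduce_mean(tf.square(var - mean)))
    stddev = math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))
    lo, hi = min(values), max(values)
    # Equal-width buckets; fall back to width 1.0 when all values are equal
    width = (hi - lo) / bins or 1.0
    counts = [0] * bins
    for v in values:
        idx = min(int((v - lo) / width), bins - 1)  # clamp the maximum into the last bucket
        counts[idx] += 1
    return {"mean": mean, "stddev": stddev, "max": hi, "min": lo, "histogram": counts}


stats = summary_stats([1.0, 2.0, 3.0, 4.0], bins=2)
print(stats)
```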

Inside the name scopes of W and b, add respectively:

variable_summaries(W)

variable_summaries(b)

Inside the loss name scope, add:

tf.summary.scalar('loss', loss)

Inside the accuracy name scope, add:

tf.summary.scalar('accuracy', accuracy)

Then merge all the summaries into a single op:

# Merge all summaries

merged = tf.summary.merge_all()

Then change the training step inside the session as follows:

summary, _ = sess.run([merged, train_step], feed_dict={x: batch_xs, y: batch_ys})  # merged returns the serialized summaries, stored in summary

writer.add_summary(summary, epoch)
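writer.add_summary(summary, epoch) uses the epoch number as the step, so each curve gets one point per epoch. If you would rather log every batch, one option (an alternative, not what this post's code does) is to pass a global step that keeps increasing across epochs. A minimal sketch; global_step is a hypothetical helper:

```python
def global_step(epoch, batch, n_batch):
    # Number of batches processed before this one, counting from 0
    return epoch * n_batch + batch


# read_data_sets keeps 55000 training images by default (5000 go to validation)
n_batch = 55000 // 100
steps = [global_step(e, b, n_batch) for e in range(2) for b in range(n_batch)]
print(n_batch, steps[0], steps[-1])
```

Inside the inner loop you would then call writer.add_summary(summary, global_step(epoch, batch, n_batch)), at the cost of a much larger event file.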

Complete code:

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

# Load the dataset
mnist = input_data.read_data_sets("MNIST_data", one_hot=True)
# Size of each batch
batch_size = 100
# Compute the total number of batches
n_batch = mnist.train.num_examples // batch_size

def variable_summaries(var):
    with tf.name_scope('summaries'):
        mean = tf.reduce_mean(var)
        tf.summary.scalar('mean', mean)  # mean
        with tf.name_scope('stddev'):
            stddev = tf.sqrt(tf.reduce_mean(tf.square(var - mean)))
        tf.summary.scalar('stddev', stddev)  # standard deviation
        tf.summary.scalar('max', tf.reduce_max(var))  # maximum
        tf.summary.scalar('min', tf.reduce_min(var))  # minimum
        tf.summary.histogram('histogram', var)  # histogram

# Name scope
with tf.name_scope('input'):
    # Define two placeholders
    x = tf.placeholder(tf.float32, [None, 784], name='x-input')
    y = tf.placeholder(tf.float32, [None, 10], name='y-input')

with tf.name_scope('layer'):
    # Create a simple neural network
    with tf.name_scope('weights'):
        W = tf.Variable(tf.zeros([784, 10]))
        variable_summaries(W)
    with tf.name_scope('biases'):
        b = tf.Variable(tf.zeros([10]))
        variable_summaries(b)
    with tf.name_scope('wx_plus_b'):
        wx_plus_b = tf.matmul(x, W) + b
    with tf.name_scope('softmax'):
        prediction = tf.nn.softmax(wx_plus_b)

with tf.name_scope('loss'):
    # Cross-entropy loss paired with softmax; pass the pre-softmax wx_plus_b as logits
    loss = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y, logits=wx_plus_b))
    tf.summary.scalar('loss', loss)

with tf.name_scope('train'):
    # Use gradient descent
    train_step = tf.train.GradientDescentOptimizer(0.2).minimize(loss)

init = tf.global_variables_initializer()

with tf.name_scope('accuracy'):
    with tf.name_scope('correct_prediction'):
        # Store the results in a list of booleans
        correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(prediction, 1))  # argmax returns the index of the largest value along axis 1
    with tf.name_scope('accuracy'):
        # Compute the accuracy
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))  # cast converts booleans to floats: True -> 1.0, False -> 0.0
        tf.summary.scalar('accuracy', accuracy)

# Merge all summaries
merged = tf.summary.merge_all()

with tf.Session() as sess:
    sess.run(init)
    writer = tf.summary.FileWriter('logs/', sess.graph)
    for epoch in range(51):  # Train for 51 epochs
        for batch in range(n_batch):  # Go through all training images once
            batch_xs, batch_ys = mnist.train.next_batch(batch_size)  # Fetch batch_size images
            summary, _ = sess.run([merged, train_step], feed_dict={x: batch_xs, y: batch_ys})  # merged returns the serialized summaries, stored in summary
        # Record the summary of the last batch of this epoch, using epoch as the step
        writer.add_summary(summary, epoch)

        test_acc = sess.run(accuracy, feed_dict={x: mnist.test.images, y: mnist.test.labels})
        train_acc = sess.run(accuracy, feed_dict={x: mnist.train.images, y: mnist.train.labels})
        print("epoch: " + str(epoch) + ", Training Accuracy: " + str(train_acc) + ", Testing Accuracy: " + str(test_acc))
    writer.close()  # flush the remaining events to disk

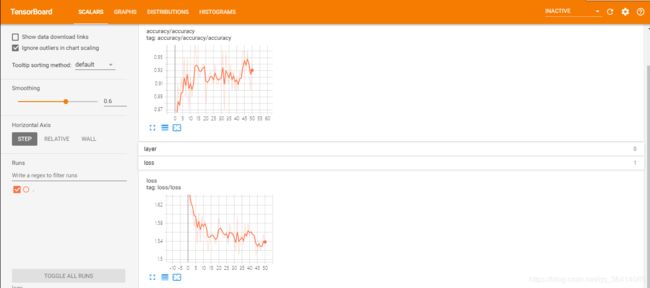

Then open http://127.0.0.1:6006 in a browser.

You will see:

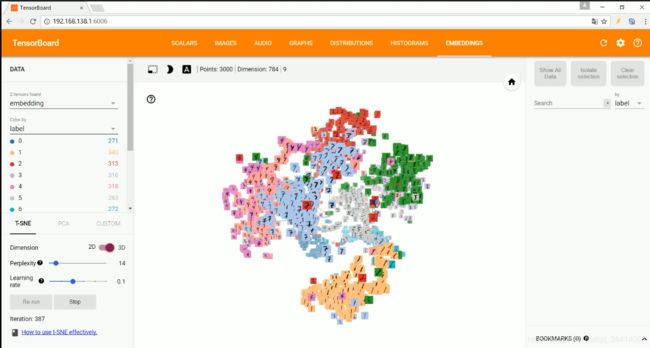
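Each run writes a new event file. If several runs write into the same logs/ folder, TensorBoard treats them as a single run and their curves can get mixed together; a common practice is to give each run its own subdirectory under the logdir, which TensorBoard then lists as a separate run. A minimal sketch; make_run_dir and the run-<timestamp> naming are hypothetical:

```python
import os
import tempfile
import time


def make_run_dir(base="logs", now=None):
    # One subdirectory per run, e.g. logs/run-20240101-120000 (hypothetical naming)
    stamp = time.strftime("%Y%m%d-%H%M%S", time.localtime(now))
    path = os.path.join(base, "run-" + stamp)
    os.makedirs(path, exist_ok=True)
    return path


base = tempfile.mkdtemp()          # stand-in for the real logs/ folder
run_dir = make_run_dir(base, now=0)
print(run_dir)
```

You would pass run_dir to tf.summary.FileWriter(run_dir, sess.graph) and still start TensorBoard with --logdir logs, so all runs show up side by side.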

III. Using TensorBoard to Visualize the Training Process

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data
from tensorflow.contrib.tensorboard.plugins import projector

# Load the dataset
mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)
# Number of training steps
max_steps = 1001
# Number of test images shown in the projector
image_num = 3000
# Working directory (path of the current .py file; adjust to your machine)
DIR = "D:/Tensorflow/"

# Create the session
sess = tf.Session()

# Load the images into a non-trainable variable to be visualized
embedding = tf.Variable(tf.stack(mnist.test.images[:image_num]), trainable=False, name='embedding')

# Parameter summaries
def variable_summaries(var):
    with tf.name_scope('summaries'):
        mean = tf.reduce_mean(var)
        tf.summary.scalar('mean', mean)  # mean
        with tf.name_scope('stddev'):
            stddev = tf.sqrt(tf.reduce_mean(tf.square(var - mean)))
        tf.summary.scalar('stddev', stddev)  # standard deviation
        tf.summary.scalar('max', tf.reduce_max(var))  # maximum
        tf.summary.scalar('min', tf.reduce_min(var))  # minimum
        tf.summary.histogram('histogram', var)  # histogram

# Name scope
with tf.name_scope('input'):
    # None means the first dimension can have any length
    x = tf.placeholder(tf.float32, [None, 784], name='x-input')
    # Ground-truth labels
    y = tf.placeholder(tf.float32, [None, 10], name='y-input')

# Show input images
with tf.name_scope('input_reshape'):
    image_shaped_input = tf.reshape(x, [-1, 28, 28, 1])
    tf.summary.image('input', image_shaped_input, 10)

with tf.name_scope('layer'):
    # Create a simple neural network
    with tf.name_scope('weights'):
        W = tf.Variable(tf.zeros([784, 10]), name='W')
        variable_summaries(W)
    with tf.name_scope('biases'):
        b = tf.Variable(tf.zeros([10]), name='b')
        variable_summaries(b)
    with tf.name_scope('wx_plus_b'):
        wx_plus_b = tf.matmul(x, W) + b
    with tf.name_scope('softmax'):
        prediction = tf.nn.softmax(wx_plus_b)

with tf.name_scope('loss'):
    # Cross-entropy loss (takes the pre-softmax wx_plus_b as logits)
    loss = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y, logits=wx_plus_b))
    tf.summary.scalar('loss', loss)

with tf.name_scope('train'):
    # Use gradient descent
    train_step = tf.train.GradientDescentOptimizer(0.5).minimize(loss)

# Initialize the variables
sess.run(tf.global_variables_initializer())

with tf.name_scope('accuracy'):
    with tf.name_scope('correct_prediction'):
        # Store the results in a list of booleans
        correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(prediction, 1))  # argmax returns the index of the largest value along axis 1
    with tf.name_scope('accuracy'):
        # Compute the accuracy
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))  # convert correct_prediction to float32
        tf.summary.scalar('accuracy', accuracy)

# Generate the metadata file: one label per line for the first image_num test images
tf.gfile.MakeDirs(DIR + 'projector/projector')  # make sure the output directory exists
if tf.gfile.Exists(DIR + 'projector/projector/metadata.tsv'):
    tf.gfile.DeleteRecursively(DIR + 'projector/projector/metadata.tsv')
with open(DIR + 'projector/projector/metadata.tsv', 'w') as f:
    labels = sess.run(tf.argmax(mnist.test.labels[:], 1))
    for i in range(image_num):
        f.write(str(labels[i]) + '\n')

# Merge all summaries
merged = tf.summary.merge_all()

projector_writer = tf.summary.FileWriter(DIR + 'projector/projector', sess.graph)
saver = tf.train.Saver()
config = projector.ProjectorConfig()
embed = config.embeddings.add()
embed.tensor_name = embedding.name
embed.metadata_path = DIR + 'projector/projector/metadata.tsv'
# The sprite image must already exist at this path; this script does not create it
embed.sprite.image_path = DIR + 'projector/data/mnist_10k_sprite.png'
embed.sprite.single_image_dim.extend([28, 28])
projector.visualize_embeddings(projector_writer, config)

for i in range(max_steps):
    # 100 samples per batch
    batch_xs, batch_ys = mnist.train.next_batch(100)
    # Collect full trace information for this step (viewable per step in the graph tab)
    run_options = tf.RunOptions(trace_level=tf.RunOptions.FULL_TRACE)
    run_metadata = tf.RunMetadata()
    summary, _ = sess.run([merged, train_step], feed_dict={x: batch_xs, y: batch_ys}, options=run_options, run_metadata=run_metadata)
    projector_writer.add_run_metadata(run_metadata, 'step%03d' % i)
    projector_writer.add_summary(summary, i)

    if i % 100 == 0:
        acc = sess.run(accuracy, feed_dict={x: mnist.test.images, y: mnist.test.labels})
        print("Iter " + str(i) + ", Testing Accuracy= " + str(acc))

saver.save(sess, DIR + 'projector/projector/a_model.ckpt', global_step=max_steps)
projector_writer.close()
sess.close()
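The projector relies on two side files. metadata.tsv holds one label per line, in the same order as the rows of embedding; the code above derives each label with argmax over the one-hot vector. The sprite is one large image that tiles the 28x28 thumbnails in row-first order, with the first point at the top-left. The plain-Python sketch below shows both ideas; the helper names are hypothetical, and the square grid of ceil(sqrt(n)) thumbnails per side is an assumption (a common way to build sprites), not something the original code specifies:

```python
import math


def one_hot_to_label(row):
    # Same as taking argmax over a single one-hot label vector
    return max(range(len(row)), key=lambda i: row[i])


def metadata_lines(one_hot_labels, image_num):
    # One label per line, in the same order as the embedding rows
    return [str(one_hot_to_label(r)) + "\n" for r in one_hot_labels[:image_num]]


def sprite_position(index, n_images, thumb=28):
    # Square grid with ceil(sqrt(n)) thumbnails per side, filled row by row
    per_row = math.ceil(math.sqrt(n_images))
    row, col = divmod(index, per_row)
    # (x, y) pixel offset of the thumbnail's top-left corner
    return col * thumb, row * thumb


labels = [[0, 0, 1], [1, 0, 0], [0, 1, 0]]  # hypothetical 3-class one-hot labels
print("".join(metadata_lines(labels, 2)), end="")
print(sprite_position(0, 10000), sprite_position(101, 10000))
```

Under this layout, 10,000 thumbnails form a 100 x 100 grid, i.e. a 2800 x 2800 pixel sprite.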