TensorFlow 2.0 Implementation of YOLOv3 (Part 3): yolov3.py

Contents

- About this article

- Parameters

- YOLOv3

- decode

- bbox_iou

- bbox_giou

- compute_loss

- Complete code

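About this article

This series explains the code provided by malin9402 on GitHub. In this article, we explain the yolov3.py file of the YOLOv3 project.

If you only want to run the code from GitHub, see the article "Explanation of the YOLOv3 code".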

Parameters

```python
import numpy as np
import tensorflow as tf

import core.utils as utils
import core.common as common
import core.backbone as backbone
from core.config import cfg

NUM_CLASS = len(utils.read_class_names(cfg.YOLO.CLASSES))
ANCHORS = utils.get_anchors(cfg.YOLO.ANCHORS)
STRIDES = np.array(cfg.YOLO.STRIDES)        # [8, 16, 32]
IOU_LOSS_THRESH = cfg.YOLO.IOU_LOSS_THRESH  # IoU threshold, set to 0.5
```

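Where:

- NUM_CLASS: the number of object classes to detect;

- ANCHORS: the sizes of the prior (anchor) boxes, three anchors for each of the three scales;

- STRIDES: for each of the three feature maps, the length in the original image that corresponds to one unit (one grid cell) of the feature map;

- IOU_LOSS_THRESH: the IoU threshold used in the confidence loss.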
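A small NumPy sketch makes these parameters concrete. It assumes the standard YOLOv3 COCO anchors (given in pixels), a 416x416 input and 80 classes; in the project these values come from `cfg`, so treat the numbers as illustrative. The anchors are converted to grid units (pixels divided by stride), which is consistent with `decode` later multiplying `ANCHORS[i]` by `STRIDES[i]` to get back to pixels.

```python
import numpy as np

# Assumed values: standard YOLOv3 COCO anchors in pixels, 3 anchors per scale
anchors_px = np.array([
    [[10, 13], [16, 30], [33, 23]],       # small objects, stride 8
    [[30, 61], [62, 45], [59, 119]],      # medium objects, stride 16
    [[116, 90], [156, 198], [373, 326]],  # large objects, stride 32
], dtype=np.float32)
strides = np.array([8, 16, 32])
input_size = 416
num_class = 80

# Anchors in grid units (pixels / stride), shape (3, 3, 2)
anchors_grid = anchors_px / strides[:, None, None]

# Feature-map size of each scale and number of channels per feature map
grid_sizes = input_size // strides   # one value per scale
channels = 3 * (5 + num_class)       # 3 anchors x (4 box + 1 conf + classes)

print(anchors_grid[0])
print(grid_sizes, channels)
```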

YOLOv3

The network was explained in detail in the article on the YOLOv3 network structure, so it is not repeated here.

decode

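Purpose: decode the output of the YOLOv3 network.

Input: one output of the YOLOv3 network (one of the three feature maps).

Steps:

- Suppose the input has shape (1, 52, 52, 255). Here 1 is the batch size (one image at a time); 52 is the height and width of the feature map, so the feature map consists of 52x52 grid cells; 255 is the number of channels in each cell (3 x 85 with 80 classes).

- Reshape the input to (1, 52, 52, 3, 85): 3 because each cell has three anchor boxes; 85 consists of 4 box values (two center offsets and two width/height offsets), 1 confidence score (whether the box contains an object) and 80 class probabilities.

- Slice the reshaped input into separate arrays;

- Generate relative coordinates for each anchor box (build the grid);

- Compute the absolute center coordinates and the width and height of the predicted boxes;

- Compute the confidence and class scores of the predicted boxes.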
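The reshape and slicing steps above can be tried out with NumPy on random data. This is a sketch that assumes 80 classes and a single 52x52 feature map; like `tf.reshape` in `decode`, it assumes the 255 channels are laid out as 3 consecutive groups of 85 values, one group per anchor.

```python
import numpy as np

num_class = 80
batch_size, output_size = 1, 52

# Random stand-in for one raw YOLOv3 output feature map: (1, 52, 52, 255)
conv_output = np.random.randn(batch_size, output_size, output_size, 3 * (5 + num_class)).astype(np.float32)

# Split the 255 channels into 3 anchors x 85 values per anchor
conv_output = conv_output.reshape(batch_size, output_size, output_size, 3, 5 + num_class)

raw_dxdy = conv_output[..., 0:2]  # center offsets (t_x, t_y)
raw_dwdh = conv_output[..., 2:4]  # width/height offsets (t_w, t_h)
raw_conf = conv_output[..., 4:5]  # objectness logit
raw_prob = conv_output[..., 5:]   # class logits

print(conv_output.shape, raw_dxdy.shape, raw_conf.shape, raw_prob.shape)
```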

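The figure below draws an anchor box and a predicted box on the original image: the black dashed box is the anchor (prior) box and the blue box is the predicted box.

- $b_w$ and $b_h$ are the width and height of the predicted box; $p_w$ and $p_h$ are the width and height of the anchor box;

- $t_x$ and $t_y$ are the raw center offsets predicted by the network; after a sigmoid, $\sigma(t_x)$ and $\sigma(t_y)$ give the offset of the object's center from the top-left corner of its grid cell, and $c_x$ and $c_y$ are the coordinates of that grid cell's top-left corner.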
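Written out, the relations in the figure are the standard YOLOv3 decoding equations, where $t_w$ and $t_h$ are the raw width/height offsets predicted by the network and all quantities are in grid units:

$$
\begin{aligned}
b_x &= \sigma(t_x) + c_x, \\
b_y &= \sigma(t_y) + c_y, \\
b_w &= p_w e^{t_w}, \\
b_h &= p_h e^{t_h}.
\end{aligned}
$$

In `decode`, `xy_grid` plays the role of $(c_x, c_y)$, `ANCHORS[i]` plays the role of $(p_w, p_h)$, and multiplying by `STRIDES[i]` converts the result from grid units to pixels in the input image.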

```python
def decode(conv_output, i=0):
    conv_shape = tf.shape(conv_output)
    batch_size = conv_shape[0]   # number of samples
    output_size = conv_shape[1]  # feature map size

    conv_output = tf.reshape(conv_output, (batch_size, output_size, output_size, 3, 5 + NUM_CLASS))

    conv_raw_dxdy = conv_output[:, :, :, :, 0:2]  # center offsets
    conv_raw_dwdh = conv_output[:, :, :, :, 2:4]  # width/height offsets
    conv_raw_conf = conv_output[:, :, :, :, 4:5]  # box confidence (logit)
    conv_raw_prob = conv_output[:, :, :, :, 5:]   # class scores (logits)

    # 1. Generate each anchor's relative coordinates on the feature map, measured from the
    #    top-left corner in units of grid cells (i.e. the value says which cell it is in)
    y = tf.tile(tf.range(output_size, dtype=tf.int32)[:, tf.newaxis], [1, output_size])  # shape=(52, 52)
    x = tf.tile(tf.range(output_size, dtype=tf.int32)[tf.newaxis, :], [output_size, 1])  # shape=(52, 52)
    xy_grid = tf.concat([x[:, :, tf.newaxis], y[:, :, tf.newaxis]], axis=-1)           # shape=(52, 52, 2)
    xy_grid = tf.tile(xy_grid[tf.newaxis, :, :, tf.newaxis, :], [batch_size, 1, 1, 3, 1])  # shape=(1, 52, 52, 3, 2)
    xy_grid = tf.cast(xy_grid, tf.float32)

    # 2. Compute the absolute center coordinates and the width/height of the predicted boxes
    # Predicted center, from the formula in the figure: xy_grid is the top-left corner of each
    # cell (which row/column), and STRIDES[i] is the length of one cell in the original image
    pred_xy = (tf.sigmoid(conv_raw_dxdy) + xy_grid) * STRIDES[i]
    # Predicted width and height: ANCHORS[i] * STRIDES[i] is the anchor size in the original image
    pred_wh = (tf.exp(conv_raw_dwdh) * ANCHORS[i]) * STRIDES[i]
    pred_xywh = tf.concat([pred_xy, pred_wh], axis=-1)

    # 3. Compute the confidence and class scores of the predicted boxes
    pred_conf = tf.sigmoid(conv_raw_conf)
    pred_prob = tf.sigmoid(conv_raw_prob)

    return tf.concat([pred_xywh, pred_conf, pred_prob], axis=-1)
```
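A worked example for a single cell helps check the arithmetic in `decode`. This NumPy sketch assumes stride 8 (the 52x52 scale of a 416x416 input) and an anchor of (1.25, 1.625) grid units; with all raw outputs equal to 0, the sigmoid gives 0.5 and the exponential gives 1, so the box is centered in its cell and has exactly the anchor's size.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

stride = 8                          # assumed: 52x52 scale of a 416x416 input
anchor = np.array([1.25, 1.625])    # assumed anchor (p_w, p_h) in grid units
cell = np.array([3.0, 5.0])         # (c_x, c_y): column 3, row 5
t_xy = np.array([0.0, 0.0])         # raw center offsets
t_wh = np.array([0.0, 0.0])         # raw width/height offsets

pred_xy = (sigmoid(t_xy) + cell) * stride  # center in input-image pixels
pred_wh = np.exp(t_wh) * anchor * stride   # width/height in input-image pixels
print(pred_xy, pred_wh)                    # [28. 44.] [10. 13.]
```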

bbox_iou

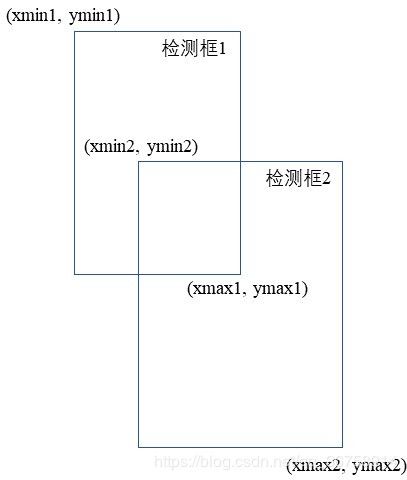

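The bbox_iou function computes the IoU between two boxes. utils.py also contains a function, bboxes_iou, that computes IoU; the two differ in how the input boxes are described:

- bboxes_iou takes two boxes (or one box against many) described by their top-left and bottom-right corner coordinates.

- bbox_iou takes two boxes (or one box against many) described by their center coordinates plus width and height.

The IoU is the area of the intersection of two boxes divided by the area of their union; the larger it is, the more closely the two boxes coincide. This is illustrated in the figure: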

```python
def bbox_iou(boxes1, boxes2):
    boxes1_area = boxes1[..., 2] * boxes1[..., 3]  # area of the first box
    boxes2_area = boxes2[..., 2] * boxes2[..., 3]  # area of the second box

    # Convert (x, y, w, h) to top-left + bottom-right corners
    boxes1 = tf.concat([boxes1[..., :2] - boxes1[..., 2:] * 0.5,
                        boxes1[..., :2] + boxes1[..., 2:] * 0.5], axis=-1)
    boxes2 = tf.concat([boxes2[..., :2] - boxes2[..., 2:] * 0.5,
                        boxes2[..., :2] + boxes2[..., 2:] * 0.5], axis=-1)

    left_up = tf.maximum(boxes1[..., :2], boxes2[..., :2])     # in the figure, left_up = [xmin2, ymin2]
    right_down = tf.minimum(boxes1[..., 2:], boxes2[..., 2:])  # in the figure, right_down = [xmax1, ymax1]

    inter_section = tf.maximum(right_down - left_up, 0.0)       # intersection width/height (0 if no overlap)
    inter_area = inter_section[..., 0] * inter_section[..., 1]  # intersection area
    union_area = boxes1_area + boxes2_area - inter_area         # union area

    return 1.0 * inter_area / union_area
```
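For checking values without TensorFlow, here is a NumPy port of the `bbox_iou` above; the name `bbox_iou_np` is hypothetical and not part of the project. It takes (center x, center y, w, h) boxes and broadcasts the same way, so one box can be compared against many.

```python
import numpy as np

def bbox_iou_np(boxes1, boxes2):
    """IoU of boxes given as (center_x, center_y, w, h); broadcasts like bbox_iou."""
    boxes1 = np.asarray(boxes1, dtype=np.float64)
    boxes2 = np.asarray(boxes2, dtype=np.float64)
    area1 = boxes1[..., 2] * boxes1[..., 3]
    area2 = boxes2[..., 2] * boxes2[..., 3]
    # Convert (x, y, w, h) to (xmin, ymin, xmax, ymax)
    b1 = np.concatenate([boxes1[..., :2] - boxes1[..., 2:] / 2, boxes1[..., :2] + boxes1[..., 2:] / 2], axis=-1)
    b2 = np.concatenate([boxes2[..., :2] - boxes2[..., 2:] / 2, boxes2[..., :2] + boxes2[..., 2:] / 2], axis=-1)
    left_up = np.maximum(b1[..., :2], b2[..., :2])
    right_down = np.minimum(b1[..., 2:], b2[..., 2:])
    inter = np.maximum(right_down - left_up, 0.0)
    inter_area = inter[..., 0] * inter[..., 1]
    return inter_area / (area1 + area2 - inter_area)

# Corners (-1, -1, 1, 1) and (0, 0, 2, 2): intersection 1, union 4 + 4 - 1 = 7
iou_example = bbox_iou_np([0, 0, 2, 2], [1, 1, 2, 2])
print(iou_example)  # 1/7
```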

bbox_giou

In an article by the original author of the code, GIoU (Generalized IoU), a newer way of optimizing bounding boxes, is introduced in some detail. Here we summarize that article.

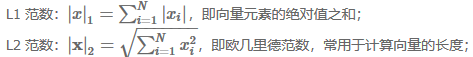

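A bounding box is usually described by its top-left and bottom-right corners, (xmin, ymin, xmax, ymax), so it is in effect a vector, and the distance between vectors is usually measured with the L1 or L2 norm. However, predictions at the same L1 or L2 distance from the ground truth can differ greatly in detection quality, most directly visible as large differences in their IoU with the ground-truth box. This shows that the L1 and L2 norms do not reflect detection quality well.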
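A quick numeric check of this claim, as a NumPy sketch with made-up boxes: each of the three predictions moves the corners of a 10x10 ground-truth box by the same total amount, so all have L1 = 8 and L2 ≈ 5.66, yet their IoU values are about 0.429, 0.200 and 0.510.

```python
import numpy as np

def iou_xyxy(a, b):
    """IoU of two boxes given as (xmin, ymin, xmax, ymax)."""
    iw = max(min(a[2], b[2]) - max(a[0], b[0]), 0.0)
    ih = max(min(a[3], b[3]) - max(a[1], b[1]), 0.0)
    inter = iw * ih
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    return inter / (area_a + area_b - inter)

gt = np.array([0, 0, 10, 10], dtype=float)
preds = {
    "shifted": np.array([4, 0, 14, 10], dtype=float),   # moved right by 4
    "squashed": np.array([0, 4, 10, 6], dtype=float),   # height shrunk to 2
    "enlarged": np.array([0, 0, 14, 14], dtype=float),  # grown to 14x14
}

results = {}
for name, p in preds.items():
    l1 = float(np.abs(p - gt).sum())
    l2 = float(np.linalg.norm(p - gt))
    iou = float(iou_xyxy(gt, p))
    results[name] = (l1, l2, iou)
    print(f"{name}: L1={l1:.2f} L2={l2:.2f} IoU={iou:.3f}")
```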

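Because IoU and GIoU can differ greatly even when the L1 or L2 norms are identical, using an L norm to measure the distance between boxes is inappropriate. For this reason, IoU is commonly used to measure the similarity between two bounding boxes. However, the author found that IoU has two drawbacks that make it poorly suited as a loss function: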


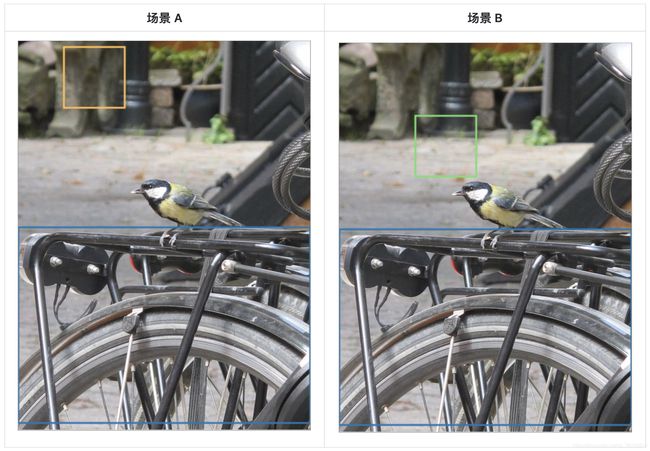

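- When the predicted box and the ground-truth box do not overlap, the IoU is 0, so the gradient of the loss is also 0 and the loss cannot be optimized. As shown in the figure below, scenes A and B both have an IoU of 0, yet B is clearly the better prediction because the two boxes are closer (smaller L norm).

- Even when the predicted and ground-truth boxes overlap with the same IoU, the detection quality can differ considerably, as shown in the figure below.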

In the three figures above the IoU is 0.33 in every case, but the GIoU values are 0.33, 0.24 and -0.1 respectively: the better the two boxes overlap and are aligned, the higher the GIoU.


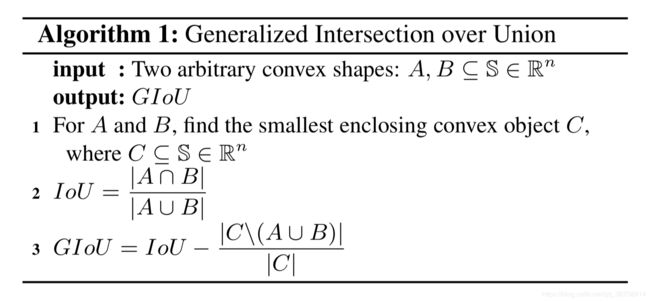

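GIoU is computed as follows, where $A$ and $B$ are the two boxes and $C$ is the smallest enclosing convex object containing both:

$$
GIoU = IoU - \frac{|C \setminus (A \cup B)|}{|C|}
$$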

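The smallest enclosing convex object C is the smallest convex shape that contains both boxes; for axis-aligned boxes, as in the code, it is simply the smallest rectangle enclosing both. For example, in scenes A and B above, C has the following shapes: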


The region enclosed in green is the smallest enclosing convex object C.


In the code, the inputs are two sets of boxes (center coordinates plus width and height), and the output is their GIoU.

```python
def bbox_giou(boxes1, boxes2):
    # Convert (x, y, w, h) to top-left + bottom-right corners
    boxes1 = tf.concat([boxes1[..., :2] - boxes1[..., 2:] * 0.5,
                        boxes1[..., :2] + boxes1[..., 2:] * 0.5], axis=-1)
    boxes2 = tf.concat([boxes2[..., :2] - boxes2[..., 2:] * 0.5,
                        boxes2[..., :2] + boxes2[..., 2:] * 0.5], axis=-1)

    # Guarantee (xmin, ymin) <= (xmax, ymax). This only matters if a width or height is negative;
    # for boxes with non-negative w and h it leaves the coordinates unchanged.
    boxes1 = tf.concat([tf.minimum(boxes1[..., :2], boxes1[..., 2:]),
                        tf.maximum(boxes1[..., :2], boxes1[..., 2:])], axis=-1)
    boxes2 = tf.concat([tf.minimum(boxes2[..., :2], boxes2[..., 2:]),
                        tf.maximum(boxes2[..., :2], boxes2[..., 2:])], axis=-1)

    boxes1_area = (boxes1[..., 2] - boxes1[..., 0]) * (boxes1[..., 3] - boxes1[..., 1])  # area of the first box
    boxes2_area = (boxes2[..., 2] - boxes2[..., 0]) * (boxes2[..., 3] - boxes2[..., 1])  # area of the second box

    left_up = tf.maximum(boxes1[..., :2], boxes2[..., :2])     # in the figure, left_up = [xmin2, ymin2]
    right_down = tf.minimum(boxes1[..., 2:], boxes2[..., 2:])  # in the figure, right_down = [xmax1, ymax1]

    inter_section = tf.maximum(right_down - left_up, 0.0)       # intersection width/height
    inter_area = inter_section[..., 0] * inter_section[..., 1]  # intersection area
    union_area = boxes1_area + boxes2_area - inter_area         # union area
    iou = inter_area / union_area

    enclose_left_up = tf.minimum(boxes1[..., :2], boxes2[..., :2])     # in the figure, enclose_left_up = [xmin1, ymin1]
    enclose_right_down = tf.maximum(boxes1[..., 2:], boxes2[..., 2:])  # in the figure, enclose_right_down = [xmax2, ymax2]
    enclose = tf.maximum(enclose_right_down - enclose_left_up, 0.0)
    enclose_area = enclose[..., 0] * enclose[..., 1]  # area of the smallest enclosing box C

    giou = iou - 1.0 * (enclose_area - union_area) / enclose_area

    return giou
```
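A NumPy port of `bbox_giou` (the name `bbox_giou_np` is hypothetical) reproduces the behavior described for scenes A and B: two non-overlapping predictions both have IoU 0, but the one closer to the ground truth gets the higher GIoU. The boxes are made up for illustration.

```python
import numpy as np

def bbox_giou_np(boxes1, boxes2):
    """GIoU of boxes given as (center_x, center_y, w, h), following bbox_giou."""
    b1 = np.asarray(boxes1, dtype=np.float64)
    b2 = np.asarray(boxes2, dtype=np.float64)
    b1 = np.concatenate([b1[..., :2] - b1[..., 2:] / 2, b1[..., :2] + b1[..., 2:] / 2], axis=-1)
    b2 = np.concatenate([b2[..., :2] - b2[..., 2:] / 2, b2[..., :2] + b2[..., 2:] / 2], axis=-1)
    area1 = (b1[..., 2] - b1[..., 0]) * (b1[..., 3] - b1[..., 1])
    area2 = (b2[..., 2] - b2[..., 0]) * (b2[..., 3] - b2[..., 1])
    inter = np.maximum(np.minimum(b1[..., 2:], b2[..., 2:]) - np.maximum(b1[..., :2], b2[..., :2]), 0.0)
    inter_area = inter[..., 0] * inter[..., 1]
    union_area = area1 + area2 - inter_area
    iou = inter_area / union_area
    # Smallest axis-aligned box C enclosing both boxes
    enclose = np.maximum(b1[..., 2:], b2[..., 2:]) - np.minimum(b1[..., :2], b2[..., :2])
    enclose_area = enclose[..., 0] * enclose[..., 1]
    return iou - (enclose_area - union_area) / enclose_area

gt = [0, 0, 2, 2]
near = [3, 0, 2, 2]  # no overlap, gap of 1
far = [5, 0, 2, 2]   # no overlap, gap of 3
g_near = bbox_giou_np(gt, near)
g_far = bbox_giou_np(gt, far)
print(g_near, g_far)  # about -0.2 and -0.43
```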

compute_loss

The compute_loss function computes the loss.

The loss consists of three parts: the box regression loss, the confidence loss and the classification loss.

Box regression loss

Computation:

- Take respond_bbox from the label's confidence channel: it is 1 for an anchor responsible for a ground-truth object and 0 otherwise;

- $bbox\_loss\_scale = 2 - \dfrac{\text{ground-truth box area}}{\text{input image area}}$, which gives small boxes a larger weight (between 1 and 2);

- Loss: $giou\_loss = respond\_bbox \times bbox\_loss\_scale \times (1 - giou)$.
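The effect of `bbox_loss_scale` is easy to see numerically. This sketch assumes a 416x416 input; `bbox_loss_scale` and `giou_loss` here are hypothetical standalone helpers taking the ground-truth width and height in pixels. For the same GIoU, a small box is penalized almost twice as much as an image-sized box.

```python
def bbox_loss_scale(w, h, input_size=416):
    """2 - (ground-truth box area / input image area)."""
    return 2.0 - (w * h) / (input_size ** 2)

def giou_loss(respond_bbox, w, h, giou, input_size=416):
    """Box regression loss for one anchor; zero when no object is assigned."""
    return respond_bbox * bbox_loss_scale(w, h, input_size) * (1.0 - giou)

small = giou_loss(1.0, 32, 32, giou=0.5)    # small box, mediocre fit
large = giou_loss(1.0, 416, 416, giou=0.5)  # image-sized box, same fit
print(small, large)
```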

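Confidence loss

Computation:

- For each predicted box, compute its IoU with every ground-truth box;

- Take the largest of these IoU values;

- Anchors with an assigned object (respond_bbox = 1) are foreground boxes (positive samples); an anchor with no assigned object whose maximum IoU is below the threshold is treated as containing no object, i.e. a background box (negative sample);

- Positive-sample error: simply the cross-entropy loss;

- Negative-sample error: also a cross-entropy loss, except that a box with no assigned object whose maximum IoU exceeds the threshold is ignored and contributes no loss;

- Confidence loss = positive-sample error + negative-sample error, with both terms multiplied by the focal weight conf_focal = (respond_bbox - pred_conf)^2.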
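The steps above can be mirrored in NumPy for a handful of anchors. This is a sketch with a hypothetical helper `conf_loss_np`; the cross-entropy uses the numerically stable form documented for `tf.nn.sigmoid_cross_entropy_with_logits`, `max(x, 0) - x * z + log(1 + exp(-|x|))`. The three made-up anchors are a positive, a clear negative, and an "ignored" negative whose maximum IoU (0.7) exceeds the 0.5 threshold.

```python
import numpy as np

def sigmoid_ce_with_logits(labels, logits):
    # Stable sigmoid cross-entropy: max(x, 0) - x * z + log(1 + exp(-|x|))
    return np.maximum(logits, 0) - logits * labels + np.log1p(np.exp(-np.abs(logits)))

def conf_loss_np(respond_bbox, max_iou, conf_logit, iou_thresh=0.5):
    """Focal-weighted objectness loss per anchor, mirroring compute_loss."""
    pred_conf = 1.0 / (1.0 + np.exp(-conf_logit))
    # Background: no assigned object AND max IoU with every ground truth below the threshold
    respond_bgd = (1.0 - respond_bbox) * (max_iou < iou_thresh)
    conf_focal = (respond_bbox - pred_conf) ** 2
    ce = sigmoid_ce_with_logits(respond_bbox, conf_logit)
    return conf_focal * (respond_bbox * ce + respond_bgd * ce)

respond = np.array([1.0, 0.0, 0.0])  # positive, negative, ignored negative
max_iou = np.array([0.9, 0.1, 0.7])
logits = np.array([2.0, 2.0, 2.0])   # the model is confident on all three
loss = conf_loss_np(respond, max_iou, logits)
print(loss)
```

The ignored anchor contributes exactly 0, and the confident false positive dominates the loss.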

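Classification loss

For the classification loss we likewise consider only positive samples: a cross-entropy loss is computed for positive samples only.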
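The classification term uses an independent sigmoid cross-entropy per class (so classes are not mutually exclusive, unlike a softmax), masked by respond_bbox. In this NumPy sketch with 4 made-up classes and two anchors, only the anchor with an assigned object contributes to the loss.

```python
import numpy as np

def sigmoid_ce_with_logits(labels, logits):
    # Stable sigmoid cross-entropy: max(x, 0) - x * z + log(1 + exp(-|x|))
    return np.maximum(logits, 0) - logits * labels + np.log1p(np.exp(-np.abs(logits)))

label_prob = np.array([[0, 1, 0, 0],    # anchor 0: responsible for a class-1 object
                       [0, 0, 0, 0]],   # anchor 1: no object assigned
                      dtype=float)
respond_bbox = np.array([[1.0], [0.0]])  # shape (2, 1), broadcasts over classes
class_logits = np.array([[-1.0, 3.0, -2.0, 0.5],
                         [2.0, 2.0, 2.0, 2.0]])

prob_loss = respond_bbox * sigmoid_ce_with_logits(label_prob, class_logits)
print(prob_loss.sum(axis=1))  # per-anchor loss; anchor 1 contributes 0
```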

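Inputs:

- pred: the decoded model output, i.e. boxes in the original image;

- conv: the raw (undecoded) model output, i.e. boxes on the feature map;

- label: the label, of shape [batch_size, output_size, output_size, anchor_per_scale, 85 = (2 for position xy + 2 for size wh + 1 confidence + 80 classes)];

- bboxes: the ground-truth boxes of each image as (x, y, w, h), used to find each predicted box's maximum IoU;

- i: the index of the feature-map scale.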

```python
def compute_loss(pred, conv, label, bboxes, i=0):
    conv_shape = tf.shape(conv)
    batch_size = conv_shape[0]
    output_size = conv_shape[1]
    input_size = STRIDES[i] * output_size  # size of the original (input) image
    input_size = tf.cast(input_size, tf.float32)
    conv = tf.reshape(conv, (batch_size, output_size, output_size, 3, 5 + NUM_CLASS))

    # Raw (undecoded) confidence and class outputs of the model
    conv_raw_conf = conv[:, :, :, :, 4:5]
    conv_raw_prob = conv[:, :, :, :, 5:]

    pred_xywh = pred[:, :, :, :, 0:4]  # decoded predicted box position
    pred_conf = pred[:, :, :, :, 4:5]  # decoded predicted box confidence

    label_xywh = label[:, :, :, :, 0:4]    # ground-truth box position
    respond_bbox = label[:, :, :, :, 4:5]  # ground-truth confidence: 1 if an object is assigned, else 0
    label_prob = label[:, :, :, :, 5:]     # ground-truth classes

    # 1. Box regression loss
    # GIoU between the predicted and ground-truth boxes
    giou = tf.expand_dims(bbox_giou(pred_xywh, label_xywh), axis=-1)
    # bbox_loss_scale = 2 - w*h / input_size^2 gives small boxes a larger weight
    bbox_loss_scale = 2.0 - 1.0 * label_xywh[:, :, :, :, 2:3] * label_xywh[:, :, :, :, 3:4] / (input_size ** 2)
    giou_loss = respond_bbox * bbox_loss_scale * (1 - giou)

    # 2. Confidence loss
    # Find the background (negative) samples
    iou = bbox_iou(pred_xywh[:, :, :, :, np.newaxis, :], bboxes[:, np.newaxis, np.newaxis, np.newaxis, :, :])
    max_iou = tf.expand_dims(tf.reduce_max(iou, axis=-1), axis=-1)  # [batch_size, output_size, output_size, 3, 1]
    # respond_bgd has shape [batch_size, output_size, output_size, anchor_per_scale, 1];
    # it is 1 where no object is assigned and max_iou < IOU_LOSS_THRESH, otherwise 0
    respond_bgd = (1.0 - respond_bbox) * tf.cast(max_iou < IOU_LOSS_THRESH, tf.float32)

    conf_focal = tf.pow(respond_bbox - pred_conf, 2)

    conf_loss = conf_focal * (
        # positive-sample error
        respond_bbox * tf.nn.sigmoid_cross_entropy_with_logits(labels=respond_bbox, logits=conv_raw_conf)
        +
        # negative-sample error
        respond_bgd * tf.nn.sigmoid_cross_entropy_with_logits(labels=respond_bbox, logits=conv_raw_conf)
    )

    # 3. Classification loss
    prob_loss = respond_bbox * tf.nn.sigmoid_cross_entropy_with_logits(labels=label_prob, logits=conv_raw_prob)

    # Sum the losses over each image, then average over the batch
    giou_loss = tf.reduce_mean(tf.reduce_sum(giou_loss, axis=[1, 2, 3, 4]))
    conf_loss = tf.reduce_mean(tf.reduce_sum(conf_loss, axis=[1, 2, 3, 4]))
    prob_loss = tf.reduce_mean(tf.reduce_sum(prob_loss, axis=[1, 2, 3, 4]))

    return giou_loss, conf_loss, prob_loss
```
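The broadcasting in the confidence-loss step of `compute_loss` is its least obvious part. This NumPy sketch reproduces only the shapes; the cap of 150 ground-truth boxes per image is an assumption for illustration (the real value depends on how `bboxes` is built in the dataset code).

```python
import numpy as np

batch_size, output_size, max_bbox = 2, 13, 150  # max_bbox is an assumed per-image box cap
pred_xywh = np.zeros((batch_size, output_size, output_size, 3, 4))
bboxes = np.zeros((batch_size, max_bbox, 4))

# Same indexing as in compute_loss
a = pred_xywh[:, :, :, :, np.newaxis, :]                 # (2, 13, 13, 3, 1, 4)
b = bboxes[:, np.newaxis, np.newaxis, np.newaxis, :, :]  # (2, 1, 1, 1, 150, 4)

# bbox_iou reduces the last axis (x, y, w, h), so the IoU has the broadcast leading shape
iou_shape = np.broadcast_shapes(a.shape[:-1], b.shape[:-1])
# reduce_max over the ground-truth axis, then expand_dims(axis=-1)
max_iou_shape = iou_shape[:-1] + (1,)
print(a.shape, b.shape, iou_shape, max_iou_shape)
```

Each prediction is compared with every ground-truth box of its own image, and the maximum over that axis gives one value per anchor.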

Complete code

```python
import numpy as np
import tensorflow as tf

import core.utils as utils
import core.common as common
import core.backbone as backbone
from core.config import cfg

NUM_CLASS = len(utils.read_class_names(cfg.YOLO.CLASSES))
ANCHORS = utils.get_anchors(cfg.YOLO.ANCHORS)
STRIDES = np.array(cfg.YOLO.STRIDES)
IOU_LOSS_THRESH = cfg.YOLO.IOU_LOSS_THRESH
```

```python
def YOLOv3(input_layer):
    route_1, route_2, conv = backbone.darknet53(input_layer)

    conv = common.convolutional(conv, (1, 1, 1024, 512))
    conv = common.convolutional(conv, (3, 3, 512, 1024))
    conv = common.convolutional(conv, (1, 1, 1024, 512))
    conv = common.convolutional(conv, (3, 3, 512, 1024))
    conv = common.convolutional(conv, (1, 1, 1024, 512))

    conv_lobj_branch = common.convolutional(conv, (3, 3, 512, 1024))
    conv_lbbox = common.convolutional(conv_lobj_branch, (1, 1, 1024, 3*(NUM_CLASS + 5)), activate=False, bn=False)

    conv = common.convolutional(conv, (1, 1, 512, 256))
    conv = common.upsample(conv)

    conv = tf.concat([conv, route_2], axis=-1)

    conv = common.convolutional(conv, (1, 1, 768, 256))
    conv = common.convolutional(conv, (3, 3, 256, 512))
    conv = common.convolutional(conv, (1, 1, 512, 256))
    conv = common.convolutional(conv, (3, 3, 256, 512))
    conv = common.convolutional(conv, (1, 1, 512, 256))

    conv_mobj_branch = common.convolutional(conv, (3, 3, 256, 512))
    conv_mbbox = common.convolutional(conv_mobj_branch, (1, 1, 512, 3*(NUM_CLASS + 5)), activate=False, bn=False)

    conv = common.convolutional(conv, (1, 1, 256, 128))
    conv = common.upsample(conv)

    conv = tf.concat([conv, route_1], axis=-1)

    conv = common.convolutional(conv, (1, 1, 384, 128))
    conv = common.convolutional(conv, (3, 3, 128, 256))
    conv = common.convolutional(conv, (1, 1, 256, 128))
    conv = common.convolutional(conv, (3, 3, 128, 256))
    conv = common.convolutional(conv, (1, 1, 256, 128))

    conv_sobj_branch = common.convolutional(conv, (3, 3, 128, 256))
    conv_sbbox = common.convolutional(conv_sobj_branch, (1, 1, 256, 3*(NUM_CLASS + 5)), activate=False, bn=False)

    return [conv_sbbox, conv_mbbox, conv_lbbox]
```

```python
def decode(conv_output, i=0):
    """
    return tensor of shape [batch_size, output_size, output_size, anchor_per_scale, 5 + num_classes]
    contains (x, y, w, h, score, probability)
    """
    conv_shape = tf.shape(conv_output)
    batch_size = conv_shape[0]
    output_size = conv_shape[1]

    conv_output = tf.reshape(conv_output, (batch_size, output_size, output_size, 3, 5 + NUM_CLASS))

    conv_raw_dxdy = conv_output[:, :, :, :, 0:2]
    conv_raw_dwdh = conv_output[:, :, :, :, 2:4]
    conv_raw_conf = conv_output[:, :, :, :, 4:5]
    conv_raw_prob = conv_output[:, :, :, :, 5:]

    y = tf.tile(tf.range(output_size, dtype=tf.int32)[:, tf.newaxis], [1, output_size])
    x = tf.tile(tf.range(output_size, dtype=tf.int32)[tf.newaxis, :], [output_size, 1])

    xy_grid = tf.concat([x[:, :, tf.newaxis], y[:, :, tf.newaxis]], axis=-1)
    xy_grid = tf.tile(xy_grid[tf.newaxis, :, :, tf.newaxis, :], [batch_size, 1, 1, 3, 1])
    xy_grid = tf.cast(xy_grid, tf.float32)

    pred_xy = (tf.sigmoid(conv_raw_dxdy) + xy_grid) * STRIDES[i]
    pred_wh = (tf.exp(conv_raw_dwdh) * ANCHORS[i]) * STRIDES[i]
    pred_xywh = tf.concat([pred_xy, pred_wh], axis=-1)

    pred_conf = tf.sigmoid(conv_raw_conf)
    pred_prob = tf.sigmoid(conv_raw_prob)

    return tf.concat([pred_xywh, pred_conf, pred_prob], axis=-1)
```

```python
def bbox_iou(boxes1, boxes2):
    boxes1_area = boxes1[..., 2] * boxes1[..., 3]
    boxes2_area = boxes2[..., 2] * boxes2[..., 3]

    boxes1 = tf.concat([boxes1[..., :2] - boxes1[..., 2:] * 0.5,
                        boxes1[..., :2] + boxes1[..., 2:] * 0.5], axis=-1)
    boxes2 = tf.concat([boxes2[..., :2] - boxes2[..., 2:] * 0.5,
                        boxes2[..., :2] + boxes2[..., 2:] * 0.5], axis=-1)

    left_up = tf.maximum(boxes1[..., :2], boxes2[..., :2])
    right_down = tf.minimum(boxes1[..., 2:], boxes2[..., 2:])

    inter_section = tf.maximum(right_down - left_up, 0.0)
    inter_area = inter_section[..., 0] * inter_section[..., 1]
    union_area = boxes1_area + boxes2_area - inter_area

    return 1.0 * inter_area / union_area
```

```python
def bbox_giou(boxes1, boxes2):
    boxes1 = tf.concat([boxes1[..., :2] - boxes1[..., 2:] * 0.5,
                        boxes1[..., :2] + boxes1[..., 2:] * 0.5], axis=-1)
    boxes2 = tf.concat([boxes2[..., :2] - boxes2[..., 2:] * 0.5,
                        boxes2[..., :2] + boxes2[..., 2:] * 0.5], axis=-1)

    boxes1 = tf.concat([tf.minimum(boxes1[..., :2], boxes1[..., 2:]),
                        tf.maximum(boxes1[..., :2], boxes1[..., 2:])], axis=-1)
    boxes2 = tf.concat([tf.minimum(boxes2[..., :2], boxes2[..., 2:]),
                        tf.maximum(boxes2[..., :2], boxes2[..., 2:])], axis=-1)

    boxes1_area = (boxes1[..., 2] - boxes1[..., 0]) * (boxes1[..., 3] - boxes1[..., 1])
    boxes2_area = (boxes2[..., 2] - boxes2[..., 0]) * (boxes2[..., 3] - boxes2[..., 1])

    left_up = tf.maximum(boxes1[..., :2], boxes2[..., :2])
    right_down = tf.minimum(boxes1[..., 2:], boxes2[..., 2:])

    inter_section = tf.maximum(right_down - left_up, 0.0)
    inter_area = inter_section[..., 0] * inter_section[..., 1]
    union_area = boxes1_area + boxes2_area - inter_area
    iou = inter_area / union_area

    enclose_left_up = tf.minimum(boxes1[..., :2], boxes2[..., :2])
    enclose_right_down = tf.maximum(boxes1[..., 2:], boxes2[..., 2:])
    enclose = tf.maximum(enclose_right_down - enclose_left_up, 0.0)
    enclose_area = enclose[..., 0] * enclose[..., 1]

    giou = iou - 1.0 * (enclose_area - union_area) / enclose_area

    return giou
```

```python
def compute_loss(pred, conv, label, bboxes, i=0):
    conv_shape = tf.shape(conv)
    batch_size = conv_shape[0]
    output_size = conv_shape[1]
    input_size = STRIDES[i] * output_size
    conv = tf.reshape(conv, (batch_size, output_size, output_size, 3, 5 + NUM_CLASS))

    conv_raw_conf = conv[:, :, :, :, 4:5]
    conv_raw_prob = conv[:, :, :, :, 5:]

    pred_xywh = pred[:, :, :, :, 0:4]
    pred_conf = pred[:, :, :, :, 4:5]

    label_xywh = label[:, :, :, :, 0:4]
    respond_bbox = label[:, :, :, :, 4:5]
    label_prob = label[:, :, :, :, 5:]

    giou = tf.expand_dims(bbox_giou(pred_xywh, label_xywh), axis=-1)
    input_size = tf.cast(input_size, tf.float32)

    bbox_loss_scale = 2.0 - 1.0 * label_xywh[:, :, :, :, 2:3] * label_xywh[:, :, :, :, 3:4] / (input_size ** 2)
    giou_loss = respond_bbox * bbox_loss_scale * (1 - giou)

    iou = bbox_iou(pred_xywh[:, :, :, :, np.newaxis, :], bboxes[:, np.newaxis, np.newaxis, np.newaxis, :, :])
    max_iou = tf.expand_dims(tf.reduce_max(iou, axis=-1), axis=-1)

    respond_bgd = (1.0 - respond_bbox) * tf.cast(max_iou < IOU_LOSS_THRESH, tf.float32)

    conf_focal = tf.pow(respond_bbox - pred_conf, 2)

    conf_loss = conf_focal * (
        respond_bbox * tf.nn.sigmoid_cross_entropy_with_logits(labels=respond_bbox, logits=conv_raw_conf)
        +
        respond_bgd * tf.nn.sigmoid_cross_entropy_with_logits(labels=respond_bbox, logits=conv_raw_conf)
    )

    prob_loss = respond_bbox * tf.nn.sigmoid_cross_entropy_with_logits(labels=label_prob, logits=conv_raw_prob)

    giou_loss = tf.reduce_mean(tf.reduce_sum(giou_loss, axis=[1, 2, 3, 4]))
    conf_loss = tf.reduce_mean(tf.reduce_sum(conf_loss, axis=[1, 2, 3, 4]))
    prob_loss = tf.reduce_mean(tf.reduce_sum(prob_loss, axis=[1, 2, 3, 4]))

    return giou_loss, conf_loss, prob_loss
```