
Summary: the project code in this article runs in Eclipse on Windows.

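Note: the project needs to reference a jar shipped with Flume. The jar from Flume 1.6.0 (the latest release at the time of writing) needs a JDK 1.7 environment. Under JDK 1.8 it fails with ClassNotFoundException: org.apache.flume.clients.log4jappender.Log4jAppender.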

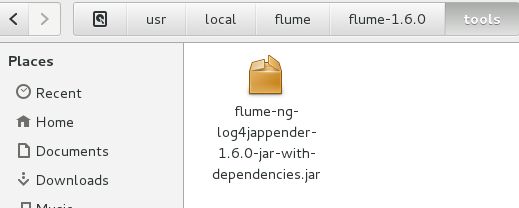

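1. Add the jar flume-ng-log4jappender-1.6.0-jar-with-dependencies.jar, found in the tools folder under the Flume installation directory, to the project. (This jar bundles all of its dependencies. Some examples say you can reference flume-ng-core-1.6.0.jar and similar jars instead. That fails at runtime with many "class not found" errors and forces you to add a long list of other jars, which is inconvenient.)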

As shown in the figure:

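Aside: as a beginner, I went through a lot of material before getting this to work. Package names differ between versions, and many guides just say "add the jar" without giving its path, or give a path that doesn't match what you actually have. Really annoying.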
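If the appender still fails with the ClassNotFoundException mentioned in the note at the top, a quick diagnostic is to ask the JVM directly whether it can load the appender class and which Java version it is running. The class below is a minimal sketch. AppenderClasspathCheck is a hypothetical helper, not part of Flume. It uses only the JDK and should be run with the same JDK and classpath as the web project.

```java
public class AppenderClasspathCheck {

    // Fully qualified name of the appender referenced in log4j.properties
    static final String APPENDER_CLASS =
            "org.apache.flume.clients.log4jappender.Log4jAppender";

    /** Returns true if the class can be found (without initializing it). */
    public static boolean isOnClasspath(String className) {
        try {
            Class.forName(className, false, AppenderClasspathCheck.class.getClassLoader());
            return true;
        } catch (ClassNotFoundException e) {
            // The jar is not on the classpath at all
            return false;
        } catch (LinkageError e) {
            // The class was found but could not be linked, e.g. a missing
            // dependency (NoClassDefFoundError) or an incompatible JDK
            return false;
        }
    }

    public static void main(String[] args) {
        System.out.println("java.version = " + System.getProperty("java.version"));
        System.out.println(APPENDER_CLASS + " loadable: " + isOnClasspath(APPENDER_CLASS));
    }
}
```

If this prints false while the jar is in the project's build path, the jar is probably not being deployed with the web application (for example, not copied into WEB-INF/lib).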

2. Configure log4j. The configuration file is as follows:

```properties
log4j.rootLogger=INFO,console,file,flume

log4j.appender.console=org.apache.log4j.ConsoleAppender
log4j.appender.console.layout=org.apache.log4j.PatternLayout
log4j.appender.console.layout.ConversionPattern=%-5p %d [<%t>%F,%L] - %m%n

log4j.appender.file=org.apache.log4j.DailyRollingFileAppender
log4j.appender.file.layout=org.apache.log4j.PatternLayout
log4j.appender.file.datePattern=yyyy-MM-dd-HH'.log'
log4j.appender.file.append=true
log4j.appender.file.layout.ConversionPattern=%-5p %d [%F,%L] - %m%n
log4j.appender.file.File=D:/logs/logs.log

log4j.appender.flume=org.apache.flume.clients.log4jappender.Log4jAppender
# Use the IP configured in the Flume agent config file (a1.sources.r1.bind in step 6)
log4j.appender.flume.Hostname=192.xxx.x.xxx
log4j.appender.flume.Port=44444
log4j.appender.flume.UnsafeMode=true
```

3. Write a test project to test Flume:

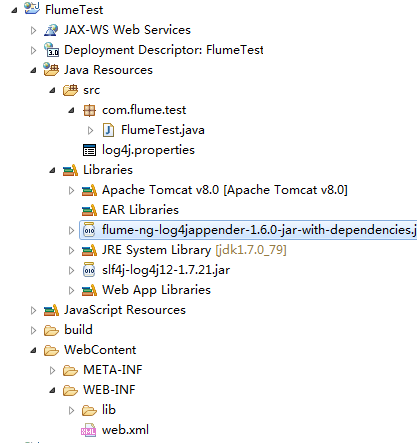

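1) Create a new Dynamic Web Project.

2) Add flume-ng-log4jappender-1.6.0-jar-with-dependencies.jar and slf4j-log4j12-1.7.21.jar to the project.

3) Configure log4j.properties as described in step 2.

4) Write a listener class that implements ServletContextListener: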

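```java
package com.flume.test;

import javax.servlet.ServletContextEvent;
import javax.servlet.ServletContextListener;

import org.slf4j.Logger;
import org.slf4j.LoggerFactory;

public class FlumeTest implements ServletContextListener {

    private static final Logger logger = LoggerFactory.getLogger(FlumeTest.class);

    // Logging runs on a background thread so that contextInitialized() returns
    // and the container can finish deploying the web app.
    private Thread worker;

    @Override
    public void contextInitialized(ServletContextEvent sce) {
        worker = new Thread(new Runnable() {
            @Override
            public void run() {
                while (!Thread.currentThread().isInterrupted()) {
                    // Log the current system timestamp every two seconds
                    logger.info(String.valueOf(System.currentTimeMillis()));
                    try {
                        Thread.sleep(2000);
                    } catch (InterruptedException e) {
                        // Restore the interrupt flag so the loop exits
                        Thread.currentThread().interrupt();
                    }
                }
            }
        }, "flume-test-logger");
        worker.setDaemon(true);
        worker.start();
    }

    @Override
    public void contextDestroyed(ServletContextEvent sce) {
        // Stop the logging thread when the web app is shut down
        if (worker != null) {
            worker.interrupt();
        }
    }
}
```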

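5) Configure web.xml:

```xml
<?xml version="1.0" encoding="UTF-8"?>
<!-- The web-app header depends on the Servlet version chosen when the project was created; 3.0 is assumed here -->
<web-app xmlns="http://java.sun.com/xml/ns/javaee"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://java.sun.com/xml/ns/javaee http://java.sun.com/xml/ns/javaee/web-app_3_0.xsd"
         version="3.0">
  <display-name>FlumeTest</display-name>
  <welcome-file-list>
    <welcome-file>index.html</welcome-file>
    <welcome-file>index.htm</welcome-file>
    <welcome-file>index.jsp</welcome-file>
  </welcome-file-list>
  <listener>
    <listener-class>com.flume.test.FlumeTest</listener-class>
  </listener>
</web-app>
```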

6) Configure the Flume agent that writes to HDFS:

```properties
# example.conf: A single-node Flume configuration

# Name the components on this agent
a1.sources = r1
a1.sinks = k1
a1.channels = c1

# Describe/configure the source
a1.sources.r1.type = avro
a1.sources.r1.bind = 192.xxx.x.xxx
# Set the IP above to match your own environment
a1.sources.r1.port = 44444

# Describe the sink
a1.sinks.k1.type = hdfs
a1.sinks.k1.hdfs.path=hdfs://localhost:9000/user/hadoop/flume
# Change hdfs://localhost:9000/user/hadoop/flume to match your own HDFS setup
a1.sinks.k1.hdfs.fileType=DataStream
a1.sinks.k1.hdfs.writeFormat=Text
a1.sinks.k1.hdfs.rollInterval=0
a1.sinks.k1.hdfs.rollSize=10240
a1.sinks.k1.hdfs.rollCount=0
a1.sinks.k1.hdfs.idleTimeout=60

# Use a channel which buffers events in memory
a1.channels.c1.type = memory
a1.channels.c1.capacity = 1000
a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel
a1.sources.r1.channels = c1
a1.sinks.k1.channel = c1
```

Now start HDFS, the Flume agent, and the web project, and Flume will write the log events to HDFS.
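With rollInterval=0 and rollCount=0, the HDFS sink never starts a new file based on time or event count. A new file is started only once about rollSize (10240 bytes) has been written, or when a file has received no events for idleTimeout (60) seconds. The sketch below gives a rough estimate of how often that happens with the test listener. It makes two assumptions about Flume 1.6.0 internals. First, each event body is just the 13-digit millisecond timestamp the listener logs, since no layout is configured for the flume appender. Second, the sink compares rollSize against the accumulated event body bytes. HdfsRollEstimate is a hypothetical helper, not a Flume API.

```java
public class HdfsRollEstimate {

    /**
     * Events needed before the accumulated body bytes reach rollSize
     * (ceiling division). Assumes every event body has the same size.
     */
    public static long eventsUntilRoll(long rollSizeBytes, long bytesPerEvent) {
        if (rollSizeBytes <= 0 || bytesPerEvent <= 0) {
            throw new IllegalArgumentException("rollSize and event size must be positive");
        }
        return (rollSizeBytes + bytesPerEvent - 1) / bytesPerEvent;
    }

    /**
     * Seconds from the first event (t = 0) to the event that reaches rollSize,
     * with one event every intervalSeconds.
     */
    public static long secondsUntilRoll(long rollSizeBytes, long bytesPerEvent, long intervalSeconds) {
        return (eventsUntilRoll(rollSizeBytes, bytesPerEvent) - 1) * intervalSeconds;
    }

    public static void main(String[] args) {
        long rollSize = 10240; // a1.sinks.k1.hdfs.rollSize
        long bodyBytes = 13;   // assumption: body is a 13-digit millisecond timestamp
        long interval = 2;     // the listener logs every 2 seconds
        System.out.println("events per file ~ " + eventsUntilRoll(rollSize, bodyBytes));
        System.out.println("minutes per file ~ " + secondsUntilRoll(rollSize, bodyBytes, interval) / 60.0);
    }
}
```

Under these assumptions, each file holds 788 events, which works out to roughly 26 minutes of logging per file. Because the listener logs every 2 seconds, idleTimeout only closes the current file once logging stops, for example after the web project is shut down.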