Ceph Distributed Storage System: Performance Testing and Tuning

Test environment

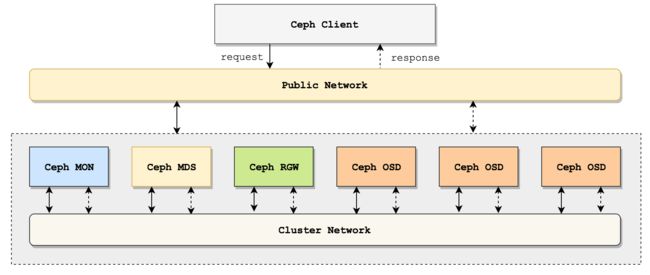

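Deployment: the whole Ceph cluster runs on four ECS instances in the same VPC, laid out as shown in the figure above.

The Ceph test environment is as follows:

- Ceph 10.2.10 is installed on CentOS 7.4; the operating system is a fresh install with no tuning applied.

- Each OSD storage server has 4 cores and 8 GB of RAM, with one 300 GB high-efficiency cloud disk (not an SSD) attached; the operating system and the OSD data share that single disk.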

[root@node1 ~]# ceph osd tree

ID WEIGHT TYPE NAME UP/DOWN REWEIGHT PRIMARY-AFFINITY

-6 0 rack test-bucket

-5 0 rack demo

-1 0.86458 root default

-2 0.28819 host node2

0 0.28819 osd.0 up 1.00000 1.00000

-3 0.28819 host node3

1 0.28819 osd.1 up 1.00000 1.00000

-4 0.28819 host node4

2 0.28819 osd.2 up 1.00000 1.00000

- The tests use a pool named test, with 64 PGs and three replicas of the data:

[root@node1 ~]# ceph osd pool create test 64 64

pool 'test' created

[root@node1 ~]# ceph osd pool get test size

size: 3

[root@node1 ~]# ceph osd pool get test pg_num

pg_num: 64

- The Ceph OSDs use the xfs file system (btrfs reportedly doubles read/write performance, but it is not recommended for production use);

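- The Ceph block size is left at the installation default of 64K;

- The benchmark client runs on node1 and reads and writes the Ceph storage system from the same subnet within the same VPC.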
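As a rough sanity check on the pool settings listed above, the sketch below computes how many PG replicas each OSD ends up holding, and what a commonly cited rule of thumb (about 100 PGs per OSD, rounded up to a power of two) would suggest. The function names and the target of 100 are assumptions made for illustration; they are not taken from this test setup.

```python
def pg_replicas_per_osd(pg_num: int, size: int, num_osds: int) -> float:
    """Average number of PG replicas each OSD holds for one pool."""
    return pg_num * size / num_osds


def suggested_pg_num(num_osds: int, size: int, target_per_osd: int = 100) -> int:
    """Rule-of-thumb pg_num: (OSDs * target) / replicas, rounded up to a power of two.

    target_per_osd=100 is an assumed guideline value, not something measured here.
    """
    raw = num_osds * target_per_osd / size
    power = 1
    while power < raw:
        power *= 2
    return power


if __name__ == "__main__":
    # Values from the environment above: 3 OSDs, pool 'test' with 64 PGs, 3 replicas.
    print("PG replicas per OSD:", pg_replicas_per_osd(pg_num=64, size=3, num_osds=3))
    print("Rule-of-thumb pg_num:", suggested_pg_num(num_osds=3, size=3))
```

With the values used here each OSD carries 64 PG replicas, while the rule of thumb would point to a pg_num of 128; this may be worth keeping in mind when reading the results.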

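In this test the client generating the traffic sits inside the Ceph cluster, so network latency is low; a real production environment also has to account for network bottlenecks. The production network access diagram is as follows: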

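Disk performance tests

Disk write throughput

The disks are tested with a standard dd write test. Use the following command to write a file, and remember to add the oflag=direct option so that the write bypasses the page cache.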

node1:

[root@node1 ~]# dd if=/dev/zero of=here bs=1G count=1 oflag=direct

1+0 records in

1+0 records out

1073741824 bytes (1.1 GB) copied, 15.466 s, 69.4 MB/s

node2:

[root@node2 ~]# dd if=/dev/zero of=here bs=1G count=1 oflag=direct

1+0 records in

1+0 records out

1073741824 bytes (1.1 GB) copied, 13.6518 s, 78.7 MB/s

node3:

[root@node3 ~]# dd if=/dev/zero of=here bs=1G count=1 oflag=direct

1+0 records in

1+0 records out

1073741824 bytes (1.1 GB) copied, 13.6466 s, 78.7 MB/s

node4:

[root@node4 ~]# dd if=/dev/zero of=here bs=1G count=1 oflag=direct

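1+0 records in

1+0 records out

1073741824 bytes (1.1 GB) copied, 13.6585 s, 78.6 MB/s

As the results show, disk write throughput is about 78 MB/s on every node except node1. No OSD is deployed on node1, so its result is not used when judging Ceph read/write performance.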
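The throughput figures can be reproduced from the byte counts and elapsed times that dd reports. The following is a small sketch of that arithmetic; the dictionary and function names are made up for this example, and the numbers are copied from the transcripts above.

```python
# (bytes written, elapsed seconds) as reported by dd on each node.
DD_RUNS = {
    "node1": (1073741824, 15.466),
    "node2": (1073741824, 13.6518),
    "node3": (1073741824, 13.6466),
    "node4": (1073741824, 13.6585),
}


def throughput_mb_s(num_bytes: int, seconds: float) -> float:
    """Throughput in decimal megabytes per second (1 MB = 10**6 bytes)."""
    return num_bytes / seconds / 1e6


def mean_throughput(runs: dict, exclude: tuple = ()) -> float:
    """Mean throughput over all nodes that are not listed in `exclude`."""
    rates = [throughput_mb_s(b, s) for node, (b, s) in runs.items()
             if node not in exclude]
    return sum(rates) / len(rates)


if __name__ == "__main__":
    for node, (num_bytes, seconds) in DD_RUNS.items():
        print(f"{node}: {throughput_mb_s(num_bytes, seconds):.1f} MB/s")
    # node1 hosts no OSD, so it is left out of the average.
    osd_mean = mean_throughput(DD_RUNS, exclude=("node1",))
    print(f"OSD nodes mean: {osd_mean:.1f} MB/s")
```

Excluding node1 from the mean mirrors the reasoning in the text: only nodes that actually host an OSD contribute to Ceph's disk throughput.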

Disk write latency

Use dd to write 512 bytes at a time, 10,000 times in a row.

node1:

[root@node1 test]# dd if=/dev/zero of=512 bs=512 count=10000 oflag=direct

10000+0 records in

10000+0 records out

5120000 bytes (5.1 MB) copied, 6.06715 s, 844 kB/s

node2:

[root@node2 test]# dd if=/dev/zero of=512 bs=512 count=10000 oflag=direct

10000+0 records in

10000+0 records out

5120000 bytes (5.1 MB) copied, 4.12061 s, 1.2 MB/s

node3:

[root@node3 test]# dd if=/dev/zero of=512 bs=512 count=10000 oflag=direct

10000+0 records in

10000+0 records out

5120000 bytes (5.1 MB) copied, 3.88562 s, 1.3 MB/s

node4:

[root@node4 test]# dd if=/dev/zero of=512 bs=512 count=10000 oflag=direct

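10000+0 records in

10000+0 records out

5120000 bytes (5.1 MB) copied, 3.60598 s, 1.4 MB/s

On the three OSD nodes (node2 to node4) the 10,000 writes take about 4 seconds on average, at an average rate of about 1.3 MB/s.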
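Since each run issues 10,000 synchronous 512-byte writes, dividing the elapsed time by the write count gives an approximate per-write latency. The sketch below does that division; the names are illustrative and the timings are copied from the dd output above.

```python
# Elapsed seconds for 10,000 direct 512-byte writes, per node.
DD_SMALL_WRITES = {
    "node1": 6.06715,
    "node2": 4.12061,
    "node3": 3.88562,
    "node4": 3.60598,
}
WRITE_COUNT = 10000


def per_write_latency_ms(elapsed_s: float, count: int = WRITE_COUNT) -> float:
    """Average latency of a single write in milliseconds."""
    return elapsed_s / count * 1000.0


def mean_elapsed(runs: dict, exclude: tuple = ()) -> float:
    """Mean elapsed time in seconds over the nodes not listed in `exclude`."""
    values = [s for node, s in runs.items() if node not in exclude]
    return sum(values) / len(values)


if __name__ == "__main__":
    for node, elapsed in DD_SMALL_WRITES.items():
        print(f"{node}: {per_write_latency_ms(elapsed):.3f} ms per write")
    osd_elapsed = mean_elapsed(DD_SMALL_WRITES, exclude=("node1",))
    print(f"OSD nodes: {osd_elapsed:.2f} s total, "
          f"{per_write_latency_ms(osd_elapsed):.3f} ms per write")
```

On these numbers a single 512-byte direct write on an OSD node costs a little under 0.4 ms.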

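Cluster network I/O tests

Because clients reach the OSDs through rgw (the file storage service excepted), these tests focus on network I/O performance from the rgw node to each OSD node.

rgw to osd.0

On the osd.0 node, use nc to listen for network I/O on port 17480: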

[root@node2 ~]# nc -v -l -n 17480 > /dev/null

Ncat: Version 6.40 ( http://nmap.org/ncat )

Ncat: Listening on :::17480

Ncat: Listening on 0.0.0.0:17480

Ncat: Connection from 192.168.0.97.

Ncat: Connection from 192.168.0.97:33644.

On the rgw node, start the network I/O:

[root@node2 ~]# time dd if=/dev/zero | nc -v -n 192.168.0.97 17480

Ncat: Version 6.40 ( http://nmap.org/ncat )

Ncat: Connected to 192.168.0.97:17480.

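^C121182456+0 records in

121182455+0 records out

62045416960 bytes (62 GB) copied, 413.154 s, 150 MB/s

real 6m53.156s

user 5m54.626s

sys 7m51.485s

Total network I/O was 62 GB in 413.154 seconds, an average of 150 MB/s. Note that in this transcript the sender and the listener share the address 192.168.0.97 (node2), so this run does not cross hosts.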

rgw to osd.1

On the osd.1 node, use nc to listen for network I/O on port 17480:

[root@node3 ~]# nc -v -l -n 17480 > /dev/null

Ncat: Version 6.40 ( http://nmap.org/ncat )

Ncat: Listening on :::17480

Ncat: Listening on 0.0.0.0:17480

Ncat: Connection from 192.168.0.97.

Ncat: Connection from 192.168.0.97:35418.

On the rgw node, start the network I/O:

[root@node2 ~]# time dd if=/dev/zero | nc -v -n 192.168.0.98 17480

Ncat: Version 6.40 ( http://nmap.org/ncat )

Ncat: Connected to 192.168.0.98:17480.

^C30140790+0 records in

30140789+0 records out

15432083968 bytes (15 GB) copied, 111.024 s, 139 MB/s

real 1m51.026s

user 1m21.996s

sys 2m20.039s

Total network I/O was 15 GB in 111.024 seconds, an average of 139 MB/s.

rgw to osd.2

On the osd.2 node, use nc to listen for network I/O on port 17480:

[root@node4 ~]# nc -v -l -n 17480 > /dev/null

Ncat: Version 6.40 ( http://nmap.org/ncat )

Ncat: Listening on :::17480

Ncat: Listening on 0.0.0.0:17480

Ncat: Connection from 192.168.0.97.

Ncat: Connection from 192.168.0.97:39156.

On the rgw node, start the network I/O:

[root@node2 ~]# time dd if=/dev/zero | nc -v -n 192.168.0.99 17480

Ncat: Version 6.40 ( http://nmap.org/ncat )

Ncat: Connected to 192.168.0.99:17480.

^C34434250+0 records in

34434249+0 records out

17630335488 bytes (18 GB) copied, 112.903 s, 156 MB/s

real 1m52.906s

user 1m23.308s

sys 2m22.487s

Total network I/O was 18 GB in 112.903 seconds, an average of 156 MB/s.

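Summary: network I/O between nodes in the cluster averages about 150 MB/s (roughly 1.2 Gbit/s). This is in line with expectations, since the cluster uses gigabit NICs.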
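To compare the measured rates with a nominal link speed, it helps to convert MB/s into Gbit/s. The sketch below does this for the three dd results, assuming decimal units (1 MB = 10^6 bytes, 1 Gbit = 10^9 bits); the variable and function names are chosen only for this example.

```python
# Average rates reported by dd for each rgw -> OSD run, in MB/s.
NETWORK_RATES_MB_S = {"osd.0": 150, "osd.1": 139, "osd.2": 156}


def mb_s_to_gbit_s(mb_per_s: float) -> float:
    """Convert decimal megabytes per second to gigabits per second."""
    return mb_per_s * 8 / 1000


def mean_rate(rates: dict) -> float:
    """Arithmetic mean of the per-target rates."""
    return sum(rates.values()) / len(rates)


if __name__ == "__main__":
    for target, rate in NETWORK_RATES_MB_S.items():
        print(f"{target}: {rate} MB/s = {mb_s_to_gbit_s(rate):.2f} Gbit/s")
    avg = mean_rate(NETWORK_RATES_MB_S)
    print(f"mean: {avg:.1f} MB/s = {mb_s_to_gbit_s(avg):.2f} Gbit/s")
```

The mean works out to a little under 150 MB/s, which is slightly above a nominal 1 Gbit/s (125 MB/s) line rate.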

RADOS cluster performance tests

Preparation

- Check how the OSDs are distributed in the Ceph cluster:

[root@node1 ~]# ceph osd tree

ID WEIGHT TYPE NAME UP/DOWN REWEIGHT PRIMARY-AFFINITY

-6 0 rack test-bucket

-5 0 rack demo

-1 0.86458 root default

-2 0.28819 host node2

0 0.28819 osd.0 up 1.00000 1.00000

-3 0.28819 host node3

1 0.28819 osd.1 up 1.00000 1.00000

-4 0.28819 host node4

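2 0.28819 osd.2 up 1.00000 1.00000

The cluster has three OSD nodes deployed, and all three are up (working normally).

- Create a test pool for the RADOS cluster performance tests, with 64 PGs and three replicas of the data: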

[root@node1 ~]# ceph osd pool create test 64 64

pool 'test' created

[root@node1 ~]# ceph osd pool get test size

size: 3

[root@node1 ~]# ceph osd pool get test pg_num

pg_num: 64

- Check the default configuration of the test pool:

[root@node1 test]# ceph osd dump | grep test

pool 12 'test' replicated size 3 min_size 2 crush_ruleset 0 object_hash rjenkins pg_num 64 pgp_num 64 last_change 37 flags hashpspool stripe_width 0

- Check the resource usage of the test pool:

[root@node1 test]# rados -p test df

pool name KB objects clones degraded unfound rd rd KB wr wr KB

test 0 0 0 0 0 0 0 0 0

total used 27044652 192

total avail 854232624

total space 928512000

Write performance test

- Test write performance:

[root@node1 ~]# rados bench -p test 60 write --no-cleanup

Maintaining 16 concurrent writes of 4194304 bytes to objects of size 4194304 for up to 60 seconds or 0 objects

Object prefix: benchmark_data_node1_26604

sec Cur ops started finished avg MB/s cur MB/s last lat(s) avg lat(s)

0 0 0 0 0 0 - 0

1 16 31 15 59.9966 60 0.953952 0.614647

2 16 38 22 43.9954 28 1.38736 0.781039

3 16 46 30 39.9958 32 1.87801 1.06765

4 16 61 45 44.9953 60 1.19344 1.23191

5 16 76 60 47.9949 60 0.993045 1.17022

6 16 91 75 49.9946 60 1.00303 1.1498

7 16 106 90 51.4231 60 0.999574 1.13609

8 16 119 103 51.4945 52 1.00504 1.12779

9 16 122 106 47.106 12 1.20668 1.13173

10 16 122 106 42.3954 0 - 1.13173

11 16 125 109 39.632 6 2.8996 1.18213

12 16 137 121 40.3289 48 3.90723 1.45272

13 16 151 135 41.5339 56 1.10043 1.47333

14 16 169 153 43.7096 72 0.927572 1.4129

15 16 181 165 43.9952 48 1.02879 1.38739

16 16 196 180 44.9951 60 1.08398 1.36665

17 16 209 193 45.4068 52 1.117 1.34742

18 16 212 196 43.5508 12 1.30703 1.3468

19 16 215 199 41.8902 12 2.79917 1.36874

2018-03-20 17:06:48.745397 min lat: 0.229762 max lat: 4.09713 avg lat: 1.40039

sec Cur ops started finished avg MB/s cur MB/s last lat(s) avg lat(s)

20 16 218 202 40.3956 12 3.49784 1.40039

21 16 225 209 39.8051 28 4.18987 1.48851

22 16 241 225 40.9046 64 1.00629 1.53148

23 16 256 240 41.7345 60 1.18098 1.49869

24 16 271 255 42.4953 60 1.0017 1.47319

25 16 286 270 43.1952 60 1.00118 1.45067

26 16 299 283 43.5337 52 1.19813 1.43348

27 16 302 286 42.3657 12 1.30607 1.43215

28 16 302 286 40.8527 0 - 1.43215

29 16 305 289 39.8577 6 3.00461 1.44847

30 16 316 300 39.9956 44 3.73721 1.54023

31 16 331 315 40.6407 60 0.97103 1.54526

32 16 346 330 41.2455 60 0.999926 1.5214

33 16 361 345 41.8136 60 1.00411 1.50169

34 16 376 360 42.3483 60 1.00089 1.48355

35 16 386 370 42.2811 40 1.20272 1.4727

36 16 389 373 41.4399 12 1.50616 1.47296

37 16 392 376 40.6442 12 3.1067 1.486

38 16 395 379 39.8903 12 3.90852 1.50518

39 16 402 386 39.5854 28 4.12175 1.551

2018-03-20 17:07:08.747628 min lat: 0.229762 max lat: 4.29984 avg lat: 1.56868

sec Cur ops started finished avg MB/s cur MB/s last lat(s) avg lat(s)

40 16 418 402 40.1956 64 1.07659 1.56868

41 16 433 417 40.6784 60 0.999955 1.54939

42 16 448 432 41.1383 60 1.17664 1.53256

43 16 463 447 41.5768 60 1.00297 1.51695

44 16 478 462 41.9953 60 1.00466 1.50234

45 16 479 463 41.151 4 1.19512 1.50168

46 16 482 466 40.5172 12 2.6118 1.50882

47 16 485 469 39.9105 12 3.3123 1.52034

48 16 493 477 39.7456 32 4.00971 1.55901

49 16 508 492 40.1588 60 1.01054 1.57611

50 16 523 507 40.5555 60 0.996004 1.55869

51 16 538 522 40.9366 60 0.997722 1.54464

52 16 553 537 41.3031 60 1.19815 1.53113

53 16 568 552 41.6557 60 1.21298 1.51864

54 16 572 556 41.1806 16 1.49932 1.51797

55 16 572 556 40.4318 0 - 1.51797

56 16 575 559 39.9241 6 3.09559 1.52643

57 16 583 567 39.785 32 3.99229 1.55923

58 16 595 579 39.9266 48 1.37706 1.57952

59 16 612 596 40.4022 68 0.89873 1.56855

2018-03-20 17:07:28.749935 min lat: 0.229762 max lat: 4.29984 avg lat: 1.56738

sec Cur ops started finished avg MB/s cur MB/s last lat(s) avg lat(s)

60 16 624 608 40.5288 48 1.65518 1.56738

Total time run: 60.821654

Total writes made: 625

Write size: 4194304

Object size: 4194304

Bandwidth (MB/sec): 41.1038

Stddev Bandwidth: 23.0404

Max bandwidth (MB/sec): 72

Min bandwidth (MB/sec): 0

Average IOPS: 10

Stddev IOPS: 5

Max IOPS: 18

Min IOPS: 0

Average Latency(s): 1.55581

Stddev Latency(s): 0.981606

Max latency(s): 4.29984

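Min latency(s): 0.229762

With the optional --no-cleanup flag, the data written to the pool is not deleted after the test, so it can be reused to test the cluster's read performance.

The results show that the average write bandwidth is 41 MB/s, the maximum bandwidth is 72 MB/s, and the bandwidth standard deviation is 23 MB/s (an indication of how stable the network is).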
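The summary lines of the write benchmark can be cross-checked from the totals it prints. The sketch below recomputes bandwidth and IOPS from the number of writes, the object size and the run time, and also derives a coefficient of variation to put the standard deviation in proportion. The helper names are hypothetical; the inputs are copied from the output above, and the match with the reported bandwidth assumes that "MB" there means 2^20 bytes.

```python
MIB = 2 ** 20  # bytes per mebibyte


def bench_summary(total_ops: int, op_bytes: int, run_seconds: float) -> dict:
    """Recompute average bandwidth (MiB/s) and IOPS from benchmark totals."""
    total_mib = total_ops * op_bytes / MIB
    return {
        "bandwidth_mib_s": total_mib / run_seconds,
        "iops": total_ops / run_seconds,
    }


def coefficient_of_variation(stddev: float, mean: float) -> float:
    """Standard deviation relative to the mean (dimensionless)."""
    return stddev / mean


if __name__ == "__main__":
    # Totals from the write run: 625 writes of 4194304 bytes in 60.821654 s.
    write = bench_summary(total_ops=625, op_bytes=4194304, run_seconds=60.821654)
    print(f"bandwidth: {write['bandwidth_mib_s']:.4f} MiB/s")
    print(f"IOPS:      {write['iops']:.2f}")
    # Reported: Stddev Bandwidth 23.0404, Bandwidth 41.1038.
    print(f"CV:        {coefficient_of_variation(23.0404, 41.1038):.2f}")
```

A coefficient of variation above 0.5 means the per-second bandwidth swings by more than half of its mean, which matches the repeated stalls visible in the per-second rows.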

Read performance test

- Test read performance (random reads):

[root@node1 ~]# rados bench -p test 60 rand

sec Cur ops started finished avg MB/s cur MB/s last lat(s) avg lat(s)

0 0 0 0 0 0 - 0

1 16 101 85 339.935 340 0.270579 0.147057

2 16 145 129 257.955 176 0.246583 0.220784

3 16 191 175 233.297 184 0.53086 0.253465

4 16 236 220 219.968 180 0.0326233 0.268682

5 16 281 265 211.971 180 0.528696 0.286853

6 16 328 312 207.973 188 0.0203012 0.295207

7 16 371 355 202.831 172 0.283736 0.303328

8 16 415 399 199.475 176 0.508335 0.30781

9 16 461 445 197.753 184 0.24398 0.312503

10 16 510 494 197.576 196 0.499586 0.31802

11 16 556 540 196.34 184 0.259304 0.320708

12 16 602 586 195.31 184 0.745053 0.320777

13 16 646 630 193.823 176 0.0422189 0.32386

14 16 692 676 193.12 184 0.0467997 0.326607

15 16 735 719 191.711 172 0.0272729 0.327432

16 16 777 761 190.228 168 0.0160831 0.326381

17 16 821 805 189.39 176 0.483385 0.330262

18 16 865 849 188.645 176 0.0279903 0.330038

19 16 913 897 188.82 192 0.237649 0.332631

2018-03-20 17:08:51.231039 min lat: 0.00844047 max lat: 0.964959 avg lat: 0.332994

sec Cur ops started finished avg MB/s cur MB/s last lat(s) avg lat(s)

20 16 962 946 189.178 196 0.0115256 0.332994

21 16 1009 993 189.121 188 0.26545 0.334135

22 16 1052 1036 188.342 172 0.502163 0.335411

23 16 1095 1079 187.631 172 0.191482 0.335954

24 16 1140 1124 187.312 180 0.0187187 0.33593

25 16 1187 1171 187.339 188 0.0128352 0.336301

26 16 1232 1216 187.056 180 0.0260001 0.336886

27 16 1278 1262 186.942 184 0.0148474 0.336478

28 16 1324 1308 186.836 184 0.723555 0.337355

29 16 1367 1351 186.324 172 0.0246515 0.339247

30 16 1412 1396 186.113 180 0.0120403 0.339659

31 16 1460 1444 186.302 192 0.569969 0.338129

32 16 1506 1490 186.229 184 0.0316037 0.340041

33 16 1551 1535 186.04 180 0.0273989 0.340237

34 16 1596 1580 185.862 180 0.525298 0.340735

35 16 1638 1622 185.351 168 0.0101045 0.34052

36 16 1686 1670 185.535 192 0.0159173 0.34091

37 16 1731 1715 185.385 180 0.986173 0.339939

38 16 1775 1759 185.138 176 0.0152587 0.340806

39 16 1818 1802 184.8 172 0.216865 0.342337

2018-03-20 17:09:11.233088 min lat: 0.0080755 max lat: 1.20072 avg lat: 0.342772

sec Cur ops started finished avg MB/s cur MB/s last lat(s) avg lat(s)

40 16 1863 1847 184.68 180 0.298863 0.342772

41 16 1907 1891 184.468 176 0.539937 0.341949

42 16 1950 1934 184.17 172 0.501967 0.343196

43 16 1997 1981 184.259 188 0.258521 0.34255

44 16 2043 2027 184.253 184 0.0441231 0.343493

45 16 2088 2072 184.158 180 0.302963 0.343621

46 16 2135 2119 184.241 188 0.0198267 0.34337

47 16 2179 2163 184.065 176 0.26388 0.343744

48 16 2224 2208 183.98 180 0.274291 0.343872

49 16 2268 2252 183.817 176 0.0345847 0.343383

50 16 2314 2298 183.82 184 0.0555181 0.344454

51 16 2359 2343 183.745 180 0.288888 0.344362

52 16 2405 2389 183.749 184 0.280761 0.344848

53 16 2447 2431 183.452 168 0.0135715 0.34438

54 16 2496 2480 183.684 196 0.259152 0.344883

55 15 2542 2527 183.762 188 0.0231959 0.34473

56 15 2585 2570 183.552 172 0.235059 0.345157

57 16 2627 2611 183.208 164 0.272916 0.3454

58 16 2674 2658 183.29 188 0.534074 0.345242

59 16 2717 2701 183.099 172 0.261746 0.345621

2018-03-20 17:09:31.235266 min lat: 0.0080755 max lat: 1.20072 avg lat: 0.344692

sec Cur ops started finished avg MB/s cur MB/s last lat(s) avg lat(s)

60 16 2765 2749 183.247 192 0.213941 0.344692

Total time run: 60.297422

Total reads made: 2765

Read size: 4194304

Object size: 4194304

Bandwidth (MB/sec): 183.424

Average IOPS: 45

Stddev IOPS: 5

Max IOPS: 85

Min IOPS: 41

Average Latency(s): 0.346804

Max latency(s): 1.20072

Min latency(s): 0.0080755

The results show that the average read bandwidth is 183 MB/s, the average latency is about 0.35 s, and the average IOPS is 45.
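With a fixed number of operations in flight, throughput and latency are tied together by Little's law: operations per second are roughly the concurrency divided by the average latency. The sketch below applies this to the read and write runs as a plausibility check. The function names are illustrative, and the concurrency of 16 and the 4 MiB object size are taken from the benchmark output.

```python
OBJECT_MIB = 4      # 4194304-byte objects
CONCURRENCY = 16    # "Maintaining 16 concurrent writes" / Cur ops column


def littles_law_ops_per_s(concurrency: int, avg_latency_s: float) -> float:
    """Estimated operations per second: in-flight operations / average latency."""
    return concurrency / avg_latency_s


def estimated_bandwidth_mib_s(concurrency: int, avg_latency_s: float,
                              object_mib: float = OBJECT_MIB) -> float:
    """Estimated bandwidth in MiB/s from concurrency and average latency."""
    return littles_law_ops_per_s(concurrency, avg_latency_s) * object_mib


if __name__ == "__main__":
    # Average latencies reported by the two runs.
    read_est = estimated_bandwidth_mib_s(CONCURRENCY, 0.346804)
    write_est = estimated_bandwidth_mib_s(CONCURRENCY, 1.55581)
    print(f"read:  estimated {read_est:.1f} MiB/s, reported 183.424")
    print(f"write: estimated {write_est:.1f} MiB/s, reported 41.1038")
    print(f"reported read/write ratio: {183.424 / 41.1038:.2f}")
```

Both estimates land within about one percent of the reported bandwidths, and for these 4 MiB objects reads come out roughly 4.5 times faster than writes.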

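- Clean up the test data:

rados -p test cleanup

- Delete the test pool:

[root@node1 ~]# ceph osd pool delete test test --yes-i-really-really-mean-it

pool 'test' removed

Conclusions

Read and write performance was tested against Rados and RBD with different block sizes; the final results are summarized below: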

| Block size / target | Access pattern | Data read/written | Threads | IOPS | Bandwidth | Run time (s) |
|---|---|---|---|---|---|---|
| 4K Rados | Random read | 174M | 16 | 15563 | 60.7961MB/s | 2 |
| 4K Rados | Sequential read | 174M | 16 | 13199 | 51.5621MB/s | 2 |
| 4K Rados | Random write | 174M | 16 | 1486 | 5.80794MB/s | 30 |
| 4K RBD | Random read | 17.6G | 16 | 104000 | 587.7MB/s | 30 |
| 4K RBD | Sequential read | 2.2G | 16 | 23800 | 74MB/s | 30 |
| 4K RBD | Random write | 571M | 16 | 2352 | 19MB/s | 30 |
| 4K RBD | Sequential write | 43M | 16 | 352 | 1.4MB/s | 30 |
| 16K Rados | Random read | 615M | 16 | 13530 | 211.416MB/s | 2 |
| 16K Rados | Sequential read | 615M | 16 | 10842 | 169.419MB/s | 3.7 |
| 16K Rados | Random write | 615M | 16 | 1313 | 20.52864MB/s | 30 |
| 16K RBD | Random read | 56G | 16 | 120000 | 1881MB/s | 30 |
| 16K RBD | Sequential read | 10G | 16 | 25600 | 363MB/s | 30 |
| 16K RBD | Random write | 1.9G | 16 | 2854 | 65.8MB/s | 30 |
| 16K RBD | Sequential write | 170M | 16 | 384 | 5.7MB/s | 30 |
| 512K Rados | Random read | 8.88G | 16 | 4218 | 2109.11MB/s | 3 |
| 512K Rados | Sequential read | 8.88G | 16 | 4062 | 2031.33MB/s | 4 |
| 512K Rados | Random write | 8.88G | 16 | 592 | 296.0093MB/s | 30 |
| 512K RBD | Random read | 54G | 16 | 3719 | 1814.6MB/s | 30 |
| 512K RBD | Sequential read | 56G | 16 | 2834 | 1879.8MB/s | 30 |
| 512K RBD | Random write | 32G | 16 | 1649 | 1082.5MB/s | 30 |
| 512K RBD | Sequential write | 9G | 16 | 1650 | 303.8KB/s | 30 |
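One way to sanity-check a row of such a table is to multiply IOPS by the block size and compare the product with the reported bandwidth. The sketch below does this for the Rados random-read rows only, assuming that "MB/s" there means MiB/s (2^20 bytes); the tuple layout and function names are made up for this example.

```python
# (block size in KiB, IOPS, reported bandwidth in MB/s) for Rados random reads.
RADOS_RANDOM_READ = [
    (4, 15563, 60.7961),
    (16, 13530, 211.416),
    (512, 4218, 2109.11),
]


def expected_bandwidth_mib_s(block_kib: int, iops: int) -> float:
    """Bandwidth implied by IOPS and block size, in MiB/s."""
    return iops * block_kib / 1024


def relative_error(expected: float, reported: float) -> float:
    """Absolute difference as a fraction of the reported value."""
    return abs(expected - reported) / reported


if __name__ == "__main__":
    for block_kib, iops, reported in RADOS_RANDOM_READ:
        expected = expected_bandwidth_mib_s(block_kib, iops)
        err = relative_error(expected, reported)
        print(f"{block_kib:>3}K: IOPS x block = {expected:.2f} MiB/s, "
              f"reported {reported} (relative error {err:.4%})")
```

For these three rows the product agrees with the reported bandwidth to well under 0.1 percent; rows where the two disagree noticeably are worth re-measuring before drawing conclusions from them.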

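- Ceph performs very well on large-block reads and writes, reaching up to 2 GB/s.

- Rados reads are about 10 times faster than writes, which suits read-heavy, high-concurrency workloads.

- Pool configuration: two replicas perform considerably better than three, but three replicas are officially recommended because two are not safe enough.

- On machines that are not badly underpowered (4 cores and 8 GB or more), Ceph easily hits the 1 Gbit/s bandwidth ceiling; improving Ceph performance further means raising that bandwidth limit.

- More PGs give better load balancing, but in these tests a larger PG count did not improve performance.

- Setting the FileStore flusher to false gives a noticeable performance gain.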
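The "about 10 times" read-versus-write figure can be checked against the Rados rows of the results table. The following sketch computes the random-read to random-write IOPS ratio per block size; the dictionary and function names are illustrative, and the numbers are copied from the table.

```python
# block size -> (random read IOPS, random write IOPS) for the Rados rows.
RADOS_IOPS = {
    "4K": (15563, 1486),
    "16K": (13530, 1313),
    "512K": (4218, 592),
}


def read_write_ratio(read_iops: int, write_iops: int) -> float:
    """How many times faster reads are than writes, by IOPS."""
    return read_iops / write_iops


if __name__ == "__main__":
    for block, (read_iops, write_iops) in RADOS_IOPS.items():
        ratio = read_write_ratio(read_iops, write_iops)
        print(f"{block}: reads are {ratio:.1f}x faster than writes")
```

On these numbers the ratio is a little over 10 for 4K and 16K blocks and closer to 7 for 512K blocks, so the tenfold figure applies mainly to small blocks.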