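After watching my mentor crawl the entire 顶点 (Dingdian) site, I got the itch to crawl a big novel site myself, so I picked 宜搜 (Easou). Let's get straight to it. This time I'm using MongoDB, because MySQL felt like too much hassle. The image below shows the part of Easou I chose to traverse.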

First, the code layout diagram:

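The first step, of course, is to extract the link of every category on the ranking page, then follow each link and crawl it. Start with the all_theme file: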

from bs4 import BeautifulSoup
import requests
from MogoQueue import MogoQueue

spider_queue = MogoQueue('novel_list', 'crawl_queue')  # wrapper around the database operations; this collection stores the link of every listing page
theme_queue = MogoQueue('novel_list', 'theme_queue')  # this collection stores the link of every category page

html = requests.get('http://book.easou.com/w/cat_yanqing.html')
soup = BeautifulSoup(html.text, 'lxml')
all_list = soup.find('div', {'class': 'classlist'}).findAll('div', {'class': 'tit'})
for item in all_list:
    title = item.find('span', {'class': 'name'}).get_text()
    book_number = item.find('span', {'class': 'count'}).get_text()  # number of books in the category
    theme_link = item.find('a')['href']
    theme_links = 'http://book.easou.com/' + theme_link  # full link of the category page
    # print(title, book_number, theme_links)
    # This finds each category's title and link; the links are then copied into `links` below
    theme_queue.push_theme(theme_links, title, book_number)

links = [
    'http://book.easou.com//w/cat_yanqing.html',
    'http://book.easou.com//w/cat_xuanhuan.html',
    'http://book.easou.com//w/cat_dushi.html',
    'http://book.easou.com//w/cat_qingxiaoshuo.html',
    'http://book.easou.com//w/cat_xiaoyuan.html',
    'http://book.easou.com//w/cat_lishi.html',
    'http://book.easou.com//w/cat_wuxia.html',
    'http://book.easou.com//w/cat_junshi.html',
    'http://book.easou.com//w/cat_juqing.html',
    'http://book.easou.com//w/cat_wangyou.html',
    'http://book.easou.com//w/cat_kehuan.html',
    'http://book.easou.com//w/cat_lingyi.html',
    'http://book.easou.com//w/cat_zhentan.html',
    'http://book.easou.com//w/cat_jishi.html',
    'http://book.easou.com//w/cat_mingzhu.html',
    'http://book.easou.com//w/cat_qita.html',
]

def make_links(number, url):
    # A word of explanation: every category has a different number of pages, and the
    # last-page number is loaded dynamically, so it is not in the page source.
    # I typed the last page in by hand; capturing the requests felt like it would take longer.
    for i in range(int(number) + 1):
        link = url + '?attb=&s=&tpg=500&tp={}'.format(i)
        spider_queue.push_queue(link)  # insert every listing-page link into the database
        # print(link)

make_links(500, 'http://book.easou.com//w/cat_yanqing.html')
make_links(500, 'http://book.easou.com//w/cat_xuanhuan.html')
make_links(500, 'http://book.easou.com//w/cat_dushi.html')
make_links(5, 'http://book.easou.com//w/cat_qingxiaoshuo.html')
make_links(500, 'http://book.easou.com//w/cat_xiaoyuan.html')
make_links(500, 'http://book.easou.com//w/cat_lishi.html')
make_links(500, 'http://book.easou.com//w/cat_wuxia.html')
make_links(162, 'http://book.easou.com//w/cat_junshi.html')
make_links(17, 'http://book.easou.com//w/cat_juqing.html')
make_links(500, 'http://book.easou.com//w/cat_wangyou.html')
make_links(474, 'http://book.easou.com//w/cat_kehuan.html')
make_links(427, 'http://book.easou.com//w/cat_lingyi.html')
make_links(84, 'http://book.easou.com//w/cat_zhentan.html')
make_links(9, 'http://book.easou.com//w/cat_jishi.html')
make_links(93, 'http://book.easou.com//w/cat_mingzhu.html')
make_links(500, 'http://book.easou.com//w/cat_qita.html')

Here is the output. These are the book categories:

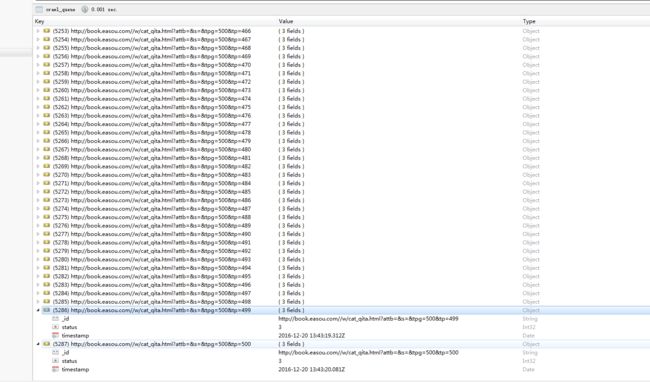
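The category URLs in this post contain a double slash (`//w/...`), which suggests the `href` values on the page already start with `/`. Assuming that is the case, here is a minimal sketch of joining them with the standard library's `urllib.parse.urljoin` instead of string concatenation. `BASE_URL` and `absolute_link` are names made up for this illustration, not part of the original script.

```python
from urllib.parse import urljoin

BASE_URL = 'http://book.easou.com/'  # site root used throughout this post


def absolute_link(href):
    """Resolve an href against the site root without doubling the slash."""
    return urljoin(BASE_URL, href)


print(absolute_link('/w/cat_yanqing.html'))
# http://book.easou.com/w/cat_yanqing.html
```

The site evidently served the double-slash URLs as well, so this mostly matters for keeping the `_id` keys in the database tidy and consistent.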

These are the page links constructed for every category. They are the crawler's entry points: a little over 5,000 pages in total.
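The sixteen hand-written `make_links` calls could also be driven from a table. The sketch below only builds the links in memory and does not touch the database. The page counts are the ones typed in by hand in this post, while `LAST_PAGES` and `page_links` are hypothetical names introduced for the example.

```python
# Category slug -> last page number (the values entered by hand in the post)
LAST_PAGES = {
    'yanqing': 500, 'xuanhuan': 500, 'dushi': 500, 'qingxiaoshuo': 5,
    'xiaoyuan': 500, 'lishi': 500, 'wuxia': 500, 'junshi': 162,
    'juqing': 17, 'wangyou': 500, 'kehuan': 474, 'lingyi': 427,
    'zhentan': 84, 'jishi': 9, 'mingzhu': 93, 'qita': 500,
}


def page_links(slug, last_page):
    """Build the listing-page links for one category, pages 0..last_page inclusive."""
    base = 'http://book.easou.com//w/cat_{}.html'.format(slug)
    return [base + '?attb=&s=&tpg=500&tp={}'.format(i) for i in range(last_page + 1)]


all_links = [link
             for slug, last_page in LAST_PAGES.items()
             for link in page_links(slug, last_page)]
print(len(all_links))  # total number of entry pages
```

Because the loop runs from 0 through the last page, each category contributes one more link than its page count, which is how the total lands a little above 5,000.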

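Next comes the wrapper around the database operations. Because the crawler uses multiple processes, each running multiple threads, the workers need to know which URLs have already been crawled and which still need crawling. We give every URL one of three states:

outstanding: a URL waiting to be crawled

complete: a URL that has been crawled

processing: a URL that is being crawled right now.

Every URL starts out as outstanding. When a worker starts crawling it, the state changes to processing; when the crawl finishes, the state changes to complete; a URL that fails is reset to outstanding. To handle the case where the process working on a URL is killed, we also set a timeout: once a URL has been processing for longer than this value, its state is reset to outstanding.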
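Before the MongoDB version, the state transitions described above can be sketched in plain Python. This is an in-memory stand-in written for illustration only: `MemoryQueue` is a hypothetical class, it is not shared between processes, and unlike the real queue its `repair` resets every timed-out URL in one pass.

```python
from datetime import datetime, timedelta

OUTSTANDING, PROCESSING, COMPLETE = 1, 2, 3


class MemoryQueue:
    """In-memory illustration of the URL states; not safe across processes."""

    def __init__(self, timeout=300):
        self.records = {}  # url -> {'status': ..., 'timestamp': ...}
        self.timeout = timeout

    def push(self, url):
        # setdefault leaves a URL untouched if it is already queued
        self.records.setdefault(url, {'status': OUTSTANDING, 'timestamp': None})

    def select(self, now=None):
        """Claim one outstanding URL, or raise KeyError if there is none."""
        now = now or datetime.now()
        for url, record in self.records.items():
            if record['status'] == OUTSTANDING:
                record['status'] = PROCESSING
                record['timestamp'] = now
                return url
        self.repair(now)
        raise KeyError('no outstanding URL')

    def repair(self, now=None):
        """Reset URLs that have been processing for longer than the timeout."""
        now = now or datetime.now()
        cutoff = now - timedelta(seconds=self.timeout)
        for record in self.records.values():
            if record['status'] == PROCESSING and record['timestamp'] < cutoff:
                record['status'] = OUTSTANDING

    def complete(self, url):
        self.records[url]['status'] = COMPLETE


queue = MemoryQueue(timeout=300)
queue.push('page-1')
start = datetime(2020, 1, 1)
queue.select(now=start)                             # page-1 is now PROCESSING
queue.repair(now=start + timedelta(seconds=301))    # the worker "died": reset it
print(queue.records['page-1']['status'] == OUTSTANDING)  # True
```

Passing `now` explicitly is just a convenience for the demonstration, so the timeout can be exercised without actually waiting five minutes.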

from pymongo import MongoClient, errors
from datetime import datetime, timedelta


class MogoQueue():
    # The three states a URL can be in
    OUTSTANDING = 1
    PROCESSING = 2
    COMPLETE = 3

    def __init__(self, db, collection, timeout=300):
        self.client = MongoClient()
        self.database = self.client[db]
        self.db = self.database[collection]  # note: self.db is the collection
        self.timeout = timeout

    def __bool__(self):
        # True while at least one URL has not been completed
        record = self.db.find_one(
            {'status': {'$ne': self.COMPLETE}}
        )
        return bool(record)

    def push_theme(self, url, title, number):
        # Add a new category URL, with its name and book count, to the queue.
        # insert_one replaces the legacy insert(), which PyMongo 4 removed.
        try:
            self.db.insert_one({'_id': url, 'status': self.OUTSTANDING, '主题': title, '书籍数量': number})
            print(title, url, 'added to the queue')
        except errors.DuplicateKeyError:  # the insert fails if the URL is already queued
            print(title, url, 'is already in the queue')

    def push_queue(self, url):
        try:
            self.db.insert_one({'_id': url, 'status': self.OUTSTANDING})
            print(url, 'added to the queue')
        except errors.DuplicateKeyError:  # the insert fails if the URL is already queued
            print(url, 'is already in the queue')

    def push_book(self, title, author, book_style, book_introduction, book_url):
        try:
            self.db.insert_one({'_id': book_url, '书籍名称': title, '书籍作者': author, '书籍类型': book_style, '简介': book_introduction})
            print(title, 'book added')
        except errors.DuplicateKeyError:
            print(title, 'book is already stored')

    def select(self):
        # Atomically claim one outstanding URL (find_one_and_update replaces find_and_modify)
        record = self.db.find_one_and_update(
            {'status': self.OUTSTANDING},
            {'$set': {'status': self.PROCESSING, 'timestamp': datetime.now()}}
        )
        if record:
            return record['_id']
        else:
            self.repair()
            raise KeyError

    def repair(self):
        record = self.db.find_one_and_update(
            {
                'timestamp': {'$lt': datetime.now() - timedelta(seconds=self.timeout)},
                'status': {'$ne': self.COMPLETE}
            },
            {'$set': {'status': self.OUTSTANDING}}  # a timed-out URL goes back to outstanding
        )
        if record:
            print('Reset URL', record['_id'])

    def complete(self, url):
        self.db.update_one({'_id': url}, {'$set': {'status': self.COMPLETE}})

Next is the main crawler program:

from ip_pool_request import html_request
from bs4 import BeautifulSoup
import random
import multiprocessing
import time
import threading
from ip_pool_request2 import download_request
from MogoQueue import MogoQueue


def novel_crawl(max_thread=8):
    crawl_queue = MogoQueue('novel_list', 'crawl_queue')  # connect to the database; this collection holds the listing-page links to crawl
    book_list = MogoQueue('novel_list', 'book_list')  # the crawled book records go here

    def pageurl_crawler():
        while True:
            try:
                url = crawl_queue.select()  # take a link from the database and start crawling
                print(url)
            except KeyError:  # raised when no outstanding link is left
                print('The queue has no data left')
                break  # stop this worker instead of looping forever
            else:
                data = html_request.get(url, 3)
                soup = BeautifulSoup(data, 'lxml')
                all_novel = soup.find('div', {'class': 'kindContent'}).findAll('li')
                for novel in all_novel:  # extract all the fields we need
                    text_tag = novel.find('div', {'class': 'textShow'})
                    title = text_tag.find('div', {'class': 'name'}).find('a').get_text()
                    author = text_tag.find('span', {'class': 'author'}).find('a').get_text()
                    book_style = text_tag.find('span', {'class': 'kind'}).find('a').get_text()
                    book_introduction = text_tag.find('div', {'class': 'desc'}).get_text().strip().replace('\n', '')
                    img_tag = novel.find('div', {'class': 'imgShow'}).find('a', {'class': 'common'})
                    book_url = 'http://book.easou.com/' + img_tag.attrs['href']
                    book_list.push_book(title, author, book_style, book_introduction, book_url)
                    # print(title, author, book_style, book_introduction, book_url)
                crawl_queue.complete(url)  # mark the link as complete once the page is done

    threads = []
    while threads or crawl_queue:
        for thread in threads[:]:  # iterate over a copy so that removing is safe
            if not thread.is_alive():
                threads.remove(thread)
        # only start new threads while there is still work in the queue
        while len(threads) < max_thread and crawl_queue:
            thread = threading.Thread(target=pageurl_crawler)  # pass the function itself, without calling it
            thread.daemon = True  # daemon thread: it exits together with the main thread
            thread.start()
            threads.append(thread)
        time.sleep(5)


def process_crawler():
    process = []
    num_cpus = multiprocessing.cpu_count()
    num_processes = max(1, num_cpus - 2)  # leave two cores free, but start at least one process
    print('Number of processes to start:', num_processes)
    for i in range(num_processes):
        p = multiprocessing.Process(target=novel_crawl)  # create a process
        p.start()
        process.append(p)
    for p in process:
        p.join()


if __name__ == '__main__':
    process_crawler()
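The thread-management part is easy to get wrong, so here is the same idea reduced to a runnable sketch with no network or database. It assumes a plain `queue.Queue` in place of the MongoDB queue, and `run_workers` and `handle` are hypothetical names. Note that `threading.Thread` takes the callable itself as `target`; writing `target=worker()` would run the function in the main thread before any thread starts.

```python
import queue
import threading


def run_workers(urls, handle, max_threads=8):
    """Drain `urls` with up to `max_threads` threads and return the handled results."""
    todo = queue.Queue()
    for url in urls:
        todo.put(url)
    results = []
    lock = threading.Lock()  # protects `results` across threads

    def worker():
        while True:
            try:
                url = todo.get_nowait()
            except queue.Empty:
                return  # nothing left, so let this thread finish
            result = handle(url)
            with lock:
                results.append(result)

    threads = [threading.Thread(target=worker) for _ in range(max_threads)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()  # wait until every worker has finished
    return results


pages = run_workers(['a', 'b', 'c'], handle=str.upper, max_threads=2)
print(sorted(pages))  # ['A', 'B', 'C']
```

Joining the threads instead of polling `is_alive()` every few seconds works here because the amount of work is known up front; the polling loop in the crawler is there because other processes keep changing the shared queue.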

Let's look at the results:

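A lot of the entries turned out to be duplicates, so after deduplication only a hundred-odd thousand books were left. So disappointing...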
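The deduplication comes for free from the storage scheme: every book is stored under its URL as `_id`, so a second insert of the same book is rejected. The sketch below shows the same keep-the-first-record idea in memory; the sample records and the `dedupe_by_url` helper are made up for the example.

```python
def dedupe_by_url(books):
    """Keep the first record seen for each book URL."""
    unique = {}
    for book in books:
        unique.setdefault(book['url'], book)  # later duplicates are ignored
    return list(unique.values())


# Hypothetical records: the same book showing up on two listing pages
books = [
    {'url': '/novel/1', 'title': 'A'},
    {'url': '/novel/2', 'title': 'B'},
    {'url': '/novel/1', 'title': 'A'},
]
print(len(dedupe_by_url(books)))  # 2
```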