Machine Learning in Action: Decision Trees (with dataset)

Environment: Anaconda, Jupyter Notebook

Python version: 3.6.6

Dataset: lenses.txt

Extraction code: 9wsp

1. Decision Trees

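Decision trees are among the most commonly used data-mining algorithms. In a decision-tree flowchart, rectangles represent decision blocks and ovals represent terminating blocks, meaning a conclusion has been reached and execution can stop. The arrows leading out of a decision block are called branches; each one leads either to another decision block or to a terminating block.

The biggest drawback of the k-nearest neighbors algorithm is that it cannot tell us anything about the intrinsic meaning of the data. The main advantage of decision trees is that their form is very easy to understand.

A decision-tree algorithm reads in a data set. One important task of a decision tree is to understand the knowledge contained in the data, so a decision tree can take an unfamiliar data set and extract a set of rules from it. The process by which the machine creates these rules from the data set is the machine-learning process.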

1.1 Constructing a Decision Tree

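Decision trees

Pros: low computational cost, results that are easy to interpret, insensitive to missing intermediate values, able to handle irrelevant features.

Cons: prone to overfitting.

Works with: numeric and nominal values.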

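We first discuss how to split a data set mathematically using information theory. We then write code that applies the theory to a concrete data set, and finally write code that builds the decision tree.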

The pseudocode for the branch-creating function createBranch() is as follows:

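Check whether every item in the data set belongs to the same class:
    If so return the class label
    Else
        find the best feature to split the data set on
        split the data set
        create a branch node
        for each subset of the split
            call createBranch and add the result to the branch node
        return the branch node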

General approach to decision trees

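(1) Collect: any method.

(2) Prepare: the tree-construction algorithm works only with nominal values, so numeric values must be discretized.

(3) Analyze: any method. After the tree is built, check the plotted tree to confirm it matches expectations.

(4) Train: construct the tree data structure.

(5) Test: compute the error rate using the learned tree.

(6) Use: this step applies to any supervised learning algorithm; a decision tree additionally helps you understand the intrinsic meaning of the data.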
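Step (2) says numeric features must be discretized before the tree-building code in this post can use them. Below is a minimal sketch that assumes fixed, hand-chosen cut points. `discretize`, the cut points and the bin names are hypothetical and are not part of the original code.

```python
import bisect

def discretize(value, cut_points, names):
    """Map a numeric value to a nominal bin name.

    cut_points must be sorted ascending and len(names) == len(cut_points) + 1.
    bisect_right puts a value equal to a cut point into the upper bin.
    """
    return names[bisect.bisect_right(cut_points, value)]

# Hypothetical example: bucket ages into three nominal categories
cuts = [18, 40]
age_bins = ['young', 'middle', 'old']
ages = [12, 18, 35, 40, 67]
print([discretize(a, cuts, age_bins) for a in ages])
```

Picking cut points by hand is the simplest option. Data-driven choices, such as quantiles or thresholds that maximize information gain, are also common.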

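- This post uses the ID3 algorithm to split the data set. ID3 decides how to split the data set and when to stop splitting, and each split uses exactly one feature.

- Suppose we now want to decide whether to split the data on the first feature or the second. Before we can answer, we need a quantitative way to judge how the data should be split.

- In everyday life, rare events attract attention when they happen. A plane crash makes the news even though the probability of a crash is tiny, while commonplace events go unnoticed. In other words, rare events carry more information. In statistical terms, an event with a lower probability carries more information: the smaller its probability, the greater its information content. Information content therefore decreases as the probability of the event increases.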
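Concretely, the information content (self-information) of an event with probability p is -log2 p bits. It grows as p shrinks, but logarithmically rather than in inverse proportion: halving the probability adds exactly one bit. A small sketch, where `self_information` is a hypothetical helper name:

```python
from math import log2

def self_information(p):
    """Information content, in bits, of an event with probability p (0 < p <= 1)."""
    return -log2(p)

for p in (0.5, 0.25, 0.01):
    # 0.5 gives 1 bit, 0.25 gives 2 bits, 0.01 gives about 6.64 bits
    print(p, self_information(p))
```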

1.1.1 Information Gain

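The guiding principle of splitting a data set is to make disordered data more ordered.

We can use information theory to measure the information content of the data before and after a split.

The change in information produced by a split is called the information gain.

The feature that yields the highest information gain is the best choice.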

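Before we can evaluate which split is best, we need to learn how to compute information gain. The measure of the information in a set is called the Shannon entropy, or simply entropy.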
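For reference, the information gain of splitting a data set D on a feature A can be written as follows. The notation D, A and D_v is introduced here and does not come from the original.

$$
\mathrm{Gain}(D, A) = H(D) - \sum_{v \in \mathrm{Values}(A)} \frac{|D_v|}{|D|}\, H(D_v)
$$

H is the Shannon entropy of the class labels, and D_v is the subset of D in which feature A takes the value v. ID3 picks the feature with the largest gain. This is the quantity that chooseBestFeatureToSplit compares across features.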

We receive countless messages every day, but only the ones we care about (or that are useful to us) count as information.

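Entropy is defined as the expected value of information. If the items being classified may fall into one of several classes, the information of the symbol x_i is defined as

$$l(x_i) = -\log_2 p(x_i)$$

where p(x_i) is the probability of choosing that class.

To compute the entropy, we take the expected value of the information over all possible values of all classes:

$$H = -\sum_{i=1}^{n} p(x_i) \log_2 p(x_i)$$

where n is the number of classes.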

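def dataSet():
    # Five instances: two features (no surfacing, flippers) plus the class label (is it a fish?)
    dataSet = [[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]
    labels = ['no surfacing', 'flippers']
    return dataSet, labels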

myDat,labels = dataSet()

myDat

[[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]

labels

['no surfacing', 'flippers']

# Compute the Shannon entropy of a data set
from math import log

def caclShannonEnt(dataSet):
    # Total number of instances
    numEntries = len(dataSet)
    labelCounts = {}
    # 1. Build a dict counting how often each class label (the last column) occurs
    for featVec in dataSet:
        currentLabel = featVec[-1]
        # If the label is not a key yet, add it with count 0; then increment its count
        if currentLabel not in labelCounts:
            labelCounts[currentLabel] = 0
        labelCounts[currentLabel] += 1
    shannonEnt = 0.0
    for key in labelCounts:
        prob = float(labelCounts[key]) / numEntries
        # 2. Accumulate -p * log2(p)
        shannonEnt -= prob * log(prob, 2)
    return shannonEnt

caclShannonEnt(myDat)

0.9709505944546686
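As a hand check of this value, the five instances carry two `yes` labels and three `no` labels, so

$$
H = -\left(\frac{2}{5}\log_2\frac{2}{5} + \frac{3}{5}\log_2\frac{3}{5}\right) \approx 0.4 \times 1.3219 + 0.6 \times 0.7370 \approx 0.9710
$$

This agrees with the output above.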

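The higher the entropy, the more mixed the data. Once we can compute entropy, we can split the data set in whichever way yields the largest information gain.

First, let's add another class to the data set and see how the entropy changes: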

myDat[0][-1] = 'maybe'

myDat

[[1, 1, 'maybe'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]

caclShannonEnt(myDat)

1.3709505944546687
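The same entropy can be computed more compactly with collections.Counter, which does the label counting that caclShannonEnt does with a dict. This is a sketch. `entropy` is a hypothetical name, and it takes a list of class labels rather than whole rows:

```python
from collections import Counter
from math import log2

def entropy(class_labels):
    """Shannon entropy, in bits, of a sequence of class labels."""
    n = len(class_labels)
    return -sum((c / n) * log2(c / n) for c in Counter(class_labels).values())

print(entropy(['yes', 'yes', 'no', 'no', 'no']))    # about 0.971
print(entropy(['maybe', 'yes', 'no', 'no', 'no']))  # about 1.371
```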

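1.1.2 Splitting the Data Set

Besides measuring information entropy, a classification algorithm must split the data set and measure the entropy of the result, so it can tell whether the data set has been split well. We will compute the entropy of the split produced by each feature and then decide which feature gives the best split.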

# Listing: split the data set on a given feature
def splitDataSet(dataSet, axis, value):
    # 1. Create a new list so the caller's data set is not modified
    retDataSet = []
    for featVec in dataSet:
        if featVec[axis] == value:
            # 2. Extract the instance without the feature at index axis
            reducedFeatVec = featVec[:axis]
            reducedFeatVec.extend(featVec[axis+1:])
            retDataSet.append(reducedFeatVec)
    return retDataSet

splitDataSet(myDat,0,1)

[[1, 'maybe'], [1, 'yes'], [0, 'no']]

splitDataSet(myDat,0,0)

[[1, 'no'], [1, 'no']]
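splitDataSet builds new lists instead of editing featVec because Python passes list references. Deleting the column in place would also change the rows of the caller's data set. The same selection can be written as a single list comprehension. `split_rows` is a hypothetical name for this sketch, which returns the same results as the calls above and leaves its input unchanged:

```python
def split_rows(rows, axis, value):
    """Rows whose column `axis` equals `value`, with that column removed.

    Slicing and list concatenation build new lists, so the input rows are not modified.
    """
    return [row[:axis] + row[axis + 1:] for row in rows if row[axis] == value]

data = [[1, 1, 'maybe'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]
print(split_rows(data, 0, 1))  # [[1, 'maybe'], [1, 'yes'], [0, 'no']]
print(split_rows(data, 0, 0))  # [[1, 'no'], [1, 'no']]
print(data[0])                 # [1, 1, 'maybe'] (unchanged)
```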

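Next, loop over the whole data set, calling caclShannonEnt() and splitDataSet() for every feature, to find the feature that gives the best split (the largest information gain):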

def chooseBestFeatureToSplit(dataSet):
    # The last column is the class label, so it is not a feature
    numFeatures = len(dataSet[0]) - 1
    # Entropy of the whole data set before splitting
    baseEntropy = caclShannonEnt(dataSet)
    bestInfoGain = 0.0
    bestFeature = -1
    for i in range(numFeatures):
        # All distinct values that feature i takes
        featList = [example[i] for example in dataSet]
        uniqueVals = set(featList)
        newEntropy = 0.0
        for value in uniqueVals:
            subDataSet = splitDataSet(dataSet, i, value)
            prob = len(subDataSet) / float(len(dataSet))
            # Weight each subset's entropy by the fraction of instances it contains
            newEntropy += prob * caclShannonEnt(subDataSet)
        # Information gain = reduction in entropy produced by the split
        infoGain = baseEntropy - newEntropy
        if infoGain > bestInfoGain:
            bestInfoGain = infoGain
            bestFeature = i
    return bestFeature

chooseBestFeatureToSplit(myDat)

0
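To see the numbers behind this choice, the sketch below computes the information gain of each feature on the original five-row data set, before the first label was changed to 'maybe'. `entropy`, `info_gain` and `fish` are hypothetical names. The logic is the standard ID3 gain: the base entropy minus the size-weighted entropy of each subset.

```python
from collections import Counter
from math import log2

def entropy(class_labels):
    n = len(class_labels)
    return -sum((c / n) * log2(c / n) for c in Counter(class_labels).values())

def info_gain(rows, axis):
    """ID3 information gain of splitting rows on column `axis` (last column = class)."""
    base = entropy([r[-1] for r in rows])
    remainder = 0.0
    for v in set(r[axis] for r in rows):
        subset = [r[-1] for r in rows if r[axis] == v]
        remainder += len(subset) / len(rows) * entropy(subset)
    return base - remainder

fish = [[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]
gains = [info_gain(fish, i) for i in range(len(fish[0]) - 1)]
print(gains)  # roughly [0.420, 0.171]
```

Feature 0 (no surfacing) gains about 0.420 bits and feature 1 (flippers) about 0.171, so feature 0 is chosen.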

1.1.3 Building the Tree Recursively

# Majority vote: return the class label that occurs most often
import operator

def majorityCnt(classList):
    classCount = {}
    # Count each class label in classList
    for vote in classList:
        if vote not in classCount:
            classCount[vote] = 0
        classCount[vote] += 1
    # After counting every label, sort (label, count) pairs by count, largest first
    sortedClassCount = sorted(classCount.items(), key=operator.itemgetter(1), reverse=True)
    return sortedClassCount[0][0]

def createTree(dataSet, labels):
    # Note: the chosen feature's name is deleted from labels, so the caller's list is modified
    classList = [example[-1] for example in dataSet]
    # Stop if every instance has the same class
    if classList.count(classList[0]) == len(classList):
        return classList[0]
    # Stop if no features are left and return the majority class
    if len(dataSet[0]) == 1:
        return majorityCnt(classList)
    bestFeat = chooseBestFeatureToSplit(dataSet)
    print('bestFeat:', bestFeat)
    bestFeatLabel = labels[bestFeat]
    print('bestFeatLabel:', bestFeatLabel)
    myTree = {bestFeatLabel: {}}
    del labels[bestFeat]
    featValues = [example[bestFeat] for example in dataSet]
    print('featValues:', featValues)
    uniqueVals = set(featValues)
    print('uniqueVals:', uniqueVals)
    for value in uniqueVals:
        # Copy the label list so each branch's recursive call works on its own list
        subLabels = labels[:]
        print(splitDataSet(dataSet, bestFeat, value))
        myTree[bestFeatLabel][value] = createTree(splitDataSet(dataSet, bestFeat, value), subLabels)
        print('myTree:', myTree)
    return myTree

myTree = createTree(myDat, labels)

bestFeat: 0

bestFeatLabel: no surfacing

featValues: [1, 1, 1, 0, 0]

uniqueVals: {0, 1}

[[1, 'no'], [1, 'no']]

myTree: {'no surfacing': {0: 'no'}}

[[1, 'maybe'], [1, 'yes'], [0, 'no']]

bestFeat: 0

bestFeatLabel: flippers

featValues: [1, 1, 0]

uniqueVals: {0, 1}

[['no']]

myTree: {'flippers': {0: 'no'}}

[['maybe'], ['yes']]

myTree: {'flippers': {0: 'no', 1: 'maybe'}}

myTree: {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'maybe'}}}}
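majorityCnt can also be written with collections.Counter. This is an alternative sketch, not the book's code, and `majority_vote` is a hypothetical name. Counter.most_common(1) returns the most frequent label. On a tie it keeps the label encountered first (documented from Python 3.7), which matches what a stable descending sort of the counts in first-seen order does.

```python
from collections import Counter

def majority_vote(class_list):
    """Return the most common label in class_list (the first-seen label wins a tie)."""
    return Counter(class_list).most_common(1)[0][0]

print(majority_vote(['no', 'yes', 'no']))  # no
print(majority_vote(['maybe', 'yes']))     # maybe (tie, first seen wins)
```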

1.2 Plotting Trees in Python with Matplotlib Annotations

import matplotlib.pyplot as plt

# Box styles for decision nodes and leaf nodes, and the arrow style
decisionNode = dict(boxstyle='sawtooth', fc='0.8')
leafNode = dict(boxstyle='round4', fc='0.8')
arrow_args = dict(arrowstyle='<-')

def plotNode(nodeText, centerPt, parentPt, nodeType):
    # nodeText is the text to display, centerPt is the center of the text,
    # parentPt is the point the arrow comes from (it points from parentPt to the text)
    createPlot.ax1.annotate(nodeText, xytext=centerPt, textcoords='axes fraction',
                            xy=parentPt, xycoords='axes fraction',
                            va='center', ha='center', bbox=nodeType, arrowprops=arrow_args)

def createPlot():
    fig = plt.figure(1, facecolor='white')
    fig.clf()
    # Store the axes as a function attribute so plotNode can reach it
    createPlot.ax1 = plt.subplot(111, frameon=False)
    plotNode('Decision node', (0.5, 0.1), (0.1, 0.5), decisionNode)
    plotNode('Leaf node', (0.8, 0.1), (0.3, 0.8), leafNode)
    plt.show()

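# Count the leaf nodes
def getNumLeafs(myTree):
    numNode = 0
    # In Python 3, dict.keys() returns a view, which must be converted to a list before indexing
    firstStr = list(myTree.keys())[0]
    secondDict = myTree[firstStr]
    for key in secondDict.keys():
        # A dict value is a subtree; anything else is a leaf
        if isinstance(secondDict[key], dict):
            numNode += getNumLeafs(secondDict[key])
        else:
            numNode += 1
    return numNode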

getNumLeafs(myTree)

3

# Get the depth of the decision tree
def getTreeDepth(myTree):
    maxDepth = 0
    firstStr = list(myTree.keys())[0]
    secondDict = myTree[firstStr]
    for key in secondDict.keys():
        if isinstance(secondDict[key], dict):
            thisDepth = 1 + getTreeDepth(secondDict[key])
        else:
            thisDepth = 1
        # Keep the deepest branch, not just the last one visited
        if thisDepth > maxDepth:
            maxDepth = thisDepth
    return maxDepth

getTreeDepth(myTree)

2
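Both counts can be written as short recursive expressions over the nested dict. Depth must take the maximum over all branches. A version that returns only the last branch's depth happens to give the right answer for retrieveTree(0), but reports 2 instead of 3 for retrieveTree(1). A sketch; `count_leaves`, `tree_depth`, `tree0` and `tree1` are hypothetical names, and the two trees are copies of the ones in retrieveTree:

```python
def count_leaves(tree):
    """Number of leaf (non-dict) values in a nested-dict tree."""
    root = next(iter(tree))
    return sum(count_leaves(child) if isinstance(child, dict) else 1
               for child in tree[root].values())

def tree_depth(tree):
    """Number of decision levels along the deepest branch."""
    root = next(iter(tree))
    return max(1 + tree_depth(child) if isinstance(child, dict) else 1
               for child in tree[root].values())

tree0 = {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}
tree1 = {'no surfacing': {0: 'no', 1: {'flippers': {0: {'head': {0: 'no', 1: 'yes'}}, 1: 'no'}}}}
print(count_leaves(tree0), tree_depth(tree0))  # 3 2
print(count_leaves(tree1), tree_depth(tree1))  # 4 3
```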

Next, the function retrieveTree returns pre-stored trees, so we don't have to build a tree from data every time we test the code.

# Predefined trees for testing
def retrieveTree(i):
    listOfTrees = [
        {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}},
        {'no surfacing': {0: 'no', 1: {'flippers': {0: {'head': {0: 'no', 1: 'yes'}}, 1: 'no'}}}}
    ]
    return listOfTrees[i]

myTree = retrieveTree(0)

myTree

{'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}

print('getNumLeaf: %d,getNumDepth: %d' %(getNumLeafs(myTree),getTreeDepth(myTree)))

getNumLeaf: 3,getNumDepth: 2

labels = ['no surfacing', 'flippers']

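# Draw text midway between a parent node and a child node (the branch label)
def plotMidText(cntrPt, parentPt, txtString):
    # x coordinate of the midpoint
    xMid = (parentPt[0] - cntrPt[0]) / 2.0 + cntrPt[0]
    # y coordinate of the midpoint
    yMid = (parentPt[1] - cntrPt[1]) / 2.0 + cntrPt[1]
    # Draw the label text at the midpoint
    createPlot.ax1.text(xMid, yMid, txtString, va='center', ha='center', rotation=30)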

# Draw the decision tree
def plotTree(myTree, parentPt, nodeTxt):
    # Number of leaves and depth of this (sub)tree
    numLeafs = getNumLeafs(myTree)
    depth = getTreeDepth(myTree)
    # myTree.keys()[0] works only in Python 2; in Python 3, convert the keys view to a list first
    firstSides = list(myTree.keys())
    firstStr = firstSides[0]
    # Center this node horizontally over the leaves it spans
    cntrPt = (plotTree.xOff + (1.0 + float(numLeafs)) / 2.0 / plotTree.totalw, plotTree.yOff)
    print('c:', cntrPt)
    plotMidText(cntrPt, parentPt, nodeTxt)
    plotNode(firstStr, cntrPt, parentPt, decisionNode)
    secondDict = myTree[firstStr]
    # Move down one level for the children
    plotTree.yOff = plotTree.yOff - 1.0 / plotTree.totalD
    print('d:', plotTree.yOff)
    for key in secondDict.keys():
        # If secondDict[key] is a subtree (a dict), draw it recursively
        if isinstance(secondDict[key], dict):
            plotTree(secondDict[key], cntrPt, str(key))
        else:
            # Otherwise it is a leaf: move one slot to the right and draw it
            plotTree.xOff = plotTree.xOff + 1.0 / plotTree.totalw
            print('e:', plotTree.xOff)
            plotNode(secondDict[key], (plotTree.xOff, plotTree.yOff), cntrPt, leafNode)
            plotMidText((plotTree.xOff, plotTree.yOff), cntrPt, str(key))
    # Move back up once all children have been drawn
    plotTree.yOff = plotTree.yOff + 1.0 / plotTree.totalD
    print('f:', plotTree.yOff)

def createPlot(inTree):
    fig = plt.figure(1, facecolor='white')
    fig.clf()
    # Hide the axis ticks
    axprops = dict(xticks=[], yticks=[])
    createPlot.ax1 = plt.subplot(111, frameon=False, **axprops)
    # Layout state is stored as attributes of plotTree
    plotTree.totalw = float(getNumLeafs(inTree))   # total width, in leaves
    plotTree.totalD = float(getTreeDepth(inTree))  # total depth
    plotTree.xOff = -0.5 / plotTree.totalw          # x of the last leaf drawn; starts half a slot left of 0
    plotTree.yOff = 1.0
    plotTree(inTree, (0.5, 1.0), '')
    plt.show()

axprops = dict(xticks=[],yticks=[])

xticks is a list of the tick positions shown on the x-axis, and yticks is the same for the y-axis. Passing empty lists hides the ticks.

createPlot(retrieveTree(0))

c: (0.5, 1.0)

d: 0.5

e: 0.16666666666666666

c: (0.6666666666666666, 0.5)

d: 0.0

e: 0.5

e: 0.8333333333333333

f: 0.5

f: 1.0
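The c/d/e/f numbers above come from plotTree's layout arithmetic. Each of the totalw leaves gets a slot 1/totalw wide and is placed at the slot's center, which is why xOff starts half a slot to the left of 0. Each decision node is centered between the first and last leaf it spans, and yOff drops by 1/totalD per level. The sketch below replays that arithmetic without matplotlib so it can be checked against the printed trace. `layout_positions`, `count_leaves` and `tree_depth` are hypothetical helpers, not part of the original code.

```python
def count_leaves(tree):
    root = next(iter(tree))
    return sum(count_leaves(c) if isinstance(c, dict) else 1
               for c in tree[root].values())

def tree_depth(tree):
    root = next(iter(tree))
    return max(1 + tree_depth(c) if isinstance(c, dict) else 1
               for c in tree[root].values())

def layout_positions(tree):
    """Replay plotTree's coordinate arithmetic without drawing anything.

    Returns two lists of (label, (x, y)) in the order plotTree visits them:
    decision nodes and leaf nodes.
    """
    total_w = float(count_leaves(tree))
    total_d = float(tree_depth(tree))
    # Mutable state standing in for plotTree.xOff / plotTree.yOff
    state = {'x': -0.5 / total_w, 'y': 1.0}
    decisions, leaves = [], []

    def visit(subtree):
        root = next(iter(subtree))
        n = count_leaves(subtree)
        # Center the node midway between the first and last leaf it spans
        center = (state['x'] + (1.0 + n) / 2.0 / total_w, state['y'])
        decisions.append((root, center))
        state['y'] -= 1.0 / total_d          # step down one level
        for child in subtree[root].values():
            if isinstance(child, dict):
                visit(child)
            else:
                state['x'] += 1.0 / total_w  # next leaf slot to the right
                leaves.append((child, (state['x'], state['y'])))
        state['y'] += 1.0 / total_d          # step back up

    visit(tree)
    return decisions, leaves

tree0 = {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}
decisions, leaves = layout_positions(tree0)
print(decisions)
print(leaves)
```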

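# Parameters: inTree     - the decision tree model
#             featLabels - names of the features, in the same order as testVec
#             testVec    - the feature values of the instance to classify
# Returns: classLabel, the predicted class
def classify(inTree, featLabels, testVec):
    firstStr = list(inTree.keys())[0]
    secondDict = inTree[firstStr]
    # Find which position of testVec holds the feature tested at this node
    featIndex = featLabels.index(firstStr)
    key = testVec[featIndex]
    valueOfFeat = secondDict[key]
    # A dict is a subtree, so keep descending; otherwise it is the class label
    if isinstance(valueOfFeat, dict):
        classLabel = classify(valueOfFeat, featLabels, testVec)
    else:
        classLabel = valueOfFeat
    return classLabel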

classify(myTree,labels,(1,0))

'no'
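Step (5) of the general approach is to test the tree by computing its error rate. Below is a minimal sketch that assumes labeled rows in the same format as myDat. `classify_vec` and `error_rate` are hypothetical names, and classify_vec mirrors classify above. Evaluating on the training rows, as here, only checks that the tree fits them; a real test would use rows held out from training. A feature value never seen during training raises KeyError in this lookup, just as it does in classify.

```python
def classify_vec(tree, feat_labels, test_vec):
    """Walk a nested-dict tree (same shape as retrieveTree's) down to a leaf label."""
    root = next(iter(tree))
    branch = tree[root][test_vec[feat_labels.index(root)]]
    return classify_vec(branch, feat_labels, test_vec) if isinstance(branch, dict) else branch

def error_rate(tree, feat_labels, rows):
    """Fraction of rows (feature values..., true label) that the tree misclassifies."""
    wrong = sum(classify_vec(tree, feat_labels, r[:-1]) != r[-1] for r in rows)
    return wrong / len(rows)

tree0 = {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}
feature_names = ['no surfacing', 'flippers']
fish = [[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]
print(classify_vec(tree0, feature_names, [1, 1]))  # yes
print(error_rate(tree0, feature_names, fish))      # 0.0
```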

myTree = retrieveTree(1)

# Store the decision tree with the pickle module
import pickle

def storeTree(inputTree, filename):
    # Open the file for writing. Using 'w' as in the book raises
    # "TypeError: write() argument must be str, not bytes", so write in binary mode 'wb'
    with open(filename, 'wb') as fw:
        pickle.dump(inputTree, fw)  # save inputTree to fw; the with-block closes the file

def grabTree(filename):
    # Read in binary mode 'rb' to match the binary write
    with open(filename, 'rb') as fr:
        return pickle.load(fr)

storeTree(myTree,'classifierStorage.txt')

grabTree('classifierStorage.txt')

{'no surfacing': {0: 'no',

1: {'flippers': {0: {'head': {0: 'no', 1: 'yes'}}, 1: 'no'}}}}
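A quick way to check this kind of persistence is a round trip through a temporary file, so nothing is left behind on disk. Below is a minimal sketch that uses only the standard library; the file name tree.pkl is arbitrary. As the pickle documentation warns, only unpickle files you trust, because loading a pickle can execute arbitrary code.

```python
import os
import pickle
import tempfile

tree1 = {'no surfacing': {0: 'no', 1: {'flippers': {0: {'head': {0: 'no', 1: 'yes'}}, 1: 'no'}}}}

# Write and read back in binary mode; the with-blocks close the files
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, 'tree.pkl')
    with open(path, 'wb') as fw:
        pickle.dump(tree1, fw)
    with open(path, 'rb') as fr:
        restored = pickle.load(fr)

print(restored == tree1)  # True: an equal but separate copy of the tree
```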

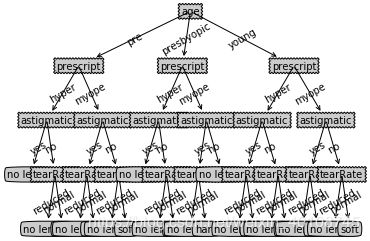

with open('lenses.txt') as fr:
    # Each line holds four tab-separated feature values followed by the class label
    lenses = [inst.strip().split('\t') for inst in fr.readlines()]
lenseLabels = ['age', 'prescript', 'astigmatic', 'tearRate']
lenseTree = createTree(lenses, lenseLabels)
lenseTree

While building the tree, createTree also prints bestFeat, bestFeatLabel, featValues, uniqueVals, each subset and each intermediate subtree, as in the small example above. The resulting tree is:

{'tearRate': {'reduced': 'no lenses', 'normal': {'astigmatic': {'yes': {'prescript': {'hyper': {'age': {'pre': 'no lenses', 'presbyopic': 'no lenses', 'young': 'hard'}}, 'myope': 'hard'}}, 'no': {'age': {'pre': 'soft', 'presbyopic': {'prescript': {'hyper': 'soft', 'myope': 'no lenses'}}, 'young': 'soft'}}}}}}

The order of the keys inside each dict can differ between runs. It follows the iteration order of uniqueVals, and the iteration order of a set of strings depends on Python's hash randomization.

createPlot(lenseTree)

While drawing, the print calls in plotTree also output the c:, d:, e: and f: coordinates of every node.
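ID3 places the feature with the highest information gain at the root. As a cross-check on the lenses data, the sketch below hard-codes the 24 rows of lenses.txt and computes the gain of each feature. The rows were transcribed by hand, so treat them as an assumption and verify them against your copy of the file. `LENSES`, `FEATURES`, `entropy` and `info_gain` are hypothetical names.

```python
from collections import Counter
from math import log2

# (age, prescript, astigmatic, tearRate, class): transcribed by hand, verify against lenses.txt
LENSES = [
    ('young', 'myope', 'no', 'reduced', 'no lenses'),
    ('young', 'myope', 'no', 'normal', 'soft'),
    ('young', 'myope', 'yes', 'reduced', 'no lenses'),
    ('young', 'myope', 'yes', 'normal', 'hard'),
    ('young', 'hyper', 'no', 'reduced', 'no lenses'),
    ('young', 'hyper', 'no', 'normal', 'soft'),
    ('young', 'hyper', 'yes', 'reduced', 'no lenses'),
    ('young', 'hyper', 'yes', 'normal', 'hard'),
    ('pre', 'myope', 'no', 'reduced', 'no lenses'),
    ('pre', 'myope', 'no', 'normal', 'soft'),
    ('pre', 'myope', 'yes', 'reduced', 'no lenses'),
    ('pre', 'myope', 'yes', 'normal', 'hard'),
    ('pre', 'hyper', 'no', 'reduced', 'no lenses'),
    ('pre', 'hyper', 'no', 'normal', 'soft'),
    ('pre', 'hyper', 'yes', 'reduced', 'no lenses'),
    ('pre', 'hyper', 'yes', 'normal', 'no lenses'),
    ('presbyopic', 'myope', 'no', 'reduced', 'no lenses'),
    ('presbyopic', 'myope', 'no', 'normal', 'no lenses'),
    ('presbyopic', 'myope', 'yes', 'reduced', 'no lenses'),
    ('presbyopic', 'myope', 'yes', 'normal', 'hard'),
    ('presbyopic', 'hyper', 'no', 'reduced', 'no lenses'),
    ('presbyopic', 'hyper', 'no', 'normal', 'soft'),
    ('presbyopic', 'hyper', 'yes', 'reduced', 'no lenses'),
    ('presbyopic', 'hyper', 'yes', 'normal', 'no lenses'),
]
FEATURES = ['age', 'prescript', 'astigmatic', 'tearRate']

def entropy(class_labels):
    n = len(class_labels)
    return -sum((c / n) * log2(c / n) for c in Counter(class_labels).values())

def info_gain(rows, axis):
    """ID3 information gain of splitting rows on column `axis` (last column = class)."""
    base = entropy([r[-1] for r in rows])
    remainder = 0.0
    for v in set(r[axis] for r in rows):
        subset = [r[-1] for r in rows if r[axis] == v]
        remainder += len(subset) / len(rows) * entropy(subset)
    return base - remainder

gains = {name: info_gain(LENSES, i) for i, name in enumerate(FEATURES)}
for name, g in sorted(gains.items(), key=lambda kv: -kv[1]):
    print(f'{name:>10}: {g:.3f}')
```

tearRate has by far the largest gain (about 0.549 bits), ahead of astigmatic (about 0.377), while age and prescript are both near 0.04. A correct ID3 run should therefore put tearRate at the root of the lenses tree.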