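TensorFlow Object Detection API with TFLite Quantized Models

1. Setting up the environment:

Install CUDA 10 and the matching cuDNN on your PC. There are plenty of tutorials online, so the details are skipped here. A conda environment is recommended: 1. create a new Python environment; 2. install tensorflow-gpu=1.13. The TensorFlow Object Detection API depends on the specific directory structure provided in its GitHub repository, so step 3 is to download the TensorFlow Object Detection API repository from GitHub (get the TF v1.13 version so that it matches the installed Python tensorflow package: wget https://github.com/tensorflow/models/archive/v1.13.0.zip).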

| TensorFlow version | GitHub models repository commit |
|---|---|
| TF v1.7 | https://github.com/tensorflow/models/tree/adfd5a3aca41638aa9fb297c5095f33d64446d8f |
| TF v1.8 | https://github.com/tensorflow/models/tree/abd504235f3c2eed891571d62f0a424e54a2dabc |
| TF v1.9 | https://github.com/tensorflow/models/tree/d530ac540b0103caa194b4824af353f1b073553b |
| TF v1.10 | https://github.com/tensorflow/models/tree/b07b494e3514553633b132178b4c448f994d59df |
| TF v1.11 | https://github.com/tensorflow/models/tree/23b5b4227dfa1b23d7c21f0dfaf0951b16671f43 |
| TF v1.12 | https://github.com/tensorflow/models/tree/r1.12.0 |
| TF v1.13 | https://github.com/tensorflow/models/tree/r1.13.0 |
| Latest version | https://github.com/tensorflow/models |

Install the dependencies

sudo apt-get install protobuf-compiler python-pil python-lxml python-tk

pip install --user Cython

pip install --user contextlib2

pip install --user jupyter

pip install --user matplotlib

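Install the COCO API

Download cocoapi, then copy the pycocotools folder into the tensorflow/models/research folder. By default, the evaluation metrics are based on Pascal VOC. If you would rather use the COCO metrics: for COCO object detection metrics, add metrics_set: "coco_detection_metrics" to the eval_config message in the config file; for COCO instance segmentation metrics, add metrics_set: "coco_mask_metrics" to the eval_config message in the config file.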

git clone https://github.com/cocodataset/cocoapi.git

cd cocoapi/PythonAPI

make

cp -r pycocotools /models/research/

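Compile Protobuf and add the libraries to PYTHONPATH

The TensorFlow Object Detection API uses Protobufs to configure model and training parameters. The Protobuf libraries must be compiled before the framework can be used. To do so, run the following command from the tensorflow/models/research/ folder: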

./bin/protoc object_detection/protos/*.proto --python_out=.

When running locally, the tensorflow/models/research/ and slim folders need to be added to PYTHONPATH. To do so, run the following command from the tensorflow/models/research/ folder:

export PYTHONPATH=$PYTHONPATH:`pwd`:`pwd`/slim

Note: if you still get an error that "nets" cannot be found after adding these paths, you can copy the slim files directly into the research directory.
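Before running the test below, it can help to confirm that Python actually sees the two packages. The following is a minimal sketch, assuming Python 3; the helper name missing_modules is made up for illustration and is not part of the Object Detection API.

```python
import importlib.util


def missing_modules(names):
    """Return the top-level module names that cannot be located on sys.path."""
    missing = []
    for name in names:
        # find_spec returns None when a top-level module cannot be found.
        if importlib.util.find_spec(name) is None:
            missing.append(name)
    return missing


if __name__ == "__main__":
    # "object_detection" lives in research/, "nets" lives in research/slim/.
    print(missing_modules(["object_detection", "nets"]))
```

An empty list means both folders are on PYTHONPATH; any name that is printed still needs its folder added.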

Test the installation

python object_detection/builders/model_builder_test.py

If the output ends with OK, the installation works.

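2. Building the dataset

Annotate the samples with the label-image annotation tool to get data in VOC format. Put all images into the images/ folder, save the XML files produced by annotation into the merged_xml/ folder, and create a new folder named Annotations/.

Splitting the dataset and editing the config files

Create train_test_split.py to split the XML files into train, test, and validation sets and store them under the Annotations folder. train is the training set (76.5%), test is the test set (10%), and validation is the validation set (13.5%). The code of train_test_split.py is as follows: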

import os
import random
import time
import shutil

xmlfilepath = r'merged_xml'
saveBasePath = r"./Annotations"

trainval_percent = 0.9   # share of all files used for train + validation
train_percent = 0.85     # share of train + validation used for train

total_xml = os.listdir(xmlfilepath)
num = len(total_xml)
indices = range(num)     # renamed from `list` to avoid shadowing the builtin
tv = int(num*trainval_percent)
tr = int(tv*train_percent)
trainval = random.sample(indices, tv)
train = random.sample(trainval, tr)

print("train and val size", tv)
print("train size", tr)

start = time.time()
test_num = 0
val_num = 0
train_num = 0

for i in indices:
    name = total_xml[i]
    if i in trainval:  # train and val set
        if i in train:
            directory = "train"
            train_num += 1
            xml_path = os.path.join(os.getcwd(), 'Annotations/{}'.format(directory))
            if not os.path.exists(xml_path):
                os.mkdir(xml_path)
            filePath = os.path.join(xmlfilepath, name)
            newfile = os.path.join(saveBasePath, os.path.join(directory, name))
            shutil.copyfile(filePath, newfile)
        else:
            directory = "validation"
            xml_path = os.path.join(os.getcwd(), 'Annotations/{}'.format(directory))
            if not os.path.exists(xml_path):
                os.mkdir(xml_path)
            val_num += 1
            filePath = os.path.join(xmlfilepath, name)
            newfile = os.path.join(saveBasePath, os.path.join(directory, name))
            shutil.copyfile(filePath, newfile)
    else:  # test set
        directory = "test"
        xml_path = os.path.join(os.getcwd(), 'Annotations/{}'.format(directory))
        if not os.path.exists(xml_path):
            os.mkdir(xml_path)
        test_num += 1
        filePath = os.path.join(xmlfilepath, name)
        newfile = os.path.join(saveBasePath, os.path.join(directory, name))
        shutil.copyfile(filePath, newfile)

end = time.time()
seconds = end - start

print("train total : " + str(train_num))
print("validation total : " + str(val_num))
print("test total : " + str(test_num))
total_num = train_num + val_num + test_num
print("total number : " + str(total_num))
print("Time taken : {0} seconds".format(seconds))
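The 76.5% / 13.5% / 10% figures follow from the two ratios in the script: 90% of the files go to train plus validation, and 85% of those go to train. A minimal sketch of that arithmetic, with the helper name split_sizes chosen only for this illustration:

```python
def split_sizes(num, trainval_percent=0.9, train_percent=0.85):
    """Return (train, validation, test) counts the way the script computes them."""
    tv = int(num * trainval_percent)   # train + validation
    tr = int(tv * train_percent)       # train only
    return tr, tv - tr, num - tv


train, val, test = split_sizes(1000)
print(train, val, test)
```

Because of the int() truncation, small datasets will not hit the percentages exactly, so it is worth checking the printed counts before training.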

Converting the XML files to CSV

Create a csvdata/ directory to hold the generated CSV files. The code is as follows:

import os
import glob
import pandas as pd
import xml.etree.ElementTree as ET


def xml_to_csv(path):
    xml_list = []
    for xml_file in glob.glob(path + '/*.xml'):
        tree = ET.parse(xml_file)
        root = tree.getroot()
        print(root.find('filename').text)
        # Fields are read by position, so this assumes the usual VOC element order.
        for member in root.findall('object'):
            value = (root.find('filename').text,
                     int(root.find('size')[0].text),  # width
                     int(root.find('size')[1].text),  # height
                     member[0].text,                  # class name
                     int(member[4][0].text),          # xmin
                     int(float(member[4][1].text)),   # ymin
                     int(member[4][2].text),          # xmax
                     int(member[4][3].text)           # ymax
                     )
            xml_list.append(value)
    column_name = ['filename', 'width', 'height', 'class', 'xmin', 'ymin', 'xmax', 'ymax']
    xml_df = pd.DataFrame(xml_list, columns=column_name)
    return xml_df


def main():
    for directory in ['train', 'test', 'validation']:
        xml_path = os.path.join(os.getcwd(), 'Annotations/{}'.format(directory))
        xml_df = xml_to_csv(xml_path)
        xml_df.to_csv('csvdata/tf_{}.csv'.format(directory), index=None)
        print('Successfully converted xml to csv.')


main()
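The script above reads fields by position (member[4][0] and so on), which only works when every XML file lists its child elements in the same order. As a hedged alternative, the sketch below looks the same fields up by tag name using only the standard library. The sample XML and the function name parse_voc are made up for illustration.

```python
import xml.etree.ElementTree as ET

SAMPLE = """<annotation>
  <filename>0001.jpg</filename>
  <size><width>640</width><height>480</height><depth>3</depth></size>
  <object>
    <name>face</name>
    <bndbox><xmin>10</xmin><ymin>20.0</ymin><xmax>110</xmax><ymax>220</ymax></bndbox>
  </object>
</annotation>"""


def parse_voc(root):
    """Yield one row per <object>, looking fields up by tag name."""
    filename = root.findtext("filename")
    width = int(root.findtext("size/width"))
    height = int(root.findtext("size/height"))
    for obj in root.findall("object"):
        box = obj.find("bndbox")
        # int(float(...)) tolerates coordinates written as "20.0".
        coords = [int(float(box.findtext(tag)))
                  for tag in ("xmin", "ymin", "xmax", "ymax")]
        yield (filename, width, height, obj.findtext("name"), *coords)


rows = list(parse_voc(ET.fromstring(SAMPLE)))
print(rows)
```

The rows have the same column order as the CSV header used above, so they could be passed to pd.DataFrame in the same way.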

This generates the training, validation, and test CSV files in the csvdata/ folder:

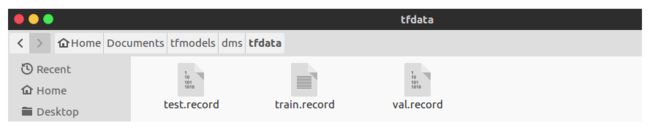

Generating TFRecord data from the CSV files

Create the generate_tfrecord.py script and a new tfdata/ folder. The code is as follows:

from __future__ import division
from __future__ import print_function
from __future__ import absolute_import

import os
import io
import pandas as pd
import tensorflow as tf

from PIL import Image
from object_detection.utils import dataset_util
from object_detection.utils import label_map_util
from collections import namedtuple

flags = tf.app.flags
flags.DEFINE_string('csv_input', '', 'Path to the CSV input')
flags.DEFINE_string('images_input', '', 'Path to the images input')
flags.DEFINE_string('output_path', '', 'Path to output TFRecord')
flags.DEFINE_string('label_map_path', '', 'Path to label map proto')
FLAGS = flags.FLAGS


def split(df, group):
    data = namedtuple('data', ['filename', 'object'])
    gb = df.groupby(group)
    return [data(filename, gb.get_group(x)) for filename, x in
            zip(gb.groups.keys(), gb.groups)]


def create_tf_example(group, label_map_dict, images_path):
    with tf.gfile.GFile(os.path.join(
            images_path, '{}'.format(group.filename)), 'rb') as fid:
        encoded_jpg = fid.read()
    encoded_jpg_io = io.BytesIO(encoded_jpg)
    image = Image.open(encoded_jpg_io)
    width, height = image.size

    filename = group.filename.encode('utf8')
    image_format = b'jpg'
    xmins = []
    xmaxs = []
    ymins = []
    ymaxs = []
    classes_text = []
    classes = []

    for index, row in group.object.iterrows():
        xmins.append(row['xmin'] / width)
        xmaxs.append(row['xmax'] / width)
        ymins.append(row['ymin'] / height)
        ymaxs.append(row['ymax'] / height)
        classes_text.append(row['class'].encode('utf8'))
        classes.append(label_map_dict[row['class']])

    tf_example = tf.train.Example(features=tf.train.Features(feature={
        'image/height': dataset_util.int64_feature(height),
        'image/width': dataset_util.int64_feature(width),
        'image/filename': dataset_util.bytes_feature(filename),
        'image/source_id': dataset_util.bytes_feature(filename),
        'image/encoded': dataset_util.bytes_feature(encoded_jpg),
        'image/format': dataset_util.bytes_feature(image_format),
        'image/object/bbox/xmin': dataset_util.float_list_feature(xmins),
        'image/object/bbox/xmax': dataset_util.float_list_feature(xmaxs),
        'image/object/bbox/ymin': dataset_util.float_list_feature(ymins),
        'image/object/bbox/ymax': dataset_util.float_list_feature(ymaxs),
        'image/object/class/text': dataset_util.bytes_list_feature(classes_text),
        'image/object/class/label': dataset_util.int64_list_feature(classes),
    }))
    return tf_example


def main(_):
    writer = tf.python_io.TFRecordWriter(FLAGS.output_path)
    label_map_dict = label_map_util.get_label_map_dict(FLAGS.label_map_path)
    images_path = FLAGS.images_input
    examples = pd.read_csv(FLAGS.csv_input)
    grouped = split(examples, 'filename')
    for group in grouped:
        tf_example = create_tf_example(group, label_map_dict, images_path)
        writer.write(tf_example.SerializeToString())

    writer.close()
    output_path = FLAGS.output_path
    print('Successfully created the TFRecords: {}'.format(output_path))


if __name__ == '__main__':
    tf.app.run()

Usage (run it once for each split, changing --csv_input and --output_path):

python generate_tfrecord.py \
    --csv_input=./csvdata/tf_train.csv \
    --images_input=images \
    --output_path=./tfdata/train.record \
    --label_map_path=./label_map.pbtxt
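Since the TFRecord script divides each coordinate by the image size, a box that is empty or extends past the image ends up as a bad normalized value. The sketch below is one possible sanity check on the CSV before conversion, using only the standard library. The function name invalid_rows and the sample rows are hypothetical, and the check relies on the width and height columns in the CSV rather than the real image files.

```python
import csv
import io


def invalid_rows(csv_file):
    """Return (line_number, reason) for rows whose box is empty or outside the image."""
    problems = []
    reader = csv.DictReader(csv_file)
    for line_no, row in enumerate(reader, start=2):  # line 1 is the header
        w, h = int(row["width"]), int(row["height"])
        xmin, ymin = int(row["xmin"]), int(row["ymin"])
        xmax, ymax = int(row["xmax"]), int(row["ymax"])
        if xmin >= xmax or ymin >= ymax:
            problems.append((line_no, "empty box"))
        elif xmin < 0 or ymin < 0 or xmax > w or ymax > h:
            problems.append((line_no, "box outside image"))
    return problems


sample = io.StringIO(
    "filename,width,height,class,xmin,ymin,xmax,ymax\n"
    "a.jpg,640,480,face,10,20,110,220\n"
    "b.jpg,640,480,face,300,20,200,220\n"
    "c.jpg,640,480,face,600,20,700,220\n"
)
print(invalid_rows(sample))
```

On real data the same function can be called with an open file, for example open('csvdata/tf_train.csv', newline='').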

Label map file

Create the label_map.pbtxt file and adapt it to the classes you are training. Add one item per class.

item {
  name: "face"
  id: 1
  display_name: "face"
}

item {
  name: "telephone"
  id: 2
  display_name: "telephone"
}

item {
  name: "cigarette"
  id: 3
  display_name: "cigarette"
}
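With more than a handful of classes, writing the items by hand gets error-prone. As a rough sketch, the label map text can be generated from a list of class names. The function name make_label_map is made up for this example. Ids start at 1 because the Object Detection API reserves id 0 for the background class.

```python
def make_label_map(class_names):
    """Build label_map.pbtxt text; ids start at 1 because 0 is reserved for background."""
    items = []
    for idx, name in enumerate(class_names, start=1):
        items.append(
            'item {{\n  name: "{0}"\n  id: {1}\n  display_name: "{0}"\n}}\n'.format(name, idx)
        )
    return "\n".join(items)


text = make_label_map(["face", "telephone", "cigarette"])
print(text)
```

The class names must match the names used in the annotation XML files, since the TFRecord script looks each one up in this map.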

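Configuring pipeline.config

Copy ssd_mobilenet_v2_coco.config from the models/research/object_detection/samples/configs/ folder into your training folder. The main things to change are:

① the total number of classes

② the paths of the tfrecord files, including the training set and the validation set

③ the path of the label_map

④ the path of the pretrained model; comment it out if you do not have one. You can also set the training hyperparameters here, such as batch_size, the learning rate and decay rate, and the total number of training steps.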

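- num_classes: 3

- fine_tune_checkpoint: "ssd_mobilenet_v1_coco_11_06_2017/model.ckpt" # location of the pretrained model (use a checkpoint that matches the model in the config)

- num_steps: 30000 # number of training steps; set it according to the size of your dataset (the default is 200000)

- train_input_reader/input_path: "train.record" # change this to your own path

- train_input_reader/label_map_path: "label_map.pbtxt" # label map file; change this to your own path

- num_examples: 78 # number of samples in the test dataset

- num_visualizations: 78

- #max_evals: 10 # comment this variable out to avoid some errors; a personal habit, since it caused an error for me before

- eval_input_reader/input_path: "test.record" # change this to your own path

- eval_input_reader/label_map_path: "label_map.pbtxt" # change this to your own path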
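If you retrain often, the numeric fields can be patched with a small script instead of by hand. This is only a sketch using regular expressions on the config text, not the API's own config tooling; the function name patch_config and the shortened sample text are assumptions for illustration.

```python
import re


def patch_config(text, num_classes, num_steps):
    """Replace the num_classes and num_steps values in pipeline config text."""
    text = re.sub(r"(num_classes:\s*)\d+", r"\g<1>{}".format(num_classes), text)
    text = re.sub(r"(num_steps:\s*)\d+", r"\g<1>{}".format(num_steps), text)
    return text


sample = "model { ssd { num_classes: 90 } }\ntrain_config { num_steps: 200000 }\n"
print(patch_config(sample, num_classes=3, num_steps=30000))
```

Paths such as input_path and label_map_path appear more than once in the file, so they are safer to edit manually.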

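Training and exporting the model

First copy train.py from the legacy folder into the object_detection folder, then run the following command (--train_dir is where the ckpt files produced by training are written):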

python train.py --logtostderr --train_dir=training/ --pipeline_config_path=ssd_mobilenet_v2_coco.config

Training output

Training produces a set of model.ckpt-xxx files, where the number is the training step at which the checkpoint was saved.
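The export steps that follow need the prefix of one of these checkpoints. A small sketch for picking the one with the highest step from a directory listing is shown below; the function name latest_checkpoint_prefix and the sample file names are made up, and it assumes the usual TF 1.x naming of model.ckpt-N.index.

```python
import re


def latest_checkpoint_prefix(filenames):
    """Return the 'model.ckpt-N' prefix with the largest step N, or None."""
    steps = set()
    for name in filenames:
        match = re.match(r"model\.ckpt-(\d+)\.index$", name)
        if match:
            steps.add(int(match.group(1)))
    return "model.ckpt-{}".format(max(steps)) if steps else None


files = ["checkpoint", "model.ckpt-0.index", "model.ckpt-30000.index",
         "model.ckpt-30000.meta", "model.ckpt-28712.index"]
print(latest_checkpoint_prefix(files))
```

In practice the list would come from os.listdir('training'), and the result is joined with the training directory to form the checkpoint prefix.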

Converting to a pb file

Use export_inference_graph.py (in the object_detection directory) to export the pb file:

python export_inference_graph.py \
    --input_type image_tensor \
    --pipeline_config_path=ssd_mobilenet_v2_coco.config \
    --trained_checkpoint_prefix=training/model.ckpt-30000 \
    --output_directory models_trained/

Testing the pb model:

import tensorflow as tf
import cv2
import os
import time
import numpy as np
from object_detection.utils import label_map_util
from object_detection.utils import visualization_utils as vis_util

videofile = '/home/zyl/Documents/caffe/examples/MobileNet-SSD/videos/20180813140109903.avi'
cap = cv2.VideoCapture(videofile)
MODEL_NUM_CLASSES = 3
MODEL_LABEL_MAP = '/home/zyl/data/dms_tf/label_map.pbtxt'
MODEL_PB = '/home/zyl/data/dms_tf/model2/export_result/frozen_inference_graph.pb'

# read graph model
with tf.gfile.GFile(MODEL_PB, 'rb') as fd:
    _graph = tf.GraphDef()
    _graph.ParseFromString(fd.read())
    tf.import_graph_def(_graph, name='')

# get the default graph model
detection_graph = tf.get_default_graph()

# read labelmap
label_map = label_map_util.load_labelmap(MODEL_LABEL_MAP)
categories = label_map_util.convert_label_map_to_categories(label_map, MODEL_NUM_CLASSES)
category_index = label_map_util.create_category_index(categories)

with tf.Session(graph=detection_graph) as sess:
    while cap.isOpened():
        ret, frame = cap.read()
        if not ret:  # stop at the end of the video or on a read error
            break
        frame_np_expanded = np.expand_dims(frame, axis=0)
        image_tensor = detection_graph.get_tensor_by_name('image_tensor:0')
        boxes = detection_graph.get_tensor_by_name('detection_boxes:0')
        scores = detection_graph.get_tensor_by_name('detection_scores:0')
        classes = detection_graph.get_tensor_by_name('detection_classes:0')
        num_detections = detection_graph.get_tensor_by_name('num_detections:0')
        t1 = time.time()
        (boxes, scores, classes, num_detections) = sess.run(
            [boxes, scores, classes, num_detections],
            feed_dict={image_tensor: frame_np_expanded})
        vis_util.visualize_boxes_and_labels_on_image_array(
            frame, np.squeeze(boxes),
            np.squeeze(classes).astype(np.int32), np.squeeze(scores), category_index,
            use_normalized_coordinates=True, line_thickness=6)
        t2 = time.time()
        print('FPS:', 1/(t2-t1))
        cv2.imshow('MobilenetTF', frame)
        if cv2.waitKey(1) & 0xff == 27:  # Esc quits
            break

cap.release()
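The script above hands the raw outputs to the visualization helper. If you want to use the detections yourself (for cropping or logging, say), keep in mind that detection_boxes holds normalized [ymin, xmin, ymax, xmax] values. A minimal sketch of the conversion to pixel coordinates follows; the function name to_pixel_boxes and the 0.5 threshold are assumptions for illustration.

```python
def to_pixel_boxes(boxes, scores, width, height, min_score=0.5):
    """Convert normalized [ymin, xmin, ymax, xmax] boxes to pixel (left, top, right, bottom)."""
    result = []
    for (ymin, xmin, ymax, xmax), score in zip(boxes, scores):
        if score < min_score:
            continue  # drop low-confidence detections
        result.append((int(xmin * width), int(ymin * height),
                       int(xmax * width), int(ymax * height)))
    return result


boxes = [(0.25, 0.5, 0.75, 1.0), (0.0, 0.0, 0.1, 0.1)]
scores = [0.9, 0.2]
print(to_pixel_boxes(boxes, scores, width=640, height=480))
```

With the session outputs, the inputs would be np.squeeze(boxes) and np.squeeze(scores), and the width and height come from frame.shape.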

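Converting to a tflite file

Because toco is needed, TensorFlow has to be built from source here. Create a new Python environment and activate it; this is routine, so the details are skipped.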

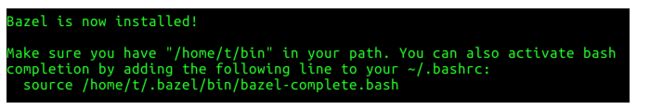

Install bazel

First install the dependencies:

sudo apt-get install pkg-config zip g++ zlib1g-dev unzip

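Then download the bazel binary installer from the download page. bazel-0.15.0-installer-linux-x86_64.sh is used as the example here; check which bazel versions the TensorFlow branch you are building accepts, since TensorFlow's tested build configurations list Bazel 0.19.2 for v1.13.1.

Make the installer executable and run it: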

chmod +x bazel-0.15.0-installer-linux-x86_64.sh

./bazel-0.15.0-installer-linux-x86_64.sh --user

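The --user option installs bazel into the $HOME/bin directory. The installer prints a message when the installation succeeds.

Set the environment variable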

vim ~/.bashrc

Append the following line at the end:

export PATH="$PATH:$HOME/bin"

Download the TensorFlow source and configure the build

mkdir ~/tensorflow-build

cd ~/tensorflow-build

git clone https://github.com/tensorflow/tensorflow.git

cd tensorflow

git checkout r1.13 # check out the TensorFlow v1.13 branch

Next, configure the TensorFlow build with the configure.py script:

python ./configure.py

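The configuration script then asks a series of questions to confirm the build options. The more important ones are explained below (what I did: press Enter wherever there is a default value, and answer n to the Y/n questions):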

yhilly@ubuntu:~/tensorflow$ ./configure

You have bazel 0.9.0 installed.

Please specify the location of python. [Default is /home/yhilly/anaconda3/envs/tensorflow/bin/python]:

This prompt asks for the path of the Python installation. Just make sure it is the Anaconda Python path; pressing Enter accepts the default value.

Do you wish to build TensorFlow with jemalloc as malloc support? [Y/n]:

jemalloc as malloc support will be enabled for TensorFlow.

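This option asks whether to use jemalloc instead of the traditional malloc to manage memory. Jemalloc is a memory allocator developed by Jason Evans in 2006 to replace the slower traditional malloc. Evans is no ordinary developer: he is one of the important maintainers of the FreeBSD project (a Unix-like operating system).

Jemalloc was adopted by the Firefox browser and later used widely by Facebook across its own applications, which made its name. A good technology is worth using: press Enter to accept the default Y (the default is usually the option shown in uppercase).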

Do you wish to build TensorFlow with Google Cloud Platform support? [Y/n]: n

No Google Cloud Platform support will be enabled for TensorFlow.

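This option asks whether to build TensorFlow with Google Cloud Platform support. The platform is usually not reachable from mainland China, so entering n is recommended. Readers who can access it can press Enter to enable it.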

Do you wish to build TensorFlow with Hadoop File System support? [Y/n]: n

No Hadoop File System support will be enabled for TensorFlow.

This option asks whether to build TensorFlow with Hadoop File System (HDFS) support. If you have a Hadoop cluster and need to read data from HDFS, press Enter to confirm. Otherwise, enter n.

Do you wish to build TensorFlow with XLA JIT support? [y/N]: n

No XLA JIT support will be enabled for TensorFlow.

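This option asks whether to enable XLA JIT compilation. XLA (Accelerated Linear Algebra) is still an experimental TensorFlow project. It uses JIT (just-in-time) compilation to analyze the TensorFlow graph the user creates at runtime and specialize it for the actual runtime dimensions and types. As a new technology it is not yet mature; enter y if you like to experiment.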

Do you wish to build TensorFlow with CUDA support? [y/N]:

No CUDA support will be enabled for TensorFlow.

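This option asks whether to use CUDA. CUDA is NVIDIA's general-purpose parallel computing architecture, which lets GPUs solve complex computational problems. If you have an NVIDIA GPU you can choose y; if you only need the CPU version of TensorFlow, press Enter to accept N (here the build is only needed for converting models with toco, so N is chosen to save time).

Build from source

Once the Bazel build options are configured, build the TensorFlow source with the following command: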

bazel build --config=opt //tensorflow/tools/pip_package:build_pip_package

For GPU support, add the build option --config=cuda:

bazel build --config=opt --config=cuda --define=no_tensorflow_py_deps=true //tensorflow/tools/pip_package:build_pip_package

Now that the package builder has been created, use it to build the actual TensorFlow wheel file:

bazel-bin/tensorflow/tools/pip_package/build_pip_package ~/tensorflow_pkg

Next, use pip to install the TensorFlow wheel we just built:

pip install ~/tensorflow_pkg/.whl

Open a Python shell to make sure it was installed correctly:

python

Once Python is open, run the following commands:

>>> import tensorflow as tf

>>> tf.__version__

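If everything was installed correctly, it responds with the installed TensorFlow version.

Generating the TF Lite model

Converting the frozen pb model from the earlier step directly fails; it first has to be optimized with export_tflite_ssd_graph.py and then converted. Copy object_detection/export_tflite_ssd_graph.py into the training directory and run: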

python export_tflite_ssd_graph.py \
    --pipeline_config_path=ssd_mobilenet_v2_coco.config \
    --trained_checkpoint_prefix=models_trained/model.ckpt-30000 \
    --output_directory=models

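This produces a frozen model, which is then converted into the corresponding TF Lite model, either a float model (larger and more accurate) or a quantized uint8 model (smaller but less accurate). The commands below are run from the TensorFlow source directory; if bazel-bin/tensorflow/lite/toco/toco does not exist yet, it can be built with bazel build tensorflow/lite/toco:toco.

float32: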

bazel-bin/tensorflow/lite/toco/toco \
    --input_file=/home/zyl/Documents/tfmodels/dms/models/tflite_graph.pb \
    --input_format=TENSORFLOW_GRAPHDEF \
    --output_format=TFLITE \
    --output_file=/home/zyl/Documents/tfmodels/dms/models/litefloat_zyl.tflite \
    --inference_type=FLOAT \
    --input_arrays=normalized_input_image_tensor \
    --output_arrays='TFLite_Detection_PostProcess','TFLite_Detection_PostProcess:1','TFLite_Detection_PostProcess:2','TFLite_Detection_PostProcess:3' \
    --input_shapes=1,300,300,3 \
    --mean_values=128 \
    --std_dev_values=128 \
    --default_ranges_min=0 \
    --allow_custom_ops

uint8 quantized:

bazel-bin/tensorflow/lite/toco/toco \
    --input_file=/home/zyl/data/dms_tf/model1/tflite_graph.pb \
    --output_file=/home/zyl/data/dms_tf/model1/tflite_model/model1.tflite \
    --input_shapes=1,300,300,3 \
    --input_arrays=normalized_input_image_tensor \
    --output_arrays='TFLite_Detection_PostProcess','TFLite_Detection_PostProcess:1','TFLite_Detection_PostProcess:2','TFLite_Detection_PostProcess:3' \
    --inference_type=QUANTIZED_UINT8 \
    --mean_values=128 \
    --std_dev_values=128 \
    --default_ranges_min=0 \
    --default_ranges_max=6 \
    --change_concat_input_ranges=False \
    --allow_custom_ops
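To make the numbers in the quantized command less mysterious: with mean 128 and standard deviation 128, a uint8 input pixel q is interpreted as the real value (q - 128) / 128, and the default range 0 to 6 (the ReLU6 output range) is what the converter falls back to for tensors that carry no recorded min/max. The sketch below only illustrates that affine arithmetic and is not the converter's actual implementation; the function names are made up.

```python
def input_to_real(q, mean=128.0, std=128.0):
    """Real value the model sees for a uint8 input q: (q - mean) / std."""
    return (q - mean) / std


def quantize(x, range_min=0.0, range_max=6.0):
    """Map a real activation in [range_min, range_max] to uint8 with an affine scale."""
    scale = (range_max - range_min) / 255.0
    zero_point = int(round(-range_min / scale))
    q = int(round(x / scale)) + zero_point
    return max(0, min(255, q))  # clamp to the uint8 range


print(input_to_real(0), input_to_real(128), input_to_real(255))
print(quantize(0.0), quantize(6.0))
```

Because the fallback range is a guess rather than a measured one, accuracy usually suffers, which fits the remark above that the uint8 model is less accurate.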

Running the TensorFlow Lite model

wget https://raw.githubusercontent.com/EdjeElectronics/TensorFlow-Lite-Object-Detection-on-Android-and-Raspberry-Pi/master/TFLite_detection_image.py --no-check-certificate

wget https://raw.githubusercontent.com/EdjeElectronics/TensorFlow-Lite-Object-Detection-on-Android-and-Raspberry-Pi/master/TFLite_detection_video.py --no-check-certificate

wget https://raw.githubusercontent.com/EdjeElectronics/TensorFlow-Lite-Object-Detection-on-Android-and-Raspberry-Pi/master/TFLite_detection_webcam.py --no-check-certificate

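These three scripts cover different use cases: a single image, a video file, and a webcam.

For more information about a script's options, run it with the -h option. For example: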

python TFLite_detection_image.py -h

References:

https://github.com/EdjeElectronics/TensorFlow-Lite-Object-Detection-on-Android-and-Raspberry-Pi

https://github.com/tensorflow/models/blob/master/research/object_detection/g3doc/running_on_mobile_tensorflowlite.md

https://github.com/EdjeElectronics/TensorFlow-Object-Detection-API-Tutorial-Train-Multiple-Objects-Windows-10
