Machine Learning: Naive Bayes (Abusive Word Detection)

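By Bayes' rule, the posterior probability of a class given a document is the class-conditional likelihood of the document multiplied by the class prior, divided by the probability of the document itself.

When classifying, only the numerators need to be computed and compared. The denominator is the same for both classes, so it changes the values but not which of the two is larger.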
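As a sketch of that argument, assuming the standard form of Bayes' rule, with $c_i$ denoting a class and $\mathbf{w}$ the word vector of a document (this notation is an assumption chosen for illustration):

```latex
p(c_i \mid \mathbf{w}) = \frac{p(\mathbf{w} \mid c_i)\, p(c_i)}{p(\mathbf{w})}
\quad\Longrightarrow\quad
\arg\max_{i}\; p(c_i \mid \mathbf{w}) = \arg\max_{i}\; p(\mathbf{w} \mid c_i)\, p(c_i)
```

Because $p(\mathbf{w})$ does not depend on $i$, dropping it leaves the ranking of the classes unchanged. Under the naive independence assumption the likelihood factorizes as $p(\mathbf{w} \mid c_i) = \prod_j p(w_j \mid c_i)$, which is the quantity the code accumulates in log form.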

import numpy as np

"""Create the dataset"""

def loadDataSet():
    postingList = [
        ['my', 'dog', 'has', 'flea', 'problems', 'help', 'please'],
        ['maybe', 'not', 'take', 'him', 'to', 'dog', 'park', 'stupid'],  # abusive: stupid
        ['my', 'dalmation', 'is', 'so', 'cute', 'I', 'love', 'him'],
        ['stop', 'posting', 'stupid', 'worthless', 'garbage'],  # abusive: garbage, stupid
        ['mr', 'licks', 'ate', 'my', 'steak', 'how', 'to', 'stop', 'him'],
        ['quit', 'buying', 'worthless', 'dog', 'food', 'stupid'],  # abusive: stupid
    ]
    classVec = [0, 1, 0, 1, 0, 1]  # class labels: 1 = abusive post, 0 = not abusive
    return postingList, classVec

"""Build the vocabulary list"""

def createVocabList(dataSet):
    vocabSet = set()
    for document in dataSet:  # take each document (one list of words) in turn
        vocabSet = vocabSet | set(document)  # converting to a set removes duplicates; then take the union
    return list(vocabSet)

"""Check which words of the input set are in the vocabulary"""

def setOfWordsVec(vocabList, inputSet):
    returnVec = [0] * len(vocabList)  # start from an all-zero vector
    for word in inputSet:  # go through each word of the input
        if word in vocabList:  # the word is in the vocabulary
            returnVec[vocabList.index(word)] = 1  # set its position to 1 to mark the word as present
        else:
            print("the word: %s is not in my Vocabulary!" % word)
    return returnVec
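To make the set-of-words encoding concrete, here is a minimal, self-contained sketch on a two-post toy corpus. The helper names `build_vocab` and `set_of_words` are hypothetical stand-ins for the functions above, and the vocabulary is sorted only so that the output is reproducible (an assumption; the original relies on whatever order the set produces).

```python
def build_vocab(documents):
    """Return the sorted list of unique words across all documents."""
    vocab = set()
    for doc in documents:
        vocab |= set(doc)  # union with the words of this document
    return sorted(vocab)  # sorted only to make the example reproducible


def set_of_words(vocab, words):
    """Return a 0/1 vector marking which vocabulary words occur in `words`."""
    index = {word: i for i, word in enumerate(vocab)}
    vec = [0] * len(vocab)
    for word in words:
        if word in index:  # words outside the vocabulary are skipped
            vec[index[word]] = 1
    return vec


docs = [["my", "dog", "has", "flea"], ["stupid", "dog"]]
vocab = build_vocab(docs)
vector = set_of_words(vocab, ["dog", "stupid", "stupid", "cat"])
print(vocab)   # ['dog', 'flea', 'has', 'my', 'stupid']
print(vector)  # [1, 0, 0, 0, 1]
```

A repeated word still yields 1 rather than a count, and a word outside the vocabulary is skipped silently in this sketch.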

"""Compute the probabilities"""

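def trainNB0(trainMatrix, trainCategory):
    numTrainDocs = len(trainMatrix)  # number of samples, 6
    numWords = len(trainMatrix[0])  # length of each sample vector (vocabulary size), 32
    pAbusive = sum(trainCategory) / float(numTrainDocs)  # prior probability that a document is abusive
    p0Num = np.ones(numWords)  # per-word counts for the non-abusive class, starting at 1 (additive smoothing)
    p1Num = np.ones(numWords)  # per-word counts for the abusive class, starting at 1
    p0Denom = 2.0  # denominators start at 2 to go with the smoothed counts, so no probability is ever 0
    p1Denom = 2.0
    for i in range(numTrainDocs):
        if trainCategory[i] == 1:
            p1Num += trainMatrix[i]  # add up each word's count over the abusive samples
            p1Denom += sum(trainMatrix[i])  # total number of words in the abusive samples
        else:
            p0Num += trainMatrix[i]  # add up each word's count over the non-abusive samples
            p0Denom += sum(trainMatrix[i])  # total number of words in the non-abusive samples
    p1Vect = np.log(p1Num / p1Denom)  # take logs to prevent underflow
    p0Vect = np.log(p0Num / p0Denom)
    return p0Vect, p1Vect, pAbusive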
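The two numerical precautions in `trainNB0` (counts starting at 1 and logs instead of raw products) can be illustrated in isolation. The following is a minimal sketch with made-up numbers; `word_probs` and `counts` are hypothetical values, not taken from the dataset.

```python
import numpy as np

# Hypothetical per-word probabilities for a 400-word document (illustrative only).
word_probs = np.full(400, 0.01)

direct_product = np.prod(word_probs)   # 0.01**400 is too small for float64
log_sum = np.sum(np.log(word_probs))   # the same quantity in log space stays finite

print(direct_product)  # 0.0
print(log_sum)

# Hypothetical word counts for one class; the first word never occurs in it.
counts = np.array([0, 3, 1])
unsmoothed = counts / counts.sum()            # contains an exact 0
smoothed = (counts + 1) / (counts.sum() + 2)  # same initialization as in trainNB0

print(unsmoothed)
print(smoothed)
```

With an exact zero, a single unseen word would wipe out the whole product (or give negative infinity in log space); starting the counts at 1 keeps every estimate strictly positive.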

"""Classify"""

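def classifyNB(vecClassify, p0Vec, p1Vec, pClass1):
    # p1Vec and p0Vec already hold log probabilities, and log(A*B) = log A + log B,
    # so each sum below is the log of the numerator. log is monotonic, so comparing
    # the log values gives the same result as comparing the numerators themselves.
    p1 = sum(vecClassify * p1Vec) + np.log(pClass1)
    p0 = sum(vecClassify * p0Vec) + np.log(1 - pClass1)
    if p1 > p0:
        return 1
    else:
        return 0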
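Since the decision compares unnormalized log scores, it may help to see that normalizing them back into posterior probabilities does not change the winner. The sketch below assumes two made-up log scores, and `posterior_from_log_scores` is a hypothetical helper, not part of the original code.

```python
import numpy as np


def posterior_from_log_scores(log_scores):
    """Turn unnormalized log scores into probabilities that sum to 1."""
    log_scores = np.asarray(log_scores, dtype=float)
    shifted = log_scores - log_scores.max()  # shift so the largest exponent is 0
    weights = np.exp(shifted)
    return weights / weights.sum()  # dividing by the sum plays the role of the shared denominator


# Hypothetical log scores for class 0 and class 1 (illustrative values only).
log_scores = [-7.7, -9.8]
posterior = posterior_from_log_scores(log_scores)

predicted_from_scores = int(np.argmax(log_scores))
predicted_from_posterior = int(np.argmax(posterior))
print(posterior)
print(predicted_from_scores, predicted_from_posterior)
```

Both ways of picking the class agree, which is why the classifier can skip the normalization entirely.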

if __name__ == '__main__':

listOposts, listClasses = loadDataSet()

myVocabList = createVocabList(listOposts)

trainMat = []

for postinDoc in listOposts:

trainMat.append(setOfWordsVec(myVocabList, postinDoc)) # 生成6*32的矩阵,表示每条数据中单词在词汇表的存在情况

poV, p1V, pAb = trainNB0(np.array(trainMat), np.array(listClasses))

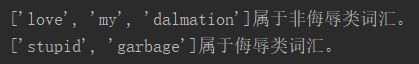

testEntry = ['love', 'my', 'dalmation']

thisDoc = np.array(setOfWordsVec(myVocabList, testEntry))

if classifyNB(thisDoc, poV, p1V, pAb):

print("%s属于侮辱类词汇。" % (testEntry,))

else:

print("%s属于非侮辱类词汇。" % (testEntry,))

testEntry = ['stupid', 'garbage']

thisDoc = np.array(setOfWordsVec(myVocabList, testEntry))

if classifyNB(thisDoc, poV, p1V, pAb):

print("%s属于侮辱类词汇。" % (testEntry,))

else:

print("%s属于非侮辱类词汇。" % (testEntry,))