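Handwritten Tibetan Recognition with PaddlePaddle

Original blog: Doi Tech Team

Link: https://blog.doiduoyi.com/authors/1584446358138

Motivation: to document the learning journey of the excellent Doi Tech Team

Introduction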

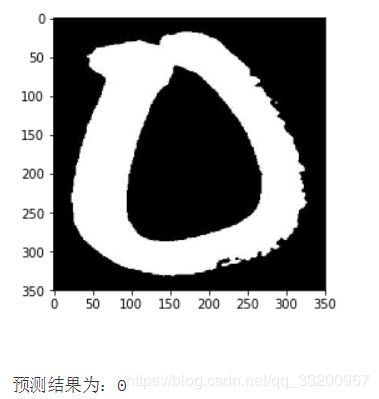

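巨神人工智能科技, a startup team from Minzu University of China, has published TibetanMNIST, a dataset of handwritten Tibetan digits, on Kesci (科赛网). The original images in the dataset are 350*350 black-and-white pictures, and the first number in each file name is the image's label. For example, 0_10_398.jpg is an image of the Tibetan digit 0. In this project we use the convolutional neural network covered in Chapter 4 to classify the TibetanMNIST dataset.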
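To make the naming convention concrete, here is a minimal sketch of reading the label from a file name. The helper name `label_from_filename` is hypothetical and not part of the original project. The source does not say what the remaining fields of the name mean, so the sketch ignores them.

```python
import os

def label_from_filename(path):
    """Return the digit label encoded as the first field of the file name."""
    name = os.path.basename(path)      # e.g. '0_10_398.jpg'
    first_field = name.split('_')[0]   # text before the first underscore
    return int(first_field)

print(label_from_filename('./TibetanMnist(350x350)/0_10_398.jpg'))  # 0
print(label_from_filename('8_2_1.jpg'))                             # 8
```

The project code later takes the first character of the name instead (`data_imgs[i][0:1]`), which gives the same result as long as every label is a single digit.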

Importing the required packages

We mainly use PaddlePaddle's fluid and paddle libraries. The cpu_count function returns the number of CPUs on the current machine, and matplotlib is used to display images.

import paddle.fluid as fluid
import paddle
import numpy as np
from PIL import Image
import os
from multiprocessing import cpu_count
import matplotlib.pyplot as plt

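Generating the image lists

The TibetanMNIST dataset is published on Kesci, so before creating the project we need to download it from there. The dataset is titled 【首发活动】TibetanMNIST藏文手写数字数据集. Unpacking the download gives a folder named TibetanMnist(350x350), which holds the original image files. We compress this folder as a zip file, upload it to the AI Studio platform as a personal dataset, and mount that dataset when creating the project.

After mounting the dataset, run the unzip command to get the directory TibetanMnist(350x350). The original image files are stored in this directory, and we can list all of them from there. The zip path is quoted because the parentheses in the file name would otherwise be interpreted by the shell.

!unzip -q "/home/aistudio/data/data2134/TibetanMnist(350x350).zip"

data_path = './TibetanMnist(350x350)'
data_imgs = os.listdir(data_path)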

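Once we have all the image file names, we generate the image lists. Each list file contains an image's path and the corresponding label, separated by a tab, in the format shown below. The directory also contains a text file named lable.txt, which we must skip; otherwise reading the list will fail.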

/home/kesci/input/TibetanMNIST5610/TibetanMNIST/TibetanMNIST/8_2_1.jpg 8

/home/kesci/input/TibetanMNIST5610/TibetanMNIST/TibetanMNIST/0_11_264.jpg 0

/home/kesci/input/TibetanMNIST5610/TibetanMNIST/TibetanMNIST/0_13_320.jpg 0

/home/kesci/input/TibetanMNIST5610/TibetanMNIST/TibetanMNIST/3_16_193.jpg 3

with open('./train_data.list', 'w') as f_train:
    with open('./test_data.list', 'w') as f_test:
        for i in range(len(data_imgs)):
            # Skip the label file that ships with the dataset.
            if data_imgs[i] == 'lable.txt':
                continue
            # Every tenth file goes to the test list, the rest to the training list.
            if i % 10 == 0:
                f_test.write(os.path.join(data_path, data_imgs[i]) + "\t" + data_imgs[i][0:1] + '\n')
            else:
                f_train.write(os.path.join(data_path, data_imgs[i]) + "\t" + data_imgs[i][0:1] + '\n')
print('Image lists generated.')
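The split rule is easier to check on a small in-memory example. The sketch below repeats the same logic as a pure function, without touching the file system. The function name `build_lists`, its `test_every` parameter, and the made-up file names are assumptions for illustration only.

```python
import os

def build_lists(file_names, data_path, test_every=10):
    """Return (train_lines, test_lines) in the 'path<TAB>label' format."""
    train_lines, test_lines = [], []
    for i, name in enumerate(file_names):
        if name == 'lable.txt':          # not an image, skip it
            continue
        line = os.path.join(data_path, name) + '\t' + name[0:1] + '\n'
        if i % test_every == 0:          # every tenth entry is held out
            test_lines.append(line)
        else:
            train_lines.append(line)
    return train_lines, test_lines

# 25 made-up image names plus the label file
names = ['%d_0_%d.jpg' % (i % 10, i) for i in range(25)] + ['lable.txt']
train, test = build_lists(names, './TibetanMnist(350x350)')
print(len(train), len(test))  # 22 3
```

Because os.listdir returns names in arbitrary order, which files land in the test list can differ between machines. Sorting the names first would make the split reproducible.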

Defining the data readers

PaddlePaddle reads both training and test data through readers, so we define our own. First we define train_mapper(), which preprocesses an image: the paddle.dataset.image.simple_transform interface resizes the image, crops it, and treats it as grayscale. When is_train is True the crop is random; otherwise it is a center crop, which is what testing and inference normally use. train_r() reads the image paths and labels from the image list generated in the previous section and passes each path to train_mapper() for preprocessing. The test data is handled the same way.

def train_mapper(sample):
    img, label = sample
    # Load as grayscale, resize to 32, randomly crop to 28x28.
    img = paddle.dataset.image.load_image(file=img, is_color=False)
    img = paddle.dataset.image.simple_transform(im=img, resize_size=32, crop_size=28, is_color=False, is_train=True)
    # Flatten and scale pixel values to [0, 1].
    img = img.flatten().astype('float32') / 255.0
    return img, label

def train_r(train_list_path):
    def reader():
        with open(train_list_path, 'r') as f:
            # Skip blank lines instead of unconditionally dropping the last entry.
            lines = [line for line in f if line.strip()]
            for line in lines:
                img, label = line.split('\t')
                yield img, int(label)
    return paddle.reader.xmap_readers(train_mapper, reader, cpu_count(), 1024)

def test_mapper(sample):
    img, label = sample
    # Same as train_mapper, but with a center crop.
    img = paddle.dataset.image.load_image(file=img, is_color=False)
    img = paddle.dataset.image.simple_transform(im=img, resize_size=32, crop_size=28, is_color=False, is_train=False)
    img = img.flatten().astype('float32') / 255.0
    return img, label

def test_r(test_list_path):
    def reader():
        with open(test_list_path, 'r') as f:
            lines = f.readlines()
            for line in lines:
                img, label = line.split('\t')
                yield img, int(label)
    return paddle.reader.xmap_readers(test_mapper, reader, cpu_count(), 1024)
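The difference between the two crop modes can be illustrated with plain NumPy. This is only a sketch of the idea, not Paddle's implementation: it assumes the image has already been resized to 32x32, the functions `center_crop` and `random_crop` are written here for illustration, and a random array stands in for a real image.

```python
import numpy as np

def center_crop(img, size):
    """Cut a size x size window from the middle of a 2-D image."""
    h, w = img.shape
    top = (h - size) // 2
    left = (w - size) // 2
    return img[top:top + size, left:left + size]

def random_crop(img, size, rng):
    """Cut a size x size window at a random position of a 2-D image."""
    h, w = img.shape
    top = rng.randint(0, h - size + 1)    # upper bound is exclusive
    left = rng.randint(0, w - size + 1)
    return img[top:top + size, left:left + size]

rng = np.random.RandomState(0)
img = rng.randint(0, 256, size=(32, 32)).astype('uint8')  # stand-in image

# Same post-processing as the mappers: flatten, cast, scale to [0, 1].
test_vec = center_crop(img, 28).flatten().astype('float32') / 255.0
train_vec = random_crop(img, 28, rng).flatten().astype('float32') / 255.0
print(test_vec.shape, train_vec.shape)  # (784,) (784,)
```

The center crop always returns the same window, so evaluation is deterministic, while the random crop shifts the digit by a few pixels each time and acts as light data augmentation.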

Defining the convolutional neural network

Here we define a convolutional neural network. Readers can modify it or swap in a different CNN to suit their own needs.

def cnn(ipt):
    conv1 = fluid.layers.conv2d(input=ipt,
                                num_filters=32,
                                filter_size=3,
                                padding=1,
                                stride=1,
                                name='conv1',
                                act='relu')

    pool1 = fluid.layers.pool2d(input=conv1,
                                pool_size=2,
                                pool_stride=2,
                                pool_type='max',
                                name='pool1')

    bn1 = fluid.layers.batch_norm(input=pool1, name='bn1')

    conv2 = fluid.layers.conv2d(input=bn1,
                                num_filters=64,
                                filter_size=3,
                                padding=1,
                                stride=1,
                                name='conv2',
                                act='relu')

    pool2 = fluid.layers.pool2d(input=conv2,
                                pool_size=2,
                                pool_stride=2,
                                pool_type='max',
                                name='pool2')

    bn2 = fluid.layers.batch_norm(input=pool2, name='bn2')

    fc1 = fluid.layers.fc(input=bn2, size=1024, act='relu', name='fc1')

    fc2 = fluid.layers.fc(input=fc1, size=10, act='softmax', name='fc2')

    return fc2
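It can help to trace how the spatial size changes through the layers. The sketch below applies the standard output-size formulas for convolution and pooling to the hyperparameters used in cnn(), assuming a 28x28 input and no pooling padding. The helper names `conv_out` and `pool_out` are made up for this illustration.

```python
def conv_out(size, filter_size, padding, stride):
    """Output width/height of a convolution along one dimension."""
    return (size + 2 * padding - filter_size) // stride + 1

def pool_out(size, pool_size, pool_stride):
    """Output width/height of a pooling layer without padding."""
    return (size - pool_size) // pool_stride + 1

size = 28
size = conv_out(size, filter_size=3, padding=1, stride=1)  # conv1 keeps 28
size = pool_out(size, pool_size=2, pool_stride=2)          # pool1 halves to 14
size = conv_out(size, filter_size=3, padding=1, stride=1)  # conv2 keeps 14
size = pool_out(size, pool_size=2, pool_stride=2)          # pool2 halves to 7

fc1_inputs = 64 * size * size  # 64 feature maps from conv2
print(size, fc1_inputs)  # 7 3136
```

Batch normalization does not change the shape, so fc1 receives 64 feature maps of 7x7, or 3136 values per image.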

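Building the network

We obtain a classifier from the CNN defined above. The input layer is defined with the fluid.layers.data interface. The input shape is [1, 28, 28], meaning a single-channel grayscale image 28 pixels wide and 28 pixels high.

image = fluid.layers.data(name='image', shape=[1, 28, 28], dtype='float32')
net = cnn(image)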

Defining the loss function

Here we use the cross-entropy loss function fluid.layers.cross_entropy, together with the fluid.layers.accuracy interface, so that the average cost and the accuracy can be printed during training and testing.

label = fluid.layers.data(name='label', shape=[1], dtype='int64')
cost = fluid.layers.cross_entropy(input=net, label=label)
avg_cost = fluid.layers.mean(x=cost)
acc = fluid.layers.accuracy(input=net, label=label, k=1)
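As a rough illustration of what these two quantities measure, here is a NumPy sketch on a tiny hand-written batch. It assumes the network output is already a probability distribution per row (as produced by the softmax in fc2) and that labels are integer class indices. The three-class numbers are invented for the example.

```python
import numpy as np

# Softmax outputs for 3 samples over 3 classes (made-up values)
probs = np.array([[0.7, 0.2, 0.1],
                  [0.1, 0.3, 0.6],
                  [0.5, 0.4, 0.1]], dtype='float32')
labels = np.array([0, 2, 1])

# Cross-entropy with hard labels: -log of the probability of the true class
picked = probs[np.arange(len(labels)), labels]
cost = -np.log(picked)
avg_cost = cost.mean()

# Top-1 accuracy: fraction of samples whose most probable class is the label
acc = (probs.argmax(axis=1) == labels).mean()
print(avg_cost, acc)
```

The third sample is misclassified (class 0 has the highest probability but the label is 1), so the accuracy is 2/3 and that sample contributes the largest term to the cost.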

Cloning the test program

After defining the loss and before defining the optimizer, we clone a test program from the main program.

test_program = fluid.default_main_program().clone(for_test=True)

Defining the optimizer

Next we define the optimization method. We use Adam here; readers can also use other optimizers.

optimizer = fluid.optimizer.AdamOptimizer(learning_rate=0.001)
opt = optimizer.minimize(avg_cost)
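For readers unfamiliar with Adam, the following NumPy sketch shows one parameter update using the textbook rule from the Adam paper. It is not Paddle's kernel, and the values beta1=0.9, beta2=0.999, eps=1e-8 are the paper's suggested defaults, assumed here for illustration. The function name `adam_step` is hypothetical.

```python
import numpy as np

def adam_step(param, grad, m, v, t, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
    """Apply one textbook Adam update and return the new (param, m, v)."""
    m = beta1 * m + (1 - beta1) * grad        # running mean of gradients
    v = beta2 * v + (1 - beta2) * grad ** 2   # running mean of squared gradients
    m_hat = m / (1 - beta1 ** t)              # bias correction for step t
    v_hat = v / (1 - beta2 ** t)
    param = param - lr * m_hat / (np.sqrt(v_hat) + eps)
    return param, m, v

param = np.array([1.0, -2.0])
grad = np.array([0.5, -4.0])
m = np.zeros_like(param)
v = np.zeros_like(param)
param, m, v = adam_step(param, grad, m, v, t=1)
print(param)
```

On the first step the bias-corrected ratio is close to the sign of the gradient, so each parameter moves by roughly the learning rate regardless of the gradient's magnitude.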

Creating the executor

Here we create the executor and specify that training runs on the CPU.

place = fluid.CPUPlace()
exe = fluid.Executor(place=place)
exe.run(program=fluid.default_startup_program())

Creating batched readers from the image data

We wrap the readers defined above so that each one yields batches of the configured size.

train_reader = paddle.batch(reader=paddle.reader.shuffle(reader=train_r('./train_data.list'), buf_size=3000), batch_size=128)
test_reader = paddle.batch(reader=test_r('./test_data.list'), batch_size=128)
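The reader-decorator pattern used here can be sketched in pure Python. The two functions below are simplified stand-ins written for this illustration, not Paddle's code: `shuffle` fills a buffer, shuffles it, and emits it, and `batch` groups items into lists, keeping the final partial batch. The `seed` parameter is an assumption added to make the example repeatable.

```python
import random

def shuffle(reader, buf_size, seed=None):
    """Wrap a reader so items come out shuffled within buffers of buf_size."""
    rng = random.Random(seed)
    def shuffled():
        buf = []
        for item in reader():
            buf.append(item)
            if len(buf) >= buf_size:
                rng.shuffle(buf)
                for x in buf:
                    yield x
                buf = []
        if buf:                       # flush the last, partially filled buffer
            rng.shuffle(buf)
            for x in buf:
                yield x
    return shuffled

def batch(reader, batch_size):
    """Wrap a reader so it yields lists of up to batch_size items."""
    def batched():
        items = []
        for item in reader():
            items.append(item)
            if len(items) == batch_size:
                yield items
                items = []
        if items:                     # keep the final smaller batch
            yield items
    return batched

def numbers():
    for i in range(10):
        yield i

batches = list(batch(shuffle(numbers, buf_size=4, seed=0), batch_size=3)())
print([len(b) for b in batches])  # [3, 3, 3, 1]
```

Each wrapper takes a reader (a function returning a generator) and returns a new reader, which is why the calls can be nested as in the training reader above.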

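Defining the input data layout

We define the order of the input data: the first item is the image data and the second is the corresponding label.

feeder = fluid.DataFeeder(place=place, feed_list=[image, label])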

Starting training

Now we start training. We train for 10 passes here, but readers can choose any number. After each pass we evaluate the model's accuracy on the test set and save the inference model once.

for pass_id in range(10):
    # Training
    for batch_id, data in enumerate(train_reader()):
        train_cost, train_acc = exe.run(program=fluid.default_main_program(),
                                        feed=feeder.feed(data),
                                        fetch_list=[avg_cost, acc])
        # Print a status line every 100 batches and a dot otherwise.
        if batch_id % 100 == 0:
            print('\nPass:%d, Batch:%d, Cost:%f, Accuracy:%f' % (pass_id, batch_id, train_cost[0], train_acc[0]))
        else:
            print('.', end="")

    # Testing
    test_costs = []
    test_accs = []
    for batch_id, data in enumerate(test_reader()):
        test_cost, test_acc = exe.run(program=test_program,
                                      feed=feeder.feed(data),
                                      fetch_list=[avg_cost, acc])
        test_costs.append(test_cost[0])
        test_accs.append(test_acc[0])
    # Average the per-batch results.
    test_cost = sum(test_costs) / len(test_costs)
    test_acc = sum(test_accs) / len(test_accs)
    print('\nTest:%d, Cost:%f, Accuracy:%f' % (pass_id, test_cost, test_acc))

    # Save the inference model
    fluid.io.save_inference_model(dirname='./model', feeded_var_names=['image'], target_vars=[net], executor=exe)

Output:

Pass:0, Batch:0, Cost:2.971555, Accuracy:0.101562

...................................................................................................

Pass:0, Batch:100, Cost:0.509201, Accuracy:0.859375

........................

Test:0, Cost:0.255964, Accuracy:0.928092

Pass:1, Batch:0, Cost:0.383406, Accuracy:0.882812

...................................................................................................

Pass:1, Batch:100, Cost:0.262583, Accuracy:0.906250

........................

Test:1, Cost:0.210227, Accuracy:0.942152

Pass:2, Batch:0, Cost:0.248821, Accuracy:0.921875

...................................................................................................

Pass:2, Batch:100, Cost:0.121569, Accuracy:0.953125

........................

Test:2, Cost:0.147000, Accuracy:0.959041

Pass:3, Batch:0, Cost:0.219034, Accuracy:0.914062

...................................................................................................

Pass:3, Batch:100, Cost:0.149375, Accuracy:0.929688

........................

Test:3, Cost:0.135075, Accuracy:0.967970

Pass:4, Batch:0, Cost:0.097395, Accuracy:0.960938

...................................................................................................

Pass:4, Batch:100, Cost:0.088472, Accuracy:0.976562

........................

Test:4, Cost:0.130905, Accuracy:0.965254

Pass:5, Batch:0, Cost:0.115069, Accuracy:0.960938

...................................................................................................

Pass:5, Batch:100, Cost:0.132130, Accuracy:0.953125

........................

Test:5, Cost:0.123031, Accuracy:0.969086

Pass:6, Batch:0, Cost:0.083716, Accuracy:0.984375

...................................................................................................

Pass:6, Batch:100, Cost:0.093365, Accuracy:0.968750

........................

Test:6, Cost:0.113957, Accuracy:0.970686

Pass:7, Batch:0, Cost:0.062250, Accuracy:0.976562

...................................................................................................

Pass:7, Batch:100, Cost:0.095572, Accuracy:0.968750

........................

Test:7, Cost:0.097893, Accuracy:0.974182

Pass:8, Batch:0, Cost:0.122696, Accuracy:0.960938

...................................................................................................

Pass:8, Batch:100, Cost:0.154212, Accuracy:0.976562

........................

Test:8, Cost:0.095770, Accuracy:0.969570

Pass:9, Batch:0, Cost:0.105826, Accuracy:0.960938

...................................................................................................

Pass:9, Batch:100, Cost:0.125963, Accuracy:0.976562

........................

Test:9, Cost:0.078607, Accuracy:0.973550
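One detail of the reported test accuracy is worth noting: the training loop averages per-batch accuracies with equal weight, so a smaller final batch counts as much as a full one. The sketch below compares that simple mean with a size-weighted mean. The accuracy values and batch sizes are invented for illustration, and both helper functions are hypothetical.

```python
def simple_mean(values):
    """Average per-batch values with equal weight, as the training loop does."""
    return sum(values) / len(values)

def weighted_mean(values, sizes):
    """Average per-batch values weighted by the number of samples per batch."""
    total = sum(sizes)
    return sum(v * n for v, n in zip(values, sizes)) / total

batch_accs = [0.95, 0.97, 0.50]   # made-up per-batch accuracies
batch_sizes = [128, 128, 8]       # the last batch is much smaller

print(simple_mean(batch_accs))
print(weighted_mean(batch_accs, batch_sizes))
```

With many full batches and one short one the two numbers are usually close, but the weighted version is the one that equals the accuracy over all test samples.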

Loading the inference program

From the inference model saved above we can load an inference program and use it to predict images.

[infer_program, feeded_var_names, target_vars] = fluid.io.load_inference_model(dirname='./model', executor=exe)

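Preprocessing the data

Before predicting an image, we also need to preprocess it.

def load_image(path):
    # Same preprocessing as the test mapper: grayscale, resize, center crop.
    img = paddle.dataset.image.load_image(file=path, is_color=False)
    img = paddle.dataset.image.simple_transform(im=img, resize_size=32, crop_size=28, is_color=False, is_train=False)
    img = img.astype('float32')
    # Add the channel axis and scale pixel values to [0, 1].
    img = img[np.newaxis, ] / 255.0
    return img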

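Loading the images to predict

Next we append the preprocessed image to a list, which allows several images to be predicted together, and then convert the list to a numpy array.

infer_imgs = []
infer_imgs.append(load_image('./TibetanMnist(350x350)/0_10_398.jpg'))
infer_imgs = np.array(infer_imgs)
infer_imgs.shape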
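The shape bookkeeping can be checked without Paddle. In this sketch a zero array stands in for a preprocessed 28x28 grayscale image (an assumption about what the transform returns for single-channel input), and two copies are stacked to show how a batch of several images looks.

```python
import numpy as np

img = np.zeros((28, 28), dtype='float32')  # stand-in for a preprocessed image
img = img[np.newaxis, ] / 255.0            # add the channel axis
print(img.shape)                           # (1, 28, 28)

infer_imgs = np.array([img, img])          # stack two images into one batch
print(infer_imgs.shape)                    # (2, 1, 28, 28)
```

The leading dimension is the batch size, followed by the [1, 28, 28] shape declared for the image input layer.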

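Running the prediction

Finally we run the prediction. The input data is passed in through the feed parameter, and the result is the probability of each class.

result = exe.run(program=infer_program,
                 feed={feeded_var_names[0]: infer_imgs},
                 fetch_list=target_vars)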

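Parsing the result to get the most probable label

We convert the output to the label with the highest probability and print it, along with the image being predicted.

# argsort orders class indices by ascending probability, so the last one is the prediction.
lab = np.argsort(result)
im = Image.open('./TibetanMnist(350x350)/0_10_398.jpg')
plt.imshow(im)
plt.show()
print('Predicted label: %d' % lab[0][0][-1])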
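The indexing `lab[0][0][-1]` is easier to follow on a small stand-in for the result. The sketch below assumes the result is a list containing one array of shape (batch, classes). The three-class probabilities are invented, whereas the real model outputs ten.

```python
import numpy as np

# One fetched output, one image, three made-up class probabilities
result = [np.array([[0.05, 0.80, 0.15]], dtype='float32')]

lab = np.argsort(result)       # sorts class indices by ascending probability
print(lab.shape)               # (1, 1, 3)
print(lab[0][0][-1])           # 1

# Equivalent and more direct: take the argmax along the class axis
print(np.argmax(result[0], axis=1)[0])  # 1
```

The first index selects the fetched output, the second selects the image within the batch, and the last picks the class with the highest probability.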

References

- https://www.kesci.com/home/dataset/5bfe734a954d6e0010683839

- https://blog.csdn.net/qq_33200967/article/details/83506694