OpenShift 4 Hands-on Lab (7): Limiting Cluster Resource Usage

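Contents

- OpenShift cluster scale

- Limiting the total resources and objects used by multiple projects

- Limiting the resources and objects used by a single project

- Limiting the resources used by a Pod and its Containers

- Setting default resource limits for a project's Pods and Containers with a project-level LimitRange

- Setting resources for an individual Pod and Container

- Scenario notes

- Declaring only a container limit, without a request

- Declaring only a container request, without a limit

- Limiting network resources

- Further references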

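OpenShift cluster scale

A single OpenShift cluster can run a very large number of resources and objects. The table below lists the maximum supported numbers of objects and resources for each version from OpenShift 3.11 to OpenShift 4.3 (the figures are largely unchanged across versions). According to Red Hat, most of these maximums are not hard limits; they are the largest values at which the tested environment showed no poor performance. For example, a node can in fact run more than 250 pods, but if every node runs more than 250 pods and the cluster also has 2000 nodes, cluster performance degrades noticeably. The maximums in the table have all been tested and verified by Red Hat, so users can deploy OpenShift clusters at large scale with confidence. These figures were obtained with sample applications, however, so in a real project the achievable object scale still has to be evaluated against the actual workloads.

To allocate and use objects and resources sensibly, OpenShift lets us control and limit how much of a given resource (either another kind of object, or a system resource such as CPU or memory) an object may use. Resource limits apply at three levels:

- Limiting the total resources and objects used by multiple projects

- Limiting the resources and objects used by a single project

- Limiting the resources used by a Pod and its Containers

OpenShift uses the following objects to enforce resource limits:

- Quota: a K8s object that limits the total amount of resources a project may consume in aggregate. There are two kinds: ResourceQuota (Quota for short), which applies to a single project, and ClusterResourceQuota (ClusterQuota for short), which spans multiple projects. A quota can cover compute resources, storage resources, and object counts.

- LimitRange: a K8s object that defines how many resources the Pods and Containers in a project may use by default.

- Resource: not a K8s object but the resources section of a Container definition, which sets the resources for that specific Container. If its values fall outside the range allowed by the project's LimitRange, the Container cannot be started.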

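Limiting the total resources and objects used by multiple projects

A ClusterResourceQuota object can limit the total resources consumed by a tenant: it uses Selectors based on project Annotations and Labels to apply one overall quota across multiple projects (unlike a ResourceQuota, which is tied to a single project). A ClusterResourceQuota does not belong to any project; it is a cluster-scoped object.

- As an administrator, run the following command to create a clusterresourcequota for user USER-ID. It limits the total CPU and memory used by all projects requested by USER-ID (for example, the user currently has three projects: "USER-ID-xxx", "USER-ID-yyy", and "USER-ID-zzz").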

$ oc create clusterresourcequota USER-ID-crq --project-annotation-selector openshift.io/requester=USER-ID --hard limits.cpu=20 --hard limits.memory=40Gi

- Run the following command to view the ClusterResourceQuota object just created. The Namespace Selector lists the projects that belong to user USER-ID.

$ oc describe clusterquota USER-ID-crq

Name:               USER-ID-crq
Created:            38 hours ago
Labels:             <none>
Annotations:        <none>
Namespace Selector: ["USER-ID-xxx" "USER-ID-yyy" "USER-ID-zzz"]
Label Selector:
AnnotationSelector: map[openshift.io/requester:USER-ID]
Resource        Used     Hard
--------        ----     ----
limits.cpu      17500m   20
limits.memory   41064Mi  45Gi
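
The way a ClusterResourceQuota adds up usage across the selected projects can be sketched in a few lines of Python. This is only an illustration of the idea, not OpenShift's implementation: the per-project CPU figures and the extra project `other-project` are made-up values, chosen so that the three USER-ID projects sum to the 17500m shown above, and the helper names are hypothetical.

```python
# Minimal sketch of cluster-quota aggregation (illustrative, not OpenShift code).
# The per-project numbers below are hypothetical; only the 17500m total and the
# hard limit of 20 CPUs come from the output above.
REQUESTER_KEY = "openshift.io/requester"


def selected_projects(projects, requester):
    """Return the names of projects whose requester annotation matches."""
    return [name for name, project in projects.items()
            if project["annotations"].get(REQUESTER_KEY) == requester]


def cluster_quota_usage(projects, requester):
    """Sum limits.cpu (in millicores) over all selected projects."""
    return sum(projects[name]["limits_cpu_m"]
               for name in selected_projects(projects, requester))


def fits(projects, requester, hard_m, extra_m):
    """True if adding extra_m millicores keeps the total within hard_m."""
    return cluster_quota_usage(projects, requester) + extra_m <= hard_m


projects = {
    "USER-ID-xxx": {"annotations": {REQUESTER_KEY: "USER-ID"}, "limits_cpu_m": 8000},
    "USER-ID-yyy": {"annotations": {REQUESTER_KEY: "USER-ID"}, "limits_cpu_m": 6000},
    "USER-ID-zzz": {"annotations": {REQUESTER_KEY: "USER-ID"}, "limits_cpu_m": 3500},
    "other-project": {"annotations": {REQUESTER_KEY: "someone-else"}, "limits_cpu_m": 9000},
}

print(selected_projects(projects, "USER-ID"))
print(cluster_quota_usage(projects, "USER-ID"))   # 17500
print(fits(projects, "USER-ID", 20000, 2000))     # True: 19500m <= 20000m
print(fits(projects, "USER-ID", 20000, 3000))     # False: 20500m > 20000m
```

The point of the sketch is that the quota is shared: a new Pod in any one of the selected projects is checked against the combined usage of all of them.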

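Limiting the resources and objects used by a single project

A ResourceQuota object limits the amount of resources that can be consumed within one project; a ResourceQuota belongs to a specific project.

- As a regular user, create a new project.

$ oc new-project USER-ID-resourcequota

- Run the following commands to create a ResourceQuota for the project and then inspect it.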

$ oc create resourcequota -n USER-ID-resourcequota my-quota --hard=cpu=10,memory=10G,pods=10,services=10,replicationcontrollers=10,resourcequotas=10,secrets=20,persistentvolumeclaims=10

resourcequota/my-quota created

$ oc get resourcequota

NAME CREATED AT

my-quota 2020-02-21T00:56:44Z

$ oc describe resourcequota my-quota

Name:                   my-quota
Namespace:              user1-resourcequota
Resource                Used  Hard
--------                ----  ----
cpu                     0     10
memory                  0     10G
persistentvolumeclaims  0     10
pods                    0     10
replicationcontrollers  0     10
resourcequotas          1     10
secrets                 9     20
services                0     10

$ oc get resourcequota my-quota -o yaml

apiVersion: v1
kind: ResourceQuota
metadata:
  creationTimestamp: "2020-02-21T00:53:03Z"
  name: my-quota
  namespace: user1-resourcequota
  resourceVersion: "5783782"
  selfLink: /api/v1/namespaces/user1-resourcequota/resourcequotas/my-quota
  uid: db282194-95fd-4eb8-959b-f02a470958e9
spec:
  hard:
    cpu: "10"
    memory: 10G
    persistentvolumeclaims: "10"
    pods: "10"
    replicationcontrollers: "10"
    resourcequotas: "10"
    secrets: "20"
    services: "10"
status:
  hard:
    cpu: "10"
    memory: 10G
    persistentvolumeclaims: "10"
    pods: "10"
    replicationcontrollers: "10"
    resourcequotas: "10"
    secrets: "20"
    services: "10"
  used:
    cpu: "0"
    memory: "0"
    persistentvolumeclaims: "0"
    pods: "0"
    replicationcontrollers: "0"
    resourcequotas: "1"
    secrets: "9"
    services: "0"
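
The quota above is written as memory=10G, whereas other values in this lab use binary suffixes such as Mi and Gi. The two families differ: G is a power of ten and Gi a power of two, and a trailing m means thousandths (as in 500m of a CPU). The following Python sketch shows the arithmetic. It is a simplified, hypothetical parser (`parse_quantity` is not a real library function) that handles only the suffixes listed in the code, not the full Kubernetes quantity syntax.

```python
from fractions import Fraction

# Simplified sketch: only these suffixes are handled. The real Kubernetes
# quantity format accepts more (for example P, E and exponent notation).
DECIMAL = {"k": 10**3, "M": 10**6, "G": 10**9, "T": 10**12}
BINARY = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "Ti": 2**40}


def parse_quantity(text):
    """Convert a quantity string such as '10G', '10Gi' or '500m' to a Fraction."""
    for suffix, factor in BINARY.items():
        if text.endswith(suffix):
            return Fraction(text[:-2]) * factor
    if text.endswith("m"):  # milli-units, e.g. 500m CPU = 0.5 CPU
        return Fraction(text[:-1]) / 1000
    for suffix, factor in DECIMAL.items():
        if text.endswith(suffix):
            return Fraction(text[:-1]) * factor
    return Fraction(text)


print(parse_quantity("10G"))    # 10000000000 bytes
print(parse_quantity("10Gi"))   # 10737418240 bytes
print(parse_quantity("10Gi") - parse_quantity("10G"))  # 737418240 bytes
print(parse_quantity("0.3") == parse_quantity("300m"))  # True
```

So a 10G memory quota is roughly 700 MiB smaller than a 10Gi one, which matters when Pod requests are written in Mi or Gi.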

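- Alternatively, as an administrator, open the Administrator view of the OpenShift console, go to the Administration menu, and select Resource Quotas. Make sure the current project is USER-ID-resourcequota; the list shows all ResourceQuota and ClusterResourceQuota objects. Open my-quota to see its resource usage.

- (Note: this item applies only to later OpenShift 4.2 releases and to 4.3 and later.) In these versions, creating a ResourceQuota object automatically creates a corresponding LimitRange object in the project. The following commands show the CPU and memory limits for Pods and Containers defined in that automatically created LimitRange.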

$ oc get limitrange

NAME CREATED AT

user1-resourcequota-core-resource-limits 2020-02-22T06:51:32Z

$ oc describe limitrange USER-ID-resourcequota-core-resource-limits

Name:       user1-resourcequota-core-resource-limits
Namespace:  user1-resourcequota
Type        Resource  Min  Max   Default Request  Default Limit  Max Limit/Request Ratio
----        --------  ---  ---   ---------------  -------------  -----------------------
Container   cpu       -    4     50m              500m           -
Container   memory    -    6Gi   256Mi            1536Mi         -
Pod         cpu       -    4     -                -              -
Pod         memory    -    12Gi  -                -              -

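- (Note: this item applies only to early OpenShift 4.2 releases.) The my-quota object above limits the total resources the current project may hold. Run the following command to create a Pod, then check the project's Events. An error appears: "Error creating deployer pod ... must specify cpu,memory". It means that every Pod running in this project must declare the CPU and memory it uses. Although the command below specifies CPU and memory for the Pod named ruby-hello-world, the Pods that OpenShift itself creates (such as the deployer pod and the builder pod) do not declare their CPU and memory usage.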

$ oc run ruby-hello-world --image=openshift/ruby-hello-world --limits=cpu=200m,memory=100Mi --requests=cpu=100m,memory=50Mi

$ oc get event

LAST SEEN TYPE REASON OBJECT MESSAGE

77s Normal DeploymentCreated deploymentconfig/ruby-hello-world Created new replication controller "ruby-hello-world-1" for version 1

16s Warning FailedCreate deploymentconfig/ruby-hello-world Error creating deployer pod: pods "ruby-hello-world-1-deploy" is forbidden: failed quota: my-quota: must specify cpu,memory

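Note: the above shows that once a resourcequota is defined for a project, every Pod running in the project must declare its CPU and memory usage. This can be done explicitly in the Pod, or by using a LimitRange object (covered in the following sections) to supply default CPU and memory values for all Pods in the project (later OpenShift 4.2 releases and 4.3 and later create this LimitRange automatically).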
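
The "must specify cpu,memory" rejection can be modelled roughly as follows. This is a simplified Python sketch of the rule, not the actual quota admission code: the function name and the container dictionaries are hypothetical, and the sketch assumes that a declared limit also counts as a request when no request is given.

```python
# Rough model of the quota check: if the quota tracks cpu/memory, every
# container must declare them. Names and data shapes here are illustrative.
def missing_quota_resources(containers, quota_hard):
    """Return the tracked compute resources that some container leaves unset."""
    tracked = [res for res in ("cpu", "memory") if res in quota_hard]
    missing = set()
    for container in containers:
        # Assumption: a limit without a request is treated as the request.
        effective = {**container.get("limits", {}), **container.get("requests", {})}
        for res in tracked:
            if res not in effective:
                missing.add(res)
    return sorted(missing)


quota_hard = {"cpu": "10", "memory": "10G", "pods": "10"}

app_pod = [{"name": "ruby-hello-world",
            "requests": {"cpu": "100m", "memory": "50Mi"},
            "limits": {"cpu": "200m", "memory": "100Mi"}}]
deployer_pod = [{"name": "deployment"}]  # declares no resources at all

print(missing_quota_resources(app_pod, quota_hard))       # []
missing = missing_quota_resources(deployer_pod, quota_hard)
print("must specify " + ",".join(missing))                # must specify cpu,memory
```

In this model the application Pod passes, while the deployer Pod is rejected because it declares nothing, which is the situation shown in the event above.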

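Limiting the resources used by a Pod and its Containers

There are two ways to limit the resources used by Pods and Containers:

- Set a LimitRange object in the project to define the resources that all its Pods and Containers may use by default; the LimitRange acts as the project's default ceiling for those resources.

- Use the resources section of the Pod's YAML definition to set the resources that the Pod's Containers may use (the initially allocated amount, request, and the maximum amount, limit). If the resources defined for an individual Pod fall outside what the project's LimitRange allows (or the tenant as a whole has no quota left), the Pod cannot start.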

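Setting default resource limits for a project's Pods and Containers with a project-level LimitRange

A LimitRange object constrains compute resources in a project at the Pod, Container, Image, ImageStream, and PVC level. A new resource that would violate one of these constraints cannot be created.

- As a regular user, create a project named USER-ID-limit-project.

$ oc new-project USER-ID-limit-project

- Create a file named project-limitrange.yaml with the following content, which defines limits and requests at the Pod and Container level. Note: a LimitRange cannot set a Default for the Pod type.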

apiVersion: "v1"
kind: "LimitRange"
metadata:
  name: "project-limitrange"
spec:
  limits:
    - type: "Pod"
      max:
        cpu: "2"
        memory: "1Gi"
      min:
        cpu: "200m"
        memory: "60Mi"
    - type: "Container"
      max:
        cpu: "2"
        memory: "1Gi"
      min:
        cpu: "100m"
        memory: "4Mi"
      default:
        cpu: "400m"
        memory: "200Mi"
      defaultRequest:
        cpu: "200m"
        memory: "100Mi"
      maxLimitRequestRatio:
        cpu: "10"

- Create the resource in the USER-ID-limit-project project from the project-limitrange.yaml file.

$ oc create -f project-limitrange.yaml -n USER-ID-limit-project

- View project-limitrange.

$ oc describe limits/project-limitrange -n USER-ID-limit-project

Name:       project-limitrange
Namespace:  USER-ID-limit-project
Type        Resource  Min   Max  Default Request  Default Limit  Max Limit/Request Ratio
----        --------  ---   ---  ---------------  -------------  -----------------------
Pod         cpu       200m  2    -                -              -
Pod         memory    60Mi  1Gi  -                -              -
Container   cpu       100m  2    200m             400m           10
Container   memory    4Mi   1Gi  100Mi            200Mi          -

- In the Administrator view, open the Administration menu and select Limit Ranges to find the project-limitrange just created; opening it shows the configured values and limits.

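Setting resources for an individual Pod and Container

We can also set specific resource values for each Pod and the Containers in it. Note that when the resources defined for an individual Pod fall outside what the project's LimitRange allows (or the tenant as a whole has no quota left), the Pod cannot start.

- Run a Pod directly with the run command, setting the limit and request for its CPU and memory.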

$ oc run ruby-hello-world --image=openshift/ruby-hello-world --limits=cpu=200m,memory=100Mi --requests=cpu=100m,memory=50Mi -n USER-ID-limit-project

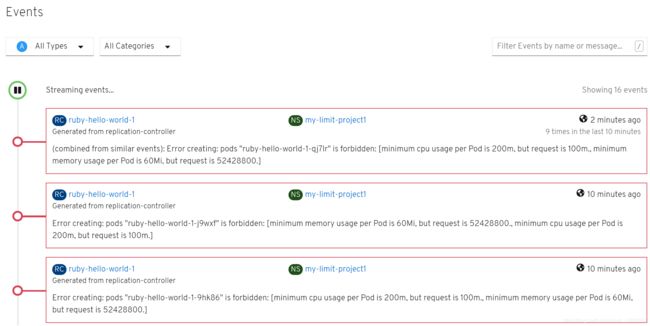

- 在OpenShift控制台的USER-ID-limit-project项目,可以在Events中看到以下错误事件。这是因为在Pod中request的CPU(100m)和内存(50Mi)比上一节创建的项目级limtrange中为Pod分配的最小CPU(200m)和最小内存(60Mi)还少少,因此冲突无法创建设个Pod。

- 修改创建Pod的参数,重新创建。

$ oc delete all --all -n USER-ID-limit-project

$ oc run ruby-hello-world --image=openshift/ruby-hello-world --limits=cpu=500m,memory=100Mi --requests=cpu=300m,memory=100Mi -n USER-ID-limit-project

- Inspect the Pod; the output shows the limits and requests configured for the Container named ruby-hello-world.

$ oc get pod -n USER-ID-limit-project

NAME READY STATUS RESTARTS AGE

ruby-hello-world-1-lwb2n 1/1 Running 0 45s

$ oc describe pod ruby-hello-world-1-lwb2n -n USER-ID-limit-project

Name:               ruby-hello-world-1-lwb2n
Namespace:          user1-limitrange
Priority:           0
Node:               ip-10-0-132-181.ec2.internal/10.0.132.181
Start Time:         Fri, 21 Feb 2020 05:44:39 +0000
Labels:             deployment=ruby-hello-world-1
                    deploymentconfig=ruby-hello-world
                    run=ruby-hello-world
Annotations:        k8s.v1.cni.cncf.io/networks-status:
                      [{
                          "name": "openshift-sdn",
                          "interface": "eth0",
                          "ips": [
                              "10.128.2.45"
                          ],
                          "dns": {},
                          "default-route": [
                              "10.128.2.1"
                          ]
                      }]
                    openshift.io/deployment-config.latest-version: 1
                    openshift.io/deployment-config.name: ruby-hello-world
                    openshift.io/deployment.name: ruby-hello-world-1
                    openshift.io/scc: restricted
Status:             Running
IP:                 10.128.2.45
IPs:
  IP:           10.128.2.45
Controlled By:  ReplicationController/ruby-hello-world-1
Containers:
  ruby-hello-world:
    Container ID:   cri-o://c8e1886fc9133141fdc9ebae5a3df884ca32f4313c0e53cf68fc4f038ab6390a
    Image:          openshift/ruby-hello-world
    Image ID:       docker.io/openshift/ruby-hello-world@sha256:52384f1f4cc137335b59c25eb69273e766de7646c04c22f66648313f12f1828f
    Port:           <none>
    Host Port:      <none>
    State:          Running
      Started:      Fri, 21 Feb 2020 05:45:02 +0000
    Ready:          True
    Restart Count:  0
    Limits:
      cpu:     500m
      memory:  100Mi
    Requests:
      cpu:        300m
      memory:     100Mi
    Environment:  <none>
    Mounts:
      /var/run/secrets/kubernetes.io/serviceaccount from default-token-6ww8z (ro)
...
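
The two oc run attempts in this section can be checked by hand against project-limitrange. The Python sketch below is a simplified model of those checks (request against min, limit against max, limit/request against the ratio, with Pod values taken as the sum over its Containers); it is not the real admission plugin. The function names are hypothetical, and all values are pre-converted to millicores and MiB to keep the example short.

```python
# Simplified model of LimitRange validation. Values are pre-normalized:
# CPU in millicores, memory in MiB (so 2 CPUs = 2000, 1Gi = 1024).
LIMIT_RANGE = {
    "Pod": {
        "min": {"cpu": 200, "memory": 60},
        "max": {"cpu": 2000, "memory": 1024},
    },
    "Container": {
        "min": {"cpu": 100, "memory": 4},
        "max": {"cpu": 2000, "memory": 1024},
        "ratio": {"cpu": 10},
    },
}


def check(kind, requests, limits, rules):
    """Return a list of violations of one set of LimitRange rules."""
    errors = []
    for res, low in rules.get("min", {}).items():
        if requests[res] < low:
            errors.append(f"{kind} {res} request {requests[res]} is below min {low}")
    for res, high in rules.get("max", {}).items():
        if limits[res] > high:
            errors.append(f"{kind} {res} limit {limits[res]} is above max {high}")
    for res, ratio in rules.get("ratio", {}).items():
        if limits[res] > ratio * requests[res]:
            errors.append(f"{kind} {res} limit/request ratio exceeds {ratio}")
    return errors


def validate_pod(containers, limit_range=LIMIT_RANGE):
    """Check each container, then the pod-level sums, against the LimitRange."""
    errors = []
    for c in containers:
        errors += check("Container", c["requests"], c["limits"], limit_range["Container"])
    totals = {
        field: {res: sum(c[field][res] for c in containers) for res in ("cpu", "memory")}
        for field in ("requests", "limits")
    }
    errors += check("Pod", totals["requests"], totals["limits"], limit_range["Pod"])
    return errors


# First attempt: requests cpu=100m,memory=50Mi; limits cpu=200m,memory=100Mi
first = [{"requests": {"cpu": 100, "memory": 50}, "limits": {"cpu": 200, "memory": 100}}]
# Second attempt: requests cpu=300m,memory=100Mi; limits cpu=500m,memory=100Mi
second = [{"requests": {"cpu": 300, "memory": 100}, "limits": {"cpu": 500, "memory": 100}}]

print(validate_pod(first))
print(validate_pod(second))
```

In this model the first attempt satisfies the Container rules but falls below both Pod minimums, while the second attempt produces no violations.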

Scenario notes

Declaring only a container limit, without a request

- Create a file named only-limit.yaml with the following content, which defines only the Container's cpu limit.

apiVersion: v1
kind: Pod
metadata:
  name: only-limit
spec:
  containers:
    - name: only-limit-2-ctr
      image: openshift/hello-openshift
      resources:
        limits:
          cpu: "1"

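- Run the command to create the object.

$ oc apply -f only-limit.yaml -n USER-ID-limit-project

- Look at the container's resources in the Pod named only-limit. The system has automatically set the cpu request equal to the cpu limit, and memory, which was not specified, has received the default limit and default request from the LimitRange created for this project in the previous section.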

containers:
  - image: openshift/hello-openshift
    imagePullPolicy: Always
    name: only-limit-2-ctr
    resources:
      limits:
        cpu: "1"
        memory: 200Mi
      requests:
        cpu: "1"
        memory: 100Mi

Declaring only a container request, without a limit

- Create a file named only-request.yaml with the following content, which defines only the Container's cpu request.

apiVersion: v1
kind: Pod
metadata:
  name: only-request
spec:
  containers:
    - name: only-request-2-ctr
      image: openshift/hello-openshift
      resources:
        requests:
          cpu: "0.3"

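- Run the command to create the object.

$ oc apply -f only-request.yaml -n USER-ID-limit-project

- Look at the container's resources in the Pod named only-request. The system has automatically set the CPU and memory limits to the default limit from the limitrange; the CPU request is the "0.3" (that is, 300m) defined in the first step, and the memory request is the default request value (100Mi).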

containers:
  - image: openshift/hello-openshift
    imagePullPolicy: Always
    name: only-request-2-ctr
    resources:
      limits:
        cpu: 400m
        memory: 200Mi
      requests:
        cpu: 300m
        memory: 100Mi
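
Both scenarios follow the same two defaulting steps, which can be sketched in Python as below. This is a simplified model, not the real API server or admission code: `apply_defaults` is a hypothetical helper, the two constants copy the default and defaultRequest values from project-limitrange, and quantities are left as the strings the user wrote (the real system additionally normalizes "0.3" to 300m).

```python
# Simplified model of how missing requests/limits get filled in.
# Defaults copied from the Container entry of project-limitrange.
DEFAULT_LIMIT = {"cpu": "400m", "memory": "200Mi"}
DEFAULT_REQUEST = {"cpu": "200m", "memory": "100Mi"}


def apply_defaults(resources):
    """Return the effective limits and requests for one container."""
    limits = dict(resources.get("limits", {}))
    requests = dict(resources.get("requests", {}))
    # Step 1: an explicit limit without a request becomes the request too.
    for res, value in limits.items():
        requests.setdefault(res, value)
    # Step 2: anything still missing comes from the LimitRange defaults.
    for res, value in DEFAULT_LIMIT.items():
        limits.setdefault(res, value)
    for res, value in DEFAULT_REQUEST.items():
        requests.setdefault(res, value)
    return {"limits": limits, "requests": requests}


only_limit = apply_defaults({"limits": {"cpu": "1"}})
only_request = apply_defaults({"requests": {"cpu": "0.3"}})

print(only_limit)
print(only_request)
```

With only a cpu limit, the cpu request copies the limit and memory takes both defaults; with only a cpu request, the cpu limit and both memory values come from the defaults, matching the two outputs shown above.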

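Limiting network resources

OpenShift can also limit the network bandwidth used by a Pod. This is done by setting the kubernetes.io/ingress-bandwidth and kubernetes.io/egress-bandwidth annotations on the Pod.

- Create a file named bandlimit.json with the following content.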

{
    "kind": "Pod",
    "spec": {
        "containers": [
            {
                "image": "openshift/hello-openshift",
                "name": "hello-openshift"
            }
        ]
    },
    "apiVersion": "v1",
    "metadata": {
        "name": "bandwidth",
        "annotations": {
            "kubernetes.io/ingress-bandwidth": "10M",
            "kubernetes.io/egress-bandwidth": "10M"
        }
    }
}

- Create the Pod from the file above, then inspect it.

$ oc create -f bandlimit.json -n USER-ID-limit-project

pod/bandwidth created

$ oc describe pod/bandwidth -n USER-ID-limit-project

Name:         bandwidth
Namespace:    user1-limitrange
Priority:     0
Node:         ip-10-0-132-181.ec2.internal/10.0.132.181
Start Time:   Fri, 21 Feb 2020 06:29:20 +0000
Labels:       <none>
Annotations:  k8s.v1.cni.cncf.io/networks-status:
                [{
                    "name": "openshift-sdn",
                    "interface": "eth0",
                    "ips": [
                        "10.128.2.46"
                    ],
                    "dns": {},
                    "default-route": [
                        "10.128.2.1"
                    ]
                }]
              kubernetes.io/egress-bandwidth: 10M
              kubernetes.io/ingress-bandwidth: 10M
              openshift.io/scc: anyuid
...
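
If several Pods need different bandwidth values, the manifest can be generated instead of edited by hand. The following Python sketch builds the same structure as bandlimit.json; `bandwidth_pod` and its parameters are hypothetical names chosen for this example, and the script only prints the JSON, leaving creation of the Pod to oc create -f as above.

```python
import json


def bandwidth_pod(name, image, container_name, ingress, egress):
    """Build a Pod manifest dict carrying the two bandwidth annotations."""
    return {
        "kind": "Pod",
        "apiVersion": "v1",
        "metadata": {
            "name": name,
            "annotations": {
                "kubernetes.io/ingress-bandwidth": ingress,
                "kubernetes.io/egress-bandwidth": egress,
            },
        },
        "spec": {
            "containers": [{"image": image, "name": container_name}],
        },
    }


manifest = bandwidth_pod(
    name="bandwidth",
    image="openshift/hello-openshift",
    container_name="hello-openshift",
    ingress="10M",
    egress="10M",
)

# Print the manifest; redirect the output to a file to use it with oc create -f.
print(json.dumps(manifest, indent=4))
```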

Further references

https://docs.openshift.com/container-platform/4.3/applications/quotas/quotas-setting-per-project.html

https://docs.openshift.com/container-platform/3.11/admin_guide/limits.html

https://docs.openshift.com/container-platform/3.11/dev_guide/compute_resources.html

https://kubernetes.io/zh/docs/tasks/administer-cluster/manage-resources/cpu-default-namespace/