Mask Recognition with PaddlePaddle

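- I. Import libraries (pip install any that are missing)

- II. Parameter configuration

- III. Data preprocessing

- IV. Model configuration

- V. Model training and evaluation

- VI. Model validation

- VII. Model prediction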

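I. Import libraries (pip install any that are missing)

# Written against the PaddlePaddle 1.x `fluid` dygraph API, which is not available in recent 2.x releases
import os
import zipfile
import random
import json
import paddle
import sys
import numpy as np
from PIL import Image
from PIL import ImageEnhance
import paddle.fluid as fluid
from multiprocessing import cpu_count
import matplotlib.pyplot as plt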

II. Parameter configuration

train_parameters = {
    "input_size": [3, 224, 224],                           # input image shape (C, H, W)
    "class_dim": -1,                                       # number of classes (filled in by get_data_list)
    "src_path": "/home/aistudio/work/maskDetect.zip",      # path to the original dataset archive
    "target_path": "/home/aistudio/data/",                 # directory to extract into
    "train_list_path": "/home/aistudio/data/train.txt",    # path to train.txt
    "eval_list_path": "/home/aistudio/data/eval.txt",      # path to eval.txt
    "readme_path": "/home/aistudio/data/readme.json",      # path to readme.json
    "label_dict": {},                                      # label -> class-name mapping
    "num_epochs": 50,                                      # number of training epochs
    "train_batch_size": 8,                                 # batch size for training
    "learning_strategy": {                                 # optimizer settings
        "lr": 0.001                                        # learning rate
    }
}

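III. Data preprocessing

(1) Extract the original dataset.

(2) Split it into a training set and a validation set by a fixed ratio (every 10th image of each class goes to validation).

(3) Shuffle and generate the data lists.

(4) Build the training and validation data readers.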
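Step (2) sends every 10th image of each class to the validation set and the rest to the training set. The pure-Python sketch below applies that rule to a hypothetical in-memory dictionary, `files_by_class`, in place of the real `maskDetect/` folders. `split_every_tenth` is an illustrative helper, not part of the original code. Labels are assigned in iteration order. Python dicts keep insertion order, but `os.listdir` makes no ordering guarantee, which is why in the run shown later label '0' ended up as nomaskimages.

```python
import random

def split_every_tenth(files_by_class, seed=0):
    """Hypothetical helper mirroring get_data_list's rule:
    image index 0, 10, 20, ... of each class goes to eval, the rest to train.
    Returns shuffled lists of "path\tlabel\n" lines."""
    train_lines, eval_lines = [], []
    for label, (class_name, paths) in enumerate(files_by_class.items()):
        for idx, path in enumerate(paths):
            line = "%s/%s\t%d\n" % (class_name, path, label)
            if idx % 10 == 0:
                eval_lines.append(line)
            else:
                train_lines.append(line)
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    rng.shuffle(train_lines)
    rng.shuffle(eval_lines)
    return train_lines, eval_lines

files = {
    "maskimages": ["m%02d.jpg" % i for i in range(25)],
    "nomaskimages": ["n%02d.jpg" % i for i in range(12)],
}
train_lines, eval_lines = split_every_tenth(files)
print(len(train_lines), len(eval_lines))  # prints: 32 5
```

With 25 and 12 images, the two classes contribute 3 and 2 validation images respectively, giving a split of roughly 90/10.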

def unzip_data(src_path, target_path):
    '''
    Extract the zip archive at src_path into target_path (the data directory).
    Extraction is skipped if the maskDetect folder already exists.
    '''
    if not os.path.isdir(target_path + "maskDetect"):
        with zipfile.ZipFile(src_path, 'r') as z:
            z.extractall(path=target_path)

def get_data_list(target_path, train_list_path, eval_list_path):
    '''
    Generate the data lists (train.txt, eval.txt) and readme.json.
    '''
    # information about every class
    class_detail = []
    # folder names of all classes
    data_list_path = target_path + "maskDetect/"
    class_dirs = os.listdir(data_list_path)
    # total number of images
    all_class_images = 0
    # current class label
    class_label = 0
    # number of classes
    class_dim = 0
    # lines to be written to eval.txt and train.txt
    trainer_list = []
    eval_list = []
    # iterate over each class, e.g. ['maskimages', 'nomaskimages']
    for class_dir in class_dirs:
        if class_dir != ".DS_Store":
            class_dim += 1
            # information about this class
            class_detail_list = {}
            eval_sum = 0
            trainer_sum = 0
            # number of images in this class
            class_sum = 0
            # path of this class
            path = data_list_path + class_dir
            # all images in this class
            img_paths = os.listdir(path)
            for img_path in img_paths:                  # iterate over every image in the folder
                name_path = path + '/' + img_path       # path of this image
                if class_sum % 10 == 0:                 # every 10th image goes to the validation set
                    eval_sum += 1                       # eval_sum: number of validation images
                    eval_list.append(name_path + "\t%d" % class_label + "\n")
                else:
                    trainer_sum += 1                    # trainer_sum: number of training images
                    trainer_list.append(name_path + "\t%d" % class_label + "\n")
                class_sum += 1                          # images in this class
                all_class_images += 1                   # images across all classes
            # class_detail entry for the readme json file
            class_detail_list['class_name'] = class_dir               # class name, e.g. maskimages
            class_detail_list['class_label'] = class_label            # class label
            class_detail_list['class_eval_images'] = eval_sum         # validation images in this class
            class_detail_list['class_trainer_images'] = trainer_sum   # training images in this class
            class_detail.append(class_detail_list)
            # fill in the label dictionary
            train_parameters['label_dict'][str(class_label)] = class_dir
            class_label += 1
    # set the number of classes
    train_parameters['class_dim'] = class_dim
    # shuffle, then append to the list files
    random.shuffle(eval_list)
    with open(eval_list_path, 'a') as f:
        for eval_image in eval_list:
            f.write(eval_image)
    random.shuffle(trainer_list)
    with open(train_list_path, 'a') as f2:
        for train_image in trainer_list:
            f2.write(train_image)
    # contents of the readme json file
    readjson = {}
    readjson['all_class_name'] = data_list_path   # parent directory of the class folders
    readjson['all_class_images'] = all_class_images
    readjson['class_detail'] = class_detail
    jsons = json.dumps(readjson, sort_keys=True, indent=4, separators=(',', ': '))
    with open(train_parameters['readme_path'], 'w') as f:
        f.write(jsons)
    print('Data lists generated!')

def custom_reader(file_list):
    '''
    Custom reader: yields (CHW float32 image scaled to [0, 1], int label) pairs.
    '''
    def reader():
        with open(file_list, 'r') as f:
            lines = [line.strip() for line in f]
        for line in lines:
            img_path, lab = line.strip().split('\t')
            img = Image.open(img_path)
            if img.mode != 'RGB':
                img = img.convert('RGB')
            img = img.resize((224, 224), Image.BILINEAR)
            img = np.array(img).astype('float32')
            img = img.transpose((2, 0, 1))  # HWC to CHW
            img = img / 255                 # normalize pixel values to [0, 1]
            yield img, int(lab)
    return reader
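`custom_reader` converts each image from PIL's height × width × channel layout to the channel-first layout that the Conv2D layers expect, then scales the pixel values to [0, 1]. The NumPy sketch below applies the same two steps to a synthetic array that stands in for a decoded 224×224 RGB image. It assumes no real file and does not use PIL.

```python
import numpy as np

# Stand-in for np.array(Image.open(...).resize((224, 224))): uint8, shape (H, W, C)
rng = np.random.default_rng(0)
hwc = rng.integers(0, 256, size=(224, 224, 3), dtype=np.uint8)

img = hwc.astype('float32')
chw = img.transpose((2, 0, 1))   # HWC -> CHW, as in custom_reader
chw = chw / 255                  # scale pixel values to [0, 1]

print(chw.shape, chw.dtype)      # (3, 224, 224) float32
# channel c of the CHW array is the same plane as hwc[:, :, c]
assert np.allclose(chw[1], hwc[:, :, 1] / 255)
```

The transpose changes only the axis order, not the data, so each channel plane is unchanged.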

'''
Parameter initialization
'''
src_path = train_parameters['src_path']
target_path = train_parameters['target_path']
train_list_path = train_parameters['train_list_path']
eval_list_path = train_parameters['eval_list_path']
batch_size = train_parameters['train_batch_size']
'''
Extract the original data to the target path
'''
unzip_data(src_path, target_path)
'''
Split into training and validation sets, shuffle, and generate the data lists
'''
# get_data_list appends to train.txt and eval.txt, so empty both files first
with open(train_list_path, 'w') as f:
    f.seek(0)
    f.truncate()
with open(eval_list_path, 'w') as f:
    f.seek(0)
    f.truncate()
# generate the data lists
get_data_list(target_path, train_list_path, eval_list_path)
'''
Build the data readers
'''
train_reader = paddle.batch(custom_reader(train_list_path),
                            batch_size=batch_size,
                            drop_last=True)
eval_reader = paddle.batch(custom_reader(eval_list_path),
                           batch_size=batch_size,
                           drop_last=True)
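`paddle.batch` wraps a sample-level reader so that it yields lists of `batch_size` samples. With `drop_last=True`, a final incomplete batch is discarded. Below is a pure-Python sketch of that behavior. `batch_reader` is a simplified, hypothetical stand-in, not Paddle's own implementation.

```python
def batch_reader(reader, batch_size, drop_last=False):
    """Simplified stand-in for paddle.batch: groups samples into lists."""
    def batched():
        batch = []
        for sample in reader():
            batch.append(sample)
            if len(batch) == batch_size:
                yield batch
                batch = []
        if batch and not drop_last:  # leftover partial batch
            yield batch
    return batched

def toy_reader():
    for i in range(20):
        yield i, i % 2   # (fake image, label)

print([len(b) for b in batch_reader(toy_reader, 8, drop_last=True)()])   # [8, 8]
print([len(b) for b in batch_reader(toy_reader, 8, drop_last=False)()])  # [8, 8, 4]
```

With `drop_last=True`, up to `batch_size - 1` images from each list are never fed to the model. For a small validation set, that can be a noticeable fraction.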

IV. Model configuration

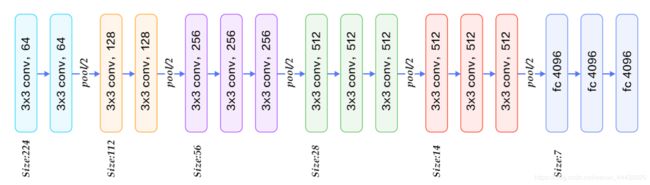

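At its core, VGG consists of five groups of convolutions, each followed by a max-pooling layer that reduces the spatial dimensions. Within a group, several 3×3 convolutions are stacked back to back, all with the same number of filters. The filter count grows from 64 in the shallowest group to 512 in the deepest. The convolutional part is followed by two fully connected layers and then the classification layer. Varying the number of convolution layers per group yields the 11-, 13-, 16-, and 19-layer variants. The figure above shows the 16-layer network.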
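The `512*7*7` input size of the first fully connected layer in `VGGNet` follows from this structure. A 3×3 convolution with padding 1 and stride 1 keeps the spatial size, and each 2×2 max pool with stride 2 halves it. After five groups, 224 → 112 → 56 → 28 → 14 → 7. The sketch below checks this with the standard output-size formula, floor((n + 2p - k) / s) + 1, using the group layout that `VGGNet` uses.

```python
def out_size(n, k, s, p):
    """Output length of a conv/pool window along one axis: floor((n + 2p - k) / s) + 1."""
    return (n + 2 * p - k) // s + 1

convs_per_group = [2, 2, 3, 3, 3]   # VGG-16 layout used by VGGNet
channels = [64, 128, 256, 512, 512]

n = 224
for convs in convs_per_group:
    for _ in range(convs):
        n = out_size(n, k=3, s=1, p=1)   # 3x3 conv, padding 1: size unchanged
    n = out_size(n, k=2, s=2, p=0)       # 2x2 max pool, stride 2: size halved
    print(n, end=" ")                    # 112 56 28 14 7
print()
print("flattened size:", channels[-1] * n * n)   # 512 * 7 * 7 = 25088
```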

class ConvPool(fluid.dygraph.Layer):
    '''Convolution + pooling: `groups` stacked Conv2D layers followed by one Pool2D.'''
    def __init__(self,
                 num_channels,
                 num_filters,
                 filter_size,
                 pool_size,
                 pool_stride,
                 groups,
                 pool_padding=0,
                 pool_type='max',
                 conv_stride=1,
                 conv_padding=1,
                 act=None):
        super(ConvPool, self).__init__()
        self._conv2d_list = []
        for i in range(groups):
            conv2d = self.add_sublayer(  # registers the Conv2D as a sublayer and returns it
                'bb_%d' % i,
                fluid.dygraph.Conv2D(
                    num_channels=num_channels,  # input channels
                    num_filters=num_filters,    # number of filters
                    filter_size=filter_size,    # kernel size
                    stride=conv_stride,         # stride
                    padding=conv_padding,       # padding (Conv2D defaults to 0; ConvPool passes 1)
                    act=act)
            )
            num_channels = num_filters  # later convs in the group take num_filters input channels
            self._conv2d_list.append(conv2d)
        self._pool2d = fluid.dygraph.Pool2D(
            pool_size=pool_size,        # pooling window size
            pool_type=pool_type,        # pooling type, max pooling by default
            pool_stride=pool_stride,    # pooling stride
            pool_padding=pool_padding   # padding
        )

    def forward(self, inputs):
        x = inputs
        for conv in self._conv2d_list:
            x = conv(x)
        x = self._pool2d(x)
        return x

class VGGNet(fluid.dygraph.Layer):
    '''
    VGG-16 network: 13 conv layers in five groups (2, 2, 3, 3, 3) plus 3 fully connected layers.
    '''
    def __init__(self):
        super(VGGNet, self).__init__()
        # ConvPool(num_channels, num_filters, filter_size, pool_size, pool_stride, groups)
        self.convpool01 = ConvPool(3, 64, 3, 2, 2, 2, act='relu')
        self.convpool02 = ConvPool(64, 128, 3, 2, 2, 2, act='relu')
        self.convpool03 = ConvPool(128, 256, 3, 2, 2, 3, act='relu')
        self.convpool04 = ConvPool(256, 512, 3, 2, 2, 3, act='relu')
        self.convpool05 = ConvPool(512, 512, 3, 2, 2, 3, act='relu')
        self.pool_5_shape = 512 * 7 * 7   # 224 halved by five 2x2 poolings gives a 7x7 feature map
        self.fc01 = fluid.dygraph.Linear(self.pool_5_shape, 4096, act='relu')
        self.fc02 = fluid.dygraph.Linear(4096, 4096, act='relu')
        self.fc03 = fluid.dygraph.Linear(4096, 2, act='softmax')   # 2 classes: mask / no mask

    def forward(self, inputs, label=None):
        """Forward pass; also returns the batch accuracy when a label is given."""
        x = self.convpool01(inputs)
        x = self.convpool02(x)
        x = self.convpool03(x)
        x = self.convpool04(x)
        x = self.convpool05(x)
        x = fluid.layers.reshape(x, shape=[-1, 512 * 7 * 7])
        x = self.fc01(x)
        x = fluid.layers.dropout(x, 0.3)
        x = self.fc02(x)
        y = self.fc03(x)
        if label is not None:
            acc = fluid.layers.accuracy(input=y, label=label)
            return y, acc
        else:
            return y
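Most of this network's weights sit in the first fully connected layer. Below is a back-of-the-envelope parameter count for `VGGNet` as defined above, computed in plain Python. It assumes that every Conv2D and Linear layer carries a bias, which is the default when `bias_attr` is not set.

```python
def conv_params(c_in, c_out, k=3):
    return k * k * c_in * c_out + c_out   # weights + one bias per filter

def linear_params(d_in, d_out):
    return d_in * d_out + d_out           # weights + biases

# (in_channels, out_channels, number of convs) for the five ConvPool groups
groups = [(3, 64, 2), (64, 128, 2), (128, 256, 3), (256, 512, 3), (512, 512, 3)]

conv_total = 0
for c_in, c_out, n_convs in groups:
    for i in range(n_convs):
        # the first conv of a group maps c_in -> c_out, the rest c_out -> c_out
        conv_total += conv_params(c_in if i == 0 else c_out, c_out)

fc_sizes = [linear_params(512 * 7 * 7, 4096),   # fc01
            linear_params(4096, 4096),          # fc02
            linear_params(4096, 2)]             # fc03
fc_total = sum(fc_sizes)
total = conv_total + fc_total

print("conv:", conv_total)     # 14714688
print("fc:", fc_total)         # 119554050
print("total:", total)         # 134268738
print("fc01 share: %.1f%%" % (100 * fc_sizes[0] / total))   # about 76.5%
```

About 134 million parameters in total, roughly three quarters of them in `fc01`, is the main reason this model is heavy to train and store.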

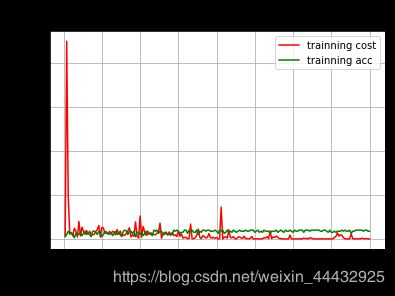

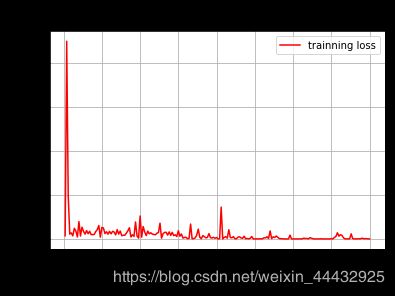

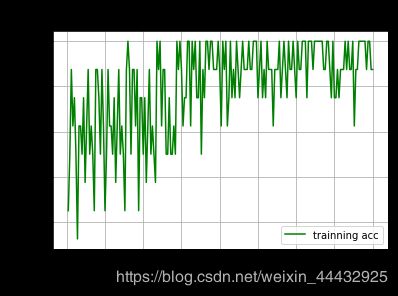

V. Model training and evaluation

all_train_iter = 0
all_train_iters = []
all_train_costs = []
all_train_accs = []

def draw_train_process(title, iters, costs, accs, label_cost, label_acc):
    plt.title(title, fontsize=24)
    plt.xlabel("iter", fontsize=20)
    plt.ylabel("cost/acc", fontsize=20)
    plt.plot(iters, costs, color='red', label=label_cost)
    plt.plot(iters, accs, color='green', label=label_acc)
    plt.legend()
    plt.grid()
    plt.show()

def draw_process(title, color, iters, data, label):
    plt.title(title, fontsize=24)
    plt.xlabel("iter", fontsize=20)
    plt.ylabel(label, fontsize=20)
    plt.plot(iters, data, color=color, label=label)
    plt.legend()
    plt.grid()
    plt.show()

'''
Model training
'''
# To run without a GPU, replace the next line with: with fluid.dygraph.guard():
with fluid.dygraph.guard(place=fluid.CUDAPlace(0)):
    print(train_parameters['class_dim'])
    print(train_parameters['label_dict'])
    vgg = VGGNet()
    # Note: the epoch count (10) and learning rate (0.0001) are hardcoded below;
    # train_parameters['num_epochs'] and ['learning_strategy']['lr'] are not used.
    optimizer = fluid.optimizer.AdamOptimizer(learning_rate=0.0001, parameter_list=vgg.parameters())
    for epoch_num in range(10):
        for batch_id, data in enumerate(train_reader()):
            dy_x_data = np.array([x[0] for x in data]).astype('float32')
            y_data = np.array([x[1] for x in data]).astype('int64')
            y_data = y_data[:, np.newaxis]   # labels need shape [batch_size, 1]
            # convert the NumPy arrays into dygraph inputs
            img = fluid.dygraph.to_variable(dy_x_data)
            label = fluid.dygraph.to_variable(y_data)
            out, acc = vgg(img, label)
            loss = fluid.layers.cross_entropy(out, label)   # per-sample loss, shape [batch_size, 1]
            avg_loss = fluid.layers.mean(loss)               # batch-mean loss
            # backward() runs the backward pass
            avg_loss.backward()
            optimizer.minimize(avg_loss)
            # clear the parameter gradients before the next step
            vgg.clear_gradients()
            all_train_iter = all_train_iter + train_parameters['train_batch_size']
            all_train_iters.append(all_train_iter)
            all_train_costs.append(avg_loss.numpy()[0])   # record the batch mean, not the first sample's loss
            all_train_accs.append(acc.numpy()[0])
            if batch_id % 1 == 0:   # prints every step
                print("Loss at epoch {} step {}: {}, acc: {}".format(epoch_num, batch_id, avg_loss.numpy(), acc.numpy()))
    draw_train_process("training", all_train_iters, all_train_costs, all_train_accs, "training cost", "training acc")
    draw_process("training loss", "red", all_train_iters, all_train_costs, "training loss")
    draw_process("training acc", "green", all_train_iters, all_train_accs, "training acc")
    # save the model parameters
    fluid.save_dygraph(vgg.state_dict(), "vgg")
    print("Final loss: {}".format(avg_loss.numpy()))
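The training step passes softmax probabilities to `fluid.layers.cross_entropy`, which computes -log p[label] for each sample. `fluid.layers.mean` then averages these values over the batch, and `fluid.layers.accuracy` reports the fraction of samples whose top-1 prediction matches the label. The NumPy sketch below computes those three quantities on a made-up batch of four predictions. The values are illustrative and are not taken from the run.

```python
import numpy as np

# Softmax outputs for a toy batch of 4 samples, 2 classes (rows sum to 1)
probs = np.array([[0.9, 0.1],
                  [0.2, 0.8],
                  [0.6, 0.4],
                  [0.3, 0.7]], dtype='float32')
labels = np.array([0, 1, 1, 1], dtype='int64')[:, np.newaxis]   # shape [batch, 1], like y_data

# -log of the probability assigned to the true class, one row per sample (like `loss`)
per_sample = -np.log(probs[np.arange(len(probs)), labels[:, 0]])[:, np.newaxis]
avg_loss = per_sample.mean()                          # like `avg_loss`
acc = (probs.argmax(axis=1) == labels[:, 0]).mean()   # top-1 accuracy: 3 of 4 correct

print(per_sample.shape, round(float(avg_loss), 4), acc)
```

Because `loss` holds one value per sample, `avg_loss` is the single number that summarizes a batch. With a batch size of 8, one misclassified sample moves accuracy by 0.125, which is why the per-step accuracies in the log jump in steps of 0.125.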

Output:

2

{'0': 'nomaskimages', '1': 'maskimages'}

Loss at epoch 0 step 0: [0.9295834], acc: [0.25]

Loss at epoch 0 step 1: [11.639934], acc: [0.5]

Loss at epoch 0 step 2: [0.6261003], acc: [0.875]

Loss at epoch 0 step 3: [0.66833645], acc: [0.625]

Loss at epoch 0 step 4: [0.6667167], acc: [0.75]

Loss at epoch 0 step 5: [0.8361335], acc: [0.5]

Loss at epoch 0 step 6: [1.1536872], acc: [0.125]

Loss at epoch 0 step 7: [0.6044862], acc: [0.625]

Loss at epoch 0 step 8: [0.6605163], acc: [0.625]

Loss at epoch 0 step 9: [0.88535273], acc: [0.5]

Loss at epoch 0 step 10: [0.52518356], acc: [0.75]

Loss at epoch 0 step 11: [0.83097625], acc: [0.375]

Loss at epoch 0 step 12: [0.60872185], acc: [0.625]

Loss at epoch 0 step 13: [0.5761976], acc: [0.875]

Loss at epoch 0 step 14: [0.7011553], acc: [0.5]

Loss at epoch 0 step 15: [0.65026075], acc: [0.625]

Loss at epoch 0 step 16: [0.6944751], acc: [0.5]

Loss at epoch 0 step 17: [0.78067243], acc: [0.25]

Loss at epoch 0 step 18: [0.57722664], acc: [0.875]

Loss at epoch 0 step 19: [0.5686499], acc: [0.875]

Loss at epoch 1 step 0: [0.5841127], acc: [0.75]

Loss at epoch 1 step 1: [0.6960245], acc: [0.5]

Loss at epoch 1 step 2: [0.44773918], acc: [0.875]

Loss at epoch 1 step 3: [0.5250957], acc: [0.625]

Loss at epoch 1 step 4: [1.0879121], acc: [0.25]

Loss at epoch 1 step 5: [0.7848166], acc: [0.5]

Loss at epoch 1 step 6: [0.53614163], acc: [0.875]

Loss at epoch 1 step 7: [0.6268474], acc: [0.625]

Loss at epoch 1 step 8: [0.64221966], acc: [0.625]

Loss at epoch 1 step 9: [0.67701757], acc: [0.5]

Loss at epoch 1 step 10: [0.6142461], acc: [0.75]

Loss at epoch 1 step 11: [0.70151085], acc: [0.375]

Loss at epoch 1 step 12: [0.62126315], acc: [0.625]

Loss at epoch 1 step 13: [0.525994], acc: [0.875]

Loss at epoch 1 step 14: [0.7073336], acc: [0.5]

Loss at epoch 1 step 15: [0.65142167], acc: [0.625]

Loss at epoch 1 step 16: [0.69909334], acc: [0.5]

Loss at epoch 1 step 17: [0.8083452], acc: [0.25]

Loss at epoch 1 step 18: [0.5067572], acc: [0.875]

Loss at epoch 1 step 19: [0.5136257], acc: [1.]

Loss at epoch 2 step 0: [0.5377171], acc: [0.875]

Loss at epoch 2 step 1: [0.5754539], acc: [0.5]

Loss at epoch 2 step 2: [0.40548515], acc: [0.875]

Loss at epoch 2 step 3: [0.31952214], acc: [0.875]

Loss at epoch 2 step 4: [0.78113437], acc: [0.625]

Loss at epoch 2 step 5: [0.5048914], acc: [0.875]

Loss at epoch 2 step 6: [1.4830418], acc: [0.25]

Loss at epoch 2 step 7: [0.49757195], acc: [0.75]

Loss at epoch 2 step 8: [0.5676572], acc: [0.75]

Loss at epoch 2 step 9: [0.9900228], acc: [0.5]

Loss at epoch 2 step 10: [0.44408265], acc: [0.75]

Loss at epoch 2 step 11: [0.8730574], acc: [0.375]

Loss at epoch 2 step 12: [0.53578556], acc: [0.625]

Loss at epoch 2 step 13: [0.48786613], acc: [0.875]

Loss at epoch 2 step 14: [0.6509448], acc: [0.5]

Loss at epoch 2 step 15: [0.62657726], acc: [0.625]

Loss at epoch 2 step 16: [0.6353064], acc: [0.5]

Loss at epoch 2 step 17: [0.685336], acc: [0.375]

Loss at epoch 2 step 18: [0.5429473], acc: [1.]

Loss at epoch 2 step 19: [0.53305715], acc: [0.875]

Loss at epoch 3 step 0: [0.547396], acc: [1.]

Loss at epoch 3 step 1: [0.464895], acc: [0.625]

Loss at epoch 3 step 2: [0.3808568], acc: [0.875]

Loss at epoch 3 step 3: [0.26534224], acc: [0.875]

Loss at epoch 3 step 4: [1.2195588], acc: [0.5]

Loss at epoch 3 step 5: [0.7797859], acc: [0.5]

Loss at epoch 3 step 6: [0.44601098], acc: [0.75]

Loss at epoch 3 step 7: [0.66588986], acc: [0.5]

Loss at epoch 3 step 8: [0.6749136], acc: [0.5]

Loss at epoch 3 step 9: [0.6435183], acc: [0.625]

Loss at epoch 3 step 10: [0.62706214], acc: [0.5]

Loss at epoch 3 step 11: [0.47243363], acc: [1.]

Loss at epoch 3 step 12: [0.5122753], acc: [0.875]

Loss at epoch 3 step 13: [0.39265972], acc: [1.]

Loss at epoch 3 step 14: [0.46594098], acc: [0.875]

Loss at epoch 3 step 15: [0.5612832], acc: [0.625]

Loss at epoch 3 step 16: [0.5982967], acc: [0.75]

Loss at epoch 3 step 17: [0.60762286], acc: [0.75]

Loss at epoch 3 step 18: [0.19355822], acc: [1.]

Loss at epoch 3 step 19: [0.25222063], acc: [1.]

Loss at epoch 4 step 0: [0.69412076], acc: [0.625]

Loss at epoch 4 step 1: [0.06438629], acc: [1.]

Loss at epoch 4 step 2: [0.2938982], acc: [0.875]

Loss at epoch 4 step 3: [0.01501226], acc: [1.]

Loss at epoch 4 step 4: [0.8606665], acc: [0.75]

Loss at epoch 4 step 5: [0.85187256], acc: [0.75]

Loss at epoch 4 step 6: [0.14488651], acc: [1.]

Loss at epoch 4 step 7: [0.58163244], acc: [0.5]

Loss at epoch 4 step 8: [0.3487966], acc: [0.875]

Loss at epoch 4 step 9: [0.71025443], acc: [0.75]

Loss at epoch 4 step 10: [0.23581119], acc: [1.]

Loss at epoch 4 step 11: [0.28185755], acc: [1.]

Loss at epoch 4 step 12: [0.41142535], acc: [0.875]

Loss at epoch 4 step 13: [0.26113376], acc: [1.]

Loss at epoch 4 step 14: [0.29340506], acc: [1.]

Loss at epoch 4 step 15: [0.3636752], acc: [0.875]

Loss at epoch 4 step 16: [0.31026483], acc: [0.875]

Loss at epoch 4 step 17: [0.16071159], acc: [0.875]

Loss at epoch 4 step 18: [0.10324877], acc: [1.]

Loss at epoch 4 step 19: [0.2239728], acc: [0.875]

Loss at epoch 5 step 0: [1.075266], acc: [0.625]

Loss at epoch 5 step 1: [0.01089855], acc: [1.]

Loss at epoch 5 step 2: [0.4548849], acc: [0.875]

Loss at epoch 5 step 3: [0.03750079], acc: [1.]

Loss at epoch 5 step 4: [1.2823855], acc: [0.625]

Loss at epoch 5 step 5: [0.675403], acc: [0.75]

Loss at epoch 5 step 6: [0.16604242], acc: [1.]

Loss at epoch 5 step 7: [0.5277569], acc: [0.75]

Loss at epoch 5 step 8: [0.28130966], acc: [0.875]

Loss at epoch 5 step 9: [0.41909793], acc: [0.75]

Loss at epoch 5 step 10: [0.20996659], acc: [1.]

Loss at epoch 5 step 11: [0.2245177], acc: [0.875]

Loss at epoch 5 step 12: [0.52283394], acc: [0.75]

Loss at epoch 5 step 13: [0.252886], acc: [0.875]

Loss at epoch 5 step 14: [0.17898756], acc: [1.]

Loss at epoch 5 step 15: [0.23525244], acc: [0.875]

Loss at epoch 5 step 16: [0.21233535], acc: [0.875]

Loss at epoch 5 step 17: [0.2358022], acc: [0.875]

Loss at epoch 5 step 18: [0.07317601], acc: [1.]

Loss at epoch 5 step 19: [0.15355381], acc: [0.875]

Loss at epoch 6 step 0: [0.2345704], acc: [0.875]

Loss at epoch 6 step 1: [0.03883681], acc: [1.]

Loss at epoch 6 step 2: [0.07743732], acc: [1.]

Loss at epoch 6 step 3: [0.00871679], acc: [1.]

Loss at epoch 6 step 4: [0.6231563], acc: [0.75]

Loss at epoch 6 step 5: [0.54312867], acc: [0.875]

Loss at epoch 6 step 6: [0.00542075], acc: [1.]

Loss at epoch 6 step 7: [0.61691445], acc: [0.75]

Loss at epoch 6 step 8: [0.19379742], acc: [0.875]

Loss at epoch 6 step 9: [0.39975074], acc: [0.75]

Loss at epoch 6 step 10: [0.06522364], acc: [1.]

Loss at epoch 6 step 11: [0.35885918], acc: [0.875]

Loss at epoch 6 step 12: [0.3460069], acc: [0.875]

Loss at epoch 6 step 13: [0.2024731], acc: [0.875]

Loss at epoch 6 step 14: [0.5381352], acc: [0.625]

Loss at epoch 6 step 15: [0.33781287], acc: [0.875]

Loss at epoch 6 step 16: [0.32887], acc: [0.875]

Loss at epoch 6 step 17: [0.25033426], acc: [0.875]

Loss at epoch 6 step 18: [0.25389832], acc: [1.]

Loss at epoch 6 step 19: [0.34452206], acc: [0.75]

Loss at epoch 7 step 0: [0.18207917], acc: [0.875]

Loss at epoch 7 step 1: [0.02621263], acc: [1.]

Loss at epoch 7 step 2: [0.19247293], acc: [0.875]

Loss at epoch 7 step 3: [0.24505714], acc: [0.75]

Loss at epoch 7 step 4: [0.05677713], acc: [1.]

Loss at epoch 7 step 5: [0.19842745], acc: [0.875]

Loss at epoch 7 step 6: [0.1613762], acc: [0.875]

Loss at epoch 7 step 7: [0.14081438], acc: [1.]

Loss at epoch 7 step 8: [0.11826605], acc: [0.875]

Loss at epoch 7 step 9: [0.27152562], acc: [0.75]

Loss at epoch 7 step 10: [0.00868933], acc: [1.]

Loss at epoch 7 step 11: [0.18546718], acc: [0.875]

Loss at epoch 7 step 12: [0.19288036], acc: [0.875]

Loss at epoch 7 step 13: [0.04274368], acc: [1.]

Loss at epoch 7 step 14: [0.05203501], acc: [1.]

Loss at epoch 7 step 15: [0.02513801], acc: [1.]

Loss at epoch 7 step 16: [0.29849395], acc: [0.75]

Loss at epoch 7 step 17: [0.11062137], acc: [1.]

Loss at epoch 7 step 18: [0.04197507], acc: [1.]

Loss at epoch 7 step 19: [0.08454401], acc: [1.]

Loss at epoch 8 step 0: [0.55941767], acc: [0.875]

Loss at epoch 8 step 1: [0.02619867], acc: [1.]

Loss at epoch 8 step 2: [0.02495809], acc: [1.]

Loss at epoch 8 step 3: [0.02852487], acc: [1.]

Loss at epoch 8 step 4: [0.03313993], acc: [1.]

Loss at epoch 8 step 5: [0.0880249], acc: [1.]

Loss at epoch 8 step 6: [0.00471907], acc: [1.]

Loss at epoch 8 step 7: [0.14173785], acc: [0.875]

Loss at epoch 8 step 8: [0.17833945], acc: [0.875]

Loss at epoch 8 step 9: [0.04743274], acc: [1.]

Loss at epoch 8 step 10: [0.00036193], acc: [1.]

Loss at epoch 8 step 11: [0.4990233], acc: [0.875]

Loss at epoch 8 step 12: [0.3823611], acc: [0.75]

Loss at epoch 8 step 13: [0.00024095], acc: [1.]

Loss at epoch 8 step 14: [0.63016033], acc: [0.75]

Loss at epoch 8 step 15: [0.71019113], acc: [0.75]

Loss at epoch 8 step 16: [0.505288], acc: [0.875]

Loss at epoch 8 step 17: [0.28834325], acc: [0.75]

Loss at epoch 8 step 18: [0.43973053], acc: [0.875]

Loss at epoch 8 step 19: [0.44098347], acc: [0.875]

Loss at epoch 9 step 0: [0.29922554], acc: [0.875]

Loss at epoch 9 step 1: [0.2809055], acc: [1.]

Loss at epoch 9 step 2: [0.14478242], acc: [0.875]

Loss at epoch 9 step 3: [0.0635983], acc: [1.]

Loss at epoch 9 step 4: [0.16351134], acc: [0.875]

Loss at epoch 9 step 5: [0.43683648], acc: [0.875]

Loss at epoch 9 step 6: [0.00702756], acc: [1.]

Loss at epoch 9 step 7: [0.70568985], acc: [0.625]

Loss at epoch 9 step 8: [0.15877862], acc: [0.875]

Loss at epoch 9 step 9: [0.20800568], acc: [0.875]

Loss at epoch 9 step 10: [0.03415946], acc: [1.]

Loss at epoch 9 step 11: [0.02590652], acc: [1.]

Loss at epoch 9 step 12: [0.09308596], acc: [1.]

Loss at epoch 9 step 13: [0.01488115], acc: [1.]

Loss at epoch 9 step 14: [0.05175847], acc: [1.]

Loss at epoch 9 step 15: [0.19598301], acc: [0.875]

Loss at epoch 9 step 16: [0.11070873], acc: [1.]

Loss at epoch 9 step 17: [0.00711085], acc: [1.]

Loss at epoch 9 step 18: [0.09272808], acc: [0.875]

Loss at epoch 9 step 19: [0.24043441], acc: [0.875]

Final loss: [0.24043441]

VI. Model validation

'''
Model validation
'''
with fluid.dygraph.guard():
    model, _ = fluid.load_dygraph("vgg")   # returns (parameter dict, optimizer dict)
    vgg = VGGNet()
    vgg.load_dict(model)
    vgg.eval()
    accs = []
    for batch_id, data in enumerate(eval_reader()):
        dy_x_data = np.array([x[0] for x in data]).astype('float32')
        y_data = np.array([x[1] for x in data]).astype('int64')
        y_data = y_data[:, np.newaxis]
        img = fluid.dygraph.to_variable(dy_x_data)
        label = fluid.dygraph.to_variable(y_data)
        out, acc = vgg(img, label)
        accs.append(acc.numpy()[0])
    # mean of per-batch accuracies; every batch holds batch_size samples because drop_last=True
    print(np.mean(accs))
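Averaging per-batch accuracies gives the overall accuracy only when every batch is the same size, which `drop_last=True` guarantees here. If the eval reader kept the final partial batch (`drop_last=False`), each batch's accuracy would need to be weighted by its size. The sketch below shows the difference using hypothetical batch results.

```python
import numpy as np

# Hypothetical per-batch results: (accuracy, batch size); the last batch is partial
batch_results = [(1.0, 8), (0.875, 8), (0.5, 2)]

accs = np.array([a for a, _ in batch_results])
sizes = np.array([n for _, n in batch_results])

naive = accs.mean()                              # unweighted mean of batch accuracies
weighted = (accs * sizes).sum() / sizes.sum()    # correct samples / total samples = 16 / 18

print(round(naive, 4), round(weighted, 4))       # 0.7917 0.8889
```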

0.9375

VII. Model prediction

def load_image(img_path):
    '''
    Preprocess an image for prediction (same steps as custom_reader)
    '''
    img = Image.open(img_path)
    if img.mode != 'RGB':
        img = img.convert('RGB')
    img = img.resize((224, 224), Image.BILINEAR)
    img = np.array(img).astype('float32')
    img = img.transpose((2, 0, 1))  # HWC to CHW
    img = img / 255                 # normalize pixel values to [0, 1]
    return img

label_dic = train_parameters['label_dict']

'''
Model prediction
'''
with fluid.dygraph.guard():
    model, _ = fluid.dygraph.load_dygraph("vgg")
    vgg = VGGNet()
    vgg.load_dict(model)
    vgg.eval()
    # show the image to predict
    infer_path = '/home/aistudio/data/data23615/infer_mask01.jpg'
    img = Image.open(infer_path)
    plt.imshow(img)   # draw the image from the array
    plt.show()        # display it
    # preprocess the image(s) to predict
    infer_imgs = []
    infer_imgs.append(load_image(infer_path))
    infer_imgs = np.array(infer_imgs)
    for i in range(len(infer_imgs)):
        data = infer_imgs[i]
        dy_x_data = np.array(data).astype('float32')
        dy_x_data = dy_x_data[np.newaxis, :, :, :]   # add a batch dimension: (1, 3, 224, 224)
        img = fluid.dygraph.to_variable(dy_x_data)
        out = vgg(img)
        lab = np.argmax(out.numpy())   # argmax(): index of the largest value
        print("Sample {} is predicted as: {}".format(i + 1, label_dic[str(lab)]))
print("Done")
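The last step turns the softmax output into a class name. `np.argmax` picks the index of the highest probability, and `label_dic` maps the string form of that index back to a folder name. The NumPy sketch below uses a made-up model output together with the label dictionary printed during training in this run.

```python
import numpy as np

label_dic = {'0': 'nomaskimages', '1': 'maskimages'}   # as printed during training above

# Made-up softmax output for a batch of one image, shape (1, 2)
out = np.array([[0.12, 0.88]], dtype='float32')

lab = np.argmax(out)                       # index into the flattened array
confidence = float(out.reshape(-1)[lab])   # probability of the predicted class
print("Predicted: {} ({:.2f})".format(label_dic[str(lab)], confidence))   # Predicted: maskimages (0.88)
```

A flattened `argmax` only works for a batch of one. For several images at once, `np.argmax(out, axis=1)` returns one index per row.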