Understanding the Residual Block in ResNet (with Code)

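Original paper: paper

Original code: official PyTorch code

A fairly complete walkthrough of the paper: ResNet paper notes and code analysis

Here I only cover the core idea, the residual block, which is also the part that took me a long time to understand. Please forgive the informal tone; I hope it helps. If I have got anything wrong, corrections are welcome.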

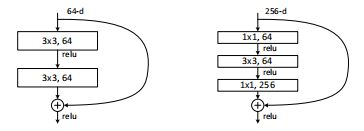

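A deeper residual function F for ImageNet. Left: a building block (on 56×56 feature maps), as in Fig. 3 of the paper, used in ResNet-34. Right: a "bottleneck" building block used in ResNet-50/101/152.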

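The block on the left is called BasicBlock, and the one on the right is called Bottleneck.

Each box is one convolutional layer. What do the numbers in a box such as "3×3, 64" mean? The kernel size is 3×3 and the layer has 64 output channels, i.e. it produces 64 feature maps. Stack those feature maps and you get a block shape. In BasicBlock both layers have the same width (64 channels), so stacked together they form a cuboid.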

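With that picture in mind, can you see where the name "bottleneck" comes from?

The block on the right is "1×1, 64" on top, "3×3, 64" in the middle and "1×1, 256" at the bottom, applied to a 256-channel input. It is tempting to read the shape off the kernel sizes, but the name comes from the channel count: the first 1×1 convolution squeezes 256 channels down to 64, the 3×3 convolution works at that narrow width, and the last 1×1 convolution restores 256 channels. Wide, narrow, wide: the narrow middle is the neck of the bottle.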
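To make the channel widths in the figure concrete, here is a rough weight count for the two blocks. It is a back-of-the-envelope sketch: biases and BatchNorm parameters are ignored, the helper name `conv_weights` is made up for illustration, and the "two 3×3 layers at 256 channels" case is a hypothetical comparison rather than a block from the paper.

```python
def conv_weights(kernel, c_in, c_out):
    """Number of weights in a kernel x kernel convolution without bias."""
    return kernel * kernel * c_in * c_out

# Bottleneck from the figure: 256-d input, then 1x1,64 -> 3x3,64 -> 1x1,256
bottleneck = (
    conv_weights(1, 256, 64)
    + conv_weights(3, 64, 64)
    + conv_weights(1, 64, 256)
)

# BasicBlock from the figure: 64-d input, two 3x3,64 layers
basic_64 = 2 * conv_weights(3, 64, 64)

# Hypothetical comparison: two 3x3 layers applied directly at 256 channels
basic_256 = 2 * conv_weights(3, 256, 256)

print(bottleneck, basic_64, basic_256)  # 69632 73728 1179648
```

So the bottleneck handles a 256-channel input with roughly the same number of weights as a BasicBlock at 64 channels, which is presumably why the deeper networks use it.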

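Now let's look at the code:

The ResNet class (core parts only)

A few key points:

1. Before the residual stages, the original 224 x 224 image goes through a large 7 x 7 convolution, BN, ReLU and max pooling, which gives a 56 x 56 x 64 feature map.

2. From the definitions of layer1, layer2, layer3 and layer4 you can see that the first stage does not shrink the feature map. Every other stage halves its height and width in its first block, using a 3 x 3 convolution with stride 2.

3. In the _make_layer function, downsample transforms the input of the residual block so that the shortcut matches the block's output in channel count (and in spatial size when the stride is 2). A 1 x 1 convolution followed by BN is enough. The BasicBlock and Bottleneck classes below use it.

4. The final pooling layer is written as adaptive average pooling with a 1 x 1 output. This is the paper's global average pooling, implemented so that it works for any input size rather than with a fixed kernel.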
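The sizes in points 1 and 2 can be checked with the standard output-size formula for a convolution or pooling layer, floor((n + 2p - k) / s) + 1. The sketch below is plain arithmetic; `out_size` is a helper name made up for this example, and the kernel, stride and padding values are the ones that appear in the code.

```python
def out_size(n, kernel, stride, padding):
    """Spatial output size of a conv/pool layer for an n x n input."""
    return (n + 2 * padding - kernel) // stride + 1

size = 224
size = out_size(size, kernel=7, stride=2, padding=3)  # conv1: 224 -> 112
size = out_size(size, kernel=3, stride=2, padding=1)  # maxpool: 112 -> 56
stem = size

# First block of each stage: a 3x3 conv with padding 1 and the stage's stride
stage_sizes = []
for stride in (1, 2, 2, 2):
    size = out_size(size, kernel=3, stride=stride, padding=1)
    stage_sizes.append(size)

print(stem, stage_sizes)  # 56 [56, 28, 14, 7]
```

The last stage leaves a 7 x 7 map, which the average pooling then reduces to 1 x 1.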

    import torch.nn as nn


    # Helpers defined at the top of the same torchvision file
    def conv3x3(in_planes, out_planes, stride=1):
        return nn.Conv2d(in_planes, out_planes, kernel_size=3, stride=stride,
                         padding=1, bias=False)


    def conv1x1(in_planes, out_planes, stride=1):
        return nn.Conv2d(in_planes, out_planes, kernel_size=1, stride=stride,
                         bias=False)


    class ResNet(nn.Module):

        def __init__(self, block, layers, num_classes=1000, zero_init_residual=False):
            super(ResNet, self).__init__()
            self.inplanes = 64
            # Stem: 7x7 conv, BN, ReLU, max pooling
            self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3,
                                   bias=False)
            self.bn1 = nn.BatchNorm2d(64)
            self.relu = nn.ReLU(inplace=True)
            self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
            # Four residual stages; only the first keeps the spatial size
            self.layer1 = self._make_layer(block, 64, layers[0])
            self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
            self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
            self.layer4 = self._make_layer(block, 512, layers[3], stride=2)
            self.avgpool = nn.AdaptiveAvgPool2d((1, 1))
            self.fc = nn.Linear(512 * block.expansion, num_classes)
            # Weight initialization (where zero_init_residual is used) is omitted here

        def _make_layer(self, block, planes, blocks, stride=1):
            downsample = None
            # The shortcut needs a projection when the shape changes
            if stride != 1 or self.inplanes != planes * block.expansion:
                downsample = nn.Sequential(
                    conv1x1(self.inplanes, planes * block.expansion, stride),
                    nn.BatchNorm2d(planes * block.expansion),
                )

            layers = []
            # Only the first block of a stage gets the stride and the downsample
            layers.append(block(self.inplanes, planes, stride, downsample))
            self.inplanes = planes * block.expansion
            for _ in range(1, blocks):
                layers.append(block(self.inplanes, planes))

            return nn.Sequential(*layers)

        def forward(self, x):
            x = self.conv1(x)
            x = self.bn1(x)
            x = self.relu(x)
            x = self.maxpool(x)

            x = self.layer1(x)
            x = self.layer2(x)
            x = self.layer3(x)
            x = self.layer4(x)

            x = self.avgpool(x)
            x = x.view(x.size(0), -1)  # flatten to (batch, channels)
            x = self.fc(x)

            return x
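To see when the `if` in `_make_layer` actually creates a downsample branch, the bookkeeping can be replayed without building any layers. This is only a sketch of the condition, not the real module: `trace_make_layer` is a made-up helper, and it records one tuple per stage of (input channels, output channels, stride, whether a downsample is needed).

```python
def trace_make_layer(expansion, stage_planes=(64, 128, 256, 512),
                     strides=(1, 2, 2, 2)):
    """Replay the inplanes bookkeeping of _make_layer for the four stages."""
    inplanes = 64  # channels coming out of the stem
    report = []
    for planes, stride in zip(stage_planes, strides):
        out_channels = planes * expansion
        # Same condition as in _make_layer
        needs_downsample = stride != 1 or inplanes != out_channels
        report.append((inplanes, out_channels, stride, needs_downsample))
        inplanes = out_channels
    return report

basic = trace_make_layer(expansion=1)       # BasicBlock
bottleneck = trace_make_layer(expansion=4)  # Bottleneck

for row in basic:
    print("BasicBlock", row)
for row in bottleneck:
    print("Bottleneck", row)
```

With BasicBlock, layer1 needs no projection (64 channels in, 64 out, stride 1). With Bottleneck, even layer1 needs one, because the channels go from 64 to 256 although the stride is 1.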

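BasicBlock

expansion is the ratio between the block's output channels and planes. BasicBlock does not widen the channels, so expansion = 1.

In the residual structure, ReLU is applied only after the addition.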

    class BasicBlock(nn.Module):
        expansion = 1

        def __init__(self, inplanes, planes, stride=1, downsample=None):
            super(BasicBlock, self).__init__()
            self.conv1 = conv3x3(inplanes, planes, stride)
            self.bn1 = nn.BatchNorm2d(planes)
            self.relu = nn.ReLU(inplace=True)
            self.conv2 = conv3x3(planes, planes)
            self.bn2 = nn.BatchNorm2d(planes)
            self.downsample = downsample
            self.stride = stride

        def forward(self, x):
            identity = x

            out = self.conv1(x)
            out = self.bn1(out)
            out = self.relu(out)

            out = self.conv2(out)
            out = self.bn2(out)

            # Project the shortcut only when the shape changes
            if self.downsample is not None:
                identity = self.downsample(x)

            out += identity       # F(x) + x
            out = self.relu(out)  # ReLU after the addition

            return out
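The order at the end of `forward` (add first, then ReLU) can be illustrated with a toy version on plain lists. This is a deliberately simplified sketch, not the PyTorch block: `residual_block`, `zero_branch` and `negative_branch` are names invented here, and the "residual branch" is just an arbitrary function standing in for conv, BN, ReLU, conv, BN.

```python
def relu(values):
    return [max(0.0, v) for v in values]

def residual_block(x, residual_fn):
    """y = relu(F(x) + x), with F given by residual_fn."""
    fx = residual_fn(x)
    summed = [a + b for a, b in zip(fx, x)]
    return relu(summed)  # ReLU is applied after the addition

x = [1.0, 0.5, 2.0]

# If the residual branch outputs zeros, the block passes x through unchanged
zero_branch = lambda v: [0.0] * len(v)
identity_out = residual_block(x, zero_branch)

# A branch that subtracts 1 everywhere: the sum is clipped by the final ReLU
negative_branch = lambda v: [-1.0] * len(v)
clipped_out = residual_block(x, negative_branch)

print(identity_out)  # [1.0, 0.5, 2.0]
print(clipped_out)   # [0.0, 0.0, 1.0]
```

The first case is the intuition behind residual learning: when the branch learns nothing, the block falls back to the identity for non-negative inputs.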

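Bottleneck

Note that ResNet-18 and ResNet-34 use BasicBlock; the others (ResNet-50/101/152) use Bottleneck.

expansion = 4, because every Bottleneck outputs 4 × planes channels, where planes is the width of its 3 x 3 convolution. For example, planes = 64 gives 256 output channels. Only the first block of layer1 actually sees a 4× jump from input to output (64 to 256); later blocks in the same stage take 256 channels in and give 256 out.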

    class Bottleneck(nn.Module):
        expansion = 4

        def __init__(self, inplanes, planes, stride=1, downsample=None):
            super(Bottleneck, self).__init__()
            self.conv1 = conv1x1(inplanes, planes)                # reduce channels
            self.bn1 = nn.BatchNorm2d(planes)
            self.conv2 = conv3x3(planes, planes, stride)          # stride lives here
            self.bn2 = nn.BatchNorm2d(planes)
            self.conv3 = conv1x1(planes, planes * self.expansion) # restore channels
            self.bn3 = nn.BatchNorm2d(planes * self.expansion)
            self.relu = nn.ReLU(inplace=True)
            self.downsample = downsample
            self.stride = stride

        def forward(self, x):
            identity = x

            out = self.conv1(x)
            out = self.bn1(out)
            out = self.relu(out)

            out = self.conv2(out)
            out = self.bn2(out)
            out = self.relu(out)

            out = self.conv3(out)
            out = self.bn3(out)

            # Project the shortcut only when the shape changes
            if self.downsample is not None:
                identity = self.downsample(x)

            out += identity       # F(x) + x
            out = self.relu(out)  # ReLU after the addition

            return out
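The split between BasicBlock and Bottleneck also explains the numbers in the model names. The sketch below uses the per-stage block counts from the paper's architecture table; the dictionary and function names are made up for illustration. The depth counts conv1, the convolutions on the main path of each block, and the final fc layer; the 1 x 1 downsample convolutions on the shortcuts are not included in the name.

```python
# (block type, number of blocks in layer1..layer4)
CONFIGS = {
    "resnet18": ("BasicBlock", [2, 2, 2, 2]),
    "resnet34": ("BasicBlock", [3, 4, 6, 3]),
    "resnet50": ("Bottleneck", [3, 4, 6, 3]),
    "resnet101": ("Bottleneck", [3, 4, 23, 3]),
    "resnet152": ("Bottleneck", [3, 8, 36, 3]),
}
CONVS_PER_BLOCK = {"BasicBlock": 2, "Bottleneck": 3}
EXPANSION = {"BasicBlock": 1, "Bottleneck": 4}

def depth(name):
    """Weighted layers on the main path: conv1 + block convs + fc."""
    block, layers = CONFIGS[name]
    return 1 + CONVS_PER_BLOCK[block] * sum(layers) + 1

def fc_in_features(name):
    """Input width of the final linear layer: 512 * block.expansion."""
    block, _ = CONFIGS[name]
    return 512 * EXPANSION[block]

for name in CONFIGS:
    print(name, depth(name), fc_in_features(name))
```

ResNet-34 and ResNet-50 share the same [3, 4, 6, 3] layout; swapping the two-layer BasicBlock for the three-layer Bottleneck is what accounts for the extra 16 layers.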


Here is a detailed, very easy-to-follow explanation of the block code: ResNet code explained