TensorFlow tf.matmul(): multiplying (multi-dimensional) matrices

@tf_export("matmul")
def matmul(a,
           b,
           transpose_a=False,
           transpose_b=False,
           adjoint_a=False,
           adjoint_b=False,
           a_is_sparse=False,
           b_is_sparse=False,
           name=None):
  """Multiplies matrix `a` by matrix `b`, producing `a` * `b`.

  The inputs must, following any transpositions, be tensors of rank >= 2
  where the inner 2 dimensions specify valid matrix multiplication arguments,
  and any further outer dimensions match.
  (Here "rank" means the number of dimensions of the tensor, not the
  linear-algebra rank of a matrix.)

  Both matrices must be of the same type. The supported types are:
  `float16`, `float32`, `float64`, `int32`, `complex64`, `complex128`.

  Either matrix can be transposed or adjointed (conjugated and transposed) on
  the fly by setting one of the corresponding flags to `True`. These are `False`
  by default.

  If one or both of the matrices contain a lot of zeros, a more efficient
  multiplication algorithm can be used by setting the corresponding
  `a_is_sparse` or `b_is_sparse` flag to `True`. These are `False` by default.
  This optimization is only available for plain matrices (rank-2 tensors) with
  datatypes `bfloat16` or `float32`.

  For example:

  ```python
  # 2-D tensor `a`
  # [[1, 2, 3],
  #  [4, 5, 6]]
  a = tf.constant([1, 2, 3, 4, 5, 6], shape=[2, 3])

  # 2-D tensor `b`
  # [[ 7,  8],
  #  [ 9, 10],
  #  [11, 12]]
  b = tf.constant([7, 8, 9, 10, 11, 12], shape=[3, 2])

  # `a` * `b`
  # [[ 58,  64],
  #  [139, 154]]
  c = tf.matmul(a, b)

  # 3-D tensor `a`
  # [[[ 1,  2,  3],
  #   [ 4,  5,  6]],
  #  [[ 7,  8,  9],
  #   [10, 11, 12]]]
  a = tf.constant(np.arange(1, 13, dtype=np.int32),
                  shape=[2, 2, 3])

  # 3-D tensor `b`
  # [[[13, 14],
  #   [15, 16],
  #   [17, 18]],
  #  [[19, 20],
  #   [21, 22],
  #   [23, 24]]]
  b = tf.constant(np.arange(13, 25, dtype=np.int32),
                  shape=[2, 3, 2])

  # `a` * `b`
  # [[[ 94, 100],
  #   [229, 244]],
  #  [[508, 532],
  #   [697, 730]]]
  c = tf.matmul(a, b)

  # Since python >= 3.5 the @ operator is supported (see PEP 465).
  # In TensorFlow, it simply calls the `tf.matmul()` function, so the
  # following lines are equivalent:
  d = a @ b @ [[10.], [11.]]
  d = tf.matmul(tf.matmul(a, b), [[10.], [11.]])
  ```

  Args:
    a: `Tensor` of type `float16`, `float32`, `float64`, `int32`, `complex64`,
      `complex128` and rank > 1.
    b: `Tensor` with same type and rank as `a`.
    transpose_a: If `True`, `a` is transposed before multiplication.
    transpose_b: If `True`, `b` is transposed before multiplication.
    adjoint_a: If `True`, `a` is conjugated and transposed before
      multiplication.
    adjoint_b: If `True`, `b` is conjugated and transposed before
      multiplication.
    a_is_sparse: If `True`, `a` is treated as a sparse matrix.
    b_is_sparse: If `True`, `b` is treated as a sparse matrix.
    name: Name for the operation (optional).

  Returns:
    A `Tensor` of the same type as `a` and `b` where each inner-most matrix is
    the product of the corresponding matrices in `a` and `b`, e.g. if all
    transpose or adjoint attributes are `False`:

    `output`[..., i, j] = sum_k (`a`[..., i, k] * `b`[..., k, j]),
    for all indices i, j.

    Note: This is matrix product, not element-wise product.

  Raises:
    ValueError: If transpose_a and adjoint_a, or transpose_b and adjoint_b
      are both set to True.
  """

  with ops.name_scope(name, "MatMul", [a, b]) as name:
    if transpose_a and adjoint_a:
      raise ValueError("Only one of transpose_a and adjoint_a can be True.")
    if transpose_b and adjoint_b:
      raise ValueError("Only one of transpose_b and adjoint_b can be True.")

    if context.executing_eagerly():
      if not isinstance(a, (ops.EagerTensor, _resource_variable_type)):
        a = ops.convert_to_tensor(a, name="a")
      if not isinstance(b, (ops.EagerTensor, _resource_variable_type)):
        b = ops.convert_to_tensor(b, name="b")
    else:
      a = ops.convert_to_tensor(a, name="a")
      b = ops.convert_to_tensor(b, name="b")

    # TODO(apassos) remove _shape_tuple here when it is not needed.
    a_shape = a._shape_tuple()  # pylint: disable=protected-access
    b_shape = b._shape_tuple()  # pylint: disable=protected-access
    if (not a_is_sparse and
        not b_is_sparse) and ((a_shape is None or len(a_shape) > 2) and
                              (b_shape is None or len(b_shape) > 2)):
      # BatchMatmul does not support transpose, so we conjugate the matrix and
      # use adjoint instead. Conj() is a noop for real matrices.
      if transpose_a:
        a = conj(a)
        adjoint_a = True
      if transpose_b:
        b = conj(b)
        adjoint_b = True
      return gen_math_ops.batch_mat_mul(
          a, b, adj_x=adjoint_a, adj_y=adjoint_b, name=name)

    # Neither matmul nor sparse_matmul support adjoint, so we conjugate
    # the matrix and use transpose instead. Conj() is a noop for real
    # matrices.
    if adjoint_a:
      a = conj(a)
      transpose_a = True
    if adjoint_b:
      b = conj(b)
      transpose_b = True

    use_sparse_matmul = False
    if a_is_sparse or b_is_sparse:
      sparse_matmul_types = [dtypes.bfloat16, dtypes.float32]
      use_sparse_matmul = (
          a.dtype in sparse_matmul_types and b.dtype in sparse_matmul_types)
    if ((a.dtype == dtypes.bfloat16 or b.dtype == dtypes.bfloat16) and
        a.dtype != b.dtype):
      # matmul currently doesn't handle mixed-precision inputs.
      use_sparse_matmul = True
    if use_sparse_matmul:
      ret = sparse_matmul(
          a,
          b,
          transpose_a=transpose_a,
          transpose_b=transpose_b,
          a_is_sparse=a_is_sparse,
          b_is_sparse=b_is_sparse,
          name=name)
      # sparse_matmul always returns float32, even with
      # bfloat16 inputs. This prevents us from configuring bfloat16 training.
      # casting to bfloat16 also matches non-sparse matmul behavior better.
      if a.dtype == dtypes.bfloat16 and b.dtype == dtypes.bfloat16:
        ret = cast(ret, dtypes.bfloat16)
      return ret
    else:
      return gen_math_ops.mat_mul(
          a, b, transpose_a=transpose_a, transpose_b=transpose_b, name=name)
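The flag handling in the source above relies on two identities: an adjoint is a conjugate followed by a transpose, and a transpose is a conjugate followed by an adjoint. The following is a minimal NumPy sketch of that logic, not TensorFlow's implementation. `matmul_ref` is a hypothetical helper name, `np.matmul` stands in for the underlying kernels, and the sparse flags are left out.

```python
import numpy as np


def matmul_ref(a, b, transpose_a=False, transpose_b=False,
               adjoint_a=False, adjoint_b=False):
    """Hypothetical NumPy stand-in that mirrors the flag checks shown above."""
    if transpose_a and adjoint_a:
        raise ValueError("Only one of transpose_a and adjoint_a can be True.")
    if transpose_b and adjoint_b:
        raise ValueError("Only one of transpose_b and adjoint_b can be True.")
    a = np.asarray(a)
    b = np.asarray(b)
    # An adjoint is a conjugate followed by a transpose of the last two axes.
    if adjoint_a:
        a = np.conj(a)
        transpose_a = True
    if adjoint_b:
        b = np.conj(b)
        transpose_b = True
    if transpose_a:
        a = np.swapaxes(a, -1, -2)
    if transpose_b:
        b = np.swapaxes(b, -1, -2)
    return np.matmul(a, b)


a = np.array([[1 + 2j, 3 - 1j], [0 + 1j, 2 + 0j]])
b = np.array([[1 + 0j, 0 + 1j], [2 - 1j, 1 + 1j]])

# For complex inputs, transpose and adjoint give different products.
plain_t = matmul_ref(a, b, transpose_a=True)
adj = matmul_ref(a, b, adjoint_a=True)
assert np.allclose(plain_t, a.T @ b)
assert np.allclose(adj, a.conj().T @ b)
assert not np.allclose(plain_t, adj)

# For real inputs, the conjugate is a no-op, so both flags agree.
r = np.arange(6.0).reshape(2, 3)
assert np.allclose(matmul_ref(r, r, transpose_b=True),
                   matmul_ref(r, r, adjoint_b=True))
```

Setting both `transpose_a` and `adjoint_a` (or both `b` flags) raises `ValueError` in this sketch, matching the check at the top of the function body.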

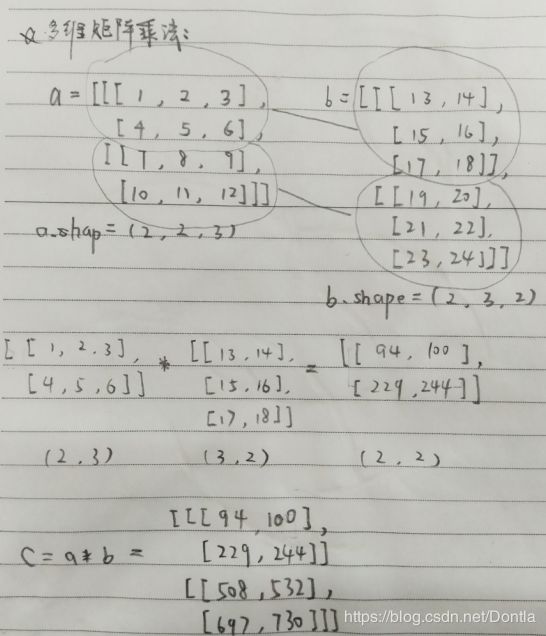

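The result shows that when two multi-dimensional matrices are multiplied, all of their dimensions other than the last two must be identical; otherwise the matrices could not be paired one-to-one.

For example, if `a` has shape (2, 2, 3) and `b` has shape (2, 3, 2), the leading dimension 2 must be the same in both, and the last two dimensions must satisfy the usual matrix multiplication rule: if one is (i, j), the other must be (j, k).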
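The one-to-one pairing described above can be sketched with NumPy, whose `np.matmul` follows the same rule when the leading dimensions are equal. This is an illustration under that assumption rather than TensorFlow code; it reuses the shapes (2, 2, 3) and (2, 3, 2) from the example.

```python
import numpy as np

a = np.arange(1, 13, dtype=np.int32).reshape(2, 2, 3)
b = np.arange(13, 25, dtype=np.int32).reshape(2, 3, 2)

# Batched product: the leading dimension (2) indexes independent matrix pairs.
c = np.matmul(a, b)

# Equivalent explicit pairing: the n-th matrix of `a` times the n-th matrix of `b`.
c_loop = np.stack([a[n] @ b[n] for n in range(a.shape[0])])

# The docstring formula: output[..., i, j] = sum_k a[..., i, k] * b[..., k, j].
c_sum = np.einsum("...ik,...kj->...ij", a, b)

assert np.array_equal(c, c_loop)
assert np.array_equal(c, c_sum)
print(c.shape)  # (2, 2, 2)
```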

After the multiplication, the leading dimensions are unchanged and the last two become (i, k), that is, (…, i, j) * (…, j, k) = (…, i, k).
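The shape rule can be written down as a small pure-Python check. `matmul_output_shape` is a hypothetical helper introduced only for illustration; it encodes the rule exactly as stated here (identical leading dimensions, matching inner dimension) and does not call TensorFlow.

```python
def matmul_output_shape(a_shape, b_shape):
    """Hypothetical helper: result shape of (..., i, j) * (..., j, k)."""
    if len(a_shape) < 2 or len(b_shape) < 2:
        raise ValueError("Both inputs must have rank >= 2.")
    *a_batch, i, j_a = a_shape
    *b_batch, j_b, k = b_shape
    if a_batch != b_batch:
        raise ValueError(f"Leading dimensions differ: {a_batch} vs {b_batch}")
    if j_a != j_b:
        raise ValueError(f"Inner dimensions differ: {j_a} vs {j_b}")
    # Leading dimensions are kept; the last two become (i, k).
    return (*a_batch, i, k)


print(matmul_output_shape((2, 2, 3), (2, 3, 2)))        # (2, 2, 2)
print(matmul_output_shape((5, 4, 2, 3), (5, 4, 3, 7)))  # (5, 4, 2, 7)
```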

Reference: [tensorflow] 多维矩阵的乘法 (Multiplication of multi-dimensional matrices)