Derivation of the Support Vector Machine (SVM)

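1. What is a support vector machine?

- A support vector machine (SVM) is a binary classification model. Its basic form is the linear classifier with the largest margin defined on the feature space.

- The learning strategy of an SVM is margin maximization.

- The models covered by SVM learning are: the linearly separable SVM (hard-margin SVM), the linear SVM (soft-margin SVM), and the nonlinear SVM (kernel trick).

- The resulting optimization problem can be solved with the sequential minimal optimization (SMO) algorithm.

The SVM derivation is very important and comes up often in interviews, so be sure to work through it by hand from start to finish.

For that purpose it is enough to derive the learning algorithm of the linearly separable SVM; the results to produce are the separating hyperplane and the classification decision function.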

2. Linearly separable support vector machine

Training dataset

$$T = \left\{ \left( \mathbf{x}_{1}, y_{1} \right), \left( \mathbf{x}_{2}, y_{2} \right), \cdots, \left( \mathbf{x}_{N}, y_{N} \right) \right\}$$

where $\mathbf{x}_{i} \in \mathcal{X} = \mathbb{R}^{n}$, $y_{i} \in \mathcal{Y} = \left\{ +1, -1 \right\}$, $i = 1, 2, \cdots, N$. Here $\mathbf{x}_{i}$ is the $i$-th feature vector (instance) and $y_{i}$ is the class label of $\mathbf{x}_{i}$. When $y_{i}=+1$, $\mathbf{x}_{i}$ is called a positive example; when $y_{i}=-1$, $\mathbf{x}_{i}$ is called a negative example. The pair $\left( \mathbf{x}_{i}, y_{i} \right)$ is called a sample point.

Linearly separable SVM: given a linearly separable training dataset, the separating hyperplane is learned by maximizing the margin or, equivalently, by solving the corresponding convex quadratic programming problem. In the sample space the separating hyperplane can be written as $w^{\mathsf{T}}x+b=0$ and is denoted $(w,b)$.

This hyperplane, together with the corresponding classification decision function

$$f \left( x \right) = \operatorname{sign} \left( w^\mathsf{T} x + b \right)$$

is called the linearly separable support vector machine. Here $w$ and $b$ are the model parameters: $w \in \mathbb{R}^{n}$ is the weight vector, $b \in \mathbb{R}$ is the bias, $w \cdot x = w^\mathsf{T} x$ is the inner product of $w$ and $x$, and $\operatorname{sign}$ is the sign function.

For the hyperplane $(w,b)$ and a sample point $(x_i,y_i)$, the functional margin is $\hat{\gamma}_i=y_i\left(w\cdot x_i+b\right)$ and the geometric margin is $\gamma_i=y_i\left(\frac{w}{\|w \|}\cdot x_i + \frac{b}{\|w \|} \right)$. The margins with respect to the whole training dataset are the minima over all sample points, $\hat{\gamma} = \min_i \hat{\gamma}_i$ and $\gamma = \min_i \gamma_i$, and the two are related by $\gamma = \frac {\hat{\gamma}} {\|w \|}$.
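The two margins can be computed directly from their definitions. The following is a minimal sketch, assuming NumPy; the three data points and the hyperplane $(w, b)$ are hypothetical values chosen for illustration, not taken from the text.

```python
import numpy as np

def functional_margins(w, b, X, y):
    """Functional margin y_i (w . x_i + b) of every sample point."""
    return y * (X @ w + b)

def geometric_margins(w, b, X, y):
    """Geometric margin: the functional margin divided by ||w||."""
    return functional_margins(w, b, X, y) / np.linalg.norm(w)

# Toy data (assumed for illustration): two positive points and one negative point.
X = np.array([[3.0, 3.0], [4.0, 3.0], [1.0, 1.0]])
y = np.array([1.0, 1.0, -1.0])
# A hand-picked hyperplane (assumption): 0.5*x1 + 0.5*x2 - 2 = 0.
w = np.array([0.5, 0.5])
b = -2.0

gamma_hat = functional_margins(w, b, X, y)
gamma = geometric_margins(w, b, X, y)
print("functional margins:", gamma_hat)
print("geometric margins: ", gamma)
print("margin of the dataset:", gamma.min())
```

Multiplying `w` and `b` by the same positive constant changes `gamma_hat` but leaves `gamma` unchanged, which is why the geometric margin is the quantity to maximize.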

The basic idea of the SVM is to find the separating hyperplane that classifies the training dataset correctly and has the largest geometric margin. Formally:

$$\begin{aligned} &\max_{w,b} \quad \gamma \\ &\ \text{s.t.} \quad y_i\left(\frac {w}{\|w \|}\cdot x_i +\frac {b}{\|w \|} \right) \geqslant \gamma, \quad i=1,2,\cdots,N \end{aligned}$$

That is, we want to maximize the geometric margin $\gamma$ of the hyperplane $(w,b)$ with respect to the training dataset; the constraint states that the geometric margin of the hyperplane with respect to every training sample point is at least $\gamma$. Using the relation $\gamma = \hat{\gamma} / \|w\|$ between the functional and geometric margins, the problem becomes:

$$\begin{aligned} &\max_{w,b} \quad \frac{\hat{\gamma}} {\|w \|}\\ &\ \text{s.t.} \quad y_i\left(w\cdot x_i +b \right) \geqslant \hat{\gamma}, \quad i=1,2,\cdots,N \end{aligned}$$

Suppose $w$ and $b$ are rescaled to $\lambda w$ and $\lambda b$ with $\lambda > 0$. The functional margin then becomes $\lambda\hat{\gamma}$, but this change affects neither the inequality constraints nor the objective of the optimization problem above, so the value of $\hat{\gamma}$ can be chosen freely. Setting $\hat{\gamma}=1$ simplifies the derivation:

$$\begin{aligned} &\max_{w,b} \quad \frac{1} {\|w \|}\\ &\ \text{s.t.} \quad y_i\left(w\cdot x_i +b \right) \geqslant 1, \quad i=1,2,\cdots,N \end{aligned}$$
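The rescaling argument can be checked in one line each for the constraint and the objective. A short worked step, assuming $\lambda > 0$:

```latex
\begin{aligned}
y_i\left(\lambda w \cdot x_i + \lambda b\right) \geqslant \lambda\hat{\gamma}
  &\iff y_i\left(w \cdot x_i + b\right) \geqslant \hat{\gamma}, \\
\frac{\lambda\hat{\gamma}}{\|\lambda w\|}
  &= \frac{\lambda\hat{\gamma}}{\lambda\|w\|} = \frac{\hat{\gamma}}{\|w\|}.
\end{aligned}
```

Both the set of feasible hyperplanes and the objective value are unchanged by the rescaling, so nothing is lost by fixing $\hat{\gamma} = 1$.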

Maximizing $\frac {1}{\|w \|}$ is equivalent to minimizing $\frac {1}{2}\|w \|^2$ (the square and the factor $\frac{1}{2}$ make the later differentiation convenient), which gives the optimization problem of the linearly separable SVM:

$$\begin{aligned} &\min_{w,b} \quad \frac{1}{2} \|w \|^2\\ &\ \text{s.t.} \quad y_i\left(w\cdot x_i +b \right) -1 \geqslant 0, \quad i=1,2,\cdots,N \end{aligned}$$

From this formulation, the (hard-margin) support vectors are the sample points of the training dataset $T$ that lie closest to the separating hyperplane, i.e. the sample points for which the constraint holds with equality:

$$y_i\left(w\cdot x_i +b \right) -1 = 0$$

For positive examples with $y_{i} = +1$, the support vectors lie on the hyperplane

$$H_{1}: w \cdot x + b = 1$$

For negative examples with $y_{i} = -1$, the support vectors lie on the hyperplane

$$H_{2}: w \cdot x + b = -1$$

$H_{1}$ and $H_{2}$ are called the margin boundaries.

The distance between $H_{1}$ and $H_{2}$ is called the margin, and $|H_{1}H_{2}| = \dfrac{1}{\| w \|} + \dfrac{1}{\| w \|} = \dfrac{2}{\| w \|}$.
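Given a hyperplane that satisfies the constraints, the support vectors and the margin width follow directly from these definitions. A minimal sketch, assuming NumPy; the data points and the values of `w` and `b` are hypothetical and chosen for illustration.

```python
import numpy as np

# Toy data and hyperplane (assumed for illustration, not from the text above).
X = np.array([[3.0, 3.0], [4.0, 3.0], [1.0, 1.0]])
y = np.array([1.0, 1.0, -1.0])
w = np.array([0.5, 0.5])
b = -2.0

# Left-hand side of the constraint y_i (w . x_i + b) - 1 >= 0.
constraint_values = y * (X @ w + b) - 1.0

# Support vectors are the points where the constraint holds with equality.
is_support = np.isclose(constraint_values, 0.0)
support_vectors = X[is_support]

# Distance between the margin boundaries H1 and H2.
margin_width = 2.0 / np.linalg.norm(w)

print("constraint values:", constraint_values)
print("support vectors:")
print(support_vectors)
print("margin width:", margin_width)
```

In this example the first and third points sit on $H_1$ and $H_2$ respectively, while the second point lies strictly outside the margin.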

To solve this optimization problem, we can apply Lagrange duality and obtain the optimal solution of the primal problem by solving its dual problem. This is the dual algorithm of the linearly separable SVM. It has two advantages: the dual problem is often easier to solve, and it introduces kernel functions naturally, which extends the method to nonlinear classification. First, recall the generalized Lagrangian.

Suppose $f(x), c_i(x), h_j(x)$ are continuously differentiable functions defined on $\mathbf{R}^n$, and consider the constrained optimization problem

$$\begin{aligned}&\min_{x \in \mathbf{R}^n} \quad f(x) \\ &\ \text{s.t.} \quad c_i(x) \leqslant 0, \quad i=1,2,\cdots,k \\ &\phantom{\ \text{s.t.} \quad} h_j(x)=0, \quad j=1,2,\cdots,l \end{aligned}$$

This constrained optimization problem is called the primal optimization problem, or simply the primal problem. We first introduce the generalized Lagrangian:

$$L\left(x,\alpha,\beta\right)= f(x) +\sum_{i=1}^{k} \alpha_i c_i (x) +\sum_{j=1}^{l} \beta_j h_j(x)$$

Here $x=(x^{(1)},x^{(2)},\cdots,x^{(n)})^\mathsf{T}\in \mathbf{R}^n$, and $\alpha_i , \beta_j$ are Lagrange multipliers with $\alpha_i \geqslant 0$.
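A one-variable example may make the construction concrete. The problem below is an illustration chosen here and is not part of the original derivation: minimize $f(x) = x^2$ subject to $c(x) = 1 - x \leqslant 0$.

```latex
\begin{aligned}
L(x,\alpha) &= x^2 + \alpha\,(1 - x), \qquad \alpha \geqslant 0, \\
\frac{\partial L}{\partial x} = 2x - \alpha = 0
  &\;\Longrightarrow\; x = \frac{\alpha}{2}, \\
\min_{x} L(x,\alpha) &= \frac{\alpha^2}{4} + \alpha - \frac{\alpha^2}{2}
  = \alpha - \frac{\alpha^2}{4}, \\
\max_{\alpha \geqslant 0}\left(\alpha - \frac{\alpha^2}{4}\right)
  &\;\Longrightarrow\; \alpha^{*} = 2,\quad x^{*} = 1,\quad f(x^{*}) = 1 .
\end{aligned}
```

The value of the max-min problem equals the primal optimum $f(1) = 1$, and the constraint is active at $x^{*}$ with $\alpha^{*} > 0$. The SVM derivation follows the same pattern: minimize over the primal variables first, then maximize over the multipliers.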

Following the generalized Lagrangian above, we construct the Lagrangian for the optimization problem of the linearly separable SVM by introducing a Lagrange multiplier $\alpha_i \geqslant 0$, $i=1,2,\cdots,N$, for each inequality constraint:

$$L(w,b,\alpha) = \frac {1}{2} \|w \|^2 - \sum_{i=1}^{N}\alpha_i y_i (w\cdot x_i + b) + \sum_{i=1}^{N} \alpha_i$$

By Lagrange duality, the dual of the primal problem is the max-min problem

$$\max_{\alpha} \min_{w,b} L(w,b,\alpha)$$

To solve the dual problem, first minimize $L(w,b,\alpha)$ with respect to $w,b$, then maximize the result with respect to $\alpha$.

Step 1: find $\min_{w,b} L(w,b,\alpha)$. Take the partial derivatives of the Lagrangian $L(w,b,\alpha)$ with respect to $w$ and $b$ and set them to 0:

$$\nabla_w L(w,b,\alpha)=w-\sum_{i=1}^{N}\alpha_i y_i x_i =0$$

$$\nabla_b L(w,b,\alpha)=-\sum_{i=1}^{N}\alpha_i y_i=0$$

which gives

$$\begin{aligned}w &= \sum_{i=1}^{N} \alpha_i y_i x_i \\ 0&=\sum_{i=1}^{N} \alpha_i y_i \end{aligned}$$

Substituting these results back into the Lagrangian gives:

$$\begin{aligned}L(w,b,\alpha)&=\frac {1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_i \alpha_j y_i y_j (x_i \cdot x_j ) - \sum_{i=1}^{N} \alpha_i y_i \left(\left(\sum_{j=1}^{N} \alpha_j y_j x_j \right)\cdot x_i +b \right) +\sum_{i=1}^{N} \alpha_i \\ &=-\frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_i \alpha_j y_i y_j (x_i \cdot x_j) + \sum_{i=1}^{N}\alpha_i \end{aligned}$$

that is,

$$\min_{w,b} \ L(w,b,\alpha)=-\frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_i \alpha_j y_i y_j (x_i \cdot x_j) + \sum_{i=1}^{N}\alpha_i$$
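The substitution can be sanity-checked numerically: for any $\alpha \geqslant 0$ with $\sum_i \alpha_i y_i = 0$, plugging $w = \sum_i \alpha_i y_i x_i$ into the Lagrangian should reproduce the expression above, regardless of $b$. A minimal sketch, assuming NumPy; the random data, the labels and the value of `b` are arbitrary choices made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Random toy data (assumed for illustration).
N, n = 6, 3
X = rng.normal(size=(N, n))
y = np.array([1.0, 1.0, 1.0, -1.0, -1.0, -1.0])

# Any alpha >= 0 with sum_i alpha_i y_i = 0 will do for the identity check:
# rescale the negative-class multipliers so both classes have the same total.
alpha = rng.uniform(0.1, 1.0, size=N)
alpha[y < 0] *= alpha[y > 0].sum() / alpha[y < 0].sum()

def lagrangian(w, b, alpha):
    """L(w, b, alpha) = 1/2 ||w||^2 - sum_i alpha_i y_i (w . x_i + b) + sum_i alpha_i."""
    return 0.5 * w @ w - np.sum(alpha * y * (X @ w + b)) + alpha.sum()

def dual_objective(alpha):
    """-1/2 sum_ij alpha_i alpha_j y_i y_j (x_i . x_j) + sum_i alpha_i."""
    Q = np.outer(y, y) * (X @ X.T)
    return -0.5 * alpha @ Q @ alpha + alpha.sum()

# Stationarity in w gives w = sum_i alpha_i y_i x_i.
w = (alpha * y) @ X
b = 1.23  # arbitrary: the b term vanishes because sum_i alpha_i y_i = 0

print(lagrangian(w, b, alpha), dual_objective(alpha))
```

The two printed numbers should agree up to floating-point rounding, and changing `b` should not change the first one.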

Step 2: maximize $\min_{w,b} L(w,b,\alpha)$ with respect to $\alpha$, which is the dual problem:

$$\begin{aligned} &\max_{\alpha} \quad -\frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_i \alpha_j y_i y_j (x_i \cdot x_j) + \sum_{i=1}^{N}\alpha_i \\ &\ \text{s.t.} \quad \sum_{i=1}^N \alpha_i y_i=0 \\ &\phantom{\ \text{s.t.} \quad} \alpha_i \geqslant 0, \quad i=1,2,\cdots,N\end{aligned}$$

Turning the maximization of the objective into a minimization gives the equivalent dual optimization problem:

$$\begin{aligned} &\min_{\alpha} \quad \frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_i \alpha_j y_i y_j (x_i \cdot x_j) - \sum_{i=1}^{N}\alpha_i \\ &\ \text{s.t.} \quad \sum_{i=1}^N \alpha_i y_i=0 \\ &\phantom{\ \text{s.t.} \quad} \alpha_i \geqslant 0, \quad i=1,2,\cdots,N\end{aligned}$$
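The dual is a small quadratic program, so for toy data it can be handed to a general-purpose solver. The sketch below is not the SMO algorithm mentioned in this article; it assumes SciPy is available and uses `scipy.optimize.minimize` with the SLSQP method as a stand-in. The three data points are an assumption made for illustration. Solving this instance by hand gives $\alpha = (1/4, 0, 1/4)$, so the printed values should be close to that.

```python
import numpy as np
from scipy.optimize import minimize

# Toy data (assumed for illustration): two positive points and one negative point.
X = np.array([[3.0, 3.0], [4.0, 3.0], [1.0, 1.0]])
y = np.array([1.0, 1.0, -1.0])

# Q_ij = y_i y_j (x_i . x_j)
Q = np.outer(y, y) * (X @ X.T)

def objective(alpha):
    """Dual objective in minimization form."""
    return 0.5 * alpha @ Q @ alpha - alpha.sum()

def gradient(alpha):
    """Gradient of the dual objective with respect to alpha."""
    return Q @ alpha - np.ones_like(alpha)

result = minimize(
    objective,
    x0=np.zeros(len(y)),
    jac=gradient,
    method="SLSQP",
    bounds=[(0.0, None)] * len(y),                         # alpha_i >= 0
    constraints=[{"type": "eq", "fun": lambda a: a @ y}],  # sum_i alpha_i y_i = 0
    options={"ftol": 1e-12, "maxiter": 200},
)
alpha = result.x
w = (alpha * y) @ X  # w = sum_i alpha_i y_i x_i

print("alpha:", np.round(alpha, 4))
print("w:", np.round(w, 4))
```

A generic solver like this is only practical for small $N$, because the matrix `Q` has $N^2$ entries; that scaling is the motivation for specialized methods such as SMO.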

Since $w$ is given by

$$w = \sum_{i=1}^{N} \alpha_i y_i x_i$$

the resulting model is

$$\begin{aligned} f(x)&=w^\mathsf{T} x + b \\ &=\sum_{i=1}^{N} \alpha_i y_i x_i^\mathsf{T} x +b \end{aligned}$$

Each $\alpha_i$ is a Lagrange multiplier that corresponds to one training sample $(x_i,y_i)$. Because the primal problem has the inequality constraints $y_i\left(w\cdot x_i +b \right) -1 \geqslant 0$, $i=1,2,\cdots,N$, the solution must satisfy the KKT conditions:

$$\left\{ \begin{aligned} &\alpha_i \geqslant 0 \\ &y_i f(x_i) -1 \geqslant 0 \\ &\alpha_i\left(y_i f(x_i) -1\right) = 0\end{aligned} \right.$$

Summary: for every training sample $(x_i,y_i)$, either $\alpha_i = 0$ or $y_i f(x_i) =1$. If $\alpha_i = 0$, the sample does not appear in the sum that defines $f(x)$; if $\alpha_i > 0$, the sample lies on the margin boundary. So the only sample points that affect the final model lie on the maximum-margin boundary. These are the support vectors; all other sample points have no effect on the model.
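The KKT conditions also give a way to recover $b$, which the dual problem does not determine directly: for any $j$ with $\alpha_j > 0$, complementary slackness gives $y_j f(x_j) = 1$, and since $y_j^2 = 1$ this means $b = y_j - w \cdot x_j$. A minimal sketch, assuming NumPy; the data and the dual solution `alpha` are hypothetical values chosen for illustration.

```python
import numpy as np

# Toy data and a dual solution (both assumed for illustration).
X = np.array([[3.0, 3.0], [4.0, 3.0], [1.0, 1.0]])
y = np.array([1.0, 1.0, -1.0])
alpha = np.array([0.25, 0.0, 0.25])

w = (alpha * y) @ X  # w = sum_i alpha_i y_i x_i

# Pick the first support vector (alpha_j > 0) and solve y_j (w . x_j + b) = 1 for b.
j = int(np.argmax(alpha > 0))
b = y[j] - X[j] @ w

def f(x):
    """Model f(x) = sum_i alpha_i y_i x_i^T x + b."""
    return (alpha * y) @ (X @ x) + b

margins = y * np.array([f(x) for x in X])

# The three KKT conditions from the text (with a small numerical tolerance).
dual_feasible = bool(np.all(alpha >= 0))
primal_feasible = bool(np.all(margins - 1 >= -1e-9))
complementary = bool(np.allclose(alpha * (margins - 1), 0.0))

print("w =", w, "b =", b)
print("KKT satisfied:", dual_feasible and primal_feasible and complementary)
print("predicted class of (0, 0):", np.sign(f(np.array([0.0, 0.0]))))
```

The second sample has $\alpha_2 = 0$, so removing it from the sum changes nothing in `f`; only the two support vectors determine the model.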

3. Linear support vector machine

Linear SVM (soft-margin SVM): given a training dataset that is not linearly separable, solving the convex quadratic programming problem

$$\begin{aligned} & \min_{\mathbf{w},b,\xi} \quad \dfrac{1}{2} \| \mathbf{w} \|^{2} + C \sum_{i=1}^{N} \xi_{i} \\ & \ \text{s.t.} \quad y_{i} \left( \mathbf{w} \cdot \mathbf{x}_{i} + b \right) \geqslant 1 - \xi_{i} \\ & \phantom{\ \text{s.t.} \quad} \xi_{i} \geqslant 0, \quad i=1,2, \cdots, N \end{aligned}$$

where $\xi_i$ are slack variables and $C > 0$ is the penalty parameter, yields the separating hyperplane

$$\mathbf{w}^\mathsf{T} \mathbf{x} + b = 0$$

and the corresponding classification decision function

$$f \left( \mathbf{x} \right) = \operatorname{sign} \left( \mathbf{w}^\mathsf{T} \mathbf{x} + b \right)$$

which together are called the linear support vector machine.
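For a fixed $(\mathbf{w}, b)$, the smallest slack that satisfies both constraints is $\xi_i = \max\left(0,\ 1 - y_i(\mathbf{w} \cdot \mathbf{x}_i + b)\right)$, so the soft-margin objective can be evaluated directly. A minimal sketch, assuming NumPy; the data, the hyperplane and the value of `C` are hypothetical and chosen for illustration.

```python
import numpy as np

def soft_margin_objective(w, b, X, y, C):
    """Return (objective, xi) for the soft-margin primal at a given (w, b).

    For fixed (w, b) the smallest feasible slack is
    xi_i = max(0, 1 - y_i (w . x_i + b)).
    """
    xi = np.maximum(0.0, 1.0 - y * (X @ w + b))
    return 0.5 * w @ w + C * xi.sum(), xi

# Toy data (assumed): the last positive point makes the data non-separable,
# because the negative point (1, 1) lies between it and the other positives.
X = np.array([[3.0, 3.0], [4.0, 3.0], [1.0, 1.0], [0.5, 0.5]])
y = np.array([1.0, 1.0, -1.0, 1.0])
w = np.array([0.5, 0.5])  # hand-picked hyperplane (assumption)
b = -2.0

value, xi = soft_margin_objective(w, b, X, y, C=1.0)
print("slack variables:", xi)
print("objective:", value)
```

Only the misplaced point needs a nonzero slack here; a larger `C` penalizes that slack more heavily and pushes the optimizer toward fewer margin violations at the cost of a narrower margin.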

Solving the optimization problem:

- Introduce Lagrange multipliers $\alpha_{i} \geqslant 0, \mu_{i} \geqslant 0, i = 1, 2, \cdots, N$ and construct the Lagrangian

$$\begin{aligned} L \left( \mathbf{w}, b, \xi, \alpha, \mu \right) &= \dfrac{1}{2} \| \mathbf{w} \|^{2} + C \sum_{i=1}^{N} \xi_{i} + \sum_{i=1}^{N} \alpha_{i} \left[- y_{i} \left( \mathbf{w} \cdot \mathbf{x}_{i} + b \right) + 1 - \xi_{i} \right] + \sum_{i=1}^{N} \mu_{i} \left( -\xi_{i} \right) \\ & = \dfrac{1}{2} \| \mathbf{w} \|^{2} + C \sum_{i=1}^{N} \xi_{i} - \sum_{i=1}^{N} \alpha_{i} \left[ y_{i} \left( \mathbf{w} \cdot \mathbf{x}_{i} + b \right) -1 + \xi_{i} \right] - \sum_{i=1}^{N} \mu_{i} \xi_{i} \end{aligned}$$

where $\alpha = \left( \alpha_{1}, \alpha_{2}, \cdots, \alpha_{N} \right)^\mathsf{T}$ and $\mu = \left( \mu_{1}, \mu_{2}, \cdots, \mu_{N} \right)^\mathsf{T}$ are the vectors of Lagrange multipliers.

- Find $\min_{\mathbf{w},b,\xi}L \left( \mathbf{w}, b, \xi, \alpha, \mu \right)$:

Setting

$$\begin{aligned} & \nabla_{\mathbf{w}} L \left( \mathbf{w}, b, \xi, \alpha, \mu \right) = \mathbf{w} - \sum_{i=1}^{N} \alpha_{i} y_{i} \mathbf{x}_{i} = 0 \\ & \nabla_{b} L \left( \mathbf{w}, b, \xi, \alpha, \mu \right) = -\sum_{i=1}^{N} \alpha_{i} y_{i} = 0 \\ & \nabla_{\xi_{i}} L \left( \mathbf{w}, b, \xi, \alpha, \mu \right) = C - \alpha_{i} - \mu_{i} = 0 \end{aligned}$$

gives

$$\begin{aligned} & \mathbf{w} = \sum_{i=1}^{N} \alpha_{i} y_{i} \mathbf{x}_{i} \\ & \sum_{i=1}^{N} \alpha_{i} y_{i} = 0 \\ & C - \alpha_{i} - \mu_{i} = 0\end{aligned}$$

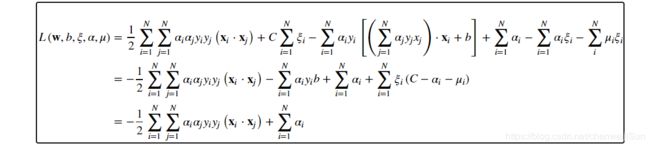

代入拉格朗日函数,得

L ( w , b , ξ , α , μ ) = 1 2 ∑ i = 1 N ∑ j = 1 N α i α j y i y j ( x i ⋅ x j ) + C ∑ i = 1 N ξ i − ∑ i = 1 N α i y i [ ( ∑ j = 1 N α j y j x j ) ⋅ x i + b ] + ∑ i = 1 N α i − ∑ i = 1 N α i ξ i − ∑ i N μ i ξ i = − 1 2 ∑ i = 1 N ∑ j = 1 N α i α j y i y j ( x i ⋅ x j ) − ∑ i = 1 N α i y i b + ∑ i = 1 N α i + ∑ i = 1 N ξ i ( C − α i − μ i ) = − 1 2 ∑ i = 1 N ∑ j = 1 N α i α j y i y j ( x i ⋅ x j ) + ∑ i = 1 N α i \begin{aligned} \\ L \left( \mathbf{w}, b, \xi, \alpha, \mu \right) &= \dfrac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_{i} \alpha_{j} y_{i} y_{j} \left( \mathbf{x}_{i} \cdot \mathbf{x}_{j} \right) + C \sum_{i=1}^{N} \xi_{i} - \sum_{i=1}^{N} \alpha_{i} y_{i} \left[ \left( \sum_{j=1}^{N} \alpha_{j} y_{j} x_{j} \right) \cdot \mathbf{x}_{i} + b \right] + \sum_{i=1}^{N} \alpha_{i} - \sum_{i=1}^{N} \alpha_{i} \xi_{i} - \sum_{i}^{N} \mu_{i} \xi_{i} \\ & = - \dfrac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_{i} \alpha_{j} y_{i} y_{j} \left( \mathbf{x}_{i} \cdot \mathbf{x}_{j} \right) - \sum_{i=1}^{N} \alpha_{i} y_{i} b + \sum_{i=1}^{N} \alpha_{i} + \sum_{i=1}^{N} \xi_{i} \left( C - \alpha_{i} - \mu_{i} \right) \\ & = - \dfrac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_{i} \alpha_{j} y_{i} y_{j} \left( \mathbf{x}_{i} \cdot \mathbf{x}_{j} \right) + \sum_{i=1}^{N} \alpha_{i} \end{aligned} L(w,b,ξ,α,μ)=21i=1∑Nj=1∑Nαiαjyiyj(xi⋅xj)+Ci=1∑Nξi−i=1∑Nαiyi[(j=1∑Nαjyjxj)⋅xi+b]+i=1∑Nαi−i=1∑Nαiξi−i∑Nμiξi=−21i=1∑Nj=1∑Nαiαjyiyj(xi⋅xj)−i=1∑Nαiyib+i=1∑Nαi+i=1∑Nξi(C−αi−μi)=−21i=1∑Nj=1∑Nαiαjyiyj(xi⋅xj)+i=1∑Nαi

如果显示不全可以看下面这张图:

that is,

$$\min_{\mathbf{w},b,\xi}L \left( \mathbf{w}, b, \xi, \alpha, \mu \right) = - \dfrac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_{i} \alpha_{j} y_{i} y_{j} \left( \mathbf{x}_{i} \cdot \mathbf{x}_{j} \right) + \sum_{i=1}^{N} \alpha_{i}$$

- Find $\max_{\alpha} \min_{\mathbf{w},b, \xi}L \left( \mathbf{w}, b, \xi, \alpha, \mu \right)$:

$$\begin{aligned} & \max_{\alpha} \quad - \dfrac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_{i} \alpha_{j} y_{i} y_{j} \left( \mathbf{x}_{i} \cdot \mathbf{x}_{j} \right) + \sum_{i=1}^{N} \alpha_{i} \\ & \ \text{s.t.} \quad \sum_{i=1}^{N} \alpha_{i} y_{i} = 0 \\ & \phantom{\ \text{s.t.} \quad} C - \alpha_{i} - \mu_{i} = 0 \\ & \phantom{\ \text{s.t.} \quad} \alpha_{i} \geqslant 0 \\ & \phantom{\ \text{s.t.} \quad} \mu_{i} \geqslant 0, \quad i=1,2, \cdots, N \end{aligned}$$

Eliminating $\mu_i$ through $\mu_i = C - \alpha_i \geqslant 0$ turns the last three constraints into $0 \leqslant \alpha_i \leqslant C$, which gives the equivalent problem

$$\begin{aligned} & \min_{\alpha} \quad \dfrac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_{i} \alpha_{j} y_{i} y_{j} \left( \mathbf{x}_{i} \cdot \mathbf{x}_{j} \right) - \sum_{i=1}^{N} \alpha_{i} \\ & \ \text{s.t.} \quad \sum_{i=1}^{N} \alpha_{i} y_{i} = 0 \\ & \phantom{\ \text{s.t.} \quad} 0 \leqslant \alpha_{i} \leqslant C , \quad i=1,2, \cdots, N \end{aligned}$$
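Compared with the hard-margin dual, the only change is the upper bound $C$ on each multiplier. The sketch below hands the problem to a general-purpose solver; it is not the SMO algorithm, and it assumes SciPy is available (`scipy.optimize.minimize` with the SLSQP method). The function name `solve_soft_margin_dual`, the data and the value of `C` are hypothetical and chosen for illustration.

```python
import numpy as np
from scipy.optimize import minimize

def solve_soft_margin_dual(X, y, C):
    """Solve the soft-margin dual with a general-purpose solver (SLSQP)."""
    N = len(y)
    Q = np.outer(y, y) * (X @ X.T)  # Q_ij = y_i y_j (x_i . x_j)
    result = minimize(
        lambda a: 0.5 * a @ Q @ a - a.sum(),
        x0=np.zeros(N),
        jac=lambda a: Q @ a - np.ones(N),
        method="SLSQP",
        bounds=[(0.0, C)] * N,                                 # 0 <= alpha_i <= C
        constraints=[{"type": "eq", "fun": lambda a: a @ y}],  # sum_i alpha_i y_i = 0
        options={"ftol": 1e-12, "maxiter": 500},
    )
    return result.x

# Toy data (assumed): the last positive point makes the data non-separable,
# because the negative point (1, 1) lies between it and the other positives.
X = np.array([[3.0, 3.0], [4.0, 3.0], [1.0, 1.0], [0.5, 0.5]])
y = np.array([1.0, 1.0, -1.0, 1.0])
C = 1.0

alpha = solve_soft_margin_dual(X, y, C)
w = (alpha * y) @ X  # w = sum_i alpha_i y_i x_i
print("alpha:", np.round(alpha, 4))
print("w:", np.round(w, 4))
```

Without the upper bound the dual of a non-separable problem is unbounded, so the box constraint $0 \leqslant \alpha_i \leqslant C$ is what keeps the multipliers finite here.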

4. Nonlinear support vector machine

To be updated…

4.1 Sequential minimal optimization (SMO) algorithm

To be updated…

5. SVM application example

# %matplotlib inline  (Jupyter magic; uncomment when running in a notebook)

import matplotlib.pyplot as plt
import numpy as np
from sklearn import datasets, model_selection, svm


def load_data_classfication():
    """Load the iris dataset and return a stratified train/test split."""
    iris = datasets.load_iris()
    X_train = iris.data
    y_train = iris.target
    return model_selection.train_test_split(
        X_train, y_train, test_size=0.25, random_state=0, stratify=y_train)

def test_SVC_linear(*data):
    """Fit a linear-kernel SVC and print its coefficients and test accuracy."""
    X_train, X_test, y_train, y_test = data
    cls = svm.SVC(kernel='linear')
    cls.fit(X_train, y_train)
    print('Coefficients:%s, intercept %s' % (cls.coef_, cls.intercept_))
    print('Score: %.2f' % cls.score(X_test, y_test))

def test_SVC_poly(*data):
    """Plot train/test accuracy of a polynomial-kernel SVC versus degree, gamma and coef0."""
    X_train, X_test, y_train, y_test = data
    fig = plt.figure()

    # Panel 1: effect of the polynomial degree p.
    degrees = range(1, 20)
    train_scores = []
    test_scores = []
    for degree in degrees:
        cls = svm.SVC(kernel='poly', degree=degree)
        cls.fit(X_train, y_train)
        train_scores.append(cls.score(X_train, y_train))
        test_scores.append(cls.score(X_test, y_test))
    ax = fig.add_subplot(1, 3, 1)
    ax.plot(degrees, train_scores, label="Training score", marker='+')
    ax.plot(degrees, test_scores, label="Testing score", marker='o')
    ax.set_title("SVC_poly_degree")
    ax.set_xlabel("p")
    ax.set_ylabel("score")
    ax.set_ylim(0, 1.05)
    ax.legend(loc="best", framealpha=0.5)

    # Panel 2: effect of gamma, with the degree fixed at 3.
    gammas = range(1, 20)
    train_scores = []
    test_scores = []
    for gamma in gammas:
        cls = svm.SVC(kernel='poly', gamma=gamma, degree=3)
        cls.fit(X_train, y_train)
        train_scores.append(cls.score(X_train, y_train))
        test_scores.append(cls.score(X_test, y_test))
    ax = fig.add_subplot(1, 3, 2)
    ax.plot(gammas, train_scores, label="Training score", marker='+')
    ax.plot(gammas, test_scores, label="Testing score", marker='o')
    ax.set_title("SVC_poly_gamma")
    ax.set_xlabel(r"$\gamma$")
    ax.set_ylabel("score")
    ax.set_ylim(0, 1.05)
    ax.legend(loc="best", framealpha=0.5)

    # Panel 3: effect of coef0 (r), with gamma=10 and degree=3.
    rs = range(0, 20)
    train_scores = []
    test_scores = []
    for r in rs:
        cls = svm.SVC(kernel='poly', gamma=10, degree=3, coef0=r)
        cls.fit(X_train, y_train)
        train_scores.append(cls.score(X_train, y_train))
        test_scores.append(cls.score(X_test, y_test))
    ax = fig.add_subplot(1, 3, 3)
    ax.plot(rs, train_scores, label="Training score", marker='+')
    ax.plot(rs, test_scores, label="Testing score", marker='o')
    ax.set_title("SVC_poly_r")
    ax.set_xlabel(r"r")
    ax.set_ylabel("score")
    ax.set_ylim(0, 1.05)
    ax.legend(loc="best", framealpha=0.5)

    plt.show()

def test_SVC_rbf(*data):
    """Plot train/test accuracy of an RBF-kernel SVC versus gamma."""
    X_train, X_test, y_train, y_test = data
    gammas = range(1, 20)
    train_scores = []
    test_scores = []
    for gamma in gammas:
        cls = svm.SVC(kernel='rbf', gamma=gamma)
        cls.fit(X_train, y_train)
        train_scores.append(cls.score(X_train, y_train))
        test_scores.append(cls.score(X_test, y_test))

    fig = plt.figure()
    ax = fig.add_subplot(1, 1, 1)
    ax.plot(gammas, train_scores, label="Training score", marker='+')
    ax.plot(gammas, test_scores, label="Testing score", marker='o')
    ax.set_title("SVC_rbf")
    ax.set_xlabel(r"$\gamma$")
    ax.set_ylabel("score")
    ax.set_ylim(0, 1.05)
    ax.legend(loc="best", framealpha=0.5)
    plt.show()

def test_SVC_sigmoid(*data):
    """Plot train/test accuracy of a sigmoid-kernel SVC versus gamma and coef0."""
    X_train, X_test, y_train, y_test = data
    fig = plt.figure()

    # Panel 1: effect of gamma (log scale), with coef0 fixed at 0.
    gammas = np.logspace(-2, 1)
    train_scores = []
    test_scores = []
    for gamma in gammas:
        cls = svm.SVC(kernel='sigmoid', gamma=gamma, coef0=0)
        cls.fit(X_train, y_train)
        train_scores.append(cls.score(X_train, y_train))
        test_scores.append(cls.score(X_test, y_test))
    ax = fig.add_subplot(1, 2, 1)
    ax.plot(gammas, train_scores, label="Training score", marker='+')
    ax.plot(gammas, test_scores, label="Testing score", marker='o')
    ax.set_title("SVC_sigmoid_gamma")
    ax.set_xscale("log")
    ax.set_xlabel(r"$\gamma$")
    ax.set_ylabel("score")
    ax.set_ylim(0, 1.05)
    ax.legend(loc="best", framealpha=0.5)

    # Panel 2: effect of coef0 (r), with gamma fixed at 0.01.
    rs = np.linspace(0, 5)
    train_scores = []
    test_scores = []
    for r in rs:
        cls = svm.SVC(kernel='sigmoid', coef0=r, gamma=0.01)
        cls.fit(X_train, y_train)
        train_scores.append(cls.score(X_train, y_train))
        test_scores.append(cls.score(X_test, y_test))
    ax = fig.add_subplot(1, 2, 2)
    ax.plot(rs, train_scores, label="Training score", marker='+')
    ax.plot(rs, test_scores, label="Testing score", marker='o')
    ax.set_title("SVC_sigmoid_r")
    ax.set_xlabel(r"r")
    ax.set_ylabel("score")
    ax.set_ylim(0, 1.05)
    ax.legend(loc="best", framealpha=0.5)

    plt.show()

if __name__ == "__main__":
    X_train, X_test, y_train, y_test = load_data_classfication()
    test_SVC_linear(X_train, X_test, y_train, y_test)
    test_SVC_poly(X_train, X_test, y_train, y_test)
    test_SVC_rbf(X_train, X_test, y_train, y_test)
    test_SVC_sigmoid(X_train, X_test, y_train, y_test)

The output is:

Coefficients:[[-0.16990304 0.47442881 -0.93075307 -0.51249447]
 [ 0.02439178 0.21890135 -0.52833486 -0.25913786]
 [ 0.52289771 0.95783924 -1.82516872 -2.00292778]], intercept [2.0368826 1.1512924 6.3276538]
Score: 1.00

Reference books:

- 《机器学习》 (Machine Learning), 周志华 (Zhou Zhihua)

- 《统计学习方法》 (Statistical Learning Methods), 李航 (Li Hang)