Bert源码学习

文章目录

- 前言

- 1. bert模型网络modeling.py

- 1.1 整体架构 BertModel(object):

- 1.2 embedding层

- 1.2.1 embedding_lookup

- 1.2.2 词向量处理 embedding_postprocessor

- 1.3 编码层 Encoder Layer

- 1.3.1 注意力层 Attention Layer

- 1.3.2 核心:Transformer

- 1.4 pooler

- 2. optimization.py 梯度更新

- 2.1 Adam参数更新

- 2.2 optimizer返回梯度更新执行单元

- 3. 模型评估

- 3.1 metrics_from_confusion_matrix

- 3.2 precision

- 3.3 recall

- 3.4 F1

- 4. 数据准备

- 4.1 原文本

- 4.2 处理逻辑

- 5. 预训练 pre-training

- 5.1 主函数 main()

- 5.2 模型构建 model_fn_builder

- 5.3 构建训练集 input_fn_builder

前言

网上关于bert的介绍文章有很多,不乏相当优秀的文章,只是大部分偏重理论没有代码,看起来总觉得少点什么,最近正好看相关代码,结合理论记录一下理解的理论和疑问。很多东西也是没办法一次看全的,所以这个笔记会不断的更新。

有以下几个关键点先记住:

- 1.Bert的编码层采用transformer的decoder部分(多头双向编码器),如果要看代码可以参考 Transformer 代码详解

- 2.Bert训练的双向模型,其应用的Masked Language Model是随机将15%的单词替换:

80%的时间:用[MASK]标记替换单词,例如,my dog is hairy → my dog is [MASK]

10%的时间:用一个随机的单词替换该单词,例如,my dog is hairy → my dog is apple

10%的时间:保持单词不变,例如,my dog is hairy → my dog is hairy. 这样做的目的是将表示偏向于实际观察到的单词。 - 3.Bert可以预测下一句,增加了计算下一句的loss,并且其训练输入是基于上下两句,训练的目的是预测是否是连续的两条上下文语句。

- 4.上述的2和3就是Bert模型的预训练过程,训练出通用模型,然后再根据具体应用,用supervised训练数据。

1. bert模型网络modeling.py

1.1 整体架构 BertModel(object):

首先来看bert模型的模型架构:

在bert变量空间内,包含了embedding层、encoder层和pooler层

embedding层的第一部分通过[vocab_size, embedding_size]维度的embedding_table生成向量

第二部分传递token_type和学习到的位置编码.

encoder层包含创建MASK、多头编码器(包含Attention)

pooler取encoder的输出seq_length的第一行进行全连接输出。

with tf.variable_scope(scope, default_name="bert"):

with tf.variable_scope("embeddings"):

# Perform embedding lookup on the word ids.

(self.embedding_output, self.embedding_table) = embedding_lookup(

input_ids=input_ids,

vocab_size=config.vocab_size,

embedding_size=config.hidden_size,

initializer_range=config.initializer_range,

word_embedding_name="word_embeddings",

use_one_hot_embeddings=use_one_hot_embeddings)

# Add positional embeddings and token type embeddings, then layer

# normalize and perform dropout.

self.embedding_output = embedding_postprocessor(

input_tensor=self.embedding_output,

use_token_type=True,

token_type_ids=token_type_ids,

token_type_vocab_size=config.type_vocab_size,

token_type_embedding_name="token_type_embeddings",

use_position_embeddings=True,

position_embedding_name="position_embeddings",

initializer_range=config.initializer_range,

max_position_embeddings=config.max_position_embeddings,

dropout_prob=config.hidden_dropout_prob)

with tf.variable_scope("encoder"):

# This converts a 2D mask of shape [batch_size, seq_length] to a 3D

# mask of shape [batch_size, seq_length, seq_length] which is used

# for the attention scores.

attention_mask = create_attention_mask_from_input_mask(

input_ids, input_mask)

# Run the stacked transformer.

# `sequence_output` shape = [batch_size, seq_length, hidden_size].

self.all_encoder_layers = transformer_model(

input_tensor=self.embedding_output,

attention_mask=attention_mask,

hidden_size=config.hidden_size,

num_hidden_layers=config.num_hidden_layers,

num_attention_heads=config.num_attention_heads,

intermediate_size=config.intermediate_size,

intermediate_act_fn=get_activation(config.hidden_act),

hidden_dropout_prob=config.hidden_dropout_prob,

attention_probs_dropout_prob=config.attention_probs_dropout_prob,

initializer_range=config.initializer_range,

do_return_all_layers=True)

self.sequence_output = self.all_encoder_layers[-1]

# The "pooler" converts the encoded sequence tensor of shape

# [batch_size, seq_length, hidden_size] to a tensor of shape

# [batch_size, hidden_size]. This is necessary for segment-level

# (or segment-pair-level) classification tasks where we need a fixed

# dimensional representation of the segment.

with tf.variable_scope("pooler"):

# We "pool" the model by simply taking the hidden state corresponding

# to the first token. We assume that this has been pre-trained

# 取第一行,然后压缩

first_token_tensor = tf.squeeze(self.sequence_output[:, 0:1, :], axis=1)

self.pooled_output = tf.layers.dense(

first_token_tensor,

config.hidden_size,

activation=tf.tanh,

kernel_initializer=create_initializer(config.initializer_range))

1.2 embedding层

1.2.1 embedding_lookup

embedding_lookup初始化embedding_table来生成向量,这里不复杂。

[batch_size, seq_length]->[batch_size, seq_length, embedding_size]

介绍一下几个重点参数

- vocab_size 原本词汇量大小

- embedding_size 生成的embedding_table的维度,实际就是hidden_size,bert默认大小768

- initializer_range 用于embedding_table的权重初始化

def embedding_lookup(input_ids,

vocab_size,

embedding_size=128,

initializer_range=0.02,

word_embedding_name="word_embeddings",

use_one_hot_embeddings=False):

"""Looks up words embeddings for id tensor.

Args:

input_ids: int32 Tensor of shape [batch_size, seq_length] containing word

ids.

vocab_size: int. Size of the embedding vocabulary.

embedding_size: int. Width of the word embeddings.

initializer_range: float. Embedding initialization range.

word_embedding_name: string. Name of the embedding table.

use_one_hot_embeddings: bool. If True, use one-hot method for word

embeddings. If False, use `tf.gather()`.

Returns:

float Tensor of shape [batch_size, seq_length, embedding_size].

"""

# This function assumes that the input is of shape [batch_size, seq_length,

# num_inputs].

# If the input is a 2D tensor of shape [batch_size, seq_length], we

# reshape to [batch_size, seq_length, 1].

if input_ids.shape.ndims == 2:

input_ids = tf.expand_dims(input_ids, axis=[-1])

embedding_table = tf.get_variable(

name=word_embedding_name,

shape=[vocab_size, embedding_size],

initializer=create_initializer(initializer_range))

flat_input_ids = tf.reshape(input_ids, [-1])

if use_one_hot_embeddings:

one_hot_input_ids = tf.one_hot(flat_input_ids, depth=vocab_size)

output = tf.matmul(one_hot_input_ids, embedding_table)

else:

output = tf.gather(embedding_table, flat_input_ids)

input_shape = get_shape_list(input_ids)

output = tf.reshape(output,

input_shape[0:-1] + [input_shape[-1] * embedding_size])

return (output, embedding_table)

1.2.2 词向量处理 embedding_postprocessor

向量预处理:添加token_type和位置向量。

[batch_size, seq_length, embedding_size]->[batch_size, seq_length,embedding_size] 输入和输出维度不变

- 这里要注意token_type也是通过token_type_table做的向量化处理,生成的向量与字向量的维度相同(width)

- 模型训练一个全位置向量,这里的全是指最长的单词长度对应的位置向量,后面会根据实际

seq_length(句子长度)截取所需要的长度。位置向量对于不同batch相同seq_length的句子都是相同的,所以这里是在[seq_length,width]维度的加,前面维度置为1。

def embedding_postprocessor(input_tensor,

use_token_type=False,

token_type_ids=None,

token_type_vocab_size=16,

token_type_embedding_name="token_type_embeddings",

use_position_embeddings=True,

position_embedding_name="position_embeddings",

initializer_range=0.02,

max_position_embeddings=512,

dropout_prob=0.1):

"""Performs various post-processing on a word embedding tensor.

Args:

input_tensor: float Tensor of shape [batch_size, seq_length,

embedding_size].

use_token_type: bool. Whether to add embeddings for `token_type_ids`.

token_type_ids: (optional) int32 Tensor of shape [batch_size, seq_length].

Must be specified if `use_token_type` is True.

token_type_vocab_size: int. The vocabulary size of `token_type_ids`.

token_type_embedding_name: string. The name of the embedding table variable

for token type ids.

use_position_embeddings: bool. Whether to add position embeddings for the

position of each token in the sequence.

position_embedding_name: string. The name of the embedding table variable

for positional embeddings.

initializer_range: float. Range of the weight initialization.

max_position_embeddings: int. Maximum sequence length that might ever be

used with this model. This can be longer than the sequence length of

input_tensor, but cannot be shorter.

dropout_prob: float. Dropout probability applied to the final output tensor.

Returns:

float tensor with same shape as `input_tensor`.

Raises:

ValueError: One of the tensor shapes or input values is invalid.

"""

input_shape = get_shape_list(input_tensor, expected_rank=3)

batch_size = input_shape[0]

seq_length = input_shape[1]

width = input_shape[2]

output = input_tensor

if use_token_type:

if token_type_ids is None:

raise ValueError("`token_type_ids` must be specified if"

"`use_token_type` is True.")

token_type_table = tf.get_variable(

name=token_type_embedding_name,

shape=[token_type_vocab_size, width],

initializer=create_initializer(initializer_range))

# This vocab will be small so we always do one-hot here, since it is always

# faster for a small vocabulary.

flat_token_type_ids = tf.reshape(token_type_ids, [-1])

one_hot_ids = tf.one_hot(flat_token_type_ids, depth=token_type_vocab_size)

token_type_embeddings = tf.matmul(one_hot_ids, token_type_table)

token_type_embeddings = tf.reshape(token_type_embeddings,

[batch_size, seq_length, width])

output += token_type_embeddings

if use_position_embeddings:

assert_op = tf.assert_less_equal(seq_length, max_position_embeddings)

with tf.control_dependencies([assert_op]):

full_position_embeddings = tf.get_variable(

name=position_embedding_name,

shape=[max_position_embeddings, width],

initializer=create_initializer(initializer_range))

# Since the position embedding table is a learned variable, we create it

# using a (long) sequence length `max_position_embeddings`. The actual

# sequence length might be shorter than this, for faster training of

# tasks that do not have long sequences.

# So `full_position_embeddings` is effectively an embedding table

# for position [0, 1, 2, ..., max_position_embeddings-1], and the current

# sequence has positions [0, 1, 2, ... seq_length-1], so we can just

# perform a slice.

# 切片 [[0,seq_length],[0,-1]]

position_embeddings = tf.slice(full_position_embeddings, [0, 0],

[seq_length, -1])

num_dims = len(output.shape.as_list())

# Only the last two dimensions are relevant (`seq_length` and `width`), so

# we broadcast among the first dimensions, which is typically just

# the batch size.因为只有后两个维度与位置相关,batch与位置无关

position_broadcast_shape = []

for _ in range(num_dims - 2):

position_broadcast_shape.append(1)

position_broadcast_shape.extend([seq_length, width])

position_embeddings = tf.reshape(position_embeddings,

position_broadcast_shape)

output += position_embeddings

output = layer_norm_and_dropout(output, dropout_prob)

return output

1.3 编码层 Encoder Layer

生成attention_mask用于transformer的生成,编码层主要执行的transformer的encoder

1.3.1 注意力层 Attention Layer

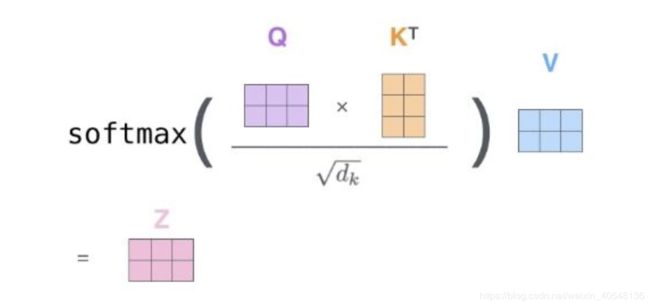

首先通过全连接层构建Q,K,V,这里Q,K,V的维度都是隐藏层的sizehead_size*num_attention_heads,后续会将hidden_size reshape为多头(head_size和num_attention_heads两个维度)来计算每一个head的下图公式的值。这里要注意K,V的维度可能与Q不同,这里取决于输入的from_tensor和to_tensor是否相同,因为Q是通过from_tensor全连接计算而得,K,V是通过to_tensor计算所得。不过就输出而言,肯定是与from_tensor维度相同的。最后将多头reshape为hidden_size.

我们来详细理解下上述公式,主要计算Attention score,获取softmax分数,这个分数表示每个单词对当前单词的贡献。将每个值向量乘以softmax分数(这里是希望关注语义上相关的单词,弱化不相关的单词)加权求和,即得到自注意力层在该位置的输出。

1.3.2 核心:Transformer

前面提到,编码层主要采用的是transformer模型的编码层,关键词:多头,多层

[batch_size, seq_length, hidden_size]->[batch_size, seq_length, hidden_size]

模型有几个关键字段:

- head_size=隐藏层大小(hidden_size)/ num_attention_heads

- hidden_size=input_width Transformer会将残差连接,所以输入的width需要与hidden_size相同。

- 隐藏层层数为num_hidden_layer,每一层包含attention层、intermediate层和output层。这里需要注意的是在attention层,多头输出经过连接和线性变换后加上residual(这一层的输入)

def transformer_model(input_tensor,

attention_mask=None,

hidden_size=768,

num_hidden_layers=12,

num_attention_heads=12,

intermediate_size=3072,

intermediate_act_fn=gelu,

hidden_dropout_prob=0.1,

attention_probs_dropout_prob=0.1,

initializer_range=0.02,

do_return_all_layers=False):

"""Multi-headed, multi-layer Transformer from "Attention is All You Need".

This is almost an exact implementation of the original Transformer encoder.

See the original paper:

https://arxiv.org/abs/1706.03762

Also see:

https://github.com/tensorflow/tensor2tensor/blob/master/tensor2tensor/models/transformer.py

Args:

input_tensor: float Tensor of shape [batch_size, seq_length, hidden_size].

attention_mask: (optional) int32 Tensor of shape [batch_size, seq_length,

seq_length], with 1 for positions that can be attended to and 0 in

positions that should not be.

hidden_size: int. Hidden size of the Transformer.

num_hidden_layers: int. Number of layers (blocks) in the Transformer.

num_attention_heads: int. Number of attention heads in the Transformer.

intermediate_size: int. The size of the "intermediate" (a.k.a., feed

forward) layer.

intermediate_act_fn: function. The non-linear activation function to apply

to the output of the intermediate/feed-forward layer.

hidden_dropout_prob: float. Dropout probability for the hidden layers.

attention_probs_dropout_prob: float. Dropout probability of the attention

probabilities.

initializer_range: float. Range of the initializer (stddev of truncated

normal).

do_return_all_layers: Whether to also return all layers or just the final

layer.

Returns:

float Tensor of shape [batch_size, seq_length, hidden_size], the final

hidden layer of the Transformer.

Raises:

ValueError: A Tensor shape or parameter is invalid.

"""

if hidden_size % num_attention_heads != 0:

raise ValueError(

"The hidden size (%d) is not a multiple of the number of attention "

"heads (%d)" % (hidden_size, num_attention_heads))

attention_head_size = int(hidden_size / num_attention_heads)

input_shape = get_shape_list(input_tensor, expected_rank=3)

batch_size = input_shape[0]

seq_length = input_shape[1]

input_width = input_shape[2]

# The Transformer performs sum residuals on all layers so the input needs

# to be the same as the hidden size.

if input_width != hidden_size:

raise ValueError("The width of the input tensor (%d) != hidden size (%d)" %

(input_width, hidden_size))

# We keep the representation as a 2D tensor to avoid re-shaping it back and

# forth from a 3D tensor to a 2D tensor. Re-shapes are normally free on

# the GPU/CPU but may not be free on the TPU, so we want to minimize them to

# help the optimizer.

prev_output = reshape_to_matrix(input_tensor)

all_layer_outputs = []

for layer_idx in range(num_hidden_layers):

with tf.variable_scope("layer_%d" % layer_idx):

layer_input = prev_output

with tf.variable_scope("attention"):

attention_heads = []

with tf.variable_scope("self"):

attention_head = attention_layer(

from_tensor=layer_input,

to_tensor=layer_input,

attention_mask=attention_mask,

num_attention_heads=num_attention_heads,

size_per_head=attention_head_size,

attention_probs_dropout_prob=attention_probs_dropout_prob,

initializer_range=initializer_range,

do_return_2d_tensor=True,

batch_size=batch_size,

from_seq_length=seq_length,

to_seq_length=seq_length)

attention_heads.append(attention_head)

attention_output = None

if len(attention_heads) == 1:

attention_output = attention_heads[0]

else:

# In the case where we have other sequences, we just concatenate

# them to the self-attention head before the projection.

attention_output = tf.concat(attention_heads, axis=-1)

# Run a linear projection of `hidden_size` then add a residual

# with `layer_input`.

with tf.variable_scope("output"):

attention_output = tf.layers.dense(

attention_output,

hidden_size,

kernel_initializer=create_initializer(initializer_range))

attention_output = dropout(attention_output, hidden_dropout_prob)

attention_output = layer_norm(attention_output + layer_input)

# The activation is only applied to the "intermediate" hidden layer.

with tf.variable_scope("intermediate"):

intermediate_output = tf.layers.dense(

attention_output,

intermediate_size,

activation=intermediate_act_fn,

kernel_initializer=create_initializer(initializer_range))

# Down-project back to `hidden_size` then add the residual.

with tf.variable_scope("output"):

layer_output = tf.layers.dense(

intermediate_output,

hidden_size,

kernel_initializer=create_initializer(initializer_range))

layer_output = dropout(layer_output, hidden_dropout_prob)

layer_output = layer_norm(layer_output + attention_output)

prev_output = layer_output

all_layer_outputs.append(layer_output)

if do_return_all_layers:

final_outputs = []

for layer_output in all_layer_outputs:

final_output = reshape_from_matrix(layer_output, input_shape)

final_outputs.append(final_output)

return final_outputs

else:

final_output = reshape_from_matrix(prev_output, input_shape)

return final_output

1.4 pooler

pooler层是对编码器的输出进行处理,这里只取了seq_length维度的第一行,然后压缩该维度:

- [batch_size, seq_length, hidden_size] => [batch_size, hidden_size]

最后经过一个线性激活层输出。

with tf.variable_scope("pooler"):

# We "pool" the model by simply taking the hidden state corresponding

# to the first token. We assume that this has been pre-trained

# 取第一行,然后压缩

first_token_tensor = tf.squeeze(self.sequence_output[:, 0:1, :], axis=1)

self.pooled_output = tf.layers.dense(

first_token_tensor,

config.hidden_size,

activation=tf.tanh,

kernel_initializer=create_initializer(config.initializer_range))

2. optimization.py 梯度更新

整体返回反向传播的执行单元train_op,用于tf.session执行

2.1 Adam参数更新

继承自Optimizer类,主要的函数是apply_gradients,通过梯度使用Adam算法更新模型参数,具体怎么使用我们下面来看反向传播的函数。

class AdamWeightDecayOptimizer(tf.train.Optimizer):

"""A basic Adam optimizer that includes "correct" L2 weight decay."""

def __init__(self,

learning_rate,

weight_decay_rate=0.0,

beta_1=0.9,

beta_2=0.999,

epsilon=1e-6,

exclude_from_weight_decay=None,

name="AdamWeightDecayOptimizer"):

"""Constructs a AdamWeightDecayOptimizer."""

super(AdamWeightDecayOptimizer, self).__init__(False, name)

self.learning_rate = learning_rate

self.weight_decay_rate = weight_decay_rate

self.beta_1 = beta_1

self.beta_2 = beta_2

self.epsilon = epsilon

self.exclude_from_weight_decay = exclude_from_weight_decay

def apply_gradients(self, grads_and_vars, global_step=None, name=None):

"""See base class."""

assignments = []

# grads_and_vars 梯度和要更新的变量

for (grad, param) in grads_and_vars:

if grad is None or param is None:

continue

param_name = self._get_variable_name(param.name)

m = tf.get_variable(

name=param_name + "/adam_m",

shape=param.shape.as_list(),

dtype=tf.float32,

trainable=False,

initializer=tf.zeros_initializer())

v = tf.get_variable(

name=param_name + "/adam_v",

shape=param.shape.as_list(),

dtype=tf.float32,

trainable=False,

initializer=tf.zeros_initializer())

# Standard Adam update.

next_m = (

tf.multiply(self.beta_1, m) + tf.multiply(1.0 - self.beta_1, grad))

next_v = (

tf.multiply(self.beta_2, v) + tf.multiply(1.0 - self.beta_2,

tf.square(grad)))

update = next_m / (tf.sqrt(next_v) + self.epsilon)

# Just adding the square of the weights to the loss function is *not*

# the correct way of using L2 regularization/weight decay with Adam,

# since that will interact with the m and v parameters in strange ways.

#

# Instead we want ot decay the weights in a manner that doesn't interact

# with the m/v parameters. This is equivalent to adding the square

# of the weights to the loss with plain (non-momentum) SGD.

if self._do_use_weight_decay(param_name):

update += self.weight_decay_rate * param

update_with_lr = self.learning_rate * update

next_param = param - update_with_lr

assignments.extend(

[param.assign(next_param),

m.assign(next_m),

v.assign(next_v)])

return tf.group(*assignments, name=name)

def _do_use_weight_decay(self, param_name):

"""Whether to use L2 weight decay for `param_name`."""

if not self.weight_decay_rate:

return False

if self.exclude_from_weight_decay:

for r in self.exclude_from_weight_decay:

if re.search(r, param_name) is not None:

return False

return True

def _get_variable_name(self, param_name):

"""Get the variable name from the tensor name."""

m = re.match("^(.*):\\d+$", param_name)

if m is not None:

param_name = m.group(1)

return param_name

2.2 optimizer返回梯度更新执行单元

参数优化执行需要以下几个参数:

global_step模型训练执行的全局步数,用于学习率的变化learning_rate学习率,通过多项式衰减变化num_warmup_steps模型预热,通过全局步数/预热步数*lr来变化学习率

关键变量:

tvars模型需要训练的参数grads根据loss计算参数的梯度,并通过梯度裁剪(clip_norm是裁剪的比例)train_op这里我理解为反向传播的整个执行单元new_global_step反向传播通常会自动更新global_step,这里AdamWeightDecayOptimizer并没有做,所以需要手动更新global_step

3. 模型评估

3.1 metrics_from_confusion_matrix

根据混淆矩阵(confusion_matrix是记录预测值和真实值的矩阵,可以方便的计算tp fp fn tn)计算准确率、召回率和F1 参考 confusion_matrix

def metrics_from_confusion_matrix(cm, pos_indices=None, average='micro',

beta=1):

"""Precision, Recall and F1 from the confusion matrix

Parameters

----------

cm : tf.Tensor of type tf.int32, of shape (num_classes, num_classes)

The streaming confusion matrix.

pos_indices : list of int, optional

The indices of the positive classes

beta : int, optional

Weight of precision in harmonic mean

average : str, optional

'micro', 'macro' or 'weighted'

"""

num_classes = cm.shape[0]

if pos_indices is None:

pos_indices = [i for i in range(num_classes)]

if average == 'micro':

return pr_re_fbeta(cm, pos_indices, beta)

elif average in {'macro', 'weighted'}:

precisions, recalls, fbetas, n_golds = [], [], [], []

for idx in pos_indices:

pr, re, fbeta = pr_re_fbeta(cm, [idx], beta)

precisions.append(pr)

recalls.append(re)

fbetas.append(fbeta)

cm_mask = np.zeros([num_classes, num_classes])

cm_mask[idx, :] = 1

n_golds.append(tf.to_float(tf.reduce_sum(cm * cm_mask)))

if average == 'macro':

pr = tf.reduce_mean(precisions)

re = tf.reduce_mean(recalls)

fbeta = tf.reduce_mean(fbetas)

return pr, re, fbeta

if average == 'weighted':

n_gold = tf.reduce_sum(n_golds)

pr_sum = sum(p * n for p, n in zip(precisions, n_golds))

pr = safe_div(pr_sum, n_gold)

re_sum = sum(r * n for r, n in zip(recalls, n_golds))

re = safe_div(re_sum, n_gold)

fbeta_sum = sum(f * n for f, n in zip(fbetas, n_golds))

fbeta = safe_div(fbeta_sum, n_gold)

return pr, re, fbeta

else:

raise NotImplementedError()

3.2 precision

构建混淆矩阵,然后根据混淆矩阵计算准确率。这里主要到混淆矩阵其实返回了两个值:

- total_cm: confusion matrix.

- update_op: An operation that increments the confusion matrix.

关于第二个变量先留个坑,以后来填。(涉及计算图、分布式计算貌似)

根据混淆矩阵计算了两个准确率。关于下面查全率和F1的计算与准确率类似。

def precision(labels, predictions, num_classes, pos_indices=None,

weights=None, average='micro'):

"""Multi-class precision metric for Tensorflow

Parameters

----------

labels : Tensor of tf.int32 or tf.int64

The true labels

predictions : Tensor of tf.int32 or tf.int64

The predictions, same shape as labels

num_classes : int

The number of classes

pos_indices : list of int, optional

The indices of the positive classes, default is all

weights : Tensor of tf.int32, optional

Mask, must be of compatible shape with labels

average : str, optional

'micro': counts the total number of true positives, false

positives, and false negatives for the classes in

`pos_indices` and infer the metric from it.

'macro': will compute the metric separately for each class in

`pos_indices` and average. Will not account for class

imbalance.

'weighted': will compute the metric separately for each class in

`pos_indices` and perform a weighted average by the total

number of true labels for each class.

Returns

-------

tuple of (scalar float Tensor, update_op)

"""

cm, op = _streaming_confusion_matrix(

labels, predictions, num_classes, weights)

pr, _, _ = metrics_from_confusion_matrix(

cm, pos_indices, average=average)

op, _, _ = metrics_from_confusion_matrix(

op, pos_indices, average=average)

return (pr, op)

3.3 recall

def recall(labels, predictions, num_classes, pos_indices=None, weights=None,

average='micro'):

"""Multi-class recall metric for Tensorflow

Parameters

----------

labels : Tensor of tf.int32 or tf.int64

The true labels

predictions : Tensor of tf.int32 or tf.int64

The predictions, same shape as labels

num_classes : int

The number of classes

pos_indices : list of int, optional

The indices of the positive classes, default is all

weights : Tensor of tf.int32, optional

Mask, must be of compatible shape with labels

average : str, optional

'micro': counts the total number of true positives, false

positives, and false negatives for the classes in

`pos_indices` and infer the metric from it.

'macro': will compute the metric separately for each class in

`pos_indices` and average. Will not account for class

imbalance.

'weighted': will compute the metric separately for each class in

`pos_indices` and perform a weighted average by the total

number of true labels for each class.

Returns

-------

tuple of (scalar float Tensor, update_op)

"""

cm, op = _streaming_confusion_matrix(

labels, predictions, num_classes, weights)

_, re, _ = metrics_from_confusion_matrix(

cm, pos_indices, average=average)

_, op, _ = metrics_from_confusion_matrix(

op, pos_indices, average=average)

return (re, op)

3.4 F1

def f1(labels, predictions, num_classes, pos_indices=None, weights=None,

average='micro'):

return fbeta(labels, predictions, num_classes, pos_indices, weights,

average)

def fbeta(labels, predictions, num_classes, pos_indices=None, weights=None,

average='micro', beta=1):

"""Multi-class fbeta metric for Tensorflow

Parameters

----------

labels : Tensor of tf.int32 or tf.int64

The true labels

predictions : Tensor of tf.int32 or tf.int64

The predictions, same shape as labels

num_classes : int

The number of classes

pos_indices : list of int, optional

The indices of the positive classes, default is all

weights : Tensor of tf.int32, optional

Mask, must be of compatible shape with labels

average : str, optional

'micro': counts the total number of true positives, false

positives, and false negatives for the classes in

`pos_indices` and infer the metric from it.

'macro': will compute the metric separately for each class in

`pos_indices` and average. Will not account for class

imbalance.

'weighted': will compute the metric separately for each class in

`pos_indices` and perform a weighted average by the total

number of true labels for each class.

beta : int, optional

Weight of precision in harmonic mean

Returns

-------

tuple of (scalar float Tensor, update_op)

"""

cm, op = _streaming_confusion_matrix(

labels, predictions, num_classes, weights)

_, _, fbeta = metrics_from_confusion_matrix(

cm, pos_indices, average=average, beta=beta)

_, _, op = metrics_from_confusion_matrix(

op, pos_indices, average=average, beta=beta)

return (fbeta, op)

4. 数据准备

4.1 原文本

以下内容部分是参考 BERT解读

模型最初的数据形式如下,源码中对于这个输入文件的要求为:

- 一行一句话,最好是真实文本中的一句话,而不是一段话或者一句话的部分

- 文档之间用空行分开,防止NSP任务跨文档。

从数据准备的主函数来看,重点是create_training_instances也就是构建训练集列表,每个元素为类实例。

This text is included to make sure Unicode is handled properly: 力加勝北区ᴵᴺᵀᵃছজটডণত

Text should be one-sentence-per-line, with empty lines between documents.

This sample text is public domain and was randomly selected from Project Guttenberg.

The rain had only ceased with the gray streaks of morning at Blazing Star, and the settlement awoke to a moral sense of cleanliness, and the finding of forgotten knives, tin cups, and smaller camp utensils, where the heavy showers had washed away the debris and dust heaps before the cabin doors.

Indeed, it was recorded in Blazing Star that a fortunate early riser had once picked up on the highway a solid chunk of gold quartz which the rain had freed from its incumbering soil, and washed into immediate and glittering popularity.

Possibly this may have been the reason why early risers in that locality, during the rainy season, adopted a thoughtful habit of body, and seldom lifted their eyes to the rifted or india-ink washed skies above them.

"Cass" Beard had risen early that morning, but not with a view to discovery.

A leak in his cabin roof,--quite consistent with his careless, improvident habits,--had roused him at 4 A. M., with a flooded "bunk" and wet blankets.

The chips from his wood pile refused to kindle a fire to dry his bed-clothes, and he had recourse to a more provident neighbor's to supply the deficiency.

This was nearly opposite.

Mr. Cassius crossed the highway, and stopped suddenly.

Something glittered in the nearest red pool before him.

Gold, surely!

But, wonderful to relate, not an irregular, shapeless fragment of crude ore, fresh from Nature's crucible, but a bit of jeweler's handicraft in the form of a plain gold ring.

Looking at it more attentively, he saw that it bore the inscription, "May to Cass."

Like most of his fellow gold-seekers, Cass was superstitious.

The fountain of classic wisdom, Hypatia herself.

As the ancient sage--the name is unimportant to a monk--pumped water nightly that he might study by day, so I, the guardian of cloaks and parasols, at the sacred doors of her lecture-room, imbibe celestial knowledge.

From my youth I felt in me a soul above the matter-entangled herd.

She revealed to me the glorious fact, that I am a spark of Divinity itself.

A fallen star, I am, sir!' continued he, pensively, stroking his lean stomach--'a fallen star!--fallen, if the dignity of philosophy will allow of the simile, among the hogs of the lower world--indeed, even into the hog-bucket itself. Well, after all, I will show you the way to the Archbishop's.

There is a philosophic pleasure in opening one's treasures to the modest young.

Perhaps you will assist me by carrying this basket of fruit?' And the little man jumped up, put his basket on Philammon's head, and trotted off up a neighbouring street.

Philammon followed, half contemptuous, half wondering at what this philosophy might be, which could feed the self-conceit of anything so abject as his ragged little apish guide;

but the novel roar and whirl of the street, the perpetual stream of busy faces, the line of curricles, palanquins, laden asses, camels, elephants, which met and passed him, and squeezed him up steps and into doorways, as they threaded their way through the great Moon-gate into the ample street beyond, drove everything from his mind but wondering curiosity, and a vague, helpless dread of that great living wilderness, more terrible than any dead wilderness of sand which he had left behind.

Already he longed for the repose, the silence of the Laura--for faces which knew him and smiled upon him; but it was too late to turn back now.

His guide held on for more than a mile up the great main street, crossed in the centre of the city, at right angles, by one equally magnificent, at each end of which, miles away, appeared, dim and distant over the heads of the living stream of passengers, the yellow sand-hills of the desert;

while at the end of the vista in front of them gleamed the blue harbour, through a network of countless masts.

At last they reached the quay at the opposite end of the street;

and there burst on Philammon's astonished eyes a vast semicircle of blue sea, ringed with palaces and towers.

He stopped involuntarily; and his little guide stopped also, and looked askance at the young monk, to watch the effect which that grand panorama should produce on him.

4.2 处理逻辑

对上述文件的处理代码如下:

主函数

def main(_):

tf.logging.set_verbosity(tf.logging.INFO)

# 端到端的token处理,对应词汇表

tokenizer = tokenization.FullTokenizer(

vocab_file=FLAGS.vocab_file, do_lower_case=FLAGS.do_lower_case)

input_files = []

for input_pattern in FLAGS.input_file.split(","):

input_files.extend(tf.gfile.Glob(input_pattern))

tf.logging.info("*** Reading from input files ***")

for input_file in input_files:

tf.logging.info(" %s", input_file)

rng = random.Random(FLAGS.random_seed)

instances = create_training_instances(

input_files, tokenizer, FLAGS.max_seq_length, FLAGS.dupe_factor,

FLAGS.short_seq_prob, FLAGS.masked_lm_prob, FLAGS.max_predictions_per_seq,

rng)

output_files = FLAGS.output_file.split(",")

tf.logging.info("*** Writing to output files ***")

for output_file in output_files:

tf.logging.info(" %s", output_file)

# 将类列表写入到输出文件中

write_instance_to_example_files(instances, tokenizer, FLAGS.max_seq_length,

FLAGS.max_predictions_per_seq, output_files)

create_training_instances创建样本列表

rng = random.Random(FLAGS.random_seed)

instances = create_training_instances(

input_files, tokenizer, FLAGS.max_seq_length, FLAGS.dupe_factor,

FLAGS.short_seq_prob, FLAGS.masked_lm_prob, FLAGS.max_predictions_per_seq,

rng)

print(instances[0])

输出的示例如下:

tokens: [CLS] for more than a [MASK] up [MASK] great main street , [MASK] [MASK] [MASK] centre of the city , at right angles , [MASK] one equally magnificent , at each end ##ミ which , miles away , appeared , dim and distant over the heads of the living stream of passengers , the yellow [MASK] - hills of [MASK] [MASK] ; while at the end of the vista in front [MASK] them gleamed the blue harbour , through a network [SEP] possibly this may have been the reason why early rise ##rs in [MASK] locality , during the rainy season , adopted [MASK] [MASK] [MASK] of body , and seldom lifted [MASK] eyes [MASK] the rift [MASK] or india - ink washed skies above them . [SEP]

segment_ids: 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

is_random_next: True

masked_lm_positions: 5 7 12 13 14 24 32 55 59 60 71 94 103 104 105 109 112 114 117

masked_lm_labels: mile the crossed in the by of sand the desert of that a thoughtful habit and their to ##ed

可见,其输出有:

- tokens:两句话pack在一起,并加上[MASK]、[CLS]和[SEP]之后的token序列

- segment_ids:句子标识符,0表示第一句话,1表示第二句话

- is_random_next:是否为下一句,NSP任务的label

- masked_lm_positions:用于MLM任务,标识哪些位置被mask了

- masked_lm_labels:用于MLM任务,表示被mask的位置上的label

下面我们来看create_training_instances的执行流程:

- 读取文件,生成一个大列表,大列表每个小列表装着一段话,以每句的形式构成小列表

- 将输入的文本分字后,通过vocab映射为id

- 这里

dupe_factor是为了生成不同的MASK - 调用

create_instances_from_document生成实际的训练数据

def create_training_instances(input_files, tokenizer, max_seq_length,

dupe_factor, short_seq_prob, masked_lm_prob,

max_predictions_per_seq, rng):

"""Create `TrainingInstance`s from raw text."""

all_documents = [[]]

for input_file in input_files:

with tf.gfile.GFile(input_file, "r") as reader:

while True:

line = tokenization.convert_to_unicode(reader.readline())

if not line:

break

line = line.strip()

# Empty lines are used as document delimiters

if not line:

all_documents.append([])

tokens = tokenizer.tokenize(line)

if tokens:

all_documents[-1].append(tokens)

# Remove empty documents

all_documents = [x for x in all_documents if x]

rng.shuffle(all_documents)

vocab_words = list(tokenizer.vocab.keys())

instances = []

for _ in range(dupe_factor):

for document_index in range(len(all_documents)):

instances.extend(

create_instances_from_document(

all_documents, document_index, max_seq_length, short_seq_prob,

masked_lm_prob, max_predictions_per_seq, vocab_words, rng))

rng.shuffle(instances)

return instances

下面看一下create_instances_from_document生成训练数据的逻辑:

def create_instances_from_document(

all_documents, document_index, max_seq_length, short_seq_prob,

masked_lm_prob, max_predictions_per_seq, vocab_words, rng):

"""Creates `TrainingInstance`s for a single document."""

document = all_documents[document_index]

# Account for [CLS], [SEP], [SEP]

max_num_tokens = max_seq_length - 3

target_seq_length = max_num_tokens

if rng.random() < short_seq_prob:

target_seq_length = rng.randint(2, max_num_tokens)

instances = []

current_chunk = []

current_length = 0

i = 0

while i < len(document):

segment = document[i]

current_chunk.append(segment)

current_length += len(segment)

# 文档结尾 or 当前长度大于目标长度

if i == len(document) - 1 or current_length >= target_seq_length:

if current_chunk:

a_end = 1

if len(current_chunk) >= 2:

# 随机拆分为 两句

a_end = rng.randint(1, len(current_chunk) - 1)

tokens_a = []

for j in range(a_end):

tokens_a.extend(current_chunk[j])

tokens_b = []

# Random next

is_random_next = False

# 随机下一句或不随机

if len(current_chunk) == 1 or rng.random() < 0.5:

is_random_next = True

target_b_length = target_seq_length - len(tokens_a)

for _ in range(10):

random_document_index = rng.randint(0, len(all_documents) - 1)

if random_document_index != document_index:

break

random_document = all_documents[random_document_index]

random_start = rng.randint(0, len(random_document) - 1)

for j in range(random_start, len(random_document)):

tokens_b.extend(random_document[j])

if len(tokens_b) >= target_b_length:

break

# 更新i:因为随机下一句,导致后半部分没有用到,构建下个句子组使用

num_unused_segments = len(current_chunk) - a_end

i -= num_unused_segments

# Actual next

else:

is_random_next = False

for j in range(a_end, len(current_chunk)):

tokens_b.extend(current_chunk[j])

truncate_seq_pair(tokens_a, tokens_b, max_num_tokens, rng)

assert len(tokens_a) >= 1

assert len(tokens_b) >= 1

# 构建tokens和segment_ids

tokens = []

segment_ids = []

tokens.append("[CLS]")

segment_ids.append(0)

for token in tokens_a:

tokens.append(token)

segment_ids.append(0)

tokens.append("[SEP]")

segment_ids.append(0)

for token in tokens_b:

tokens.append(token)

segment_ids.append(1)

tokens.append("[SEP]")

segment_ids.append(1)

(tokens, masked_lm_positions,

masked_lm_labels) = create_masked_lm_predictions(

tokens, masked_lm_prob, max_predictions_per_seq, vocab_words, rng)

instance = TrainingInstance(

tokens=tokens,

segment_ids=segment_ids,

is_random_next=is_random_next,

masked_lm_positions=masked_lm_positions,

masked_lm_labels=masked_lm_labels)

instances.append(instance)

current_chunk = []

current_length = 0

i += 1

return instances

到这里,数据准备就剩下创建MASKcreate_masked_lm_predictions和构建最终的训练集的类TrainingInstance.

以下是create_masked_lm_predictions的函数构建,解释很详细了。

# 构建mask 返回token mask位置 label值

def create_masked_lm_predictions(tokens, masked_lm_prob,

max_predictions_per_seq, vocab_words, rng):

"""Creates the predictions for the masked LM objective."""

cand_indexes = []

for (i, token) in enumerate(tokens):

if token == "[CLS]" or token == "[SEP]":

continue

# Whole Word Masking means that if we mask all of the wordpieces

# corresponding to an original word. When a word has been split into

# WordPieces, the first token does not have any marker and any subsequence

# tokens are prefixed with ##. So whenever we see the ## token, we

# append it to the previous set of word indexes.

#

# Note that Whole Word Masking does *not* change the training code

# at all -- we still predict each WordPiece independently, softmaxed

# over the entire vocabulary.

if (FLAGS.do_whole_word_mask and len(cand_indexes) >= 1 and

token.startswith("##")):

# 将##为前缀的加到前一个字的列表中,如果是做整词预测

cand_indexes[-1].append(i)

else:

cand_indexes.append([i])

rng.shuffle(cand_indexes)

output_tokens = list(tokens)

# 确定需要预测的字数量

num_to_predict = min(max_predictions_per_seq,

max(1, int(round(len(tokens) * masked_lm_prob))))

masked_lms = []

covered_indexes = set()

for index_set in cand_indexes:

if len(masked_lms) >= num_to_predict:

break

# If adding a whole-word mask would exceed the maximum number of

# predictions, then just skip this candidate.

# 如果加上这整个单词超过最大预测数,则跳过这个候选词

if len(masked_lms) + len(index_set) > num_to_predict:

continue

is_any_index_covered = False

# 是否是否有字已经包含在非重复集合里面

for index in index_set:

if index in covered_indexes:

is_any_index_covered = True

break

if is_any_index_covered:

continue

for index in index_set:

covered_indexes.add(index)

masked_token = None

# 80% of the time, replace with [MASK]

if rng.random() < 0.8:

masked_token = "[MASK]"

else:

# 10% of the time, keep original

if rng.random() < 0.5:

masked_token = tokens[index]

# 10% of the time, replace with random word

else:

masked_token = vocab_words[rng.randint(0, len(vocab_words) - 1)]

# 替换成相应的masked_token

output_tokens[index] = masked_token

masked_lms.append(MaskedLmInstance(index=index, label=tokens[index]))

assert len(masked_lms) <= num_to_predict

masked_lms = sorted(masked_lms, key=lambda x: x.index)

masked_lm_positions = []

masked_lm_labels = []

for p in masked_lms:

masked_lm_positions.append(p.index)

masked_lm_labels.append(p.label)

return (output_tokens, masked_lm_positions, masked_lm_labels)

下面是构建训练集定制类,添加了打印训练样本信息的函数。

class TrainingInstance(object):

"""A single training instance (sentence pair)."""

def __init__(self, tokens, segment_ids, masked_lm_positions, masked_lm_labels,

is_random_next):

self.tokens = tokens

self.segment_ids = segment_ids

self.is_random_next = is_random_next

self.masked_lm_positions = masked_lm_positions

self.masked_lm_labels = masked_lm_labels

def __str__(self):

s = ""

s += "tokens: %s\n" % (" ".join(

[tokenization.printable_text(x) for x in self.tokens]))

s += "segment_ids: %s\n" % (" ".join([str(x) for x in self.segment_ids]))

s += "is_random_next: %s\n" % self.is_random_next

s += "masked_lm_positions: %s\n" % (" ".join(

[str(x) for x in self.masked_lm_positions]))

s += "masked_lm_labels: %s\n" % (" ".join(

[tokenization.printable_text(x) for x in self.masked_lm_labels]))

s += "\n"

return s

def __repr__(self):

return self.__str__()

5. 预训练 pre-training

5.1 主函数 main()

预训练的主函数比较新的是进一步封装了训练的硬件信息为estimator,便于训练设备的切换(如果没有TPU,使用GPU或CPU),estimator执行训练和评估过程。

主函数调用了两个函数:模型构建函数和输入构建函数,接下来看一下。

def main(_):

tf.logging.set_verbosity(tf.logging.INFO) # 设定日志输出类型

if not FLAGS.do_train and not FLAGS.do_eval:

raise ValueError("At least one of `do_train` or `do_eval` must be True.")

bert_config = modeling.BertConfig.from_json_file(FLAGS.bert_config_file)

tf.gfile.MakeDirs(FLAGS.output_dir)

input_files = []

for input_pattern in FLAGS.input_file.split(","):

input_files.extend(tf.gfile.Glob(input_pattern))

tf.logging.info("*** Input Files ***")

for input_file in input_files:

tf.logging.info(" %s" % input_file)

tpu_cluster_resolver = None

if FLAGS.use_tpu and FLAGS.tpu_name:

tpu_cluster_resolver = tf.contrib.cluster_resolver.TPUClusterResolver(

FLAGS.tpu_name, zone=FLAGS.tpu_zone, project=FLAGS.gcp_project)

is_per_host = tf.contrib.tpu.InputPipelineConfig.PER_HOST_V2

run_config = tf.contrib.tpu.RunConfig(

cluster=tpu_cluster_resolver,

master=FLAGS.master,

model_dir=FLAGS.output_dir,

save_checkpoints_steps=FLAGS.save_checkpoints_steps,

tpu_config=tf.contrib.tpu.TPUConfig(

iterations_per_loop=FLAGS.iterations_per_loop,

num_shards=FLAGS.num_tpu_cores,

per_host_input_for_training=is_per_host))

model_fn = model_fn_builder(

bert_config=bert_config,

init_checkpoint=FLAGS.init_checkpoint,

learning_rate=FLAGS.learning_rate,

num_train_steps=FLAGS.num_train_steps,

num_warmup_steps=FLAGS.num_warmup_steps,

use_tpu=FLAGS.use_tpu,

use_one_hot_embeddings=FLAGS.use_tpu)

# If TPU is not available, this will fall back to normal Estimator on CPU

# or GPU.

estimator = tf.contrib.tpu.TPUEstimator(

use_tpu=FLAGS.use_tpu,

model_fn=model_fn,

config=run_config,

train_batch_size=FLAGS.train_batch_size,

eval_batch_size=FLAGS.eval_batch_size)

if FLAGS.do_train:

tf.logging.info("***** Running training *****")

tf.logging.info(" Batch size = %d", FLAGS.train_batch_size)

train_input_fn = input_fn_builder(

input_files=input_files,

max_seq_length=FLAGS.max_seq_length,

max_predictions_per_seq=FLAGS.max_predictions_per_seq,

is_training=True)

estimator.train(input_fn=train_input_fn, max_steps=FLAGS.num_train_steps)

if FLAGS.do_eval:

tf.logging.info("***** Running evaluation *****")

tf.logging.info(" Batch size = %d", FLAGS.eval_batch_size)

eval_input_fn = input_fn_builder(

input_files=input_files,

max_seq_length=FLAGS.max_seq_length,

max_predictions_per_seq=FLAGS.max_predictions_per_seq,

is_training=False)

result = estimator.evaluate(

input_fn=eval_input_fn, steps=FLAGS.max_eval_steps)

output_eval_file = os.path.join(FLAGS.output_dir, "eval_results.txt")

with tf.gfile.GFile(output_eval_file, "w") as writer:

tf.logging.info("***** Eval results *****")

for key in sorted(result.keys()):

tf.logging.info(" %s = %s", key, str(result[key]))

writer.write("%s = %s\n" % (key, str(result[key])))

5.2 模型构建 model_fn_builder

分为两种模式:

- 训练模式,分别计算MASK和下一句预测的损失,将损失用于计算梯度,更新梯度。

- 评估模式,主要计算模型效果的各种指标

这里不详细叙述(有一些细节我也没仔细看)。

def model_fn_builder(bert_config, init_checkpoint, learning_rate,

num_train_steps, num_warmup_steps, use_tpu,

use_one_hot_embeddings):

"""Returns `model_fn` closure for TPUEstimator."""

def model_fn(features, labels, mode, params): # pylint: disable=unused-argument

"""The `model_fn` for TPUEstimator."""

tf.logging.info("*** Features ***")

for name in sorted(features.keys()):

tf.logging.info(" name = %s, shape = %s" % (name, features[name].shape))

input_ids = features["input_ids"]

input_mask = features["input_mask"]

segment_ids = features["segment_ids"]

masked_lm_positions = features["masked_lm_positions"]

masked_lm_ids = features["masked_lm_ids"]

masked_lm_weights = features["masked_lm_weights"]

next_sentence_labels = features["next_sentence_labels"]

is_training = (mode == tf.estimator.ModeKeys.TRAIN)

model = modeling.BertModel(

config=bert_config,

is_training=is_training,

input_ids=input_ids,

input_mask=input_mask,

token_type_ids=segment_ids,

use_one_hot_embeddings=use_one_hot_embeddings)

(masked_lm_loss,

masked_lm_example_loss, masked_lm_log_probs) = get_masked_lm_output(

bert_config, model.get_sequence_output(), model.get_embedding_table(),

masked_lm_positions, masked_lm_ids, masked_lm_weights)

(next_sentence_loss, next_sentence_example_loss,

next_sentence_log_probs) = get_next_sentence_output(

bert_config, model.get_pooled_output(), next_sentence_labels)

total_loss = masked_lm_loss + next_sentence_loss

tvars = tf.trainable_variables()

initialized_variable_names = {}

scaffold_fn = None

if init_checkpoint:

(assignment_map, initialized_variable_names

) = modeling.get_assignment_map_from_checkpoint(tvars, init_checkpoint)

if use_tpu:

def tpu_scaffold():

tf.train.init_from_checkpoint(init_checkpoint, assignment_map)

return tf.train.Scaffold()

scaffold_fn = tpu_scaffold

else:

tf.train.init_from_checkpoint(init_checkpoint, assignment_map)

tf.logging.info("**** Trainable Variables ****")

for var in tvars:

init_string = ""

if var.name in initialized_variable_names:

init_string = ", *INIT_FROM_CKPT*"

tf.logging.info(" name = %s, shape = %s%s", var.name, var.shape,

init_string)

output_spec = None

if mode == tf.estimator.ModeKeys.TRAIN:

# 根据损失计算梯度

train_op = optimization.create_optimizer(

total_loss, learning_rate, num_train_steps, num_warmup_steps, use_tpu)

output_spec = tf.contrib.tpu.TPUEstimatorSpec(

mode=mode,

loss=total_loss,

train_op=train_op,

scaffold_fn=scaffold_fn)

elif mode == tf.estimator.ModeKeys.EVAL:

def metric_fn(masked_lm_example_loss, masked_lm_log_probs, masked_lm_ids,

masked_lm_weights, next_sentence_example_loss,

next_sentence_log_probs, next_sentence_labels):

"""Computes the loss and accuracy of the model."""

masked_lm_log_probs = tf.reshape(masked_lm_log_probs,

[-1, masked_lm_log_probs.shape[-1]])

masked_lm_predictions = tf.argmax(

masked_lm_log_probs, axis=-1, output_type=tf.int32)

masked_lm_example_loss = tf.reshape(masked_lm_example_loss, [-1])

masked_lm_ids = tf.reshape(masked_lm_ids, [-1])

masked_lm_weights = tf.reshape(masked_lm_weights, [-1])

masked_lm_accuracy = tf.metrics.accuracy(

labels=masked_lm_ids,

predictions=masked_lm_predictions,

weights=masked_lm_weights)

masked_lm_mean_loss = tf.metrics.mean(

values=masked_lm_example_loss, weights=masked_lm_weights)

next_sentence_log_probs = tf.reshape(

next_sentence_log_probs, [-1, next_sentence_log_probs.shape[-1]])

next_sentence_predictions = tf.argmax(

next_sentence_log_probs, axis=-1, output_type=tf.int32)

next_sentence_labels = tf.reshape(next_sentence_labels, [-1])

next_sentence_accuracy = tf.metrics.accuracy(

labels=next_sentence_labels, predictions=next_sentence_predictions)

next_sentence_mean_loss = tf.metrics.mean(

values=next_sentence_example_loss)

return {

"masked_lm_accuracy": masked_lm_accuracy,

"masked_lm_loss": masked_lm_mean_loss,

"next_sentence_accuracy": next_sentence_accuracy,

"next_sentence_loss": next_sentence_mean_loss,

}

eval_metrics = (metric_fn, [

masked_lm_example_loss, masked_lm_log_probs, masked_lm_ids,

masked_lm_weights, next_sentence_example_loss,

next_sentence_log_probs, next_sentence_labels

])

output_spec = tf.contrib.tpu.TPUEstimatorSpec(

mode=mode,

loss=total_loss,

eval_metrics=eval_metrics,

scaffold_fn=scaffold_fn)

else:

raise ValueError("Only TRAIN and EVAL modes are supported: %s" % (mode))

return output_spec

return model_fn

5.3 构建训练集 input_fn_builder

采用from_tensor_slices构建数据集,可以参考 Tensorflow tf.data.Dataset

通过parallel_interleave()映射tf.data.TFRecordDataset并行生成嵌套数据集。

def input_fn_builder(input_files,

max_seq_length,

max_predictions_per_seq,

is_training,

num_cpu_threads=4):

"""Creates an `input_fn` closure to be passed to TPUEstimator."""

def input_fn(params):

"""The actual input function."""

batch_size = params["batch_size"]

# 定义解析字典

name_to_features = {

"input_ids":

tf.FixedLenFeature([max_seq_length], tf.int64),

"input_mask":

tf.FixedLenFeature([max_seq_length], tf.int64),

"segment_ids":

tf.FixedLenFeature([max_seq_length], tf.int64),

"masked_lm_positions":

tf.FixedLenFeature([max_predictions_per_seq], tf.int64),

"masked_lm_ids":

tf.FixedLenFeature([max_predictions_per_seq], tf.int64),

"masked_lm_weights":

tf.FixedLenFeature([max_predictions_per_seq], tf.float32),

"next_sentence_labels":

tf.FixedLenFeature([1], tf.int64),

}

# For training, we want a lot of parallel reading and shuffling.

# For eval, we want no shuffling and parallel reading doesn't matter.

if is_training:

d = tf.data.Dataset.from_tensor_slices(tf.constant(input_files))

d = d.repeat()

d = d.shuffle(buffer_size=len(input_files))

# `cycle_length` is the number of parallel files that get read.

cycle_length = min(num_cpu_threads, len(input_files))

# `sloppy` mode means that the interleaving is not exact. This adds

# even more randomness to the training pipeline.

d = d.apply(

tf.contrib.data.parallel_interleave(

tf.data.TFRecordDataset,

sloppy=is_training,

cycle_length=cycle_length))

d = d.shuffle(buffer_size=100)

else:

d = tf.data.TFRecordDataset(input_files)

# Since we evaluate for a fixed number of steps we don't want to encounter

# out-of-range exceptions.

d = d.repeat()

# We must `drop_remainder` on training because the TPU requires fixed

# size dimensions. For eval, we assume we are evaluating on the CPU or GPU

# and we *don't* want to drop the remainder, otherwise we wont cover

# every sample.

d = d.apply(

tf.contrib.data.map_and_batch(

lambda record: _decode_record(record, name_to_features),

batch_size=batch_size,

num_parallel_batches=num_cpu_threads,

drop_remainder=True))

return d

return input_fn

至此,整体的源代码预训练初步看完了,后续结合fine-tuning再补充。

1. [NLP自然语言处理]谷歌BERT模型深度解析

2. tf.clip_by_global_norm理解

3. TensorFlow学习(十一):保存TFRecord文件