[11] TF-IDF on the Hadoop Big Data Framework: Principles and Code Implementation

Contents

- I. Introduction to TF-IDF

- Environment used for the code in this article:

- 1. Term Frequency (TF)

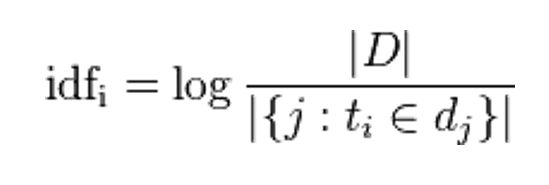

- 2. Inverse Document Frequency (IDF)

- 3. TF-IDF

- 4. A Worked Code Example on the Hadoop Framework

- 5. Java Implementation

- Results

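I. Introduction to TF-IDF

- TF-IDF (term frequency–inverse document frequency) is a weighting technique commonly used in information retrieval and text mining.

- TF-IDF is a statistical method for evaluating how important a term is to one document in a collection or corpus. A term's importance increases in proportion to the number of times it appears in the document, and decreases in proportion to how frequently it appears across the corpus.

- Variants of TF-IDF weighting are widely used by search engines as a measure, or ranking, of the relevance between a document and a user query. Besides TF-IDF, Internet search engines also use ranking methods based on link analysis, such as PageRank (PR), to decide the order in which documents appear in search results.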

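- Consider an everyday scenario. Suppose we have 10 articles that contain the keywords 王者 ("king"), 荣耀 ("glory"), 露娜 ("Luna"), and 连招 ("combo"), and each keyword occurs a different number of times across the articles. A user might search in one of four ways:

First: search only for 王者.

Second: search for 王者 and 荣耀.

Third: search for 王者, 荣耀, and 露娜.

Fourth: search for all four keywords: 王者, 荣耀, 露娜, and 连招.

The problem: all four searches match the same 10 articles, yet each search has a different emphasis. Compared with the first search, the second is interested not only in 王者 but also puts extra weight on 荣耀. The articles shown to the user must therefore contain both 王者 and 荣耀, and the articles in which 荣耀 carries the most weight should be ranked first. TF-IDF, covered next, is the technique that makes this ranking possible.

To summarize the pattern in this scenario: (1) every term maps to the pages in which it appears; (2) each additional query term narrows the set of candidate pages; (3) the final order is determined by the weight of each term on each page.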
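The three-step pattern can be sketched with a small in-memory index. This is only an illustration: the class `WeightedIndexSketch`, its methods, and the idea of summing per-term weights into a page score are assumptions made for the example, not something the article prescribes.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

// Hypothetical in-memory index: term -> (pageId -> weight of that term on that page).
final class WeightedIndexSketch {
    private final Map<String, Map<String, Double>> postings = new HashMap<>();

    void add(String term, String pageId, double weight) {
        postings.computeIfAbsent(term, t -> new HashMap<>()).put(pageId, weight);
    }

    // Returns the pages containing every query term, highest total weight first.
    List<String> search(List<String> queryTerms) {
        Set<String> candidates = null;
        for (String term : queryTerms) {
            Set<String> pages = postings.getOrDefault(term, new HashMap<>()).keySet();
            if (candidates == null) {
                candidates = new HashSet<>(pages);   // step 1: pages for the first term
            } else {
                candidates.retainAll(pages);         // step 2: each extra term narrows the set
            }
        }
        if (candidates == null) {
            return new ArrayList<>();
        }
        Map<String, Double> score = new HashMap<>();
        for (String page : candidates) {
            double total = 0.0;
            for (String term : queryTerms) {
                total += postings.get(term).get(page);
            }
            score.put(page, total);
        }
        List<String> ranked = new ArrayList<>(candidates);
        // step 3: order by summed weight, descending
        ranked.sort((a, b) -> Double.compare(score.get(b), score.get(a)));
        return ranked;
    }
}
```

With weights produced by TF-IDF, a page where the second query term is rare across the corpus but frequent on that page rises to the top, which is the behavior the scenario asks for.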

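Environment used for the code in this article:

- Hadoop-3.1.2

- Four hosts

- Two NameNodes (NN) in a high-availability (HA) configuration

- Two ResourceManagers (RM) in a high-availability (HA) configuration

- MapReduce as the offline (batch) computing framework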

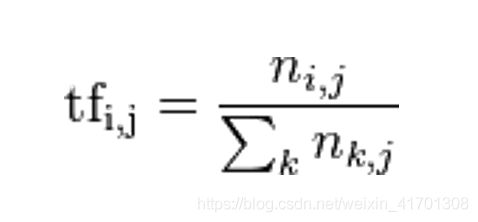

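1. Term Frequency (TF)

- Term frequency (TF) is the number of times a given term appears in a given document. This count is usually normalized (the numerator is generally smaller than the denominator, unlike in IDF) to avoid a bias toward long documents. (The same term may have a higher raw count in a long document than in a short one, regardless of how important the term is.)

- Note: $n_{i,j}$ is the number of occurrences of the term in document $d_j$, and the denominator is the sum of the occurrences of all terms in document $d_j$.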
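The formula that this note describes is not reproduced in the text. Assuming the standard normalized definition, which matches the numerator and denominator described in the note, it can be written as:

```latex
\mathrm{tf}_{i,j} = \frac{n_{i,j}}{\sum_{k} n_{k,j}}
```

Here $k$ ranges over all terms that occur in document $d_j$.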

2. Inverse Document Frequency (IDF)

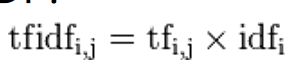
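For readers without the image, a common form of IDF is given below. It is stated here as an assumption, although it is consistent with the `Math.log(count / df)` expression used in `LastMapper` later in this article: $|D|$ is the total number of documents and the denominator is the number of documents that contain term $t_i$.

```latex
\mathrm{idf}_{i} = \log \frac{|D|}{\left|\{\, j : t_i \in d_j \,\}\right|}
```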

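3. TF-IDF

- A high term frequency within a particular document, combined with a low document frequency of that term across the whole collection, produces a high TF-IDF weight. TF-IDF therefore tends to filter out common terms and keep the important ones.

- The main idea of TF-IDF: if a word or phrase has a high TF in one article and rarely appears in other articles, it is considered to discriminate well between categories and is suitable for classification.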

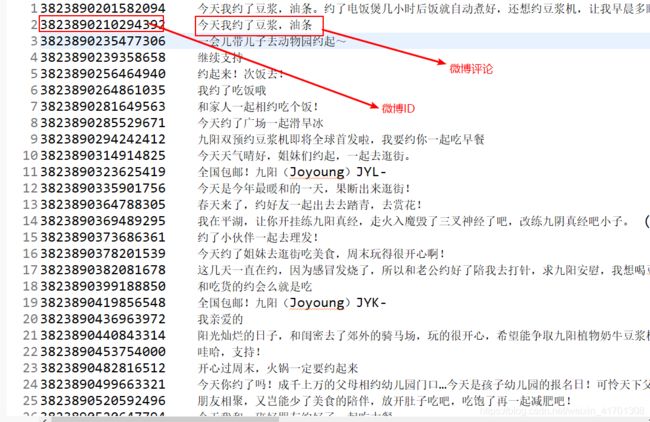
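A small plain-Java sketch may help make the filtering effect concrete. It assumes the normalized TF and the log(N / DF) form of IDF; the class `TfIdfSketch`, its method names, and the three-document corpus are made up for illustration.

```java
import java.util.Arrays;
import java.util.List;

// Hypothetical helper names; the formulas are normalized TF and log(N / DF).
final class TfIdfSketch {
    // Share of the document's tokens that are the given term.
    static double tf(String term, List<String> doc) {
        long hits = doc.stream().filter(term::equals).count();
        return doc.isEmpty() ? 0.0 : (double) hits / doc.size();
    }

    // log(total documents / documents containing the term); 0 if the term never occurs.
    static double idf(String term, List<List<String>> corpus) {
        long df = corpus.stream().filter(d -> d.contains(term)).count();
        return df == 0 ? 0.0 : Math.log((double) corpus.size() / df);
    }

    static double tfIdf(String term, List<String> doc, List<List<String>> corpus) {
        return tf(term, doc) * idf(term, corpus);
    }

    public static void main(String[] args) {
        List<List<String>> corpus = Arrays.asList(
                Arrays.asList("the", "soy", "milk", "the"),
                Arrays.asList("the", "fried", "dough"),
                Arrays.asList("the", "tea"));
        List<String> first = corpus.get(0);
        System.out.println(tfIdf("the", first, corpus));  // in every document, so the weight is 0
        System.out.println(tfIdf("soy", first, corpus));  // only in this document, so the weight is > 0
    }
}
```

Even though "the" is the most frequent token in the first document, its IDF is log(3/3) = 0, so it drops out, while "soy" keeps a positive weight.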

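4. A Worked Code Example on the Hadoop Framework

2. Business requirement: from the source data, build a TF-IDF document for the content, and compute the TF-IDF value of the keyword 豆浆 ("soy milk") together with the posts in which it appears.

3. Design approach on the Hadoop framework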

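- Pass 1: term-frequency count plus total document count

  - map: for term frequency, key: term + document ID, value: 1; for the document total, key: count, value: 1

  - partition: 4 reducers; reducers 0 to 2 compute term frequencies in parallel, and reducer 3 computes the document total

  - reduce: reducers 0 to 2 sum the values per key; reducer 3 sums the count records

- Pass 2: per-term document count, used for the inverse document frequency

  - map: key: term, value: 1

  - reduce: sum

- Pass 3: combine the results of passes 1 and 2 to compute each term's TF-IDF

  - map: the input is the TF output of pass 1; setup loads (a) the DF table and (b) the document total; map computes TF-IDF and emits key: document ID, value: term + TF-IDF

  - reduce: for each document (key), build the list of its terms with their TF-IDF values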
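Before reading the MapReduce classes, it can help to see the same three passes run in memory. The sketch below is an assumption-laden stand-in, not the article's code: `ThreePassSketch` is a hypothetical name, whitespace splitting replaces the real Chinese tokenizer, and post IDs are assumed to contain no underscore.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.TreeMap;

// In-memory stand-in for the three jobs; whitespace splitting replaces the real tokenizer.
final class ThreePassSketch {
    // posts: id -> text. Returns id -> (term -> tf * log(N / df)).
    static Map<String, Map<String, Double>> run(Map<String, String> posts) {
        // Pass 1: count each "term_id" pair and the total number of posts.
        Map<String, Integer> tf = new TreeMap<>();
        int total = 0;
        for (Map.Entry<String, String> post : posts.entrySet()) {
            for (String term : post.getValue().trim().split("\\s+")) {
                if (!term.isEmpty()) {
                    tf.merge(term + "_" + post.getKey(), 1, Integer::sum);
                }
            }
            total++;
        }
        // Pass 2: each distinct "term_id" key contributes 1 to that term's DF.
        Map<String, Integer> df = new HashMap<>();
        for (String key : tf.keySet()) {
            df.merge(key.substring(0, key.lastIndexOf('_')), 1, Integer::sum);
        }
        // Pass 3: join TF with DF and the total, grouped by post id.
        Map<String, Map<String, Double>> result = new TreeMap<>();
        for (Map.Entry<String, Integer> e : tf.entrySet()) {
            int cut = e.getKey().lastIndexOf('_');
            String term = e.getKey().substring(0, cut);
            String id = e.getKey().substring(cut + 1);
            double weight = e.getValue() * Math.log((double) total / df.get(term));
            result.computeIfAbsent(id, k -> new TreeMap<>()).put(term, weight);
        }
        return result;
    }
}
```

The intermediate maps play the role of the HDFS output directories: `tf` corresponds to the first job's term output, `total` to its count record, and `df` to the second job's output.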

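5. Java Implementation

- FirstJob class

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

// Driver for the first job: term counts per post plus the total number of posts

public class FirstJob {

public static void main(String[] args) {

Configuration conf = new Configuration();

conf.set("mapreduce.app-submission.cross-platform", "true");

conf.set("mapreduce.framework.name", "local");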

try {

FileSystem fs = FileSystem.get(conf);

Job job = Job.getInstance(conf);

job.setJarByClass(FirstJob.class);

job.setJobName("weibo1");

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

job.setNumReduceTasks(4);

job.setPartitionerClass(FirstPartition.class);

job.setMapperClass(FirstMapper.class);

job.setCombinerClass(FirstReduce.class);

job.setReducerClass(FirstReduce.class);

FileInputFormat.addInputPath(job, new Path("/data/tfidf/input/"));

Path path = new Path("/data/tfidf/output/weibo1");

if (fs.exists(path)) {

fs.delete(path, true);

}

FileOutputFormat.setOutputPath(job, path);

boolean f = job.waitForCompletion(true);

if (f) {

System.out.println("Job completed successfully");

}

} catch (Exception e) {

e.printStackTrace();

}

}

}

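- FirstMapper class

import java.io.IOException;

import java.io.StringReader;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import org.wltea.analyzer.core.IKSegmenter;

import org.wltea.analyzer.core.Lexeme;

public class FirstMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

@Override

protected void map(LongWritable key, Text value, Context context)

throws IOException, InterruptedException {

// Sample input line (post id, tab, post text): 3823890210294392 今天我约了豆浆,油条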

String[] v = value.toString().trim().split("\t");

if (v.length >= 2) {

String id = v[0].trim();

String content = v[1].trim();

StringReader sr = new StringReader(content);

IKSegmenter ikSegmenter = new IKSegmenter(sr, true);

Lexeme word = null;

while ((word = ikSegmenter.next()) != null) {

String w = word.getLexemeText();

context.write(new Text(w + "_" + id), new IntWritable(1));

//今天_3823890210294392 1

}

context.write(new Text("count"), new IntWritable(1));

//count 1

} else {

System.out.println(value.toString() + "-------------");

}

}

}

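- FirstPartition class

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.lib.partition.HashPartitioner;

/**

* Custom partitioner for the first MapReduce job.

* @author root

*

*/

public class FirstPartition extends HashPartitioner<Text, IntWritable>{

@Override

public int getPartition(Text key, IntWritable value, int reduceCount) {

// Send the "count" records to the last reducer (partition 3 when there are 4 reducers)

if(key.equals(new Text("count")))

return reduceCount - 1;

else

// Hash all term_id keys across the remaining reducers

return super.getPartition(key, value, reduceCount-1);

}

}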
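The routing rule can be tried out without a cluster. The sketch below mirrors it in plain Java; `PartitionSketch` is a hypothetical name, and `String.hashCode()` stands in for `Text.hashCode()`, so the partition chosen for a given term will generally differ from what Hadoop picks. Only the rule itself carries over: the count key goes to the last reducer and everything else is hashed over the remaining ones.

```java
// Plain-Java mirror of the routing rule; String.hashCode() stands in for Text.hashCode().
final class PartitionSketch {
    static int partitionFor(String key, int numReducers) {
        if ("count".equals(key)) {
            return numReducers - 1;                      // dedicated reducer for the post total
        }
        // Same formula as Hadoop's HashPartitioner, applied to the remaining reducers.
        return (key.hashCode() & Integer.MAX_VALUE) % (numReducers - 1);
    }

    public static void main(String[] args) {
        System.out.println(partitionFor("count", 4));    // 3
        System.out.println(partitionFor("soymilk_3823890201582094", 4));
    }
}
```

Masking with `Integer.MAX_VALUE` clears the sign bit, so a negative hash code can never produce a negative partition number.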

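- FirstReduce class

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

// Sums the 1s per key; also used as the combiner

public class FirstReduce extends Reducer<Text, IntWritable, Text, IntWritable> {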

protected void reduce(Text key, Iterable<IntWritable> iterable,

Context context) throws IOException, InterruptedException {

int sum = 0;

for (IntWritable i : iterable) {

sum = sum + i.get();

}

if (key.equals(new Text("count"))) {

System.out.println(key.toString() + "___________" + sum);

}

context.write(key, new IntWritable(sum));

}

}

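- TwoJob class

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

// Driver for the second job: document frequency (DF) of each term

public class TwoJob {

public static void main(String[] args) {

Configuration conf =new Configuration();

conf.set("mapreduce.app-submission.cross-platform", "true");

conf.set("mapreduce.framework.name", "local");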

try {

Job job =Job.getInstance(conf);

job.setJarByClass(TwoJob.class);

job.setJobName("weibo2");

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

job.setMapperClass(TwoMapper.class);

job.setCombinerClass(TwoReduce.class);

job.setReducerClass(TwoReduce.class);

// HDFS directory the job reads its input from (the output of the first job)

FileInputFormat.addInputPath(job, new Path("/data/tfidf/output/weibo1"));

FileOutputFormat.setOutputPath(job, new Path("/data/tfidf/output/weibo2"));

boolean f= job.waitForCompletion(true);

if(f){

System.out.println("Job completed successfully");

}

} catch (Exception e) {

e.printStackTrace();

}

}

}

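- TwoMapper class

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.lib.input.FileSplit;

// Computes DF: the number of Weibo posts in which each term appears.

public class TwoMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

@Override

protected void map(LongWritable key, Text value, Context context)

throws IOException, InterruptedException {

// Get the input split handled by the current mapper task

FileSplit fs = (FileSplit) context.getInputSplit();

// Skip part-r-00003, which holds the post total rather than term counts

if (!fs.getPath().getName().contains("part-r-00003")) {

// Sample input line: 豆浆_3823890201582094 3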

String[] v = value.toString().trim().split("\t");

if (v.length >= 2) {

String[] ss = v[0].split("_");

if (ss.length >= 2) {

String w = ss[0];

context.write(new Text(w), new IntWritable(1));

}

} else {

System.out.println(value.toString() + "-------------");

}

}

}

}

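- TwoReduce class

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

public class TwoReduce extends Reducer<Text, IntWritable, Text, IntWritable> {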

protected void reduce(Text key, Iterable<IntWritable> arg1, Context context)

throws IOException, InterruptedException {

int sum = 0;

for (IntWritable i : arg1) {

sum = sum + i.get();

}

context.write(key, new IntWritable(sum));

}

}

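- LastJob class

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

// Driver for the third job: joins TF, DF and the post total into TF-IDF

public class LastJob {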

public static void main(String[] args) {

Configuration conf =new Configuration();

// conf.set("mapred.jar", "C:\\Users\\root\\Desktop\\tfidf.jar");

conf.set("mapreduce.job.jar", "C:\\Users\\root\\Desktop\\tfidf.jar");

conf.set("mapreduce.app-submission.cross-platform", "true");

try {

FileSystem fs =FileSystem.get(conf);

Job job =Job.getInstance(conf);

job.setJarByClass(LastJob.class);

job.setJobName("weibo3");

job.setJar("C:\\Users\\root\\Desktop\\tfidf.jar");

//2.5

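// Add the file holding the total post count to the distributed cache

job.addCacheFile(new Path("/data/tfidf/output/weibo1/part-r-00003").toUri());

// Add the DF output to the distributed cache

job.addCacheFile(new Path("/data/tfidf/output/weibo2/part-r-00000").toUri());

// Set the job's output key and value types (the map output uses the same types here)

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(Text.class);

job.setMapperClass(LastMapper.class);

job.setReducerClass(LastReduce.class);

// HDFS directory the job reads its input from (the output of the first job)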

FileInputFormat.addInputPath(job, new Path("/data/tfidf/output/weibo1"));

Path outpath =new Path("/data/tfidf/output/weibo3");

if(fs.exists(outpath)){

fs.delete(outpath, true);

}

FileOutputFormat.setOutputPath(job,outpath );

boolean f= job.waitForCompletion(true);

if(f){

System.out.println("Job completed successfully");

}

} catch (Exception e) {

e.printStackTrace();

}

}

}

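- LastMapper class

import java.io.BufferedReader;

import java.io.FileReader;

import java.io.IOException;

import java.net.URI;

import java.text.NumberFormat;

import java.util.HashMap;

import java.util.Map;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.lib.input.FileSplit;

public class LastMapper extends Mapper<LongWritable, Text, Text, Text> {

// Holds the total number of Weibo posts

public static Map<String, Integer> cmap = null;

// Holds the DF of each term

public static Map<String, Integer> df = null;

// Runs once before any map() call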

protected void setup(Context context) throws IOException,

InterruptedException {

System.out.println("******************");

if (cmap == null || cmap.size() == 0 || df == null || df.size() == 0) {

URI[] ss = context.getCacheFiles();

if (ss != null) {

for (int i = 0; i < ss.length; i++) {

URI uri = ss[i];

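if (uri.getPath().endsWith("part-r-00003")) {// total number of Weibo posts

Path path = new Path(uri.getPath());

// FileSystem fs

// =FileSystem.get(context.getConfiguration());

// fs.open(path);

// Read the local copy of the cached file by its file name

BufferedReader br = new BufferedReader(new FileReader(path.getName()));

String line = br.readLine();

// Guard against an empty file before inspecting the line

if (line != null && line.startsWith("count")) {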

String[] ls = line.split("\t");

cmap = new HashMap<String, Integer>();

cmap.put(ls[0], Integer.parseInt(ls[1].trim()));

}

br.close();

} else if (uri.getPath().endsWith("part-r-00000")) {// DF of each term

df = new HashMap<String, Integer>();

Path path = new Path(uri.getPath());

BufferedReader br = new BufferedReader(new FileReader(path.getName()));

String line;

while ((line = br.readLine()) != null) {

String[] ls = line.split("\t");

df.put(ls[0], Integer.parseInt(ls[1].trim()));

}

br.close();

}

}

}

}

}

protected void map(LongWritable key, Text value, Context context)

throws IOException, InterruptedException {

FileSplit fs = (FileSplit) context.getInputSplit();

// System.out.println("--------------------");

if (!fs.getPath().getName().contains("part-r-00003")) {

// Sample input line: 豆浆_3823930429533207 2

String[] v = value.toString().trim().split("\t");

if (v.length >= 2) {

int tf = Integer.parseInt(v[1].trim());// raw term count (TF)

String[] ss = v[0].split("_");

if (ss.length >= 2) {

String w = ss[0];

String id = ss[1];

// Divide as double: int / int would truncate N / DF before the logarithm

double s = tf * Math.log(cmap.get("count").doubleValue() / df.get(w));

NumberFormat nf = NumberFormat.getInstance();

nf.setMaximumFractionDigits(5);

context.write(new Text(id), new Text(w + ":" + nf.format(s)));

}

} else {

System.out.println(value.toString() + "-------------");

}

}

}

}
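One detail in the weight calculation deserves a closer look. When the post total and the DF are both `int` values, `total / df` is truncated before `Math.log` sees it, so the ratio needs a floating-point operand. The sketch below isolates the effect; `LogRatioSketch` and the numbers 10, 4 and 6 are made up for illustration.

```java
final class LogRatioSketch {
    // Truncating version: both operands are int, so 10 / 4 becomes 2 before the log.
    static double truncated(int total, int df) {
        return Math.log(total / df);
    }

    // Floating-point version: the cast keeps the fractional part of the ratio.
    static double exact(int total, int df) {
        return Math.log((double) total / df);
    }

    public static void main(String[] args) {
        System.out.println(truncated(10, 4));  // log(2)
        System.out.println(exact(10, 4));      // log(2.5)
        System.out.println(truncated(10, 6));  // log(1) = 0.0, the term's weight vanishes
    }
}
```

The last case is the most damaging one: any term that appears in more than half of the posts gets a ratio of 1 under integer division, so its TF-IDF collapses to 0 regardless of its term frequency.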

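- LastReduce class

import java.io.IOException;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

// Concatenates all "term:weight" values of one post into a single tab-separated line

public class LastReduce extends Reducer<Text, Text, Text, Text> {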

protected void reduce(Text key, Iterable<Text> iterable, Context context)

throws IOException, InterruptedException {

StringBuilder sb = new StringBuilder();

for (Text i : iterable) {

sb.append(i.toString() + "\t");

}

context.write(key, new Text(sb.toString()));

}

}
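To meet the business requirement of finding the posts that mention a given keyword together with its weight, the output of the last job can be scanned line by line. The sketch below assumes each line has the shape "id, tab, term:weight, tab, term:weight, ..." as written by `LastReduce`, and that weights use English-style number formatting. `KeywordLookupSketch` and `weightsFor` are hypothetical names, and any lines passed to it in an example are invented rather than real job output.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Assumes each output line looks like "id<TAB>term:weight<TAB>term:weight...".
final class KeywordLookupSketch {
    // Returns post id -> weight for every line that lists the keyword.
    static Map<String, Double> weightsFor(String keyword, List<String> lines) {
        Map<String, Double> hits = new LinkedHashMap<>();
        for (String line : lines) {
            String[] fields = line.trim().split("\t");
            for (int i = 1; i < fields.length; i++) {
                int cut = fields[i].lastIndexOf(':');
                if (cut > 0 && fields[i].substring(0, cut).equals(keyword)) {
                    // NumberFormat may add grouping commas to large values; strip them.
                    String number = fields[i].substring(cut + 1).replace(",", "");
                    hits.put(fields[0], Double.parseDouble(number));
                }
            }
        }
        return hits;
    }
}
```

Sorting the returned map by value in descending order would then give the posts in which the keyword carries the most weight.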