Hadoop 伪分布式运行模式

目录

3 Hadoop 伪分布式运行模式

3.1 启动HDFS 并运行 MapReduce 程序

3.1.1 分析

3.1.2 执行步骤

3.2 启动YARN 并运行 MapReduce 程序

3.2.1 分析

3.2.2 执行步骤

3.3 配置历史服务器

3.3.1 配置mapred-site.xml

3.3.2 启动历史服务器

3.3.3 查看历史服务器是否启动

3.3.4 查看JobHistory

3.4 配置日志的聚集

3.4.1 配置yarn-site.xml

3.4.2 关闭NodeManager 、ResourceManager和HistoryManager

3.4.3 启动NodeManager 、ResourceManager和HistoryManager

3.4.4 删除HDFS上已经存在的输出文件

3.4.5 执行WordCount程序

3.4.6 查看日志

3.5 配置文件说明

3 Hadoop 伪分布式运行模式

3.1 启动HDFS 并运行 MapReduce 程序

3.1.1 分析

(1)配置集群

(2)启动、测试集群增、删、查

(3)执行WordCount案例

3.1.2 执行步骤

3.1.2.1 配置集群

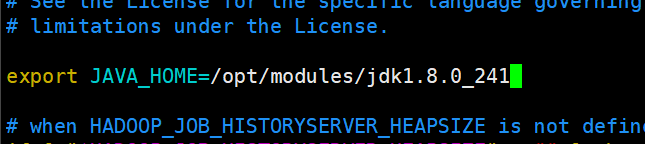

3.1.2.1.1 配置 hadoop-env.sh

[atlingtree@hadoop100 hadoop-2.9.2]$ cd etc/hadoop/

[atlingtree@hadoop100 hadoop]$ vim hadoop-env.sh

将文件中的JAVA_HOME修改成对应的位置,保存退出。

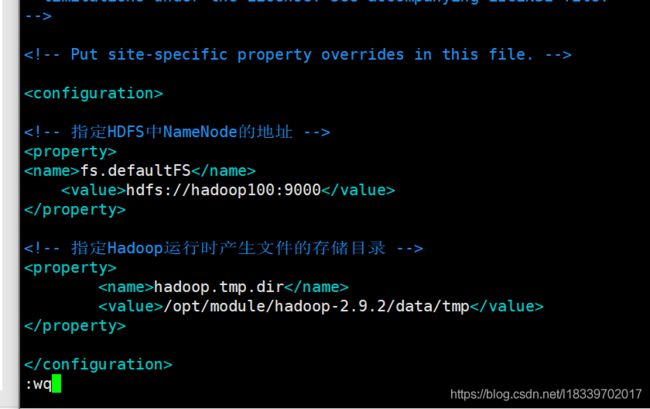

3.1.2.1.2 配置core-site.xml

[atlingtree@hadoop100 hadoop]$ vim core-site.xml

添加如下配置:

fs.defaultFS

hdfs://hadoop100:9000

hadoop.tmp.dir

/opt/module/hadoop-2.9.2/data/tmp

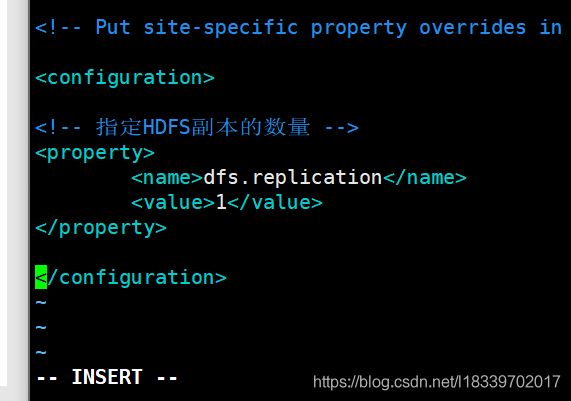

3.1.2.1.3 配置:hdfs-site.xml

[atlingtree@hadoop100 hadoop]$ vim hdfs-site.xml

添加如下配置:

dfs.replication

1

3.1.2.2 启动集群

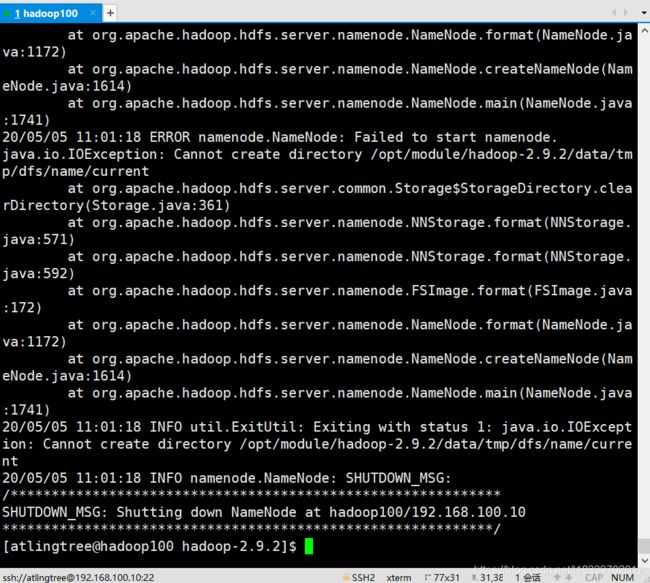

3.1.2.2.1 格式化NameNode

[atlingtree@hadoop100 hadoop]$ cd /opt/modules/hadoop-2.9.2/

[atlingtree@hadoop100 hadoop-2.9.2]$ bin/hdfs namenode -format

3.1.2.2.2 启动NameNode

[atlingtree@hadoop100 hadoop-2.9.2]$ sbin/hadoop-daemon.sh start namenode

starting namenode, logging to /opt/modules/hadoop-2.9.2/logs/hadoop-atlingtree-namenode-hadoop100.out

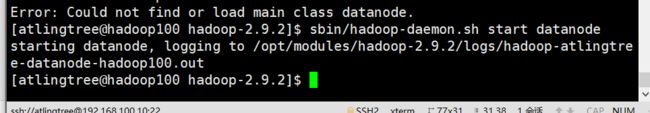

3.1.2.2.3 启动DataNode

[atlingtree@hadoop100 hadoop-2.9.2]$ sbin/hadoop-daemon.sh start datanode

starting datanode, logging to /opt/modules/hadoop-2.9.2/logs/hadoop-atlingtree-datanode-hadoop100.out

3.1.2.3 查看集群

3.1.2.3.1 查看是否启动成功

[atlingtree@hadoop100 hadoop-2.9.2]$ jps

3351 NameNode

3449 DataNode

3499 Jps

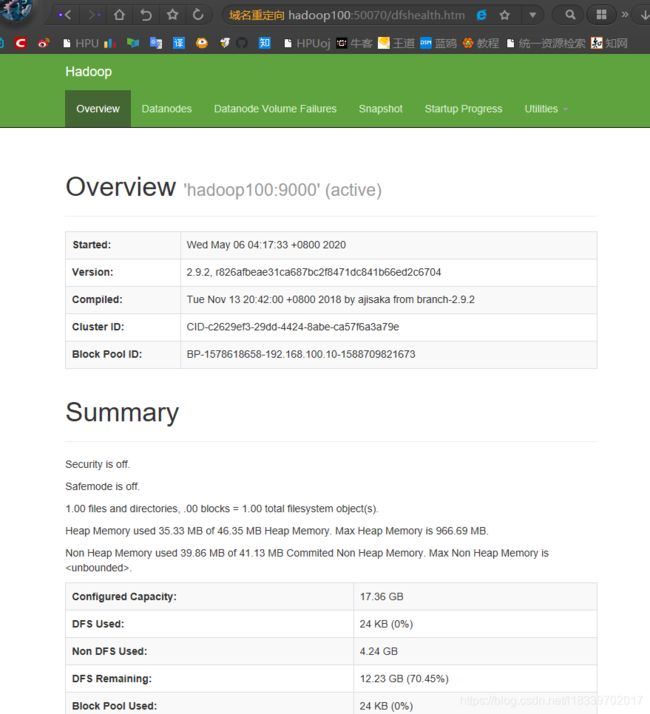

3.1.2.3.2 web端查看HDFS文件系统

打开浏览器输入 http://hadoop101:50070/

可以查看HDFS运行

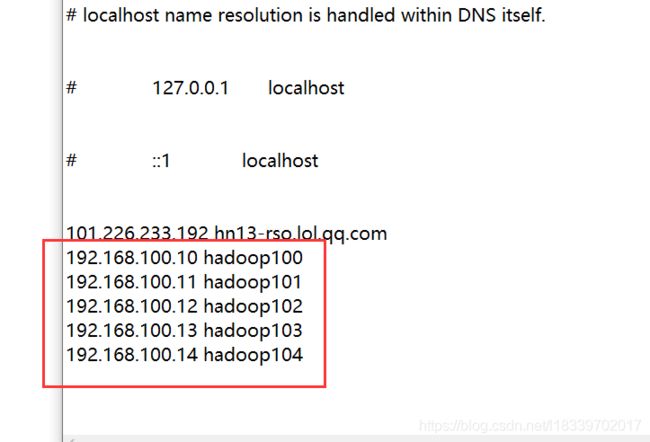

P.S 请修改本地hosts文件

C:\Windows\System32\drivers\etc\hosts

在最后添加如下内容

注意:如果不能查看,看如下帖子处理

http://www.cnblogs.com/zlslch/p/6604189.html

3.1.2.3.3 查看产生的Log日志

说明:在企业中遇到Bug时,经常根据日志提示信息去分析问题、解决Bug。

[atlingtree@hadoop100 ~]$ cd /opt/modules/hadoop-2.9.2/logs/

[atlingtree@hadoop100 logs]$ ls

hadoop-atlingtree-datanode-hadoop100.log

hadoop-atlingtree-datanode-hadoop100.out

hadoop-atlingtree-namenode-hadoop100.log

hadoop-atlingtree-namenode-hadoop100.out

SecurityAuth-atlingtree.audit

3.1.2.3.4 格式化NameNode需要注意的问题

格式化NameNode,会产生新的集群id,导致NameNode和DataNode的集群id不一致,集群找不到已往数据。所以,格式NameNode时,一定要先删除data数据和log日志,然后再格式化NameNode。

[atlingtree@hadoop100 hadoop-2.9.2]$ rm -rf data/ logs/

[atlingtree@hadoop100 hadoop-2.9.2]$ bin/hdfs namenode -format3.1.2.4 操作集群

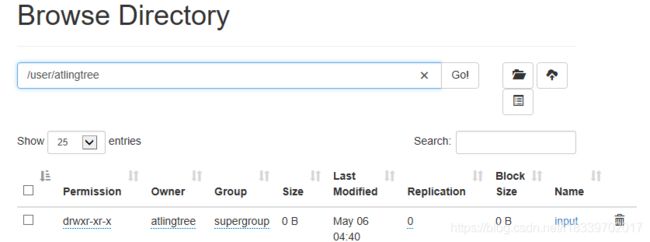

3.1.2.4.1 在在HDFS文件系统上创建一个input文件夹

[atlingtree@hadoop100 hadoop-2.9.2]$ bin/hdfs dfs -mkdir -p /user/atlingtree/input

我门在web端点击相应位置

可以看到成功创建了对应的文件夹

3.1.2.4.2 将测试文件内容上传到文件系统上

[atlingtree@hadoop100 hadoop-2.9.2]$ bin/hdfs dfs -put wcinput/wc.input /user/atlingtree/input/

3.1.2.4.3 查看上传的文件是否正确

[atlingtree@hadoop100 hadoop-2.9.2]$ bin/hdfs dfs -ls /user/atlingtree/input/

Found 1 items

-rw-r--r-- 1 atlingtree supergroup 46 2020-05-05 13:40 /user/atlingtree/input/wc.input

3.1.2.4.4 运行MapReduce 程序

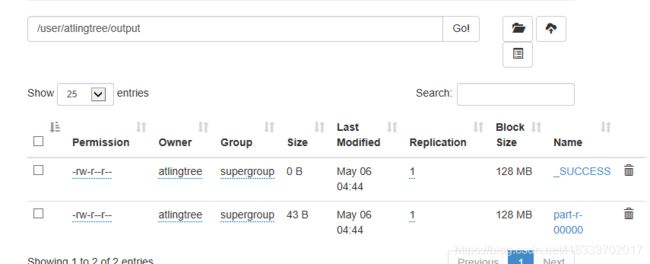

我们运行集群上 /user/atlingtree/input 的文件,同时将运行结果放在/user/atingtree/output

[atlingtree@hadoop100 hadoop-2.9.2]$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.9.2.jar wordcount /user/atlingtree/input/ /user/atlingtree/output在web端查看:

果然多出了相应的文件。

3.1.2.4.5 查看输出结果

[atlingtree@hadoop100 hadoop-2.9.2]$ bin/hdfs dfs -cat /user/atlingtree/output/*

hadoop 2

ignb 2

mapreduce 1

tesnb 1

yarn 1

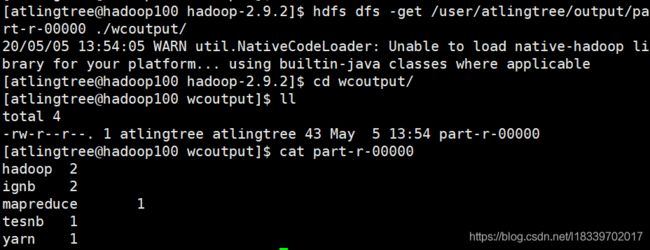

3.1.2.4.6 将测试文件内容下载到本地查看

之前运行过一次,所以要先把wcoutput的内容删除

[atlingtree@hadoop100 hadoop-2.9.2]$ cd wcoutput/

[atlingtree@hadoop100 wcoutput]$ ll

total 4

-rw-r--r--. 1 atlingtree atlingtree 43 May 5 10:17 part-r-00000

-rw-r--r--. 1 atlingtree atlingtree 0 May 5 10:17 _SUCCESS

[atlingtree@hadoop100 wcoutput]$ rm -rf part-r-00000 _SUCCESS

下载集群的输文件到本地:

[atlingtree@hadoop100 hadoop-2.9.2]$ hdfs dfs -get /user/atlingtree/output/part-r-00000 ./wcoutput/

3.1.2.4.7 删除集群上的输出结果

[atlingtree@hadoop100 hadoop-2.9.2]$ hdfs dfs -rm -r /user/atlingtree/output

Deleted /user/atlingtree/output

3.2 启动YARN 并运行 MapReduce 程序

3.2.1 分析

(1)配置集群在YARN上运行MR

(2)启动、测试集群增、删、查

(3)在YARN上执行WordCount案例

3.2.2 执行步骤

3.2.2.1 配置集群

3.2.2.1.1 配置yarn-env.sh

配置一下JAVA_HOME

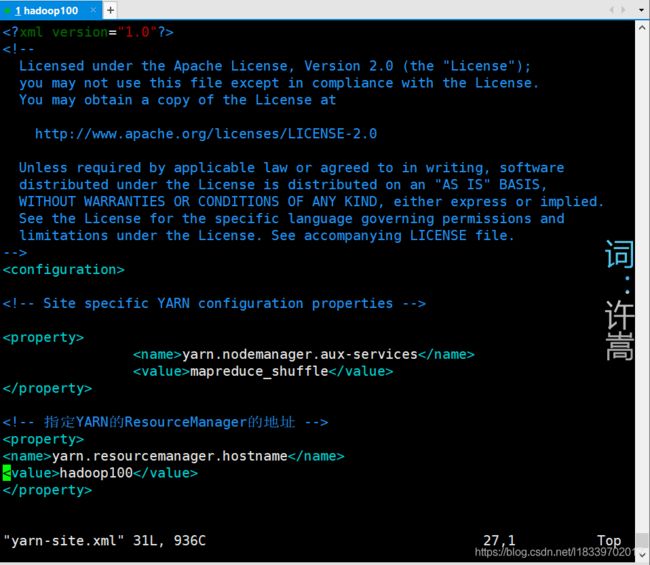

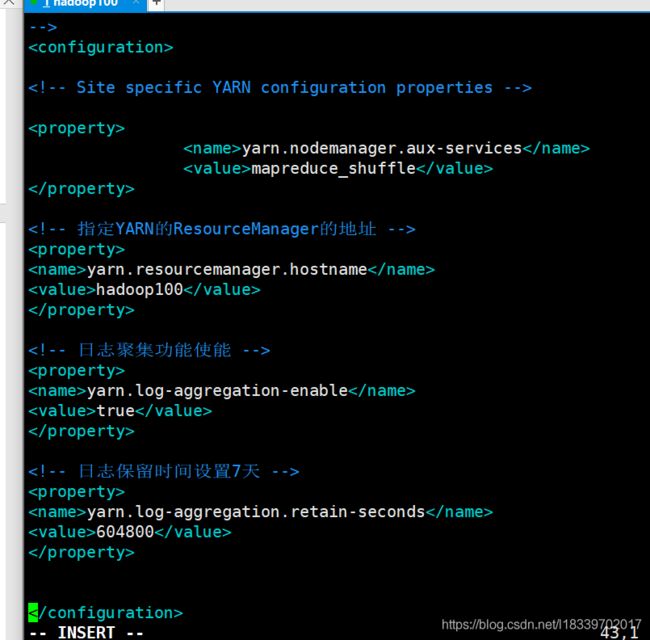

[atlingtree@hadoop100 hadoop]$ vim yarn-env.sh3.2.2.1.2 配置yarn-site.sh

[atlingtree@hadoop100 hadoop-2.9.2]$ cd etc/hadoop/

[atlingtree@hadoop100 hadoop]$ vim yarn-site.xml

将如下配置内容写入对应位置:

yarn.nodemanager.aux-services

mapreduce_shuffle

yarn.resourcemanager.hostname

hadoop100

3.2.2.1.3 配置mapred-env.sh

配置一下JAVA_HOME

[atlingtree@hadoop100 hadoop]$ vim mapred-env.sh

3.2.2.1.4 配置: (对mapred-site.xml.template重新命名为) mapred-site.xml

[atlingtree@hadoop100 hadoop]$ mv mapred-site.xml.template mapred-site.xml

[atlingtree@hadoop100 hadoop]$ vi mapred-site.xml

3.2.2.2 启动集群

3.2.2.2.1 启动前必须保证NameNode 和DataNode 已经启动

3.2.2.2.2 启动ResourceManager

[atlingtree@hadoop100 hadoop]$ cd /opt/modules/hadoop-2.9.2/

[atlingtree@hadoop100 hadoop-2.9.2]$ sbin/yarn-daemon.sh start resourcemanager

starting resourcemanager, logging to /opt/modules/hadoop-2.9.2/logs/yarn-atlingtree-resourcemanager-hadoop100.out

3.2.2.2.3 启动NodeManager

[atlingtree@hadoop100 hadoop-2.9.2]$ sbin/yarn-daemon.sh start nodemanager

starting nodemanager, logging to /opt/modules/hadoop-2.9.2/logs/yarn-atlingtree-nodemanager-hadoop100.out

3.2.2.3 集群操作

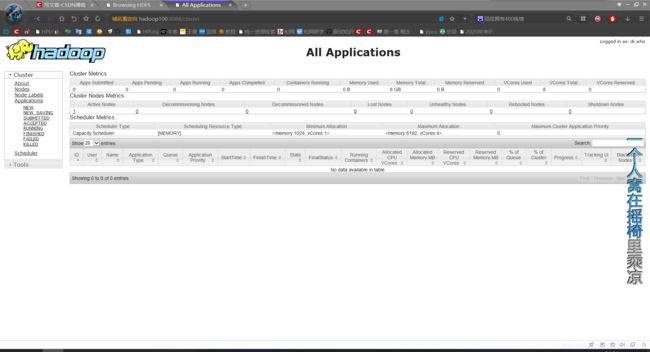

3.2.2.3.1 YARN 的浏览器页面查看

在浏览器中输入: http://hadoop100:8088

可查看当前Yarn 的状态

3.2.2.3.2 删除文件系统上的output文件夹

我们之前已经删除过了,可根据实际情况判断需不需要再删

[atlingtree@hadoop100 hadoop-2.9.2]$ bin/hdfs dfs -rm -R /user/atlingtree/output3.2.2.3.3 执行MapReduce 程序

[atlingtree@hadoop100 hadoop-2.9.2]$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.9.2.jar wordcount /user/atlingtree/input /user/atlingtree/output3.2.2.3.4 查看运行结果

[atlingtree@hadoop100 hadoop-2.9.2]$ bin/hdfs dfs -cat /user/atlingtree/output/*

hadoop 2

ignb 2

mapreduce 1

tesnb 1

yarn 1

同时在web端可查看对应任务

3.3 配置历史服务器

为了查看程序的历史运行情况,需要配置一下历史服务器。具体配置步骤如下:

3.3.1 配置mapred-site.xml

[atlingtree@hadoop100 hadoop-2.9.2]$ cd /opt/modules/hadoop-2.9.2/etc/hadoop/

[atlingtree@hadoop100 hadoop]$ vim mapred-site.xml

添加如下配置:

mapreduce.jobhistory.address

hadoop100:10020

mapreduce.jobhistory.webapp.address

hadoop100:19888

3.3.2 启动历史服务器

[atlingtree@hadoop100 hadoop]$ cd /opt/modules/hadoop-2.9.2/

[atlingtree@hadoop100 hadoop-2.9.2]$ sbin/mr-jobhistory-daemon.sh start historyserver

starting historyserver, logging to /opt/modules/hadoop-2.9.2/logs/mapred-atlingtree-historyserver-hadoop100.out

3.3.3 查看历史服务器是否启动

[atlingtree@hadoop100 hadoop-2.9.2]$ jps

5172 JobHistoryServer

4389 NodeManager

4134 ResourceManager

5207 Jps

3351 NameNode

3449 DataNode

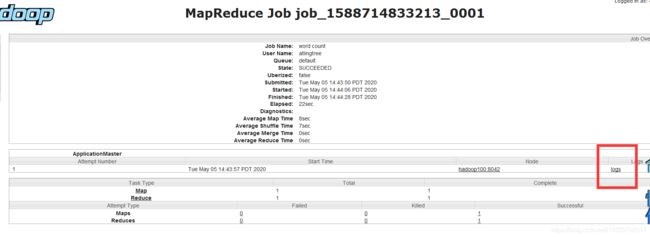

3.3.4 查看JobHistory

web端查看 http://hadoop100:19888

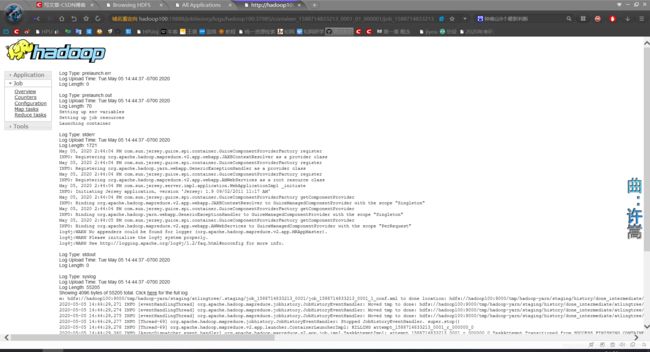

3.4 配置日志的聚集

日志聚集概念:应用运行完成以后,将程序运行日志信息上传到HDFS系统上。

日志聚集功能好处:可以方便的查看到程序运行详情,方便开发调试。

注意:开启日志聚集功能,需要重新启动NodeManager 、Reso

yarn.log-aggregation-enable

true

yarn.log-aggregation.retain-seconds

604800

urceManager和HistoryManager。

开启日志聚集功能具体步骤如下:

3.4.1 配置yarn-site.xml

[atlingtree@hadoop100 hadoop-2.9.2]$ cd /opt/modules/hadoop-2.9.2/etc/hadoop/

[atlingtree@hadoop100 hadoop]$ vim yarn-site.xml

添加如下配置:

3.4.2 关闭NodeManager 、ResourceManager和HistoryManager

[atlingtree@hadoop100 hadoop]$ cd /opt/modules/hadoop-2.9.2/

[atlingtree@hadoop100 hadoop-2.9.2]$ sbin/yarn-daemon.sh stop resourcemanagerstopping resourcemanager

[atlingtree@hadoop100 hadoop-2.9.2]$ sbin/yarn-daemon.sh stop nodemanager

stopping nodemanager

[atlingtree@hadoop100 hadoop-2.9.2]$ sbin/mr-jobhistory-daemon.sh stop historyserver

stopping historyserver

3.4.3 启动NodeManager 、ResourceManager和HistoryManager

[atlingtree@hadoop100 hadoop-2.9.2]$ sbin/yarn-daemon.sh start resourcemanager

starting resourcemanager, logging to /opt/modules/hadoop-2.9.2/logs/yarn-atlingtree-resourcemanager-hadoop100.out

[atlingtree@hadoop100 hadoop-2.9.2]$ sbin/yarn-daemon.sh start nodemanager

starting nodemanager, logging to /opt/modules/hadoop-2.9.2/logs/yarn-atlingtree-nodemanager-hadoop100.out

[atlingtree@hadoop100 hadoop-2.9.2]$ sbin/mr-jobhistory-daemon.sh start historyserver

starting historyserver, logging to /opt/modules/hadoop-2.9.2/logs/mapred-atlingtree-historyserver-hadoop100.out

可以看一下 jps 运行情况

3.4.4 删除HDFS上已经存在的输出文件

还是删除掉 /user/atlingtree/output 文件夹

[atlingtree@hadoop100 hadoop-2.9.2]$ bin/hdfs dfs -rm -R /user/atlingtree/output

Deleted /user/atlingtree/output

3.4.5 执行WordCount程序

[atlingtree@hadoop100 hadoop-2.9.2]$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.9.2.jar wordcount /user/atlingtree/input /user/atlingtree/output

3.4.6 查看日志

http://hadoop100:19888/jobhistory

可以看到详细内容。

3.5 配置文件说明

Hadoop配置文件分两类:默认配置文件和自定义配置文件,只有用户想修改某一默认配置值时,才需要修改自定义配置文件,更改相应属性值。

(1)默认配置文件:

| 要获取的默认文件 |

文件存放在Hadoop的jar包中的位置 |

| [core-default.xml] |

hadoop-common-2.7.2.jar/ core-default.xml |

| [hdfs-default.xml] |

hadoop-hdfs-2.7.2.jar/ hdfs-default.xml |

| [yarn-default.xml] |

hadoop-yarn-common-2.7.2.jar/ yarn-default.xml |

| [mapred-default.xml] |

hadoop-mapreduce-client-core-2.7.2.jar/ mapred-default.xml |

(2)自定义配置文件:

core-site.xml、hdfs-site.xml、yarn-site.xml、mapred-site.xml四个配置文件存放在$HADOOP_HOME/etc/hadoop这个路径上,用户可以根据项目需求重新进行修改配置。