宽残差网络——WRN

宽残差网络——Wide Residual Network

文章出自Sergey Zagoruyko的Wide Residual Network

残差网络——Residual Network

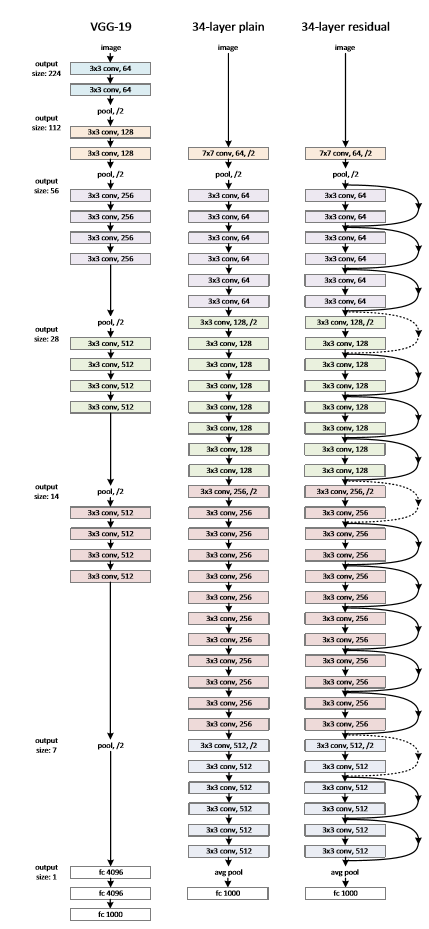

近年来,残差网络(Residual Network)在各个测试集上都取得了不错的效果,其网络结构如下图所示,

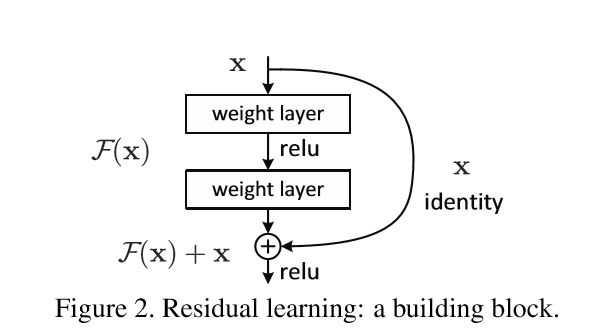

其网络网络由残差模块组成

其中的跳跃式连接可以将残差很好的传递过去,从而避免梯度消失的现象。

可是,深度残差网络过于追求网络深度,而忽略了模块自身的问题。随着模块的增加,模型的表现并没有明显提升,因此可以理解为部分模块其实并没有起到应有的作用,

as gradient flows through the network there is nothing to force it to go through residual block weights and ti can avoid learning anything during training, so ti is possible that there is either only a few blocks that learn useful representations

因此,本文的作者希望提出一种更加有效的方式,来提升残差模块的效果。

out goal is to explore a much richer set of network architectures of ResNet Blocks and thoroughly examine how several other different aspects besides the order of activations affect performance.

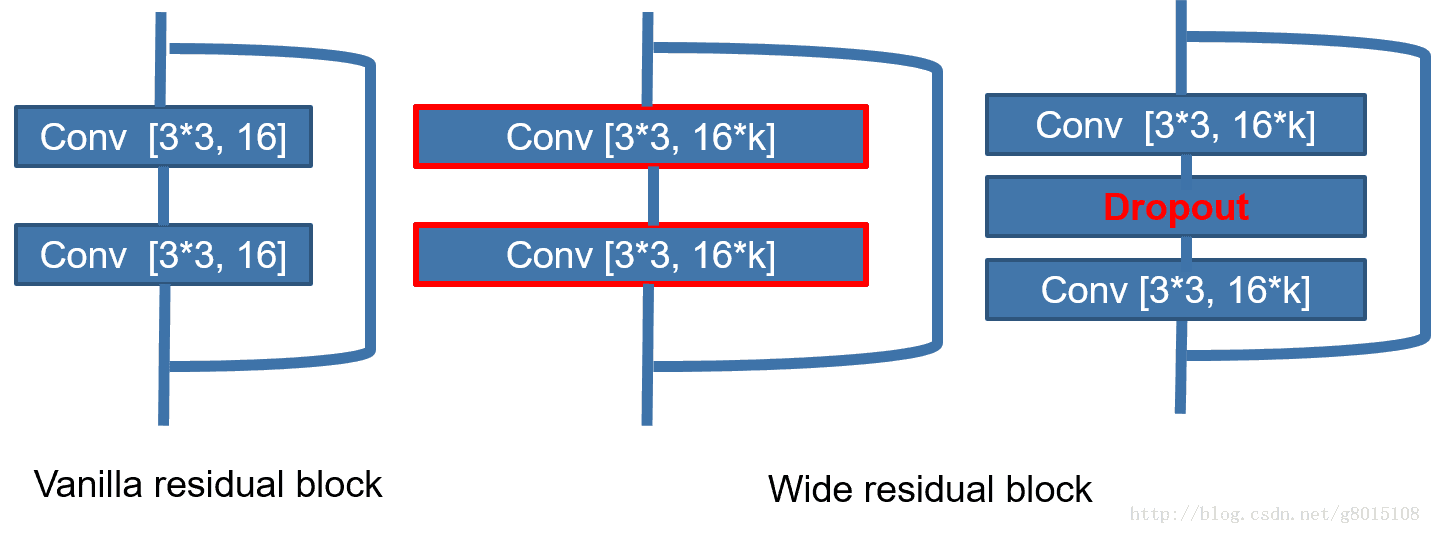

宽残差网络——WRN

WRN在原始的残差模块的基础上加上了一个系数k,从而拓宽卷积核的个数。按照文章中提出的解释,这样做降低了层数,但并没有减少模型参数,同时还加快了计算速度。

in particular, we present wider deep residual networks that significantly improved, having 50 times less layers and being more than 2 times faster.

模型结构如下表所示

![]()

实验

文中给出的实验结果

![]()

![]()

![]()

代码

# -*- coding: utf-8 -*-

"""

Created on Tue Nov 21 20:43:10 2017

@author: Sky_Gao

"""

from keras.models import Model

from keras.layers import Input, Add, Activation, Dropout, Flatten, Dense

from keras.layers.convolutional import Convolution2D, MaxPooling2D, AveragePooling2D

from keras.layers.normalization import BatchNormalization

from keras import backend as K

def initial_conv(input):

x = Convolution2D(16, (3, 3), padding='same', kernel_initializer='he_normal',

use_bias=False)(input)

channel_axis = 1 if K.image_data_format() == "channels_first" else -1

x = BatchNormalization(axis=channel_axis, momentum=0.1, epsilon=1e-5, gamma_initializer='uniform')(x)

x = Activation('relu')(x)

return x

def expand_conv(init, base, k, strides=(1, 1)):

x = Convolution2D(base * k, (3, 3), padding='same', strides=strides, kernel_initializer='he_normal',

use_bias=False)(init)

channel_axis = 1 if K.image_data_format() == "channels_first" else -1

x = BatchNormalization(axis=channel_axis, momentum=0.1, epsilon=1e-5, gamma_initializer='uniform')(x)

x = Activation('relu')(x)

x = Convolution2D(base * k, (3, 3), padding='same', kernel_initializer='he_normal',

use_bias=False)(x)

skip = Convolution2D(base * k, (1, 1), padding='same', strides=strides, kernel_initializer='he_normal',

use_bias=False)(init)

m = Add()([x, skip])

return m

def conv1_block(input, k=1, dropout=0.0):

init = input

channel_axis = 1 if K.image_data_format() == "channels_first" else -1

x = BatchNormalization(axis=channel_axis, momentum=0.1, epsilon=1e-5, gamma_initializer='uniform')(input)

x = Activation('relu')(x)

x = Convolution2D(16 * k, (3, 3), padding='same', kernel_initializer='he_normal',

use_bias=False)(x)

if dropout > 0.0: x = Dropout(dropout)(x)

x = BatchNormalization(axis=channel_axis, momentum=0.1, epsilon=1e-5, gamma_initializer='uniform')(x)

x = Activation('relu')(x)

x = Convolution2D(16 * k, (3, 3), padding='same', kernel_initializer='he_normal',

use_bias=False)(x)

m = Add()([init, x])

return m

def conv2_block(input, k=1, dropout=0.0):

init = input

channel_axis = 1 if K.image_dim_ordering() == "th" else -1

x = BatchNormalization(axis=channel_axis, momentum=0.1, epsilon=1e-5, gamma_initializer='uniform')(input)

x = Activation('relu')(x)

x = Convolution2D(32 * k, (3, 3), padding='same', kernel_initializer='he_normal',

use_bias=False)(x)

if dropout > 0.0: x = Dropout(dropout)(x)

x = BatchNormalization(axis=channel_axis, momentum=0.1, epsilon=1e-5, gamma_initializer='uniform')(x)

x = Activation('relu')(x)

x = Convolution2D(32 * k, (3, 3), padding='same', kernel_initializer='he_normal',

use_bias=False)(x)

m = Add()([init, x])

return m

def conv3_block(input, k=1, dropout=0.0):

init = input

channel_axis = 1 if K.image_dim_ordering() == "th" else -1

x = BatchNormalization(axis=channel_axis, momentum=0.1, epsilon=1e-5, gamma_initializer='uniform')(input)

x = Activation('relu')(x)

x = Convolution2D(64 * k, (3, 3), padding='same', kernel_initializer='he_normal',

use_bias=False)(x)

if dropout > 0.0: x = Dropout(dropout)(x)

x = BatchNormalization(axis=channel_axis, momentum=0.1, epsilon=1e-5, gamma_initializer='uniform')(x)

x = Activation('relu')(x)

x = Convolution2D(64 * k, (3, 3), padding='same', kernel_initializer='he_normal',

use_bias=False)(x)

m = Add()([init, x])

return m

def create_wide_residual_network(input_dim, nb_classes=100, N=2, k=1, dropout=0.0, verbose=1):

"""

Creates a Wide Residual Network with specified parameters

:param input: Input Keras object

:param nb_classes: Number of output classes

:param N: Depth of the network. Compute N = (n - 4) / 6.

Example : For a depth of 16, n = 16, N = (16 - 4) / 6 = 2

Example2: For a depth of 28, n = 28, N = (28 - 4) / 6 = 4

Example3: For a depth of 40, n = 40, N = (40 - 4) / 6 = 6

:param k: Width of the network.

:param dropout: Adds dropout if value is greater than 0.0

:param verbose: Debug info to describe created WRN

:return:

"""

channel_axis = 1 if K.image_data_format() == "channels_first" else -1

ip = Input(shape=input_dim)

x = initial_conv(ip)

nb_conv = 4

x = expand_conv(x, 16, k)

for i in range(N - 1):

x = conv1_block(x, k, dropout)

nb_conv += 2

x = BatchNormalization(axis=channel_axis, momentum=0.1, epsilon=1e-5, gamma_initializer='uniform')(x)

x = Activation('relu')(x)

x = expand_conv(x, 32, k, strides=(2, 2))

for i in range(N - 1):

x = conv2_block(x, k, dropout)

nb_conv += 2

x = BatchNormalization(axis=channel_axis, momentum=0.1, epsilon=1e-5, gamma_initializer='uniform')(x)

x = Activation('relu')(x)

x = expand_conv(x, 64, k, strides=(2, 2))

for i in range(N - 1):

x = conv3_block(x, k, dropout)

nb_conv += 2

x = BatchNormalization(axis=channel_axis, momentum=0.1, epsilon=1e-5, gamma_initializer='uniform')(x)

x = Activation('relu')(x)

x = AveragePooling2D((8, 8))(x)

x = Flatten()(x)

x = Dense(nb_classes, activation='softmax')(x)

model = Model(ip, x)

if verbose: print("Wide Residual Network-%d-%d created." % (nb_conv, k))

return model

if __name__ == "__main__":

from keras.utils import plot_model

from keras.layers import Input

from keras.models import Model

init = (32, 32, 3)

wrn_28_10 = create_wide_residual_network(init, nb_classes=10, N=2, k=2, dropout=0.0)

wrn_28_10.summary()

plot_model(wrn_28_10, "WRN-16-2.png", show_shapes=True, show_layer_names=True)