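- Fixing "Error: Unable to find match: locate" when running yum install locate

爱编程的喵喵

Linux solutions, linux, locate, yum, solutions

Hello everyone, I am 爱编程的喵喵. I hold a master's degree, with both of my degrees from 985 universities, and work as a full-stack engineer who likes applying data-driven thinking to work and life. I work on machine learning and the related front-end and back-end development. I have placed near the top several times in competitions run by Alibaba Cloud, iFLYTEK, and CCF. I am currently a CSDN blog expert and a recognized quality creator in the AI field. I like to summarize what I learn through blogging, which both deepens my own understanding and helps beginners get started quickly. This article mainly covers the case where yum install locate reports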

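- [AI Machine Learning Fundamentals] An in-depth look at dimensionality reduction in unsupervised learning: key concepts and core principles of PCA and t-SNE

猿享天开

AI math fundamentals series, artificial intelligence, machine learning, unsupervised learning, dimensionality reduction

An in-depth look at dimensionality reduction in unsupervised learning: key concepts and core principles of PCA and t-SNE. In today's data-driven world, growing data dimensionality brings computational complexity and storage challenges and can also degrade model performance, a phenomenon known as the "curse of dimensionality". Dimensionality reduction, an important feature-extraction and data-preprocessing technique, aims to reduce the number of dimensions while retaining the main information, which simplifies data processing and improves model performance. This article examines in depth two techniques widely used in unsupervised learning for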

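- What is the Model Context Protocol (MCP)? The Model Context Protocol is worth knowing about

同学小张

Learning AIGC, AI-native, agi, gpt, open-source protocol

Hello everyone, I am 同学小张 (WeChat: jasper_8017, feel free to get in touch). I keep studying and sharing hands-on case studies of large-model applications; likes, follows, and subscriptions to my large-model column are welcome, so we can learn and improve together. In AI, the Model Context Protocol (MCP) is gradually becoming an important standard for connecting AI models to data sources and tools. What exactly is MCP, and how will it change the way AI applications are built and used? Contents: 0. Concepts 1. The overall architecture of MCP 2. Why use MCP? 3. My understanding 4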

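- Applications and innovations of generative adversarial networks in AI art creation

辛迎蕌

Artificial intelligence

Abstract: This article examines the application and innovation of generative adversarial networks (GANs) in AI art creation. It analyzes the core principles of GANs, describes their use in creating images, music, literature, and other art forms, discusses the challenges and directions for innovation, and shows how GANs are changing the way art is made, offering a broad view of how AI and art are converging. 1. Introduction: As AI and art become deeply intertwined, the generative adversarial network (GAN) is a breakthrough technology that opens new possibilities for artistic creation. It breaks through traditional creative boundaries, and with its distinctive adversarial learning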

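- The key role and development of knowledge graphs in AI semantic understanding and reasoning

@王威&

Artificial intelligence

Abstract: This article focuses on knowledge graphs and analyzes their central role in semantic understanding and reasoning in AI. It describes how knowledge graphs are built and represented, examines how they support semantic understanding and reasoning in natural language processing, question-answering systems, recommender systems, and other fields, and discusses the challenges and future directions, giving a full picture of their value and long-term impact on AI. 1. Introduction: As AI pursues a more precise understanding and handling of human language and knowledge, the knowledge graph has become a key technology. It organizes large amounts of knowledge in structured form and reveals the complex relationships between entities,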

- Starting a job in Flink

swg321321

flink, big data

Flink, explained using local execution as the example. Contents: Flink; Preface; StreamExecutionEnvironment; LocalExecutor; MiniCluster; StreamGraph; 2. Usage steps: 1. Importing libraries 2. Reading data; Summary. Preface:

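- Recommended graduation project topics for computer science majors (fresh topics): a full list of topics for undergraduate computer science and AI majors ✅

会写代码的羊

graduation project topic selection, course projects, artificial intelligence, graduation projects, graduation project titles, ai, AI programming

Contents: Preface; Latest graduation project topics (worth bookmarking); Graduation project topics for undergraduate computer science and AI majors; Recommended graduation project work. Preface: all-new graduation projects for 2025. About the author: ✌ 100,000+ followers across platforms, CSDN quality creator in the full-stack field, Blog Star, and a quality author on Juejin, Huawei Cloud, Alibaba Cloud, and other platforms. Technical scope: design and development with SpringBoot, Vue, SSM, HTML, Jsp, PHP, Nodejs, Python, web crawlers, data visualization, mini programs, big data, machine learning, and more. Main content: free features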

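- AI Agents: applications in empowering traditional industries

AI天才研究院

Computing, DeepSeek R1 & big data AI large models, computational science, neural computing, deep learning, neural networks, big data, artificial intelligence, large language models, AI, AGI, LLM, Java, Python, architecture design, Agent, RPA

AI Agents: applications in empowering traditional industries. 1. Background 1.1 The history of AI 1.1.1 Origins and development of AI 1.1.2 The three waves of AI 1.1.3 The current state and challenges of AI 1.2 Difficulties facing traditional industries 1.2.1 Low efficiency 1.2.2 High costs 1.2.3 Slow decision-making 1.3 Why traditional industries need AI 1.3.1 Improving efficiency 1.3.2 Reducing costs 1.3.3 Better decisions 2. Core concepts and relationships 2.1 Definition of an AI Agent 2.1.1 Age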

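- [C++] C++ from beginner to expert (continuously updated...)

废人一枚

C++, c++, programming languages

Preface: I have recently been going through some of my older C++ material and have put together a C++ tutorial that runs from fundamentals to practice, covering concepts, code, program output, and extensions of key points; follow along for updates if you are interested. Below is the list of articles so far, followed by a mind map of the topics that gives a clearer overview. Contents: 1. C++ basics: C++ fundamentals; a simple C++ program; function overloading; the concept of references; references versus pointers; references as function parameters; references as return values; object orientation; class definitions; class declarations; structs versus classes; inline functions; this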

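- The "four pre-" capabilities driving digital-twin water management: smart water governance that keeps rivers and land safe

GeoSaaS

real-scene 3D, smart cities, artificial intelligence, gis, big data, security

In recent years, from the Yellow River autumn floods to the exceptional Hai River floods, and from basin-wide flooding on the Pearl River to a rare sudden drought on the Yangtze, frequent extreme weather has drawn close attention to water security. How can water problems be managed before they occur? Digital-twin water management, built around forecasting, early warning, rehearsal, and contingency planning (the "four pre-" capabilities), is driving a shift toward smart water governance. 1. Digital-twin water management: from the physical world to a virtual mirror. It is not simply "digital modeling"; it uses high-precision sensors, big data, AI, and related technologies to build in virtual space a "digital double" that fully maps the physical river basin, so that water conditions, engineering conditions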

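- Will hardware NAS devices become e-waste?

DeepSeek+NAS

home NAS, WinNAS, 飞牛NAS, artificial intelligence, Android NAS

With the rapid development of AI, traditional NAS devices face a profound change. In the past, the main job of a NAS was to provide data storage and sharing, but in the AI era storage alone no longer meets users' needs. A future NAS must integrate local AI capabilities to become a true AI-NAS. However, the NAS products on the market today generally have low-end hardware that cannot run AI locally. As a result, existing hardware NAS devices may be phased out within three years, replaced by home AI servers that combine AI and NAS functions.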

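- [DeepSeek] A complete usage guide: short version

諰.

artificial intelligence, ai, AI writing

1. Platform overview: DeepSeek (深度求索) is a Chinese AI company focused on achieving AGI, offering several AI products: intelligent chat (Chat), text-to-image (Art), code assistant (Coder), a developer API, and custom enterprise solutions. 2. Registration and login. 2.1 Creating an account: go to the official site https://www.deepseek.com and click "Register" in the top-right corner. Three methods are supported: phone number plus SMS verification, email registration (email verification required), and third-party login (WeChat/Google account). 2.2 Subscription plans: plan type; free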

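- [Artificial Intelligence] Understanding attention mechanisms in depth

问道飞鱼

machine learning and AI, artificial intelligence, attention mechanisms

Contents: 1. The core idea of attention; 2. Limitations of traditional sequence models; 3. The Transformer and self-attention: 1. The mathematical formulation of self-attention; 4. Key improvements to attention: 1. Sparse Attention 2. Relative Position Encoding 3. Graph Attention Network (GAT)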

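- Notes: 代码随想录 algorithm training camp, day 57: 99. Number of Islands (DFS), Number of Islands (BFS), 100. Max Area of Island

jingjingjing1111

depth-first search, algorithms, notes

Study material: 代码随想录. Note: this post contains content generated by a large language model. 99. Number of Islands, 卡码网 problem link (ACM mode). Start with the DFS approach: finding an unmarked land cell means we have found a piece of land, or one corner of one; the DFS then searches for the rest of the connected land and marks it. #include #include using namespace std; int direction[4][2] = {0, 1, -1, 0, 0, -1, 1, 0}; void dfs(const vector>& B612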
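The depth-first idea described in this excerpt can be sketched as follows. This is a minimal Python illustration, not the post's C++ code (which is cut off above); the function name `count_islands` and the grid encoding (1 = land, 0 = water) are assumptions made for the example, and an explicit stack replaces recursion so that large grids do not hit Python's recursion limit.

```python
# Neighbour offsets, in the same order as the excerpt's direction array.
DIRECTIONS = [(0, 1), (-1, 0), (0, -1), (1, 0)]


def count_islands(grid):
    """Count 4-connected groups of land cells (1) in a 0/1 grid (assumed encoding)."""
    if not grid or not grid[0]:
        return 0
    rows, cols = len(grid), len(grid[0])
    visited = [[False] * cols for _ in range(rows)]
    islands = 0
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != 1 or visited[r][c]:
                continue
            # An unmarked land cell starts a new island.
            islands += 1
            visited[r][c] = True
            stack = [(r, c)]
            # Depth-first search marks the rest of the connected land.
            while stack:
                cr, cc = stack.pop()
                for dr, dc in DIRECTIONS:
                    nr, nc = cr + dr, cc + dc
                    if (0 <= nr < rows and 0 <= nc < cols
                            and grid[nr][nc] == 1 and not visited[nr][nc]):
                        visited[nr][nc] = True
                        stack.append((nr, nc))
    return islands
```

Marking a cell as visited when it is pushed, rather than when it is popped, keeps each cell on the stack at most once.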

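- Notes: 代码随想录 algorithm training camp, day 56: graph theory basics, DFS basics, 98. All Reachable Paths, BFS basics

jingjingjing1111

notes

Study material: 代码随想录. "Connected graph" is a definition for undirected graphs; "strongly connected graph" is a definition for directed graphs. Naive storage: a 2D array. Adjacency matrix. Adjacency list: list. Background reading: C++ container classes | 菜鸟教程. DFS follows one direction to the end, then backtracks and turns; BFS explores every direction reachable from the current position at each step. The three steps of DFS: parameters, termination condition, and processing the node + recursing + backtracking. 98. All Reachable Paths, 卡码网 problem link (ACM mode). First use an adjacency matrix, where entry (x, y) means there is an edge from x to y. The main approach is still backtracking to traverse the whole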
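The adjacency-matrix and backtracking steps in these notes can be sketched as below. This is an illustrative Python version under stated assumptions: nodes are numbered 1..n, the graph is a directed acyclic graph, and the goal is every path from node 1 to node n. The name `all_paths` and the edge-list input are hypothetical, not taken from the post.

```python
def all_paths(n, edges):
    """Return every path from node 1 to node n in a DAG (assumed acyclic)."""
    # graph[x][y] == 1 means there is an edge from x to y.
    graph = [[0] * (n + 1) for _ in range(n + 1)]
    for x, y in edges:
        graph[x][y] = 1

    paths = []
    path = [1]  # the path under construction always starts at node 1

    def dfs(node):
        # Termination condition: reached the target node.
        if node == n:
            paths.append(path[:])
            return
        for nxt in range(1, n + 1):
            if graph[node][nxt]:
                path.append(nxt)  # process the node
                dfs(nxt)          # recurse
                path.pop()        # backtrack

    dfs(1)
    return paths
```

For example, `all_paths(5, [(1, 3), (3, 5), (1, 2), (2, 4), (4, 5)])` explores neighbours in increasing node order, so the path through node 2 is found before the path through node 3.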

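- The disruptive development of deep learning: from convolutional neural networks to the Transformer

AI天才研究院

Large AI model applications from introduction to advanced practice, ChatGPT, big data, artificial intelligence, language models, AI, LLM, Java, Python, architecture design, Agent, RPA

1. Background: Deep learning is one of the core technologies of AI. It learns by simulating the neural networks of the human brain, extracting knowledge from large amounts of data to enable intelligent, automated processing. Its development can be divided into the following stages: In 2006, Geoffrey Hinton and others began studying convolutional neural networks (CNNs), the first major breakthrough in deep learning. CNNs are mainly applied in areas such as image processing and speech recognition. In 2012, Alex Krizh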

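- Using local variables in a MATLAB S-Function block

0如约而至0

matlab

A recent project of mine involved MATLAB S-Functions. I needed to add a 3211 excitation signal, controlled by the ground station, onto an output signal. During implementation I ran into the problem that local variables inside the S-Function were re-initialized to 0 on every call; after searching online and working from a model example, I solved it with the isempty function. The MATLAB code is as follows: // function y = fcn(act_sign, act) persistent t2 if isempt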

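- High-performance computing: GPU acceleration and distributed training

AI天才研究院

DeepSeek R1 & big data AI large models, hands-on enterprise application development with large AI models, computational science, neural computing, deep learning, neural networks, big data, artificial intelligence, large language models, AI, AGI, LLM, Java, Python, architecture design, Agent, RPA

1. Background: As AI advances rapidly, deep learning models keep growing in scale and complexity, and so does their demand for compute. Traditional CPU architectures can no longer meet the needs of deep learning training, so GPU acceleration and distributed training have become active research topics in high-performance computing. 1.1 Deep learning and its computational challenges: Deep learning models typically contain millions or even billions of parameters, and training requires large numbers of matrix operations and gradient updates, which is very demanding on compute resources. Traditional CPU architectures are highly general-purpose, but their parallel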

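- Math foundations for AI: matrix norms

每天五分钟玩转人工智能

math foundations for machine learning and deep learning, artificial intelligence, matrices, algorithms, linear algebra, norms

Key points: In earlier lessons we studied vector norms; matrices have norms too, and this article covers them. Matrix norms play an important role in analyzing the properties of linear maps. The essence of a matrix norm: a matrix norm is a mapping that takes a matrix to a non-negative real number. Matrix norms: just as a vector norm is only defined when certain conditions hold, ||A|| can be called the norm of the matrix A only when the following conditions are satisfied. Properties of matrix norms: Continuity: a matrix norm is a continuous function. That is, if a matrix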

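- Data cleaning tools for data centers running large AI model applications

SuperAGI2025

principles and practice of computer software programming, java, python, javascript, kotlin, golang, architecture, artificial intelligence

1. Background: In the wave of large AI model applications, data cleaning is a key part of data preprocessing and is critical to model performance and reliability. Data centers, as the runtime environment for AI models, face massive data streams and diverse data types, so cleaning data efficiently and accurately has become one of the key problems in applying large models. This article describes data cleaning tools for such data centers in detail, including core concepts, algorithm principles, concrete steps, and application scenarios, with the aim of providing a reference for practical use of large AI models. 2. Core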

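- Data migration architecture for data centers running large AI model applications

AGI大模型与大数据研究院

DeepSeek R1 & big data AI, artificial intelligence, java, python, javascript, kotlin, golang, architecture, artificial intelligence

Large AI models, data centers, data migration, architecture design, migration strategy, performance optimization, security. 1. Background: With the rapid development of AI, large-scale AI models are used ever more widely, in natural language processing, computer vision, speech recognition, and other fields. These models usually need massive amounts of data for training and inference, so the data center, as the infrastructure for AI applications, is especially important. As models keep growing, however, data centers face new challenges. Huge data volumes: training and inference for AI models require massive data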

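- Building a JCVD-style chatbot with LangChain and Amazon Bedrock

scaFHIO

langchain, python

Technical background: In the AI era, building an intelligent chatbot is not just a trend but one of the keys to a better user interaction experience. This article shows how to use LangChain and Amazon Bedrock to build a chatbot that imitates the style of Jean-Claude Van Damme (JCVD). We use the Claude model provided by Anthropic, running on Amazon Bedrock's infrastructure. Core principles: LangChain, as a powerful framework,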

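- The ultimate Cursor guide: from zero to AI programming

芯作者

DD: diary, artificial intelligence, machine learning, deep learning, AI programming

As digitalization sweeps the world, AI is no longer a distant concept but is gradually becoming part of everyday life. As a core driver of future technology, AI programming is an area many developers and enthusiasts are eager to explore. In this shift, Cursor, a programming tool that looks simple but is very capable, is quietly becoming a bridge between beginners and expert AI programmers. This article starts from zero and works step by step through a complete guide to Cursor, to help you go further in AI programming. 1. First look at Curso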

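- Knowledge management systems: building the enterprise brain

AI天才研究院

ChatGPT, hands-on enterprise application development with large AI models, DeepSeek R1 & big data AI large models, big-tech offer harvester, interview questions, résumés, programmers, reading, silicon-based computing, carbon-based computing, cognitive computing, biological computing, deep learning, neural networks, big data, AIGC, AGI, LLM, Java, Python, architecture design, Agent, financial freedom for programmers

Part 1: Overview and importance of knowledge management. Chapter 1: Definition and basic concepts of knowledge management. 1.1.1 Origins and development: Knowledge management (KM) originated in the 1980s, when companies competing in the market came to recognize knowledge as a strategic resource. Early KM practice focused on collecting, storing, and disseminating knowledge. As information technology developed, KM gradually incorporated more advanced techniques such as data mining, AI, and big data analytics, making it an interdisciplinary, multi-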

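- Calling WIN32 API functions in C

就叫二号人物

http://www.pinvoke.net/

磐实文章站 (home page), Home > Visual Basic development resources > API functions: http://www.panshsoft.com/Sort_VB/API_fun/

GetWindowRect usage: http://blog.csdn.net/coolszy/article/details/5601455

Function: returns the dimensions of the bounding rectangle of the specified window. The dimensions are given relative to screen coordinates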

- 人工智能知识架构详解

CodeJourney.

数据库人工智能算法架构

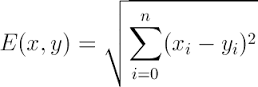

人工智能(ArtificialIntelligence,简称AI)作为当今最具影响力和发展潜力的技术领域之一,正深刻地改变着我们的生活、工作和社会。从智能家居到自动驾驶,从医疗诊断到金融投资,人工智能的应用无处不在。要全面深入地理解和掌握人工智能,构建一个清晰、系统的知识架构至关重要。二、基础数学(一)线性代数线性代数是人工智能的重要数学基础之一。矩阵运算在数据表示和变换中起着核心作用。例如,在图

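- Computer vision in TypeScript

苏墨瀚

miscellaneous, golang, programming languages, back end

Computer vision with TypeScript: a modern exploration. Introduction: With the rapid development of AI and machine learning, computer vision has become a very active research field. Computer vision aims to let computers "see" and "understand" the content of digital images and video. In recent years TypeScript, a modern programming language, has become widely used in front-end and back-end development thanks to its type safety and better developer experience. This article explores how to use TypeScript for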

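- DeepSeek's mixture-of-experts architecture for intelligent content creation

智能计算研究中心

other

Summary: As AI technology iterates ever faster, DeepSeek's Mixture of Experts architecture uses a dynamic routing mechanism over 67 billion parameters to achieve a step change in multimodal processing. The architecture integrates vision-language understanding, multilingual semantic parsing, and deep learning algorithms into a multi-dimensional capability matrix that covers text generation, code writing, academic research, and other scenarios. Its core advantages fall into three dimensions. Precise content production: features such as intelligent topic selection and automatic literature-review generation raise the efficiency of academic paper writing by more than 40%;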

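- How AI is driving innovation and new applications in geographic information system (GIS) software

酥脆可口

facebook

Abstract: Geographic information system (GIS) software is the core tool for processing and analyzing spatial data and plays a key role in urban planning, resource management, environmental monitoring, and other fields. This article examines how AI drives innovation in GIS software, analyzes the role of AI in improving spatial data analysis, optimizing cartography, and expanding application scenarios, discusses the challenges, and looks ahead to future trends, with the aim of providing a theoretical and practical reference for the GIS industry as it upgrades with AI. 1. Introduction: Traditional GIS software relies mainly on rule-based analysis methods and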

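- Math foundations for AI: the role of mathematics in the development of AI

每天五分钟玩转人工智能

math foundations for machine learning and deep learning, artificial intelligence, deep learning, machine learning, neural networks, natural language processing, mathematics

Key points: Mathematics is the foundation of AI. It supplies the theory and algorithms AI needs, including probability theory, statistics, linear algebra, calculus, graph theory, and more. This article discusses the role of mathematics in the development of AI from the following angles. Probability and statistics: Probability theory and statistics are among the most important branches of mathematics for AI. They are applied very widely, in machine learning, data mining, natural language processing, computer vision, and other fields. In AI, probability and statistics are mainly used to handle uncertainty,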

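- HttpClient 4.3 compared with versions below 4.3

spjich

java, httpclient

There is plenty of code online for sending HTTP requests from Java. Some of it uses HttpURLConnection from java.net.*, some uses httpclient, and the sending code comes in many variants. Today we focus on sending requests with httpclient.

httpclient can be divided into

httpclient 3.x

httpclient 4.x up to, but not including, httpclient 4.3

httpclient 4.3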

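- Trying out the new features in Essential Studio Enterprise Edition 2015 v1

Axiba

.net

Overview: Essential Studio has been upgraded across the board to version 2015 v1. The new release adds a file explorer control for JavaScript and ASP.NET MVC, along with upgrades to several other controls. Let's take a look.

Syncfusion, a leading provider of Windows development components, has officially released Essential Studio Enterprise Edition 2015 v1. The new version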

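- [Universe and astronomy] Microwave background radiation values and Earth's temperature

comsci

background

Does the universe, this vast and boundless space, have some definite, changing temperature?

If the cosmic microwave background radiation value is one of the parameters that expresses the temperature of space, then by measuring these values and observing the energy output of nearby stars, can we learn about long-term climate change on Earth?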


- lvs-server

男人50

server

#!/bin/bash

#

# LVS script for VS/DR

#

#./etc/rc.d/init.d/functions

#

VIP=10.10.6.252

RIP1=10.10.6.101

RIP2=10.10.6.13

PORT=80

case $1 in

start)

/sbin/ifconfig eth2:0 $VIP broadca

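- The WebCollector crawler framework for Java

oloz

crawler

WebCollector home page:

https://github.com/CrawlScript/WebCollector

Download: webcollector-<version>-bin.zip. Unzip it and add all the jar files in the extracted folder to your project.

Now for the demo: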

package org.spider.myspider;

import cn.edu.hfut.dmic.webcollector.cra

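- The difference between jQuery append and after

小猪猪08

1. The after function

Definition and usage:

The after() method inserts the specified content after the selected elements.

Syntax:

$(selector).after(content)

Example: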

<html>

<head>

<script type="text/javascript" src="/jquery/jquery.js"></scr

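- Brushing up on MySQL

香水浓

mysql

Indexes

Indexes are implemented in the storage engine, so indexes are not necessarily identical across storage engines, and not every storage engine supports every index type.

The maximum number of indexes per table and the maximum index length are defined per storage engine. All storage engines support at least 16 indexes per table and a total index length of at least 256 bytes.

Most storage engines have higher limits. MySQL has two index storage types, BTREE and HASH; which one applies depends on the table's storage engine.

The MyISAM and InnoDB storage engines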

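- Index of my architecture experience article series

agevs

architecture

Below are some personal conclusions about architecture. I originally meant to share them only inside my company, so the writing is fairly informal and has few diagrams. Everything here came straight from memory without checking any references, so it may be inaccurate or incomplete. I hope it serves as a starting point for discussion.

Note that these articles describe general architecture experience that is not tied to a specific language or platform, so they will not necessarily apply to every language and platform.

(The content was written a few days ago; the index is attached here.)

Front-end architecture http://www.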

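- Downloading Android .so libraries over HTTP and registering them dynamically

aijuans

android

1. Background

When developing Android applications, you sometimes need to bring in third-party .so libraries, and some of them are large, for example the open-source ffmpeg playback library. Packaging such a library directly into the apk makes the application much bigger. After reading up and experimenting, I found that it is feasible to download the .so file remotely and then register it dynamically. The main problems to solve are where to store the downloaded .so file and the file read/write permissions.

2. Main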

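- svn configuration error on Linux: how to fix "conf/svnserve.conf:12: Option expected"

baalwolf

option

This error appears when a client accesses the Subversion repository:

svnserve.conf:12: Option expected

Why does this error occur? When Subversion reads the configuration file svnserve.conf, it cannot parse configuration lines that have leading whitespace, e.g. ### This file controls the configuration of the svnserve daemon, if you##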
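The check implied by this explanation (find configuration lines that begin with whitespace) can be sketched in a few lines of Python. This is only an illustration: the helper name `find_indented_options` and the sample file content in the usage note are assumptions, not part of the original post.

```python
def find_indented_options(config_text):
    """Return (line_number, line) for non-comment lines that start with whitespace."""
    flagged = []
    for number, line in enumerate(config_text.splitlines(), start=1):
        stripped = line.strip()
        # Blank lines and comment lines are harmless, so skip them.
        if not stripped or stripped.startswith("#"):
            continue
        if line[0].isspace():
            flagged.append((number, line))
    return flagged
```

A typical cause is uncommenting a line such as `# anon-access = read` by deleting only the `#` and leaving the space behind. Running the helper over the file's text reports the line numbers to fix; removing the leading whitespace from those lines is the remedy the post describes.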

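- MongoDB connection pooling and connection management

BigCat2013

mongodb

In relational databases we always need to close the database connections we use; otherwise, creating large numbers of connections wastes resources and can even bring the database down. This article explains MongoDB's connection pool and connection management mechanism, for anyone who has questions about them.

We are used to creating a connection with new and closing it by calling its close() method, usually in a finally block. As it happens, when we new a Mongo in MongoDB, we find that it also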

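- Using Socket.IO with AngularJS

bijian1013

JavaScript, AngularJS, Socket.IO

Web applications today are commonly expected to be real-time: when data changes on the server, the application should update immediately. Older techniques such as polling have limitations, and sometimes we need to open a socket on the client and communicate over it.

Socket.IO (http://socket.io/) is an excellent library that helps you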

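- [Maven study notes 4] Maven dependency features

bit1129

maven

Three modules

To illustrate, take a small user-login web application. A web application is usually split into three modules: the model and data persistence layer user-core, the business logic layer user-service, and the web presentation layer user-web.

user-service depends on user-core

user-web depends on user-core and user-service

Dependency scope

Maven dependency definitions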

- [Akka 1] Getting started with Akka

bit1129

akka

What is Akka

Message-Driven Runtime is the Foundation to Reactive Applications

In Akka, your business logic is driven through message-based communication patterns that are independent of physical locatio

- Calling zabbix_api from Perl

ronin47

zabbix_api in perl

Most zabbix_api examples online are written in python or curl. Last time I used java (http://bossr.iteye.com/blog/2195679); this time I use perl. For example: #!/usr/bin/perl

use 5.010 ;

use strict ;

use warnings ;

use JSON::RPC::Client;

use

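- A video even more impressive than the Uniqlo one is out: 兄弟连 Linux operations engineer classroom recordings, more exciting and more practical!

brotherlamp

linux operations engineer, linux operations engineer tutorials, linux operations engineer videos, linux operations engineer materials, linux operations engineer self-study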


-----------------------------------------------------

兄弟连 Linux operations engineer classroom recordings - Computer basics - 1 - Course overview 1

Link: http://pan.baidu.com/s/1i3GQtGL Password: bl65

兄弟连Lin

- Manhattan distance with bitmasks: given N (1<=N<=100000) five-dimensional points A(x1,x2,x3,x4,x5), find two points X(x1,x2,x3,x4,x5) and Y(

bylijinnan

java

import java.util.Random;

/**

* Problem:

* Given N (1<=N<=100000) five-dimensional points A(x1,x2,x3,x4,x5), find two points X(x1,x2,x3,x4,x5) and Y(y1,y2,y3,y4,y5)

* such that their Manhattan distance (d=|x1-y1| + |x2-y2| + |x3-y3| + |x4-y4| + |x5-y5|) is as large as possible
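One common way to solve this problem without comparing every pair is to enumerate sign patterns with a bitmask. The Python sketch below illustrates that technique under the assumption that it is what the title's "bitmap" refers to; the post's own Java code is cut off, so this is not a transcription of it, and the function name `max_manhattan_distance` is hypothetical. It relies on |a| = max(a, -a): the distance between two points equals the largest difference of their signed coordinate sums over all 2^5 sign choices.

```python
def max_manhattan_distance(points):
    """Largest Manhattan distance between any two points of equal dimension."""
    dims = len(points[0])
    best = 0
    # Each bit of mask chooses the sign (+1 or -1) applied to one coordinate.
    for mask in range(1 << dims):
        signs = [1 if (mask >> i) & 1 else -1 for i in range(dims)]
        projections = [
            sum(s * c for s, c in zip(signs, p)) for p in points
        ]
        # For this sign pattern, the best pair is the max and min projection.
        best = max(best, max(projections) - min(projections))
    return best
```

The work is proportional to N times 2^5 times 5 rather than N squared, which matters when N is as large as 100000.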

- Three ways to iterate over a map

chicony

map

package com.test;

import java.util.Collection;

import java.util.HashMap;

import java.util.Iterator;

import java.util.Map;

import java.util.Set;

public class TestMap {

public static v

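- Some pitfalls when installing MySQL on Linux

chenchao051

linux

1. Running mysql as the root user is not recommended

2. If the service fails to start with error 111, remember to grant ownership with chown. I installed mysql as root, so I copied usr/share/mysql/mysql.server to /etc/init.d/mysqld (and also copied my-huge.cnf to /etc/my.cnf)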

chown -R cc /etc/init.d/mysql

- Sublime Text 3 configuration

daizj

configuration, Sublime Text

Sublime Text 3 settings explained (defaults)
{
// Color scheme file
"color_scheme": "Packages/Color Scheme - Default/Monokai.tmTheme",
// Font face and size
"font_face": "Consolas",
"font_size": 12,
// Font options: no_bold disables bold text, no_italic disables italic text, no_antialias and

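- How to fix the "MySQL server has gone away" error

dcj3sjt126com

SQL Server

How to fix the "MySQL server has gone away" error, for anyone who needs it.

This happens when an application (such as PHP) runs batches of MySQL statements for a long time, or executes a single SQL statement that is too large or contains BLOB or longblob fields, for example when processing image data. All of these can easily trigger "MySQL server has gone away". I ran into a similar situation today, and MySQL just replied coldly: MySQL server h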

- javascript/dom: a fixed, centered element

dcj3sjt126com

JavaScript

<!DOCTYPE html PUBLIC "-//W3C//DTD XHTML 1.0 Transitional//EN" "http://www.w3.org/TR/xhtml1/DTD/xhtml1-transitional.dtd">

<html xmlns="http://www.w3.org/1999/xhtml&

- Using the annotation-driven IoC features of Spring 2.5

e200702084

spring, bean, configuration management, IOC, Office

Using the annotation-driven IoC features of Spring 2.5

developerWorks


Level: Introductory

陈雄华 ([email protected]), Technical Director, 宝宝淘网络科技有限公司

February 28, 2008


- Common MongoDB commands

geeksun

mongodb

1. Basic operations

db.addUser(username,password) adds a user

db.auth(username,password) authenticates the database connection

db.cloneDatabase(fromhost)

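- Writing a daemon in PHP

hongtoushizi

PHP

Reposted from: http://blog.csdn.net/tengzhaorong/article/details/9764655

A daemon is a special kind of process that runs in the background. It is detached from the controlling terminal and periodically performs some task or waits to handle certain events. Daemons are very useful, and PHP can implement them too.

1. Basic concepts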

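- Integrating Spring with MyBatis: an error when injecting a Dao object

jonsvien

DAO, spring, bean, mybatis, prototype

While testing a feature at work today I ran into a problem:

First, a description of the code:

1. The controller is configured with Scope="prototype" (a new instance is created for every request)

The service object can be injected with either @Resource or @Autowired (both annotations work).

2. The service is configured with Scope="prototype" (a new instance is created for every request)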

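- Object-relational behavioral patterns: Identity Map

home198979

PHP, architecture, enterprise applications, object-relational, identity map

HELLO! Architecture

1. Concept

Identity Map: keeps every loaded object in a map so that each object is loaded only once; when an object is needed, it is looked up through the map. The Data Mapper code in the data source architectural patterns article already touched on the identity map: the getFromMap method of the Mapper class is an implementation of it.

2. Why use an identity map?

In the Data Mapper article of the data source architectural patterns series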

//c

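- The hosts file on Linux explained

pda158

linux

1. Hostnames: Whether on a LAN or on the Internet, every host has an IP address that distinguishes it from other hosts; in other words, the IP address is the host's street number. Public network: IP addresses are hard to remember, so domain names were introduced. Domain names exist only on the public network (the Internet); each domain name corresponds to an IP address, but one IP address can correspond to several domain names. LAN: every machine has a hostname that is used to tell hosts apart, so a hostname can be set for each machine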
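To make the name-to-address mapping concrete, here is a small Python sketch that parses hosts-file text (one IP address followed by one or more names per line, with `#` starting a comment). The function name `parse_hosts`, the sample addresses in the usage note, and the choice to keep the first entry seen for a name are assumptions of this sketch, not something stated in the post.

```python
def parse_hosts(text):
    """Map each hostname or alias in hosts-file text to its IP address."""
    mapping = {}
    for line in text.splitlines():
        # Drop anything after a '#' and surrounding whitespace.
        line = line.split("#", 1)[0].strip()
        if not line:
            continue
        ip, *names = line.split()
        for name in names:
            # Keep the first entry seen for a name (assumption of this sketch).
            mapping.setdefault(name, ip)
    return mapping
```

For instance, the line `192.168.1.10 web01.example.com web01` maps both `web01.example.com` and the shorter alias `web01` to the same address, which is how one IP address ends up with several names.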

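- A rough walkthrough of the nginx configuration file

spjich

java, nginx

# User to run as
#user nobody;
# Worker processes, usually set equal to the number of CPUs
worker_processes 2;
# Global error log and PID file
#error_log logs/error.log;
#error_log logs/error.log notice;
#error_log logs/error.log inf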

- Math functions

w54653520

java

public class S {

// Takes two integers, compares them, and returns the larger of the two.

public int get(int num1, int nu