Hive Case Study: Cleaning Zebra Business Data

Zebra Business Review

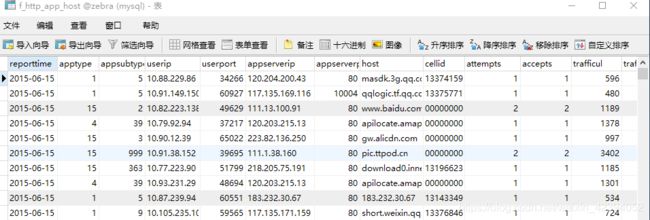

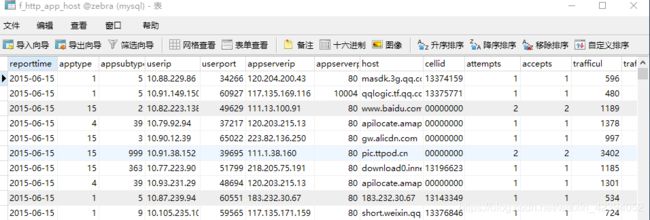

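In its first stage, the Zebra project analyzes the log files, computes statistics over 20 fields such as apptype and userip, and writes the final result to a database. The resulting table, f_http_app_host, serves as the master table.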

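In a production setting the work does not stop here, because later analysis may query and aggregate this table along several dimensions, for example:

1. Application popularity

2. Website performance

3. HTTP access capability per cell

4. Browsing preferences per cell

We can therefore create one table per dimension, pull the relevant data from f_http_app_host, aggregate it, and hand the result to the front end for visualization. The chart below, for example, visualizes application popularity.

It shows the 10 most popular applications, ranked by the total traffic each application generates (in general, more traffic means more users).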

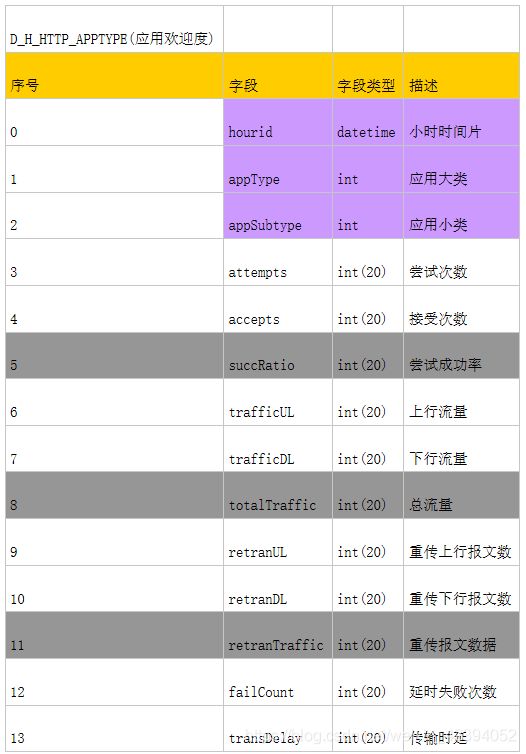

I. Application Popularity Table

create table D_H_HTTP_APPTYPE(

hourid datetime, apptype int, appsubtype int, attempts bigint, accepts bigint, succratio double, trafficul bigint, trafficdl bigint, totaltraffic bigint, retranul bigint, retrandl bigint, retrantraffic bigint, failcount bigint, transdelay bigint

);

Business description

October 25, 2016, 20:10

Meaning of each field after a record is split on | (only the fields used in this project are shown)

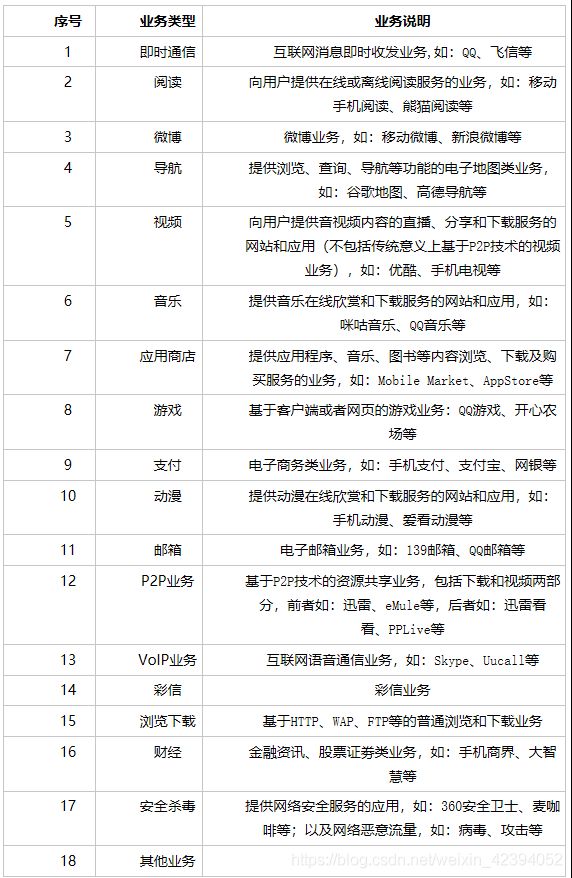

Application category (App Type)

Application subcategory (App sub-type)

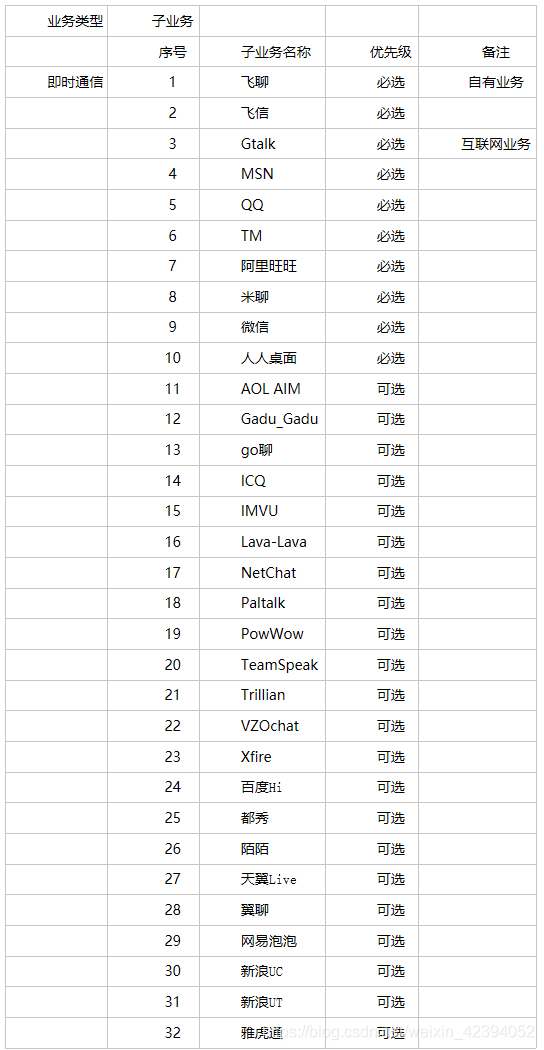

Sub-service identification capabilities required of the DPI device (partial)

Field processing logic

HttpAppHost hah = new HttpAppHost();

hah.setReportTime(reportTime);

// ID of the cell the user is connected to

hah.setCellid(data[16]);

// application category

hah.setAppType(Integer.parseInt(data[22]));

// application subcategory

hah.setAppSubtype(Integer.parseInt(data[23]));

// user IP

hah.setUserIP(data[26]);

// user port

hah.setUserPort(Integer.parseInt(data[28]));

// IP of the server being accessed

hah.setAppServerIP(data[30]);

// port of the server being accessed

hah.setAppServerPort(Integer.parseInt(data[32]));

// host name

hah.setHost(data[58]);

int appTypeCode = Integer.parseInt(data[18]);

String transStatus = data[54];

// Business logic

if (hah.getCellid() == null || hah.getCellid().equals("")) {

hah.setCellid("000000000");

}

if (appTypeCode == 103) {

hah.setAttempts(1);

}

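// Exact match against the list of accepted status codes (requires java.util.Arrays);

// a plain String.contains would also match fragments such as "1" or "20".

if (appTypeCode == 103

&& Arrays.asList("10,11,12,13,14,15,32,33,34,35,36,37,38,48,49,50,51,52,53,54,55,199,200,201,202,203,204,205,206,302,304,306".split(",")).contains(transStatus)) {

hah.setAccepts(1);

} else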

{

hah.setAccepts(0);

}

if(appTypeCode==103)

{

hah.setTrafficUL(Long.parseLong(data[33]));

}

if(appTypeCode==103)

{

hah.setTrafficDL(Long.parseLong(data[34]));

}

if(appTypeCode==103)

{

hah.setRetranUL(Long.parseLong(data[39]));

}

if(appTypeCode==103)

{

hah.setRetranDL(Long.parseLong(data[40]));

}

if(appTypeCode==103)

{

hah.setTransDelay(Long.parseLong(data[20]) - Long.parseLong(data[19]));

}

CharSequence key = hah.getReportTime() + "|" + hah.getAppType() + "|" + hah.getAppSubtype() + "|" + hah.getUserIP() + "|" + hah.getUserPort() + "|" + hah.getAppServerIP() + "|" + hah.getAppServerPort() + "|" + hah.getHost() + "|" + hah.getCellid();

if(map.containsKey(key))

{

HttpAppHost mapHah = map.get(key);

mapHah.setAccepts(mapHah.getAccepts() + hah.getAccepts());

mapHah.setAttempts(mapHah.getAttempts() + hah.getAttempts());

mapHah.setTrafficUL(mapHah.getTrafficUL() + hah.getTrafficUL());

mapHah.setTrafficDL(mapHah.getTrafficDL() + hah.getTrafficDL());

mapHah.setRetranUL(mapHah.getRetranUL() + hah.getRetranUL());

mapHah.setRetranDL(mapHah.getRetranDL() + hah.getRetranDL());

mapHah.setTransDelay(mapHah.getTransDelay() + hah.getTransDelay());

map.put(key, mapHah);

}else

{

map.put(key, hah);

}
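The merge step above (look up the composite key, sum the counters if it exists, otherwise store the record) can be sketched more compactly with `Map.merge`. This is only an illustration: `Counters` is a hypothetical, trimmed-down stand-in for `HttpAppHost` with four of its counters, and the key used in `main` is made-up sample data.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical stand-in for a few of the counters carried by HttpAppHost. */
final class Counters {
    final long attempts;
    final long accepts;
    final long trafficUL;
    final long trafficDL;

    Counters(long attempts, long accepts, long trafficUL, long trafficDL) {
        this.attempts = attempts;
        this.accepts = accepts;
        this.trafficUL = trafficUL;
        this.trafficDL = trafficDL;
    }

    /** Field-wise sum; returns a new object instead of mutating either input. */
    Counters plus(Counters other) {
        return new Counters(attempts + other.attempts, accepts + other.accepts,
                trafficUL + other.trafficUL, trafficDL + other.trafficDL);
    }
}

final class KeyedAggregation {
    /** Adds one record under its composite key, summing with any existing entry. */
    static void add(Map<String, Counters> totals, String key, Counters record) {
        totals.merge(key, record, Counters::plus);
    }

    public static void main(String[] args) {
        Map<String, Counters> totals = new HashMap<>();
        // Sample key in the same "|"-joined layout as the original code (values are invented).
        String key = "2018-09-06 00:00:00|15|9|10.0.0.1|80|10.0.0.2|8080|example.com|000000000";
        add(totals, key, new Counters(1, 1, 100, 200));
        add(totals, key, new Counters(1, 0, 50, 25));
        Counters c = totals.get(key);
        System.out.println(c.attempts + " " + c.accepts + " " + c.trafficUL + " " + c.trafficDL);
    }
}
```

`Map.merge` stores the record when the key is absent and otherwise replaces the entry with the result of `plus`, which is the same behavior as the explicit `containsKey` branch.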

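V. Zebra Business Description

In its first stage, the Zebra project analyzes the log files and writes the final result to a database. The resulting table serves as the master table.

Table definition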

create table F_HTTP_APP_HOST( reporttime datetime, apptype int,

appsubtype int, userip varchar(20), userport int,

appserverip varchar(20), appserverport int,

host varchar(255), cellid varchar(20), attempts bigint, accepts bigint, trafficul bigint, trafficdl bigint, retranul bigint, retrandl bigint, failcount bigint, transdelay bigint

);

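Later, the aggregated data may be split by business need into several query dimensions:

1. Application popularity

2. Website performance

3. HTTP access capability per cell

4. Browsing preferences per cell

Application popularity table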

create table D_H_HTTP_APPTYPE( hourid datetime,

apptype int, appsubtype int, attempts bigint, accepts bigint, succratio double, trafficul bigint, trafficdl bigint, totaltraffic bigint, retranul bigint, retrandl bigint, retrantraffic bigint, failcount bigint, transdelay bigint

);

Website performance table

Table definition:

# Create the website performance table

create table D_H_HTTP_HOST( hourid datetime,

host varchar(255), appserverip varchar(20), attempts bigint,

accepts bigint, succratio bigint, trafficul bigint, trafficdl bigint, totaltraffic bigint, retranul bigint, retrandl bigint, retrantraffic bigint, failcount bigint, transdelay bigint

);

HTTP access capability per cell table

# Create the per-cell HTTP access capability table

create table D_H_HTTP_CELLID(

hourid datetime, cellid varchar(20), attempts bigint, accepts bigint, succratio bigint, trafficul bigint, trafficdl bigint, totaltraffic bigint, retranul bigint, retrandl bigint, retrantraffic bigint, failcount bigint, transdelay bigint

);

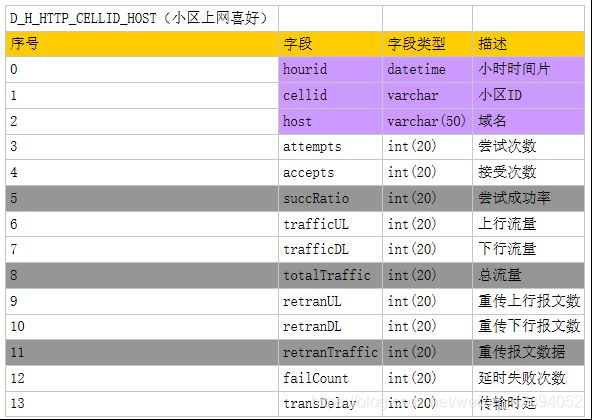

Browsing preferences per cell table

# Create the per-cell browsing preferences table

create table D_H_HTTP_CELLID_HOST( hourid datetime,

cellid varchar(20), host varchar(255), attempts bigint, accepts bigint, succratio bigint, trafficul bigint,

trafficdl bigint, totaltraffic bigint, retranul bigint, retrandl bigint, retrantraffic bigint, failcount bigint, transdelay bigint

);

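Web Application for Displaying Zebra Data

This application only reports on the application popularity table (D_H_HTTP_APPTYPE).

Steps:

1. Create the database table

2. Insert test data

3. Build the web application

4. Start the Tomcat server

5. Test in a browser: open http://localhost:port/project-name (with your own port and project name) to see the final result.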

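Implementing Zebra with Hive

Workflow:

Collect data with Flume --> land it in HDFS --> create a Hive external table over the collected logs in HDFS --> implement the Zebra business logic in HQL --> export the processed data from HDFS to MySQL with Sqoop

How the Flume component works:

Flume collects logs by day, and Hive uses the day as the partition key, so each day's logs are analyzed separately. Finally, the results computed by Hive are exported to a relational database with Sqoop for visualization.

There are two ways to record the time: extract the date information from the log file name, or have Flume record the current date as it collects the logs. We use the second approach.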
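With the second approach, Flume's timestamp interceptor stamps each event with a `timestamp` header (epoch milliseconds), and the HDFS sink expands `%Y-%m-%d` in its path from that header. The sketch below reproduces that mapping in plain Java so the resulting directory names are easy to predict. `PartitionPath` and its method are hypothetical names, and the time zone is passed explicitly as an assumption; the zone Flume actually uses depends on the sink configuration.

```java
import java.time.Instant;
import java.time.ZoneId;
import java.time.format.DateTimeFormatter;

final class PartitionPath {
    private static final DateTimeFormatter DAY = DateTimeFormatter.ofPattern("yyyy-MM-dd");

    /** Maps an event timestamp (epoch milliseconds) to its daily partition directory. */
    static String forTimestamp(String baseDir, long epochMillis, ZoneId zone) {
        String day = DAY.format(Instant.ofEpochMilli(epochMillis).atZone(zone));
        return baseDir + "/reportTime=" + day;
    }

    public static void main(String[] args) {
        // Prints the directory that an event collected right now would land in.
        System.out.println(forTimestamp("/zebra", System.currentTimeMillis(), ZoneId.systemDefault()));
    }
}
```

Events collected shortly before and after midnight land in different directories, and which side of midnight an event falls on depends on the zone, so the zone matters when reconciling partitions with the source logs.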

Flume Configuration

Example configuration:

a1.sources=r1

a1.channels=c1

a1.sinks=s1

a1.sources.r1.type=spooldir

a1.sources.r1.spoolDir=/home/zebra

a1.sources.r1.interceptors=i1

a1.sources.r1.interceptors.i1.type=timestamp

a1.sinks.s1.type=hdfs

a1.sinks.s1.hdfs.path=hdfs://192.168.150.137:9000/zebra/reportTime=%Y-%m-%d

a1.sinks.s1.hdfs.fileType=DataStream

a1.sinks.s1.hdfs.rollInterval=30

a1.sinks.s1.hdfs.rollSize=0

a1.sinks.s1.hdfs.rollCount=0

a1.channels.c1.type=memory

a1.sources.r1.channels=c1

a1.sinks.s1.channel=c1

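Upload the log files to be processed to /home/zebra. They are picked up by the Flume agent on virtual machine 01 and end up in HDFS.

Note: do not upload log files with rz directly into the directory Flume is watching (/root/work/data/flumedata in the original setup). rz transfers a file incrementally, so Flume sees the size of a file it is already processing change and reports an error. Upload the logs to another directory on the Linux machine first, then move them into the watched directory with mv.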
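The same "write elsewhere, then move" rule applies when a program rather than a person delivers the logs. The sketch below shows one way to do it in Java with an atomic rename, so the watched directory never contains a half-written file. `SpoolDrop` is a hypothetical helper; the demo runs on temporary directories rather than the real spooling directory, and an atomic move is only possible when both directories are on the same file system.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardCopyOption;

final class SpoolDrop {
    /** Moves a fully written file into the spooling directory in a single rename. */
    static Path drop(Path finishedFile, Path spoolDir) {
        try {
            Path target = spoolDir.resolve(finishedFile.getFileName());
            return Files.move(finishedFile, target, StandardCopyOption.ATOMIC_MOVE);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Demo on temporary directories; returns true if the file arrived intact. */
    static boolean demo() {
        try {
            Path root = Files.createTempDirectory("zebra-demo");
            Path staging = Files.createDirectory(root.resolve("staging"));
            Path spool = Files.createDirectory(root.resolve("spool"));
            Path log = staging.resolve("sample.log");
            // Finish writing in the staging directory first (6 bytes of sample data).
            Files.write(log, "a|b|c\n".getBytes(StandardCharsets.UTF_8));
            Path moved = drop(log, spool);
            return Files.exists(moved) && !Files.exists(log) && Files.size(moved) == 6;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(demo());
    }
}
```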

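Hive Component Workflow (you do not need to write the table definitions yourself; the point is to understand the whole ETL process, which is important)

Using Hive, create the zebra database

Run: create database zebra;

Run: use zebra;

Then create the external partitioned table and add its partitions

Full table definition: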

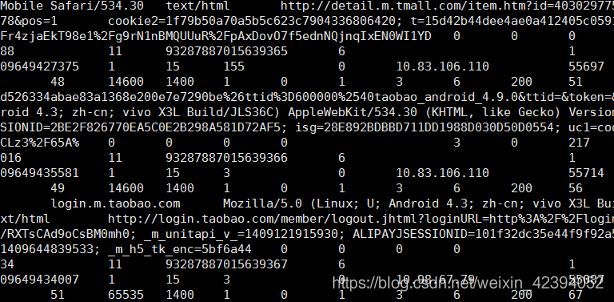

create EXTERNAL table zebra (a1 string,a2 string,a3 string,a4 string,a5 string,a6 string,a7 string,a8 string,a9 string,a10 string,a11 string,a12 string,a13 string,a14 string,a15 string,a16 string,a17 string,a18 string,a19 string,a20 string,a21 string,a22 string,a23 string,a24 string,a25 string,a26 string,a27 string,a28 string,a29 string,a30 string,a31 string,a32 string,a33 string,a34 string,a35 string,a36 string,a37 string,a38 string,a39 string,a40 string,a41 string,a42 string,a43 string,a44 string,a45 string,a46 string,a47 string,a48 string,a49 string,a50 string,a51 string,a52 string,a53 string,a54 string,a55 string,a56 string,a57 string,a58 string,a59 string,a60 string,a61 string,a62 string,a63 string,a64 string,a65 string,a66 string,a67 string,a68 string,a69 string,a70 string,a71 string,a72 string,a73 string,a74 string,a75 string,a76 string,a77 string) partitioned by (reporttime string) row format delimited fields terminated by '|' stored as textfile location '/zebra';

Add a partition

Run: ALTER TABLE zebra add PARTITION (reportTime='2018-09-06') location '/zebra/reportTime=2018-09-06';
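Because Flume creates one directory per day, this statement has to be issued once for every new date. As an illustration, the statement can be generated from the date instead of being typed by hand. `PartitionDdl` is a hypothetical helper that only builds the text; running it against Hive is left out. The `IF NOT EXISTS` clause is an addition that is not in the statement above, included so that re-running the statement for the same day is harmless.

```java
import java.time.LocalDate;

final class PartitionDdl {
    /** Builds the ADD PARTITION statement for one day of logs. */
    static String addPartition(String table, String baseDir, LocalDate day) {
        // LocalDate.toString() yields ISO yyyy-MM-dd, matching the directory names Flume writes.
        return "ALTER TABLE " + table + " ADD IF NOT EXISTS PARTITION (reporttime='" + day
                + "') LOCATION '" + baseDir + "/reportTime=" + day + "';";
    }

    public static void main(String[] args) {
        System.out.println(addPartition("zebra", "/zebra", LocalDate.of(2018, 9, 6)));
    }
}
```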

Run a query to check that data can be read

Sampling syntax offers a quick check: select * from zebra TABLESAMPLE (1 ROWS);

Clean the data, reducing the original 77 fields to 18

Table definition:

create table dataclear(reporttime string,appType bigint,appSubtype bigint,userIp string,userPort bigint,appServerIP string,appServerPort bigint,host string,cellid string,appTypeCode bigint,interruptType String,transStatus bigint,trafficUL bigint,trafficDL bigint,retranUL bigint,retranDL bigint,procdureStartTime bigint,procdureEndTime bigint) row format delimited fields terminated by '|';

Load data from the zebra table into the dataclear table (values for the 18 fields)

Statement:

insert overwrite table dataclear select concat(reporttime,' ','00:00:00'),a23,a24,a27,a29,a31,a33,a59,a17,a19,a68,a55,a34,a35,a40,a41,a20,a21 from zebra;
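The column numbers in this select line up with the array indexes in the earlier Java code: Hive column aN holds the same field as `data[N-1]` after splitting a record on `|`. The sketch below performs the same projection on a single raw line, which can help when checking a few records by hand. `ZebraLineCleaner` is a hypothetical helper and the input used in `main` is synthetic.

```java
import java.util.regex.Pattern;

final class ZebraLineCleaner {
    // 1-based column numbers, in the order of the select list (a23, a24, ..., a21).
    private static final int[] COLUMNS =
            {23, 24, 27, 29, 31, 33, 59, 17, 19, 68, 55, 34, 35, 40, 41, 20, 21};
    private static final Pattern PIPE = Pattern.compile("\\|");

    /** Returns the 18 cleaned fields: report time first, then the selected columns. */
    static String[] clean(String reportDate, String rawLine) {
        // A limit of -1 keeps trailing empty fields, so positions stay aligned.
        String[] data = PIPE.split(rawLine, -1);
        if (data.length < 68) {
            throw new IllegalArgumentException("expected at least 68 fields, got " + data.length);
        }
        String[] out = new String[COLUMNS.length + 1];
        out[0] = reportDate + " 00:00:00";
        for (int i = 0; i < COLUMNS.length; i++) {
            out[i + 1] = data[COLUMNS[i] - 1]; // column aN is data[N-1]
        }
        return out;
    }

    public static void main(String[] args) {
        // Synthetic record whose N-th field is the text "fN".
        StringBuilder line = new StringBuilder();
        for (int i = 1; i <= 77; i++) {
            if (i > 1) line.append('|');
            line.append('f').append(i);
        }
        System.out.println(String.join("|", clean("2018-09-06", line.toString())));
    }
}
```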

Apply the business logic to produce the dataproc table

Table definition:

create table dataproc (reporttime string,appType bigint,appSubtype bigint,userIp string,userPort bigint,appServerIP string,appServerPort bigint,host string,cellid string,attempts bigint,accepts bigint,trafficUL bigint,trafficDL bigint,retranUL bigint,retranDL bigint,failCount bigint,transDelay bigint) row format delimited fields terminated by '|';

Process the fields according to the business rules

Statement:

insert overwrite table dataproc select reporttime,appType,appSubtype,userIp,userPort,appServerIP,appServerPort,host,if(cellid == '',"000000000",cellid),if(appTypeCode == 103,1,0),if(appTypeCode == 103 and find_in_set(transStatus,"10,11,12,13,14,15,32,33,34,35,36,37,38,48,49,50,51,52,53,54,55,199,200,201,202,203,204,205,206,302,304,306")!=0 and interruptType == 0,1,0),if(apptypeCode == 103,trafficUL,0), if(apptypeCode == 103,trafficDL,0), if(apptypeCode == 103,retranUL,0), if(apptypeCode == 103,retranDL,0), if(appTypeCode == 103 and transStatus == 1 and interruptType == 0,1,0),if(appTypeCode == 103, procdureEndTime - procdureStartTime,0) from dataclear;
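To make the rules in this statement easier to test in isolation, here is a sketch of the attempts, accepts, and failCount conditions as plain Java functions. `find_in_set` matches whole list elements, so the sketch uses a set lookup rather than a substring search. `HttpRules` is a hypothetical class, and comparing `interruptType` and `transStatus` as the strings "0" and "1" is a simplifying assumption; Hive compares them numerically.

```java
import java.util.Arrays;
import java.util.Collections;
import java.util.HashSet;
import java.util.Set;

final class HttpRules {
    /** The app type code the statement filters on (its meaning is not stated in the source). */
    static final int TARGET_APP_TYPE_CODE = 103;

    private static final Set<String> ACCEPTED_STATUSES = Collections.unmodifiableSet(
            new HashSet<>(Arrays.asList(("10,11,12,13,14,15,32,33,34,35,36,37,38,48,49,50,51,52,"
                    + "53,54,55,199,200,201,202,203,204,205,206,302,304,306").split(","))));

    static int attempts(int appTypeCode) {
        return appTypeCode == TARGET_APP_TYPE_CODE ? 1 : 0;
    }

    /** 1 when the status is one of the accepted codes and the request was not interrupted. */
    static int accepts(int appTypeCode, String transStatus, String interruptType) {
        boolean ok = appTypeCode == TARGET_APP_TYPE_CODE
                && ACCEPTED_STATUSES.contains(transStatus) // whole-element match, like find_in_set
                && "0".equals(interruptType);
        return ok ? 1 : 0;
    }

    /** 1 when the status is 1 and the request was not interrupted. */
    static int failCount(int appTypeCode, String transStatus, String interruptType) {
        boolean failed = appTypeCode == TARGET_APP_TYPE_CODE
                && "1".equals(transStatus)
                && "0".equals(interruptType);
        return failed ? 1 : 0;
    }

    public static void main(String[] args) {
        System.out.println(accepts(103, "200", "0") + " " + accepts(103, "20", "0")
                + " " + failCount(103, "1", "0"));
    }
}
```

A status such as "20" is a fragment of "200" but is not an element of the list, so it is not counted as accepted.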

Query the information of interest, taking the application popularity table as an example:

Table definition:

create table D_H_HTTP_APPTYPE(hourid string,appType int,appSubtype int,attempts bigint,accepts bigint,succRatio double,trafficUL bigint,trafficDL bigint,totalTraffic bigint,retranUL bigint,retranDL bigint,retranTraffic bigint,failCount bigint,transDelay bigint) row format delimited fields terminated by '|';

Aggregate the master table dataproc by the grouping keys and sum the fields

Statement:

insert overwrite table D_H_HTTP_APPTYPE select reporttime,apptype,appsubtype,sum(attempts),sum(accepts),round(sum(accepts)/sum(attempts),2),sum(trafficUL),sum(trafficDL),sum(trafficUL)+sum(trafficDL),sum(retranUL),sum(retranDL),sum(retranUL)+sum(retranDL),sum(failCount),sum(transDelay) from dataproc group by reporttime,apptype,appsubtype;
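The one derived value in this statement that needs care is the success ratio, `round(sum(accepts)/sum(attempts),2)`. A group can have zero attempts, because rows whose appTypeCode is not 103 contribute 0. The sketch below shows the same calculation in Java with that case handled explicitly. `SuccessRatio` is a hypothetical helper; returning null for zero attempts and rounding half up are assumptions about the intended behavior, not something the source specifies.

```java
import java.math.BigDecimal;
import java.math.RoundingMode;

final class SuccessRatio {
    /** accepts / attempts rounded to two decimals, or null when there were no attempts. */
    static BigDecimal of(long accepts, long attempts) {
        if (attempts == 0) {
            return null; // no attempts: the ratio is undefined rather than zero
        }
        return BigDecimal.valueOf(accepts)
                .divide(BigDecimal.valueOf(attempts), 2, RoundingMode.HALF_UP);
    }

    public static void main(String[] args) {
        System.out.println(of(2, 3));
        System.out.println(of(0, 0));
    }
}
```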

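Query the top 5 most popular apps (order by gives a global ordering, whereas sort by only orders rows within each reducer)

select hourid,apptype,sum(totalTraffic) as tt from D_H_HTTP_APPTYPE group by hourid,apptype order by tt desc limit 5;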
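The ranking this query performs (sum traffic per app type, sort descending, keep the first few) can be sketched in Java as follows, for example to check a small exported sample. `TopApps` is a hypothetical helper, it expects the traffic to be already summed per app type, and the numbers in `main` are invented.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

final class TopApps {
    /** Returns the n app types with the largest total traffic, largest first. */
    static List<Map.Entry<Integer, Long>> top(Map<Integer, Long> trafficByAppType, int n) {
        List<Map.Entry<Integer, Long>> entries = new ArrayList<>(trafficByAppType.entrySet());
        // Sort over the whole data set, which is what a global ordering means.
        entries.sort(Map.Entry.<Integer, Long>comparingByValue().reversed());
        return new ArrayList<>(entries.subList(0, Math.min(n, entries.size())));
    }

    public static void main(String[] args) {
        Map<Integer, Long> traffic = new HashMap<>();
        traffic.put(1, 100L);
        traffic.put(2, 300L);
        traffic.put(3, 200L);
        for (Map.Entry<Integer, Long> e : top(traffic, 2)) {
            System.out.println(e.getKey() + " " + e.getValue());
        }
    }
}
```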

Sqoop Component Workflow:

Export the Hive table to the MySQL database, then visualize the data with a web application plus ECharts.

Steps:

1. Create the corresponding table in MySQL

2. Export the d_h_http_apptype table with Sqoop

Export command:

sh sqoop export --connect jdbc:mysql://hadoop03:3306/zebra --username root --password root --export-dir '/user/hive/warehouse/zebra.db/d_h_http_apptype/000000_0' --table D_H_HTTP_APPTYPE -m 1 --fields-terminated-by '|'