Two ways to implement attention in PyTorch

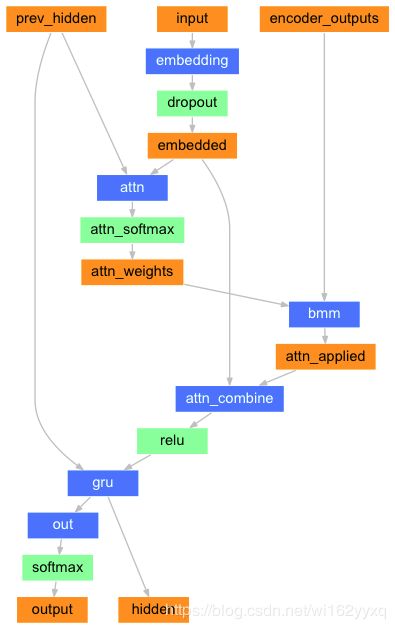

    import torch
    import torch.nn as nn
    import torch.nn.functional as F

    # Assumed setup: the snippet uses MAX_LENGTH and device without defining
    # them. MAX_LENGTH must cover the longest source sequence; 10 is a placeholder.
    MAX_LENGTH = 10
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")


    class AttnDecoderRNN(nn.Module):
        def __init__(self, hidden_size, output_size, dropout_p=0.1, max_length=MAX_LENGTH):
            super(AttnDecoderRNN, self).__init__()
            self.hidden_size = hidden_size
            self.output_size = output_size
            self.dropout_p = dropout_p
            self.max_length = max_length

            self.embedding = nn.Embedding(self.output_size, self.hidden_size)
            # Maps [embedded; hidden] to one score per source position.
            self.attn = nn.Linear(self.hidden_size * 2, self.max_length)
            self.attn_combine = nn.Linear(self.hidden_size * 2, self.hidden_size)
            self.dropout = nn.Dropout(self.dropout_p)
            self.gru = nn.GRU(self.hidden_size, self.hidden_size)
            self.out = nn.Linear(self.hidden_size, self.output_size)

        def forward(self, input, hidden, encoder_outputs):
            # Single token, batch size 1: (1, 1, hidden_size)
            embedded = self.embedding(input).view(1, 1, -1)
            embedded = self.dropout(embedded)

            # Attention weights over the max_length source positions: (1, max_length)
            attn_weights = F.softmax(
                self.attn(torch.cat((embedded[0], hidden[0]), 1)), dim=1)
            # Weighted sum of encoder outputs: (1, 1, hidden_size)
            attn_applied = torch.bmm(attn_weights.unsqueeze(0),
                                     encoder_outputs.unsqueeze(0))

            output = torch.cat((embedded[0], attn_applied[0]), 1)
            output = self.attn_combine(output).unsqueeze(0)

            output = F.relu(output)
            output, hidden = self.gru(output, hidden)

            output = F.log_softmax(self.out(output[0]), dim=1)
            return output, hidden, attn_weights

        def initHidden(self):
            return torch.zeros(1, 1, self.hidden_size, device=device)
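The attention step of this first decoder can be isolated on raw tensors. The following is a minimal sketch, assuming illustrative sizes (`hidden_size = 8`, `max_length = 5`) and random inputs that are not from the original post. It shows that the weights are computed from the embedded token and the hidden state only, and that the output width of the `attn` layer fixes the number of source positions at `max_length`.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

# Illustrative sizes (assumptions, not values from the original post).
hidden_size, max_length = 8, 5

attn = nn.Linear(hidden_size * 2, max_length)

embedded = torch.randn(1, 1, hidden_size)               # embedded previous token
hidden = torch.zeros(1, 1, hidden_size)                 # previous GRU hidden state
encoder_outputs = torch.randn(max_length, hidden_size)  # one row per source position

# One weight per source position, computed from decoder-side inputs only.
attn_weights = F.softmax(attn(torch.cat((embedded[0], hidden[0]), 1)), dim=1)

# Weighted sum of the encoder outputs: (1, 1, L) @ (1, L, H) -> (1, 1, H).
attn_applied = torch.bmm(attn_weights.unsqueeze(0), encoder_outputs.unsqueeze(0))

print(attn_weights.shape)
print(attn_applied.shape)
```

Because the encoder outputs never enter the score computation here, `encoder_outputs` has to be padded to exactly `max_length` rows before being passed to the decoder.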

    class AttentiondecoderV2(nn.Module):
        """
        Seq2seq decoder with a modified attention-weight computation.
        """

        def __init__(self, hidden_size, output_size, dropout_p=0.1):
            super(AttentiondecoderV2, self).__init__()
            self.hidden_size = hidden_size
            self.output_size = output_size
            self.dropout_p = dropout_p

            self.embedding = nn.Embedding(self.output_size, self.hidden_size)
            self.attn_combine = nn.Linear(self.hidden_size * 2, self.hidden_size)
            self.dropout = nn.Dropout(self.dropout_p)
            self.gru = nn.GRU(self.hidden_size, self.hidden_size)
            self.out = nn.Linear(self.hidden_size, self.output_size)

            # Scoring layer: reduces each feature vector to a single score.
            self.vat = nn.Linear(hidden_size, 1)

        def forward(self, input, hidden, encoder_outputs):
            embedded = self.embedding(input)  # embed the previous output token
            embedded = self.dropout(embedded)

            # encoder_outputs: (seq_len, batch, hidden); hidden: (1, batch, hidden)
            batch_size = encoder_outputs.shape[1]

            alpha = hidden + encoder_outputs  # feature fusion; either + or concat works
            alpha = alpha.view(-1, alpha.shape[-1])
            # Reduce the feature dimension to 1: one score per (position, batch) pair
            attn_weights = self.vat(torch.tanh(alpha))
            attn_weights = attn_weights.view(-1, 1, batch_size).permute((2, 1, 0))
            attn_weights = F.softmax(attn_weights, dim=2)  # (batch, 1, seq_len)

            # The first version computed the weights from the previous output
            # and the hidden state instead:
            # attn_weights = F.softmax(
            #     self.attn(torch.cat((embedded, hidden[0]), 1)), dim=1)

            # Batched matrix product, e.g. (8, 1, 56) x (8, 56, 256) -> (8, 1, 256)
            attn_applied = torch.matmul(attn_weights,
                                        encoder_outputs.permute((1, 0, 2)))

            # Previous output and attention feature go through a linear layer, then the GRU
            output = torch.cat((embedded, attn_applied.squeeze(1)), 1)
            output = self.attn_combine(output).unsqueeze(0)

            output = F.relu(output)
            output, hidden = self.gru(output, hidden)

            # Final log-probabilities over the output vocabulary
            output = F.log_softmax(self.out(output[0]), dim=1)
            return output, hidden, attn_weights

        def initHidden(self, batch_size):
            # Plain tensors replace the deprecated Variable wrapper
            result = torch.zeros(1, batch_size, self.hidden_size)
            return result

The figure above shows the first implementation, AttnDecoderRNN.
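The scoring used by the second decoder can also be checked on raw tensors. This is a minimal sketch, assuming illustrative sizes and random inputs; the names `scores`, `context`, and `reference` are introduced here and do not appear in the original. It computes the scores by applying `vat` directly to the 3-D tensor, then compares the result with the view/permute path used in the class.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

# Illustrative sizes (assumptions, not values from the original post).
seq_len, batch_size, hidden_size = 6, 3, 8

vat = nn.Linear(hidden_size, 1)

hidden = torch.randn(1, batch_size, hidden_size)
encoder_outputs = torch.randn(seq_len, batch_size, hidden_size)

# Broadcasting adds the hidden state to every source position.
scores = vat(torch.tanh(hidden + encoder_outputs))         # (seq_len, batch, 1)

# Put batch first, then normalise over the source positions.
attn_weights = F.softmax(scores.permute(1, 2, 0), dim=2)   # (batch, 1, seq_len)

# Context vector per batch element: (batch, 1, seq_len) @ (batch, seq_len, hidden).
context = torch.bmm(attn_weights, encoder_outputs.permute(1, 0, 2))

# Same computation via the flatten / view / permute path from the class.
flat = vat(torch.tanh((hidden + encoder_outputs).view(-1, hidden_size)))
reference = F.softmax(flat.view(-1, 1, batch_size).permute(2, 1, 0), dim=2)

print(attn_weights.shape, context.shape)
print(torch.allclose(attn_weights, reference))
```

Unlike the first version, the scores here depend on the encoder outputs themselves, so the source length is not tied to a fixed `max_length` and the step works on a whole batch at once.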