Scraping the School and Department Lists from 超级课程表 (Super Course Table) with Python 3

- Scraping the school and department lists from 超级课程表 with Python 3

- Installing the software

- Capturing the data

- Writing the program

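Scraping the school and department lists from 超级课程表 with Python 3

There are three steps: 1. install the software, 2. capture the data, 3. write the program.

Installing the software

Python 3, with whichever IDE you prefer (I use the Jupyter Notebook that ships with Anaconda); Fiddler 5.0 for packet capture; and the latest version of the 超级课程表 mobile app.

Capturing the data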

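1. Set up the capture environment. First put the computer and the phone on the same Wi-Fi network. On the phone, open the wireless network settings and, while adding the Wi-Fi network (if it is already connected, long-press its name and choose "Modify network"), expand the advanced options. Set "Proxy" to "Manual", enter the computer's IP address under "Server hostname", and enter the capture port under "Server port". The port must match the one Fiddler listens on, which is 8888 by default, and it must not clash with another program: in my case the Jupyter Notebook from Anaconda also defaulted to 8888, which left me stuck on 403 responses for a long time. Once this is done, use the app on the phone as usual so that it makes network requests; if they show up in Fiddler, the environment is ready.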
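The port clash described above can be checked before starting a capture. The following is a minimal sketch using only the standard library; the helper name `port_in_use` is hypothetical, and 8888 is simply the Fiddler default mentioned above.

```python
import socket


def port_in_use(port, host="127.0.0.1"):
    """Return True if something is already listening on host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as probe:
        probe.settimeout(1.0)
        # connect_ex returns 0 when the connection succeeds,
        # i.e. when another program is listening on that port.
        return probe.connect_ex((host, port)) == 0


if __name__ == "__main__":
    # 8888 is the Fiddler default; a running Jupyter Notebook may hold it too.
    print("port 8888 in use:", port_in_use(8888))
```

If this reports the port as busy before Fiddler is started, another program already holds it, and either that program or Fiddler needs a different port.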

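2. Start capturing. Open the 超级课程表 app on the phone, go to your profile page, and choose to change your school (a prompt warns that only two changes are allowed; ignore it, since nothing changes as long as you do not save). Then type any school into the input box (I shamelessly picked Tsinghua University).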

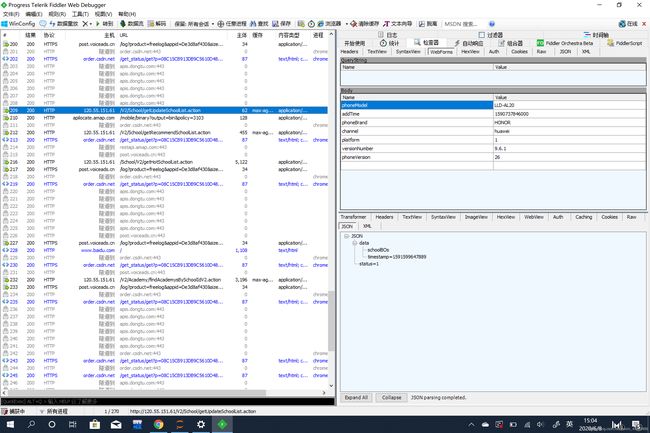

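A request we sent then shows up in Fiddler.

This puzzled me at first: in the app the school is found by text search, yet the network request posts a schoolId, so there has to be a table that maps one to the other. Watching the flow again, I found a request named updateschoolList, but its response was empty, so I suspect the table is cached in a local file on the phone. (If anyone registers only after the capture has started, please check what that request returns and leave me a comment.)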
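To see which fields the app actually posts, a captured form body can be decoded into a dictionary. This is a minimal sketch with the standard library: the `captured` string is the department request body used later in this post with schoolId 1001, the helper names `decode_form` and `with_school_id` are hypothetical, and 1002 is an arbitrary id chosen only for illustration.

```python
from urllib.parse import parse_qsl, urlencode

# Form body of the department request used later in this post (schoolId 1001).
captured = (
    "phoneModel=LLD-AL20&phoneBrand=HONOR&schoolId=1001"
    "&channel=huawei&platform=1&versionNumber=9.6.1&phoneVersion=26&"
)


def decode_form(body):
    """Turn an application/x-www-form-urlencoded body into a dict."""
    # parse_qsl skips the empty field left by the trailing "&".
    return dict(parse_qsl(body))


def with_school_id(body, school_id):
    """Return the same form body with a different schoolId."""
    fields = decode_form(body)
    fields["schoolId"] = str(school_id)
    return urlencode(fields)


fields = decode_form(captured)
print(fields["schoolId"])
print(with_school_id(captured, 1002))  # 1002 is an arbitrary example id
```

Decoding the body this way makes it clear that the only school-specific field is `schoolId`; everything else describes the phone and the app version.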

Writing the program

The plan:

Simulate the request → receive the response → unpack it → write it to a file
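The unpacking step can be tried offline. The sketch below assumes the response text is JSON shaped like `{"data": {"schoolBOs": [...]}}`, which is the shape the code in this post reads; the sample payload and its `valid` field are made up for illustration, and `parse_school_list` is a hypothetical helper. It parses the text with the standard `json` module, which understands JSON literals such as `false` that are not valid Python names.

```python
import json

# Made-up sample in the shape the code in this post expects.
sample_text = (
    '{"data": {"schoolBOs": ['
    '{"name": "清华大学", "schoolId": 1001, "valid": false},'
    '{"name": "", "schoolId": 0, "valid": true}'
    ']}}'
)


def parse_school_list(text):
    """Extract name/id pairs from the raw response text."""
    payload = json.loads(text)  # handles false/true/null natively
    return [
        {"name": school["name"], "id": school["schoolId"]}
        for school in payload["data"]["schoolBOs"]
    ]


schools = parse_school_list(sample_text)
print(schools)
```

Entries with an empty name, like the second one in the sample, are the ones the crawler skips before requesting departments.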

    import csv

    import requests


    def get_school_name():
        """Return a list of {"name", "id"} dicts, one per school."""
        headers = {
            "Cookie": "JSESSIONID=289617D002DA1E5DBAB1F9B6851AB63C-memcached1; Path=/; HttpOnly",
            "User-Agent": "Dalvik/2.1.0 (Linux; U; Android 8.0.0; LLD-AL20 Build/HONORLLD-AL20)-SuperFriday_9.6.1",
            "Content-Type": "application/x-www-form-urlencoded; charset=UTF-8",
            # Content-Length is left out: requests computes it from the body.
            "Host": "120.55.151.61",
            "Connection": "Keep-Alive",
            "Accept-Encoding": "gzip",
        }
        data = "phoneModel=LLD-AL20&searchType=5&phoneBrand=HONOR&channel=huawei&page=1&platform=1&versionNumber=9.6.1&content=160027&phoneVersion=26&timestamp=0&"
        url = "http://120.55.151.61/V2/School/getUpdateSchoolList.action"
        # This fetches the schoolID/name dictionary via the 下课聊 forum section.
        s = requests.Session()
        r = s.post(url=url, data=data, headers=headers)
        print(r.status_code)
        # print(r.text)
        # r.json() parses JSON literals such as false directly; calling eval()
        # on the raw text fails on them with a NameError.
        school_list = r.json()["data"]["schoolBOs"]
        school_dict = []  # despite the name, a list of dicts
        for school in school_list:
            school_dict.append({"name": school["name"], "id": school["schoolId"]})
        # print(school_dict)
        return school_dict

    def get_school_content(s_list):
        """Fetch the department names for every school in s_list."""
        s = requests.Session()
        url = "http://120.55.151.61/V2/Academy/findBySchoolId.action"
        headers = {
            "Cookie": "JSESSIONID=289617D002DA1E5DBAB1F9B6851AB63C-memcached1; Path=/; HttpOnly",
            "User-Agent": "Dalvik/2.1.0 (Linux; U; Android 8.0.0; LLD-AL20 Build/HONORLLD-AL20)-SuperFriday_9.6.1",
            "Content-Type": "application/x-www-form-urlencoded; charset=UTF-8",
            # Content-Length is left out: requests computes it from the body.
            "Host": "120.55.151.61",
            "Connection": "Keep-Alive",
            "Accept-Encoding": "gzip",
        }
        school_dict_list = []
        print("Crawl started")
        for school in s_list:
            # Skip entries that have no school name.
            if school["name"] != "":
                print(school["name"], school["id"])
                data = "phoneModel=LLD-AL20&phoneBrand=HONOR&schoolId=" + str(school["id"]) + "&channel=huawei&platform=1&versionNumber=9.6.1&phoneVersion=26&"
                # data = "phoneModel=LLD-AL20&phoneBrand=HONOR&schoolId=" + '1001' + "&channel=huawei&platform=1&versionNumber=9.6.1&phoneVersion=26&"
                r = s.post(url=url, data=data, headers=headers)
                # Parse the body as JSON instead of calling eval() on it.
                content = r.json()["data"]
                temp_list = []
                # print(r.text)
                for item in content:
                    temp_list.append(item["name"])
                school_dict_list.append({"name": school["name"], "id": school["id"], "content": temp_list})
        print("Crawl finished")
        return school_dict_list

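    def save1(school_dict_list):
        """Dump the whole list to a text file as a Python literal."""
        with open("C:\\Users\\KSH\\Desktop\\爬虫.txt", "w", encoding="utf-8") as f:
            f.write(str(school_dict_list))


    def save2(school_dict_list):
        """Write one CSV row per school: name, schoolID, then its departments."""
        # newline="" keeps the csv module from writing blank rows on Windows;
        # utf-8-sig lets Excel detect the encoding of the Chinese names.
        with open("C:\\Users\\KSH\\Desktop\\爬虫.csv", "w", newline="", encoding="utf-8-sig") as f:
            csv_writer = csv.writer(f)
            csv_writer.writerow(["University", "schoolID", "Departments"])
            for school in school_dict_list:
                contentList = [school["name"], school["id"]]
                contentList.extend(school["content"])
                csv_writer.writerow(contentList)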

    # a = [{"name":"清华大学","id":"1001"},{"name":"清华大学","id":"1001"}]
    a = get_school_name()
    b = get_school_content(a)
    save2(b)
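The row layout that `save2` produces can be checked without touching the disk or the network. This is a minimal sketch that writes to an in-memory buffer and leaves out the header row; the helper name `rows_to_csv`, the department names, and the second school are made up for illustration.

```python
import csv
import io


def rows_to_csv(school_dict_list):
    """Write one row per school: name, id, then one column per department."""
    buffer = io.StringIO(newline="")
    writer = csv.writer(buffer)
    for school in school_dict_list:
        writer.writerow([school["name"], school["id"], *school["content"]])
    return buffer.getvalue()


# Made-up sample data in the shape returned by get_school_content.
sample = [
    {"name": "清华大学", "id": 1001, "content": ["Dept A", "Dept B"]},
    {"name": "School X", "id": 2, "content": []},
]

text = rows_to_csv(sample)
rows = list(csv.reader(io.StringIO(text, newline="")))
print(rows)
```

Because schools have different numbers of departments, the rows have different lengths; anything that reads the file back should not assume a fixed column count.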

Download the full code

Download the scraped CSV file

Reference:

爬虫再探实战(五)———爬取APP数据——超级课程表【一】——不秩稚童