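(2) Python 3: Methods and Tips for Extracting the Main Text of Web Pages

This article only introduces a few simple, easy-to-use ways to extract the main text of a web page; it does not go into the principles behind text extraction.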

newspaper

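A very feature-rich package. Besides main-text extraction it also supports translation (with no character limit) and keyword extraction, is fairly accurate, and comes with NLP-related corpora. It is available for both Python 2 (the newspaper package) and Python 3 (the newspaper3k package).

Installation: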

pip3 install newspaper3k

Usage example, taking a news report on this year's National Day military parade:

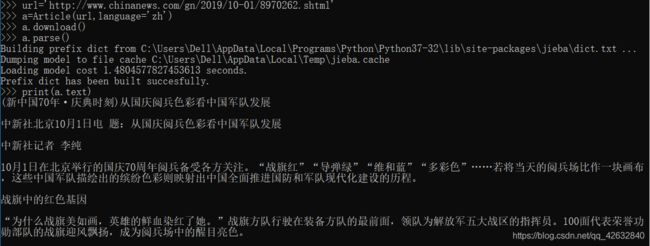

from newspaper import Article

url = 'http://www.chinanews.com/gn/2019/10-01/8970262.shtml'
a = Article(url)
a.download()   # fetch the HTML
a.parse()      # extract title, text, and so on
print(a.text)

For more features and examples, see GitHub: https://github.com/codelucas/newspaper

URL2IO

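A free API that turns up quickly when you search for main-text extraction. You register an account to get a token and then call the API with it. Many of its features are still under development. Its advantage is that it is simple to use.

Official site: http://url2io.applinzi.com/

GitHub: https://github.com/url2io/url2io-python-sdk

Usage:

1. Register an account to obtain a token.

2. Download url2io.py and put it in your project folder (or add its directory to the PYTHONPATH environment variable). If you would rather not look for the download, scroll to the bottom of this article, create a file, and paste in the code from there.

3. Use the following code (the token is the string you received on registration) to fetch the main text of the article from the example above: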

import url2io

api = url2io.API("7vE7n2DHQ5SUmsZ85ZzOoA")
ret = api.article(url='http://www.chinanews.com/gn/2019/10-01/8970262.shtml', fields=['text'])
print(ret['text'])
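For orientation, the call above comes down to a single GET request. The sketch below rebuilds the request URL in the same way as the url2io.py listing at the end of this article (server address, then `article`, then a URL-encoded query string). The helper name `build_article_url` and the placeholder token are made up for illustration, and the code only constructs the URL; it does not contact the service.

```python
from urllib.parse import urlencode

# Default server address taken from the url2io.py listing in this article
API_SERVER = 'http://api.url2io.com/'


def build_article_url(token, url, fields=('text',)):
    """Hypothetical helper: build the GET URL for the /article endpoint."""
    query = {
        'url': url,
        'fields': ','.join(fields),  # list values are sent comma-separated
        'token': token,
    }
    return API_SERVER + 'article?' + urlencode(query)


# 'YOUR_TOKEN' is a placeholder, not a real token
print(build_article_url(
    'YOUR_TOKEN',
    'http://www.chinanews.com/gn/2019/10-01/8970262.shtml',
))
```

Pasting the printed URL into a browser (with a real token) is a quick way to check that the token works before wiring the SDK into a project.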

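Other parsers

I will give just one that has worked well for me. It requires installing jparser and lxml.

I did run into installation problems myself, but I have forgotten the details. If you hit a problem, feel free to ask me.

Without further ado, here is the code: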

import requests
from jparser import PageModel

# Request headers that mimic a desktop browser
headers = {
    'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8',
    'Accept-Encoding': 'gzip, deflate',
    'Accept-Language': 'zh-CN,zh;q=0.9',
    'Connection': 'keep-alive',
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/63.0.3239.132 Safari/537.36',
}

def get_jparser(url):
    # requests has no default timeout, so set one explicitly
    response = requests.get(url, headers=headers, timeout=30)
    en_code = response.encoding           # encoding declared in the HTTP headers
    de_code = response.apparent_encoding  # encoding guessed from the body
    # print(en_code, de_code, '-----------------')
    # Fall back to UTF-8 when detection fails or returns one of the
    # encodings that, in my experience, are misdetected UTF-8 pages.
    if de_code is None or de_code in ['ISO-8859-1', 'ISO-8859-2', 'Windows-1254', 'UTF-8-SIG']:
        de_code = 'utf-8'
    # Decode the raw bytes directly instead of re-encoding response.text
    html = response.content.decode(de_code, errors='ignore')
    pm = PageModel(html)
    result = pm.extract()
    # print("pm.extract result:\n", result)
    ans = [x['data'] for x in result['content'] if x['type'] == 'text']
    # print(ans)
    # Append a newline to every text block so that paragraphs stay separated
    content = ''.join(x + '\n' for x in ans)
    return content

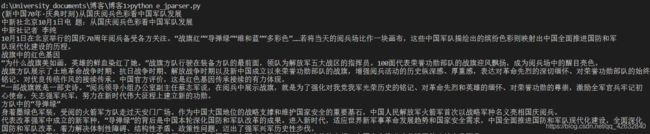

print(get_jparser("http://www.chinanews.com/gn/2019/10-01/8970262.shtml"))

To use it, just call the get_jparser function as in the line above; the screenshot above shows the output.
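The encoding handling is the fiddly part of get_jparser. As a rough sketch of the idea in isolation, and not the exact logic used above, the hypothetical helper `decode_body` below tries the detected encoding first (what requests exposes as `response.apparent_encoding`), then the declared one (`response.encoding`), and finally UTF-8. The sample bytes are made up, and no network access is needed.

```python
def decode_body(raw, declared=None, detected=None, fallback='utf-8'):
    """Hypothetical helper: decode raw page bytes.

    Tries the detected encoding, then the declared one, then the fallback.
    """
    for name in (detected, declared, fallback):
        if not name:
            continue
        try:
            return raw.decode(name)
        except (LookupError, UnicodeDecodeError):
            # Unknown codec name or bytes that do not fit: try the next one
            continue
    # Last resort: drop whatever cannot be decoded
    return raw.decode(fallback, errors='ignore')


# Simulate a GB2312 page whose HTTP header wrongly declares ISO-8859-1
raw = '国庆阅兵'.encode('gb2312')
print(decode_body(raw, declared='ISO-8859-1', detected='GB2312'))
```

One limitation to keep in mind: ISO-8859-1 can decode any byte sequence without raising an error, so if it is tried before the correct encoding the result is garbled text rather than an exception. That is why the detected encoding is tried first here.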

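This method does have an obvious drawback, though: the extracted text does not reflect the structure of the page very well, and because PageModel relies on regular expressions internally, it does not cope well with most sites. That is why, building on my earlier function, I append a newline to every element of the list so that the output is split into paragraphs.

Code of url2io.py: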
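The paragraph-splitting step can be tried on its own, without jparser installed. The sketch below runs it on a hand-written dictionary that imitates the shape get_jparser reads (a `content` list of blocks with `type` and `data` keys). The sample data and the helper name `blocks_to_text` are invented for illustration, and skipping empty blocks is my own addition.

```python
def blocks_to_text(result):
    """Hypothetical helper: join the text blocks of an extraction result,
    one paragraph per line."""
    paragraphs = [
        block['data'].strip()
        for block in result.get('content', [])
        # Keep only non-empty text blocks; images and other types are skipped
        if block.get('type') == 'text' and block.get('data', '').strip()
    ]
    return '\n'.join(paragraphs)


# Invented sample that imitates the structure used in get_jparser
sample = {
    'title': 'Example',
    'content': [
        {'type': 'text', 'data': 'First paragraph.'},
        {'type': 'image', 'data': {'src': 'http://example.com/a.png'}},
        {'type': 'text', 'data': '  Second paragraph.  '},
        {'type': 'text', 'data': ''},
    ],
}
print(blocks_to_text(sample))
```

Joining with a separator instead of appending a newline to every element also avoids the trailing newline at the end of the text.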

# coding: utf-8
#
# This program is free software. It comes without any warranty, to
# the extent permitted by applicable law. You can redistribute it
# and/or modify it under the terms of the Do What The Fuck You Want
# To Public License, Version 2, as published by Sam Hocevar. See
# http://sam.zoy.org/wtfpl/COPYING (copied as below) for more details.
#
# DO WHAT THE FUCK YOU WANT TO PUBLIC LICENSE
# Version 2, December 2004
#
# Copyright (C) 2004 Sam Hocevar
#
# Everyone is permitted to copy and distribute verbatim or modified
# copies of this license document, and changing it is allowed as long
# as the name is changed.
#
# DO WHAT THE FUCK YOU WANT TO PUBLIC LICENSE
# TERMS AND CONDITIONS FOR COPYING, DISTRIBUTION AND MODIFICATION
#
# 0. You just DO WHAT THE FUCK YOU WANT TO.

"""a simple url2io sdk

example:
    api = API(token)
    api.article(url='http://www.url2io.com/products', fields=['next', 'text'])
"""

__all__ = ['APIError', 'API']

DEBUG_LEVEL = 1

import sys
import socket
import json
import urllib.error
import urllib.parse
import urllib.request
import time
from collections.abc import Iterable  # collections.Iterable no longer exists in Python 3.10+

# Request headers that make the client look like a browser
headers = {
    'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
    'Cache-Control': 'max-age=0',
    'Connection': 'keep-alive',
    'User-Agent': 'Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:22.0) Gecko/20100101 Firefox/22.0'
}

class APIError(Exception):
    code = None
    """HTTP status code"""

    url = None
    """request URL"""

    body = None
    """server response body; or detailed error information"""

    def __init__(self, code, url, body):
        self.code = code
        self.url = url
        self.body = body

    def __str__(self):
        return 'code={s.code}\nurl={s.url}\n{s.body}'.format(s=self)

    __repr__ = __str__

class API(object):
    token = None
    server = 'http://api.url2io.com/'

    decode_result = True
    timeout = None
    max_retries = None
    retry_delay = None

    def __init__(self, token, srv=None,
                 decode_result=True, timeout=30, max_retries=5,
                 retry_delay=3):
        """:param srv: The API server address
        :param decode_result: whether to json_decode the result
        :param timeout: HTTP request timeout in seconds
        :param max_retries: maximal number of retries after catching URL error
            or socket error
        :param retry_delay: time to sleep before retrying"""
        self.token = token
        if srv:
            self.server = srv
        self.decode_result = decode_result
        # Check for None first: comparing None with 0 raises TypeError in Python 3
        assert timeout is None or timeout >= 0
        assert max_retries >= 0
        self.timeout = timeout
        self.max_retries = max_retries
        self.retry_delay = retry_delay

        _setup_apiobj(self, self, [])

    def update_request(self, request):
        """overwrite this function to update the request before sending it to
        server"""
        pass

def _setup_apiobj(self, apiobj, path):
    if self is not apiobj:
        self._api = apiobj
        self._urlbase = apiobj.server + '/'.join(path)

    lvl = len(path)
    done = set()
    for i in _APIS:
        if len(i) <= lvl:
            continue
        cur = i[lvl]
        if i[:lvl] == path and cur not in done:
            done.add(cur)
            setattr(self, cur, _APIProxy(apiobj, i[:lvl + 1]))

class _APIProxy(object):
    _api = None
    _urlbase = None

    def __init__(self, apiobj, path):
        _setup_apiobj(self, apiobj, path)

    def __call__(self, post=False, *args, **kwargs):
        # /article
        #   url = 'http://xxxx.xxx',
        #   fields = ['next',],
        #
        if len(args):
            raise TypeError('only keyword arguments are allowed')
        if type(post) is not bool:
            raise TypeError('post argument can only be True or False')

        url = self.geturl(**kwargs)
        request = urllib.request.Request(url, headers=headers)
        self._api.update_request(request)

        retry = self._api.max_retries
        while True:
            retry -= 1
            try:
                ret = urllib.request.urlopen(request, timeout=self._api.timeout).read()
                break
            except urllib.error.HTTPError as e:
                # HTTP errors are reported immediately, without retrying
                raise APIError(e.code, url, e.read())
            except (socket.error, urllib.error.URLError) as e:
                if retry < 0:
                    raise e
                _print_debug('caught error: {}; retrying'.format(e))
                time.sleep(self._api.retry_delay)

        if self._api.decode_result:
            try:
                ret = json.loads(ret)
            except ValueError:
                raise APIError(-1, url, 'json decode error, value={0!r}'.format(ret))
        return ret

    def _mkarg(self, kargs):
        """change the argument list (encode value, add api key/secret)
        :return: the new argument list"""

        def enc(x):
            # if isinstance(x, unicode):
            #     return x.encode('utf-8')
            # return str(x)
            return x.encode('utf-8') if isinstance(x, str) else str(x)

        kargs = kargs.copy()
        kargs['token'] = self._api.token
        for (k, v) in list(kargs.items()):
            if isinstance(v, Iterable) and not isinstance(v, str):
                # Lists such as fields=['next', 'text'] are sent comma-separated
                kargs[k] = ','.join([str(i) for i in v])
            else:
                kargs[k] = enc(v)

        return kargs

    def geturl(self, **kargs):
        """return the request url"""
        return self._urlbase + '?' + urllib.parse.urlencode(self._mkarg(kargs))

def _print_debug(msg):
    if DEBUG_LEVEL:
        sys.stderr.write(str(msg) + '\n')


_APIS = [
    '/article',
    # '/images',
]

_APIS = [i.split('/')[1:] for i in _APIS]