Flume开发 -- 故障转移和负载均衡

一、需求

使用 Flume1 监控一个端口,其 sink 组中的 sink 分别对接 Flume2 和 Flume3,采用

FailoverSinkProcessor,实现故障转移功能。

二、流程分析

三、实现步骤

3.1 准备工作

在 /opt/module/flume/job 目录下创建 group2 文件夹

[test@hadoop151 job]$ mkdir group2

3.2 创建 flume-netcat-flume.conf

配置一个 netcat source 和一个 channel、一个 sink group(2 个 sink),分别输送给 flume-flume-console1 和 flume-flume-console2。

1、编辑配置文件

[test@hadoop151 group2]$ vim flume-netcat-flume.conf

2、添加如下内容

# Name the components on this agent

a1.sources = r1

a1.channels = c1

a1.sinkgroups = g1

a1.sinks = k1 k2

# Describe/configure the source

a1.sources.r1.type = netcat

a1.sources.r1.bind = localhost

a1.sources.r1.port = 44444

# Describe the sinkgroups

a1.sinkgroups.g1.processor.type = failover

a1.sinkgroups.g1.processor.priority.k1 = 5

a1.sinkgroups.g1.processor.priority.k2 = 10

a1.sinkgroups.g1.processor.maxpenalty = 10000

# Describe the sink

a1.sinks.k1.type = avro

a1.sinks.k1.hostname = hadoop151

a1.sinks.k1.port = 4141

a1.sinks.k2.type = avro

a1.sinks.k2.hostname = hadoop151

a1.sinks.k2.port = 4142

# Describe the channel

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinkgroups.g1.sinks = k1 k2

a1.sinks.k1.channel = c1

a1.sinks.k2.channel = c1

3.3 创建 flume-flume-console1.conf

配置上级 Flume 输出的 Source,输出是到本地控制台。

1、编辑配置文件

[test@hadoop151 group2]$ vim flume-flume-console1.conf

2、添加如下内容

# Name the components on this agent

a2.sources = r1

a2.sinks = k1

a2.channels = c1

# Describe/configure the source

a2.sources.r1.type = avro

a2.sources.r1.bind = hadoop151

a2.sources.r1.port = 4141

# Describe the sink

a2.sinks.k1.type = logger

# Describe the channel

a2.channels.c1.type = memory

a2.channels.c1.capacity = 1000

a2.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a2.sources.r1.channels = c1

a2.sinks.k1.channel = c1

3.4 创建 flume-flume-console2.conf

配置上级 Flume 输出的 Source,输出是本地控制台。

1、编辑配置文件

[test@hadoop151 group2]$ vim flume-flume-console2.conf

2、添加如下内容

# Name the components on this agent

a3.sources = r1

a3.sinks = k1

a3.channels = c2

# Describe/configure the source

a3.sources.r1.type = avro

a3.sources.r1.bind = hadoop151

a3.sources.r1.port = 4142

# Describe the sink

a3.sinks.k1.type = logger

# Describe the channel

a3.channels.c2.type = memory

a3.channels.c2.capacity = 1000

a3.channels.c2.transactionCapacity = 100

# Bind the source and sink to the channel

a3.sources.r1.channels = c2

a3.sinks.k1.channel = c2

3.5 执行配置文件

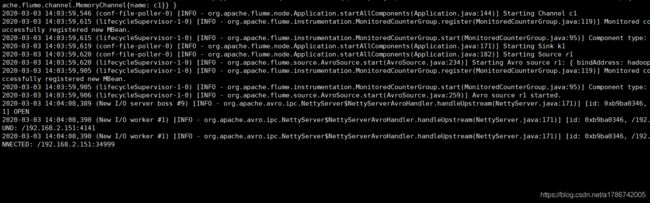

分别开启对应配置文件:flume-flume-console2、flume-flume-console1、flume-netcat-flume。

[test@hadoop151 flume]$ bin/flume-ng agent --conf conf/ --name a3 --conf-file job/group2/flume-flume-console2.conf -Dflume.root.logger=INFO,console

[test@hadoop151 flume]$ bin/flume-ng agent --conf conf/ --name a2 --conf-file job/group2/flume-flume-console1.conf -Dflume.root.logger=INFO,console

[test@hadoop151 flume]$ bin/flume-ng agent --conf conf/ --name a1 --conf-file job/group2/flume-netcat-flume.conf

3.6 使用 netcat 工具向本机的 44444 端口发送内容

3.7 查看 Flume2 和 Flume3 的控制台打印日志

我们可以发现,Flume3 打印,而 Flume2 不打印,因为这里设置的是故障转移的 Flume,只有工作的服务器 down 掉,才会使用下一台。

3.8 将 Flume3 kill,观察 Flume2 的控制台打印情况

注:使用 jps -ml 查看 Flume 进程

四、负载均衡的配置

配置负载均衡只需要把 flume-netcat-flume.conf 文件中的参数:

# Describe the sinkgroups

a1.sinkgroups.g1.processor.type = failover

a1.sinkgroups.g1.processor.priority.k1 = 5

a1.sinkgroups.g1.processor.priority.k2 = 10

a1.sinkgroups.g1.processor.maxpenalty = 10000

修改为:

a1.sinkgroups.g1.processor.type = load_balance

a1.sinkgroups.g1.processor.backoff = true

a1.sinkgroups.g1.processor.selector = random

这样就可以实现两个 Flume 都可以随机接收到数据。