For how to set up a Maven project for Avro, and for an introduction to Avro itself, see: Apache Avro 入门 (Getting Started with Apache Avro)

1. Define the schema file and compile the Maven project to generate the entity class

The schema file is named stock.avsc and contains the following:

    {
        "namespace": "com.bonc.rdpe.kafka110.beans",
        "type": "record",
        "name": "Stock",
        "fields": [
            {"name": "stockCode", "type": "string"},
            {"name": "stockName", "type": "string"},
            {"name": "tradeTime", "type": "long"},
            {"name": "preClosePrice", "type": "float"},
            {"name": "openPrice", "type": "float"},
            {"name": "currentPrice", "type": "float"},
            {"name": "highPrice", "type": "float"},
            {"name": "lowPrice", "type": "float"}
        ]
    }

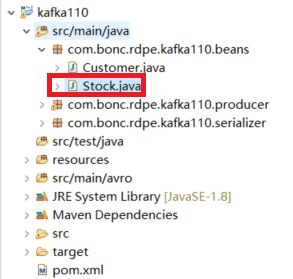

编译 maven 工程生成实体类:

2. Custom serializer and deserializer classes

(1) Serializer class

    package com.bonc.rdpe.kafka110.serializer;

    import java.io.ByteArrayOutputStream;
    import java.io.IOException;
    import java.util.Map;

    import org.apache.avro.io.BinaryEncoder;
    import org.apache.avro.io.DatumWriter;
    import org.apache.avro.io.EncoderFactory;
    import org.apache.avro.specific.SpecificDatumWriter;
    import org.apache.kafka.common.errors.SerializationException;
    import org.apache.kafka.common.serialization.Serializer;

    import com.bonc.rdpe.kafka110.beans.Stock;

    /**
     * @Title AvroSerializer.java
     * @Description Custom serializer built on the traditional Avro API
     * @Author YangYunhe
     * @Date 2018-06-21 16:40:35
     */
    public class AvroSerializer implements Serializer<Stock> {

        @Override
        public void close() {}

        @Override
        public void configure(Map<String, ?> configs, boolean isKey) {}

        @Override
        public byte[] serialize(String topic, Stock data) {
            if (data == null) {
                return null;
            }
            // The writer encodes the object according to the schema of the generated class
            DatumWriter<Stock> writer = new SpecificDatumWriter<>(data.getSchema());
            ByteArrayOutputStream out = new ByteArrayOutputStream();
            // A direct encoder does not buffer, so no flush is needed before reading the bytes
            BinaryEncoder encoder = EncoderFactory.get().directBinaryEncoder(out, null);
            try {
                writer.write(data, encoder);
            } catch (IOException e) {
                // Keep the original exception as the cause
                throw new SerializationException(e.getMessage(), e);
            }
            return out.toByteArray();
        }
    }
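To make it clearer what `writer.write(data, encoder)` puts on the wire, here is a minimal sketch that hand-rolls the Avro binary layout of one Stock record using only the JDK. The class `StockLayoutSketch` and its methods are hypothetical and not part of the original project. The sketch assumes the encoding rules of the Avro specification: a long is a zig-zag varint, a string is its length followed by UTF-8 bytes, a float is 4 little-endian bytes, and a record is its fields concatenated in schema order.

```java
import java.io.ByteArrayOutputStream;
import java.nio.charset.StandardCharsets;

/** Hypothetical helper for illustration only; the real work is done by SpecificDatumWriter. */
class StockLayoutSketch {

    // int/long: zig-zag varint, 7 payload bits per byte, high bit set when more bytes follow
    static void writeLong(ByteArrayOutputStream out, long n) {
        long z = (n << 1) ^ (n >> 63);
        while ((z & ~0x7FL) != 0) {
            out.write((int) ((z & 0x7F) | 0x80));
            z >>>= 7;
        }
        out.write((int) z);
    }

    // string: UTF-8 byte count (encoded as a long) followed by the bytes themselves
    static void writeString(ByteArrayOutputStream out, String s) {
        byte[] utf8 = s.getBytes(StandardCharsets.UTF_8);
        writeLong(out, utf8.length);
        out.write(utf8, 0, utf8.length);
    }

    // float: the 4 IEEE 754 bytes, least significant byte first
    static void writeFloat(ByteArrayOutputStream out, float f) {
        int bits = Float.floatToIntBits(f);
        for (int shift = 0; shift < 32; shift += 8) {
            out.write((bits >>> shift) & 0xFF);
        }
    }

    // record: fields back to back in schema order, with no field names or tags
    static byte[] encode(String stockCode, String stockName, long tradeTime, float... prices) {
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        writeString(out, stockCode);
        writeString(out, stockName);
        writeLong(out, tradeTime);
        for (float price : prices) {
            writeFloat(out, price);
        }
        return out.toByteArray();
    }

    public static void main(String[] args) {
        byte[] bytes = encode("0", "stock0", 1529631848353L,
                100.0F, 88.8F, 120.5F, 300.0F, 12.4F);
        System.out.println(bytes.length + " bytes");
    }
}
```

Because the bytes carry no field names and no schema, the message stays small, but the consumer has to know the schema in advance.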

(2) Deserializer class

    package com.bonc.rdpe.kafka110.deserializer;

    import java.io.ByteArrayInputStream;
    import java.io.IOException;
    import java.util.Map;

    import org.apache.avro.io.BinaryDecoder;
    import org.apache.avro.io.DatumReader;
    import org.apache.avro.io.DecoderFactory;
    import org.apache.avro.specific.SpecificDatumReader;
    import org.apache.kafka.common.errors.SerializationException;
    import org.apache.kafka.common.serialization.Deserializer;

    import com.bonc.rdpe.kafka110.beans.Stock;

    /**
     * @Title AvroDeserializer.java
     * @Description Custom deserializer built on the traditional Avro API
     * @Author YangYunhe
     * @Date 2018-06-21 17:19:40
     */
    public class AvroDeserializer implements Deserializer<Stock> {

        @Override
        public void close() {}

        @Override
        public void configure(Map<String, ?> configs, boolean isKey) {}

        @Override
        public Stock deserialize(String topic, byte[] data) {
            if (data == null) {
                return null;
            }
            ByteArrayInputStream in = new ByteArrayInputStream(data);
            // The reader needs the schema the bytes were written with; take it from the generated class
            DatumReader<Stock> stockDatumReader = new SpecificDatumReader<>(Stock.getClassSchema());
            BinaryDecoder decoder = DecoderFactory.get().directBinaryDecoder(in, null);
            try {
                return stockDatumReader.read(null, decoder);
            } catch (IOException e) {
                // Fail loudly instead of returning an empty Stock
                throw new SerializationException(e.getMessage(), e);
            }
        }
    }
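The deserializer hands the Stock schema to `SpecificDatumReader` because the bytes alone do not say where one field ends and the next begins. The following sketch shows the idea with the JDK only; the class `StockDecodeSketch`, its methods, and the hand-built sample bytes are hypothetical, and the decoding rules are assumed to follow the Avro specification. It reads two strings, a long, and a single float, in exactly that order.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;
import java.nio.charset.StandardCharsets;

/** Hypothetical helper for illustration only; the real work is done by SpecificDatumReader. */
class StockDecodeSketch {

    // Reverse of the zig-zag varint: collect 7 bits per byte, then undo the zig-zag mapping
    static long readLong(ByteBuffer in) {
        long z = 0;
        int shift = 0;
        byte b;
        do {
            b = in.get();
            z |= (long) (b & 0x7F) << shift;
            shift += 7;
        } while ((b & 0x80) != 0);
        return (z >>> 1) ^ -(z & 1);
    }

    // A string is a length followed by that many UTF-8 bytes
    static String readString(ByteBuffer in) {
        byte[] utf8 = new byte[(int) readLong(in)];
        in.get(utf8);
        return new String(utf8, StandardCharsets.UTF_8);
    }

    // Reads string, string, long, float in the order the writer used
    static String describe(byte[] data) {
        ByteBuffer in = ByteBuffer.wrap(data).order(ByteOrder.LITTLE_ENDIAN);
        String stockCode = readString(in);
        String stockName = readString(in);
        long tradeTime = readLong(in);
        float price = in.getFloat();
        return stockCode + "|" + stockName + "|" + tradeTime + "|" + price;
    }

    public static void main(String[] args) {
        // Sample bytes: "0", "stock0", the long 3, and the float 1.0
        byte[] sample = {0x02, '0', 0x0C, 's', 't', 'o', 'c', 'k', '0',
                0x06, 0x00, 0x00, (byte) 0x80, 0x3F};
        System.out.println(describe(sample));
    }
}
```

Reading the fields in any other order would misinterpret the same bytes, which is why producer and consumer must agree on the schema.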

3. Sending messages with KafkaProducer and the custom serializer

    package com.bonc.rdpe.kafka110.producer;

    import java.util.Properties;

    import org.apache.kafka.clients.producer.KafkaProducer;
    import org.apache.kafka.clients.producer.Producer;
    import org.apache.kafka.clients.producer.ProducerRecord;
    import org.apache.kafka.clients.producer.RecordMetadata;

    import com.bonc.rdpe.kafka110.beans.Stock;

    /**
     * @Title TraditionalAvroProducer.java
     * @Description Kafka producer that sends Avro-serialized Stock objects
     * @Author YangYunhe
     * @Date 2018-06-21 17:41:59
     */
    public class TraditionalAvroProducer {

        public static void main(String[] args) throws Exception {

            // Build 100 sample Stock objects
            Stock[] stocks = new Stock[100];
            for (int i = 0; i < 100; i++) {
                stocks[i] = new Stock();
                stocks[i].setStockCode(String.valueOf(i));
                stocks[i].setStockName("stock" + i);
                stocks[i].setTradeTime(System.currentTimeMillis());
                stocks[i].setPreClosePrice(100.0F);
                stocks[i].setOpenPrice(88.8F);
                stocks[i].setCurrentPrice(120.5F);
                stocks[i].setHighPrice(300.0F);
                stocks[i].setLowPrice(12.4F);
            }

            Properties props = new Properties();
            props.put("bootstrap.servers", "192.168.42.89:9092,192.168.42.89:9093,192.168.42.89:9094");
            props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
            // Use the custom Avro serializer for message values
            props.put("value.serializer", "com.bonc.rdpe.kafka110.serializer.AvroSerializer");

            Producer<String, Stock> producer = new KafkaProducer<>(props);

            for (Stock stock : stocks) {
                // No key is set, so only the topic and the value are passed
                ProducerRecord<String, Stock> record = new ProducerRecord<>("dev3-yangyunhe-topic001", stock);
                // get() blocks until the broker acknowledges the record (synchronous send)
                RecordMetadata metadata = producer.send(record).get();
                StringBuilder sb = new StringBuilder();
                sb.append("stock: ").append(stock.toString()).append(" has been sent successfully!").append("\n")
                    .append("send to partition ").append(metadata.partition())
                    .append(", offset = ").append(metadata.offset());
                System.out.println(sb.toString());
                Thread.sleep(100);
            }

            producer.close();
        }
    }

4. Receiving messages with KafkaConsumer and the custom deserializer

    package com.bonc.rdpe.kafka110.consumer;

    import java.util.Collections;
    import java.util.Properties;

    import org.apache.kafka.clients.consumer.ConsumerRecord;
    import org.apache.kafka.clients.consumer.ConsumerRecords;
    import org.apache.kafka.clients.consumer.KafkaConsumer;

    import com.bonc.rdpe.kafka110.beans.Stock;

    /**
     * @Title TraditionalAvroConsumer.java
     * @Description Kafka consumer that parses Avro-serialized Stock objects
     * @Author YangYunhe
     * @Date 2018-06-21 17:43:03
     */
    public class TraditionalAvroConsumer {

        public static void main(String[] args) {

            Properties props = new Properties();
            props.put("bootstrap.servers", "192.168.42.89:9092,192.168.42.89:9093,192.168.42.89:9094");
            props.put("group.id", "dev3-yangyunhe-group001");
            props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            // Use the custom Avro deserializer for message values
            props.put("value.deserializer", "com.bonc.rdpe.kafka110.deserializer.AvroDeserializer");

            KafkaConsumer<String, Stock> consumer = new KafkaConsumer<>(props);

            consumer.subscribe(Collections.singletonList("dev3-yangyunhe-topic001"));

            try {
                while (true) {
                    // Wait up to 100 ms for new records
                    ConsumerRecords<String, Stock> records = consumer.poll(100);
                    for (ConsumerRecord<String, Stock> record : records) {
                        // The value has already been turned back into a Stock by AvroDeserializer
                        Stock stock = record.value();
                        System.out.println(stock.toString());
                    }
                }
            } finally {
                consumer.close();
            }
        }
    }

5. Test results

Console output after running the producer:

stock: {"stockCode": "0", "stockName": "stock0", "tradeTime": 1529631848353, "preClosePrice": 100.0, "openPrice": 88.8, "currentPrice": 120.5, "highPrice": 300.0, "lowPrice": 12.4} has been sent successfully!

send to partition 0, offset = 552

stock: {"stockCode": "1", "stockName": "stock1", "tradeTime": 1529631848353, "preClosePrice": 100.0, "openPrice": 88.8, "currentPrice": 120.5, "highPrice": 300.0, "lowPrice": 12.4} has been sent successfully!

send to partition 2, offset = 551

stock: {"stockCode": "2", "stockName": "stock2", "tradeTime": 1529631848353, "preClosePrice": 100.0, "openPrice": 88.8, "currentPrice": 120.5, "highPrice": 300.0, "lowPrice": 12.4} has been sent successfully!

send to partition 1, offset = 551

stock: {"stockCode": "3", "stockName": "stock3", "tradeTime": 1529631848353, "preClosePrice": 100.0, "openPrice": 88.8, "currentPrice": 120.5, "highPrice": 300.0, "lowPrice": 12.4} has been sent successfully!

send to partition 0, offset = 553

stock: {"stockCode": "4", "stockName": "stock4", "tradeTime": 1529631848353, "preClosePrice": 100.0, "openPrice": 88.8, "currentPrice": 120.5, "highPrice": 300.0, "lowPrice": 12.4} has been sent successfully!

send to partition 2, offset = 552

......
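The partition numbers in this output repeat in the pattern 0, 2, 1. A likely explanation is that the records are sent without a key, and the default partitioner of Kafka 1.x clients then cycles through the available partitions with a counter. The sketch below imitates that behaviour with the JDK only. The class `KeylessPartitionSketch` is hypothetical, and both the starting counter value and the order of the partition list are assumptions chosen to match the output above (the real client starts from a random counter).

```java
import java.util.Arrays;
import java.util.List;
import java.util.concurrent.atomic.AtomicInteger;

/** Hypothetical imitation of round-robin partition selection for records without a key. */
class KeylessPartitionSketch {

    private final AtomicInteger counter;
    private final List<Integer> availablePartitions;

    KeylessPartitionSketch(int start, List<Integer> availablePartitions) {
        this.counter = new AtomicInteger(start);
        this.availablePartitions = availablePartitions;
    }

    // Each call advances the counter and maps it onto the list of available partitions
    int nextPartition() {
        int next = counter.getAndIncrement();
        int index = (next & 0x7fffffff) % availablePartitions.size(); // force a non-negative value
        return availablePartitions.get(index);
    }

    public static void main(String[] args) {
        // Assumed order in which the cluster metadata lists the three partitions
        KeylessPartitionSketch sketch = new KeylessPartitionSketch(0, Arrays.asList(0, 2, 1));
        for (int i = 0; i < 5; i++) {
            System.out.println("record " + i + " -> partition " + sketch.nextPartition());
        }
    }
}
```

Setting a key on the `ProducerRecord` would instead route all records with the same key to the same partition.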

Console output after running the consumer:

{"stockCode": "0", "stockName": "stock0", "tradeTime": 1529631848353, "preClosePrice": 100.0, "openPrice": 88.8, "currentPrice": 120.5, "highPrice": 300.0, "lowPrice": 12.4}

{"stockCode": "1", "stockName": "stock1", "tradeTime": 1529631848353, "preClosePrice": 100.0, "openPrice": 88.8, "currentPrice": 120.5, "highPrice": 300.0, "lowPrice": 12.4}

{"stockCode": "2", "stockName": "stock2", "tradeTime": 1529631848353, "preClosePrice": 100.0, "openPrice": 88.8, "currentPrice": 120.5, "highPrice": 300.0, "lowPrice": 12.4}

{"stockCode": "3", "stockName": "stock3", "tradeTime": 1529631848353, "preClosePrice": 100.0, "openPrice": 88.8, "currentPrice": 120.5, "highPrice": 300.0, "lowPrice": 12.4}

{"stockCode": "4", "stockName": "stock4", "tradeTime": 1529631848353, "preClosePrice": 100.0, "openPrice": 88.8, "currentPrice": 120.5, "highPrice": 300.0, "lowPrice": 12.4}

......
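The `tradeTime` values in the output are the epoch milliseconds returned by `System.currentTimeMillis()` in the producer. As a small sketch, they can be turned into a readable timestamp with `java.time`; the class `TradeTimeSketch` is hypothetical, and the `Asia/Shanghai` zone is only an assumption about where the test was run.

```java
import java.time.Instant;
import java.time.ZoneId;
import java.time.ZonedDateTime;

/** Hypothetical helper: converts the long tradeTime field into readable timestamps. */
class TradeTimeSketch {

    // The Avro long holds milliseconds since 1970-01-01T00:00:00Z
    static Instant toInstant(long tradeTimeMillis) {
        return Instant.ofEpochMilli(tradeTimeMillis);
    }

    // Same moment, shown in a given time zone
    static ZonedDateTime inZone(long tradeTimeMillis, String zoneId) {
        return toInstant(tradeTimeMillis).atZone(ZoneId.of(zoneId));
    }

    public static void main(String[] args) {
        long tradeTime = 1529631848353L; // value taken from the console output
        System.out.println(toInstant(tradeTime));               // 2018-06-22T01:44:08.353Z
        System.out.println(inZone(tradeTime, "Asia/Shanghai")); // assumed local zone
    }
}
```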