Writing Spark Programs in IDEA

Maven repository

Link: https://pan.baidu.com/s/1mXe0zlDy4XAgdBQ6BtGkrA Extraction code: 2sy4

1. pom.xml

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>cn.itcast</groupId>

<artifactId>SparkDemo</artifactId>

<version>1.0-SNAPSHOT</version>

<!-- Repository locations, in order: aliyun, cloudera, and jboss -->

<repositories>

<repository>

<id>aliyun</id>

<url>http://maven.aliyun.com/nexus/content/groups/public/</url>

</repository>

<repository>

<id>cloudera</id>

<url>https://repository.cloudera.com/artifactory/cloudera-repos/</url>

</repository>

<repository>

<id>jboss</id>

<url>http://repository.jboss.com/nexus/content/groups/public</url>

</repository>

</repositories>

<properties>

<maven.compiler.source>1.8</maven.compiler.source>

<maven.compiler.target>1.8</maven.compiler.target>

<encoding>UTF-8</encoding>

<scala.version>2.11.8</scala.version>

<scala.compat.version>2.11</scala.compat.version>

<hadoop.version>2.7.4</hadoop.version>

<spark.version>2.2.0</spark.version>

</properties>

<dependencies>

<dependency>

<groupId>org.scala-lang</groupId>

<artifactId>scala-library</artifactId>

<version>${scala.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.11</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql_2.11</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-hive_2.11</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-hive-thriftserver_2.11</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-streaming_2.11</artifactId>

<version>${spark.version}</version>

</dependency>

<!-- <dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-streaming-kafka-0-8_2.11</artifactId>

<version>${spark.version}</version>

</dependency>-->

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-streaming-kafka-0-10_2.11</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql-kafka-0-10_2.11</artifactId>

<version>${spark.version}</version>

</dependency>

<!--<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>2.6.0-mr1-cdh5.14.0</version>

</dependency>

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-client</artifactId>

<version>1.2.0-cdh5.14.0</version>

</dependency>

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-server</artifactId>

<version>1.2.0-cdh5.14.0</version>

</dependency>-->

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>2.7.4</version>

</dependency>

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-client</artifactId>

<version>1.3.1</version>

</dependency>

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-server</artifactId>

<version>1.3.1</version>

</dependency>

<dependency>

<groupId>com.typesafe</groupId>

<artifactId>config</artifactId>

<version>1.3.3</version>

</dependency>

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>5.1.38</version>

</dependency>

</dependencies>

<build>

<sourceDirectory>src/main/scala</sourceDirectory>

<testSourceDirectory>src/test/scala</testSourceDirectory>

<plugins>

<!-- Plugin for compiling Java -->

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<version>3.5.1</version>

</plugin>

<!-- Plugin for compiling Scala -->

<plugin>

<groupId>net.alchim31.maven</groupId>

<artifactId>scala-maven-plugin</artifactId>

<version>3.2.2</version>

<executions>

<execution>

<goals>

<goal>compile</goal>

<goal>testCompile</goal>

</goals>

<configuration>

<args>

<arg>-dependencyfile</arg>

<arg>${project.build.directory}/.scala_dependencies</arg>

</args>

</configuration>

</execution>

</executions>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-surefire-plugin</artifactId>

<version>2.18.1</version>

<configuration>

<useFile>false</useFile>

<disableXmlReport>true</disableXmlReport>

<includes>

<include>**/*Test.*</include>

<include>**/*Suite.*</include>

</includes>

</configuration>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-shade-plugin</artifactId>

<version>2.3</version>

<executions>

<execution>

<phase>package</phase>

<goals>

<goal>shade</goal>

</goals>

<configuration>

<filters>

<filter>

<artifact>*:*</artifact>

<excludes>

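<exclude>META-INF/*.SF</exclude>

<exclude>META-INF/*.DSA</exclude>

<exclude>META-INF/*.RSA</exclude>

</excludes>

</filter>

</filters>

</configuration>

</execution>

</executions>

</plugin>

</plugins>

</build>

</project>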

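Prerequisite: create a Maven project

Writing the code

1. Create a SparkConf

2. Instantiate a SparkContext

3. Read the data and apply operations to it (the business logic)

4. Save the final result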

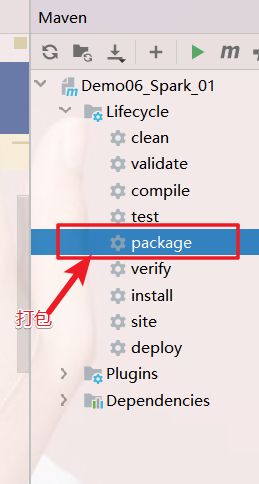

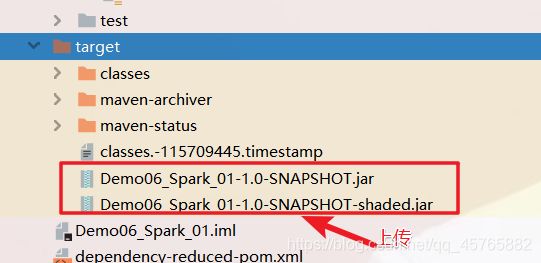

Running as a JAR

Export the code as a JAR file, upload it to the cluster, and submit the job with spark-submit.

Running locally

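import org.apache.spark.{SparkConf, SparkContext}
import org.apache.spark.rdd.RDD

object WordCount {
  def main(args: Array[String]): Unit = {
    // 1. Create the SparkContext; local[*] runs Spark in-process on all local cores
    val config = new SparkConf().setAppName("wc").setMaster("local[*]")
    val sc = new SparkContext(config)
    sc.setLogLevel("WARN")

    // 2. Read the file
    val fileRDD: RDD[String] = sc.textFile("F:\\第四学期的大数据资料\\day01三月份资料\\第四周\\day04\\4.2号练习题\\word.txt")

    // 3. Process the data
    // 3.1 Split each line into words on spaces
    val wordRDD: RDD[String] = fileRDD.flatMap(_.split(" "))

    // 3.2 Map each word to (word, 1)
    val wordAndOneRDD: RDD[(String, Int)] = wordRDD.map((_, 1))

    // 3.3 Aggregate by key to count the occurrences of each word
    val wordAndCount: RDD[(String, Int)] = wordAndOneRDD.reduceByKey(_ + _)

    // 4. Collect the results to the driver and print them
    val result: Array[(String, Int)] = wordAndCount.collect()
    result.foreach(println)

    // Release the resources held by the SparkContext
    sc.stop()
  }
}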
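To sanity-check the transformation logic without starting Spark, the same split / pair / sum-by-key chain can be run on an ordinary Scala collection. This is only a minimal sketch and not part of the original program: `LocalWordCount` and `countWords` are hypothetical names, and `groupBy` followed by a sum stands in for `reduceByKey`, which is available only on pair RDDs.

```scala
object LocalWordCount {
  // Mirrors the RDD pipeline on a plain Seq: split lines into words,
  // pair each word with 1, then sum the 1s per word.
  def countWords(lines: Seq[String]): Map[String, Int] =
    lines
      .flatMap(_.split(" "))   // same split as the Spark version
      .map((_, 1))             // (word, 1)
      .groupBy(_._1)           // stand-in for reduceByKey: group pairs by word
      .map { case (word, pairs) => (word, pairs.map(_._2).sum) }

  def main(args: Array[String]): Unit = {
    // Hypothetical sample input, used only for illustration
    val sample = Seq("hello spark", "hello scala")
    countWords(sample).toSeq.sortBy(_._1).foreach(println)
  }
}
```

As in the Spark version, `split(" ")` yields empty strings when a line contains consecutive spaces, so those would be counted as a word too.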

Running on a cluster

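import org.apache.spark.{SparkConf, SparkContext}
import org.apache.spark.rdd.RDD

object WordCountJ {
  def main(args: Array[String]): Unit = {
    // 1. Create the SparkContext; setMaster("local[*]") is omitted because
    //    the master is supplied by spark-submit
    val config = new SparkConf().setAppName("wc")
    val sc = new SparkContext(config)
    sc.setLogLevel("WARN")

    // 2. Read the file; args(0) is the input path
    val fileRDD: RDD[String] = sc.textFile(args(0))

    // 3. Process the data
    // 3.1 Split each line into words on spaces
    val wordRDD: RDD[String] = fileRDD.flatMap(_.split(" "))

    // 3.2 Map each word to (word, 1)
    val wordAndOneRDD: RDD[(String, Int)] = wordRDD.map((_, 1))

    // 3.3 Aggregate by key to count the occurrences of each word
    val wordAndCount: RDD[(String, Int)] = wordAndOneRDD.reduceByKey(_ + _)

    // 4. Save the results; args(1) is the output path
    wordAndCount.saveAsTextFile(args(1))

    // Release the resources held by the SparkContext
    sc.stop()
  }
}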
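The cluster version reads `args(0)` and `args(1)` directly, so a missing argument only shows up as an `ArrayIndexOutOfBoundsException` at run time. One possible guard is sketched below, assuming the job expects exactly two paths. `JobArgs`, `Paths`, and `parseArgs` are hypothetical names introduced for illustration and are not part of the original code.

```scala
object JobArgs {
  // Hypothetical holder for the two paths the job needs
  final case class Paths(input: String, output: String)

  // Returns the parsed paths, or a usage message if the arguments are wrong
  def parseArgs(args: Array[String]): Either[String, Paths] =
    args match {
      case Array(in, out) if in.nonEmpty && out.nonEmpty => Right(Paths(in, out))
      case _ => Left("Usage: WordCountJ <input path> <output path>")
    }

  def main(args: Array[String]): Unit =
    parseArgs(args) match {
      case Right(p) =>
        println(s"input=${p.input}, output=${p.output}")
      case Left(msg) =>
        System.err.println(msg)
        sys.exit(1)
    }
}
```

In the real job, the `Right` branch would pass `p.input` to `sc.textFile` and `p.output` to `saveAsTextFile` instead of printing them.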

/export/servers/spark-2.2.0-bin-2.6.0-cdh5.14.0/bin/spark-submit \
--class demo02.WordCountJ \
--master yarn \
--deploy-mode cluster \
--driver-memory 1g \
--executor-memory 1g \
--executor-cores 2 \
--queue default \
/export/pot/Demo06_Spark_01-1.0-SNAPSHOT.jar \
hdfs://hadoop01:8020/words.txt \
hdfs://hadoop01:8020/wordcount/output5

Run the following command to submit to the Spark HA cluster

/export/servers/spark-2.2.0-bin-2.6.0-cdh5.14.0/bin/spark-submit \
--class cn.itcast.sparkhello.WordCount \
--master spark://hadoop01:7077,hadoop02:7077 \
--executor-memory 1g \
--total-executor-cores 2 \
/export/pot/Demo06_Spark_01-1.0-SNAPSHOT.jar \
hdfs://hadoop01:8020/words.txt \
hdfs://hadoop01:8020/wordcount/output4