Spark修炼之道(进阶篇)——Spark入门到精通:第六节 Spark编程模型(三)

本节主要内容

- RDD transformation(续)

- RDD actions

1. RDD transformation(续)

(1)repartitionAndSortWithinPartitions(partitioner)

repartitionAndSortWithinPartitions函数是repartition函数的变种,与repartition函数不同的是,repartitionAndSortWithinPartitions在给定的partitioner内部进行排序,性能比repartition要高。

函数定义:

/**

* Repartition the RDD according to the given partitioner and, within each resulting partition,

* sort records by their keys.

*

* This is more efficient than calling repartition and then sorting within each partition

* because it can push the sorting down into the shuffle machinery.

*/

def repartitionAndSortWithinPartitions(partitioner: Partitioner): RDD[(K, V)]

使用示例:

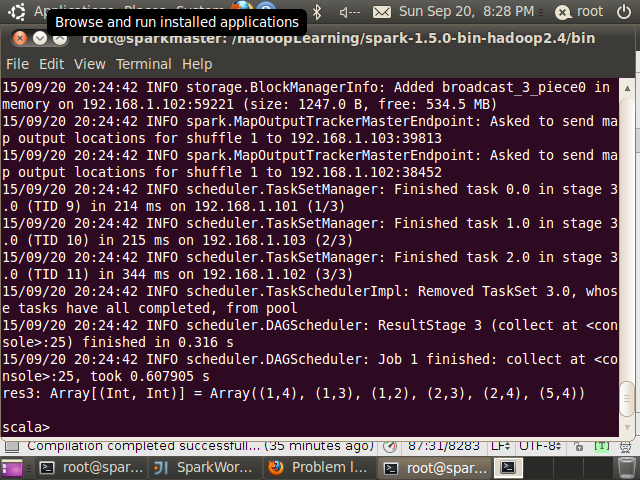

scala> val data = sc.parallelize(List((1,3),(1,2),(5,4),(1, 4),(2,3),(2,4)),3)

data: org.apache.spark.rdd.RDD[(Int, Int)] = ParallelCollectionRDD[3] at parallelize at :21

scala> data.repartitionAndSortWithinPartitions(new HashPartitioner(3)).collect

res3: Array[(Int, Int)] = Array((1,4), (1,3), (1,2), (2,3), (2,4), (5,4))- 1

- 2

- 3

- 4

- 5

(2)aggregateByKey(zeroValue)(seqOp, combOp, [numTasks])

aggregateByKey函数对PairRDD中相同Key的值进行聚合操作,在聚合过程中同样使用了一个中立的初始值。其函数定义如下:

/**

* Aggregate the values of each key, using given combine functions and a neutral “zero value”.

* This function can return a different result type, U, than the type of the values in this RDD,

* V. Thus, we need one operation for merging a V into a U and one operation for merging two U’s,

* as in scala.TraversableOnce. The former operation is used for merging values within a

* partition, and the latter is used for merging values between partitions. To avoid memory

* allocation, both of these functions are allowed to modify and return their first argument

* instead of creating a new U.

*/

def aggregateByKey[U: ClassTag](zeroValue: U)(seqOp: (U, V) => U,

combOp: (U, U) => U): RDD[(K, U)]

示例代码:

import org.apache.spark.SparkContext._

import org.apache.spark.{SparkConf, SparkContext}

object SparkWordCount{

def main(args: Array[String]) {

if (args.length == 0) {

System.err.println("Usage: SparkWordCount " )

System.exit(1)

}

val conf = new SparkConf().setAppName("SparkWordCount").setMaster("local")

val sc = new SparkContext(conf)

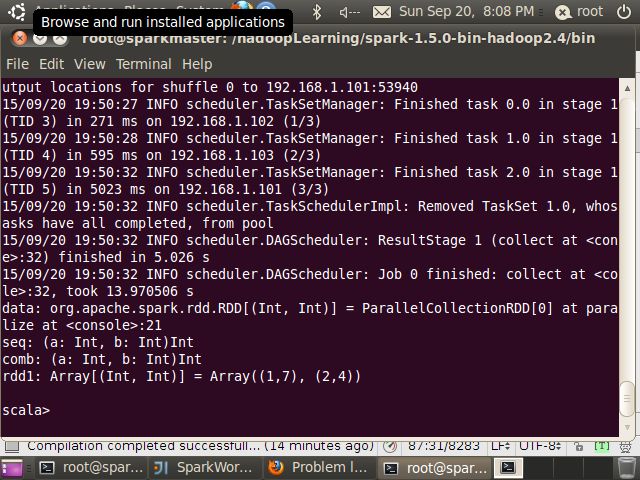

val data = sc.parallelize(List((1,3),(1,2),(1, 4),(2,3),(2,4)))

def seqOp(a:Int, b:Int) : Int ={

println("seq: " + a + "\t " + b)

math.max(a,b)

}

def combineOp(a:Int, b:Int) : Int ={

println("comb: " + a + "\t " + b)

a + b

}

val localIterator=data.aggregateByKey(1)(seqOp, combineOp).toLocalIterator

for(i<-localIterator) println(i)

sc.stop()

}

}- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

执行结果:

seq: 1 3

seq: 3 2

seq: 3 4

seq: 1 3

seq: 3 4

(1,4)

(2,4)

从输出结果来看,seqOp函数起作用了,但comineOp函数并没有起作用,在Spark 1.5、1.4及1.3三个版本中测试,结果都是一样的。这篇文章http://www.iteblog.com/archives/1261给出了aggregateByKey的使用,其Spark版本是1.1,其返回结果符合预期。个人觉得是版本原因造成的,具体后面有时间再来分析。

RDD中还有其它非常有用的transformation操作,参见API文档:http://spark.apache.org/docs/latest/api/scala/index.html

2. RDD actions

本小节将介绍常用的action操作,前面使用的collect方法便是一种action,它返回RDD中所有的数据元素,方法定义如下:

/**

* Return an array that contains all of the elements in this RDD.

*/

def collect(): Array[T]

(1) reduce(func)

reduce采样累加或关联操作减少RDD中元素的数量,其方法定义如下:

/**

* Reduces the elements of this RDD using the specified commutative and

* associative binary operator.

*/

def reduce(f: (T, T) => T): T

使用示例:

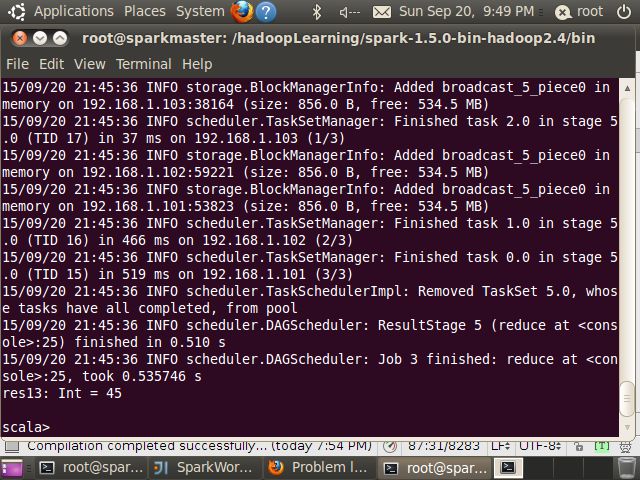

scala> val data=sc.parallelize(1 to 9)

data: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[6] at parallelize at :22

scala> data.reduce((x,y)=>x+y)

res12: Int = 45

scala> data.reduce(_+_)

res13: Int = 45- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

(2)count()

/**

* Return the number of elements in the RDD.

*/

def count(): Long

使用示例:

scala> val data=sc.parallelize(1 to 9)

data: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[6] at parallelize at :22

scala> data.count

res14: Long = 9

- 1

- 2

- 3

- 4

- 5

(3)first()

/**

* Return the first element in this RDD.

*/

def first()

scala> val data=sc.parallelize(1 to 9)

data: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[6] at parallelize at :22

scala> data.first

res15: Int = 1

- 1

- 2

- 3

- 4

- 5

(4)take(n)

/**

* Take the first num elements of the RDD. It works by first scanning one partition, and use the

* results from that partition to estimate the number of additional partitions needed to satisfy

* the limit.

*

* @note due to complications in the internal implementation, this method will raise

* an exception if called on an RDD of Nothing or Null.

*/

def take(num: Int): Array[T]

scala> val data=sc.parallelize(1 to 9)

data: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[6] at parallelize at :22

scala> data.take(2)

res16: Array[Int] = Array(1, 2)

- 1

- 2

- 3

- 4

- 5

(5) takeSample(withReplacement, num, [seed])

对RDD中的数据进行采样

/**

* Return a fixed-size sampled subset of this RDD in an array

*

* @param withReplacement whether sampling is done with replacement

* @param num size of the returned sample

* @param seed seed for the random number generator

* @return sample of specified size in an array

*/

// TODO: rewrite this without return statements so we can wrap it in a scope

def takeSample(

withReplacement: Boolean,

num: Int,

seed: Long = Utils.random.nextLong): Array[T]

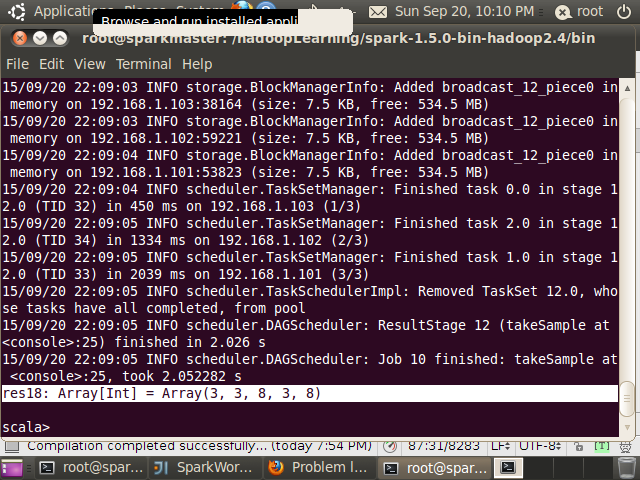

scala> val data=sc.parallelize(1 to 9)

data: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[6] at parallelize at :22

scala> data.takeSample(false,5)

res17: Array[Int] = Array(6, 7, 4, 1, 2)

scala> data.takeSample(true,5)

res18: Array[Int] = Array(3, 3, 8, 3, 8)

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

(6) takeOrdered(n, [ordering])

/**

* Returns the first k (smallest) elements from this RDD as defined by the specified

* implicit Ordering[T] and maintains the ordering. This does the opposite of [[top]].

* For example:

* {{{

* sc.parallelize(Seq(10, 4, 2, 12, 3)).takeOrdered(1)

* // returns Array(2)

*

* sc.parallelize(Seq(2, 3, 4, 5, 6)).takeOrdered(2)

* // returns Array(2, 3)

* }}}

*

* @param num k, the number of elements to return

* @param ord the implicit ordering for T

* @return an array of top elements

*/

def takeOrdered(num: Int)(implicit ord: Ordering[T]): Array[T]

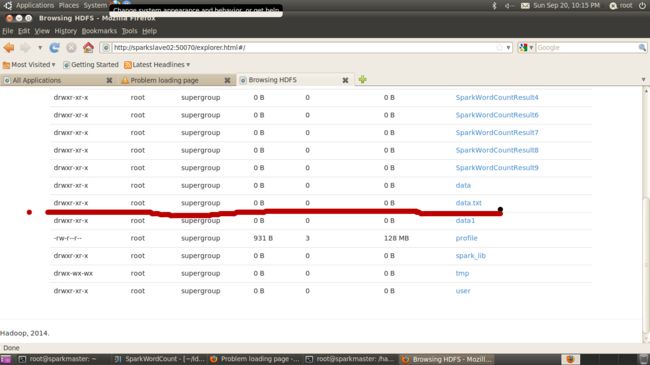

(6) saveAsTextFile(path)

将RDD保存到文件,本地模式时保存在本地文件,集群模式指如果在Hadoop基础上则保存在HDFS上

/**

* Save this RDD as a text file, using string representations of elements.

*/

def saveAsTextFile(path: String): Unit

scala> data.saveAsTextFile("/data.txt")- 1

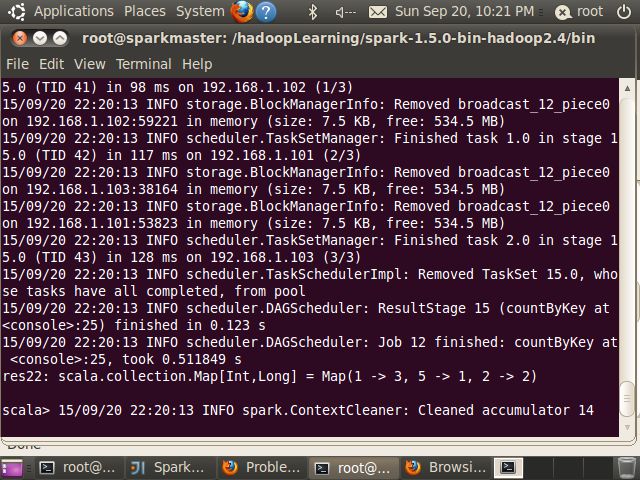

(7) countByKey()

将RDD中的数据按Key计数

/**

* Count the number of elements for each key, collecting the results to a local Map.

*

* Note that this method should only be used if the resulting map is expected to be small, as

* the whole thing is loaded into the driver’s memory.

* To handle very large results, consider using rdd.mapValues(_ => 1L).reduceByKey(_ + _), which

* returns an RDD[T, Long] instead of a map.

*/

def countByKey(): Map[K, Long]

使用示例:

scala> val data = sc.parallelize(List((1,3),(1,2),(5,4),(1, 4),(2,3),(2,4)),3)

data: org.apache.spark.rdd.RDD[(Int, Int)] = ParallelCollectionRDD[10] at parallelize at :22

scala> data.countByKey()

res22: scala.collection.Map[Int,Long] = Map(1 -> 3, 5 -> 1, 2 -> 2)

- 1

- 2

- 3

- 4

- 5

- 6

- 7

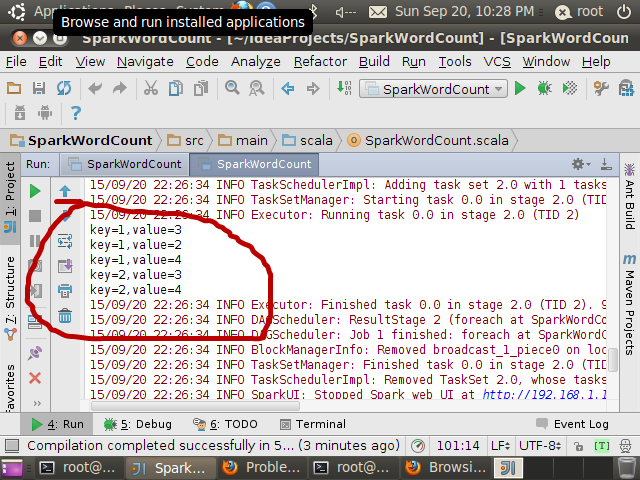

(8)foreach(func)

foreach方法遍历RDD中所有的元素

// Actions (launch a job to return a value to the user program)

/**

* Applies a function f to all elements of this RDD.

*/

def foreach(f: T => Unit): Unit

import org.apache.spark.SparkContext._

import org.apache.spark.{SparkConf, SparkContext}

object ForEachDemo{

def main(args: Array[String]) {

if (args.length == 0) {

System.err.println("Usage: SparkWordCount " )

System.exit(1)

}

val conf = new SparkConf().setAppName("SparkWordCount").setMaster("local")

val sc = new SparkContext(conf)

val data = sc.parallelize(List((1,3),(1,2),(1, 4),(2,3),(2,4)))

data.foreach(x=>println("key="+x._1+",value="+x._2))

sc.stop()

}

}- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

Sparkh中还存在其它非常有用的action操作,如foldByKey、sampleByKey等,参见API文档:http://spark.apache.org/docs/latest/api/scala/index.html

转载: http://blog.csdn.net/lovehuangjiaju/article/details/48622757