openCV 2.2 正态贝叶斯分类器 CvNormalBayesClassifier 类源码解析

1. 先看正态分布的判别函数:

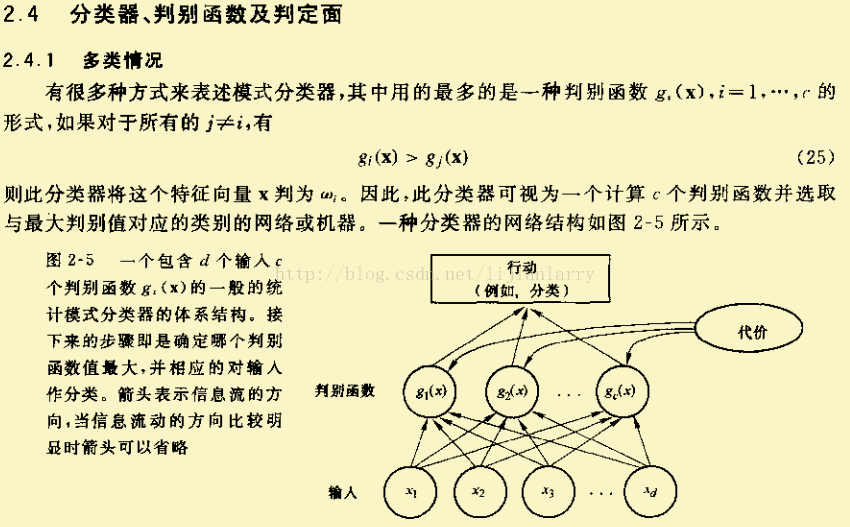

~来自的《模式分类》

其中:

g(x):判别函数

P(w):先验概率

P(w|X) :后验概率(即假设特征值X已知的条件下类别属于w的概率)

P(X|w):似然函数(表明在其他条件都相等的情况下,使得P(X|w)较大的w更有可能是真实的类别)

2. 原理分析:

正态贝叶斯分类器的前提就是待分类的类别都遵循正态分布。算法也必须假设待分类的数据遵循正态分布。

有了这个前提,我们继续往下分析:

参考P(X|w)(似然函数)的概念,当先验概率P(w)相等的条件下,判别函数g(x)只取决于P(X|w)的大小,这是本算法的理论依据。

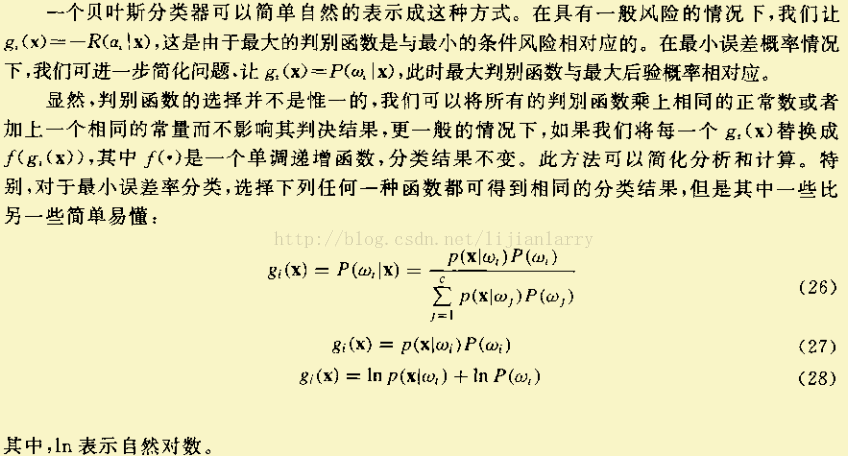

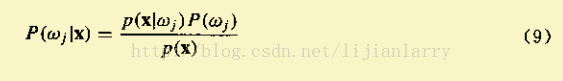

根据公式(9)

因为后验概率正比于先验概率与似然度的乘积(p(x)可仅仅看成一个标量因子),

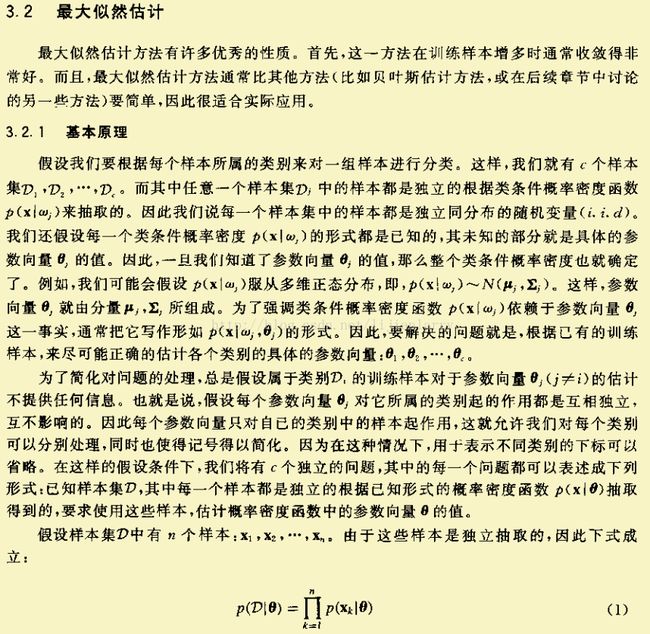

但在有些情况下可以不考虑先验概率,如分类很少或维数较高等情况,即后验概率仅与似然度成正比,这样最大后验问题就变为了极大似然问题,这时我们只需要得到各个分类的似然函数P(X|w),而我们假设待分类的每一类都遵循正态分布,所以似然函数P(X|w)是正态分布,称为最大似然估计:

~来自的《模式分类》

我们只需得到每一类的似然函数,然后把待分类的数据代人其中,比较其大小即可。

似然函数:

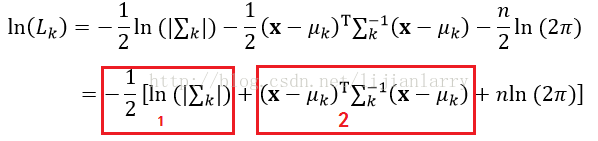

似然函数的对数形式:

协方差矩阵公式:

3.openCV 2.2源码分析

本代码分析时,使用的测试数据为:

double data[4][3] =

{

1, 2, 3,

-1, -2, -3,

-4, -5, -6,

41, 15, 61

};

训练的类别标签:

int trainLabel[4] = {1, -1, -1, 1};

待分类数据:

double testArr[]= { 5, 6, 7};

下面看CvNormalBayesClassifier类的训练函数:

bool CvNormalBayesClassifier::train( const CvMat* _train_data, const CvMat* _responses,

const CvMat* _var_idx, const CvMat* _sample_idx, bool update )

{

const float min_variation = FLT_EPSILON;//浮点数精度(取一个小值)

bool result = false;

CvMat* responses = 0;//类别标签

const float** train_data = 0;//训练数据

CvMat* __cls_labels = 0;//有序的类别标签

CvMat* __var_idx = 0;

CvMat* cov = 0;//协方差矩阵

CV_FUNCNAME( "CvNormalBayesClassifier::train" );

__BEGIN__;

int cls, nsamples = 0, _var_count = 0, _var_all = 0, nclasses = 0;

int s, c1, c2;

const int* responses_data;

CV_CALL( cvPrepareTrainData( 0,

_train_data, CV_ROW_SAMPLE, _responses, CV_VAR_CATEGORICAL,

_var_idx, _sample_idx, false, &train_data,

&nsamples, &_var_count, &_var_all, &responses,

&__cls_labels, &__var_idx ));

if( !update )

{

const size_t mat_size = sizeof(CvMat*);

size_t data_size;

clear();

var_idx = __var_idx;

cls_labels = __cls_labels;

__var_idx = __cls_labels = 0;

var_count = _var_count;

var_all = _var_all;

nclasses = cls_labels->cols;

data_size = nclasses*6*mat_size;

CV_CALL( count = (CvMat**)cvAlloc( data_size ));

memset( count, 0, data_size );

sum = count + nclasses;

productsum = sum + nclasses;

avg = productsum + nclasses;

inv_eigen_values= avg + nclasses;

cov_rotate_mats = inv_eigen_values + nclasses;

CV_CALL( c = cvCreateMat( 1, nclasses, CV_64FC1 ));

for( cls = 0; cls < nclasses; cls++ )

{

CV_CALL(count[cls] = cvCreateMat( 1, var_count, CV_32SC1 ));//训练样本每类的个数

CV_CALL(sum[cls] = cvCreateMat( 1, var_count, CV_64FC1 ));//训练样本每类对应属性的和

CV_CALL(productsum[cls] = cvCreateMat( var_count, var_count, CV_64FC1 ));//协方差矩阵的第一部分(见上面协方差矩阵公式,红框标出1)

CV_CALL(avg[cls] = cvCreateMat( 1, var_count, CV_64FC1 ));//训练样本每类的均值

CV_CALL(inv_eigen_values[cls] = cvCreateMat( 1, var_count, CV_64FC1 ));//训练样本每类的特征值

CV_CALL(cov_rotate_mats[cls] = cvCreateMat( var_count, var_count, CV_64FC1 ));//SVD分解的U的转置

CV_CALL(cvZero( count[cls] ));

CV_CALL(cvZero( sum[cls] ));

CV_CALL(cvZero( productsum[cls] ));

CV_CALL(cvZero( avg[cls] ));

CV_CALL(cvZero( inv_eigen_values[cls] ));

CV_CALL(cvZero( cov_rotate_mats[cls] ));

}

}

else

{

// check that the new training data has the same dimensionality etc.

if( _var_count != var_count || _var_all != var_all || !((!_var_idx && !var_idx) ||

(_var_idx && var_idx && cvNorm(_var_idx,var_idx,CV_C) < DBL_EPSILON)) )

CV_ERROR( CV_StsBadArg,

"The new training data is inconsistent with the original training data" );

if( cls_labels->cols != __cls_labels->cols ||

cvNorm(cls_labels, __cls_labels, CV_C) > DBL_EPSILON )

CV_ERROR( CV_StsNotImplemented,

"In the current implementation the new training data must have absolutely "

"the same set of class labels as used in the original training data" );

nclasses = cls_labels->cols;

}

responses_data = responses->data.i;

CV_CALL( cov = cvCreateMat( _var_count, _var_count, CV_64FC1 ));

//这部分程序是计算count、sum、productsum 即:统计训练样本每类的个数、训练样本每类对应属性的和、协方差矩阵的第一部分(包括对角线的右上部分)

/* process train data (count, sum , productsum) */

for( s = 0; s < nsamples; s++ )

{

cls = responses_data[s];

int* count_data = count[cls]->data.i;

double* sum_data = sum[cls]->data.db;

double* prod_data = productsum[cls]->data.db;

const float* train_vec = train_data[s];

for( c1 = 0; c1 < _var_count; c1++, prod_data += _var_count )

{

double val1 = train_vec[c1];

sum_data[c1] += val1;

count_data[c1]++;

for( c2 = c1; c2 < _var_count; c2++ )

prod_data[c2] += train_vec[c2]*val1;

}

}

/*

本例中:

nsamples = 2;_var_count = 3;

count =

{

2, 2, 2,

2, 2, 2

}

sum =

{

-5, -7 ,-9

43, 17, 64

}

prod_data 指向 productsum,运算后的结果为:

标签为-1的样本:

17 22 27

0 29 36

0 0 45

标签为1的样本:

1682 617 2504

0 229 921

0 0 3730

由以上数据可知,productsum 只是计算的协方差公式中的第一部分的右上部分包括对角线(因为是对称的),有下面的函数cvCompleteSymm把其余的部分补齐

*/

/* calculate avg, covariance matrix, c */

for( cls = 0; cls < nclasses; cls++ )

{

double det = 1;

int i, j;

CvMat* w = inv_eigen_values[cls];

int* count_data = count[cls]->data.i;

double* avg_data = avg[cls]->data.db;

double* sum1 = sum[cls]->data.db;

cvCompleteSymm( productsum[cls], 0 );

for( j = 0; j < _var_count; j++ )

{

int n = count_data[j];

avg_data[j] = n ? sum1[j] / n : 0.;

}

count_data = count[cls]->data.i;

avg_data = avg[cls]->data.db;

sum1 = sum[cls]->data.db;

/*

计算均值、协方差矩阵(包括对角线的左下部分)

*/

for( i = 0; i < _var_count; i++ )

{

double* avg2_data = avg[cls]->data.db;

double* sum2 = sum[cls]->data.db;

double* prod_data = productsum[cls]->data.db + i*_var_count;

double* cov_data = cov->data.db + i*_var_count;

double s1val = sum1[i];

double avg1 = avg_data[i];

int count = count_data[i];

for( j = 0; j <= i; j++ )

{

double avg2 = avg2_data[j];

//见协方差计算公式

double cov_val = prod_data[j] - avg1 * sum2[j] - avg2 * s1val + avg1 * avg2 * count;

cov_val = (count > 1) ? cov_val / (count - 1) : cov_val;

cov_data[j] = cov_val;

}

}

/*

函数cvCompleteSymm补齐协方差矩阵数据,cvSVD函数进行SVD分解,其中cov_rotate_mats保存的是U的转置

w指向特征值

*/

CV_CALL( cvCompleteSymm( cov, 1 ));

/*

cvSVD函数进行SVD分解,其中cov_rotate_mats保存的是U的转置

w指向特征值

使用SVD分解的目的是:计算协方差矩阵的行列式,以及在计算似然函数的第二部分的时候,根据公式cov*x=λx,使用λ来替代协方差矩阵cov,

目的是为了降维,减少数据量。

*/

CV_CALL( cvSVD( cov, w, cov_rotate_mats[cls], 0, CV_SVD_U_T ));

//去掉过小的特征值

CV_CALL( cvMaxS( w, min_variation, w ));

for( j = 0; j < _var_count; j++ )

det *= w->data.db[j];

CV_CALL( cvDiv( NULL, w, w ));

//似然函数中的第一部分

c->data.db[cls] = log( det );

}

result = true;

__END__;

if( !result || cvGetErrStatus() < 0 )

clear();

cvReleaseMat( &cov );

cvReleaseMat( &__cls_labels );

cvReleaseMat( &__var_idx );

cvFree( &train_data );

return result;

}

/*

具体实施的时候,我是使用eigen3计算矩阵的SVD分解,所以计算结果和openCV计算的略有不同,我使用matlab也计算了一遍,

三者的计算结果各不相同。

下面是我计算出来的贝叶斯分类器的各个量,

var_count:3

cls_labels:

rows:1

cols:2

data:[-1 1]

count:

rows:1

cols:3

data:[2 2 2]

rows:1

cols:3

data:[2 2 2]

sum:

rows:1

cols:3

data:[-5.000000e+000 -7.000000e+000 -9.000000e+000]

rows:1

cols:3

data:[4.200000e+001 1.700000e+001 6.400000e+001]

productsum:

rows:3

cols:3

data:[1.700000e+001 2.200000e+001 2.700000e+001 2.200000e+001 2.900000e+001 3.600000e+001 2.700000e+001 3.600000e+001 4.500000e+001]

rows:3

cols:3

data:[1.682000e+003 6.170000e+002 2.504000e+003 6.170000e+002 2.290000e+002 9.210000e+002 2.504000e+003 9.210000e+002 3.730000e+003]

avg:

rows:1

cols:3

data:[-2.5000000000000000e+000 -3.5000000000000000e+000 -4.5000000000000000e+000]

rows:1

cols:3

data:[2.1000000000000000e+001 8.5000000000000000e+000 3.2000000000000000e+001]

inv_eigen_values:

rows:1

cols:3

data:[7.4074074074074070e-002 8.3886080000000000e+006 8.3886080000000000e+006]

rows:1

cols:3

data:[3.8963569062926162e-004 8.3886080000000000e+006 8.3886080000000000e+006]

cov_rotate_mats:

rows:3

cols:3

data:[5.7735026918962584e-001 4.0824829046386302e-001 -7.0710678118654746e-001 5.7735026918962584e-001 4.0824829046386302e-001 7.0710678118654746e-001 5.7735026918962584e-001 -8.1649658092772615e-001 0.0000000000000000e+000]

rows:3

cols:3

data:[5.5830865343769231e-001 7.6990729791549084e-001 3.0908607233755814e-001 1.8145031236725001e-001 2.5021987182253452e-001 -9.5103406873094809e-001 8.0954754748465396e-001 -5.8705431466056179e-001 -0.0000000000000000e+000]

c:

rows:1

cols:2

data:[-2.9282080620313099e+001 -2.4034471923757128e+001]

*/

分类函数:

//在predict函数之前,还有一个load函数,是加载数据用的。

float CvNormalBayesClassifier::predict( const CvMat* samples, CvMat* results ) const

{

float value = 0;

void* buffer = 0;

int allocated_buffer = 0;

CV_FUNCNAME( "CvNormalBayesClassifier::predict" );

__BEGIN__;

int i, j, k, cls = -1, _var_count, nclasses;

double opt = FLT_MAX;

CvMat diff;

int rtype = 0, rstep = 0, size;

const int* vidx = 0;

nclasses = cls_labels->cols;

_var_count = avg[0]->cols;

if( !CV_IS_MAT(samples) || CV_MAT_TYPE(samples->type) != CV_32FC1 || samples->cols != var_all )

CV_ERROR( CV_StsBadArg,

"The input samples must be 32f matrix with the number of columns = var_all" );

if( samples->rows > 1 && !results )

CV_ERROR( CV_StsNullPtr,

"When the number of input samples is >1, the output vector of results must be passed" );

if( results )

{

if( !CV_IS_MAT(results) || (CV_MAT_TYPE(results->type) != CV_32FC1 &&

CV_MAT_TYPE(results->type) != CV_32SC1) ||

(results->cols != 1 && results->rows != 1) ||

results->cols + results->rows - 1 != samples->rows )

CV_ERROR( CV_StsBadArg, "The output array must be integer or floating-point vector "

"with the number of elements = number of rows in the input matrix" );

rtype = CV_MAT_TYPE(results->type);

rstep = CV_IS_MAT_CONT(results->type) ? 1 : results->step/CV_ELEM_SIZE(rtype);

}

if( var_idx )

vidx = var_idx->data.i;

// allocate memory and initializing headers for calculating

size = sizeof(double) * (nclasses + var_count);

if( size <= CV_MAX_LOCAL_SIZE )

buffer = cvStackAlloc( size );

else

{

CV_CALL( buffer = cvAlloc( size ));

allocated_buffer = 1;

}

diff = cvMat( 1, var_count, CV_64FC1, buffer );

for( k = 0; k < samples->rows; k++ )

{

int ival;

for( i = 0; i < nclasses; i++ )

{

//见似然函数公式第一部分

double cur = c->data.db[i];

CvMat* u = cov_rotate_mats[i];

CvMat* w = inv_eigen_values[i];

const double* avg_data = avg[i]->data.db;

const float* x = (const float*)(samples->data.ptr + samples->step*k);

// cov = u w u' --> cov^(-1) = u w^(-1) u'

//见似然函数公式第二部分

for( j = 0; j < _var_count; j++ )

diff.data.db[j] = avg_data[j] - x[vidx ? vidx[j] : j];

//矩阵相乘

CV_CALL(cvGEMM( &diff, u, 1, 0, 0, &diff, CV_GEMM_B_T ));

//见似然函数公式第二部分

for( j = 0; j < _var_count; j++ )

{

double d = diff.data.db[j];

cur += d*d*w->data.db[j];

}

if( cur < opt )

{

cls = i;

opt = cur;

}

/* probability = exp( -0.5 * cur ) */

}

ival = cls_labels->data.i[cls];

if( results )

{

if( rtype == CV_32SC1 )

results->data.i[k*rstep] = ival;

else

results->data.fl[k*rstep] = (float)ival;

}

if( k == 0 )

value = (float)ival;

/*if( _probs )

{

CV_CALL( cvConvertScale( &expo, &expo, -0.5 ));

CV_CALL( cvExp( &expo, &expo ));

if( _probs->cols == 1 )

CV_CALL( cvReshape( &expo, &expo, 1, nclasses ));

CV_CALL( cvConvertScale( &expo, _probs, 1./cvSum( &expo ).val[0] ));

}*/

}

__END__;

if( allocated_buffer )

cvFree( &buffer );

return value;

}

//train函数看懂了,predict函数没有什么好说的了。