A Simple GAN in TensorFlow: Source Code

Original post: Generative Adversarial Nets in TensorFlow

Paper: Generative Adversarial Nets

Without further ado, here is the source code, which generates MNIST handwritten digits:

# -*- coding: utf-8 -*-
# @Author: adrianna
# @Date: 2017-07-12 10:47:57
# @Last Modified by: adrianna
# @Last Modified time: 2017-07-13 14:43:05

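# Written against the TensorFlow 1.x API (tf.contrib and
# tensorflow.examples.tutorials were removed in TensorFlow 2.x).
import tensorflow as tf
import numpy as np
import matplotlib.pyplot as plt
import matplotlib.gridspec as gridspec
import os
from tensorflow.examples.tutorials.mnist import input_data

os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

sess = tf.InteractiveSession()

mb_size = 128  # mini-batch size
Z_dim = 100    # dimension of the noise vector fed to the generator

mnist = input_data.read_data_sets('../../MNIST_data', one_hot=True)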

def weight_var(shape, name):
    return tf.get_variable(name=name, shape=shape, initializer=tf.contrib.layers.xavier_initializer())

def bias_var(shape, name):
    return tf.get_variable(name=name, shape=shape, initializer=tf.constant_initializer(0))

# discriminator net
X = tf.placeholder(tf.float32, shape=[None, 784], name='X')

D_W1 = weight_var([784, 128], 'D_W1')
D_b1 = bias_var([128], 'D_b1')

D_W2 = weight_var([128, 1], 'D_W2')
D_b2 = bias_var([1], 'D_b2')

theta_D = [D_W1, D_W2, D_b1, D_b2]

# generator net
Z = tf.placeholder(tf.float32, shape=[None, 100], name='Z')

G_W1 = weight_var([100, 128], 'G_W1')
G_b1 = bias_var([128], 'G_B1')

G_W2 = weight_var([128, 784], 'G_W2')
G_b2 = bias_var([784], 'G_B2')

theta_G = [G_W1, G_W2, G_b1, G_b2]

def generator(z):
    G_h1 = tf.nn.relu(tf.matmul(z, G_W1) + G_b1)
    G_log_prob = tf.matmul(G_h1, G_W2) + G_b2
    G_prob = tf.nn.sigmoid(G_log_prob)
    return G_prob

def discriminator(x):
    D_h1 = tf.nn.relu(tf.matmul(x, D_W1) + D_b1)
    D_logit = tf.matmul(D_h1, D_W2) + D_b2
    D_prob = tf.nn.sigmoid(D_logit)
    return D_prob, D_logit

G_sample = generator(Z)
D_real, D_logit_real = discriminator(X)
D_fake, D_logit_fake = discriminator(G_sample)

# Probability-based form of the losses, kept for reference:
# D_loss = -tf.reduce_mean(tf.log(D_real) + tf.log(1. - D_fake))
# G_loss = -tf.reduce_mean(tf.log(D_fake))

# Logit-based form actually used: real samples are labelled 1, generated samples 0.
D_loss_real = tf.reduce_mean(tf.nn.sigmoid_cross_entropy_with_logits(
    logits=D_logit_real, labels=tf.ones_like(D_logit_real)))
D_loss_fake = tf.reduce_mean(tf.nn.sigmoid_cross_entropy_with_logits(
    logits=D_logit_fake, labels=tf.zeros_like(D_logit_fake)))
D_loss = D_loss_real + D_loss_fake

# The generator is trained to make the discriminator label its samples as 1.
G_loss = tf.reduce_mean(tf.nn.sigmoid_cross_entropy_with_logits(
    logits=D_logit_fake, labels=tf.ones_like(D_logit_fake)))

# Each optimizer updates only its own network's variables.
D_optimizer = tf.train.AdamOptimizer().minimize(D_loss, var_list=theta_D)
G_optimizer = tf.train.AdamOptimizer().minimize(G_loss, var_list=theta_G)

# init variables
sess.run(tf.global_variables_initializer())

def sample_Z(m, n):
    '''Uniform prior for G(Z)'''
    return np.random.uniform(-1., 1., size=[m, n])

def plot(samples):
    fig = plt.figure(figsize=(4, 4))
    gs = gridspec.GridSpec(4, 4)
    gs.update(wspace=0.05, hspace=0.05)

    for i, sample in enumerate(samples):  # [i,samples[i]] imax=16
        ax = plt.subplot(gs[i])
        plt.axis('off')
        ax.set_xticklabels([])
        ax.set_yticklabels([])
        ax.set_aspect('equal')
        plt.imshow(sample.reshape(28, 28), cmap='Greys_r')

    return fig

if not os.path.exists('out/'):
    os.makedirs('out/')

i = 0

for it in range(100000):
    if it % 1000 == 0:
        samples = sess.run(G_sample, feed_dict={
            Z: sample_Z(16, Z_dim)})  # 16*784

        fig = plot(samples)
        plt.savefig('out/{}.png'.format(str(i).zfill(3)), bbox_inches='tight')
        i += 1
        plt.close(fig)

    X_mb, _ = mnist.train.next_batch(mb_size)

    _, D_loss_curr = sess.run([D_optimizer, D_loss], feed_dict={
        X: X_mb, Z: sample_Z(mb_size, Z_dim)})
    _, G_loss_curr = sess.run([G_optimizer, G_loss], feed_dict={
        Z: sample_Z(mb_size, Z_dim)})

    if it % 1000 == 0:
        print('Iter: {}'.format(it))
        print('D loss: {:.4}'.format(D_loss_curr))
        print('G_loss: {:.4}'.format(G_loss_curr))
        print()

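During training the loss sometimes becomes NaN, although this does not seem to affect the final result. In the end I switched to a better graphics card and the problem went away!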
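I never tracked down where the NaN came from, so the following is only a sketch of one well-known way non-finite values can appear in GAN losses, not a diagnosis of this run. The commented-out losses in the script take the log of a probability; once the sigmoid saturates to exactly 1.0 in floating point, `log(1 - D_fake)` becomes `log(0)`. The logit-based form, which is what the script actually uses via `tf.nn.sigmoid_cross_entropy_with_logits`, avoids that. The helper names below (`safe_log`, `softplus`, `naive_d_loss`, `stable_d_loss`) are hypothetical, and plain Python floats stand in for TensorFlow tensors.

```python
import math

def safe_log(p):
    # Mimics tf.log at the boundary: log(0) gives -inf instead of raising.
    return math.log(p) if p > 0.0 else float('-inf')

def sigmoid(x):
    # Logistic function, written so that exp never overflows for large |x|.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def softplus(x):
    # log(1 + exp(x)), computed as max(x, 0) + log1p(exp(-|x|)).
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def naive_d_loss(logit_real, logit_fake):
    # Probability-based form: -(log D(x) + log(1 - D(G(z)))).
    return -(safe_log(sigmoid(logit_real)) + safe_log(1.0 - sigmoid(logit_fake)))

def stable_d_loss(logit_real, logit_fake):
    # Logit-based form: cross-entropy with label 1 for real and 0 for fake.
    return softplus(-logit_real) + softplus(logit_fake)

# Moderate logits: both forms agree. A very confident (wrong) logit on a
# fake sample: the naive form is no longer finite, the stable one is.
for lr, lf in [(0.5, -0.3), (2.0, 40.0)]:
    print(lr, lf, naive_d_loss(lr, lf), stable_d_loss(lr, lf))
```

If the NaN does come from somewhere else (for example the optimizer or the hardware), this sketch will not explain it; it only shows why the logit-based loss is the safer of the two forms.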

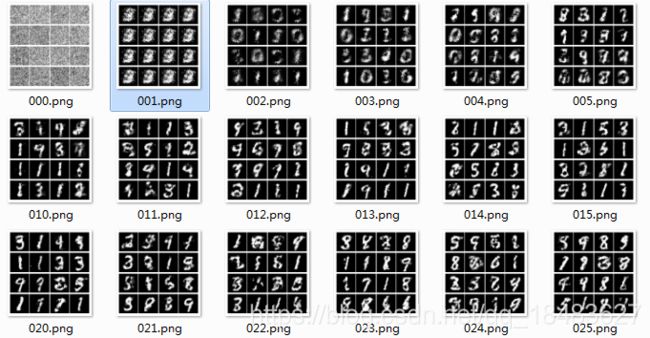

The run creates a folder named out in the current directory and saves the output images there, as shown below: