数据转换voc2tfrecord

更新:找到了训练效果不好的原因:由于粗心把polyp拼写成了ployp。(泪流满面)

文中基本所有相关字段已经改正,如果有发现未改正的地方,欢迎在评论区指出,谢谢大家!~

常见的数据集有很多,例如coco数据集,他们都是封装好的,用于现成的网络的训练和预测过程,方便的同时也给想训练自己数据集的同学带来了困扰。使用基于tensorflow 的目标检测API的训练必须使用TFrecord这种数据集格式。

1.1 其他种类数据集转化成TFRecord(先往下看,别急着执行,生成数据集具体流程在1.3)

幸运的是,目标检测API提供了部分数据集格式转换的程序可供参考。

从一份VOC格式的文件开始,它是VOC2007年数据。数据目录如下:

- Annotations文件夹

该文件下存放的是xml格式的标签文件,每个xml文件都对应于JPEG Images文件夹的一张图片。 - JPEGImages文件夹

改文件夹下存放的是数据集图片,包括训练和测试图片。 - ImageSets文件夹

该文件夹下存放了三个文件,分别是Layout、Main、Segmentation。在这里我们只用存放图像数据的Main文件,其他两个暂且不管。 - SegmentationClass文件和SegmentationObject文件。 (与我们无关)

这两个文件都是与图像分割相关。

准备好VOC数据集后,修改一些内容,将VOC数据集转换成TFrecord数据集。

这段代码在下面路径下的create_pascal_tf_record.py中。

当然,理解其内容后要修改一部分代码,包括:

- 将object_detection/data/pascal_label_map.pbtxt文件复制一份也放到特定文件夹下,它表示的是我们要识别的类们。49-50行:将'data/pascal_label_map.pbtxt' 改为上面我们复制的pascal_label_map.pbtxt文件所在路径,也就是修改其路径:flags.DEFINE_string('label_map_path','C:\Development\dataset\pascal_label_map.pbtxt','Path to label map proto')

- 163行:将'aeroplane_' + 去掉:examples_path = os.path.join(data_dir, year, ‘ImageSets’, ‘Main’, ‘aeroplane_’ + FLAGS.set + ‘.txt’)

之后将文件复制到API\reserch下,运行命令

Python create_pascal_tf_record.py --data_dir=D:\jupyter_notebook\VOC2007 --year=VOC2007 --set=train --output_path='pascal_train.record'

这里需要注意:将代码放到reserch文件目录下,而不是objection下,源代码中导入的包中要修改相应路径(必要时设置一下系统路径,chdir一下),这样比较方便。

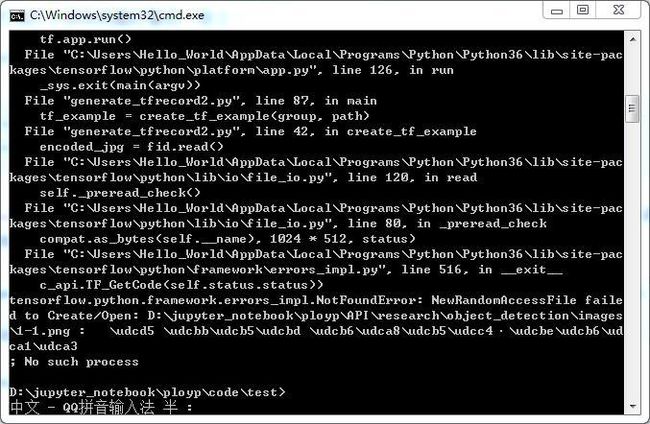

这里将会遇到这个问题(超级烦人,解决费了好长时间):

导致这个问题的原因有很多:

- 最直接的就是路径设置问题,没有此路径

- 如果路径正确而问题依然存在,尝试将相对路径改为绝对路径

- 如果还有问题,那么将运行代码的地址路径设置的短一点,即尽可能放在较为外层的地址上,因为地址字符串长度有限制(看到有个外国大佬说长度限制在55)。

之后,就得到了这个封装好的TFrecord:(命令行的路径带单引号就是这个结果)

1.2自己的数据集转化成TFrecord Demo(生成数据集具体流程在1.3)

知道了TFrecord长什么样子后,就要想办法把自己的数据转化成TFrecord了,而我的数据是之前用来进行基于语义分割的息肉检测,如下图:

可以说转化是相当麻烦了,一方面它不是VOC这种封装好的数据集,另一方面它还是掩码而不是候选框。

因此我想到了两种方案:

- 将自己的数据集转化成VOC格式,再用之前尝试成功的VOC转化成TFrecord。而且相关的博客教程也找到了:点我。相应的问题就是VOC格式的生成比较麻烦,标签也无法直接产生。

- 将自己的数据直接转化成TFrecord,使用lableImg的小工具,手动打标签后自动转成XML文件,通过代码直接转化成TFrecord。这种思路的问题就是掩码标签失去了用处,需要手动打标签。

于是,我选择了1………

才怪,我选择了2。

首先我使用lableimg打个标签看看啥样。

lableIMG源码: https://github.com/tzutalin/labelImg

lableIMG封装好的:https://tzutalin.github.io/labelImg/ (建议点这)

- Open

- 右键new

- 画方框

- 选择class

- 保存

- (不贴图了,看一眼就会)

尝试了几张图片,最终得到的都是些下面的固定格式的XML文件:

(于是我有个大胆的想法,通过遍历掩码的像素,得到候选框的边框,再将其写入文件,美滋滋。待会再试。)

然后将XML的信息提取出来转化成一个csv:

# -*- coding: utf-8 -*-

"""

将文件夹内所有XML文件的信息记录到CSV文件中(notebook可执行)

"""

import os

import glob

import pandas as pd

import xml.etree.ElementTree as ET

os.chdir('D:\\jupyter_notebook\\polyp\\code\\test\\')

path = 'D:\\jupyter_notebook\\polyp\\code\\test\\'

def xml_to_csv(path):

xml_list = []

for xml_file in glob.glob(path + '/*.xml'):

tree = ET.parse(xml_file)

root = tree.getroot()

for member in root.findall('object'):

value = (root.find('filename').text,

int(root.find('size')[0].text),

int(root.find('size')[1].text),

member[0].text,

int(member[4][0].text),

int(member[4][1].text),

int(member[4][2].text),

int(member[4][3].text)

)

xml_list.append(value)

column_name = ['filename', 'width', 'height', 'class', 'xmin', 'ymin', 'xmax', 'ymax']

xml_df = pd.DataFrame(xml_list, columns=column_name)

return xml_df

def main():

image_path = path

xml_df = xml_to_csv(image_path)

print(xml_df)

xml_df.to_csv('tv_vehicle_labels.csv', index=None)

print('Successfully converted xml to csv.')

main()

这样的csv:

然后再通过CSV生成TFrecord.(,py文件,需要命令行执行:

命令:

python generate_tfrecord.py --csv_input=tv_vehicle_labels.csv --output_path=train.record)

需要注意的问题也如刚开始的那样。

看懂之后要改文件路径和相关参数,简单看看就能懂的,我就不一点点说了

# -*- coding: utf-8 -*-

import os

import io

import pandas as pd

import tensorflow as tf

from PIL import Image

from object_detection.utils import dataset_util

from collections import namedtuple, OrderedDict

flags = tf.app.flags

flags.DEFINE_string('csv_input', '', 'Path to the CSV input')

flags.DEFINE_string('output_path', '', 'Path to output TFRecord')

FLAGS = flags.FLAGS

# TO-DO replace this with label map

#注意将对应的label改成自己的类别!!!!!!!!!!

def class_text_to_int(row_label):

if row_label == 'dog':

return 1

else:

None

def split(df, group):

data = namedtuple('data', ['filename', 'object'])

gb = df.groupby(group)

return [data(filename, gb.get_group(x)) for filename, x in zip(gb.groups.keys(), gb.groups)]

def create_tf_example(group, path):

with tf.gfile.GFile(os.path.join(path, '{}'.format(group.filename)), 'rb') as fid:

encoded_jpg = fid.read()

encoded_jpg_io = io.BytesIO(encoded_jpg)

image = Image.open(encoded_jpg_io)

width, height = image.size

filename = group.filename.encode('utf8')

image_format = b'jpg'

xmins = []

xmaxs = []

ymins = []

ymaxs = []

classes_text = []

classes = []

for index, row in group.object.iterrows():

xmins.append(row['xmin'] / width)

xmaxs.append(row['xmax'] / width)

ymins.append(row['ymin'] / height)

ymaxs.append(row['ymax'] / height)

classes_text.append(row['class'].encode('utf8'))

classes.append(class_text_to_int(row['class']))

tf_example = tf.train.Example(features=tf.train.Features(feature={

'image/height': dataset_util.int64_feature(height),

'image/width': dataset_util.int64_feature(width),

'image/filename': dataset_util.bytes_feature(filename),

'image/source_id': dataset_util.bytes_feature(filename),

'image/encoded': dataset_util.bytes_feature(encoded_jpg),

'image/format': dataset_util.bytes_feature(image_format),

'image/object/bbox/xmin': dataset_util.float_list_feature(xmins),

'image/object/bbox/xmax': dataset_util.float_list_feature(xmaxs),

'image/object/bbox/ymin': dataset_util.float_list_feature(ymins),

'image/object/bbox/ymax': dataset_util.float_list_feature(ymaxs),

'image/object/class/text': dataset_util.bytes_list_feature(classes_text),

'image/object/class/label': dataset_util.int64_list_feature(classes),

}))

return tf_example

def main(_):

writer = tf.python_io.TFRecordWriter(FLAGS.output_path)

path = os.path.join('D:\\jupyter_notebook\\polyp\\code\\test\\images')

examples = pd.read_csv(FLAGS.csv_input)

grouped = split(examples, 'filename')

for group in grouped:

tf_example = create_tf_example(group, path)

writer.write(tf_example.SerializeToString())

writer.close()

output_path = os.path.join(os.getcwd(), FLAGS.output_path)

print('Successfully created the TFRecords: {}'.format(output_path))

if __name__ == '__main__':

tf.app.run()

最后就得到了这个训练集的record文件。

1.3 得到最终数据集(生成数据集具体流程在1.3)

我的路径设置仅供参考:

以训练集代码为例,验证集改改变量名就ok:

由掩码转化成csv:

'''

train --> csv

'''

train_path='D:\\jupyter_notebook\\polyp\\data\\cvcvideoclinicdbtrainvalid\\train\\'

list = os.listdir(train_path)

lable_list = []

for f in range(0,len(list)):

filename=list[f]

if(filename.split('.')[0].endswith('mask')):

im = imageio.imread(train_path+filename)

minh=im.shape[1]

minw=im.shape[0]

maxh=0

maxw=0

for i in range(0,im.shape[0]): #288 高 h

for j in range(0,im.shape[1]): #384 宽 w

if(im[i][j]>0):

#print(im[i][j])

if(jmaxw): maxw=j

if(imaxh): maxh=i

if(minh==im.shape[1] & minw==im.shape[0] & maxh==0 & maxw==0):

minh=minw=maxh=maxw=0

value = (

str(filename.split('_mask')[0]+filename.split('_mask')[1]),

im.shape[1],

im.shape[0],

str('polyp'),

int(minw),

int(minh),

int(maxw),

int(maxh)

)

lable_list.append(value)

print(f,'has finished!')

column_name = ['filename', 'width', 'height', 'class', 'xmin', 'ymin', 'xmax', 'ymax']

lable_df = pd.DataFrame(lable_list, columns=column_name)

print(lable_df)

再运行之前所说的csv转化成TFrecord, 修改images的地址等参数,得到了TFrecord

命令:

python generate_tfrecord_train.py --csv_input=object_detection\data\train_labels.csv --output_path=object_detection\data\train_dataSet.record

转化的代码:

# -*- coding: utf-8 -*-

import os

import io

import pandas as pd

import tensorflow as tf

from PIL import Image

from object_detection.utils import dataset_util

from collections import namedtuple, OrderedDict

flags = tf.app.flags

flags.DEFINE_string('csv_input', '', 'Path to the CSV input')

flags.DEFINE_string('output_path', '', 'Path to output TFRecord')

FLAGS = flags.FLAGS

# TO-DO replace this with label map

#注意将对应的label改成自己的类别!!!!!!!!!!

def class_text_to_int(row_label):

if row_label == 'polyp':

return 1

else:

None

def split(df, group):

data = namedtuple('data', ['filename', 'object'])

gb = df.groupby(group)

return [data(filename, gb.get_group(x)) for filename, x in zip(gb.groups.keys(), gb.groups)]

def create_tf_example(group, path):

with tf.gfile.GFile(os.path.join(path, '{}'.format(group.filename)), 'rb') as fid:

encoded_jpg = fid.read()

encoded_jpg_io = io.BytesIO(encoded_jpg)

image = Image.open(encoded_jpg_io)

width, height = image.size

filename = group.filename.encode('utf8')

image_format = b'jpg'

xmins = []

xmaxs = []

ymins = []

ymaxs = []

classes_text = []

classes = []

for index, row in group.object.iterrows():

xmins.append(row['xmin'] / width)

xmaxs.append(row['xmax'] / width)

ymins.append(row['ymin'] / height)

ymaxs.append(row['ymax'] / height)

classes_text.append(row['class'].encode('utf8'))

classes.append(class_text_to_int(row['class']))

tf_example = tf.train.Example(features=tf.train.Features(feature={

'image/height': dataset_util.int64_feature(height),

'image/width': dataset_util.int64_feature(width),

'image/filename': dataset_util.bytes_feature(filename),

'image/source_id': dataset_util.bytes_feature(filename),

'image/encoded': dataset_util.bytes_feature(encoded_jpg),

'image/format': dataset_util.bytes_feature(image_format),

'image/object/bbox/xmin': dataset_util.float_list_feature(xmins),

'image/object/bbox/xmax': dataset_util.float_list_feature(xmaxs),

'image/object/bbox/ymin': dataset_util.float_list_feature(ymins),

'image/object/bbox/ymax': dataset_util.float_list_feature(ymaxs),

'image/object/class/text': dataset_util.bytes_list_feature(classes_text),

'image/object/class/label': dataset_util.int64_list_feature(classes),

}))

return tf_example

def main(_):

writer = tf.python_io.TFRecordWriter(FLAGS.output_path)

path = os.path.join('D:\\jupyter_notebook\\API\\research\\object_detection\\images\\train\\')

examples = pd.read_csv(FLAGS.csv_input)

grouped = split(examples, 'filename')

for group in grouped:

tf_example = create_tf_example(group, path)

writer.write(tf_example.SerializeToString())

writer.close()

output_path = os.path.join(os.getcwd(), FLAGS.output_path)

print('Successfully created the TFRecords: {}'.format(output_path))

if __name__ == '__main__':

tf.app.run()

运行:

最终得到了:训练集和验证集(这里的名字之前是为了测试集,后来发现要准备验证集,就作为验证集了,反正数据多,不怕)