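Kubernetes: Building a Highly Available K8s Cluster (Complete)

Building a Highly Available K8s Cluster

- I. High-Availability Design

- II. Initial Setup

- III. Deployment

- IV. Cluster Installation

- V. Deploy the Flannel Network

- VI. Check the etcd Cluster Status

Rebuilding K8s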

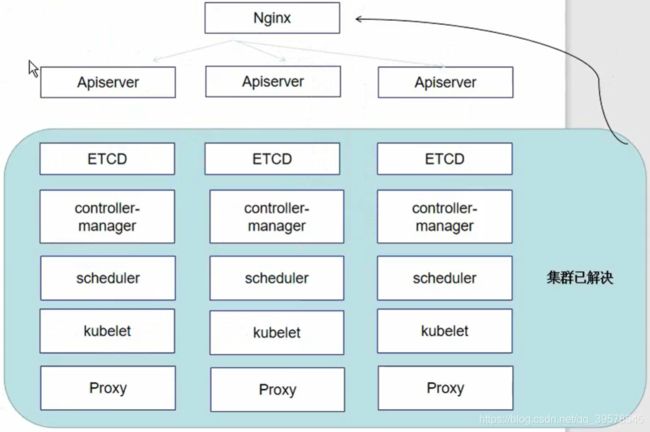

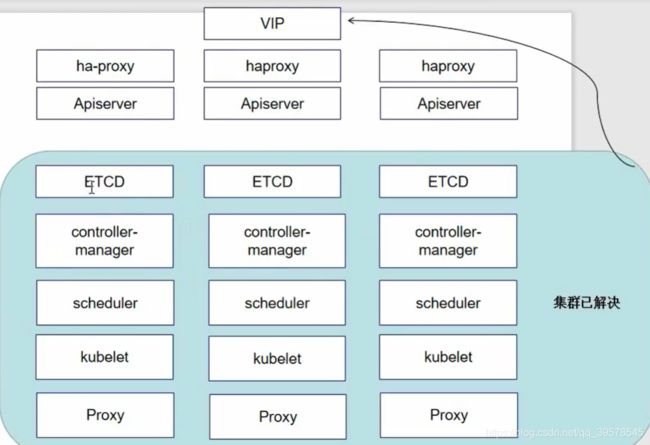

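I. High-Availability Design

Components that need to be highly available:

kube-apiserver: the single entry point for all services

etcd: shared storage for cluster state, including network configuration

controller-manager: runs the controllers

scheduler: schedules Pods onto nodes

kubelet: maintains the container lifecycle

kube-proxy: implements Service load balancing

Load balancer plus HA software: Nginx is one option; this guide uses the HAProxy and Keepalived images packaged by 睿云 (Wise2C) for its Breeze K8s deployment tool.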

II. Initial Setup

1. Set the hostnames and network

hostnamectl set-hostname k8s-master01   # on the first server

hostnamectl set-hostname k8s-master02   # on the second server

hostnamectl set-hostname k8s-master03   # on the third server

# vi /etc/sysconfig/network-scripts/ifcfg-ens33

BOOTPROTO=static

IPADDR=10.0.100.10

NETMASK=255.255.255.0

GATEWAY=10.0.100.2

ONBOOT=yes

DNS1=8.8.8.8

Second server (k8s-master02):

BOOTPROTO=static

IPADDR=10.0.100.11

NETMASK=255.255.255.0

GATEWAY=10.0.100.2

ONBOOT=yes

DNS1=8.8.8.8

Third server (k8s-master03):

BOOTPROTO=static

IPADDR=10.0.100.12

NETMASK=255.255.255.0

GATEWAY=10.0.100.2

ONBOOT=yes

DNS1=8.8.8.8

# vi /etc/hosts   (on all three servers of the HA cluster)

10.0.100.10 k8s-master01

10.0.100.11 k8s-master02

10.0.100.12 k8s-master03

10.0.100.100 k8s-vip
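Editing /etc/hosts by hand on three servers is easy to get wrong, so the entries above can also be appended by a small script. This is a minimal sketch, not part of the original procedure: the function name `add_host_entries` is hypothetical, and it skips any hostname that is already present so it can be run more than once.

```shell
#!/bin/bash
# Append "IP hostname" pairs to a hosts file, skipping hostnames already present.
# Usage: add_host_entries <hosts_file> "<ip> <name>" ...
add_host_entries() {
    local hosts_file="$1"
    shift
    local entry name
    for entry in "$@"; do
        name="${entry##* }"    # text after the last space is the hostname
        # -F: fixed string, -w: whole word, so k8s-master01 does not match k8s-master011
        if ! grep -qwF -- "$name" "$hosts_file" 2>/dev/null; then
            printf '%s\n' "$entry" >> "$hosts_file"
        fi
    done
}
```

For example, `add_host_entries /etc/hosts "10.0.100.10 k8s-master01" "10.0.100.11 k8s-master02" "10.0.100.12 k8s-master03" "10.0.100.100 k8s-vip"` would add the four entries used in this guide.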

2. Install dependencies

yum -y install wget

wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

yum install -y conntrack ntpdate ntp ipvsadm ipset jq iptables curl sysstat libseccomp wget vim net-tools git

3. Switch the firewall to iptables and flush the rules

systemctl stop firewalld && systemctl disable firewalld

yum -y install iptables-services && systemctl start iptables && systemctl enable iptables && iptables -F && service iptables save

4. Disable swap and SELinux

swapoff -a && sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

setenforce 0 && sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

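5. Tune kernel parameters for K8s

Three settings are essential: the two bridge-nf-call options (so bridged traffic passes through iptables/ip6tables) and disabling IPv6.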

cat > kubernetes.conf << EOF

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

net.ipv4.ip_forward=1

net.ipv4.tcp_tw_recycle=0

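# Avoid using swap; only fall back to it when the system is about to run out of memory

vm.swappiness=0

# Do not check whether enough physical memory is available

vm.overcommit_memory=1

# Do not panic on OOM; let the OOM killer handle it

vm.panic_on_oom=0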

fs.inotify.max_user_instances=8192

fs.inotify.max_user_watches=1048576

fs.file-max=52706963

fs.nr_open=52706963

net.ipv6.conf.all.disable_ipv6=1

net.netfilter.nf_conntrack_max=2310720

EOF

cp kubernetes.conf /etc/sysctl.d/kubernetes.conf

sysctl -p /etc/sysctl.d/kubernetes.conf
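After `sysctl -p`, it can be useful to confirm that the running kernel actually holds the values in the file. The sketch below is an illustration under assumptions: `conf_settings` and `check_sysctl` are hypothetical helper names, and a key may be reported as unavailable when the module that provides it (for example `br_netfilter` for the `net.bridge.*` keys) is not loaded yet.

```shell
#!/bin/bash
# Print the key=value pairs from a sysctl-style file, one per line,
# dropping blank lines, comment lines and any trailing "# ..." comment.
conf_settings() {
    sed -e 's/[[:space:]]*#.*$//' \
        -e '/^[[:space:]]*$/d' \
        -e 's/[[:space:]]*=[[:space:]]*/=/' "$1"
}

# Compare each setting in the file with the value in the running kernel.
check_sysctl() {
    local key want have
    conf_settings "$1" | while IFS='=' read -r key want; do
        have="$(sysctl -n "$key" 2>/dev/null)" || have="<unavailable>"
        if [ "$have" = "$want" ]; then
            echo "ok       $key=$have"
        else
            echo "MISMATCH $key: want $want, have $have"
        fi
    done
}
```

Running `check_sysctl /etc/sysctl.d/kubernetes.conf` prints one line per setting, which makes a value that was silently not applied easy to spot.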

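6. Set the system time zone

# Set the system time zone to Asia/Shanghai

timedatectl set-timezone Asia/Shanghai

# Keep the hardware clock in UTC

timedatectl set-local-rtc 0

# Restart the services that depend on the system time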

systemctl restart rsyslog

systemctl restart crond

7. Stop services the system does not need

systemctl stop postfix && systemctl disable postfix

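8. Configure rsyslogd and systemd journald

Let journald control log forwarding.

mkdir /var/log/journal # directory for persistent logs

mkdir /etc/systemd/journald.conf.d # directory for the configuration file

# Create the configuration file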

cat > /etc/systemd/journald.conf.d/99-prophet.conf << EOF

[Journal]

# Persist logs to disk

Storage=persistent

# Compress historical logs

Compress=yes

SyncIntervalSec=5m

RateLimitInterval=30s

RateLimitBurst=1000

# Maximum disk usage: 10G

SystemMaxUse=10G

# Maximum size of a single log file: 200M

SystemMaxFileSize=200M

# Keep logs for 2 weeks

MaxRetentionSec=2week

# Do not forward logs to syslog

ForwardToSyslog=no

EOF

systemctl restart systemd-journald

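9. Upgrade the kernel to 4.4

The 3.10.x kernel that ships with CentOS 7.x has bugs that make Docker and Kubernetes unstable.

rpm -Uvh http://mirror.ventraip.net.au/elrepo/elrepo/el7/x86_64/RPMS/elrepo-release-7.0-4.el7.elrepo.noarch.rpm

# After installation, check that the kernel's menuentry in /boot/grub2/grub.cfg contains an initrd16 line; if it does not, install again

yum --enablerepo=elrepo-kernel install -y kernel-lt

# Boot from the new kernel by default. The version in the title must match the kernel-lt version that was actually installed

grub2-set-default "CentOS Linux (4.4.182-1.el7.elrepo.x86_64) 7 (Core)"

Verify after rebooting: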

[root@k8s-master01 ~]# uname -r

4.4.237-1.el7.elrepo.x86_64

10. Disable NUMA

# cp /etc/default/grub{,.bak}

# vim /etc/default/grub # add the 'numa=off' parameter to the GRUB_CMDLINE_LINUX line

# cp /boot/grub2/grub.cfg{,.bak}

# grub2-mkconfig -o /boot/grub2/grub.cfg
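The manual vim edit of GRUB_CMDLINE_LINUX can also be scripted. The following is a sketch under assumptions: `add_numa_off` is a hypothetical helper, and it expects the usual double-quoted `GRUB_CMDLINE_LINUX="..."` line. It appends `numa=off` inside the quotes and does nothing if the parameter is already there.

```shell
#!/bin/bash
# Append numa=off inside the quotes of GRUB_CMDLINE_LINUX, unless already present.
# Usage: add_numa_off <grub_defaults_file>
add_numa_off() {
    local grub_file="$1"
    if grep -q '^GRUB_CMDLINE_LINUX=.*numa=off' "$grub_file"; then
        return 0    # nothing to do
    fi
    # Write to a temporary file, then copy back so the original file keeps its permissions.
    sed 's/^\(GRUB_CMDLINE_LINUX="[^"]*\)"/\1 numa=off"/' "$grub_file" > "$grub_file.tmp" \
        && cat "$grub_file.tmp" > "$grub_file" \
        && rm -f "$grub_file.tmp"
}
```

After backing up the file as shown above, `add_numa_off /etc/default/grub` would replace the vim step; `grub2-mkconfig` still has to be run afterwards.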

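III. Deployment

1. Prerequisites for enabling IPVS in kube-proxy

kube-proxy handles how Service traffic is routed to Pods. Loading the IPVS kernel modules lets it run in IPVS mode, which makes that routing more efficient.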

modprobe br_netfilter

cat > /etc/sysconfig/modules/ipvs.modules << EOF

#! /bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

EOF

# Make the script executable, load the IPVS modules, and confirm they are loaded

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack_ipv4

2. Install Docker

Install the Docker dependencies

yum install -y yum-utils device-mapper-persistent-data lvm2

Add the docker-ce repository

yum-config-manager \

--add-repo \

http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

Update the system packages and install docker-ce

yum update -y && yum install -y docker-ce

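# Reboot

reboot

# Set the default kernel again, in case yum update changed it (adjust the version to the installed kernel-lt)

grub2-set-default "CentOS Linux (4.4.182-1.el7.elrepo.x86_64) 7 (Core)"

# Create the /etc/docker directory

mkdir /etc/docker

# Configure the daemon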

cat > /etc/docker/daemon.json << EOF

{

"exec-opts":["native.cgroupdriver=systemd"],

"log-driver":"json-file",

"log-opts":{

"max-size":"100m"

}

}

EOF

# Create the directory for Docker's systemd drop-in configuration files

mkdir -p /etc/systemd/system/docker.service.d

# Restart the Docker service

systemctl daemon-reload && systemctl restart docker && systemctl enable docker

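3. Start the HAProxy and Keepalived containers on the master node

Import the scripts > run them > check the available nodes. (Alternatively, install Nginx manually and use it as the reverse proxy.)

The 睿云 (Wise2C) approach

mkdir -p /usr/local/kubernetes/install

cd !$

Copy in the images and scripts, five files in total:

haproxy.tar and keepalived.tar are images built by 睿云 (Wise2C)

kubeadm-basic.images.tar.gz contains the base images for K8s 1.15

load-images.sh and start.keep.tar.gz are the image-loading and startup scripts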

# scp /usr/local/kubernetes/install/* root@k8s-master02:/usr/local/kubernetes/install/

# scp /usr/local/kubernetes/install/* root@k8s-master03:/usr/local/kubernetes/install/

[root@k8s-master01 install]# docker load -i haproxy.tar

[root@k8s-master01 install]# docker load -i keepalived.tar

[root@k8s-master01 install]# tar -zxvf kubeadm-basic.images.tar.gz

[root@k8s-master01 install]# vim load-images.sh

#!/bin/bash

cd /usr/local/kubernetes/install/kubeadm-basic.images

ls /usr/local/kubernetes/install/kubeadm-basic.images | grep -v load-images.sh > /tmp/k8s-images.txt

for i in $( cat /tmp/k8s-images.txt )

do

docker load -i $i

done

rm -rf /tmp/k8s-images.txt

# scp load-images.sh root@k8s-master02:/usr/local/kubernetes/install/

# scp load-images.sh root@k8s-master03:/usr/local/kubernetes/install/

[root@k8s-master01 install]# chmod a+x load-images.sh

[root@k8s-master01 install]# ./load-images.sh

[root@k8s-master01 install]# tar -zxvf start.keep.tar.gz

[root@k8s-master01 install]# mv data/ /

[root@k8s-master01 install]# cd /data/lb

[root@k8s-master01 lb]# vim /data/lb/etc/haproxy.cfg

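If all three backends are listed before the cluster is deployed, HAProxy will also send requests to masters that have not joined yet, and load balancing will not work correctly.

Keep only the first backend for now.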
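Keeping a single backend can be done by commenting out the other `server` lines rather than deleting them, which makes them easy to restore later. This is a sketch under assumptions: `keep_only_backend` is a hypothetical helper, and it assumes the backends appear as `server <name> <ip>:<port>` lines (the form used for rancher01 to rancher03 later in this guide).

```shell
#!/bin/bash
# Comment out every "server" line in an HAProxy config except the named one.
# Usage: keep_only_backend <haproxy_cfg> <server_name>
keep_only_backend() {
    local cfg="$1" keep="$2"
    awk -v keep="$keep" '
        $1 == "server" && $2 != keep { print "#" $0; next }   # disable other backends
        { print }                                              # keep everything else as is
    ' "$cfg" > "$cfg.tmp" && cat "$cfg.tmp" > "$cfg" && rm -f "$cfg.tmp"
}
```

For instance, `keep_only_backend /data/lb/etc/haproxy.cfg rancher01` would leave only the first master active; removing the leading `#` characters restores the others once they have joined.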

[root@k8s-master01 lb]# vim /data/lb/start-haproxy.sh

[root@k8s-master01 lb]# sh start-haproxy.sh

[root@k8s-master01 lb]# netstat -anpt | grep 6444

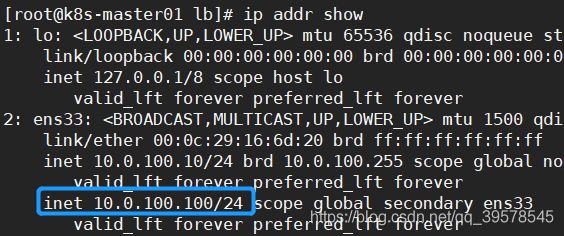

[root@k8s-master01 lb]# vim start-keepalived.sh

VIRTUAL_IP=10.0.100.100

INTERFACE=ens33

[root@k8s-master01 lb]# sh start-keepalived.sh

IV. Cluster Installation

1. Install kubeadm (on every node)

cat <<EOF >/etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

yum -y install kubeadm-1.15.1 kubectl-1.15.1 kubelet-1.15.1

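# kubelet talks to the container runtime. After a kubeadm install, the K8s components themselves run as Pods, that is, as containers underneath, so kubelet must be enabled at boot; otherwise the cluster will not come up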

systemctl enable kubelet.service

2. Initialize the first master node

cd /usr/local/kubernetes/install/

mkdir images

mv * images/

# Print the default init configuration to a YAML file to use as a template

kubeadm config print init-defaults > kubeadm-config.yaml

vim kubeadm-config.yaml

Fields to change:

localAPIEndpoint:
  advertiseAddress: 10.0.100.10

Add the HA virtual IP address and port:

controlPlaneEndpoint: "10.0.100.100:6444"
dns:
  type: CoreDNS
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: k8s.gcr.io
kind: ClusterConfiguration
kubernetesVersion: v1.15.1 # changed
networking:
  dnsDomain: cluster.local
  podSubnet: "10.244.0.0/16" # changed; matches Flannel's default network
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
featureGates:
  SupportIPVSProxyMode: true
mode: ipvs

The complete kubeadm-config.yaml:

apiVersion: kubeadm.k8s.io/v1beta2
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: abcdef.0123456789abcdef
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 10.0.100.10
  bindPort: 6443
nodeRegistration:
  criSocket: /var/run/dockershim.sock
  name: k8s-master01
  taints:
  - effect: NoSchedule
    key: node-role.kubernetes.io/master
---
apiServer:
  timeoutForControlPlane: 4m0s
apiVersion: kubeadm.k8s.io/v1beta2
certificatesDir: /etc/kubernetes/pki
clusterName: kubernetes
controlPlaneEndpoint: "10.0.100.100:6444" # changed
controllerManager: {}
dns:
  type: CoreDNS
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: k8s.gcr.io
kind: ClusterConfiguration
kubernetesVersion: v1.15.1 # changed
networking:
  dnsDomain: cluster.local
  podSubnet: "10.244.0.0/16" # changed
  serviceSubnet: 10.96.0.0/12
scheduler: {}
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1 # changed (section added)
kind: KubeProxyConfiguration
featureGates:
  SupportIPVSProxyMode: true
mode: ipvs

Initialize the cluster and save the output to kubeadm-init.log:

[root@k8s-master01 install]# kubeadm init --config=kubeadm-config.yaml --experimental-upload-certs | tee kubeadm-init.log

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

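Since version 1.14, kubeadm supports HA clusters directly, and the control-plane certificates are generated and shared automatically (via --experimental-upload-certs).

To add another control-plane (master) node, use the first join command printed in the output.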

[root@k8s-master01 install]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-master01 NotReady master 9m17s v1.15.1

3. Join the remaining nodes

Editing the files one by one on each node is tedious; simply scp /data/ to the other nodes.

[root@k8s-master01 ~]# cd /data/lb/

[root@k8s-master01 lb]# scp -r /data root@k8s-master02:/

[root@k8s-master01 lb]# scp -r /data root@k8s-master03:/

On k8s-master02 and k8s-master03:

[root@k8s-master02 install]# pwd

/usr/local/kubernetes/install

[root@k8s-master02 install]#

docker load -i haproxy.tar

docker load -i keepalived.tar

cd /data/lb/

sh start-haproxy.sh

sh start-keepalived.sh

Install kubeadm

On k8s-master02 and k8s-master03:

cat <<EOF >/etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

yum -y install kubeadm-1.15.1 kubectl-1.15.1 kubelet-1.15.1

# kubelet must be enabled at boot here as well, for the same reason as on k8s-master01

systemctl enable kubelet.service

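Note: be sure to use the first join command (the control-plane one) from the output of step 2 above; otherwise the effort is wasted.

On k8s-master02 and k8s-master03: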

You can now join any number of the control-plane node running the following command on each as root:

kubeadm join 10.0.100.100:6444 --token abcdef.0123456789abcdef \

--discovery-token-ca-cert-hash sha256:c089d41e3a6ae5012285528b60cf69bd08d573ea97b2f7fe4ef56b34bb4e453a \

--control-plane --certificate-key 8a034fae6b9d2dceb702bbe21a2a35f4bd9b15213b25cae7efa735854124b8f1

After joining, run the following three commands:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Update the HAProxy configuration file so that it lists all three masters:

[root@k8s-master01 ~]# vim /data/lb/etc/haproxy.cfg

server rancher01 10.0.100.10:6443

server rancher02 10.0.100.11:6443

server rancher03 10.0.100.12:6443

[root@k8s-master01 ~]# scp /data/lb/etc/haproxy.cfg root@k8s-master02:/data/lb/etc/haproxy.cfg

[root@k8s-master01 ~]# scp /data/lb/etc/haproxy.cfg root@k8s-master03:/data/lb/etc/haproxy.cfg

[root@k8s-master01 ~]# docker rm -f HAProxy-K8S && bash /data/lb/start-haproxy.sh

[root@k8s-master02 ~]# docker rm -f HAProxy-K8S && bash /data/lb/start-haproxy.sh

[root@k8s-master03 ~]# docker rm -f HAProxy-K8S && bash /data/lb/start-haproxy.sh

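V. Deploy the Flannel Network

NotReady -> Ready

The Flannel network plugin has not been deployed yet, so the nodes are still NotReady.

The most common problem: the Flannel image fails to pull because the registry is unreachable.

kube-flannel.yml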

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

kubectl create -f kube-flannel.yml

kubectl get pod -n kube-system

kubectl get node

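In the ~/.kube/config file (vim ~/.kube/config), the server address is the load-balanced address (the VIP). Change it to the current node's own address with port 6443.

This is the address kubectl uses to connect to the cluster; each master can simply use itself.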

[root@k8s-master02 ~]# vim .kube/config

[root@k8s-master03 ~]# vim .kube/config
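The same change can be made without opening an editor. The sketch below assumes the kubeconfig contains a single `server:` line, as in the file kubeadm generates for one cluster; `set_kubeconfig_server` is a hypothetical helper name.

```shell
#!/bin/bash
# Point the "server:" line of a kubeconfig at a given API endpoint (host:port).
# Usage: set_kubeconfig_server <kubeconfig> <host:port>
set_kubeconfig_server() {
    local kubeconfig="$1" endpoint="$2"
    # Keep the original indentation of the "server:" key and replace only its value.
    sed "s#^\([[:space:]]*server:\).*#\1 https://$endpoint#" "$kubeconfig" > "$kubeconfig.tmp" \
        && cat "$kubeconfig.tmp" > "$kubeconfig" \
        && rm -f "$kubeconfig.tmp"
}
```

On k8s-master02 this would be `set_kubeconfig_server ~/.kube/config 10.0.100.11:6443`. An alternative that avoids text editing altogether is `kubectl config set-cluster kubernetes --server=https://10.0.100.11:6443`, where `kubernetes` is the clusterName from kubeadm-config.yaml.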

VI. Check the etcd Cluster Status

Run etcdctl inside the etcd-k8s-master01 container, specifying the endpoint to connect to, the CA certificate, the server certificate and key, and the cluster-health subcommand.

kubectl -n kube-system exec etcd-k8s-master01 -- etcdctl \

--endpoints=https://10.0.100.10:2379 \

--ca-file=/etc/kubernetes/pki/etcd/ca.crt \

--cert-file=/etc/kubernetes/pki/etcd/server.crt \

--key-file=/etc/kubernetes/pki/etcd/server.key cluster-health
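The `--ca-file`, `--cert-file` and `--key-file` flags and the `cluster-health` subcommand belong to the etcdctl v2 API. With the v3 API the equivalents are `--cacert`, `--cert`, `--key` and `endpoint health`. The sketch below shows that form; the wrapper name `etcd_health_v3` is hypothetical, and it assumes the etcd image provides `sh` so that `ETCDCTL_API=3` can be set inside the container.

```shell
#!/bin/bash
# Query etcd health through the v3 API from inside an etcd static Pod.
# Usage: etcd_health_v3 <etcd_pod_name> <endpoint_url>
# The certificate paths are the ones used elsewhere in this guide.
etcd_health_v3() {
    local pod="$1" endpoint="$2"
    kubectl -n kube-system exec "$pod" -- sh -c \
        "ETCDCTL_API=3 etcdctl --endpoints=$endpoint \
         --cacert=/etc/kubernetes/pki/etcd/ca.crt \
         --cert=/etc/kubernetes/pki/etcd/server.crt \
         --key=/etc/kubernetes/pki/etcd/server.key endpoint health"
}
```

For the first master this would be called as `etcd_health_v3 etcd-k8s-master01 https://10.0.100.10:2379`.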

Shut down master01, then check from k8s-master02: the controller-manager leader is now running on master02 (it was previously on master01).

kubectl get node

kubectl get endpoints kube-controller-manager --namespace=kube-system -o yaml

kubectl get endpoints kube-scheduler --namespace=kube-system -o yaml

To add worker nodes later, use the join command recorded in the init log:

cat /usr/local/kubernetes/install/kubeadm-init.log
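Rather than reading the whole log, the worker join command can be pulled out of it. This sketch assumes the worker command is the last `kubeadm join` block in the log and that continuation lines end with a backslash; `worker_join_command` is a hypothetical helper name.

```shell
#!/bin/bash
# Print the last "kubeadm join ..." command found in a kubeadm init log,
# with backslash-continued lines joined into a single line.
# Usage: worker_join_command <kubeadm_init_log>
worker_join_command() {
    awk '
        /kubeadm join/ { cmd = ""; grab = 1 }     # a new join command starts; drop the previous one
        grab {
            line = $0
            sub(/^[[:space:]]+/, "", line)        # strip leading indentation
            cont = (line ~ /\\$/)                 # does the line end with a backslash?
            sub(/[[:space:]]*\\$/, "", line)      # remove the continuation backslash
            cmd = (cmd == "" ? line : cmd " " line)
            if (!cont) grab = 0
        }
        END { print cmd }
    ' "$1"
}
```

`worker_join_command /usr/local/kubernetes/install/kubeadm-init.log` prints a single line that can be pasted on a new worker. The bootstrap token in this guide has a 24-hour TTL; once it has expired, `kubeadm token create --print-join-command` on a master generates a fresh worker join command.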