opencv3/C++ Machine Learning - Support Vector Machine (SVM) & One-Class Classifier: ONE_CLASS

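Support vector machines (SVMs) were originally designed for binary classification. They were later extended to regression and multi-class problems.

SVM is a kernel method. A kernel function maps the feature vectors into a higher-dimensional space. In that space the SVM builds an optimal linear discriminant function, that is, an optimal hyperplane that fits the training data. The kernel mapping itself is not defined explicitly; what has to be defined instead is a distance between any two points in that hyper-space.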
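The following sketch makes the "implicit mapping" idea concrete. It is an illustration only, not OpenCV code, and the function names are hypothetical. It uses the homogeneous degree-2 polynomial kernel $K(x, y) = (x^T y)^2$ on 2-D inputs. Evaluating this kernel directly in the input space gives the same number as an explicit dot product after the feature map $\phi(x) = (x_1^2, \sqrt{2}x_1x_2, x_2^2)$.

```cpp
#include <array>
#include <cassert>  // used by the checks in the usage example
#include <cmath>
#include <cstddef>

// Hypothetical helper: explicit feature map phi(x) = (x1^2, sqrt(2)*x1*x2, x2^2) for 2-D inputs
std::array<double, 3> featureMap(const std::array<double, 2>& x) {
    return {x[0] * x[0], std::sqrt(2.0) * x[0] * x[1], x[1] * x[1]};
}

// Kernel evaluated directly in the 2-D input space: K(x, y) = (x . y)^2
double polyKernelDeg2(const std::array<double, 2>& x, const std::array<double, 2>& y) {
    double dot = x[0] * y[0] + x[1] * y[1];
    return dot * dot;
}

// The same quantity computed as a dot product in the 3-D feature space
double featureSpaceDot(const std::array<double, 2>& x, const std::array<double, 2>& y) {
    std::array<double, 3> px = featureMap(x);
    std::array<double, 3> py = featureMap(y);
    double s = 0.0;
    for (std::size_t k = 0; k < px.size(); ++k) s += px[k] * py[k];
    return s;
}
```

For x = (1, 2) and y = (3, 4), both functions return 121. The kernel version never builds the 3-D vectors. This shortcut is what lets an SVM work in very high-dimensional feature spaces, including the infinite-dimensional one behind the RBF kernel.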

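The solution is optimal when the margin is maximal. The margin is the distance between the separating hyperplane and the nearest feature vectors of the two classes (in the binary case). The feature vectors closest to the hyperplane are called support vectors; the positions of all other vectors do not affect the hyperplane (the decision function). The SVM implementation in OpenCV is based on [LibSVM].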
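Here is a minimal sketch for the linear case. It assumes a hyperplane $w^T x + b = 0$ is already known; the weights used below are made up, not trained, and the helper names are hypothetical.

- The sign of $f(x) = w^T x + b$ gives the class.
- $|f(x)| / \|w\|$ is the distance from $x$ to the hyperplane.
- When the hyperplane is scaled so that the support vectors satisfy $|f(x)| = 1$, the margin being maximized is $2 / \|w\|$.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Plain dot product of two equally sized vectors
double dot(const std::vector<double>& a, const std::vector<double>& b) {
    assert(a.size() == b.size());
    double s = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) s += a[i] * b[i];
    return s;
}

// Signed decision value f(x) = w . x + b; its sign is the predicted class
double decision(const std::vector<double>& w, double b, const std::vector<double>& x) {
    return dot(w, x) + b;
}

// Geometric distance from x to the hyperplane w . x + b = 0
double distanceToHyperplane(const std::vector<double>& w, double b, const std::vector<double>& x) {
    return std::fabs(decision(w, b, x)) / std::sqrt(dot(w, w));
}

// Margin width when the support vectors satisfy |f(x)| = 1
double marginWidth(const std::vector<double>& w) {
    return 2.0 / std::sqrt(dot(w, w));
}
```

Take w = (3, 4) and b = -5 as an example. The point (3, 4) has f = 20 and lies at distance 4 from the hyperplane. The point (1, 0.5) lies exactly on the hyperplane, and the margin width is 2/5 = 0.4.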

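Commonly used functions:

static Ptr<SVM> SVM::create(); //creates an empty model; set its parameters with the setters below before training

virtual void setType(int val) = 0; //type of SVM formulation (C_SVC, NU_SVC, ONE_CLASS, EPS_SVR, NU_SVR)

virtual int getType() const = 0;

virtual void setTermCriteria(const cv::TermCriteria &val) = 0; //termination criteria of the SVM training procedure; you can specify the tolerance and/or the maximum number of iterations

virtual void setKernel(int kernelType) = 0; //initializes the model with one of the predefined kernels

virtual Mat getSupportVectors() const = 0; //retrieves all the support vectors as a floating-point matrix, one support vector per row

static ParamGrid SVM::getDefaultGrid(int param_id); //generates a grid for the given SVM parameter

The SVM kernel types are: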

- Linear kernel: SVM::LINEAR, $K(x_i, x_j) = x_i^T x_j$.

- Polynomial kernel: SVM::POLY, $K(x_i, x_j) = (\gamma x_i^T x_j + coef0)^{degree}, \gamma > 0$.

- Radial basis function kernel: SVM::RBF, $K(x_i, x_j) = e^{-\gamma \|x_i - x_j\|^2}, \gamma > 0$.

- Sigmoid kernel: SVM::SIGMOID, $K(x_i, x_j) = \tanh(\gamma x_i^T x_j + coef0)$.

- Exponential Chi2 kernel: SVM::CHI2, $K(x_i, x_j) = e^{-\gamma \chi^2(x_i, x_j)}, \chi^2(x_i, x_j) = (x_i - x_j)^2 / (x_i + x_j), \gamma > 0$.

- Histogram intersection kernel: SVM::INTER, $K(x_i, x_j) = \min(x_i, x_j)$.
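The six formulas above can be written out directly. This is a plain C++ sketch of the formulas as listed, not OpenCV's internal implementation. Two interpretation choices are assumptions:

- For CHI2 and INTER, the per-component terms are summed over the feature vector.
- CHI2 components where $x_i + x_j = 0$ are skipped to avoid dividing by zero.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

using Vec = std::vector<double>;

// x^T y
double dotProduct(const Vec& x, const Vec& y) {
    assert(x.size() == y.size());
    double s = 0.0;
    for (std::size_t k = 0; k < x.size(); ++k) s += x[k] * y[k];
    return s;
}

// SVM::LINEAR: K(x, y) = x^T y
double linearKernel(const Vec& x, const Vec& y) { return dotProduct(x, y); }

// SVM::POLY: K(x, y) = (gamma * x^T y + coef0)^degree
double polyKernel(const Vec& x, const Vec& y, double gamma, double coef0, double degree) {
    return std::pow(gamma * dotProduct(x, y) + coef0, degree);
}

// SVM::RBF: K(x, y) = exp(-gamma * ||x - y||^2)
double rbfKernel(const Vec& x, const Vec& y, double gamma) {
    assert(x.size() == y.size());
    double sq = 0.0;
    for (std::size_t k = 0; k < x.size(); ++k) {
        double d = x[k] - y[k];
        sq += d * d;
    }
    return std::exp(-gamma * sq);
}

// SVM::SIGMOID: K(x, y) = tanh(gamma * x^T y + coef0)
double sigmoidKernel(const Vec& x, const Vec& y, double gamma, double coef0) {
    return std::tanh(gamma * dotProduct(x, y) + coef0);
}

// SVM::CHI2: K(x, y) = exp(-gamma * chi2), chi2 = sum_k (x_k - y_k)^2 / (x_k + y_k)
double chi2Kernel(const Vec& x, const Vec& y, double gamma) {
    assert(x.size() == y.size());
    double chi2 = 0.0;
    for (std::size_t k = 0; k < x.size(); ++k) {
        double s = x[k] + y[k];
        if (s != 0.0) {  // skip components whose sum is zero (assumption)
            double d = x[k] - y[k];
            chi2 += d * d / s;
        }
    }
    return std::exp(-gamma * chi2);
}

// SVM::INTER: K(x, y) = sum_k min(x_k, y_k)
double interKernel(const Vec& x, const Vec& y) {
    assert(x.size() == y.size());
    double s = 0.0;
    for (std::size_t k = 0; k < x.size(); ++k) s += std::min(x[k], y[k]);
    return s;
}
```

Example values for x = (1, 2) and y = (3, 4):

- LINEAR gives 11.
- POLY with gamma = 1, coef0 = 1, degree = 2 gives 144.
- INTER gives 3.
- RBF with identical inputs returns its maximum value, 1.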

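gamma - parameter γ of the kernel function (POLY / RBF / SIGMOID / CHI2).

coef0 - parameter coef0 of the kernel function (POLY / SIGMOID).

Cvalue - parameter C of the SVM optimization problem (C_SVC / EPS_SVR / NU_SVR).

nu - parameter ν of the SVM optimization problem (NU_SVC / ONE_CLASS / NU_SVR).

p - parameter ε of the SVM optimization problem (EPS_SVR).

classWeights - optional weights in the C_SVC problem, assigned to particular classes. They are multiplied by C, so the parameter C of class #i becomes classWeights(i) * C. These weights therefore set a different misclassification penalty for each class: the larger the weight, the larger the penalty for misclassifying data of that class.

termCrit - termination criterion of the iterative SVM training procedure, which solves a partial case of the constrained quadratic optimization problem. You can specify a tolerance and/or the maximum number of iterations.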
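Two of the items above reduce to simple arithmetic: classWeights and the parameter grids from SVM::getDefaultGrid. The sketch below is plain C++ with hypothetical helper names (effectivePenalties, logGrid), not OpenCV API.

- The first function computes the per-class penalty classWeights(i) * C used by C_SVC.
- The second enumerates a logarithmic grid of the form described for ParamGrid: minVal, minVal*logStep, minVal*logStep², and so on, stopping before maxVal. SVM::trainAuto searches over grids of this kind. Treat the exact stopping rule as an assumption to check against your OpenCV version.

```cpp
#include <cassert>
#include <vector>

// Hypothetical helper: per-class penalty in C_SVC, C_i = classWeights[i] * C
std::vector<double> effectivePenalties(double C, const std::vector<double>& classWeights) {
    std::vector<double> out;
    out.reserve(classWeights.size());
    for (double w : classWeights) out.push_back(w * C);
    return out;
}

// Hypothetical helper: logarithmic grid minVal, minVal*logStep, ..., kept strictly below maxVal
std::vector<double> logGrid(double minVal, double maxVal, double logStep) {
    assert(minVal > 0.0 && logStep > 1.0);  // logStep <= 1 would never terminate
    std::vector<double> values;
    for (double v = minVal; v < maxVal; v *= logStep) values.push_back(v);
    return values;
}
```

For example, C = 10 with classWeights = (1, 2.5) gives per-class penalties 10 and 25. logGrid(0.1, 500, 5) yields 0.1, 0.5, 2.5, 12.5, 62.5, 312.5.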

Example

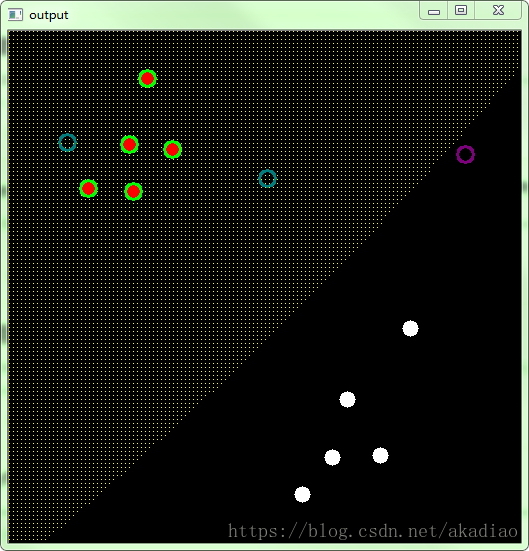

Classification with SVM

#include model = SVM::create();

model->setType(SVM::C_SVC);

model->setKernel(SVM::POLY);

model->setDegree(1.0);

model->setTermCriteria(TermCriteria(TermCriteria::MAX_ITER,100,1e-6));

model->train(trainDataMat,ROW_SAMPLE,labelsMat);

//对每个像素点进行分类

Mat showImg = Mat::zeros(512, 512, CV_8UC3);

for (int i=0; ifor (int j=0; jfloat>(1, 2) << j, i);

float response = model->predict(sampleMat);

for (int label = 0; label < sampleSum; label++)

{

if (response == labels[label])

{

RNG rng1(labels[label]);

showImg.at(i, j) = Vec3b(rng1.uniform(0,255),rng1.uniform(0,255),rng1.uniform(0,255));

}

RNG rng2(3-labels[label]);

circle(showImg,Point(trainData[label][0],trainData[label][1]),8,Scalar(rng2.uniform(0,255),rng2.uniform(0,255),rng2.uniform(0,255)),-1,-1);

}

}

}

//绘制出支持向量

Mat supportVectors = model->getSupportVectors();

for (int i = 0; i < supportVectors.rows; ++i)

{

const float* sv = supportVectors.ptr<float>(i);

circle(showImg,Point(sv[0],sv[1]),8,Scalar(0,255,0),2,8);

}

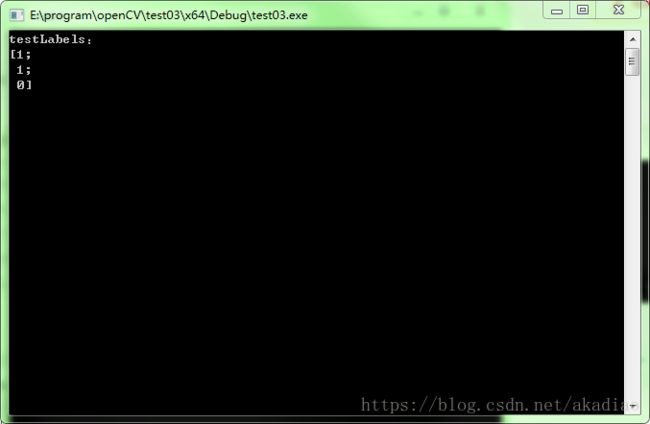

//测试

Mat testLabels;

float testData[3][2] = {

{

456,123},{

258,147},{

58,111}};

Mat testDataMat(3,2,CV_32FC1,testData);

model->predict(testDataMat, testLabels);

std::cout <<"testLabels:\n"<std::endl;

imshow("output", showImg);

waitKey();

return 0;

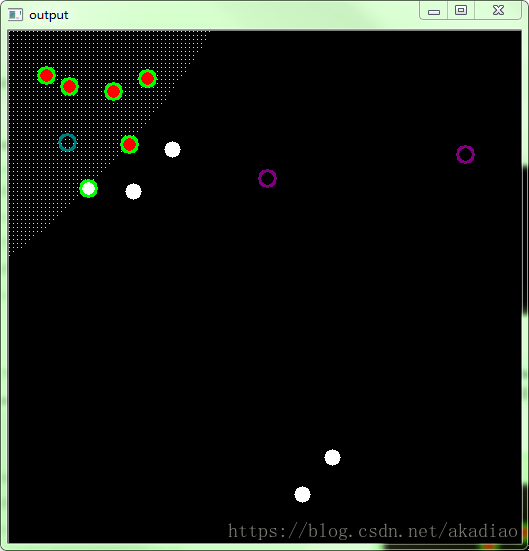

} 单分类器 : ONE_CLASS

单分类器, 所有的训练数据都来自同一类,SVM建立一个边界,将类与剩余的特征空间分开。

#include model = SVM::create();

model->setType(SVM::ONE_CLASS);

model->setKernel(SVM::POLY);

model->setDegree(3);

model->setGamma(1);

model->setC(1);

model->setP(0);

model->setNu(0.5);

model->setTermCriteria(TermCriteria(TermCriteria::MAX_ITER,100,1e-6));

model->train(trainDataMat,ROW_SAMPLE,labelsMat);

//对每个像素点进行分类

Mat showImg = Mat::zeros(512, 512, CV_8UC3);

for (int i=0; i4)

{

for (int j=0; j4)

{

Mat sampleMat = (Mat_<float>(1, 2) << j, i);

float response = model->predict(sampleMat);

if (response == 0)

showImg.at(i, j) = Vec3b(0, 255, 255);

}

}

for (int i = 0; i < sampleSum; i++)

{

Mat trainMat = (Mat_<float>(1, 2) << trainData[i][0], trainData[i][1]);

float trainResponse = model->predict(trainMat);

Scalar color;

if (trainResponse == 0)

color = Vec3b(0, 0, 255);

else

color = Vec3b(255, 255, 255);

circle(showImg,Point(trainData[i][0],trainData[i][1]),8,color,-1,-1);

}

//绘制出支持向量

Mat supportVectors = model->getSupportVectors();

for (int i = 0; i < supportVectors.rows; ++i)

{

const float* sv = supportVectors.ptr<float>(i);

circle(showImg,Point(sv[0],sv[1]),8,Scalar(0,255,0),2,8);

}

//测试

Mat testLabels;

const int sumtest = 3;

float testData[sumtest][2] = {

{

456,123},{

258,147},{

58,111}};

Mat testDataMat(3,2,CV_32FC1,testData);

model->predict(testDataMat, testLabels);

for (int i = 0; i < sumtest; i++)

{

Mat testMat = (Mat_<float>(1, 2) << testData[i][0], testData[i][1]);

float testResponse = model->predict(testMat);

Scalar color;

if (testResponse == 0)

color = Vec3b(125, 125, 0);

else

color = Vec3b(125, 0, 125);

circle(showImg, Point(testData[i][0], testData[i][1]), 8, color, 2, -1);

}

std::cout <<"testLabels:\n"<std::endl;

imshow("output", showImg);

waitKey();

return 0;

} float trainData[sampleSum][2] = {

{79,157},{163,118},{138,47},{120,113},{124,160},{104,60},{37,44},{293,463},{323,426},{401,297}}; float trainData[sampleSum][2] = {

{79,157},{163,118},{138,47},{120,113},{124,160},{104,60},{37,44},{60,55},{293,463},{323,426}};相关链接:

1. OpenCV3: Support Vector Machines

2. LIBSVM: A Library for Support Vector Machines