Environment

First, install the matplotlib library:

sudo pip install matplotlib

Download the TensorFlow 1.4.1 wheel from:

https://github.com/lhelontra/tensorflow-on-arm/releases

Install it:

sudo pip uninstall tensorflow

sudo pip install --upgrade tensorflow-1.4.1-cp27-none-linux_armv7l.whl
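The wheel filename encodes which interpreter and platform the package targets, so it is worth reading before running pip. The sketch below splits the name used above into its tags, following the standard wheel naming pattern (name-version-pythontag-abitag-platformtag). The helper name parse_wheel_filename is hypothetical and is not part of pip or TensorFlow.

```python
def parse_wheel_filename(filename):
    """Split a wheel filename into its dash-separated tags.

    Hypothetical helper for illustration. Assumes the common five-part form
    name-version-pythontag-abitag-platformtag.whl with no optional build tag.
    """
    if not filename.endswith('.whl'):
        raise ValueError('not a wheel file: %s' % filename)
    parts = filename[:-len('.whl')].split('-')
    if len(parts) != 5:
        raise ValueError('unexpected wheel name: %s' % filename)
    keys = ('name', 'version', 'python_tag', 'abi_tag', 'platform_tag')
    return dict(zip(keys, parts))


info = parse_wheel_filename('tensorflow-1.4.1-cp27-none-linux_armv7l.whl')
# cp27 means CPython 2.7; linux_armv7l is the 32-bit ARMv7 Linux platform tag.
print('%s %s' % (info['python_tag'], info['platform_tag']))
```

If the Python tag does not match the interpreter behind your pip (for example, a cp27 wheel with a Python 3 pip), pip will refuse to install the file, so pick the release asset that matches your setup.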

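Preparing the models

- Download the models API provided by TensorFlow and unpack it. Here the unpacked directory is models-master. Download path:

https://github.com/tensorflow/models/tree/master/research/object_detection/models

- Download pre-trained models and put them in the object_detection/models directory under models-master from the previous step. Download path:

https://github.com/tensorflow/models/blob/master/research/object_detection/g3doc/detection_model_zoo.md

Here we download a few typical ones: ssd_mobilenet_v1_coco_2017_11_17, faster_rcnn_resnet101_coco and mask_rcnn_inception_v2_coco.

Note: there are many networks for object detection, such as Faster R-CNN, SSD and YOLO. Compared along different dimensions, each has its own strengths.

Since the Raspberry Pi has limited computing power, we start with SSD, the network most optimized for speed, use the classic Faster R-CNN for comparison, and add a fancier network that can display masks.

In fact, YOLOv3 has just been released and is faster than SSD, while Faster R-CNN runs much slower by comparison. All of them can be tried and compared later; for now, the goal is to get a baseline system working.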

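Installing and configuring Protobuf

- Overview

Protobuf (Protocol Buffers) is a language-neutral, platform-neutral, extensible format developed by Google for serializing structured data. It is compact and efficient, which makes it well suited to data storage and to RPC data exchange, for example in communication protocols. It provides APIs for several languages, including C++, Java and Python.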
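Part of what makes the format compact is that integers are stored as base-128 "varints": each byte carries 7 bits of the value, and the high bit signals whether more bytes follow. The sketch below is a plain-Python illustration of that encoding for non-negative integers only. It is not the protobuf library's own code, and the function names are chosen here for illustration.

```python
def encode_varint(value):
    """Encode a non-negative integer as a base-128 varint."""
    if value < 0:
        raise ValueError('this sketch only handles non-negative integers')
    out = bytearray()
    while True:
        low7 = value & 0x7F
        value >>= 7
        if value:
            out.append(low7 | 0x80)  # high bit set: more bytes follow
        else:
            out.append(low7)         # high bit clear: last byte
            return bytes(out)


def decode_varint(data):
    """Decode a single varint from the start of a byte string."""
    result = 0
    shift = 0
    for byte in bytearray(data):
        result |= (byte & 0x7F) << shift
        if not byte & 0x80:
            return result
        shift += 7
    raise ValueError('truncated varint')


encoded = encode_varint(300)
print(repr(encoded), decode_varint(encoded))
```

Small values fit in a single byte, while 300 needs two (0xAC 0x02), which is why messages with many small integer fields stay small on disk and on the wire.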

Download: https://github.com/google/protobuf/releases

Here we download the latest version at the time, protobuf-all-3.5.1.tar.gz.

- Installation

tar -xf protobuf-all-3.5.1.tar.gz

cd protobuf-3.5.1

./configure

make

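make check   # verifies the build; takes a very long time and can be skipped

sudo make install

sudo ldconfig   # refreshes the shared-library cache; otherwise the libraries may not be found

If you did run make check, the result looks like this. All test cases passed, which means the build is correct: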

============================================================================

Testsuite summary for Protocol Buffers 3.5.1

============================================================================

# TOTAL: 7

# PASS: 7

# SKIP: 0

# XFAIL: 0

# FAIL: 0

# XPASS: 0

# ERROR: 0

============================================================================

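- Configuration

The purpose of this step is to compile the .proto definitions into Python modules so that they can be imported from Python scripts. Go to the models-master/research directory and run:

protoc object_detection/protos/*.proto --python_out=.

When it finishes, you will see many new *.py files in the object_detection/protos/ directory.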
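A quick way to confirm the step worked is to check that every .proto file has a generated counterpart, since protoc's Python output for foo.proto is named foo_pb2.py. The sketch below does that check. The function name protos_missing_pb2 is made up for this example, and the path in the usage section assumes you run it from models-master/research.

```python
import glob
import os


def protos_missing_pb2(proto_dir):
    """Return the .proto files in proto_dir that have no matching *_pb2.py."""
    missing = []
    for proto_path in sorted(glob.glob(os.path.join(proto_dir, '*.proto'))):
        stem = os.path.splitext(proto_path)[0]
        if not os.path.exists(stem + '_pb2.py'):
            missing.append(os.path.basename(proto_path))
    return missing


if __name__ == '__main__':
    # Relative to models-master/research, matching the protoc command above.
    print(protos_missing_pb2('object_detection/protos'))
```

An empty list means every definition was compiled. Any names that are printed point at files protoc skipped or failed on.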

Code

The code is simple because the core functionality already exists; we only call the API to put it together.

# Written for Python 2.7 and TensorFlow 1.4.1, as installed above.
from __future__ import print_function

import os
import sys
import tarfile
import time

import cv2
import numpy as np
import tensorflow as tf

# detect.py lives in models-master/research/object_detection/models, so add
# models-master/research to the path to make object_detection importable.
sys.path.append("../..")

from object_detection.utils import label_map_util
from object_detection.utils import visualization_utils as vis_util

# Which model to use.
MODEL_NAME = 'ssd_mobilenet_v1_coco_2017_11_17'
#MODEL_NAME = 'faster_rcnn_resnet101_coco_11_06_2017'
#MODEL_NAME = 'ssd_inception_v2_coco_11_06_2017'
MODEL_FILE = MODEL_NAME + '.tar.gz'

# Path to the frozen detection graph. This is the actual model used for object detection.
PATH_TO_CKPT = MODEL_NAME + '/frozen_inference_graph.pb'

# Label map used to attach the correct label to each box.
PATH_TO_LABELS = os.path.join('/home/yinan/object_detect/models-master/research/object_detection/data', 'mscoco_label_map.pbtxt')

NUM_CLASSES = 90

# Extract the frozen graph from the downloaded archive.
# time.time() gives wall-clock time; time.clock() would report CPU time on Linux.
start = time.time()
tar_file = tarfile.open(MODEL_FILE)
for member in tar_file.getmembers():
    file_name = os.path.basename(member.name)
    if 'frozen_inference_graph.pb' in file_name:
        tar_file.extract(member, os.getcwd())
tar_file.close()
end = time.time()
print('load the model', end - start)

# Load the frozen graph into memory.
detection_graph = tf.Graph()
with detection_graph.as_default():
    od_graph_def = tf.GraphDef()
    with tf.gfile.GFile(PATH_TO_CKPT, 'rb') as fid:
        serialized_graph = fid.read()
        od_graph_def.ParseFromString(serialized_graph)
        tf.import_graph_def(od_graph_def, name='')

# Map class ids to human-readable names.
label_map = label_map_util.load_labelmap(PATH_TO_LABELS)
categories = label_map_util.convert_label_map_to_categories(label_map, max_num_classes=NUM_CLASSES, use_display_name=True)
category_index = label_map_util.create_category_index(categories)

cap = cv2.VideoCapture(0)

with detection_graph.as_default():
    with tf.Session(graph=detection_graph) as sess:
        # Write the graph to logs/ so it can be inspected in TensorBoard.
        writer = tf.summary.FileWriter("logs/", sess.graph)

        # Look up the input and output tensors once, outside the frame loop.
        image_tensor = detection_graph.get_tensor_by_name('image_tensor:0')
        # Each box represents a part of the image where a particular object was detected.
        detection_boxes = detection_graph.get_tensor_by_name('detection_boxes:0')
        # Each score is the confidence for one detected object. It is shown
        # on the result image together with the class label.
        detection_scores = detection_graph.get_tensor_by_name('detection_scores:0')
        detection_classes = detection_graph.get_tensor_by_name('detection_classes:0')
        num_detections_tensor = detection_graph.get_tensor_by_name('num_detections:0')

        while True:
            start = time.time()
            ret, frame = cap.read()
            if not ret:
                # No frame could be read from the camera.
                break
            image_np = frame
            # The model expects images of shape [1, None, None, 3].
            image_np_expanded = np.expand_dims(image_np, axis=0)
            # Actual detection.
            (boxes, scores, classes, num_detections) = sess.run(
                [detection_boxes, detection_scores, detection_classes, num_detections_tensor],
                feed_dict={image_tensor: image_np_expanded})
            # Draw the detected boxes and labels onto the frame.
            vis_util.visualize_boxes_and_labels_on_image_array(
                image_np,
                np.squeeze(boxes),
                np.squeeze(classes).astype(np.int32),
                np.squeeze(scores),
                category_index,
                use_normalized_coordinates=True,
                line_thickness=6)
            end = time.time()
            print('One frame detect take time:', end - start)
            cv2.imshow("capture", image_np)
            # Press q in the preview window to quit.
            if cv2.waitKey(1) & 0xFF == ord('q'):
                break

        writer.close()

cap.release()
cv2.destroyAllWindows()

Save it as detect.py in the models-master/research/object_detection/models directory.
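The script only draws the results, but the boxes returned by the model can also be used directly. With use_normalized_coordinates=True, each box is [ymin, xmin, ymax, xmax] as fractions of the image size. The sketch below converts such boxes to pixel rectangles and drops low-confidence detections. The helper name to_pixel_boxes and the 0.5 default threshold are assumptions made for this example, not part of the Object Detection API.

```python
def to_pixel_boxes(boxes, scores, image_width, image_height, min_score=0.5):
    """Convert normalized [ymin, xmin, ymax, xmax] boxes to pixel rectangles.

    Returns (left, top, right, bottom, score) tuples for detections whose
    score is at least min_score. Hypothetical helper for illustration.
    """
    results = []
    for (ymin, xmin, ymax, xmax), score in zip(boxes, scores):
        if score < min_score:
            continue
        results.append((int(xmin * image_width), int(ymin * image_height),
                        int(xmax * image_width), int(ymax * image_height),
                        score))
    return results


# Two made-up detections on a 640x480 frame; the second is below the threshold.
example_boxes = [[0.25, 0.5, 0.75, 1.0], [0.0, 0.0, 0.5, 0.5]]
example_scores = [0.9, 0.3]
print(to_pixel_boxes(example_boxes, example_scores, 640, 480))
```

Inside detect.py, the inputs would be np.squeeze(boxes).tolist() and np.squeeze(scores).tolist(), with the width and height taken from frame.shape.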

Running

Commands:

sudo chmod 666 /dev/video0

python detect.py
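The chmod command is needed because the script opens the camera through /dev/video0 as a normal user. If the preview window never appears, a small check like the one below can tell a permissions problem apart from a missing device. It is only a sketch: the function name camera_accessible is made up here, and the device path is taken from the command above.

```python
import os


def camera_accessible(device='/dev/video0'):
    """Return True if the device node exists and is readable and writable."""
    return os.path.exists(device) and os.access(device, os.R_OK | os.W_OK)


if __name__ == '__main__':
    if camera_accessible():
        print('camera device is accessible')
    else:
        print('cannot access /dev/video0: check that the camera is attached '
              'and that the permissions were changed')
```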

Results

SSD model

As the figure below shows, the SSD model took 8 s to load, and detection takes roughly 5 s per frame:

image.png

P.S. Why was the room recognized as a book...

Faster R-CNN model

Faster R-CNN took 83 s to load the model and then ran out of memory, so it could not run.

Mask R-CNN model

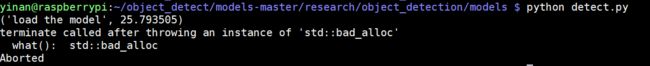

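The mask model can outline object contours, which looks fancier. Loading the model took 25 s, but it ran into a problem:

This will be investigated next.

CPU usage was 100% and memory usage was a little over 60%.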
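Since memory is the limiting factor for the larger models, it can help to log how much RAM is in use before and after loading a graph. On Linux this can be read from /proc/meminfo. The sketch below parses that file and computes the used percentage from the MemTotal and MemAvailable fields. The function names are chosen for this example, and MemAvailable is assumed to be present, which is the case on reasonably recent kernels.

```python
def parse_meminfo(text):
    """Parse /proc/meminfo-style text into {field: value} (most values in kB)."""
    info = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(':')
        fields = rest.split()
        if sep and fields and fields[0].isdigit():
            info[name.strip()] = int(fields[0])
    return info


def memory_used_percent(info):
    """Percentage of RAM in use, based on MemTotal and MemAvailable."""
    return 100.0 * (info['MemTotal'] - info['MemAvailable']) / info['MemTotal']


if __name__ == '__main__':
    try:
        with open('/proc/meminfo') as f:
            print('memory used: %.1f%%' % memory_used_percent(parse_meminfo(f.read())))
    except (IOError, KeyError):
        print('/proc/meminfo is not available or lacks MemAvailable')
```

Calling this right before tf.import_graph_def and again afterwards would show how much each model costs, which makes it easier to tell in advance whether a model such as Faster R-CNN has any chance of fitting.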