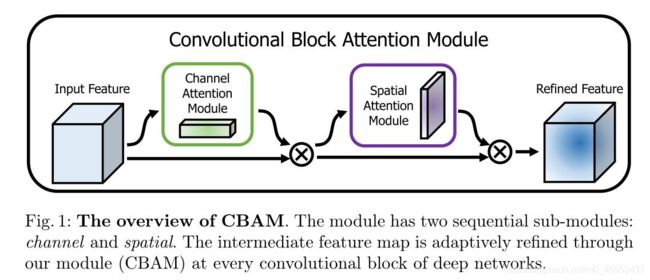

Attention Mechanisms (Channel Attention, Spatial Attention, CBAM, SELayer)

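Reference: Attention Mechanisms

Reference: Important Structures in Deep Convolutional Neural Networks: Channel Attention and Spatial Attention Modules

Reference: Attention Mechanisms for Convolutional Neural Networks: CBAM: Convolutional Block Attention Module

Reference: moskomule/senet.pytorch

Reference: Squeeze-and-Excitation Networks

Reference: CBAM: Convolutional Block Attention Module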

Spatial attention:

    import torch
    from torch import nn


    class SpatialAttention(nn.Module):
        """Builds a (B, 1, H, W) attention mask from channel-wise mean and max."""

        def __init__(self, kernel_size=7):
            super().__init__()
            assert kernel_size in (3, 7), 'kernel size must be 3 or 7'
            # Padding that preserves H and W: kernel 7 -> 3, kernel 3 -> 1.
            padding = 3 if kernel_size == 7 else 1
            self.conv1 = nn.Conv2d(2, 1, kernel_size, padding=padding, bias=False)
            self.sigmoid = nn.Sigmoid()

        def forward(self, x):
            # Collapse the channel dimension into two (B, 1, H, W) descriptors.
            avg_out = torch.mean(x, dim=1, keepdim=True)
            max_out, _ = torch.max(x, dim=1, keepdim=True)
            x = torch.cat([avg_out, max_out], dim=1)  # (B, 2, H, W)
            x = self.conv1(x)                         # (B, 1, H, W)
            return self.sigmoid(x)


    if __name__ == '__main__':
        SA = SpatialAttention(7)
        data_in = torch.randn(8, 32, 300, 300)
        data_out = SA(data_in)
        print(data_in.shape)   # torch.Size([8, 32, 300, 300])
        print(data_out.shape)  # torch.Size([8, 1, 300, 300])

Console output:


    torch.Size([8, 32, 300, 300])
    torch.Size([8, 1, 300, 300])
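
The module above only returns the attention mask; it does not rescale the features itself. A minimal sketch of how such a mask is typically applied, by broadcasting it over the channel dimension, might look like the following. The tensor sizes and the names `features`, `mask`, and `refined` are illustrative assumptions, and the operations are inlined so the snippet runs on its own.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Illustrative feature map: batch 2, 8 channels, 16x16 spatial size (assumed sizes).
features = torch.randn(2, 8, 16, 16)

# Same steps as SpatialAttention.forward, written inline.
avg_out = features.mean(dim=1, keepdim=True)      # (2, 1, 16, 16)
max_out, _ = features.max(dim=1, keepdim=True)    # (2, 1, 16, 16)
conv = nn.Conv2d(2, 1, kernel_size=7, padding=3, bias=False)
mask = torch.sigmoid(conv(torch.cat([avg_out, max_out], dim=1)))

# The single-channel mask broadcasts across all 8 channels.
refined = features * mask

print(mask.shape)     # torch.Size([2, 1, 16, 16])
print(refined.shape)  # torch.Size([2, 8, 16, 16])
```

Because the mask has one channel, every channel at a given spatial position is scaled by the same factor.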


Channel attention:

Code experiment:

    import torch
    from torch import nn


    class ChannelAttention(nn.Module):
        """Builds a (B, C, 1, 1) attention vector from global avg and max pooling."""

        def __init__(self, in_planes, ratio=16):
            super().__init__()
            self.avg_pool = nn.AdaptiveAvgPool2d(1)
            self.max_pool = nn.AdaptiveMaxPool2d(1)
            # Shared bottleneck MLP, implemented with 1x1 convolutions.
            self.fc1 = nn.Conv2d(in_planes, in_planes // ratio, 1, bias=False)
            self.relu1 = nn.ReLU()
            self.fc2 = nn.Conv2d(in_planes // ratio, in_planes, 1, bias=False)
            self.sigmoid = nn.Sigmoid()

        def forward(self, x):
            # Both pooled descriptors pass through the same fc1 -> ReLU -> fc2.
            avg_out = self.fc2(self.relu1(self.fc1(self.avg_pool(x))))
            max_out = self.fc2(self.relu1(self.fc1(self.max_pool(x))))
            out = avg_out + max_out
            return self.sigmoid(out)


    if __name__ == '__main__':
        CA = ChannelAttention(32)
        data_in = torch.randn(8, 32, 300, 300)
        data_out = CA(data_in)
        print(data_in.shape)   # torch.Size([8, 32, 300, 300])
        print(data_out.shape)  # torch.Size([8, 32, 1, 1])

Console output:


    torch.Size([8, 32, 300, 300])
    torch.Size([8, 32, 1, 1])
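
The bottleneck width in `ChannelAttention` is `in_planes // ratio`, so with 32 input channels and the default ratio of 16 the hidden layer has only 2 channels. The small helper below is a hypothetical name introduced here for illustration, not part of the original code. It works out the hidden width and the weight count of the two bias-free 1x1 convolutions, and it flags the case where integer division would leave the bottleneck with zero channels.

```python
def channel_attention_params(in_planes, ratio=16):
    """Return (hidden_channels, weight_count) for the shared bottleneck MLP.

    Hypothetical helper for illustration; mirrors fc1 and fc2 in ChannelAttention.
    """
    hidden = in_planes // ratio
    if hidden == 0:
        raise ValueError(
            f"in_planes={in_planes} is smaller than ratio={ratio}; "
            "the bottleneck would have zero channels"
        )
    # fc1 has in_planes * hidden weights, fc2 has hidden * in_planes (no biases).
    return hidden, 2 * in_planes * hidden


print(channel_attention_params(32))   # (2, 128)
print(channel_attention_params(256))  # (16, 8192)
```

When the channel count is small, a smaller `ratio` may be worth considering so that the bottleneck keeps a useful width.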


CBAM:

    import torch
    from torch import nn


    class ChannelAttention(nn.Module):
        """Builds a (B, C, 1, 1) attention vector from global avg and max pooling."""

        def __init__(self, in_planes, ratio=16):
            super().__init__()
            self.avg_pool = nn.AdaptiveAvgPool2d(1)
            self.max_pool = nn.AdaptiveMaxPool2d(1)
            # Shared bottleneck MLP, implemented with 1x1 convolutions.
            self.fc1 = nn.Conv2d(in_planes, in_planes // ratio, 1, bias=False)
            self.relu1 = nn.ReLU()
            self.fc2 = nn.Conv2d(in_planes // ratio, in_planes, 1, bias=False)
            self.sigmoid = nn.Sigmoid()

        def forward(self, x):
            avg_out = self.fc2(self.relu1(self.fc1(self.avg_pool(x))))
            max_out = self.fc2(self.relu1(self.fc1(self.max_pool(x))))
            out = avg_out + max_out
            return self.sigmoid(out)


    class SpatialAttention(nn.Module):
        """Builds a (B, 1, H, W) attention mask from channel-wise mean and max."""

        def __init__(self, kernel_size=7):
            super().__init__()
            assert kernel_size in (3, 7), 'kernel size must be 3 or 7'
            # Padding that preserves H and W: kernel 7 -> 3, kernel 3 -> 1.
            padding = 3 if kernel_size == 7 else 1
            self.conv1 = nn.Conv2d(2, 1, kernel_size, padding=padding, bias=False)
            self.sigmoid = nn.Sigmoid()

        def forward(self, x):
            avg_out = torch.mean(x, dim=1, keepdim=True)
            max_out, _ = torch.max(x, dim=1, keepdim=True)
            x = torch.cat([avg_out, max_out], dim=1)
            x = self.conv1(x)
            return self.sigmoid(x)


    class CBAM(nn.Module):
        """Applies channel attention first, then spatial attention."""

        def __init__(self, in_planes, ratio=16, kernel_size=7):
            super().__init__()
            self.ca = ChannelAttention(in_planes, ratio)
            self.sa = SpatialAttention(kernel_size)

        def forward(self, x):
            out = x * self.ca(x)         # rescale each channel
            result = out * self.sa(out)  # rescale each spatial position
            return result


    if __name__ == '__main__':
        print('testing ChannelAttention'.center(100, '-'))
        torch.manual_seed(seed=20200910)
        CA = ChannelAttention(32)
        data_in = torch.randn(8, 32, 300, 300)
        data_out = CA(data_in)
        print(data_in.shape)   # torch.Size([8, 32, 300, 300])
        print(data_out.shape)  # torch.Size([8, 32, 1, 1])

    if __name__ == '__main__':
        print('testing SpatialAttention'.center(100, '-'))
        torch.manual_seed(seed=20200910)
        SA = SpatialAttention(7)
        data_in = torch.randn(8, 32, 300, 300)
        data_out = SA(data_in)
        print(data_in.shape)   # torch.Size([8, 32, 300, 300])
        print(data_out.shape)  # torch.Size([8, 1, 300, 300])

    if __name__ == '__main__':
        print('testing CBAM'.center(100, '-'))
        torch.manual_seed(seed=20200910)
        cbam = CBAM(32, 16, 7)
        data_in = torch.randn(8, 32, 300, 300)
        data_out = cbam(data_in)
        print(data_in.shape)   # torch.Size([8, 32, 300, 300])
        print(data_out.shape)  # torch.Size([8, 32, 300, 300])

Console output:


    --------------------------------------testing ChannelAttention--------------------------------------
    torch.Size([8, 32, 300, 300])
    torch.Size([8, 32, 1, 1])
    --------------------------------------testing SpatialAttention--------------------------------------
    torch.Size([8, 32, 300, 300])
    torch.Size([8, 1, 300, 300])
    --------------------------------------------testing CBAM--------------------------------------------
    torch.Size([8, 32, 300, 300])
    torch.Size([8, 32, 300, 300])
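
The CBAM output keeps the input shape because both attention maps are applied by broadcasting: the (B, C, 1, 1) channel weights stretch over H and W, and the (B, 1, H, W) spatial weights stretch over C. The sketch below illustrates only that shape behavior. It uses random stand-in weights rather than the learned attention modules, and the tensor sizes and variable names are assumptions chosen for the example.

```python
import torch

torch.manual_seed(0)

x = torch.randn(8, 32, 20, 20)                # feature map (B, C, H, W)
channel_weights = torch.rand(8, 32, 1, 1)     # stand-in for ChannelAttention output
spatial_weights = torch.rand(8, 1, 20, 20)    # stand-in for SpatialAttention output

out = x * channel_weights       # each channel scaled by its own weight
result = out * spatial_weights  # each position scaled by its own weight

print(result.shape)  # torch.Size([8, 32, 20, 20])

# One element, computed by hand, to make the two-step scaling explicit.
b, c, h, w = 0, 3, 5, 7
manual = x[b, c, h, w] * channel_weights[b, c, 0, 0] * spatial_weights[b, 0, h, w]
print(torch.allclose(result[b, c, h, w], manual))  # True
```

In the real module the spatial weights are computed from `out`, the channel-refined features, so the order of the two steps matters even though the shapes would allow either.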


SE attention:

    import torch
    from torch import nn


    class SELayer(nn.Module):
        """Squeeze-and-Excitation layer: rescales each channel by a learned gate."""

        def __init__(self, channel, reduction=16):
            super().__init__()
            self.avg_pool = nn.AdaptiveAvgPool2d(1)
            self.fc = nn.Sequential(
                nn.Linear(channel, channel // reduction, bias=False),
                nn.ReLU(inplace=True),
                nn.Linear(channel // reduction, channel, bias=False),
                nn.Sigmoid()
            )

        def forward(self, x):
            b, c, _, _ = x.size()
            y = self.avg_pool(x).view(b, c)  # squeeze: (B, C)
            y = self.fc(y).view(b, c, 1, 1)  # excitation: (B, C, 1, 1)
            return x * y.expand_as(x)
            # Alternative that relies on broadcasting:
            # return x * y


    if __name__ == '__main__':
        torch.manual_seed(seed=20200910)
        data_in = torch.randn(8, 32, 300, 300)
        SE = SELayer(32)
        data_out = SE(data_in)
        print(data_in.shape)   # torch.Size([8, 32, 300, 300])
        print(data_out.shape)  # torch.Size([8, 32, 300, 300])

Console output:

(Run as senet\se_module.py from the senet.pytorch-master checkout, using the Python interpreter of the conda environment pytorch_1.7.1_cu102.)

    torch.Size([8, 32, 300, 300])
    torch.Size([8, 32, 300, 300])
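
The `SELayer.forward` code returns `x * y.expand_as(x)` and leaves `x * y` as a commented-out alternative. A short check, using random stand-in gates instead of the learned `fc` output, suggests the two forms give identical results, since multiplying by a (B, C, 1, 1) tensor broadcasts in the same way. The tensor sizes here are illustrative assumptions.

```python
import torch

torch.manual_seed(0)

x = torch.randn(2, 4, 5, 5)  # feature map (B, C, H, W)
y = torch.rand(2, 4, 1, 1)   # stand-in for the per-channel gates

explicit = x * y.expand_as(x)  # form used in SELayer.forward
implicit = x * y               # commented-out alternative, relies on broadcasting

print(y.expand_as(x).shape)            # torch.Size([2, 4, 5, 5])
print(torch.equal(explicit, implicit))  # True
```

`expand_as` returns a view and does not copy the data, so the choice between the two forms is mostly a matter of readability.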
