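What is Python

Python is a programming language that Guido van Rossum, affectionately known in China as "Uncle Turtle" (龟叔), started writing over the Christmas holiday of 1989 to pass the time.

Guido is a big fan of Monty Python's Flying Circus, the BBC comedy series, and named the language Python after it.

The British pronunciation is /ˈpaɪθən/, roughly "拍森" (pai-sen) in Chinese; the American pronunciation is /ˈpaɪθɑːn/, roughly "拍赏" (pai-shang). The MIT professor whose lectures I watched says "pai-shang", but I think most people in China say "pai-sen".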

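It is hard to dispute Python's first-place ranking in 2017. After all, it is the leading language for AI, and with AI and big data booming it has earned the title. C, C++, and Java are the long-standing veterans, and in terms of sheer volume of code I believe Java still dwarfs the other languages.

Judging by this year's trends in language popularity, though, Java remains the most widely used, since apps, web development, and cloud computing all depend on it. Its learning curve is somewhat steeper than Python's, so if you want to switch careers into programming and keep up with the trend, Python is the best language to pick.

Many large websites were developed with Python. In China: Douban, Sohu, Kingsoft, Tencent, Shanda, NetEase, Baidu, Alibaba, Taobao, Reku, Tudou, Sina, Guokr...; abroad: Google, NASA, YouTube, Facebook, Industrial Light & Magic, Red Hat...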

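Python is being added to the gaokao

Zhejiang Province has published its reform plan for the information technology curriculum: Python will be part of the province's information technology gaokao (college entrance exam), and from 2018 the programming language in Zhejiang's information technology textbooks will change from VB to Python. Zhejiang is not alone. Beijing and Shandong, both major education regions, have also decided to bring Python programming basics into the information technology curriculum and the gaokao syllabus, so Python classes look set to become a regular part of children's education. Shandong's latest sixth-grade primary school information technology textbook even includes Python, so primary school pupils are already being introduced to the language!

Start learning now, or get outclassed by primary school pupils yet again...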

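Python beginner tutorials

- Python Tutorial - Liao Xuefeng's official website

- Python official website

- 100 Python Examples | Runoob (菜鸟教程)

- Python Chinese Community (Python中文社区)

- WeChat official account: Python中文社区

- A Python cheat project for the WeChat game Jump Jump (跳一跳): https://github.com/wangshub/wechat_jump_game

- If you already know another programming language, picking up Python is easy, and fun too.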

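What Python can do

- Web crawlers

- Web application development

- System and network operations

- Scientific and numerical computing

- GUI development

- Network programming

- Natural language processing (NLP)

- Artificial intelligence

- Blockchain

- Too many more to list...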

A beginner's web crawler in Python

This is my first Python project, and I'd like to share it here.

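Requirements

- We are building a product that works roughly like this: the user receives an SMS about an event, such as a purchased movie ticket, train ticket, or plane ticket. The app reads and parses the SMS to extract the time and place, then automatically creates a reminder in the background and alerts the user one hour before the event starts.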
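To make the requirement concrete, here is a minimal sketch of the parse-then-remind step. The SMS wording, the regular expression, and the names `parse_ticket_sms` and `reminder_time` are all hypothetical, made up for illustration; the real app's parsing rules are not shown in this post.

```python
import re
from datetime import datetime, timedelta

# Hypothetical SMS layout: "... 2018-03-01 19:30 ... at SOME PLACE."
# A real ticket SMS will look different; this pattern is only an illustration.
SMS_PATTERN = re.compile(r"(\d{4}-\d{2}-\d{2} \d{2}:\d{2}).*? at ([^.]+)\.")


def parse_ticket_sms(text):
    """Return (event_time, place) from an SMS, or None if it does not match."""
    match = SMS_PATTERN.search(text)
    if match is None:
        return None
    event_time = datetime.strptime(match.group(1), "%Y-%m-%d %H:%M")
    return event_time, match.group(2).strip()


def reminder_time(event_time, lead=timedelta(hours=1)):
    """The reminder fires one hour before the event by default."""
    return event_time - lead


sample = "Your movie ticket is booked: 2018-03-01 19:30, seat 5F at Wanda Cinema."
event_time, place = parse_ticket_sms(sample)
print(place, "remind at", reminder_time(event_time))
```

The crawlers described later exist to feed this kind of parser: lists of known airports, flights, movies, trains, and stations give it names to match against.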

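Design

- At first we put the parsing on the server, but out of concern for user privacy we later moved it into the app. The server now only collects data and sends new data to the app.

- The server side splits into two functions: (1) responding to app requests with data, and (2) crawling data and storing it in the database.

- Request handling is written in Java, while crawling and storing are written in Python. Each language plays to its strengths: Java runs faster than Python and suits the web-facing side, whereas crawling is not performance-critical and Python's syntax and wealth of libraries make it a good fit for crawlers.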

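Code

- This project uses Python 3.

- The source code can be downloaded from my Gitee (码云) repository: https://gitee.com/Mrcmc/py-scratch

- To follow this project you only need basic Python: being able to read the syntax is enough. I had not finished learning Python myself when I started writing it; whenever I hit a problem I searched Baidu. Learning by doing keeps things from getting dull, because Python lets you do some fun things, such as simulating daily logins to earn points, or the script I have been writing lately to book a car on 模范出行. I recommend Liao Xuefeng's Python beginner tutorial.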

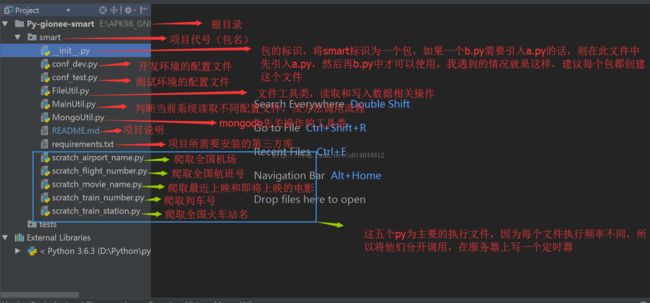

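- First, a look at my directory structure (shown above). I originally planned to define a thorough, polished layout convention, but I soon realized that I was a beginner unfamiliar with any framework, so I gave that up and kept things simple.

- Let's go through the files one by one, top to bottom, in the order shown in the image above. __init__.py is the package marker file. A Python package is just a directory; once the directory contains an __init__.py file it becomes a package. In this file I import several modules so that other modules can use them.

__init__.py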

# -*- coding: UTF-8 -*-
# import the modules this package manages
import MongoUtil
import FileUtil
import conf_dev
import conf_test
import scratch_airport_name
import scratch_flight_number
import scratch_movie_name
import scratch_train_number
import scratch_train_station
import MainUtil

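The next two files are configuration files: the first is for the development environment (Windows) and the second for the test environment (Linux). The code then picks the configuration that matches the current operating system.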

conf_dev.py

# -*- coding: UTF-8 -*-
# the configuration file of develop environment

# path configure
data_root_path = 'E:/APK98_GNBJ_SMARTSERVER/Proj-gionee-data/smart/data'

# mongodb configure
user = "cmc"
pwd = "123456"
server = "localhost"
port = "27017"
db_name = "smartdb"

conf_test.py

# -*- coding: UTF-8 -*-
# the configuration file of test environment

# path configure
data_root_path = '/data/app/smart/data'

# mongodb configure
user = "smart"
pwd = "123456"
server = "10.8.0.30"
port = "27017"
db_name = "smartdb"
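The modules that follow each repeat the same operating-system check to choose between these two files. As a rough sketch, the choice could live in one small function. The name `select_config` is hypothetical, and the two `SimpleNamespace` objects are stand-ins for the `conf_dev` and `conf_test` modules so that the example runs on its own.

```python
import platform
from types import SimpleNamespace

# Stand-ins for the conf_dev and conf_test modules (only two fields shown).
conf_dev = SimpleNamespace(
    data_root_path="E:/APK98_GNBJ_SMARTSERVER/Proj-gionee-data/smart/data",
    server="localhost",
)
conf_test = SimpleNamespace(
    data_root_path="/data/app/smart/data",
    server="10.8.0.30",
)


def select_config(system_name=None):
    """Pick the test config on Linux and the dev config everywhere else."""
    if system_name is None:
        system_name = platform.system()
    return conf_test if system_name == "Linux" else conf_dev


config = select_config()
print(config.data_root_path)
```

Passing the system name as an optional argument keeps the function easy to try on any machine, for example `select_config("Linux")`.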

The next file is a utility module. It reads the contents of an existing resource file and appends new content to it.

FileUtil.py

# -*- coding: UTF-8 -*-
import platform

import conf_dev
import conf_test

# multi-environment configuration:
# detect the current OS and pick the matching config module
platform_os = platform.system()
config = conf_dev
if platform_os == 'Linux':
    config = conf_test

# path
data_root_path = config.data_root_path


# load old data, skipping the "//" date-marker lines
def read(resources_file_path, encode='utf-8'):
    file_path = data_root_path + resources_file_path
    outputs = []
    with open(file_path, encoding=encode) as f:
        for line in f:
            if not line.startswith("//"):
                # keep only the last comma-separated field of each line
                outputs.append(line.strip('\n').split(',')[-1])
    return outputs


# append newly scraped data to the file
def append(resources_file_path, data, encode='utf-8'):
    file_path = data_root_path + resources_file_path
    # the with block closes the file, so no explicit close() is needed
    with open(file_path, 'a', encoding=encode) as f:
        f.write(data)
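To see what `read` returns, here is a self-contained sketch that applies the same parsing rule to an in-memory sample instead of a file. The function name `parse_resource_lines` and the sample entries are made up; the line layout (a `//date` marker followed by `prefix,name` lines) is inferred from how the other modules in this project build the data.

```python
def parse_resource_lines(lines):
    """Mirror FileUtil.read: skip "//" marker lines, keep the last comma field."""
    names = []
    for line in lines:
        if not line.startswith("//"):
            names.append(line.strip("\n").split(",")[-1])
    return names


# Sample content in the shape the scrapers append (entries are made up).
sample = [
    "//2017-12-07\n",
    "Beijing,Beijing Capital International Airport\n",
    "Shanghai,Shanghai Hongqiao International Airport\n",
    "G101\n",
]
names = parse_resource_lines(sample)
print(names)
```

Because only the last field is kept, a `city,airport` line is later compared by airport name alone, and a line with no comma is returned whole.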

The main function below controls the execution flow; each of the scraper scripts calls it.

MainUtil.py

# -*- coding: UTF-8 -*-
from datetime import datetime

import MongoUtil
import FileUtil


# @param resources_file_path  path of the resource file
# @param base_url             URL to crawl
# @param scratch_func         function that does the crawling
def main(resources_file_path, base_url, scratch_func):
    old_data = FileUtil.read(resources_file_path)  # read the existing data
    new_data = scratch_func(base_url, old_data)  # crawl for new data
    if new_data:  # there is new data
        # prefix the new data with a "//" line holding today's date
        date_new_data = "//" + datetime.now().strftime('%Y-%m-%d') + "\n" + "\n".join(new_data) + "\n"
        FileUtil.append(resources_file_path, date_new_data)  # append the new data to the file
        MongoUtil.insert(resources_file_path, date_new_data)  # insert the new data into MongoDB
    else:  # no new data: just log it
        print(datetime.now().strftime('%Y-%m-%d %H:%M:%S'), '----', scratch_func.__name__, ": nothing to update ")
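The string that `main` appends is easy to get wrong by one newline, so here is the same concatenation pulled out into a small function that can be tried by hand. The name `build_dated_block` and the sample train numbers are hypothetical; the date is passed in only so the result is reproducible.

```python
from datetime import date


def build_dated_block(new_data, today=None):
    """Build the appended text: a "//YYYY-MM-DD" line, then one entry per line."""
    if today is None:
        today = date.today()
    return "//" + today.strftime("%Y-%m-%d") + "\n" + "\n".join(new_data) + "\n"


block = build_dated_block(["G101", "G102"], today=date(2017, 12, 7))
print(block, end="")
```

The leading `//` is what lets the file reader skip the date line on the next run, so the date never shows up as an "old" entry.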

Inserting the updated content into MongoDB

MongoUtil.py

# -*- coding: UTF-8 -*-
import platform
from datetime import datetime, timedelta, timezone

from pymongo import MongoClient

import conf_dev
import conf_test

# multi-environment configuration
platform_os = platform.system()
config = conf_dev
if platform_os == 'Linux':
    config = conf_test

# mongodb connection string
uri = 'mongodb://' + config.user + ':' + config.pwd + '@' + config.server + ':' + config.port + '/' + config.db_name


# Write data to MongoDB (connects using the module-level uri)
# @author chenmc
# @param path       stored in the "path" field
# @param data       stored in the "content" field
# @param operation  stored in the "operation" field, default 'append'
# @date 2017/12/07 16:30
# Before first use, seed the auto-increment counter in MongoDB:
#   db.sequence.insert({ "_id" : "version","seq" : 1})
def insert(path, data, operation='append'):
    client = MongoClient(uri)
    resources = client.smartdb.resources
    sequence = client.smartdb.sequence
    seq = sequence.find_one({"_id": "version"})["seq"]  # get the current auto-increment id
    sequence.update_one({"_id": "version"}, {"$inc": {"seq": 1}})  # increment it by 1
    post_data = {"_class": "com.gionee.smart.domain.entity.Resources", "version": seq, "path": path,
                 "content": data, "status": "enable", "operation": operation,
                 "createtime": datetime.now(timezone(timedelta(hours=8)))}
    # insert_one replaces Collection.insert, which was removed in PyMongo 4
    resources.insert_one(post_data)
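One thing to watch in `insert`: reading the counter with `find_one` and then bumping it with `update_one` are two separate round trips, so two scrapers running at the same moment could read the same `seq`. PyMongo's `Collection.find_one_and_update` performs both steps atomically and, by default, returns the document as it was before the update. A minimal sketch follows; `next_version` is a hypothetical helper name, and `FakeSequence` is only an in-memory stand-in so the example runs without a database.

```python
def next_version(sequence):
    """Atomically read the current seq and increment it.

    `sequence` is expected to be a pymongo Collection such as
    client.smartdb.sequence; find_one_and_update returns the
    pre-update document by default.
    """
    doc = sequence.find_one_and_update({"_id": "version"}, {"$inc": {"seq": 1}})
    return doc["seq"]


class FakeSequence:
    """In-memory stand-in for the collection, for trying the helper offline."""

    def __init__(self, seq):
        self.doc = {"_id": "version", "seq": seq}

    def find_one_and_update(self, filter, update):
        before = dict(self.doc)
        self.doc["seq"] += update["$inc"]["seq"]
        return before


fake = FakeSequence(seq=1)
first = next_version(fake)
second = next_version(fake)
print(first, second)
```

With the cron schedule used in this project the scrapers run hours apart, so the original two-step version is unlikely to collide in practice; the atomic call simply removes the possibility.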

Third-party libraries used by the project; install them with pip install -r requirements.txt

requirements.txt

# need to install module
# (json ships with Python's standard library and is not installed via pip)
bs4
pymongo
requests

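Now for the scripts that do the real work. These five .py files each crawl one kind of information: airport names, flight numbers, movie titles, train numbers, and train stations. They all share roughly the same structure:

Part 1: define the URL to query;

Part 2: fetch the data, compare it with the old data, and return what is new;

Part 3: the main entry point, which writes the new data to the file and to MongoDB;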
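Part 2 is the same idea in every script: keep what the site lists now but the file does not yet contain. Here is a sketch of that step in isolation, using a hypothetical helper named `find_new`. It looks items up in a set, which helps once the old list grows to thousands of entries, since `x not in some_list` scans the whole list each time.

```python
def find_new(scraped, old):
    """Return scraped items absent from old, in order, without duplicates."""
    seen = set(old)
    fresh = []
    for item in scraped:
        if item and item not in seen:  # skip empty strings and known items
            seen.add(item)
            fresh.append(item)
    return fresh


fresh = find_new(["G1", "G2", "", "G2", "G3"], old=["G1"])
print(fresh)
```

The scripts in this post do the comparison inline with list lookups instead, which is fine at this scale.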

scratch_airport_name.py: crawls airports nationwide

# -*- coding: UTF-8 -*-
import json

import bs4
import requests

import MainUtil

resources_file_path = '/resources/airplane/airportNameList.ini'
scratch_url_old = 'https://data.variflight.com/profiles/profilesapi/search'
scratch_url = 'https://data.variflight.com/analytics/codeapi/initialList'
get_city_url = 'https://data.variflight.com/profiles/Airports/%s'


# Takes the URL to query and the old data, checks each scraped airport against
# the old data, skips those already present, and returns only the new entries.
def scratch_airport_name(scratch_url, old_airports):
    new_airports = []
    data = requests.get(scratch_url).text
    all_airport_json = json.loads(data)['data']
    for airport_by_word in all_airport_json.values():
        for airport in airport_by_word:
            if airport['fn'] not in old_airports:
                # look up the airport's city on its profile page
                get_city_uri = get_city_url % airport['id']
                data2 = requests.get(get_city_uri).text
                soup = bs4.BeautifulSoup(data2, "html.parser")
                city = soup.find('span', text="城市").next_sibling.text
                new_airports.append(city + ',' + airport['fn'])
    return new_airports


# Entry point: runs only when this file is executed directly, like Java's main
if __name__ == '__main__':
    MainUtil.main(resources_file_path, scratch_url, scratch_airport_name)

scratch_flight_number.py: crawls flight numbers nationwide

#!/usr/bin/python
# -*- coding: UTF-8 -*-
import bs4
import requests

import MainUtil

resources_file_path = '/resources/airplane/flightNameList.ini'
scratch_url = 'http://www.variflight.com/sitemap.html?AE71649A58c77='


def scratch_flight_number(scratch_url, old_flights):
    new_flights = []
    data = requests.get(scratch_url).text
    soup = bs4.BeautifulSoup(data, "html.parser")
    a_flights = soup.find('div', class_='list').find_all('a', recursive=False)
    for flight in a_flights:
        # skip the '国内航段列表' (domestic route list) link and anything already known
        if flight.text not in old_flights and flight.text != '国内航段列表':
            new_flights.append(flight.text)
    return new_flights


if __name__ == '__main__':
    MainUtil.main(resources_file_path, scratch_url, scratch_flight_number)

scratch_movie_name.py: crawls recently released movies

#!/usr/bin/python
# -*- coding: UTF-8 -*-
import json
import re

import bs4
import requests

import MainUtil

# relative path; this same path is also stored in the database
resources_file_path = '/resources/movie/cinemaNameList.ini'
scratch_url = 'http://theater.mtime.com/China_Beijing/'


# scrape data from the given URL
def scratch_latest_movies(scratch_url, old_movies):
    data = requests.get(scratch_url).text
    soup = bs4.BeautifulSoup(data, "html.parser")
    new_movies = []
    # the now-showing list is a JSON value assigned to "var hotplaySvList" inside a script tag
    new_movies_json = json.loads(
        soup.find('script', text=re.compile("var hotplaySvList")).text.split("=")[1].replace(";", ""))
    coming_movies_data = soup.find_all('li', class_='i_wantmovie')
    # movies now showing
    for movie in new_movies_json:
        movie_name = movie['Title']
        if movie_name not in old_movies:
            new_movies.append(movie_name)
    # movies coming soon
    for coming_movie in coming_movies_data:
        coming_movie_name = coming_movie.h3.a.text
        if coming_movie_name not in old_movies and coming_movie_name not in new_movies:
            new_movies.append(coming_movie_name)
    return new_movies


if __name__ == '__main__':
    MainUtil.main(resources_file_path, scratch_url, scratch_latest_movies)
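The `split("=")[1]` step in `scratch_latest_movies` works as long as the embedded JSON contains no `=` or `;` characters, for example in a movie title. A slightly more defensive sketch locates the assignment with a regular expression and lets the standard library's `json.JSONDecoder.raw_decode` read exactly one JSON value from that position. The helper name `extract_js_var` and the sample script text are made up for illustration; the real page's content may differ.

```python
import json
import re


def extract_js_var(script_text, var_name):
    """Parse the JSON value assigned by `var NAME = ...` in script_text."""
    match = re.search(r"var\s+" + re.escape(var_name) + r"\s*=\s*", script_text)
    if match is None:
        raise ValueError("variable not found: " + var_name)
    # raw_decode stops at the end of the first complete JSON value,
    # so a trailing ";" or later statements are ignored.
    value, _end = json.JSONDecoder().raw_decode(script_text, match.end())
    return value


# Made-up script content; note the "=" and ";" inside the first title.
script = 'var hotplaySvList = [{"Title": "A=B; the sequel"}, {"Title": "Coco"}];'
titles = [movie["Title"] for movie in extract_js_var(script, "hotplaySvList")]
print(titles)
```

This only helps if the page embeds strict JSON; a JavaScript literal with single quotes or unquoted keys would still need different handling.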

scratch_train_number.py: crawls train numbers nationwide

#!/usr/bin/python
# -*- coding: UTF-8 -*-
import bs4
import requests

import MainUtil

resources_file_path = '/resources/train/trainNameList.ini'
scratch_url = 'http://www.59178.com/checi/'


def scratch_train_number(scratch_url, old_trains):
    new_trains = []
    resp = requests.get(scratch_url)
    # the page is GB2312-encoded: undo the decoding requests guessed, then decode correctly
    data = resp.text.encode(resp.encoding).decode('gb2312')
    soup = bs4.BeautifulSoup(data, "html.parser")
    a_trains = soup.find('table').find_all('a')
    for train in a_trains:
        # skip empty link text and train numbers already known
        if train.text not in old_trains and train.text:
            new_trains.append(train.text)
    return new_trains


if __name__ == '__main__':
    MainUtil.main(resources_file_path, scratch_url, scratch_train_number)
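The line `resp.text.encode(resp.encoding).decode('gb2312')` looks odd, so here is why it works. When a text response declares no charset, requests commonly falls back to ISO-8859-1, and `resp.text` comes out garbled. ISO-8859-1 maps every byte to exactly one character, so encoding the garbled text back with it recovers the original bytes, which can then be decoded with the page's real encoding. The sketch below reproduces this with plain strings and no network access; the sample text is made up.

```python
# Bytes as a GB2312 page would send them (sample text is made up).
raw = "北京南站".encode("gb2312")

# What the text looks like when those bytes are decoded as ISO-8859-1.
garbled = raw.decode("iso-8859-1")

# The round trip from the script: back to bytes, then the right decoding.
fixed = garbled.encode("iso-8859-1").decode("gb2312")
print(fixed)
```

With requests, the same result is usually obtained more directly by setting `resp.encoding = 'gb2312'` before reading `resp.text`. GBK is a superset of GB2312, so decoding as `gbk` also handles GB2312 pages.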

scratch_train_station.py: crawls train stations nationwide

#!/usr/bin/python
# -*- coding: UTF-8 -*-
import bs4
import requests

import MainUtil

resources_file_path = '/resources/train/trainStationNameList.ini'
scratch_url = 'http://www.smskb.com/train/'


def scratch_train_station(scratch_url, old_stations):
    new_stations = []
    # page names used in the site's URLs, in the same order as provinces_chi
    provinces_eng = (
        "Anhui", "Beijing", "Chongqing", "Fujian", "Gansu", "Guangdong", "Guangxi", "Guizhou", "Hainan", "Hebei",
        "Heilongjiang", "Henan", "Hubei", "Hunan", "Jiangsu", "Jiangxi", "Jilin", "Liaoning", "Ningxia", "Qinghai",
        "Shandong", "Shanghai", "Shanxi", "Shanxisheng", "Sichuan", "Tianjin", "Neimenggu", "Xianggang", "Xinjiang",
        "Xizang",
        "Yunnan", "Zhejiang")
    provinces_chi = (
        "安徽", "北京", "重庆", "福建", "甘肃", "广东", "广西", "贵州", "海南", "河北",
        "黑龙江", "河南", "湖北", "湖南", "江苏", "江西", "吉林", "辽宁", "宁夏", "青海",
        "山东", "上海", "陕西", "山西", "四川", "天津", "内蒙古", "香港", "新疆", "西藏",
        "云南", "浙江")
    # walk both tuples in step: one page per province
    for province_eng, province_chi in zip(provinces_eng, provinces_chi):
        cur_url = scratch_url + province_eng + ".htm"
        resp = requests.get(cur_url)
        # the page is GBK-encoded: undo the decoding requests guessed, then decode correctly
        data = resp.text.encode(resp.encoding).decode('gbk')
        soup = bs4.BeautifulSoup(data, "html.parser")
        a_stations = soup.find('left').find('table').find_all('a')
        for station in a_stations:
            if station.text not in old_stations:
                new_stations.append(province_chi + ',' + station.text)
    return new_stations


if __name__ == '__main__':
    MainUtil.main(resources_file_path, scratch_url, scratch_train_station)

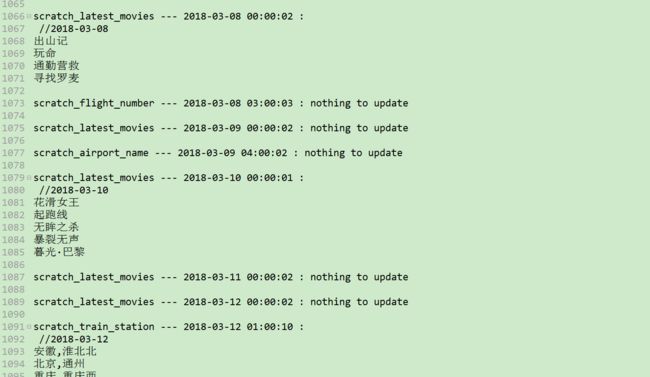

I deployed the project on the test server (CentOS 7) and wrote a crontab to run the scripts on a schedule. Here it is.

/etc/crontab

SHELL=/bin/bash
PATH=/sbin:/bin:/usr/sbin:/usr/bin
MAILTO=root

# For details see man 4 crontabs

# Example of job definition:
# .---------------- minute (0 - 59)
# |  .------------- hour (0 - 23)
# |  |  .---------- day of month (1 - 31)
# |  |  |  .------- month (1 - 12) OR jan,feb,mar,apr ...
# |  |  |  |  .---- day of week (0 - 6) (Sunday=0 or 7) OR sun,mon,tue,wed,thu,fri,sat
# |  |  |  |  |
# *  *  *  *  * user-name  command to be executed
0 0 * * * root python3 /data/app/smart/py/scratch_movie_name.py >> /data/logs/smartpy/out.log 2>&1
0 1 * * 1 root python3 /data/app/smart/py/scratch_train_station.py >> /data/logs/smartpy/out.log 2>&1
0 2 * * 2 root python3 /data/app/smart/py/scratch_train_number.py >> /data/logs/smartpy/out.log 2>&1
0 3 * * 4 root python3 /data/app/smart/py/scratch_flight_number.py >> /data/logs/smartpy/out.log 2>&1
0 4 * * 5 root python3 /data/app/smart/py/scratch_airport_name.py >> /data/logs/smartpy/out.log 2>&1
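Reading the five job lines: the movie scraper runs every day at 00:00, and the other four run once a week at 01:00, 02:00, 03:00, and 04:00 on Monday, Tuesday, Thursday, and Friday respectively. As a sanity check, the sketch below encodes the same schedule in Python and reports which script is due at a given time. The `SCHEDULE` table and the `due_scripts` function are illustrative only and are not part of the project.

```python
from datetime import datetime

# (minute, hour, cron day-of-week or None for "*", script), copied from the crontab.
SCHEDULE = [
    (0, 0, None, "scratch_movie_name.py"),
    (0, 1, 1, "scratch_train_station.py"),
    (0, 2, 2, "scratch_train_number.py"),
    (0, 3, 4, "scratch_flight_number.py"),
    (0, 4, 5, "scratch_airport_name.py"),
]


def due_scripts(when):
    """Return the scripts whose cron fields match the datetime `when`."""
    cron_dow = when.isoweekday() % 7  # cron counts Sunday as 0, Monday as 1
    return [
        script
        for minute, hour, dow, script in SCHEDULE
        if when.minute == minute and when.hour == hour and dow in (None, cron_dow)
    ]


# 2018-01-01 was a Monday.
monday_1am = due_scripts(datetime(2018, 1, 1, 1, 0))
print(monday_1am)
```

Spacing the jobs an hour apart and on different days also means no two scrapers write to the same log file or MongoDB counter at the same time.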

Follow-up

The project has now been running smoothly for more than three months.