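Overview of the conversion steps

- Prepare the model definition file (.py)

- Prepare the trained weights file (.pth or .pth.tar)

- Install onnx and onnxruntime

- Convert the trained model to .onnx format

- Install TensorRT and convert the .onnx model to a TensorRT engine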

Environment

Ubuntu 18.04, PyTorch 1.8.1, onnx 1.9.0, onnxruntime 1.7.2, CUDA 11.1, cuDNN 8.2.0, TensorRT 7.2.3.4
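
Before going further, it can help to confirm which versions are actually installed in the active Python environment. The following is a minimal sketch, assuming Python 3.8 or newer (for importlib.metadata) and assuming the packages were installed under the PyPI distribution names listed in PACKAGES; the helper name installed_versions is made up for this example.

```python
from importlib import metadata

# Distribution names as assumed for PyPI installs; adjust them to your setup.
PACKAGES = ["torch", "onnx", "onnxruntime", "pycuda", "nvidia-tensorrt"]


def installed_versions(packages=PACKAGES):
    """Return {package: version string, or None if the package is not installed}."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


for name, version in installed_versions().items():
    print("{:16s} {}".format(name, version or "not installed"))
```

This only reports Python packages; CUDA and cuDNN versions have to be checked separately.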

PyTorch to ONNX

Step 1: Install ONNX and ONNX Runtime

Both packages can be installed with pip:

    pip install onnx
    pip install onnxruntime

If you are using an Anaconda environment, installing with conda also works:

    conda install -c conda-forge onnx
    conda install -c conda-forge onnxruntime

Step 2: Install netron

netron visualizes the network structure, which makes debugging easier.

    pip install netron

Step 3: Convert PyTorch to ONNX

Once everything is installed, the model can be converted with the code below.

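    # -*- coding: utf-8 -*-
    # Note: onnx must be imported before torch here, otherwise a segmentation fault can occur.
    import onnx
    import torch
    import torchvision
    from model import Net

    # args and checkpoint_path are placeholders: supply your own model arguments and weights path.
    net = Net(args).cuda()  # initialize the model
    checkpoint = torch.load(checkpoint_path)
    net.load_state_dict(checkpoint['state_dict'])  # load the trained weights
    net.eval()  # export in inference mode (matters for dropout and batch norm)
    print("Model and weights LOADED successfully")

    export_onnx_file = './net.onnx'
    torch.onnx.export(
        net,
        torch.randn(1, 1, 224, 224, device='cuda'),  # dummy input matching the expected input shape
        export_onnx_file,
        verbose=False,  # whether to print the computation graph as a string
        # Input node names. A list is allowed: the extra names map to the learnable
        # parameters of each layer, which makes them easier to look up later.
        input_names=["inputs"] + ["params_%d" % i for i in range(120)],
        output_names=["outputs"],  # output node names
        opset_version=10,  # ONNX operator set version; the supported range depends on the PyTorch version
        do_constant_folding=True,
        dynamic_axes={"inputs": {0: "batch_size", 2: "h", 3: "w"},
                      "outputs": {0: "batch_size"}},
    )

    onnx_model = onnx.load(export_onnx_file)  # load the ONNX computation graph
    onnx.checker.check_model(onnx_model)  # check that the model is well formed
    print(onnx.helper.printable_graph(onnx_model.graph))  # print the ONNX computation graph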

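dynamic_axes specifies which dimensions of the inputs and outputs are variable. Here the batch dimension (axis 0) is dynamic for both the input and the output, and axes 2 and 3 (height and width) of the input are dynamic as well.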
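
Because dynamic_axes is a nested dictionary, a misplaced brace is easy to miss. The sketch below is a hypothetical helper (check_dynamic_axes is not part of PyTorch) that validates the mapping before it is passed to torch.onnx.export. It assumes the dict-of-dicts form {tensor_name: {axis_index: axis_label}} and deliberately rejects anything else.

```python
def check_dynamic_axes(dynamic_axes, input_names, output_names):
    """Validate a mapping of the form {tensor_name: {axis_index: axis_label}}."""
    known = set(input_names) | set(output_names)
    for name, axes in dynamic_axes.items():
        if name not in known:
            raise ValueError("{!r} is not a declared input or output name".format(name))
        if not isinstance(axes, dict):
            raise TypeError("axes for {!r} must map axis index to label".format(name))
        for axis, label in axes.items():
            if not isinstance(axis, int) or axis < 0:
                raise ValueError("axis {!r} of {!r} must be a non-negative int".format(axis, name))
            if not isinstance(label, str):
                raise TypeError("label for axis {} of {!r} must be a string".format(axis, name))
    return dynamic_axes


# Batch, height and width of the input are dynamic; only the batch of the output is.
dynamic_axes = check_dynamic_axes(
    {"inputs": {0: "batch_size", 2: "h", 3: "w"}, "outputs": {0: "batch_size"}},
    input_names=["inputs"],
    output_names=["outputs"],
)
print(dynamic_axes)
```

A mapping such as {"inputs": {0: "batch_size"}, 2: "h"}, where the axis entries have slipped out of the inner dictionary, raises a ValueError in this sketch.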

Step 4: Verify the ONNX model

Next, visualize the ONNX model and check that it runs correctly.

    import netron
    import onnxruntime
    import numpy as np
    from PIL import Image
    import cv2

    netron.start('./net.onnx')  # open the model graph in the browser

    # test_image_path is a placeholder for the path to a test image.
    test_image = np.asarray(Image.open(test_image_path).convert('L'), dtype='float32') / 255.
    test_image = cv2.resize(test_image, (224, 224), interpolation=cv2.INTER_CUBIC)
    test_image = test_image[np.newaxis, np.newaxis, :, :]  # HW -> NCHW

    session = onnxruntime.InferenceSession('./net.onnx')
    outputs = session.run(None, {"inputs": test_image})
    print(len(outputs))
    print(outputs[0].shape)
    # Post-process outputs[0] as needed and visualize it to confirm the result looks reasonable.
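
Besides inspecting the result visually, a numerical comparison against the PyTorch output for the same input is a useful check. The following is a minimal sketch using only NumPy; compare_outputs and the tolerance values are assumptions chosen for illustration, and the two arrays are synthetic stand-ins for the PyTorch and ONNX Runtime outputs.

```python
import numpy as np


def compare_outputs(reference, candidate, rtol=1e-3, atol=1e-5):
    """Return (within_tolerance, max_abs_diff) for two arrays of the same shape."""
    reference = np.asarray(reference, dtype=np.float64)
    candidate = np.asarray(candidate, dtype=np.float64)
    if reference.shape != candidate.shape:
        raise ValueError("shape mismatch: {} vs {}".format(reference.shape, candidate.shape))
    max_abs_diff = float(np.max(np.abs(reference - candidate)))
    within = bool(np.allclose(candidate, reference, rtol=rtol, atol=atol))
    return within, max_abs_diff


# Synthetic stand-ins: in practice these would be net(x).cpu().numpy() and outputs[0].
rng = np.random.default_rng(0)
torch_out = rng.standard_normal((1, 2, 224, 224)).astype(np.float32)
ort_out = torch_out + np.float32(1e-6)

ok, diff = compare_outputs(torch_out, ort_out)
print("within tolerance:", ok, "max abs diff:", diff)
```

Small differences are expected between runtimes, so the tolerances should be loosened or tightened to suit the model.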

ONNX to TensorRT

Step 1: Download the TensorRT installation package from NVIDIA: https://developer.nvidia.com/tensorrt

Pick the package that matches your CUDA version. I chose TensorRT 7.2.3 and downloaded it locally.

    cd download_path
    sudo dpkg -i nv-tensorrt-repo-ubuntu1804-cuda11.1-trt7.2.3.4-ga-20210226_1-1_amd64.deb
    sudo apt-get update
    sudo apt-get install tensorrt

According to NVIDIA's official installation guide (https://docs.nvidia.com/deeplearning/tensorrt/quick-start-guide/index.html#install), PyCUDA also has to be installed first if you plan to call the TensorRT Python API, so here is the PyCUDA installation.

    pip install 'pycuda<2021.1'

If you run into any problems, see the official instructions: https://wiki.tiker.net/PyCuda/Installation/Linux/#step-1-download-and-unpack-pycuda

If you are using Python 3.x, also install the following package.

    sudo apt-get install python3-libnvinfer-dev

If you need ONNX GraphSurgeon or want to use the Python module, also run the following command.

    sudo apt-get install onnx-graphsurgeon

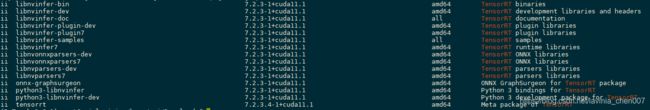

Verify that the installation succeeded.

    dpkg -l | grep TensorRT

Output similar to the figure above means the installation succeeded.

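Problem: running import tensorrt in Python at this point fails with ModuleNotFoundError: No module named 'tensorrt'.

After some searching, it turns out that the tensorrt package installed through dpkg goes into the system Python, not the Anaconda environment's Python. The system default Python is 3.6 while the Anaconda environment uses 3.8.8, so exposing the system package through export PYTHONPATH only leads to a Python version mismatch.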
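
To see which interpreter is running and whether it can find a given module, a quick check with the standard library is enough. This is a minimal sketch; describe_interpreter is a name invented for the example, and running it in both the system Python and the Anaconda Python shows where tensorrt is and is not visible.

```python
import importlib.util
import sys


def describe_interpreter(module_name="tensorrt"):
    """Report the running interpreter and whether it can locate module_name."""
    spec = importlib.util.find_spec(module_name)
    return {
        "executable": sys.executable,
        "python_version": "{}.{}.{}".format(*sys.version_info[:3]),
        "module_found": spec is not None,
        "module_location": spec.origin if spec is not None else None,
    }


for key, value in describe_interpreter().items():
    print("{}: {}".format(key, value))
```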

So I searched again for how to install TensorRT inside an Anaconda environment.

    pip3 install --upgrade setuptools pip
    pip install nvidia-pyindex
    pip install nvidia-tensorrt

Check that the Anaconda environment's Python can now import tensorrt.

    import tensorrt
    print(tensorrt.__version__)  # prints 8.0.0.3

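Step 2: Convert ONNX to TensorRT

A heads-up first: this step failed with AttributeError: 'tensorrt.tensorrt.Builder' object has no attribute 'max_workspace_size'. The posts I found describe this as a bug in version 8.0.0.3; it looks more like an API change, since TensorRT 8 seems to have dropped the deprecated Builder.max_workspace_size in favor of the builder config. Either way, the simplest fix here was to go back to 7.2.3.4.

Hmm…

    pip uninstall nvidia-tensorrt  # uninstall version 8.0.0.3 first
    pip install nvidia-tensorrt==7.2.* --index-url https://pypi.ngc.nvidia.com  # install version 7.2.3.4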
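
When a script has to run against more than one TensorRT release, checking the version string up front gives a clearer failure than an AttributeError deep inside the build. The sketch below is pure Python and works on any version string. It encodes one assumption that should be verified against the release notes: that the builder-level attributes such as max_workspace_size are only available before major version 8. Both function names are hypothetical.

```python
def parse_version(version):
    """Turn a string like '7.2.3.4' into (7, 2, 3, 4); non-numeric parts are skipped."""
    return tuple(int(part) for part in version.split(".") if part.isdigit())


def builder_has_legacy_attrs(version):
    """Assumption: Builder.max_workspace_size and similar exist only before TensorRT 8."""
    return parse_version(version)[0] < 8


for version in ("7.2.3.4", "8.0.0.3"):
    if builder_has_legacy_attrs(version):
        print(version, "-> builder attributes expected to be available")
    else:
        print(version, "-> set options on the builder config instead")
```

In a real script the argument would be tensorrt.__version__.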

Conversion code

    import pycuda.autoinit
    import pycuda.driver as cuda
    import tensorrt as trt
    import torch
    import time
    from PIL import Image
    import cv2
    import os
    import torchvision
    import numpy as np
    from scipy.special import softmax

    # Adapt get_img_np_nchw and postprocess_the_outputs to your own model as needed.
    TRT_LOGGER = trt.Logger()

    def get_img_np_nchw(img_path):
        """Load a grayscale image as a float32 array of shape (1, 1, 224, 224) scaled to [0, 1]."""
        img = Image.open(img_path).convert('L')
        img = np.asarray(img, dtype='float32')
        img = cv2.resize(img, (224, 224), interpolation=cv2.INTER_CUBIC)
        img = img / 255.
        img = img[np.newaxis, np.newaxis]  # HW -> NCHW
        return img

    class HostDeviceMem(object):
        def __init__(self, host_mem, device_mem):
            """host_mem is the CPU (host) buffer, device_mem is the GPU (device) buffer."""
            self.host = host_mem
            self.device = device_mem

        def __str__(self):
            return "Host:\n" + str(self.host) + "\nDevice:\n" + str(self.device)

        def __repr__(self):
            return self.__str__()

    def allocate_buffers(engine):
        inputs = []
        outputs = []
        bindings = []
        stream = cuda.Stream()
        for binding in engine:
            # Number of elements in this binding. A dynamic dimension may be reported
            # as -1, which would make this size invalid, so fixed shapes are assumed here.
            size = trt.volume(engine.get_binding_shape(binding)) * engine.max_batch_size
            dtype = trt.nptype(engine.get_binding_dtype(binding))
            # Allocate host and device buffers.
            host_mem = cuda.pagelocked_empty(size, dtype)
            device_mem = cuda.mem_alloc(host_mem.nbytes)
            # Append the device buffer to device bindings.
            bindings.append(int(device_mem))
            # Append to the appropriate list.
            if engine.binding_is_input(binding):
                inputs.append(HostDeviceMem(host_mem, device_mem))
            else:
                outputs.append(HostDeviceMem(host_mem, device_mem))
        return inputs, outputs, bindings, stream
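
The buffer size computed in allocate_buffers is the product of the binding's dimensions times the batch size, and the byte count is that number times the element size. The sketch below reproduces the arithmetic in plain Python (3.8+ for math.prod) for the shapes used in this article. buffer_nbytes is a hypothetical helper, and the 4-byte element size assumes float32 bindings.

```python
import math


def buffer_nbytes(shape, itemsize, max_batch_size=1):
    """Bytes needed for one binding: product of dims * batch size * bytes per element."""
    if any(dim < 0 for dim in shape):
        # A -1 marks a dynamic dimension; it has to be resolved before allocating.
        raise ValueError("dynamic dimension in shape {}".format(shape))
    return math.prod(shape) * max_batch_size * itemsize


print(buffer_nbytes((1, 1, 224, 224), itemsize=4))  # input buffer: 200704 bytes
print(buffer_nbytes((1, 2, 224, 224), itemsize=4))  # output buffer: 401408 bytes
```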

    def get_engine(max_batch_size=1, onnx_file_path="", engine_file_path="",
                   fp16_mode=False, int8_mode=False, save_engine=False):
        """
        params max_batch_size: fixed in advance so that GPU memory can be allocated
        params onnx_file_path: path to the ONNX file
        params engine_file_path: path of the serialized engine file to save
        params fp16_mode: whether to use FP16
        params int8_mode: whether to use INT8
        params save_engine: whether to save the engine
        returns: ICudaEngine
        """
        # If a serialized engine already exists, deserialize it directly into a CUDA engine.
        if os.path.exists(engine_file_path):
            print("Reading engine from file: {}".format(engine_file_path))
            with open(engine_file_path, 'rb') as f, \
                    trt.Runtime(TRT_LOGGER) as runtime:
                return runtime.deserialize_cuda_engine(f.read())  # deserialize

        # Otherwise build the CUDA engine from the ONNX file.
        if not os.path.exists(onnx_file_path):
            raise FileNotFoundError("ONNX file {} not found!".format(onnx_file_path))
        if int8_mode:
            # To be updated
            raise NotImplementedError("INT8 mode is not implemented yet")

        # In TensorRT 7.0, the ONNX parser only supports full-dimensions mode, meaning that
        # your network definition must be created with the explicitBatch flag set.
        # For more information, see Working With Dynamic Shapes.
        explicit_batch = 1 << int(trt.NetworkDefinitionCreationFlag.EXPLICIT_BATCH)

        # Create a builder from the logger; the builder creates the network (INetworkDefinition),
        # and the ONNX parser is bound to that network and fills it in while parsing.
        with trt.Builder(TRT_LOGGER) as builder, \
                builder.create_network(explicit_batch) as network, \
                trt.OnnxParser(network, TRT_LOGGER) as parser, \
                builder.create_builder_config() as config:
            profile = builder.create_optimization_profile()
            # Arguments after the input name: min, opt and max shapes.
            profile.set_shape("inputs", (1, 1, 224, 224), (1, 1, 224, 224), (1, 1, 224, 224))
            config.add_optimization_profile(profile)
            config.max_workspace_size = 1 << 30  # workspace limit: the most GPU memory the engine may use while executing
            builder.max_batch_size = max_batch_size  # largest batch size usable at execution time
            if fp16_mode:
                # The engine is built with build_engine(network, config), so the flag goes on the config.
                config.set_flag(trt.BuilderFlag.FP16)

            # Parse the ONNX file to populate the network.
            # Alternative: open the file in 'rb' mode and call parser.parse(model.read()).
            print('loading onnx file from path {} ...'.format(onnx_file_path))
            if not parser.parse_from_file(onnx_file_path):
                for i in range(parser.num_errors):
                    print(parser.get_error(i))
                raise RuntimeError("Failed to parse ONNX file {}".format(onnx_file_path))
            print("Completed parsing of onnx file")

            # Once the network is populated, the builder creates the CUDA engine from it.
            print("Building an engine from file {}; this may take a while...".format(onnx_file_path))
            print(network.get_layer(network.num_layers - 1).get_output(0).shape)
            # network.mark_output(network.get_layer(network.num_layers - 1).get_output(0))
            engine = builder.build_engine(network, config)  # network is the populated INetworkDefinition
            if engine is None:
                raise RuntimeError("Failed to build the TensorRT engine")
            print("Completed creating Engine")
            if save_engine:  # save the engine so it can be deserialized directly next time
                with open(engine_file_path, 'wb') as f:
                    f.write(engine.serialize())  # serialize
            return engine

    def do_inference(context, bindings, inputs, outputs, stream, batch_size=1):
        # Transfer data from CPU to the GPU.
        for inp in inputs:
            cuda.memcpy_htod_async(inp.device, inp.host, stream)
        # Run inference.
        context.execute_async(batch_size=batch_size, bindings=bindings, stream_handle=stream.handle)
        # Transfer predictions back from the GPU.
        for out in outputs:
            cuda.memcpy_dtoh_async(out.host, out.device, stream)
        # Synchronize the stream.
        stream.synchronize()
        # Return only the host outputs.
        return [out.host for out in outputs]

    def postprocess_the_outputs(outputs, shape_of_output):
        # Reshape the flat output buffer to (N, C, H, W), then take the per-pixel class of the first image.
        outputs = outputs.reshape(*shape_of_output)
        out = np.argmax(softmax(outputs, axis=1)[0, ...], axis=0)
        return out
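
The post-processing step reshapes the flat output to (N, C, H, W) and picks the most likely class per pixel. A small worked example with hand-written logits makes the shapes concrete. This sketch uses only NumPy, with a local softmax standing in for scipy.special.softmax, and the logit values are made up. Since softmax preserves the ordering of the logits, the argmax over the raw logits gives the same mask here.

```python
import numpy as np


def softmax(x, axis):
    """Numerically stable softmax along one axis."""
    shifted = x - np.max(x, axis=axis, keepdims=True)
    exps = np.exp(shifted)
    return exps / np.sum(exps, axis=axis, keepdims=True)


# Flat buffer as returned by the engine, for a (1, 2, 2, 2) output: 2 classes, 2x2 pixels.
flat = np.array([2.0, -1.0, 0.5, 0.1,   # class 0 scores
                 1.0, 3.0, 0.2, 0.4],   # class 1 scores
                dtype=np.float32)
logits = flat.reshape(1, 2, 2, 2)

mask_via_softmax = np.argmax(softmax(logits, axis=1)[0], axis=0)
mask_via_logits = np.argmax(logits[0], axis=0)

print(mask_via_softmax)  # [[0 1]
                         #  [0 1]]
print(np.array_equal(mask_via_softmax, mask_via_logits))  # True
```

The softmax is still needed if the per-pixel probabilities themselves are of interest, for example to threshold on confidence.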

    # Verify that the TensorRT model runs correctly
    onnx_model_path = './net.onnx'
    max_batch_size = 1
    # These two modes depend on the hardware
    fp16_mode = False
    int8_mode = False
    trt_engine_path = './model_fp16_{}_int8_{}.trt'.format(fp16_mode, int8_mode)

    # Build an engine
    engine = get_engine(max_batch_size, onnx_model_path, trt_engine_path, fp16_mode, int8_mode, save_engine=True)
    # Create the context for this engine
    context = engine.create_execution_context()
    # Allocate buffers for input and output
    inputs, outputs, bindings, stream = allocate_buffers(engine)  # inputs/outputs: host and device buffers; bindings: device addresses

    # Do inference. img_path is a placeholder for the path to a test image.
    img_np_nchw = get_img_np_nchw(img_path)
    inputs[0].host = img_np_nchw.reshape(-1)
    # inputs[1].host = ... for multiple inputs
    shape_of_output = (max_batch_size, 2, 224, 224)

    t1 = time.time()
    trt_outputs = do_inference(context, bindings=bindings, inputs=inputs, outputs=outputs, stream=stream)  # numpy data
    t2 = time.time()
    feat = postprocess_the_outputs(trt_outputs[0], shape_of_output)

    print('TensorRT ok')
    print("Inference time with the TensorRT engine: {}".format(t2 - t1))
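
The timing above measures a single call, which usually includes one-time start-up costs. For a steadier number, a common approach is to run a few warm-up calls and then average over several repeats. The sketch below shows that pattern with the standard library only; benchmark and fake_inference are hypothetical names, and fake_inference is just a CPU stand-in for the real inference call.

```python
import time


def benchmark(fn, warmup=3, repeats=10):
    """Return the mean wall-clock seconds per call of fn() after some warm-up calls."""
    for _ in range(warmup):
        fn()
    start = time.perf_counter()
    for _ in range(repeats):
        fn()
    return (time.perf_counter() - start) / repeats


def fake_inference():
    # Stand-in workload; replace with the real inference call.
    return sum(i * i for i in range(10000))


mean_seconds = benchmark(fake_inference)
print("mean time per call: {:.6f} s".format(mean_seconds))
```

With the code from this article, fn could be a lambda wrapping do_inference(context, bindings=bindings, inputs=inputs, outputs=outputs, stream=stream); since do_inference synchronizes the stream before returning, the wall-clock time covers the GPU work as well.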

Following the method in the article at https://www.jb51.net/article/187266.htm, the conversion reported an error.

I originally converted with the code from that link and later modified it; the conversion code in this article does not have the problem.

The changes are here:

    with trt.Builder(TRT_LOGGER) as builder, \
            builder.create_network(explicit_batch) as network, \
            trt.OnnxParser(network, TRT_LOGGER) as parser, \
            builder.create_builder_config() as config:  # bind the ONNX parser to the network; parsing fills it in later
        profile = builder.create_optimization_profile()
        profile.set_shape("inputs", (1, 1, 224, 224), (1, 1, 224, 224), (1, 1, 224, 224))
        config.add_optimization_profile(profile)
        config.max_workspace_size = 1 << 30  # workspace limit: the most GPU memory the engine may use while executing
        engine = builder.build_engine(network, config)

Modifying or adding the corresponding code in the linked article makes the problem go away.
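
The three shapes passed to profile.set_shape are the minimum, optimal and maximum input shapes, and in this article all three are identical, so the engine accepts exactly one input shape. The sketch below illustrates the range rule in plain Python without calling TensorRT. shape_in_profile is a hypothetical helper, and the "flexible" profile is an invented example, not something used in this article.

```python
def shape_in_profile(shape, min_shape, opt_shape, max_shape):
    """Check min <= opt <= max per dimension, then whether shape lies within [min, max]."""
    if not (len(shape) == len(min_shape) == len(opt_shape) == len(max_shape)):
        raise ValueError("all shapes must have the same rank")
    for low, opt, high in zip(min_shape, opt_shape, max_shape):
        if not low <= opt <= high:
            raise ValueError("profile must satisfy min <= opt <= max in every dimension")
    return all(low <= dim <= high for dim, low, high in zip(shape, min_shape, max_shape))


# Profile from this article: min, opt and max are identical.
fixed = ((1, 1, 224, 224),) * 3
print(shape_in_profile((1, 1, 224, 224), *fixed))   # True
print(shape_in_profile((1, 1, 256, 256), *fixed))   # False

# Hypothetical profile that allows a range of batch sizes and resolutions.
flexible = ((1, 1, 128, 128), (1, 1, 224, 224), (4, 1, 512, 512))
print(shape_in_profile((2, 1, 256, 256), *flexible))  # True
print(shape_in_profile((8, 1, 256, 256), *flexible))  # False
```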
