- 12.1 Overview

- 12.1.1 Characteristics of Mobile Devices

- 12.2 UE Mobile Rendering Features

- 12.2.1 Feature Level

- 12.2.2 Deferred Shading

- 12.2.3 Ground Truth Ambient Occlusion

- 12.2.4 Dynamic Lighting and Shadow

- 12.2.5 Pixel Projected Reflection

- 12.2.6 Mesh Auto-Instancing

- 12.2.7 Post Processing

- 12.2.8 Other Features and Limitations

- 12.3 FMobileSceneRenderer

- 12.3.1 Main Renderer Flow

- 12.3.2 RenderForward

- 12.3.3 RenderDeferred

- 12.3.3.1 MobileDeferredShadingPass

- 12.3.3.2 MobileBasePassShader

- 12.3.3.3 MobileDeferredShading

- Team Recruitment

- Special Notes

- References

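12.1 Overview

All previous chapters described UE's rendering system in terms of the deferred rendering pipeline on PC. In particular, "Dissecting the Unreal Rendering System (04): Deferred Rendering Pipeline" covered the flow and steps of the PC deferred pipeline in detail.

This chapter focuses on UE's mobile rendering pipeline. It also compares mobile and PC rendering and looks at the mobile-specific optimizations. The main topics are:

- The main flow and steps of FMobileSceneRenderer.

- The forward and deferred rendering pipelines on mobile.

- Lighting and shadows on mobile.

- The similarities and differences between mobile and PC, and the optimization techniques involved.

Note that the UE source analyzed in this chapter has been upgraded to 4.27.1; readers following along in the source should update accordingly.

To enable the mobile rendering pipeline in the UE editor on PC, select the menu shown below:

Once the shaders finish compiling, the editor viewport shows the mobile preview.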

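12.1.1 Characteristics of Mobile Devices

Compared with desktop PCs, mobile devices differ significantly in size, battery capacity, hardware performance, and many other respects:

- Smaller size. Portability requires the whole device to be light and compact enough to fit in a hand or a pocket, so it is confined to a very small volume.

- Limited energy and power. Constrained by battery technology, today's mainstream lithium batteries typically hold around 10,000 mAh, yet the resolution and image quality of mobile devices keep rising. To achieve sufficient battery life and stay within thermal limits, total device power must be strictly controlled, usually under 5 W.

- Restricted cooling. PCs can usually fit fans or even liquid cooling, whereas mobile devices lack such active cooling and can only dissipate heat by conduction. If heat is not dissipated well, the CPU and GPU throttle themselves and run at very limited performance to keep components from being damaged by overheating.

- Limited hardware performance. The components of a mobile device (CPU, bandwidth, memory, GPU, and so on) are tens of times slower than their PC counterparts.

Performance comparison in 2018 between a mainstream PC device (NV GV100-400-A1 Titan V) and a mainstream mobile device (Samsung Exynos 9 8895). Many hardware metrics of the mobile device are tens of times lower than the PC's, yet its resolution is close to half of the PC's, which highlights the challenge mobile devices face.

By 2020, the performance of mainstream mobile devices looked as follows:

- Special hardware architectures. For example, the CPU and GPU share the same memory (a coupled architecture), and the GPU uses a TB(D)R architecture. Both aim to complete as much work as possible at low power.

Comparison of the decoupled hardware architecture of PCs and the coupled hardware architecture of mobile devices.

In addition, unlike PC CPUs and GPUs, which purely pursue compute performance, mobile performance is measured by three metrics: Performance, Power, and Area, commonly known as PPA. (See the figure below.)

The three basic parameters for evaluating a mobile device: Performance, Area, and Power. Compute Density relates performance to area, and energy efficiency relates performance to power consumption; higher is better for both.

XR devices have risen alongside mobile devices and are an important branch of their development. XR devices currently come in many sizes, feature sets, and application scenarios:

Various forms of XR devices.

With the recent surge of interest in the Metaverse, Facebook renaming itself Meta, and tech giants such as Apple, Microsoft, NVIDIA, and Google investing in future immersive experiences, XR devices, as the carrier and entry point closest to the Metaverse vision, have naturally become a promising new field in which giants may emerge.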

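12.2 UE Mobile Rendering Features

This section describes the mobile rendering features of UE 4.27.

12.2.1 Feature Level

UE supports the following graphics APIs on mobile:

| Feature Level | Description |
|---|---|
| OpenGL ES 3.1 | The default feature level on Android. Material parameters can be configured in Project Settings > Platforms > Android Material Quality - ES31. |
| Android Vulkan | A high-end renderer available on certain Android devices. It supports the Vulkan 1.2 API, and thanks to Vulkan's lightweight design it is more efficient than OpenGL in most cases. |
| Metal 2.0 | The feature level dedicated to iOS devices. Material parameters can be configured in Project Settings > Platforms > iOS Material Quality. |

On current mainstream Android devices, Vulkan gives better performance because its lightweight design lets applications such as UE optimize more precisely. The table below compares Vulkan and OpenGL:

| Vulkan | OpenGL |
|---|---|
| Object-based state with no global state. | A single global state machine. |
| All state concepts are placed in command buffers. | State is bound to a single context. |
| Commands can be recorded on multiple threads. | Rendering operations can only be executed sequentially. |
| GPU memory and synchronization are controlled precisely and explicitly. | GPU memory and synchronization details are usually hidden by the driver. |
| The driver performs no runtime error checking, but validation layers exist for developers. | Extensive runtime error checking. |

On Windows, the UE editor can also launch OpenGL, Vulkan, and Metal preview modes so that results can be previewed in the editor. The image may differ from what the actual device produces, so this feature should not be relied on completely.

Before enabling Vulkan, some project settings must be configured; see the official document "Android Vulkan Mobile Renderer".

In addition, UE removed OpenGL support on Windows several versions ago. Although the editor still offers an OpenGL preview option, the underlying rendering is actually done with D3D.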

12.2.2 Deferred Shading

UE的Deferred Shading(延迟着色)是在4.26才加入的功能,使得开发者能够在移动端实现较复杂的光影效果,诸如高质量反射、多动态光照、贴花、高级光照特性。

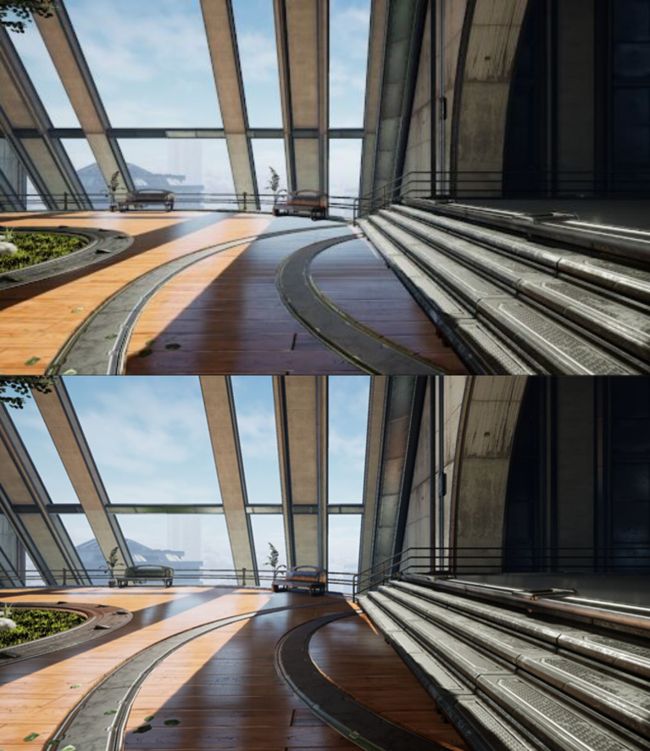

上:前向渲染;下:延迟渲染。

如果要在移动端开启延迟渲染,需要在工程配置目录下的DefaultEngine.ini添加r.Mobile.ShadingPath=1字段,然后重启编辑器。

12.2.3 Ground Truth Ambient Occlusion

Ground Truth Ambient Occlusion (GTAO)是接近现实世界的环境遮挡技术,是阴影的一种补偿,能够遮蔽一部分非直接光照,从而获得良好的软阴影效果。

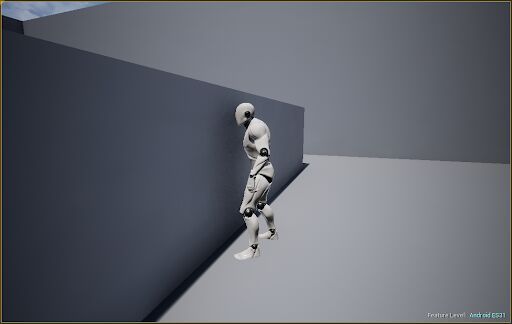

开启了GTAO的效果,注意机器人靠近墙面时,会在墙面留下具有渐变的软阴影效果。

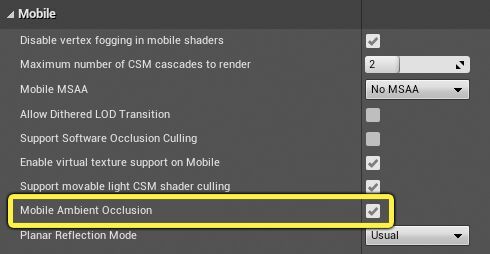

为了开启GTAO,需要勾选以下所示的选项:

此外,GTAO依赖Mobile HDR的选项,为了在对应目标设备开启,还需要在[Platform]Scalability.ini的配置中添加r.Mobile.AmbientOcclusionQuality字段,并且值需要大于0,否则GTAO将被禁用。

值得注意的是,GTAO在Mali设备上存在性能问题,因为它们的最大Compute Shader线程数量少于1024个。

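12.2.4 Dynamic Lighting and Shadow

The lighting features UE implements on mobile are:

- HDR lighting in linear space.

- Directional lightmaps (taking normals into account).

- The sun (directional light) supports distance field shadows plus analytical specular highlights.

- IBL lighting: each object samples the nearest reflection capture, without parallax correction.

- Dynamic objects receive lighting correctly and can also cast shadows.

The types, counts, and shadow support of dynamic lights on mobile are as follows:

| Light type | Max count | Shadows | Notes |
|---|---|---|---|
| Directional light | 1 | CSM | CSM defaults to 2 cascades and supports up to 4. |
| Point light | 4 | Not supported | Point light shadows need a cube shadow map, and single-pass cube shadow rendering (OnePassPointLightShadow) requires geometry shaders (GS), which are only available from SM5. |
| Spot light | 4 | Supported | Disabled by default; must be enabled in the project. |
| Area light | 0 | Not supported | Dynamic area lighting is currently not supported. |

Dynamic spot lights must be explicitly enabled in the project settings:

In the mobile BasePass pixel shader, spot light shadow maps share the same texture sampler as CSM, and spot light shadows and CSM use the same shadow map atlas. CSM is guaranteed enough space, while spot lights are sorted by shadow resolution.

By default the maximum number of visible shadows is limited to 8, but the cap can be changed through r.Mobile.MaxVisibleMovableSpotLightsShadow. Spot light shadow resolution is based on screen size and r.Shadow.TexelsPerPixelSpotlight.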
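The spot light shadow budget described above can be illustrated with a small engine-independent sketch. `SpotLightShadow` and `SelectVisibleSpotShadows` are hypothetical names, not UE APIs, and the choice to keep the highest-resolution shadows first is an assumption; the text only says that spot lights are sorted by shadow resolution.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a movable spot light that requests a shadow.
struct SpotLightShadow {
    int lightId;
    int resolution;  // shadow map resolution in texels
};

// Sorts candidates by resolution (largest first; the direction is an assumption)
// and keeps at most maxVisible of them, mirroring the default cap of 8.
std::vector<SpotLightShadow> SelectVisibleSpotShadows(std::vector<SpotLightShadow> candidates,
                                                      std::size_t maxVisible = 8) {
    std::stable_sort(candidates.begin(), candidates.end(),
                     [](const SpotLightShadow& a, const SpotLightShadow& b) {
                         return a.resolution > b.resolution;
                     });
    if (candidates.size() > maxVisible) {
        candidates.resize(maxVisible);
    }
    return candidates;
}
```

Raising `maxVisible` plays the role that raising r.Mobile.MaxVisibleMovableSpotLightsShadow plays in the engine.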

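In the forward rendering path, the total number of local lights (point lights plus spot lights) cannot exceed 4.

Mobile also supports a special shadow mode, Modulated Shadows, which can only be used with Stationary directional lights. The effect with modulated shadows enabled looks like this:

Modulated shadows also support changing the shadow color and blend factor:

Left: dynamic shadows. Right: modulated shadows.

Mobile shadows likewise support self-shadowing, shadow quality levels (r.ShadowQuality), depth bias, and similar settings.

In addition, mobile uses GGX specular by default. To switch to the legacy specular shading model, change the following setting: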

12.2.5 Pixel Projected Reflection

UE针对移动端做了一个优化版的SSR,被称为Pixel Projected Reflection(PPR),也是复用屏幕空间像素的核心思想。

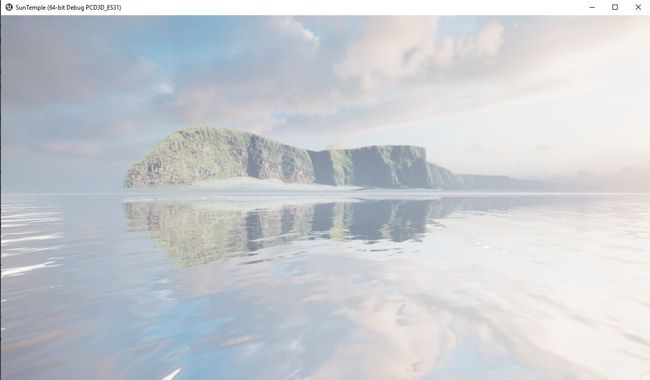

PPR效果图。

为了开启PPR效果,需要满足以下条件:

-

开启MobileHDR选项。

-

r.Mobile.PixelProjectedReflectionQuality的值大于0。 -

设置Project Settings > Mobile and set the Planar Reflection Mode成正确的模式:

Planar Reflection Mode有3个选项:

- Usual:平面反射Actor在所有平台上的作用都是相同的。

- MobilePPR:平面反射Actor在PC/主机平台上正常工作,但在移动平台上使用PPR渲染。

- MobilePPRExclusive:平面反射Actor将只用于移动平台上的PPR,为PC和Console项目留下了使用传统SSR的空间。

默认只有高端移动设备才会在[Project]Scalability.ini开启r.Mobile.PixelProjectedReflectionQuality。
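The three enabling conditions can be combined into one predicate. This is a minimal sketch that only models the rules listed above; `PPRSettings` and `IsPPRActiveOnMobile` are hypothetical names and not part of the engine.

```cpp
// Mirrors the three Planar Reflection Mode options named in the text.
enum class PlanarReflectionMode { Usual, MobilePPR, MobilePPRExclusive };

// Hypothetical bundle of the settings that gate PPR on a mobile device.
struct PPRSettings {
    bool mobileHDR = false;
    int pixelProjectedReflectionQuality = 0;  // r.Mobile.PixelProjectedReflectionQuality
    PlanarReflectionMode mode = PlanarReflectionMode::Usual;
};

// PPR is used on mobile only when all three conditions hold.
bool IsPPRActiveOnMobile(const PPRSettings& s) {
    const bool modeUsesPPR = s.mode == PlanarReflectionMode::MobilePPR ||
                             s.mode == PlanarReflectionMode::MobilePPRExclusive;
    return s.mobileHDR && s.pixelProjectedReflectionQuality > 0 && modeUsesPPR;
}
```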

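12.2.6 Mesh Auto-Instancing

The PC mesh drawing pipeline already supports automatic mesh instancing and draw call merging, which can greatly improve rendering performance. UE 4.27 brings this feature to mobile.

To enable it, open DefaultEngine.ini in the project's config directory and add the following entries:

```ini
r.Mobile.SupportGPUScene=1
r.Mobile.UseGPUSceneTexture=1
```

Restart the editor and wait for the shaders to compile to preview the result.

The GPUSceneTexture setting is needed because the Uniform Buffer on Mali devices is capped at 64 KB, which is not large enough, so Mali devices store GPUScene data in a texture instead of a buffer.

There are some limitations:

- Auto-instancing on mobile mainly benefits CPU-bound projects rather than GPU-bound ones. Enabling it is unlikely to hurt a GPU-bound project, but a significant performance gain is also unlikely.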

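- If a game or application needs a lot of memory, turning off r.Mobile.UseGPUSceneTexture and using a buffer may be more beneficial, although the buffer path does not run correctly on Mali devices. One option is to keep r.Mobile.UseGPUSceneTexture enabled only for Mali devices and turn it off for devices from other GPU vendors.

How effective auto-instancing is depends heavily on the exact specification and target of the project. It is advisable to create a build with auto-instancing enabled and profile it to determine whether it brings a substantial performance gain.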

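12.2.7 Post Processing

Mobile devices suffer from slower dependent texture reads, limited hardware features, special hardware architectures, extra render target resolves, and limited bandwidth. As a result, post-processing is relatively expensive on mobile, and in extreme cases it can stall the rendering pipeline.

Even so, games and applications with high visual requirements still rely heavily on the expressive power of post-processing to raise image quality by several notches. UE does not restrict developers from using it.

To enable post-processing, the MobileHDR option must be enabled first:

Once it is enabled, the various effects can be configured in a Post Process Volume.

The post-processing effects supported on mobile include Mobile Tonemapper, Color Grading, Lens, Bloom, Dirt Mask, Auto Exposure, Lens Flares, Depth of Field, and more.

For better performance, the official recommendation is to enable only Bloom and TAA on mobile.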

12.2.8 其它特性和限制

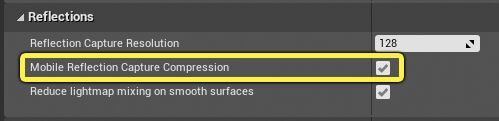

- Reflection Capture Compression

移动端支持反射捕捉器组件(Reflection Capture Component)的压缩,可以减少Reflection Capture运行时的内存和带宽,提升渲染效率。需要在工程配置中开启:

开启之后,默认使用ETC2进行压缩。另外,也可以针对每个Reflection Capture Component进行调整:

- 材质特性

移动平台上的材质(特性级别Open ES 3.1)使用与其他平台相同的基于节点的创建过程,并且绝大多数节点在移动端都支持。

移动平台支持的材质属性有:BaseColor、Roughness、Metallic、Specular、Normal、Emissive、Refraction,但不支持Scene Color表达式、Tessellation输入、次表面散射着色模型。

移动平台支持的材质存在一些限制:

- 由于硬件限制,只能使用16个纹理采样器。

- 只有DefaultLit和Unlit着色模型可用。

- 自定义UV应该用来避免依赖纹理读取(没有纹理uv的数学计算)。

- 半透明和Masked材质是及其耗性能, 建议尽量使用不透明材质。

- 深度淡出(Depth fade)可以在iOS平台的半透明材质中使用,但在硬件不支持从深度缓冲区获取数据的平台上,是不受支持的,将导致不可接受的性能成本。

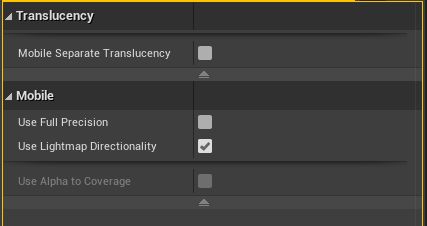

材质属性面板存在一些针对移动端的特殊选项:

这些属性的说明如下:

-

Mobile Separate Translucency:是否在移动端开启单独的半透明渲染纹理。

-

Use Full Precision:是否使用全精度,如果是,可以减少带宽占用和能耗,提升性能,但可能会出现远处物体的瑕疵:

左:全精度材质;右:半精度材质,远处的太阳出现了瑕疵。

-

Use Lightmap Directionality:是否开启光照图的方向性,若勾选,会考虑光照图的方向和像素法线,但会提升性能消耗。

-

Use Alpha to Coverage:是否为Masked材质开启MSAA抗锯齿,若勾选,会开启MSAA。

-

Fully Rough:是否完全粗糙,如果勾选,将极大提升此材质的渲染效率。

此外,移动端支持的网格类型有:

- Skeletal Mesh

- Static Mesh

- Landscape

- CPU particle sprites, particle meshes

除上述类型以外的其它都不被支持。其它限制还有:

- 单个网格最多只能到65k,因为顶点索引只有16位。

- 单个Skeletal Mesh的骨骼数量必须在75个以内,因为受硬件性能的限制。
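The two mesh limits above lend themselves to a simple content check. The following is a sketch only: `MeshStats`, `FitsMobileLimits`, and both constants are hypothetical names, and 65535 is used as a conservative reading of the "65k" figure for 16-bit indices.

```cpp
#include <cstdint>
#include <limits>

// Limits quoted in the text; the names are illustrative and not UE constants.
constexpr std::uint32_t kMaxVerticesPerMesh = std::numeric_limits<std::uint16_t>::max();
constexpr std::uint32_t kMaxBonesPerSkeletalMesh = 75;

struct MeshStats {
    std::uint32_t vertexCount;
    std::uint32_t boneCount;  // 0 for static meshes
};

// Returns true when the mesh stays within both mobile limits.
bool FitsMobileLimits(const MeshStats& mesh) {
    return mesh.vertexCount <= kMaxVerticesPerMesh &&
           mesh.boneCount <= kMaxBonesPerSkeletalMesh;
}
```

A check like this could run in an asset validation step so that oversized meshes are flagged before they reach a device.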

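12.3 FMobileSceneRenderer

FMobileSceneRenderer inherits from FSceneRenderer and drives the scene rendering flow on mobile. Its PC counterpart is FDeferredShadingSceneRenderer, which also inherits from FSceneRenderer. Their inheritance diagram is as follows:

Several earlier chapters have already covered FDeferredShadingSceneRenderer, whose rendering flow is particularly complex and involves elaborate lighting and rendering steps. By comparison, the logic and steps of FMobileSceneRenderer are much simpler. Below is a RenderDoc frame capture:

The capture mainly contains the InitViews, ShadowDepths, PrePass, BasePass, OcclusionTest, ShadowProjectionOnOpaque, Translucency, and PostProcessing steps. All of these steps also exist on PC, although their implementations may differ, as the following sections show.

12.3.1 Main Renderer Flow

The main flow of the mobile scene renderer likewise lives in FMobileSceneRenderer::Render. The code and annotations are as follows: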

// Engine\Source\Runtime\Renderer\Private\MobileShadingRenderer.cpp

void FMobileSceneRenderer::Render(FRHICommandListImmediate& RHICmdList)

{

// Update primitive scene infos.

Scene->UpdateAllPrimitiveSceneInfos(RHICmdList);

// Prepare the view rects for rendering.

PrepareViewRectsForRendering(RHICmdList);

// Prepare sky atmosphere data.

if (ShouldRenderSkyAtmosphere(Scene, ViewFamily.EngineShowFlags))

{

for (int32 LightIndex = 0; LightIndex < NUM_ATMOSPHERE_LIGHTS; ++LightIndex)

{

if (Scene->AtmosphereLights[LightIndex])

{

PrepareSunLightProxy(*Scene->GetSkyAtmosphereSceneInfo(), LightIndex, *Scene->AtmosphereLights[LightIndex]);

}

}

}

else

{

Scene->ResetAtmosphereLightsProperties();

}

if(!ViewFamily.EngineShowFlags.Rendering)

{

return;

}

// Wait for occlusion tests.

WaitOcclusionTests(RHICmdList);

FRHICommandListExecutor::GetImmediateCommandList().PollOcclusionQueries();

RHICmdList.ImmediateFlush(EImmediateFlushType::DispatchToRHIThread);

// Initialize views, find visible primitives, and prepare the RTs, buffers, and other data needed for rendering.

InitViews(RHICmdList);

if (GRHINeedsExtraDeletionLatency || !GRHICommandList.Bypass())

{

QUICK_SCOPE_CYCLE_COUNTER(STAT_FMobileSceneRenderer_PostInitViewsFlushDel);

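// Occlusion queries may stall here, so keep the RHI thread and GPU busy while waiting. Also, when an RHI thread is used, this is the only place where pending deletes are processed.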

FRHICommandListExecutor::GetImmediateCommandList().PollOcclusionQueries();

FRHICommandListExecutor::GetImmediateCommandList().ImmediateFlush(EImmediateFlushType::FlushRHIThreadFlushResources);

}

GEngine->GetPreRenderDelegate().Broadcast();

// Commit the global dynamic buffers before rendering starts.

DynamicIndexBuffer.Commit();

DynamicVertexBuffer.Commit();

DynamicReadBuffer.Commit();

RHICmdList.ImmediateFlush(EImmediateFlushType::DispatchToRHIThread);

RHICmdList.SetCurrentStat(GET_STATID(STAT_CLMM_SceneSim));

if (ViewFamily.bLateLatchingEnabled)

{

BeginLateLatching(RHICmdList);

}

FSceneRenderTargets& SceneContext = FSceneRenderTargets::Get(RHICmdList);

// Virtual texturing.

if (bUseVirtualTexturing)

{

SCOPED_GPU_STAT(RHICmdList, VirtualTextureUpdate);

FVirtualTextureSystem::Get().Update(RHICmdList, FeatureLevel, Scene);

// Clear virtual texture feedback to default value

FUnorderedAccessViewRHIRef FeedbackUAV = SceneContext.GetVirtualTextureFeedbackUAV();

RHICmdList.Transition(FRHITransitionInfo(FeedbackUAV, ERHIAccess::SRVMask, ERHIAccess::UAVMask));

RHICmdList.ClearUAVUint(FeedbackUAV, FUintVector4(~0u, ~0u, ~0u, ~0u));

RHICmdList.Transition(FRHITransitionInfo(FeedbackUAV, ERHIAccess::UAVMask, ERHIAccess::UAVMask));

RHICmdList.BeginUAVOverlap(FeedbackUAV);

}

// Sorted light info.

FSortedLightSetSceneInfo SortedLightSet;

// Deferred shading.

if (bDeferredShading)

{

// Gather and sort lights.

GatherAndSortLights(SortedLightSet);

int32 NumReflectionCaptures = Views[0].NumBoxReflectionCaptures + Views[0].NumSphereReflectionCaptures;

bool bCullLightsToGrid = (NumReflectionCaptures > 0 || GMobileUseClusteredDeferredShading != 0);

FRDGBuilder GraphBuilder(RHICmdList);

// Compute the light grid.

ComputeLightGrid(GraphBuilder, bCullLightsToGrid, SortedLightSet);

GraphBuilder.Execute();

}

// Generate the sky/atmosphere LUTs.

const bool bShouldRenderSkyAtmosphere = ShouldRenderSkyAtmosphere(Scene, ViewFamily.EngineShowFlags);

if (bShouldRenderSkyAtmosphere)

{

FRDGBuilder GraphBuilder(RHICmdList);

RenderSkyAtmosphereLookUpTables(GraphBuilder);

GraphBuilder.Execute();

}

// Notify the FX system that the scene is about to be rendered.

if (FXSystem && ViewFamily.EngineShowFlags.Particles)

{

FXSystem->PreRender(RHICmdList, NULL, !Views[0].bIsPlanarReflection);

if (FGPUSortManager* GPUSortManager = FXSystem->GetGPUSortManager())

{

GPUSortManager->OnPreRender(RHICmdList);

}

}

// Poll occlusion queries.

FRHICommandListExecutor::GetImmediateCommandList().PollOcclusionQueries();

RHICmdList.ImmediateFlush(EImmediateFlushType::DispatchToRHIThread);

RHICmdList.SetCurrentStat(GET_STATID(STAT_CLMM_Shadows));

// Render shadow depth maps.

RenderShadowDepthMaps(RHICmdList);

FRHICommandListExecutor::GetImmediateCommandList().PollOcclusionQueries();

RHICmdList.ImmediateFlush(EImmediateFlushType::DispatchToRHIThread);

// Collect the view list.

TArray<const FViewInfo*> ViewList;

for (int32 ViewIndex = 0; ViewIndex < Views.Num(); ViewIndex++)

{

ViewList.Add(&Views[ViewIndex]);

}

// Render custom depth.

if (bShouldRenderCustomDepth)

{

FRDGBuilder GraphBuilder(RHICmdList);

FSceneTextureShaderParameters SceneTextures = CreateSceneTextureShaderParameters(GraphBuilder, Views[0].GetFeatureLevel(), ESceneTextureSetupMode::None);

RenderCustomDepthPass(GraphBuilder, SceneTextures);

GraphBuilder.Execute();

}

// Render the depth pre-pass.

if (bIsFullPrepassEnabled)

{

// SDF shadows and AO need a full depth pre-pass.

FRHIRenderPassInfo DepthPrePassRenderPassInfo(

SceneContext.GetSceneDepthSurface(),

EDepthStencilTargetActions::ClearDepthStencil_StoreDepthStencil);

DepthPrePassRenderPassInfo.NumOcclusionQueries = ComputeNumOcclusionQueriesToBatch();

DepthPrePassRenderPassInfo.bOcclusionQueries = DepthPrePassRenderPassInfo.NumOcclusionQueries != 0;

RHICmdList.BeginRenderPass(DepthPrePassRenderPassInfo, TEXT("DepthPrepass"));

RHICmdList.SetCurrentStat(GET_STATID(STAT_CLM_MobilePrePass));

// Render the full depth pre-pass.

RenderPrePass(RHICmdList);

// Issue occlusion queries.

RHICmdList.SetCurrentStat(GET_STATID(STAT_CLMM_Occlusion));

RenderOcclusion(RHICmdList);

RHICmdList.EndRenderPass();

// SDF shadowing.

if (bRequiresDistanceFieldShadowingPass)

{

CSV_SCOPED_TIMING_STAT_EXCLUSIVE(RenderSDFShadowing);

RenderSDFShadowing(RHICmdList);

}

// HZB.

if (bShouldRenderHZB)

{

RenderHZB(RHICmdList, SceneContext.SceneDepthZ);

}

// AO.

if (bRequiresAmbientOcclusionPass)

{

RenderAmbientOcclusion(RHICmdList, SceneContext.SceneDepthZ);

}

}

FRHITexture* SceneColor = nullptr;

// Deferred shading path.

if (bDeferredShading)

{

SceneColor = RenderDeferred(RHICmdList, ViewList, SortedLightSet);

}

// Forward shading path.

else

{

SceneColor = RenderForward(RHICmdList, ViewList);

}

// Render the velocity buffer.

if (bShouldRenderVelocities)

{

FRDGBuilder GraphBuilder(RHICmdList);

FRDGTextureMSAA SceneDepthTexture = RegisterExternalTextureMSAA(GraphBuilder, SceneContext.SceneDepthZ);

FRDGTextureRef VelocityTexture = TryRegisterExternalTexture(GraphBuilder, SceneContext.SceneVelocity);

if (VelocityTexture != nullptr)

{

AddClearRenderTargetPass(GraphBuilder, VelocityTexture);

}

// Velocity of movable opaque objects.

AddSetCurrentStatPass(GraphBuilder, GET_STATID(STAT_CLMM_Velocity));

RenderVelocities(GraphBuilder, SceneDepthTexture.Resolve, VelocityTexture, FSceneTextureShaderParameters(), EVelocityPass::Opaque, false);

AddSetCurrentStatPass(GraphBuilder, GET_STATID(STAT_CLMM_AfterVelocity));

// Velocity of translucent objects.

AddSetCurrentStatPass(GraphBuilder, GET_STATID(STAT_CLMM_TranslucentVelocity));

RenderVelocities(GraphBuilder, SceneDepthTexture.Resolve, VelocityTexture, GetSceneTextureShaderParameters(CreateMobileSceneTextureUniformBuffer(GraphBuilder, EMobileSceneTextureSetupMode::SceneColor)), EVelocityPass::Translucent, false);

GraphBuilder.Execute();

}

// Post-opaque scene rendering logic.

{

FRendererModule& RendererModule = static_cast<FRendererModule&>(GetRendererModule());

FRDGBuilder GraphBuilder(RHICmdList);

RendererModule.RenderPostOpaqueExtensions(GraphBuilder, Views, SceneContext);

if (FXSystem && Views.IsValidIndex(0))

{

AddUntrackedAccessPass(GraphBuilder, [this](FRHICommandListImmediate& RHICmdList)

{

check(RHICmdList.IsOutsideRenderPass());

FXSystem->PostRenderOpaque(

RHICmdList,

Views[0].ViewUniformBuffer,

nullptr,

nullptr,

Views[0].AllowGPUParticleUpdate()

);

if (FGPUSortManager* GPUSortManager = FXSystem->GetGPUSortManager())

{

GPUSortManager->OnPostRenderOpaque(RHICmdList);

}

});

}

GraphBuilder.Execute();

}

// Flush/submit the command buffer.

if (bSubmitOffscreenRendering)

{

RHICmdList.SubmitCommandsHint();

RHICmdList.ImmediateFlush(EImmediateFlushType::DispatchToRHIThread);

}

// Transition scene color to SRV so that later passes can read it.

if (!bGammaSpace || bRenderToSceneColor)

{

RHICmdList.Transition(FRHITransitionInfo(SceneColor, ERHIAccess::Unknown, ERHIAccess::SRVMask));

}

if (bDeferredShading)

{

// Release the original reference on the scene render targets.

SceneContext.AdjustGBufferRefCount(RHICmdList, -1);

}

RHICmdList.SetCurrentStat(GET_STATID(STAT_CLMM_Post));

// Virtual texturing.

if (bUseVirtualTexturing)

{

SCOPED_GPU_STAT(RHICmdList, VirtualTextureUpdate);

// No pass after this should make VT page requests

RHICmdList.EndUAVOverlap(SceneContext.VirtualTextureFeedbackUAV);

RHICmdList.Transition(FRHITransitionInfo(SceneContext.VirtualTextureFeedbackUAV, ERHIAccess::UAVMask, ERHIAccess::SRVMask));

TArray<FIntRect, TInlineAllocator<4>> ViewRects;

ViewRects.AddUninitialized(Views.Num());

for (int32 ViewIndex = 0; ViewIndex < Views.Num(); ++ViewIndex)

{

ViewRects[ViewIndex] = Views[ViewIndex].ViewRect;

}

FVirtualTextureFeedbackBufferDesc Desc;

Desc.Init2D(SceneContext.GetBufferSizeXY(), ViewRects, SceneContext.GetVirtualTextureFeedbackScale());

SubmitVirtualTextureFeedbackBuffer(RHICmdList, SceneContext.VirtualTextureFeedback, Desc);

}

FMemMark Mark(FMemStack::Get());

FRDGBuilder GraphBuilder(RHICmdList);

FRDGTextureRef ViewFamilyTexture = TryCreateViewFamilyTexture(GraphBuilder, ViewFamily);

// Resolve the scene.

if (ViewFamily.bResolveScene)

{

if (!bGammaSpace || bRenderToSceneColor)

{

// Finish rendering for each view, or the full stereo buffer if enabled.

{

RDG_EVENT_SCOPE(GraphBuilder, "PostProcessing");

SCOPE_CYCLE_COUNTER(STAT_FinishRenderViewTargetTime);

TArray<TRDGUniformBufferRef<FMobileSceneTextureUniformParameters>, TInlineAllocator<1, SceneRenderingAllocator>> MobileSceneTexturesPerView;

MobileSceneTexturesPerView.SetNumZeroed(Views.Num());

const auto SetupMobileSceneTexturesPerView = [&]()

{

for (int32 ViewIndex = 0; ViewIndex < Views.Num(); ++ViewIndex)

{

EMobileSceneTextureSetupMode SetupMode = EMobileSceneTextureSetupMode::SceneColor;

if (Views[ViewIndex].bCustomDepthStencilValid)

{

SetupMode |= EMobileSceneTextureSetupMode::CustomDepth;

}

if (bShouldRenderVelocities)

{

SetupMode |= EMobileSceneTextureSetupMode::SceneVelocity;

}

MobileSceneTexturesPerView[ViewIndex] = CreateMobileSceneTextureUniformBuffer(GraphBuilder, SetupMode);

}

};

SetupMobileSceneTexturesPerView();

FMobilePostProcessingInputs PostProcessingInputs;

PostProcessingInputs.ViewFamilyTexture = ViewFamilyTexture;

// Render post-processing.

for (int32 ViewIndex = 0; ViewIndex < Views.Num(); ViewIndex++)

{

RDG_EVENT_SCOPE_CONDITIONAL(GraphBuilder, Views.Num() > 1, "View%d", ViewIndex);

PostProcessingInputs.SceneTextures = MobileSceneTexturesPerView[ViewIndex];

AddMobilePostProcessingPasses(GraphBuilder, Views[ViewIndex], PostProcessingInputs, NumMSAASamples > 1);

}

}

}

}

GEngine->GetPostRenderDelegate().Broadcast();

RHICmdList.SetCurrentStat(GET_STATID(STAT_CLMM_SceneEnd));

if (bShouldRenderVelocities)

{

SceneContext.SceneVelocity.SafeRelease();

}

if (ViewFamily.bLateLatchingEnabled)

{

EndLateLatching(RHICmdList, Views[0]);

}

RenderFinish(GraphBuilder, ViewFamilyTexture);

GraphBuilder.Execute();

// Poll occlusion queries.

FRHICommandListExecutor::GetImmediateCommandList().PollOcclusionQueries();

FRHICommandListExecutor::GetImmediateCommandList().ImmediateFlush(EImmediateFlushType::DispatchToRHIThread);

}

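Readers of "Dissecting the Unreal Rendering System (04): Deferred Rendering Pipeline" will recognize that mobile scene rendering trims away a great many steps and is roughly a subset of the PC scene renderer. To suit mobile-specific GPU architectures, it also differs from PC in places, which are analyzed in detail later. The main steps and flow of mobile scene rendering are as follows:

A few notes on the flowchart above:

- The flowchart nodes bDeferredShading and bDeferredShading2 are the same variable; they are drawn separately only to avoid mermaid syntax errors.

- Nodes marked with * are conditional steps that are not always executed.

UE 4.26 added the mobile deferred rendering pipeline, so the code above has a forward branch, RenderForward, and a deferred branch, RenderDeferred. Both return the rendered SceneColor.

Mobile also supports rendering features such as primitive GPU Scene, SDF shadows, AO, sky atmosphere, virtual texturing, and occlusion culling.

Since UE 4.26 the rendering system has used RDG extensively, and the mobile scene renderer is no exception. The code above declares several FRDGBuilder instances, used to compute the light grid and to render the sky atmosphere LUTs, custom depth, velocity buffer, post-opaque extensions, and post-processing. Each is a relatively independent functional module or rendering stage.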
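The pattern repeated in the code above is "declare a builder, add passes, call Execute()". The sketch below illustrates only that record-then-execute idea with a toy class; `ToyGraphBuilder` is hypothetical and is not the FRDGBuilder API, which additionally tracks resources, lifetimes, and barriers.

```cpp
#include <functional>
#include <string>
#include <utility>
#include <vector>

// Toy stand-in for a render-graph builder: passes are recorded first and
// only run when Execute() is called.
class ToyGraphBuilder {
public:
    void AddPass(std::string name, std::function<void()> body) {
        passes_.push_back(Pass{std::move(name), std::move(body)});
    }

    // Runs all recorded passes in submission order and returns their names.
    std::vector<std::string> Execute() {
        std::vector<std::string> executed;
        for (Pass& pass : passes_) {
            pass.body();
            executed.push_back(pass.name);
        }
        passes_.clear();
        return executed;
    }

private:
    struct Pass {
        std::string name;
        std::function<void()> body;
    };
    std::vector<Pass> passes_;
};
```

Because nothing runs until `Execute()`, each builder in the renderer forms a self-contained stage, which matches the way the renderer scopes one builder per feature block.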

12.3.2 RenderForward

RenderForward handles the forward rendering branch of the mobile scene renderer. Its code and annotations are as follows:

FRHITexture* FMobileSceneRenderer::RenderForward(FRHICommandListImmediate& RHICmdList, const TArrayView<const FViewInfo*> ViewList)

{

const FViewInfo& View = *ViewList[0];

FSceneRenderTargets& SceneContext = FSceneRenderTargets::Get(RHICmdList);

FRHITexture* SceneColor = nullptr;

FRHITexture* SceneColorResolve = nullptr;

FRHITexture* SceneDepth = nullptr;

ERenderTargetActions ColorTargetAction = ERenderTargetActions::Clear_Store;

EDepthStencilTargetActions DepthTargetAction = EDepthStencilTargetActions::ClearDepthStencil_DontStoreDepthStencil;

// Whether mobile MSAA is enabled.

bool bMobileMSAA = NumMSAASamples > 1 && SceneContext.GetSceneColorSurface()->GetNumSamples() > 1;

// Whether mobile multi-view is enabled.

static const auto CVarMobileMultiView = IConsoleManager::Get().FindTConsoleVariableDataInt(TEXT("vr.MobileMultiView"));

const bool bIsMultiViewApplication = (CVarMobileMultiView && CVarMobileMultiView->GetValueOnAnyThread() != 0);

// Gamma-space rendering branch.

if (bGammaSpace && !bRenderToSceneColor)

{

// With MSAA, render to the SceneContext scene color and resolve into the view family render target.

if (bMobileMSAA)

{

SceneColor = SceneContext.GetSceneColorSurface();

SceneColorResolve = ViewFamily.RenderTarget->GetRenderTargetTexture();

ColorTargetAction = ERenderTargetActions::Clear_Resolve;

RHICmdList.Transition(FRHITransitionInfo(SceneColorResolve, ERHIAccess::Unknown, ERHIAccess::RTV | ERHIAccess::ResolveDst));

}

// Without MSAA, render directly to the view family render target.

else

{

SceneColor = ViewFamily.RenderTarget->GetRenderTargetTexture();

RHICmdList.Transition(FRHITransitionInfo(SceneColor, ERHIAccess::Unknown, ERHIAccess::RTV));

}

SceneDepth = SceneContext.GetSceneDepthSurface();

}

// Linear space, or rendering to scene color.

else

{

SceneColor = SceneContext.GetSceneColorSurface();

if (bMobileMSAA)

{

SceneColorResolve = SceneContext.GetSceneColorTexture();

ColorTargetAction = ERenderTargetActions::Clear_Resolve;

RHICmdList.Transition(FRHITransitionInfo(SceneColorResolve, ERHIAccess::Unknown, ERHIAccess::RTV | ERHIAccess::ResolveDst));

}

else

{

SceneColorResolve = nullptr;

ColorTargetAction = ERenderTargetActions::Clear_Store;

}

SceneDepth = SceneContext.GetSceneDepthSurface();

if (bRequiresMultiPass)

{

// store targets after opaque so translucency render pass can be restarted

ColorTargetAction = ERenderTargetActions::Clear_Store;

DepthTargetAction = EDepthStencilTargetActions::ClearDepthStencil_StoreDepthStencil;

}

if (bKeepDepthContent)

{

// store depth if post-processing/capture needs it

DepthTargetAction = EDepthStencilTargetActions::ClearDepthStencil_StoreDepthStencil;

}

}

// Depth texture actions when a full pre-pass has already run.

if (bIsFullPrepassEnabled)

{

ERenderTargetActions DepthTarget = MakeRenderTargetActions(ERenderTargetLoadAction::ELoad, GetStoreAction(GetDepthActions(DepthTargetAction)));

ERenderTargetActions StencilTarget = MakeRenderTargetActions(ERenderTargetLoadAction::ELoad, GetStoreAction(GetStencilActions(DepthTargetAction)));

DepthTargetAction = MakeDepthStencilTargetActions(DepthTarget, StencilTarget);

}

FRHITexture* ShadingRateTexture = nullptr;

if (!View.bIsSceneCapture && !View.bIsReflectionCapture)

{

TRefCountPtr<IPooledRenderTarget> ShadingRateTarget = GVRSImageManager.GetMobileVariableRateShadingImage(ViewFamily);

if (ShadingRateTarget.IsValid())

{

ShadingRateTexture = ShadingRateTarget->GetRenderTargetItem().ShaderResourceTexture;

}

}

// Scene color render pass info.

FRHIRenderPassInfo SceneColorRenderPassInfo(

SceneColor,

ColorTargetAction,

SceneColorResolve,

SceneDepth,

DepthTargetAction,

nullptr, // we never resolve scene depth on mobile

ShadingRateTexture,

VRSRB_Sum,

FExclusiveDepthStencil::DepthWrite_StencilWrite

);

SceneColorRenderPassInfo.SubpassHint = ESubpassHint::DepthReadSubpass;

if (!bIsFullPrepassEnabled)

{

SceneColorRenderPassInfo.NumOcclusionQueries = ComputeNumOcclusionQueriesToBatch();

SceneColorRenderPassInfo.bOcclusionQueries = SceneColorRenderPassInfo.NumOcclusionQueries != 0;

}

// If the scene color is not multi-view but the application is, render as a single-view multi-view for the shaders.

SceneColorRenderPassInfo.MultiViewCount = View.bIsMobileMultiViewEnabled ? 2 : (bIsMultiViewApplication ? 1 : 0);

// Begin rendering scene color.

RHICmdList.BeginRenderPass(SceneColorRenderPassInfo, TEXT("SceneColorRendering"));

if (GIsEditor && !View.bIsSceneCapture)

{

DrawClearQuad(RHICmdList, Views[0].BackgroundColor);

}

if (!bIsFullPrepassEnabled)

{

RHICmdList.SetCurrentStat(GET_STATID(STAT_CLM_MobilePrePass));

// Render the depth pre-pass.

RenderPrePass(RHICmdList);

}

RHICmdList.SetCurrentStat(GET_STATID(STAT_CLMM_Opaque));

// Render the base pass: opaque and masked objects.

RenderMobileBasePass(RHICmdList, ViewList);

RHICmdList.ImmediateFlush(EImmediateFlushType::DispatchToRHIThread);

// Debug view modes.

#if !(UE_BUILD_SHIPPING || UE_BUILD_TEST)

if (ViewFamily.UseDebugViewPS())

{

// Here we use the base pass depth result to get z culling for opaque and masque.

// The color needs to be cleared at this point since shader complexity renders in additive.

DrawClearQuad(RHICmdList, FLinearColor::Black);

RenderMobileDebugView(RHICmdList, ViewList);

RHICmdList.ImmediateFlush(EImmediateFlushType::DispatchToRHIThread);

}

#endif // !(UE_BUILD_SHIPPING || UE_BUILD_TEST)

const bool bAdrenoOcclusionMode = CVarMobileAdrenoOcclusionMode.GetValueOnRenderThread() != 0;

if (!bIsFullPrepassEnabled)

{

// Occlusion culling.

if (!bAdrenoOcclusionMode)

{

// Issue occlusion queries.

RHICmdList.SetCurrentStat(GET_STATID(STAT_CLMM_Occlusion));

RenderOcclusion(RHICmdList);

RHICmdList.ImmediateFlush(EImmediateFlushType::DispatchToRHIThread);

}

}

// Post-base-pass callbacks for view extensions (plugin rendering).

{

CSV_SCOPED_TIMING_STAT_EXCLUSIVE(ViewExtensionPostRenderBasePass);

QUICK_SCOPE_CYCLE_COUNTER(STAT_FMobileSceneRenderer_ViewExtensionPostRenderBasePass);

for (int32 ViewExt = 0; ViewExt < ViewFamily.ViewExtensions.Num(); ++ViewExt)

{

for (int32 ViewIndex = 0; ViewIndex < ViewFamily.Views.Num(); ++ViewIndex)

{

ViewFamily.ViewExtensions[ViewExt]->PostRenderBasePass_RenderThread(RHICmdList, Views[ViewIndex]);

}

}

}

// Split the pass if translucency needs its own render pass or pixel projected reflection is required.

if (bRequiresMultiPass || bRequiresPixelProjectedPlanarRelfectionPass)

{

RHICmdList.EndRenderPass();

}

RHICmdList.SetCurrentStat(GET_STATID(STAT_CLMM_Translucency));

// Restart the translucency render pass if needed.

if (bRequiresMultiPass || bRequiresPixelProjectedPlanarRelfectionPass)

{

check(RHICmdList.IsOutsideRenderPass());

// Copy scene depth if the hardware cannot read and write the same depth buffer.

ConditionalResolveSceneDepth(RHICmdList, View);

if (bRequiresPixelProjectedPlanarRelfectionPass)

{

const FPlanarReflectionSceneProxy* PlanarReflectionSceneProxy = Scene ? Scene->GetForwardPassGlobalPlanarReflection() : nullptr;

RenderPixelProjectedReflection(RHICmdList, SceneContext, PlanarReflectionSceneProxy);

FRHITransitionInfo TranslucentRenderPassTransitions[] = {

FRHITransitionInfo(SceneColor, ERHIAccess::SRVMask, ERHIAccess::RTV),

FRHITransitionInfo(SceneDepth, ERHIAccess::SRVMask, ERHIAccess::DSVWrite)

};

RHICmdList.Transition(MakeArrayView(TranslucentRenderPassTransitions, UE_ARRAY_COUNT(TranslucentRenderPassTransitions)));

}

DepthTargetAction = EDepthStencilTargetActions::LoadDepthStencil_DontStoreDepthStencil;

FExclusiveDepthStencil::Type ExclusiveDepthStencil = FExclusiveDepthStencil::DepthRead_StencilRead;

if (bModulatedShadowsInUse)

{

ExclusiveDepthStencil = FExclusiveDepthStencil::DepthRead_StencilWrite;

}

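// Opaque meshes used for mobile pixel projected reflection must write depth to the depth RT, because the mesh is rendered only once when the quality level is at or below BestPerformance.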

if (IsMobilePixelProjectedReflectionEnabled(View.GetShaderPlatform())

&& GetMobilePixelProjectedReflectionQuality() == EMobilePixelProjectedReflectionQuality::BestPerformance)

{

ExclusiveDepthStencil = FExclusiveDepthStencil::DepthWrite_StencilWrite;

}

if (bKeepDepthContent && !bMobileMSAA)

{

DepthTargetAction = EDepthStencilTargetActions::LoadDepthStencil_StoreDepthStencil;

}

#if PLATFORM_HOLOLENS

if (bShouldRenderDepthToTranslucency)

{

ExclusiveDepthStencil = FExclusiveDepthStencil::DepthWrite_StencilWrite;

}

#endif

// Translucency render pass.

FRHIRenderPassInfo TranslucentRenderPassInfo(

SceneColor,

SceneColorResolve ? ERenderTargetActions::Load_Resolve : ERenderTargetActions::Load_Store,

SceneColorResolve,

SceneDepth,

DepthTargetAction,

nullptr,

ShadingRateTexture,

VRSRB_Sum,

ExclusiveDepthStencil

);

TranslucentRenderPassInfo.NumOcclusionQueries = 0;

TranslucentRenderPassInfo.bOcclusionQueries = false;

TranslucentRenderPassInfo.SubpassHint = ESubpassHint::DepthReadSubpass;

// Begin rendering translucency.

RHICmdList.BeginRenderPass(TranslucentRenderPassInfo, TEXT("SceneColorTranslucencyRendering"));

}

// Scene depth is now read-only and can be fetched.

RHICmdList.NextSubpass();

if (!View.bIsPlanarReflection)

{

// Render decals.

if (ViewFamily.EngineShowFlags.Decals)

{

CSV_SCOPED_TIMING_STAT_EXCLUSIVE(RenderDecals);

RenderDecals(RHICmdList);

}

// Render modulated shadow projections.

if (ViewFamily.EngineShowFlags.DynamicShadows)

{

CSV_SCOPED_TIMING_STAT_EXCLUSIVE(RenderShadowProjections);

RenderModulatedShadowProjections(RHICmdList);

}

}

// Draw translucency.

if (ViewFamily.EngineShowFlags.Translucency)

{

CSV_SCOPED_TIMING_STAT_EXCLUSIVE(RenderTranslucency);

SCOPE_CYCLE_COUNTER(STAT_TranslucencyDrawTime);

RenderTranslucency(RHICmdList, ViewList);

FRHICommandListExecutor::GetImmediateCommandList().PollOcclusionQueries();

RHICmdList.ImmediateFlush(EImmediateFlushType::DispatchToRHIThread);

}

if (!bIsFullPrepassEnabled)

{

// Adreno occlusion mode.

if (bAdrenoOcclusionMode)

{

RHICmdList.SetCurrentStat(GET_STATID(STAT_CLMM_Occlusion));

// flush

RHICmdList.SubmitCommandsHint();

bSubmitOffscreenRendering = false; // submit once

// Issue occlusion queries

RenderOcclusion(RHICmdList);

RHICmdList.ImmediateFlush(EImmediateFlushType::DispatchToRHIThread);

}

}

// Pre-tonemap before the MSAA resolve (iOS only).

if (!bGammaSpace)

{

PreTonemapMSAA(RHICmdList);

}

// End scene color rendering.

RHICmdList.EndRenderPass();

// Return the resolve texture when MSAA is enabled, otherwise the scene color.

return SceneColorResolve ? SceneColorResolve : SceneColor;

}

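The main steps of mobile forward rendering resemble those on PC: PrePass, BasePass, special passes (decals, AO, occlusion culling, and so on), and translucency, rendered in that order. Their flowchart is as follows:

Occlusion culling is GPU-vendor dependent. For example, Qualcomm Adreno GPUs require occlusion queries to be issued between the Flush of rendering commands and the Switch FBO:

Render Opaque -> Render Translucent -> Flush -> Render Queries -> Switch FBO

UE follows this Adreno-specific requirement and handles occlusion culling specially for these chips.

Adreno chips support a hybrid of the TBDR-style Bin mode and the ordinary Direct mode, and automatically switch to Direct mode for occlusion queries to lower their cost. If the queries are not submitted between the Flush and the Switch FBO, the whole rendering pipeline stalls and rendering performance drops.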
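Where the occlusion queries end up in the frame can be summarized by a small decision function. This is a sketch derived from the branches in the Render and RenderForward listings above; `OcclusionStage` and `ChooseOcclusionStage` are hypothetical names introduced for illustration.

```cpp
// Points in the frame at which the listings above call RenderOcclusion.
enum class OcclusionStage {
    InDepthPrePass,    // inside the full depth pre-pass
    AfterBasePass,     // right after opaque geometry (default path)
    AfterTranslucency  // Adreno mode: after translucency and a command flush
};

// Mirrors the bIsFullPrepassEnabled / bAdrenoOcclusionMode branches.
OcclusionStage ChooseOcclusionStage(bool fullPrepassEnabled, bool adrenoOcclusionMode) {
    if (fullPrepassEnabled) {
        return OcclusionStage::InDepthPrePass;
    }
    return adrenoOcclusionMode ? OcclusionStage::AfterTranslucency
                               : OcclusionStage::AfterBasePass;
}
```

Note that the Adreno flag only matters when no full pre-pass is used; with a full pre-pass the queries are always issued there.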

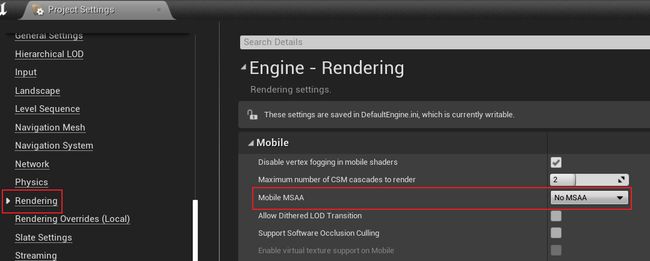

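MSAA has native hardware support and strikes a good balance between quality and cost, so it is UE's preferred anti-aliasing method for mobile forward rendering. The code above therefore contains a fair amount of MSAA handling, covering the color and depth textures and their resource states. With MSAA enabled, scene color is by default resolved at RHICmdList.EndRenderPass() (which also writes the on-chip tile data back to system memory), producing the anti-aliased texture. MSAA is off by default on mobile but can be configured in the following panel:

Forward rendering supports two color space modes: Gamma space and HDR (linear space). In linear space, later rendering stages need tone mapping and related steps. HDR is the default and can be changed in the project settings:

bRequiresMultiPass in the code above indicates whether a dedicated render pass is needed to draw translucency. Its value is determined by the following code: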

```cpp
// Engine\Source\Runtime\Renderer\Private\MobileShadingRenderer.cpp

bool FMobileSceneRenderer::RequiresMultiPass(FRHICommandListImmediate& RHICmdList, const FViewInfo& View) const
{
    // Vulkan uses subpasses
    if (IsVulkanPlatform(ShaderPlatform))
    {
        return false;
    }

    // All iOS support frame_buffer_fetch
    if (IsMetalMobilePlatform(ShaderPlatform))
    {
        return false;
    }

    if (IsMobileDeferredShadingEnabled(ShaderPlatform))
    {
        // TODO: add GL support
        return true;
    }

    // Some Androids support frame_buffer_fetch
    if (IsAndroidOpenGLESPlatform(ShaderPlatform) && (GSupportsShaderFramebufferFetch || GSupportsShaderDepthStencilFetch))
    {
        return false;
    }

    // Always render reflection capture in single pass
    if (View.bIsPlanarReflection || View.bIsSceneCapture)
    {
        return false;
    }

    // Always render LDR in single pass
    if (!IsMobileHDR())
    {
        return false;
    }

    // MSAA depth can't be sampled or resolved, unless we are on PC (no vulkan)
    if (NumMSAASamples > 1 && !IsSimulatedPlatform(ShaderPlatform))
    {
        return false;
    }

    return true;
}
```
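Because the result depends on the order of the checks, it can help to model the function as a pure predicate and try a few platform combinations. The sketch below is engine-independent; `MultiPassInputs` and `RequiresMultiPassModel` are hypothetical names whose fields stand in for the engine queries used in the function above.

```cpp
// Illustrative inputs; each field replaces one engine query from the listing.
struct MultiPassInputs {
    bool isVulkan = false;
    bool isMetalMobile = false;
    bool mobileDeferredShading = false;
    bool isAndroidGLES = false;
    bool supportsFramebufferFetch = false;
    bool supportsDepthStencilFetch = false;
    bool isPlanarReflectionOrSceneCapture = false;
    bool mobileHDR = true;
    int numMSAASamples = 1;
    bool isSimulatedPlatform = false;
};

// Same check order as the engine function: the first matching rule wins.
bool RequiresMultiPassModel(const MultiPassInputs& in) {
    if (in.isVulkan) return false;               // subpasses
    if (in.isMetalMobile) return false;          // framebuffer fetch
    if (in.mobileDeferredShading) return true;   // remaining platforms
    if (in.isAndroidGLES &&
        (in.supportsFramebufferFetch || in.supportsDepthStencilFetch)) return false;
    if (in.isPlanarReflectionOrSceneCapture) return false;
    if (!in.mobileHDR) return false;             // LDR renders in a single pass
    if (in.numMSAASamples > 1 && !in.isSimulatedPlatform) return false;
    return true;
}
```

For instance, an Android GLES device without framebuffer fetch in HDR mode needs a separate translucency pass, while Vulkan never does, even with deferred shading, because the Vulkan check comes first.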

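Similar in name but different in meaning are the bIsMultiViewApplication and bIsMobileMultiViewEnabled flags, which indicate whether multi-view rendering is enabled and how many views there are. They are used only for VR and are determined by the console variable vr.MobileMultiView, the graphics API, and other factors. MultiView targets XR and optimizes the case where the scene would otherwise be rendered twice. It has a Basic mode and an Advanced mode:

Comparison of MultiView modes for optimizing VR rendering. Top: rendering without MultiView, where each eye submits its own draw commands. Middle: basic MultiView, where the submitted commands are reused and the Command List is duplicated at the GPU level. Bottom: advanced MultiView, where draw calls, Command Lists, and geometry can all be reused.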
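The way the two flags combine into the render pass view count follows directly from the `MultiViewCount` assignment in RenderForward. A minimal restatement, with `ComputeMultiViewCount` as a hypothetical helper name:

```cpp
// Mirrors: MultiViewCount = View.bIsMobileMultiViewEnabled ? 2
//                         : (bIsMultiViewApplication ? 1 : 0);
int ComputeMultiViewCount(bool viewMultiViewEnabled, bool multiViewApplication) {
    if (viewMultiViewEnabled) {
        return 2;  // both eyes rendered in one multi-view pass
    }
    // A multi-view application rendering a non-multi-view target still uses
    // a single-view multi-view pass so that the shaders remain compatible.
    return multiViewApplication ? 1 : 0;
}
```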

bKeepDepthContent indicates whether the depth content must be kept. It is determined by the following code:

```cpp
bKeepDepthContent =
    bRequiresMultiPass ||
    bForceDepthResolve ||
    bRequiresPixelProjectedPlanarRelfectionPass ||
    bSeparateTranslucencyActive ||
    Views[0].bIsReflectionCapture ||
    (bDeferredShading && bPostProcessUsesSceneDepth) ||
    bShouldRenderVelocities ||
    bIsFullPrepassEnabled;

// Depth is never kept when MSAA is enabled.
bKeepDepthContent = (NumMSAASamples > 1 ? false : bKeepDepthContent);
```
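The interaction between the OR chain and the MSAA override is easy to miss, so here is a standalone model of the same expression. `DepthKeepInputs` and `ShouldKeepDepthContent` are hypothetical names; the fields correspond one-to-one to the flags in the snippet above.

```cpp
// Illustrative inputs matching the flags used to compute bKeepDepthContent.
struct DepthKeepInputs {
    bool requiresMultiPass = false;
    bool forceDepthResolve = false;
    bool requiresPixelProjectedPlanarReflectionPass = false;
    bool separateTranslucencyActive = false;
    bool isReflectionCapture = false;
    bool deferredShading = false;
    bool postProcessUsesSceneDepth = false;
    bool shouldRenderVelocities = false;
    bool fullPrepassEnabled = false;
    int numMSAASamples = 1;
};

bool ShouldKeepDepthContent(const DepthKeepInputs& in) {
    const bool wanted =
        in.requiresMultiPass ||
        in.forceDepthResolve ||
        in.requiresPixelProjectedPlanarReflectionPass ||
        in.separateTranslucencyActive ||
        in.isReflectionCapture ||
        (in.deferredShading && in.postProcessUsesSceneDepth) ||
        in.shouldRenderVelocities ||
        in.fullPrepassEnabled;
    // MSAA overrides every other request: multisampled depth is never kept.
    return in.numMSAASamples > 1 ? false : wanted;
}
```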

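The code above also reveals a special way of rendering planar reflections on mobile: Pixel Projected Reflection (PPR). Its principle resembles SSR but needs less data: only the scene color, the depth buffer, and the reflection region. Its core steps are:

- Compute the mirrored position of every scene color pixel about the reflection plane.

- Test whether the pixel's reflection lies within the reflection region.

- Cast a ray toward the mirrored pixel position.

- Test whether the intersection lies within the reflection region.

- If an intersection is found, compute the pixel's mirrored position on screen.

- Write the mirrored pixel's color at the intersection.

- If the intersection within the reflection region is occluded by other objects, discard the reflection at that position.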
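The first step, mirroring a position about the reflection plane, is plain vector math. The sketch below shows only that step in world space; it is not the engine's shader code. `Vec3`, `Plane`, and `MirrorAcrossPlane` are hypothetical names, and the plane normal is assumed to be unit length.

```cpp
struct Vec3 {
    double x, y, z;
};

// Plane in the form dot(normal, p) + d = 0; normal is assumed to be unit length.
struct Plane {
    Vec3 normal;
    double d;
};

double Dot(const Vec3& a, const Vec3& b) {
    return a.x * b.x + a.y * b.y + a.z * b.z;
}

// Reflects point p about the plane: p' = p - 2 * signedDistance * normal.
Vec3 MirrorAcrossPlane(const Vec3& p, const Plane& plane) {
    const double signedDistance = Dot(plane.normal, p) + plane.d;
    return Vec3{p.x - 2.0 * signedDistance * plane.normal.x,
                p.y - 2.0 * signedDistance * plane.normal.y,
                p.z - 2.0 * signedDistance * plane.normal.z};
}
```

In a full implementation the mirrored position would then be projected back to screen space and depth-tested, corresponding to the remaining steps in the list.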

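An overview of the PPR effect.

PPR can be configured in the project settings:

12.3.3 RenderDeferred

UE added a deferred rendering branch to the mobile pipeline in 4.26 and improved and optimized it in 4.27. Whether deferred shading is enabled on mobile is determined by the following code: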

// Engine\Source\Runtime\RenderCore\Private\RenderUtils.cpp

bool IsMobileDeferredShadingEnabled(const FStaticShaderPlatform Platform)

{

// 禁用OpenGL的延迟着色.

if (IsOpenGLPlatform(Platform))

{

// needs MRT framebuffer fetch or PLS

return false;

}

// The console variable "r.Mobile.ShadingPath" must be 1.

static auto* MobileShadingPathCvar = IConsoleManager::Get().FindTConsoleVariableDataInt(TEXT("r.Mobile.ShadingPath"));

return MobileShadingPathCvar->GetValueOnAnyThread() == 1;

}

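In short: the graphics API must not be OpenGL, and the console variable r.Mobile.ShadingPath must be set to 1.

r.Mobile.ShadingPath cannot be changed dynamically in the editor. It can only be enabled by adding the following entries to Config/DefaultEngine.ini under the project root:

[/Script/Engine.RendererSettings]

r.Mobile.ShadingPath=1

After adding these entries, restart the UE editor and wait for shader compilation to finish; the mobile deferred shading result can then be previewed.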

Below is the code of the deferred rendering branch, FMobileSceneRenderer::RenderDeferred, with annotations:

FRHITexture* FMobileSceneRenderer::RenderDeferred(FRHICommandListImmediate& RHICmdList, const TArrayView<const FViewInfo*> ViewList, const FSortedLightSetSceneInfo& SortedLightSet)

{

FSceneRenderTargets& SceneContext = FSceneRenderTargets::Get(RHICmdList);

// Prepare the GBuffer.

FRHITexture* ColorTargets[4] = {

SceneContext.GetSceneColorSurface(),

SceneContext.GetGBufferATexture().GetReference(),

SceneContext.GetGBufferBTexture().GetReference(),

SceneContext.GetGBufferCTexture().GetReference()

};

// Whether the RHI needs to store the GBuffer to system memory and do the shading in a separate render pass.

ERenderTargetActions GBufferAction = bRequiresMultiPass ? ERenderTargetActions::Clear_Store : ERenderTargetActions::Clear_DontStore;

EDepthStencilTargetActions DepthAction = bKeepDepthContent ? EDepthStencilTargetActions::ClearDepthStencil_StoreDepthStencil : EDepthStencilTargetActions::ClearDepthStencil_DontStoreDepthStencil;

// Load/store actions of the render targets.

ERenderTargetActions ColorTargetsAction[4] = {ERenderTargetActions::Clear_Store, GBufferAction, GBufferAction, GBufferAction};

if (bIsFullPrepassEnabled)

{

ERenderTargetActions DepthTarget = MakeRenderTargetActions(ERenderTargetLoadAction::ELoad, GetStoreAction(GetDepthActions(DepthAction)));

ERenderTargetActions StencilTarget = MakeRenderTargetActions(ERenderTargetLoadAction::ELoad, GetStoreAction(GetStencilActions(DepthAction)));

DepthAction = MakeDepthStencilTargetActions(DepthTarget, StencilTarget);

}

FRHIRenderPassInfo BasePassInfo = FRHIRenderPassInfo();

int32 ColorTargetIndex = 0;

for (; ColorTargetIndex < UE_ARRAY_COUNT(ColorTargets); ++ColorTargetIndex)

{

BasePassInfo.ColorRenderTargets[ColorTargetIndex].RenderTarget = ColorTargets[ColorTargetIndex];

BasePassInfo.ColorRenderTargets[ColorTargetIndex].ResolveTarget = nullptr;

BasePassInfo.ColorRenderTargets[ColorTargetIndex].ArraySlice = -1;

BasePassInfo.ColorRenderTargets[ColorTargetIndex].MipIndex = 0;

BasePassInfo.ColorRenderTargets[ColorTargetIndex].Action = ColorTargetsAction[ColorTargetIndex];

}

if (MobileRequiresSceneDepthAux(ShaderPlatform))

{

BasePassInfo.ColorRenderTargets[ColorTargetIndex].RenderTarget = SceneContext.SceneDepthAux->GetRenderTargetItem().ShaderResourceTexture.GetReference();

BasePassInfo.ColorRenderTargets[ColorTargetIndex].ResolveTarget = nullptr;

BasePassInfo.ColorRenderTargets[ColorTargetIndex].ArraySlice = -1;

BasePassInfo.ColorRenderTargets[ColorTargetIndex].MipIndex = 0;

BasePassInfo.ColorRenderTargets[ColorTargetIndex].Action = GBufferAction;

ColorTargetIndex++;

}

BasePassInfo.DepthStencilRenderTarget.DepthStencilTarget = SceneContext.GetSceneDepthSurface();

BasePassInfo.DepthStencilRenderTarget.ResolveTarget = nullptr;

BasePassInfo.DepthStencilRenderTarget.Action = DepthAction;

BasePassInfo.DepthStencilRenderTarget.ExclusiveDepthStencil = FExclusiveDepthStencil::DepthWrite_StencilWrite;

BasePassInfo.SubpassHint = ESubpassHint::DeferredShadingSubpass;

if (!bIsFullPrepassEnabled)

{

BasePassInfo.NumOcclusionQueries = ComputeNumOcclusionQueriesToBatch();

BasePassInfo.bOcclusionQueries = BasePassInfo.NumOcclusionQueries != 0;

}

BasePassInfo.ShadingRateTexture = nullptr;

BasePassInfo.bIsMSAA = false;

BasePassInfo.MultiViewCount = 0;

RHICmdList.BeginRenderPass(BasePassInfo, TEXT("BasePassRendering"));

if (GIsEditor && !Views[0].bIsSceneCapture)

{

DrawClearQuad(RHICmdList, Views[0].BackgroundColor);

}

// Depth PrePass.

if (!bIsFullPrepassEnabled)

{

RHICmdList.SetCurrentStat(GET_STATID(STAT_CLM_MobilePrePass));

// Depth pre-pass

RenderPrePass(RHICmdList);

}

// BasePass: opaque and masked objects.

RHICmdList.SetCurrentStat(GET_STATID(STAT_CLMM_Opaque));

RenderMobileBasePass(RHICmdList, ViewList);

RHICmdList.ImmediateFlush(EImmediateFlushType::DispatchToRHIThread);

// Occlusion culling.

if (!bIsFullPrepassEnabled)

{

// Issue occlusion queries

RHICmdList.SetCurrentStat(GET_STATID(STAT_CLMM_Occlusion));

RenderOcclusion(RHICmdList);

RHICmdList.ImmediateFlush(EImmediateFlushType::DispatchToRHIThread);

}

// Single-pass mode (multi-pass not required).

if (!bRequiresMultiPass)

{

// Next subpass: SceneColor + GBuffer write, SceneDepth read-only.

RHICmdList.NextSubpass();

// Render decals.

if (ViewFamily.EngineShowFlags.Decals)

{

CSV_SCOPED_TIMING_STAT_EXCLUSIVE(RenderDecals);

RenderDecals(RHICmdList);

}

// Next subpass: SceneColor write, SceneDepth read-only.

RHICmdList.NextSubpass();

// Deferred lighting and shading.

MobileDeferredShadingPass(RHICmdList, *Scene, ViewList, SortedLightSet);

// Draw translucency.

if (ViewFamily.EngineShowFlags.Translucency)

{

CSV_SCOPED_TIMING_STAT_EXCLUSIVE(RenderTranslucency);

SCOPE_CYCLE_COUNTER(STAT_TranslucencyDrawTime);

RenderTranslucency(RHICmdList, ViewList);

FRHICommandListExecutor::GetImmediateCommandList().PollOcclusionQueries();

RHICmdList.ImmediateFlush(EImmediateFlushType::DispatchToRHIThread);

}

// End the render pass.

RHICmdList.EndRenderPass();

}

// Multi-pass mode (mobile rendering emulated on a PC).

else

{

// End the subpasses.

RHICmdList.NextSubpass();

RHICmdList.NextSubpass();

RHICmdList.EndRenderPass();

// SceneColor + GBuffer write, SceneDepth is read only

{

for (int32 Index = 0; Index < UE_ARRAY_COUNT(ColorTargets); ++Index)

{

BasePassInfo.ColorRenderTargets[Index].Action = ERenderTargetActions::Load_Store;

}

BasePassInfo.DepthStencilRenderTarget.Action = EDepthStencilTargetActions::LoadDepthStencil_StoreDepthStencil;

BasePassInfo.DepthStencilRenderTarget.ExclusiveDepthStencil = FExclusiveDepthStencil::DepthRead_StencilRead;

BasePassInfo.SubpassHint = ESubpassHint::None;

BasePassInfo.NumOcclusionQueries = 0;

BasePassInfo.bOcclusionQueries = false;

RHICmdList.BeginRenderPass(BasePassInfo, TEXT("AfterBasePass"));

// Render decals.

if (ViewFamily.EngineShowFlags.Decals)

{

CSV_SCOPED_TIMING_STAT_EXCLUSIVE(RenderDecals);

RenderDecals(RHICmdList);

}

RHICmdList.EndRenderPass();

}

// SceneColor write, SceneDepth is read only

{

FRHIRenderPassInfo ShadingPassInfo(

SceneContext.GetSceneColorSurface(),

ERenderTargetActions::Load_Store,

nullptr,

SceneContext.GetSceneDepthSurface(),

EDepthStencilTargetActions::LoadDepthStencil_StoreDepthStencil,

nullptr,

nullptr,

VRSRB_Passthrough,

FExclusiveDepthStencil::DepthRead_StencilWrite

);

ShadingPassInfo.NumOcclusionQueries = 0;

ShadingPassInfo.bOcclusionQueries = false;

RHICmdList.BeginRenderPass(ShadingPassInfo, TEXT("MobileShadingPass"));

// Deferred lighting and shading.

MobileDeferredShadingPass(RHICmdList, *Scene, ViewList, SortedLightSet);

// Draw translucency.

if (ViewFamily.EngineShowFlags.Translucency)

{

CSV_SCOPED_TIMING_STAT_EXCLUSIVE(RenderTranslucency);

SCOPE_CYCLE_COUNTER(STAT_TranslucencyDrawTime);

RenderTranslucency(RHICmdList, ViewList);

FRHICommandListExecutor::GetImmediateCommandList().PollOcclusionQueries();

RHICmdList.ImmediateFlush(EImmediateFlushType::DispatchToRHIThread);

}

RHICmdList.EndRenderPass();

}

}

return ColorTargets[0];

}

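As shown above, the mobile deferred pipeline is quite similar to the PC one: the BasePass is rendered first to obtain the geometric information in the GBuffer, and the lighting is computed afterwards. Their flow chart is as follows:

There are, of course, differences from PC. The most obvious one is that mobile uses SubPass rendering tailored to TB(D)R architectures, so that while rendering the PrePass depth, the BasePass, and the lighting, the scene color, depth, GBuffer, and related data stay in on-chip memory, which improves rendering efficiency and reduces device power consumption.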
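To make the difference between the two branches of RenderDeferred easier to see, the sketch below lists the steps each branch issues. It is a simplified model rather than engine code: `BuildDeferredSchedule`, `CountRenderPasses`, and `GBufferIsStoredToMemory` are hypothetical helpers, the step names are plain strings, and the Decals and Translucency show flags are assumed to be on.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Ordered list of steps issued by the deferred branch (simplified model).
inline std::vector<std::string> BuildDeferredSchedule(bool bRequiresMultiPass, bool bIsFullPrepassEnabled)
{
    std::vector<std::string> Steps;
    Steps.push_back("BeginRenderPass:BasePassRendering");
    if (!bIsFullPrepassEnabled) { Steps.push_back("PrePass"); }
    Steps.push_back("BasePass");
    if (!bIsFullPrepassEnabled) { Steps.push_back("Occlusion"); }

    if (!bRequiresMultiPass)
    {
        // Single render pass: subpasses keep the GBuffer on-chip.
        Steps.push_back("NextSubpass");
        Steps.push_back("Decals");
        Steps.push_back("NextSubpass");
        Steps.push_back("DeferredShading");
        Steps.push_back("Translucency");
        Steps.push_back("EndRenderPass");
    }
    else
    {
        // Multi-pass: the base pass is closed, then two more render passes follow.
        Steps.push_back("NextSubpass");
        Steps.push_back("NextSubpass");
        Steps.push_back("EndRenderPass");
        Steps.push_back("BeginRenderPass:AfterBasePass");
        Steps.push_back("Decals");
        Steps.push_back("EndRenderPass");
        Steps.push_back("BeginRenderPass:MobileShadingPass");
        Steps.push_back("DeferredShading");
        Steps.push_back("Translucency");
        Steps.push_back("EndRenderPass");
    }
    assert(Steps.back() == "EndRenderPass");
    return Steps;
}

// Number of BeginRenderPass entries in a schedule.
inline std::size_t CountRenderPasses(const std::vector<std::string>& Steps)
{
    std::size_t Count = 0;
    for (const std::string& Step : Steps)
    {
        if (Step.rfind("BeginRenderPass", 0) == 0) { ++Count; }
    }
    return Count;
}

// Mirrors the GBufferAction choice: Clear_Store when multi-pass, Clear_DontStore otherwise.
inline bool GBufferIsStoredToMemory(bool bRequiresMultiPass)
{
    return bRequiresMultiPass;
}
```

In this model the single-pass branch opens one render pass and never writes the GBuffer back to memory, while the multi-pass branch opens three render passes and has to store the GBuffer so the later passes can load it.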

12.3.3.1 MobileDeferredShadingPass

The deferred lighting is carried out by MobileDeferredShadingPass:

void MobileDeferredShadingPass(

FRHICommandListImmediate& RHICmdList,

const FScene& Scene,

const TArrayView<const FViewInfo*> PassViews,

const FSortedLightSetSceneInfo &SortedLightSet)

{

SCOPED_DRAW_EVENT(RHICmdList, MobileDeferredShading);

const FViewInfo& View0 = *PassViews[0];

FSceneRenderTargets& SceneContext = FSceneRenderTargets::Get(RHICmdList);

// Create the uniform buffer.

FUniformBufferRHIRef PassUniformBuffer = CreateMobileSceneTextureUniformBuffer(RHICmdList);

FUniformBufferStaticBindings GlobalUniformBuffers(PassUniformBuffer);

SCOPED_UNIFORM_BUFFER_GLOBAL_BINDINGS(RHICmdList, GlobalUniformBuffers);

// Set the viewport.

RHICmdList.SetViewport(View0.ViewRect.Min.X, View0.ViewRect.Min.Y, 0.0f, View0.ViewRect.Max.X, View0.ViewRect.Max.Y, 1.0f);

// Default material used for lights.

FCachedLightMaterial DefaultMaterial;

DefaultMaterial.MaterialProxy = UMaterial::GetDefaultMaterial(MD_LightFunction)->GetRenderProxy();

DefaultMaterial.Material = DefaultMaterial.MaterialProxy->GetMaterialNoFallback(ERHIFeatureLevel::ES3_1);

check(DefaultMaterial.Material);

// Draw the directional light.

RenderDirectLight(RHICmdList, Scene, View0, DefaultMaterial);

if (GMobileUseClusteredDeferredShading == 0)

{

// Render simple lights (non-clustered path).

RenderSimpleLights(RHICmdList, Scene, PassViews, SortedLightSet, DefaultMaterial);

}

// Determine the first local light handled by the standard (non-clustered) deferred path.

int32 NumLights = SortedLightSet.SortedLights.Num();

int32 StandardDeferredStart = SortedLightSet.SimpleLightsEnd;

if (GMobileUseClusteredDeferredShading != 0)

{

StandardDeferredStart = SortedLightSet.ClusteredSupportedEnd;

}

// Render local lights.

for (int32 LightIdx = StandardDeferredStart; LightIdx < NumLights; ++LightIdx)

{

const FSortedLightSceneInfo& SortedLight = SortedLightSet.SortedLights[LightIdx];

const FLightSceneInfo& LightSceneInfo = *SortedLight.LightSceneInfo;

RenderLocalLight(RHICmdList, Scene, View0, LightSceneInfo, DefaultMaterial);

}

}
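The choice of the first light index for the per-light loop can be isolated as below. This is a sketch under assumptions: `FSortedLightRanges` is a hypothetical stand-in for the two range markers read from the sorted light set, and the ordering 0 <= SimpleLightsEnd <= ClusteredSupportedEnd <= NumLights is assumed rather than taken from the listing.

```cpp
#include <cassert>

// Hypothetical stand-in for the range markers of the sorted light set.
struct FSortedLightRanges
{
    int SimpleLightsEnd;        // one past the last simple light
    int ClusteredSupportedEnd;  // one past the last light the clustered path can handle
    int NumLights;              // total number of sorted lights
};

// First index rendered one light at a time by the standard deferred path.
inline int GetStandardDeferredStart(const FSortedLightRanges& Ranges, bool bUseClusteredDeferredShading)
{
    assert(0 <= Ranges.SimpleLightsEnd);
    assert(Ranges.SimpleLightsEnd <= Ranges.ClusteredSupportedEnd);
    assert(Ranges.ClusteredSupportedEnd <= Ranges.NumLights);
    return bUseClusteredDeferredShading ? Ranges.ClusteredSupportedEnd : Ranges.SimpleLightsEnd;
}

// Number of lights left for the per-light loop.
inline int CountStandardDeferredLights(const FSortedLightRanges& Ranges, bool bUseClusteredDeferredShading)
{
    return Ranges.NumLights - GetStandardDeferredStart(Ranges, bUseClusteredDeferredShading);
}
```

With clustered shading on, every light the clustered path supports is skipped by the loop, so fewer lights are drawn individually.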

Next, we analyze the functions that render the different light types:

// Engine\Source\Runtime\Renderer\Private\MobileDeferredShadingPass.cpp

// Render the directional light.

static void RenderDirectLight(FRHICommandListImmediate& RHICmdList, const FScene& Scene, const FViewInfo& View, const FCachedLightMaterial& DefaultLightMaterial)

{

FSceneRenderTargets& SceneContext = FSceneRenderTargets::Get(RHICmdList);

// Find the first directional light.

FLightSceneInfo* DirectionalLight = nullptr;

for (int32 ChannelIdx = 0; ChannelIdx < UE_ARRAY_COUNT(Scene.MobileDirectionalLights) && !DirectionalLight; ChannelIdx++)

{

DirectionalLight = Scene.MobileDirectionalLights[ChannelIdx];

}

// Render state.

FGraphicsPipelineStateInitializer GraphicsPSOInit;

RHICmdList.ApplyCachedRenderTargets(GraphicsPSOInit);

// Add onto the emissive already in SceneColor.

GraphicsPSOInit.BlendState = TStaticBlendState::GetRHI();

GraphicsPSOInit.RasterizerState = TStaticRasterizerState<>::GetRHI();

// Only draw pixels that use the default lit shading model (MSM_DefaultLit).

uint8 StencilRef = GET_STENCIL_MOBILE_SM_MASK(MSM_DefaultLit);

GraphicsPSOInit.DepthStencilState = TStaticDepthStencilState<

false, CF_Always,

true, CF_Equal, SO_Keep, SO_Keep, SO_Keep,

false, CF_Always, SO_Keep, SO_Keep, SO_Keep,

GET_STENCIL_MOBILE_SM_MASK(0x7), 0x00>::GetRHI(); // 4 bits for shading models

// Set up the VS.

TShaderMapRef VertexShader(View.ShaderMap);

const FMaterialRenderProxy* LightFunctionMaterialProxy = nullptr;

if (View.Family->EngineShowFlags.LightFunctions && DirectionalLight)

{

LightFunctionMaterialProxy = DirectionalLight->Proxy->GetLightFunctionMaterial();

}

FMobileDirectLightFunctionPS::FPermutationDomain PermutationVector = FMobileDirectLightFunctionPS::BuildPermutationVector(View, DirectionalLight != nullptr);

FCachedLightMaterial LightMaterial;

TShaderRef PixelShader;

GetLightMaterial(DefaultLightMaterial, LightFunctionMaterialProxy, PermutationVector.ToDimensionValueId(), LightMaterial, PixelShader);

GraphicsPSOInit.BoundShaderState.VertexDeclarationRHI = GFilterVertexDeclaration.VertexDeclarationRHI;

GraphicsPSOInit.BoundShaderState.VertexShaderRHI = VertexShader.GetVertexShader();

GraphicsPSOInit.BoundShaderState.PixelShaderRHI = PixelShader.GetPixelShader();

GraphicsPSOInit.PrimitiveType = PT_TriangleList;

SetGraphicsPipelineState(RHICmdList, GraphicsPSOInit);

// Set up the PS.

FMobileDirectLightFunctionPS::FParameters PassParameters;

PassParameters.Forward = View.ForwardLightingResources->ForwardLightDataUniformBuffer;

PassParameters.MobileDirectionalLight = Scene.UniformBuffers.MobileDirectionalLightUniformBuffers[1];

PassParameters.ReflectionCaptureData = Scene.UniformBuffers.ReflectionCaptureUniformBuffer;

FReflectionUniformParameters ReflectionUniformParameters;

SetupReflectionUniformParameters(View, ReflectionUniformParameters);

PassParameters.ReflectionsParameters = CreateUniformBufferImmediate(ReflectionUniformParameters, UniformBuffer_SingleDraw);

PassParameters.LightFunctionParameters = FVector4(1.0f, 1.0f, 0.0f, 0.0f);

if (DirectionalLight)

{

const bool bUseMovableLight = DirectionalLight && !DirectionalLight->Proxy->HasStaticShadowing();

PassParameters.LightFunctionParameters2 = FVector(DirectionalLight->Proxy->GetLightFunctionFadeDistance(), DirectionalLight->Proxy->GetLightFunctionDisabledBrightness(), bUseMovableLight ? 1.0f : 0.0f);

const FVector Scale = DirectionalLight->Proxy->GetLightFunctionScale();

// Switch x and z so that z of the user specified scale affects the distance along the light direction

const FVector InverseScale = FVector(1.f / Scale.Z, 1.f / Scale.Y, 1.f / Scale.X);

PassParameters.WorldToLight = DirectionalLight->Proxy->GetWorldToLight() * FScaleMatrix(FVector(InverseScale));

}

FMobileDirectLightFunctionPS::SetParameters(RHICmdList, PixelShader, View, LightMaterial.MaterialProxy, *LightMaterial.Material, PassParameters);

RHICmdList.SetStencilRef(StencilRef);

const FIntPoint TargetSize = SceneContext.GetBufferSizeXY();

// Draw with a full-screen rectangle.

DrawRectangle(

RHICmdList,

0, 0,

View.ViewRect.Width(), View.ViewRect.Height(),

View.ViewRect.Min.X, View.ViewRect.Min.Y,

View.ViewRect.Width(), View.ViewRect.Height(),

FIntPoint(View.ViewRect.Width(), View.ViewRect.Height()),

TargetSize,

VertexShader);

}

// Render simple lights in non-clustered mode.

static void RenderSimpleLights(

FRHICommandListImmediate& RHICmdList,

const FScene& Scene,

const TArrayView<const FViewInfo*> PassViews,

const FSortedLightSetSceneInfo &SortedLightSet,

const FCachedLightMaterial& DefaultMaterial)

{

const FSimpleLightArray& SimpleLights = SortedLightSet.SimpleLights;

const int32 NumViews = PassViews.Num();

const FViewInfo& View0 = *PassViews[0];

// Set up the shaders (VS and PS).

TShaderMapRef> VertexShader(View0.ShaderMap);

TShaderRef PixelShaders[2];

{

const FMaterialShaderMap* MaterialShaderMap = DefaultMaterial.Material->GetRenderingThreadShaderMap();

FMobileRadialLightFunctionPS::FPermutationDomain PermutationVector;

PermutationVector.Set(false);

PermutationVector.Set(false);

PermutationVector.Set(false);

PixelShaders[0] = MaterialShaderMap->GetShader(PermutationVector);

PermutationVector.Set(true);

PixelShaders[1] = MaterialShaderMap->GetShader(PermutationVector);

}

// Set up the PSOs.

FGraphicsPipelineStateInitializer GraphicsPSOLight[2];

{

SetupSimpleLightPSO(RHICmdList, View0, VertexShader, PixelShaders[0], GraphicsPSOLight[0]);

SetupSimpleLightPSO(RHICmdList, View0, VertexShader, PixelShaders[1], GraphicsPSOLight[1]);

}

// Set up the stencil mask PSO.

FGraphicsPipelineStateInitializer GraphicsPSOLightMask;

{

RHICmdList.ApplyCachedRenderTargets(GraphicsPSOLightMask);

GraphicsPSOLightMask.PrimitiveType = PT_TriangleList;

GraphicsPSOLightMask.BlendState = TStaticBlendStateWriteMask::GetRHI();

GraphicsPSOLightMask.RasterizerState = View0.bReverseCulling ? TStaticRasterizerState::GetRHI() : TStaticRasterizerState::GetRHI();

// set stencil to 1 where depth test fails

GraphicsPSOLightMask.DepthStencilState = TStaticDepthStencilState<

false, CF_DepthNearOrEqual,

true, CF_Always, SO_Keep, SO_Replace, SO_Keep,

false, CF_Always, SO_Keep, SO_Keep, SO_Keep,

0x00, STENCIL_SANDBOX_MASK>::GetRHI();

GraphicsPSOLightMask.BoundShaderState.VertexDeclarationRHI = GetVertexDeclarationFVector4();

GraphicsPSOLightMask.BoundShaderState.VertexShaderRHI = VertexShader.GetVertexShader();

GraphicsPSOLightMask.BoundShaderState.PixelShaderRHI = nullptr;

}

// Iterate over all simple lights and shade them.

for (int32 LightIndex = 0; LightIndex < SimpleLights.InstanceData.Num(); LightIndex++)

{

const FSimpleLightEntry& SimpleLight = SimpleLights.InstanceData[LightIndex];

for (int32 ViewIndex = 0; ViewIndex < NumViews; ViewIndex++)

{

const FViewInfo& View = *PassViews[ViewIndex];

const FSimpleLightPerViewEntry& SimpleLightPerViewData = SimpleLights.GetViewDependentData(LightIndex, ViewIndex, NumViews);

const FSphere LightBounds(SimpleLightPerViewData.Position, SimpleLight.Radius);

if (NumViews > 1)

{

// set viewports only when we have more than one

// otherwise it is set at the start of the pass

RHICmdList.SetViewport(View.ViewRect.Min.X, View.ViewRect.Min.Y, 0.0f, View.ViewRect.Max.X, View.ViewRect.Max.Y, 1.0f);

}

// Render the light mask.

SetGraphicsPipelineState(RHICmdList, GraphicsPSOLightMask);

VertexShader->SetSimpleLightParameters(RHICmdList, View, LightBounds);

RHICmdList.SetStencilRef(1);

StencilingGeometry::DrawSphere(RHICmdList);

// Render the light.

FMobileRadialLightFunctionPS::FParameters PassParameters;

FDeferredLightUniformStruct DeferredLightUniformsValue;

SetupSimpleDeferredLightParameters(SimpleLight, SimpleLightPerViewData, DeferredLightUniformsValue);

PassParameters.DeferredLightUniforms = TUniformBufferRef::CreateUniformBufferImmediate(DeferredLightUniformsValue, EUniformBufferUsage::UniformBuffer_SingleFrame);

PassParameters.IESTexture = GWhiteTexture->TextureRHI;

PassParameters.IESTextureSampler = GWhiteTexture->SamplerStateRHI;

if (SimpleLight.Exponent == 0)

{

SetGraphicsPipelineState(RHICmdList, GraphicsPSOLight[1]);

FMobileRadialLightFunctionPS::SetParameters(RHICmdList, PixelShaders[1], View, DefaultMaterial.MaterialProxy, *DefaultMaterial.Material, PassParameters);

}

else

{

SetGraphicsPipelineState(RHICmdList, GraphicsPSOLight[0]);

FMobileRadialLightFunctionPS::SetParameters(RHICmdList, PixelShaders[0], View, DefaultMaterial.MaterialProxy, *DefaultMaterial.Material, PassParameters);

}

VertexShader->SetSimpleLightParameters(RHICmdList, View, LightBounds);

// Only draw pixels that use the default lit shading model (MSM_DefaultLit).

uint8 StencilRef = GET_STENCIL_MOBILE_SM_MASK(MSM_DefaultLit);

RHICmdList.SetStencilRef(StencilRef);

// Render the light with a sphere (point lights and spot lights alike) to quickly cull pixels outside the light's influence.

StencilingGeometry::DrawSphere(RHICmdList);

}

}

}

// Render a local light.

static void RenderLocalLight(

FRHICommandListImmediate& RHICmdList,

const FScene& Scene,

const FViewInfo& View,

const FLightSceneInfo& LightSceneInfo,

const FCachedLightMaterial& DefaultLightMaterial)

{

if (!LightSceneInfo.ShouldRenderLight(View))

{

return;

}

// Ignore non-local lights (anything other than point lights and spot lights).

const uint8 LightType = LightSceneInfo.Proxy->GetLightType();

const bool bIsSpotLight = LightType == LightType_Spot;

const bool bIsPointLight = LightType == LightType_Point;

if (!bIsSpotLight && !bIsPointLight)

{

return;

}

// Draw the light stencil mask.

if (GMobileUseLightStencilCulling != 0)

{

RenderLocalLight_StencilMask(RHICmdList, Scene, View, LightSceneInfo);

}

// Handle the IES light profile.

bool bUseIESTexture = false;

FTexture* IESTextureResource = GWhiteTexture;

if (View.Family->EngineShowFlags.TexturedLightProfiles && LightSceneInfo.Proxy->GetIESTextureResource())

{

IESTextureResource = LightSceneInfo.Proxy->GetIESTextureResource();

bUseIESTexture = true;

}

FGraphicsPipelineStateInitializer GraphicsPSOInit;

RHICmdList.ApplyCachedRenderTargets(GraphicsPSOInit);

GraphicsPSOInit.BlendState = TStaticBlendState::GetRHI();

GraphicsPSOInit.PrimitiveType = PT_TriangleList;

const FSphere LightBounds = LightSceneInfo.Proxy->GetBoundingSphere();

// Set the rasterizer and depth state for the light.

if (GMobileUseLightStencilCulling != 0)

{

SetLocalLightRasterizerAndDepthState_StencilMask(GraphicsPSOInit, View);

}

else

{

SetLocalLightRasterizerAndDepthState(GraphicsPSOInit, View, LightBounds);

}

// Set up the VS.

TShaderMapRef> VertexShader(View.ShaderMap);

const FMaterialRenderProxy* LightFunctionMaterialProxy = nullptr;

if (View.Family->EngineShowFlags.LightFunctions)

{

LightFunctionMaterialProxy = LightSceneInfo.Proxy->GetLightFunctionMaterial();

}

FMobileRadialLightFunctionPS::FPermutationDomain PermutationVector;

PermutationVector.Set(bIsSpotLight);

PermutationVector.Set(LightSceneInfo.Proxy->IsInverseSquared());

PermutationVector.Set(bUseIESTexture);

FCachedLightMaterial LightMaterial;

TShaderRef PixelShader;

GetLightMaterial(DefaultLightMaterial, LightFunctionMaterialProxy, PermutationVector.ToDimensionValueId(), LightMaterial, PixelShader);

GraphicsPSOInit.BoundShaderState.VertexDeclarationRHI = GetVertexDeclarationFVector4();

GraphicsPSOInit.BoundShaderState.VertexShaderRHI = VertexShader.GetVertexShader();

GraphicsPSOInit.BoundShaderState.PixelShaderRHI = PixelShader.GetPixelShader();

SetGraphicsPipelineState(RHICmdList, GraphicsPSOInit);

VertexShader->SetParameters(RHICmdList, View, &LightSceneInfo);

// Set up the PS.

FMobileRadialLightFunctionPS::FParameters PassParameters;

PassParameters.DeferredLightUniforms = TUniformBufferRef::CreateUniformBufferImmediate(GetDeferredLightParameters(View, LightSceneInfo), EUniformBufferUsage::UniformBuffer_SingleFrame);

PassParameters.IESTexture = IESTextureResource->TextureRHI;

PassParameters.IESTextureSampler = IESTextureResource->SamplerStateRHI;

const float TanOuterAngle = bIsSpotLight ? FMath::Tan(LightSceneInfo.Proxy->GetOuterConeAngle()) : 1.0f;

PassParameters.LightFunctionParameters = FVector4(TanOuterAngle, 1.0f /*ShadowFadeFraction*/, bIsSpotLight ? 1.0f : 0.0f, bIsPointLight ? 1.0f : 0.0f);

PassParameters.LightFunctionParameters2 = FVector(LightSceneInfo.Proxy->GetLightFunctionFadeDistance(), LightSceneInfo.Proxy->GetLightFunctionDisabledBrightness(), 0.0f);

const FVector Scale = LightSceneInfo.Proxy->GetLightFunctionScale();

// Switch x and z so that z of the user specified scale affects the distance along the light direction

const FVector InverseScale = FVector(1.f / Scale.Z, 1.f / Scale.Y, 1.f / Scale.X);

PassParameters.WorldToLight = LightSceneInfo.Proxy->GetWorldToLight() * FScaleMatrix(FVector(InverseScale));

FMobileRadialLightFunctionPS::SetParameters(RHICmdList, PixelShader, View, LightMaterial.MaterialProxy, *LightMaterial.Material, PassParameters);

// Only draw pixels that use the default lit shading model (MSM_DefaultLit).

uint8 StencilRef = GET_STENCIL_MOBILE_SM_MASK(MSM_DefaultLit);

RHICmdList.SetStencilRef(StencilRef);

// Point lights are drawn with a sphere.

if (LightType == LightType_Point)

{

StencilingGeometry::DrawSphere(RHICmdList);

}

// Spot lights are drawn with a cone.

else // LightType_Spot

{

StencilingGeometry::DrawCone(RHICmdList);

}

}

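When drawing lights, the work is split by light type into three steps: the directional light, non-clustered simple lights, and local lights (point lights and spot lights). Note that mobile only supports the default lit shading model (MSM_DefaultLit); other advanced shading models (hair, subsurface scattering, clear coat, eye, cloth, and so on) are not supported yet.

At most one directional light can be drawn. It is drawn with a full-screen rectangle and supports several CSM cascades.

Non-clustered simple lights are always drawn with a sphere, whether they are point lights or spot lights, and do not support shadows.

Drawing local lights is considerably more involved: the light's stencil mask is drawn first, then the rasterizer and depth state are set, and only then is the light itself drawn. Point lights are drawn with a sphere and do not support shadows. Spot lights are drawn with a cone and can support shadows, but dynamic spot-light shadows are disabled by default and must be enabled in the project settings.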
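The geometry and shadow rules described above can be condensed into a small lookup. This is only a summary sketch: the enums, `FLightDrawInfo`, and `DescribeLightDraw` are hypothetical names, and `bSpotShadowsEnabledInProject` stands in for the project setting mentioned in the text.

```cpp
#include <cassert>

enum class ELightCategory { Directional, Simple, LocalPoint, LocalSpot };
enum class EProxyGeometry { FullscreenRect, Sphere, Cone };

struct FLightDrawInfo
{
    EProxyGeometry Geometry;  // shape rasterized to cover the light's influence
    bool bSupportsShadow;     // whether this category can be shadowed on mobile
};

inline FLightDrawInfo DescribeLightDraw(ELightCategory Category, bool bSpotShadowsEnabledInProject)
{
    switch (Category)
    {
    case ELightCategory::Directional:
        return { EProxyGeometry::FullscreenRect, true };   // CSM shadows
    case ELightCategory::Simple:
        return { EProxyGeometry::Sphere, false };          // always a sphere, no shadows
    case ELightCategory::LocalPoint:
        return { EProxyGeometry::Sphere, false };
    case ELightCategory::LocalSpot:
        return { EProxyGeometry::Cone, bSpotShadowsEnabledInProject };
    }
    assert(false && "unknown light category");
    return { EProxyGeometry::Sphere, false };
}
```

Only the spot-light row depends on a project setting; every other category has a fixed answer.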

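In addition, whether stencil culling of pixels that the light does not intersect is enabled is decided by GMobileUseLightStencilCulling, which in turn is driven by r.Mobile.UseLightStencilCulling and defaults to 1 (enabled). The code that renders the light stencil mask is as follows: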

static void RenderLocalLight_StencilMask(FRHICommandListImmediate& RHICmdList, const FScene& Scene, const FViewInfo& View, const FLightSceneInfo& LightSceneInfo)

{

const uint8 LightType = LightSceneInfo.Proxy->GetLightType();

FGraphicsPipelineStateInitializer GraphicsPSOInit;

// Apply the cached render targets (color, depth, etc.).

RHICmdList.ApplyCachedRenderTargets(GraphicsPSOInit);

GraphicsPSOInit.PrimitiveType = PT_TriangleList;

// Disable writes to all render targets.

GraphicsPSOInit.BlendState = TStaticBlendStateWriteMask::GetRHI();

GraphicsPSOInit.RasterizerState = View.bReverseCulling ? TStaticRasterizerState::GetRHI() : TStaticRasterizerState::GetRHI();

// Where the depth test fails, write 1 into the stencil buffer.

GraphicsPSOInit.DepthStencilState = TStaticDepthStencilState<

false, CF_DepthNearOrEqual,

true, CF_Always, SO_Keep, SO_Replace, SO_Keep,

false, CF_Always, SO_Keep, SO_Keep, SO_Keep,

0x00,

// Note that only the pass-specific sandbox (SANDBOX) bit is written, i.e. bit 0 of the stencil buffer.

STENCIL_SANDBOX_MASK>::GetRHI();

// The VS used to draw the light stencil is TDeferredLightVS.

TShaderMapRef > VertexShader(View.ShaderMap);

GraphicsPSOInit.BoundShaderState.VertexDeclarationRHI = GetVertexDeclarationFVector4();

GraphicsPSOInit.BoundShaderState.VertexShaderRHI = VertexShader.GetVertexShader();

// No PS is bound.

GraphicsPSOInit.BoundShaderState.PixelShaderRHI = nullptr;

SetGraphicsPipelineState(RHICmdList, GraphicsPSOInit);

VertexShader->SetParameters(RHICmdList, View, &LightSceneInfo);

// The stencil reference value is 1.

RHICmdList.SetStencilRef(1);

// Draw a different shape depending on the light type.

if (LightType == LightType_Point)

{

StencilingGeometry::DrawSphere(RHICmdList);

}

else // LightType_Spot

{

StencilingGeometry::DrawCone(RHICmdList);

}

}

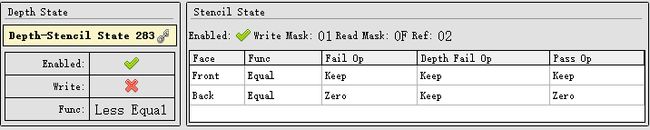

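Each local light first draws a mask covering the light's extent, and then computes lighting only for the pixels that pass the stencil test (Early-Z). The spot light in the figure below serves as a concrete example:

Top: a spot light in the scene waiting to be rendered. Middle: the stencil mask (white area) produced by the stencil pass, which marks the screen-space pixels that overlap the spot light's shape and are nearer in depth. Bottom: the result after lighting the valid pixels.

When lighting the valid pixels, the following DepthStencil state is used:

Put into words: a pixel that receives lighting must lie inside the light's volume, and pixels outside it are culled. The stencil pass marks pixels that are nearer than the light shape (pixels outside the light volume). The light drawing pass then uses the stencil test to reject the pixels marked by the stencil pass, and uses the depth test to find the pixels inside the light volume, which improves lighting efficiency.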
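The two-pass logic described above can be modeled per pixel on the CPU. This is a conceptual sketch, not the GPU state setup: it assumes linear depths where a smaller value is nearer to the camera (the engine's device depth convention differs), it reduces the light volume to the front-face and back-face depths under one pixel, and it assumes the lighting pass tests the back face against the scene depth. `FLightVolumeSpan`, `StencilMaskPass`, `LightingPassShades`, and `IsPixelLit` are hypothetical names.

```cpp
#include <cassert>

// Depth range covered by the light volume under one pixel (linear depth, smaller = nearer).
struct FLightVolumeSpan
{
    float FrontDepth;  // depth of the volume's front face
    float BackDepth;   // depth of the volume's back face
};

// Pass 1 (stencil mask): the front face is tested "near or equal" against the scene.
// Where that test FAILS, the scene pixel is in front of the volume and gets marked.
inline bool StencilMaskPass(float SceneDepth, const FLightVolumeSpan& Volume)
{
    const bool bDepthTestPasses = Volume.FrontDepth <= SceneDepth;
    return !bDepthTestPasses;
}

// Pass 2 (lighting): marked pixels and non-DefaultLit pixels are rejected by the stencil test,
// then the depth test keeps only pixels that are not behind the volume's back face.
inline bool LightingPassShades(float SceneDepth, const FLightVolumeSpan& Volume, bool bMarked, bool bIsDefaultLit)
{
    if (bMarked || !bIsDefaultLit)
    {
        return false;
    }
    return Volume.BackDepth >= SceneDepth;
}

// Combined result: the pixel is shaded only when its depth lies inside the volume.
inline bool IsPixelLit(float SceneDepth, const FLightVolumeSpan& Volume, bool bIsDefaultLit)
{
    assert(Volume.FrontDepth <= Volume.BackDepth);
    const bool bMarked = StencilMaskPass(SceneDepth, Volume);
    return LightingPassShades(SceneDepth, Volume, bMarked, bIsDefaultLit);
}
```

Under these assumptions a pixel in front of the volume is removed by the stencil test, a pixel behind the volume is removed by the depth test, and only pixels between the two faces reach the lighting shader.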

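This light stencil culling technique on mobile is similar to the stencil-based lighting described in Unity's SIGGRAPH 2020 talk, Deferred Shading in Unity URP (the idea is the same, though the implementation may differ). The talk also proposed proxy geometry that fits the light shape more tightly:

It also compared the performance of various lighting methods on PC and mobile. Below is the comparison chart for Mali GPUs:

Performance of different lighting techniques on Mali GPUs. On mobile, the stencil-culling-based lighting algorithm outperforms both the regular and the tiled algorithms.

It is worth noting that combining light stencil culling with the GPU's Early-Z can greatly improve lighting performance. Mainstream mobile GPUs today all support Early-Z, which lays the foundation for applying light stencil culling.

UE's current light culling implementation probably still has room for improvement. For example, pixels facing away from the light (the red box in the figure below) do not need to be shaded either. (How to find those pixels quickly and efficiently is, however, another problem.)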

12.3.3.2 MobileBasePassShader

This section covers the shaders used by the mobile BasePass, including the VS and the PS. First, the VS:

// Engine\Shaders\Private\MobileBasePassVertexShader.usf

(......)

struct FMobileShadingBasePassVSToPS

{

FVertexFactoryInterpolantsVSToPS FactoryInterpolants;

FMobileBasePassInterpolantsVSToPS BasePassInterpolants;

float4 Position : SV_POSITION;

};

#define FMobileShadingBasePassVSOutput FMobileShadingBasePassVSToPS

#define VertexFactoryGetInterpolants VertexFactoryGetInterpolantsVSToPS

// VS main entry point.

void Main(

FVertexFactoryInput Input

, out FMobileShadingBasePassVSOutput Output

#if INSTANCED_STEREO

, uint InstanceId : SV_InstanceID

, out uint LayerIndex : SV_RenderTargetArrayIndex

#elif MOBILE_MULTI_VIEW

, in uint ViewId : SV_ViewID

#endif

)

{

// Instanced stereo mode.

#if INSTANCED_STEREO

const uint EyeIndex = GetEyeIndex(InstanceId);

ResolvedView = ResolveView(EyeIndex);

LayerIndex = EyeIndex;

Output.BasePassInterpolants.MultiViewId = float(EyeIndex);

// Multi-view mode.

#elif MOBILE_MULTI_VIEW

#if COMPILER_GLSL_ES3_1

const int MultiViewId = int(ViewId);

ResolvedView = ResolveView(uint(MultiViewId));

Output.BasePassInterpolants.MultiViewId = float(MultiViewId);

#else

ResolvedView = ResolveView(ViewId);

Output.BasePassInterpolants.MultiViewId = float(ViewId);

#endif

#else

ResolvedView = ResolveView();

#endif

// Initialize the packed interpolants.

#if PACK_INTERPOLANTS

float4 PackedInterps[NUM_VF_PACKED_INTERPOLANTS];

UNROLL

for(int i = 0; i < NUM_VF_PACKED_INTERPOLANTS; ++i)

{

PackedInterps[i] = 0;

}

#endif

// Process the vertex factory data.

FVertexFactoryIntermediates VFIntermediates = GetVertexFactoryIntermediates(Input);

float4 WorldPositionExcludingWPO = VertexFactoryGetWorldPosition(Input, VFIntermediates);

float4 WorldPosition = WorldPositionExcludingWPO;

// Fetch the material vertex parameters and process positions, etc.

half3x3 TangentToLocal = VertexFactoryGetTangentToLocal(Input, VFIntermediates);

FMaterialVertexParameters VertexParameters = GetMaterialVertexParameters(Input, VFIntermediates, WorldPosition.xyz, TangentToLocal);

half3 WorldPositionOffset = GetMaterialWorldPositionOffset(VertexParameters);

WorldPosition.xyz += WorldPositionOffset;

float4 RasterizedWorldPosition = VertexFactoryGetRasterizedWorldPosition(Input, VFIntermediates, WorldPosition);

Output.Position = mul(RasterizedWorldPosition, ResolvedView.TranslatedWorldToClip);

Output.BasePassInterpolants.PixelPosition = WorldPosition;

#if USE_WORLD_POSITION_EXCLUDING_SHADER_OFFSETS

Output.BasePassInterpolants.PixelPositionExcludingWPO = WorldPositionExcludingWPO.xyz;

#endif

// Clip plane.

#if USE_PS_CLIP_PLANE

Output.BasePassInterpolants.OutClipDistance = dot(ResolvedView.GlobalClippingPlane, float4(WorldPosition.xyz - ResolvedView.PreViewTranslation.xyz, 1));

#endif

// Vertex fog.

#if USE_VERTEX_FOG

float4 VertexFog = CalculateHeightFog(WorldPosition.xyz - ResolvedView.TranslatedWorldCameraOrigin);

#if PROJECT_SUPPORT_SKY_ATMOSPHERE && MATERIAL_IS_SKY==0 // Do not apply aerial perspective on sky materials

if (ResolvedView.SkyAtmosphereApplyCameraAerialPerspectiveVolume > 0.0f)

{

const float OneOverPreExposure = USE_PREEXPOSURE ? ResolvedView.OneOverPreExposure : 1.0f;

// Sample the aerial perspective (AP). It is also blended under the VertexFog parameter.

VertexFog = GetAerialPerspectiveLuminanceTransmittanceWithFogOver(

ResolvedView.RealTimeReflectionCapture, ResolvedView.SkyAtmosphereCameraAerialPerspectiveVolumeSizeAndInvSize,

Output.Position, WorldPosition.xyz*CM_TO_SKY_UNIT, ResolvedView.TranslatedWorldCameraOrigin*CM_TO_SKY_UNIT,

View.CameraAerialPerspectiveVolume, View.CameraAerialPerspectiveVolumeSampler,

ResolvedView.SkyAtmosphereCameraAerialPerspectiveVolumeDepthResolutionInv,

ResolvedView.SkyAtmosphereCameraAerialPerspectiveVolumeDepthResolution,

ResolvedView.SkyAtmosphereAerialPerspectiveStartDepthKm,

ResolvedView.SkyAtmosphereCameraAerialPerspectiveVolumeDepthSliceLengthKm,

ResolvedView.SkyAtmosphereCameraAerialPerspectiveVolumeDepthSliceLengthKmInv,

OneOverPreExposure, VertexFog);

}

#endif

#if PACK_INTERPOLANTS

PackedInterps[0] = VertexFog;

#else

Output.BasePassInterpolants.VertexFog = VertexFog;

#endif // PACK_INTERPOLANTS

#endif // USE_VERTEX_FOG

(......)

// Fetch the data to be interpolated.

Output.FactoryInterpolants = VertexFactoryGetInterpolants(Input, VFIntermediates, VertexParameters);

Output.BasePassInterpolants.PixelPosition.w = Output.Position.w;

// Pack the interpolants.

#if PACK_INTERPOLANTS

VertexFactoryPackInterpolants(Output.FactoryInterpolants, PackedInterps);

#endif // PACK_INTERPOLANTS

#if !OUTPUT_MOBILE_HDR && COMPILER_GLSL_ES3_1

Output.Position.y *= -1;

#endif

}

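As shown above, the view is resolved differently for instanced stereo, multi-view, and the regular mode. Vertex fog is supported but disabled by default; it must be enabled in the project settings.

There is also a packed-interpolant mode, which reduces the interpolation cost and bandwidth between the VS and the PS. Whether it is enabled is decided by the macro PACK_INTERPOLANTS, defined as follows: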

// Engine\Shaders\Private\MobileBasePassCommon.ush

#define PACK_INTERPOLANTS (USE_VERTEX_FOG && NUM_VF_PACKED_INTERPOLANTS > 0 && (ES3_1_PROFILE))

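In other words, interpolant packing is enabled only when vertex fog is on, the vertex factory has packed interpolants, and the shader platform is OpenGL ES 3.1. Compared with the PC BasePass VS, the mobile version is heavily simplified and can be regarded as a small subset of the PC one. Next, the PS: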

// Engine\Shaders\Private\MobileBasePassPixelShader.usf

#include "Common.ush"

// Various macro definitions.

#define MobileSceneTextures MobileBasePass.SceneTextures

#define EyeAdaptationStruct MobileBasePass

(......)

// Pre-normalized capture of the scene closest to the object being rendered (not supported for fully rough materials).

#if !FULLY_ROUGH

#if HQ_REFLECTIONS

#define MAX_HQ_REFLECTIONS 3

TextureCube ReflectionCubemap0;

SamplerState ReflectionCubemapSampler0;

TextureCube ReflectionCubemap1;

SamplerState ReflectionCubemapSampler1;

TextureCube ReflectionCubemap2;

SamplerState ReflectionCubemapSampler2;

// x,y,z - inverted average brightness for 0, 1, 2; w - sky cube texture max mips.

float4 ReflectionAverageBrigtness;

float4 ReflectanceMaxValueRGBM;

float4 ReflectionPositionsAndRadii[MAX_HQ_REFLECTIONS];

#if ALLOW_CUBE_REFLECTIONS

float4x4 CaptureBoxTransformArray[MAX_HQ_REFLECTIONS];

float4 CaptureBoxScalesArray[MAX_HQ_REFLECTIONS];

#endif

#endif

#endif

// Reflection capture / IBL functions.

half4 GetPlanarReflection(float3 WorldPosition, half3 WorldNormal, half Roughness);

half MobileComputeMixingWeight(half IndirectIrradiance, half AverageBrightness, half Roughness);

half3 GetLookupVectorForBoxCaptureMobile(half3 ReflectionVector, ...);

half3 GetLookupVectorForSphereCaptureMobile(half3 ReflectionVector, ...);

void GatherSpecularIBL(FMaterialPixelParameters MaterialParameters, ...);

void BlendReflectionCaptures(FMaterialPixelParameters MaterialParameters, ...);

half3 GetImageBasedReflectionLighting(FMaterialPixelParameters MaterialParameters, ...);

// Other functions.

half3 FrameBufferBlendOp(half4 Source);

bool UseCSM();

void ApplyPixelDepthOffsetForMobileBasePass(inout FMaterialPixelParameters MaterialParameters, FPixelMaterialInputs PixelMaterialInputs, out float OutDepth);

// Accumulate dynamic point lights.

#if MAX_DYNAMIC_POINT_LIGHTS > 0

void AccumulateLightingOfDynamicPointLight(

FMaterialPixelParameters MaterialParameters,

FMobileShadingModelContext ShadingModelContext,

FGBufferData GBuffer,

float4 LightPositionAndInvRadius,

float4 LightColorAndFalloffExponent,

float4 SpotLightDirectionAndSpecularScale,

float4 SpotLightAnglesAndSoftTransitionScaleAndLightShadowType,

#if SUPPORT_SPOTLIGHTS_SHADOW

FPCFSamplerSettings Settings,

float4 SpotLightShadowSharpenAndShadowFadeFraction,

float4 SpotLightShadowmapMinMax,

float4x4 SpotLightShadowWorldToShadowMatrix,

#endif

inout half3 Color)

{

uint LightShadowType = SpotLightAnglesAndSoftTransitionScaleAndLightShadowType.w;

float FadedShadow = 1.0f;

// Compute the spotlight shadow.

#if SUPPORT_SPOTLIGHTS_SHADOW

if ((LightShadowType & LightShadowType_Shadow) == LightShadowType_Shadow)

{

float4 HomogeneousShadowPosition = mul(float4(MaterialParameters.AbsoluteWorldPosition, 1), SpotLightShadowWorldToShadowMatrix);

float2 ShadowUVs = HomogeneousShadowPosition.xy / HomogeneousShadowPosition.w;

if (all(ShadowUVs >= SpotLightShadowmapMinMax.xy && ShadowUVs <= SpotLightShadowmapMinMax.zw))

{

// Clamp pixel depth in light space for shadowing opaque, because areas of the shadow depth buffer that weren't rendered to will have been cleared to 1

// We want to force the shadow comparison to result in 'unshadowed' in that case, regardless of whether the pixel being shaded is in front or behind that plane

float LightSpacePixelDepthForOpaque = min(HomogeneousShadowPosition.z, 0.99999f);

Settings.SceneDepth = LightSpacePixelDepthForOpaque;

Settings.TransitionScale = SpotLightAnglesAndSoftTransitionScaleAndLightShadowType.z;

half Shadow = MobileShadowPCF(ShadowUVs, Settings);

Shadow = saturate((Shadow - 0.5) * SpotLightShadowSharpenAndShadowFadeFraction.x + 0.5);

FadedShadow = lerp(1.0f, Square(Shadow), SpotLightShadowSharpenAndShadowFadeFraction.y);

}

}

#endif

// Compute the lighting.

if ((LightShadowType & ValidLightType) != 0)

{

float3 ToLight = LightPositionAndInvRadius.xyz - MaterialParameters.AbsoluteWorldPosition;

float DistanceSqr = dot(ToLight, ToLight);

float3 L = ToLight * rsqrt(DistanceSqr);

half3 PointH = normalize(MaterialParameters.CameraVector + L);

half PointNoL = max(0, dot(MaterialParameters.WorldNormal, L));

half PointNoH = max(0, dot(MaterialParameters.WorldNormal, PointH));

// Compute the light attenuation.

float Attenuation;

if (LightColorAndFalloffExponent.w == 0)

{

// Sphere falloff (technically just 1/d2 but this avoids inf)

Attenuation = 1 / (DistanceSqr + 1);

float LightRadiusMask = Square(saturate(1 - Square(DistanceSqr * (LightPositionAndInvRadius.w * LightPositionAndInvRadius.w))));

Attenuation *= LightRadiusMask;
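// Illustrative note (not part of the engine source): the inverse-square branch above, written out.
// Assuming d = length(ToLight) and r = 1 / LightPositionAndInvRadius.w (the light radius):
//   Attenuation = 1 / (d^2 + 1) * saturate(1 - (d / r)^4)^2
// The "+ 1" keeps the result finite at d = 0, and the mask fades smoothly to 0 at d = r.
// Example: at d = r / 2 the mask is (1 - 0.0625)^2 = 0.87890625.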

}

else

{

Attenuation = RadialAttenuation(ToLight * LightPositionAndInvRadius.w, LightColorAndFalloffExponent.w);

}

#if PROJECT_MOBILE_ENABLE_MOVABLE_SPOTLIGHTS

if ((LightShadowType & LightShadowType_SpotLight) == LightShadowType_SpotLight)

{

Attenuation *= SpotAttenuation(L, -SpotLightDirectionAndSpecularScale.xyz, SpotLightAnglesAndSoftTransitionScaleAndLightShadowType.xy) * FadedShadow;

}

#endif

// Accumulate the lighting result.

#if !FULLY_ROUGH

FMobileDirectLighting Lighting = MobileIntegrateBxDF(ShadingModelContext, GBuffer, PointNoL, MaterialParameters.CameraVector, PointH, PointNoH);

Color += min(65000.0, (Attenuation) * LightColorAndFalloffExponent.rgb * (1.0 / PI) * (Lighting.Diffuse + Lighting.Specular * SpotLightDirectionAndSpecularScale.w));

#else

Color += (Attenuation * PointNoL) * LightColorAndFalloffExponent.rgb * (1.0 / PI) * ShadingModelContext.DiffuseColor;

#endif

}

}

#endif

(......)

// Compute indirect lighting.

half ComputeIndirect(VTPageTableResult LightmapVTPageTableResult, FVertexFactoryInterpolantsVSToPS Interpolants, float3 DiffuseDir, FMobileShadingModelContext ShadingModelContext, out half IndirectIrradiance, out half3 Color)

{

// To keep IndirectLightingCache coherent with PC, initialize IndirectIrradiance to zero.

IndirectIrradiance = 0;

Color = 0;

// Indirect diffuse.

#if LQ_TEXTURE_LIGHTMAP

float2 LightmapUV0, LightmapUV1;

uint LightmapDataIndex;

GetLightMapCoordinates(Interpolants, LightmapUV0, LightmapUV1, LightmapDataIndex);

half4 LightmapColor = GetLightMapColorLQ(LightmapVTPageTableResult, LightmapUV0, LightmapUV1, LightmapDataIndex, DiffuseDir);

Color += LightmapColor.rgb * ShadingModelContext.DiffuseColor * View.IndirectLightingColorScale;

IndirectIrradiance = LightmapColor.a;

#elif CACHED_POINT_INDIRECT_LIGHTING

#if MATERIALBLENDING_MASKED || MATERIALBLENDING_SOLID

// Take the normal into account for opaque (solid or masked) materials.

FThreeBandSHVectorRGB PointIndirectLighting;

PointIndirectLighting.R.V0 = IndirectLightingCache.IndirectLightingSHCoefficients0[0];

PointIndirectLighting.R.V1 = IndirectLightingCache.IndirectLightingSHCoefficients1[0];

PointIndirectLighting.R.V2 = IndirectLightingCache.IndirectLightingSHCoefficients2[0];

PointIndirectLighting.G.V0 = IndirectLightingCache.IndirectLightingSHCoefficients0[1];

PointIndirectLighting.G.V1 = IndirectLightingCache.IndirectLightingSHCoefficients1[1];

PointIndirectLighting.G.V2 = IndirectLightingCache.IndirectLightingSHCoefficients2[1];

PointIndirectLighting.B.V0 = IndirectLightingCache.IndirectLightingSHCoefficients0[2];

PointIndirectLighting.B.V1 = IndirectLightingCache.IndirectLightingSHCoefficients1[2];

PointIndirectLighting.B.V2 = IndirectLightingCache.IndirectLightingSHCoefficients2[2];

FThreeBandSHVector DiffuseTransferSH = CalcDiffuseTransferSH3(DiffuseDir, 1);

// Compute diffuse lighting that takes the normal into account.

half3 DiffuseGI = max(half3(0, 0, 0), DotSH3(PointIndirectLighting, DiffuseTransferSH));

IndirectIrradiance = Luminance(DiffuseGI);

Color += ShadingModelContext.DiffuseColor * DiffuseGI * View.IndirectLightingColorScale;

#else

// Translucency uses non-directional lighting: the diffuse term is packed in xyz and has already been divided by PI and the SH diffuse factor on the CPU.

half3 PointIndirectLighting = IndirectLightingCache.IndirectLightingSHSingleCoefficient.rgb;

half3 DiffuseGI = PointIndirectLighting;

IndirectIrradiance = Luminance(DiffuseGI);

Color += ShadingModelContext.DiffuseColor * DiffuseGI * View.IndirectLightingColorScale;

#endif

#endif

return IndirectIrradiance;

}

// Pixel shader main entry point.

PIXELSHADER_EARLYDEPTHSTENCIL

void Main(

FVertexFactoryInterpolantsVSToPS Interpolants

, FMobileBasePassInterpolantsVSToPS BasePassInterpolants

, in float4 SvPosition : SV_Position

OPTIONAL_IsFrontFace

, out half4 OutColor : SV_Target0

#if DEFERRED_SHADING_PATH

, out half4 OutGBufferA : SV_Target1

, out half4 OutGBufferB : SV_Target2

, out half4 OutGBufferC : SV_Target3

#endif

#if USE_SCENE_DEPTH_AUX

, out float OutSceneDepthAux : SV_Target4

#endif

#if OUTPUT_PIXEL_DEPTH_OFFSET

, out float OutDepth : SV_Depth

#endif

)

{

#if MOBILE_MULTI_VIEW

ResolvedView = ResolveView(uint(BasePassInterpolants.MultiViewId));

#else

ResolvedView = ResolveView();

#endif

#if USE_PS_CLIP_PLANE

clip(BasePassInterpolants.OutClipDistance);

#endif

// Unpack the packed interpolants.

#if PACK_INTERPOLANTS

float4 PackedInterpolants[NUM_VF_PACKED_INTERPOLANTS];

VertexFactoryUnpackInterpolants(Interpolants, PackedInterpolants);

#endif

#if COMPILER_GLSL_ES3_1 && !OUTPUT_MOBILE_HDR && !MOBILE_EMULATION

// LDR Mobile needs screen vertical flipped

SvPosition.y = ResolvedView.BufferSizeAndInvSize.y - SvPosition.y - 1;

#endif

// Get the material's pixel parameters.

FMaterialPixelParameters MaterialParameters = GetMaterialPixelParameters(Interpolants, SvPosition);

FPixelMaterialInputs PixelMaterialInputs;

{

float4 ScreenPosition = SvPositionToResolvedScreenPosition(SvPosition);

float3 WorldPosition = BasePassInterpolants.PixelPosition.xyz;

float3 WorldPositionExcludingWPO = BasePassInterpolants.PixelPosition.xyz;

#if USE_WORLD_POSITION_EXCLUDING_SHADER_OFFSETS