Cats vs. Dogs: a transfer-learning implementation based on “CIFAR10 Classification with VGG16”

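Contents

1. Using the dataset on Colab

2. Training the model

3. Testing on the validation data (Valid); the Yanxishe test is in the next part

4. Yanxishe test (Test)

Below is the old version (2020-11-17, 13:48)

Cats vs. Dogs with the VGG model

1. Code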

Training notebook (猫狗大战训练代码.ipynb)

https://colab.research.google.com/drive/1qbo216iUiKvfnwNtVcZ2fFH821VzJoIu?usp=sharing

Test notebook (猫狗大战测试代码.ipynb)

https://colab.research.google.com/drive/1Ulwkgn87dzDeu4nrfdIEEsyZ1YCth9Eo?usp=sharing

Best model, Baidu Cloud Drive address

Link: https://pan.baidu.com/s/1jbcO3UYiPDYRSrHpM7B7Qg Extraction code: 1yk2

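1. Using the dataset on Colab

There are two options:

- Download the data directly from the internet with wget inside Colab

    ! wget *url*

- Upload the data to Google Drive, then mount the Drive from Colab

    from google.colab import drive
    drive.mount('/content/drive')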
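Whichever option is used, the data ends up as an archive on the Colab disk or in the mounted Drive. As an alternative to a shell unzip, the following is a minimal sketch that extracts a zip archive with Python's standard `zipfile` module and lists the top-level entries. The function name `extract_archive` and the paths in the usage comment are assumptions for illustration, not part of the original notebook.

```python
import os
import zipfile


def extract_archive(zip_path, target_dir):
    """Extract zip_path into target_dir and return the sorted top-level entries."""
    os.makedirs(target_dir, exist_ok=True)
    with zipfile.ZipFile(zip_path) as archive:
        archive.extractall(target_dir)
    return sorted(os.listdir(target_dir))


# Hypothetical usage on Colab (both paths are assumptions):
# extract_archive("/content/drive/My Drive/data.zip", "/content")
```

Returning the top-level entries makes it easy to check that the expected data folder is present before building a dataset from it.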

2. Training the model

- Unzip the file

    # The path depends on where your file is stored:
    # 1. A file downloaded directly lands in /content/ by default.
    # 2. A file from Drive is under the path below; browse the file tree on the left to check.
    !unzip "/content/drive/My Drive/data.zip"

- Load the data

    import os
    import torch
    from torchvision import transforms, datasets

    normalize = transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

    vgg_format = transforms.Compose([
        transforms.CenterCrop(224),
        transforms.ToTensor(),
        normalize,
    ])

    data_dir = '/content/data'

    dsets = {x: datasets.ImageFolder(os.path.join(data_dir, x), vgg_format)
             for x in ['train']}
    dset_sizes = {x: len(dsets[x]) for x in ['train']}
    dset_classes = dsets['train'].classes

    # The other dataset
    # data_dir2 = '/content/dogscats'
    # dsets2 = {x: datasets.ImageFolder(os.path.join(data_dir2, x), vgg_format)
    #           for x in ['valid']}
    # dset_sizes2 = {x: len(dsets2[x]) for x in ['valid']}
    # dset_classes2 = dsets2['valid'].classes

    # The lines below print some attributes of dsets
    print(dsets['train'].classes)
    print(dsets['train'].class_to_idx)
    print(dsets['train'].imgs[:5])
    print('dset_sizes: ', dset_sizes)

    loader_train = torch.utils.data.DataLoader(dsets['train'], batch_size=256, shuffle=True, num_workers=6)
    # loader_valid = torch.utils.data.DataLoader(dsets2['valid'], batch_size=5, shuffle=False, num_workers=6)

    # count = 1
    # for data in loader_valid:
    #     print(count, end='\n')
    #     if count == 1:
    #         inputs_try, labels_try = data
    #     count += 1

    # print(labels_try)
    # print(inputs_try.shape)

The commented-out lines handle the validation data. This part is mainly about training; I keep testing and validation separate from training, for reasons explained below.
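The `classes` and `class_to_idx` attributes printed above come from the directory layout: `datasets.ImageFolder` treats each subdirectory of the root as one class and assigns indices in sorted order of the directory names. A minimal sketch of that mapping using only the standard library follows. `folder_classes` is a stand-in written for illustration, and the folder names `cats` and `dogs` in the comment are assumptions about the dataset layout.

```python
import os


def folder_classes(root):
    """Return (classes, class_to_idx) derived from the subdirectories of root."""
    classes = sorted(
        entry for entry in os.listdir(root)
        if os.path.isdir(os.path.join(root, entry))
    )
    class_to_idx = {name: idx for idx, name in enumerate(classes)}
    return classes, class_to_idx


# Hypothetical usage: for a root containing the folders "cats" and "dogs",
# folder_classes(root) gives (["cats", "dogs"], {"cats": 0, "dogs": 1}).
```

Because the indices depend only on the folder names, the training and validation folders need the same class subfolders for the labels to agree.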

- Download the model (the first training run needs to download the VGG16 model)

    # Download
    !wget https://s3.amazonaws.com/deep-learning-models/image-models/imagenet_class_index.json

If the code below fails to run, uncomment the validation-set lines above (they define inputs_try and labels_try), or delete or comment out every line below that raises an error.

    # Needed for using VGG16
    import json
    import numpy as np
    import matplotlib.pyplot as plt
    import torch.nn as nn
    import torchvision
    from torchvision import models

    device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

    model_vgg = models.vgg16(pretrained=True)

    with open('./imagenet_class_index.json') as f:
        class_dict = json.load(f)
    dic_imagenet = [class_dict[str(i)][1] for i in range(len(class_dict))]

    inputs_try, labels_try = inputs_try.to(device), labels_try.to(device)
    model_vgg = model_vgg.to(device)

    outputs_try = model_vgg(inputs_try)

    print(outputs_try)
    print(outputs_try.shape)

    '''
    The output has 5 rows and 1000 columns; each column is the score for one target class.
    The raw values look odd, though: some are negative and some are positive.
    To turn the VGG outputs into per-class probabilities, we feed them into a Softmax function.
    '''
    m_softm = nn.Softmax(dim=1)
    probs = m_softm(outputs_try)
    vals_try, pred_try = torch.max(probs, dim=1)

    print('prob sum: ', torch.sum(probs, 1))
    print('vals_try: ', vals_try)
    print('pred_try: ', pred_try)

    def imshow(inp, title=None):
        # Imshow for Tensor.
        inp = inp.numpy().transpose((1, 2, 0))
        mean = np.array([0.485, 0.456, 0.406])
        std = np.array([0.229, 0.224, 0.225])
        inp = np.clip(std * inp + mean, 0, 1)
        plt.imshow(inp)
        if title is not None:
            plt.title(title)
        plt.pause(0.001)  # pause a bit so that plots are updated

    print([dic_imagenet[i] for i in pred_try.data])
    imshow(torchvision.utils.make_grid(inputs_try.data.cpu()),
           title=[dset_classes[x] for x in labels_try.data.cpu()])

- Modify the model

    print(model_vgg)

    model_vgg_new = model_vgg

    # Freeze the VGG16 parameters so that gradient descent does not update them
    for param in model_vgg_new.parameters():
        param.requires_grad = False

    # Add two new linear layers (indices 6 and 9); these are the layers that get trained
    model_vgg_new.classifier._modules['6'] = nn.Linear(4096, 4096)
    model_vgg_new.classifier._modules['7'] = nn.ReLU(inplace=False)
    model_vgg_new.classifier._modules['8'] = nn.Dropout(p=0.5, inplace=False)
    model_vgg_new.classifier._modules['9'] = nn.Linear(4096, 2)
    model_vgg_new.classifier._modules['10'] = torch.nn.LogSoftmax(dim=1)

    model_vgg_new = model_vgg_new.to(device)

    print(model_vgg_new.classifier)

- Train the model

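① I replaced SGD with Adam.

② The number of epochs is set to 100.

③ At the end of every epoch, loss and accuracy are computed, and the model with the highest accuracy so far overwrites the saved best model.

④ When training finishes, the model from the last epoch is saved as well.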

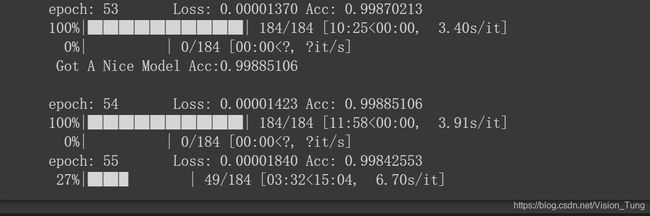

⑤ All models are saved to Google Drive.

    import time
    from tqdm import trange, tqdm

    criterion = nn.NLLLoss()

    lr = 0.001

    # Optimize every classifier parameter that is still trainable, i.e. both new
    # linear layers (classifier[6] and classifier[9]). Passing only classifier[6]
    # would leave the final 4096 -> 2 layer at its random initialization.
    optimizer_vgg = torch.optim.Adam(
        [p for p in model_vgg_new.classifier.parameters() if p.requires_grad], lr=lr)

    def train_model(model, dataloader, size, epochs=200, optimizer=None):
        model.train()
        max_acc = 0
        for epoch in range(epochs):
            running_loss = 0.0
            running_corrects = 0
            count = 0
            for inputs, classes in tqdm(dataloader):
                inputs = inputs.to(device)
                classes = classes.to(device)
                outputs = model(inputs)
                loss = criterion(outputs, classes)
                optimizer.zero_grad()
                loss.backward()
                optimizer.step()
                _, preds = torch.max(outputs.data, 1)
                # statistics
                running_loss += loss.item()
                running_corrects += torch.sum(preds == classes.data)
                count += len(inputs)
                # print('Training: No. ', count, ' process ... total: ', size)
            # criterion returns a per-batch mean, so this is the sum of batch means
            # divided by the sample count: a scaled loss, comparable across epochs only
            epoch_loss = running_loss / size
            epoch_acc = running_corrects.item() / size
            if epoch_acc > max_acc:
                # Overwrite the saved best model whenever accuracy improves
                max_acc = epoch_acc
                torch.save(model, '/content/drive/My Drive/model_best_new.pth')
                tqdm.write("\n Got A Nice Model Acc:{:.8f}".format(max_acc))
            tqdm.write('\nepoch: {} \tLoss: {:.8f} Acc: {:.8f}'.format(epoch, epoch_loss, epoch_acc))
            time.sleep(0.1)
        # Keep the model from the last epoch as well
        torch.save(model, '/content/drive/My Drive/model_last_new.pth')
        tqdm.write("Got A Nice Model")

    # Train the model
    train_model(model_vgg_new, loader_train, size=dset_sizes["train"], epochs=100, optimizer=optimizer_vgg)
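The modified classifier ends in `LogSoftmax` and the training code uses `nn.NLLLoss`; together they amount to the usual cross-entropy loss. The following sketch works through that arithmetic for a single sample in plain Python. The logits are made-up numbers and the helper names are illustrative, not part of the notebook.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax of a list of scores."""
    peak = max(logits)
    log_sum = peak + math.log(sum(math.exp(x - peak) for x in logits))
    return [x - log_sum for x in logits]


def nll_loss(log_probs, target):
    """Negative log-likelihood of the target class for one sample."""
    return -log_probs[target]


logits = [2.0, 0.5]  # made-up scores for the two classes
log_probs = log_softmax(logits)
loss = nll_loss(log_probs, target=0)

print(sum(math.exp(lp) for lp in log_probs))  # probabilities sum to 1 (up to rounding)
print(loss)  # small when the target class has the larger score
```

Subtracting the largest score before exponentiating avoids overflow for large logits, which is the main reason to work with log-probabilities rather than applying a logarithm to a softmax output.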

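3. Testing on the validation data (Valid); the Yanxishe test is in the next part

In the previous part I kept training and validation separate, for these reasons:

- Training is slow, especially with a large training set and many epochs; even on a Tesla P100 it takes a long time.

- Because training takes so long, I saved the models with high accuracy to Google Drive, so that a second Colab notebook can load the best-performing model directly and run validation.

- And because it is simply a slicker way to work.

- Fetch the model and the test data from Google Drive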

    from google.colab import drive
    drive.mount('/content/drive')

    # Adjust to your own setup
    !unzip "/content/drive/My Drive/test.zip"

- Load the data and the model

    import os
    import torch
    from torchvision import transforms, datasets
    from tqdm import tqdm

    device = torch.device("cuda:0")

    normalize = transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

    vgg_format = transforms.Compose([
        transforms.CenterCrop(224),
        transforms.ToTensor(),
        normalize,
    ])

    # Mind the folder name here: mine is test, because my archive is named test
    data_dir = r'test'
    file_name = 'valid'  # "train1"

    dsets = {x: datasets.ImageFolder(os.path.join(data_dir, x), vgg_format)
             for x in [file_name]}
    dset_sizes = {x: len(dsets[x]) for x in [file_name]}
    loader_valid = torch.utils.data.DataLoader(dsets[file_name], batch_size=256, shuffle=False, num_workers=0)

    # The model to load. Note: recent PyTorch releases (2.6 and later) default
    # torch.load to weights_only=True, so loading a whole pickled model like this
    # one may need weights_only=False there.
    model_vgg_new = torch.load(r'/content/drive/My Drive/model_best_new.pth')
    model_vgg_new = model_vgg_new.to(device)

- Test

    def test_model(model, dataloader, size):
        model.eval()
        running_corrects = 0
        # No gradients are needed for evaluation
        with torch.no_grad():
            for inputs, classes in tqdm(dataloader):
                inputs = inputs.to(device)
                classes = classes.to(device)
                outputs = model(inputs)
                _, preds = torch.max(outputs.data, 1)
                running_corrects += torch.sum(preds == classes.data)
        epoch_acc = running_corrects.item() / size
        tqdm.write('Acc: {:.4f} '.format(epoch_acc))

    test_model(model_vgg_new, loader_valid, size=dset_sizes[file_name])
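`test_model` reports the fraction of validation samples whose highest-scoring class equals the label. A minimal pure-Python sketch of the same computation follows, assuming one row of class scores per sample. The scores and labels are made up and the function name is illustrative.

```python
def accuracy(scores, labels):
    """Fraction of samples whose arg-max score index equals the label."""
    correct = 0
    for row, label in zip(scores, labels):
        predicted = max(range(len(row)), key=row.__getitem__)
        if predicted == label:
            correct += 1
    return correct / len(labels)


# Made-up log-probabilities for four samples and two classes
scores = [[-0.1, -2.3], [-1.6, -0.2], [-0.7, -0.69], [-0.05, -3.0]]
labels = [0, 1, 0, 0]
print(accuracy(scores, labels))  # 0.75
```

The third sample is the miss: its two scores are close, but class 1 is marginally higher while the label is 0.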

4. Yanxishe test (Test)

- Load the test data and the model

    import torch
    import numpy as np
    from torchvision import transforms, datasets
    from tqdm import tqdm

    device = torch.device("cuda:0")

    normalize = transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

    vgg_format = transforms.Compose([
        transforms.CenterCrop(224),
        transforms.ToTensor(),
        normalize,
    ])

    # Note: my data is in the folder yanxishe
    dsets_mine = datasets.ImageFolder(r"yanxishe", vgg_format)
    loader_test = torch.utils.data.DataLoader(dsets_mine, batch_size=1, shuffle=False, num_workers=0)

    # Adjust the model path to your own setup
    model_vgg_new = torch.load(r'/content/drive/My Drive/model_best_16.pth')
    model_vgg_new = model_vgg_new.to(device)

- Test

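    import os

    dic = {}

    def test(model, dataloader, size):
        model.eval()
        cnt = 0
        with torch.no_grad():
            for inputs, _ in tqdm(dataloader):
                inputs = inputs.to(device)
                outputs = model(inputs)
                _, preds = torch.max(outputs.data, 1)
                # Use the file name without directory or extension as the key, because
                # the samples in the dataset are not ordered numerically (0, 1, 2, ...).
                # os.path works with both Linux and Windows path separators.
                path = dsets_mine.imgs[cnt][0]
                key = os.path.splitext(os.path.basename(path))[0]
                dic[key] = preds[0].item()
                cnt = cnt + 1

    test(model_vgg_new, loader_test, size=2000)

- Write the CSV

    # Keys are bare file names, so no directory prefix such as yanxishe/test/ is needed.
    # Mode "w" overwrites the file; the earlier "a+" mode appended duplicate rows on a re-run.
    with open("result18.csv", "w") as f:
        for key in range(2000):
            f.write("{},{}\n".format(key, dic[str(key)]))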
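The ordering remark matters because `ImageFolder` lists files in sorted string order, so `10.jpg` comes before `2.jpg`. The following sketch, with made-up file names and predictions, keys each prediction by the numeric file stem and writes the rows in numeric order with the standard `csv` module. The function name `write_predictions` and the in-memory buffer are assumptions for illustration.

```python
import csv
import io
import os


def write_predictions(paths, preds, out_file):
    """Write 'id,prediction' rows ordered by the numeric file stem."""
    by_id = {}
    for path, pred in zip(paths, preds):
        stem = os.path.splitext(os.path.basename(path))[0]
        by_id[int(stem)] = pred
    writer = csv.writer(out_file, lineterminator="\n")
    for image_id in sorted(by_id):
        writer.writerow([image_id, by_id[image_id]])


paths = sorted(["test/0.jpg", "test/2.jpg", "test/10.jpg", "test/1.jpg"])
print(paths)  # string order: 0, 1, 10, 2

buffer = io.StringIO()
write_predictions(paths, [1, 0, 1, 0], buffer)
print(buffer.getvalue())
```

Converting the stem to an integer before sorting is what restores the 0, 1, 2, ... order that a submission file keyed by image number expects.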

5. Results

Below is the old version (2020-11-17, 13:48)

Cats vs. Dogs with the VGG model

See the original at: https://github.com/mlelarge/dataflowr/blob/master/CEA_EDF_INRIA/01_intro_DLDIY_colab.ipynb

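- VGG is a convolutional neural network model proposed by Simonyan and Zisserman in the paper "Very Deep Convolutional Networks for Large-Scale Image Recognition". Its name is the abbreviation of the authors' group, the Visual Geometry Group at the University of Oxford. The model took part in the 2014 ImageNet image classification and localization challenge and did very well: second place in the classification task and first place in the localization task.

- Transfer learning is a machine learning approach in which a model developed for task A is reused as the starting point for developing a model for task B.

- The 1000 ImageNet classification classes include cats and dogs, so using an ImageNet-pretrained VGG as the starting point for "Cats vs. Dogs" is well justified.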
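To make the transfer-learning idea concrete: when the pretrained layers are frozen and only a new final layer is trained, very few parameters are updated. The sketch below counts VGG16 parameters from the standard layer configuration (13 convolutions with 3x3 kernels followed by three fully connected layers, 224x224 input) and compares the total with a 4096 -> 2 replacement layer. The helper names are illustrative.

```python
# Output channels of the 13 conv layers in VGG16 ("M" marks a max-pool)
VGG16_CFG = [64, 64, "M", 128, 128, "M", 256, 256, 256, "M",
             512, 512, 512, "M", 512, 512, 512, "M"]


def conv_params(cfg, in_channels=3, kernel=3):
    """Total weights and biases of the convolution layers in cfg."""
    total = 0
    for item in cfg:
        if item == "M":
            continue  # pooling has no parameters
        total += kernel * kernel * in_channels * item + item
        in_channels = item
    return total


def linear_params(n_in, n_out):
    """Weights and biases of one fully connected layer."""
    return n_in * n_out + n_out


features = conv_params(VGG16_CFG)
classifier = (linear_params(512 * 7 * 7, 4096)
              + linear_params(4096, 4096)
              + linear_params(4096, 1000))
new_head = linear_params(4096, 2)

print(features + classifier)  # 138357544 parameters in the pretrained network
print(new_head)               # 8194 parameters in a 4096 -> 2 output layer
```

Training only the new output layer therefore touches well under 0.01% of the network's parameters, which is why fine-tuning this way is far cheaper than training VGG16 from scratch.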

1. Code

- Imports

    import os
    import torch
    import torch.nn as nn
    from torchvision import models, transforms, datasets
    from tqdm import trange, tqdm

    # Check whether a GPU device is available
    device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
    print('Using gpu: %s ' % torch.cuda.is_available())

- Data processing

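datasets is a package in torchvision that can be used to load image data. Combined with torch.utils.data.DataLoader, it can read data from disk in parallel (controlled by the num_workers setting) and feed it to the GPU in mini-batches during training. Images need preprocessing before a CNN can use them: each image is center-cropped to 224*224*3 and then normalized.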
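The normalization mentioned above is a per-channel affine map, `(x - mean) / std`, applied after `ToTensor` has scaled pixel values to the range [0, 1]. The following is a minimal sketch for a single RGB pixel, using the mean and standard deviation values that appear in the code of this post. The function names are illustrative.

```python
MEAN = [0.485, 0.456, 0.406]
STD = [0.229, 0.224, 0.225]


def normalize_pixel(rgb):
    """Map an RGB pixel with values in [0, 1] to normalized values."""
    return [(value - m) / s for value, m, s in zip(rgb, MEAN, STD)]


def denormalize_pixel(rgb):
    """Invert normalize_pixel, e.g. before displaying an image."""
    return [value * s + m for value, m, s in zip(rgb, MEAN, STD)]


pixel = [0.485, 1.0, 0.0]
normalized = normalize_pixel(pixel)
print(normalized)                     # first channel is exactly 0.0
print(denormalize_pixel(normalized))  # recovers the original pixel up to rounding
```

The inverse map is the same operation that an image-display helper has to apply before plotting a normalized tensor.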

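Here I also added the training files from https://static.leiphone.com/cat_dog.rar to the training set: the original training set has only 1800 images, and I wanted to train on a larger dataset in the hope of getting better results.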

    normalize = transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

    vgg_format = transforms.Compose([
        transforms.CenterCrop(224),
        transforms.ToTensor(),
        normalize,
    ])

    data_dir = r'G:\作业\#1.人工智能\colab_demo-master\dogscats'

    dsets = {x: datasets.ImageFolder(os.path.join(data_dir, x), vgg_format)
             for x in ['train', 'valid']}

    dset_sizes = {x: len(dsets[x]) for x in ['train', 'valid']}
    dset_classes = dsets['train'].classes

    loader_train = torch.utils.data.DataLoader(dsets['train'], batch_size=40, shuffle=True, num_workers=0)
    loader_valid = torch.utils.data.DataLoader(dsets['valid'], batch_size=16, shuffle=False, num_workers=0)

- Load the VGG model

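Here I serialize the better-performing models to disk.

First, the highest accuracy is often not reached at the very end of training, so saving the models with higher accuracy locally gives better results.

Second, training on 20,000+ images takes a long time; picking out a model from a well-performing moment lets testing start early, which is more efficient.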

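    n = input("Retrain from scratch? (Y/N)")
    if n == 'N':
        path = input("Enter the file name: ")
        CNT = input("Enter the epoch number: ")
        model_vgg_new = torch.load(path)
        model_vgg_new = model_vgg_new.to(device)
    else:
        # Fresh run: no epochs trained yet (CNT is read later to number the epochs)
        CNT = 0
        model_vgg = models.vgg16(pretrained=True)
        model_vgg = model_vgg.to(device)
        model_vgg_new = model_vgg
        # Freeze the pretrained parameters
        for param in model_vgg_new.parameters():
            param.requires_grad = False
        # Replace the 1000-class output layer with a 2-class one
        model_vgg_new.classifier._modules['6'] = nn.Linear(4096, 2)
        model_vgg_new.classifier._modules['7'] = torch.nn.LogSoftmax(dim=1)

- Train and test the fully connected layer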

Save the models from well-performing moments to local disk

    model_vgg_new = model_vgg_new.to(device)

    criterion = nn.NLLLoss()

    # Learning rate
    lr = 0.001

    optimizer_vgg = torch.optim.Adam(model_vgg_new.classifier[6].parameters(), lr=lr)

    '''
    Step 2: train the model
    '''

    # Global epoch counter, continuing from the loaded model's epoch number
    N_ = int(CNT) + 1

    def train_model(model, dataloader, size, epochs=100, optimizer=None):
        model.train()
        global N_
        for epoch in range(epochs):
            running_loss = 0.0
            running_corrects = 0
            count = 0
            for inputs, classes in tqdm(dataloader):
                inputs = inputs.to(device)
                classes = classes.to(device)
                outputs = model(inputs)
                loss = criterion(outputs, classes)
                optimizer.zero_grad()
                loss.backward()
                optimizer.step()
                _, preds = torch.max(outputs.data, 1)
                # statistics
                running_loss += loss.item()
                running_corrects += torch.sum(preds == classes.data)
                count += len(inputs)
                # print('Training: No. ', count, ' process ... total: ', size)
            epoch_loss = running_loss / size
            epoch_acc = running_corrects.item() / size
            print('{} \tLoss: {:.4f} Acc: {:.4f}'.format(N_, epoch_loss, epoch_acc))
            # Save every model whose training accuracy exceeds 0.97
            if epoch_acc > 0.97:
                torch.save(model, './model' + str(N_) + '_' + str(epoch_acc) + '_' + '.pth')
                print("Got A Nice Model")
            N_ = N_ + 1

    # Train the model
    train_model(model_vgg_new, loader_train, size=dset_sizes['train'], epochs=100,
                optimizer=optimizer_vgg)

- Training process

- AI Yanxishe result

- Test code

    import os
    import torch
    from torchvision import transforms, datasets
    from tqdm import tqdm

    device = torch.device("cuda:0")

    normalize = transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

    vgg_format = transforms.Compose([
        transforms.CenterCrop(224),
        transforms.ToTensor(),
        normalize,
    ])

    data_dir = r'G:\作业\#1.人工智能\colab_demo-master\dogscats'

    dsets = {x: datasets.ImageFolder(os.path.join(data_dir, x), vgg_format)
             for x in ['valid']}
    dset_sizes = {x: len(dsets[x]) for x in ['valid']}

    loader_valid = torch.utils.data.DataLoader(dsets['valid'], batch_size=8, shuffle=False, num_workers=0)

    model_vgg_new = torch.load('xxxxxx')
    model_vgg_new = model_vgg_new.to(device)

    def test_model(model, dataloader, size):
        model.eval()
        running_corrects = 0
        for inputs, classes in tqdm(dataloader):
            inputs = inputs.to(device)
            classes = classes.to(device)
            outputs = model(inputs)
            _, preds = torch.max(outputs.data, 1)
            running_corrects += torch.sum(preds == classes.data)
        epoch_acc = running_corrects.data.item() / size
        print('Acc: {:.4f} '.format(epoch_acc))

    test_model(model_vgg_new, loader_valid, size=dset_sizes['valid'])

- Yanxishe data test code

    import os
    import torch
    import numpy as np
    from torchvision import transforms, datasets
    from tqdm import tqdm

    device = torch.device("cuda:0")

    normalize = transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

    vgg_format = transforms.Compose([
        transforms.CenterCrop(224),
        transforms.ToTensor(),
        normalize,
    ])

    dsets_mine = datasets.ImageFolder(r"G:\作业\#1.人工智能\colab_demo-master\dogscats2", vgg_format)

    loader_test = torch.utils.data.DataLoader(dsets_mine, batch_size=1, shuffle=False, num_workers=0)

    model_vgg_new = torch.load('')
    model_vgg_new = model_vgg_new.to(device)

    dic = {}

    def test(model, dataloader, size):
        model.eval()
        predictions = np.zeros(size)
        cnt = 0
        for inputs, _ in tqdm(dataloader):
            inputs = inputs.to(device)
            outputs = model(inputs)
            _, preds = torch.max(outputs.data, 1)
            # Key each prediction by the file name without directory or extension;
            # os.path handles both Windows and Linux path separators
            key = os.path.splitext(os.path.basename(dsets_mine.imgs[cnt][0]))[0]
            dic[key] = preds[0].item()
            cnt = cnt + 1

    test(model_vgg_new, loader_test, size=2000)

    # Mode "w" overwrites the file, so a re-run does not append duplicate rows
    with open("result.csv", "w") as f:
        for key in range(2000):
            f.write("{},{}\n".format(key, dic[str(key)]))