【笔记】动手学深度学习 - Resnet

resnet就是在前面几个卷积网络的基础上的延续,基本思想还是卷积堆叠。

之前学弟BN(批量归一化)可以解决网络层数太深而出现的梯度消失问题,但是如果网络层数太多,这个方法也是不太管用的。所以就提出了resnet。

resnet的主要特点就是残差块,残差块的目的就是为了保存之前当前层未训练之前的参数的特征,将这些参数和训练之后的数据一同传入到之后的层当中。

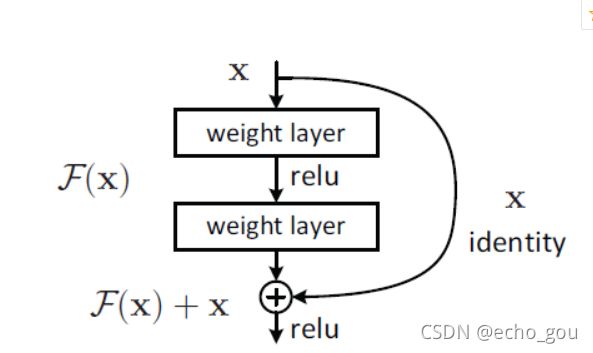

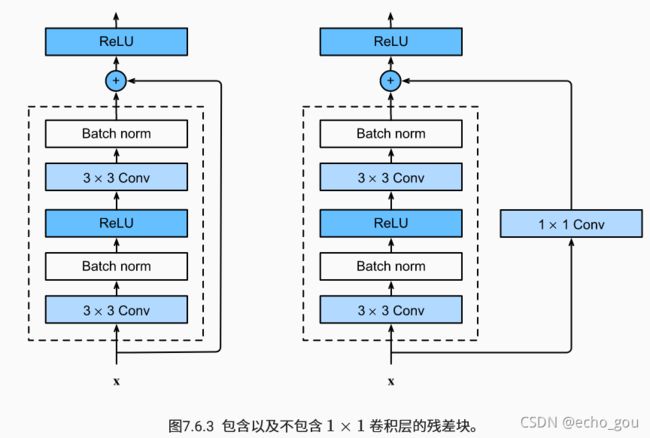

残差块具体解释如下:

黑色图中F(x)可以理解为进行中间的一系列卷积、relu、BN等操作之后的输出,我们最后把F(x)和x相加作为本层残差块的输出,这样既保留了上一层的一部分特征,也进行了卷积训练,有效避免了较深层网络梯度消失的问题。

将多个残差块堆叠之后形成一个resnet网络:

虽然 ResNet 的主体结构跟 GoogLeNet类似,但 ResNet 结构更简单,修改也更方便。这些因素都导致了 ResNet 迅速被广泛使用。

代码:

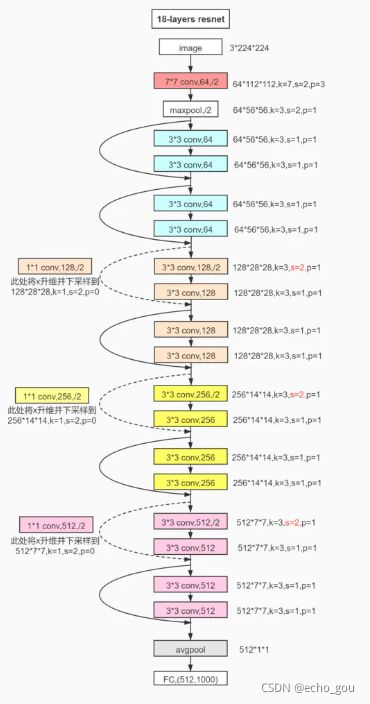

这是resnet18详细的结构图:

李沐的代码:

import torch

from torch import nn

from torch.nn import functional as F

from d2l import torch as d2l

class Residual(nn.Module): #@save

def __init__(self, input_channels, num_channels,

use_1x1conv=False, strides=1):

super().__init__()

self.conv1 = nn.Conv2d(input_channels, num_channels,

kernel_size=3, padding=1, stride=strides)

self.conv2 = nn.Conv2d(num_channels, num_channels,

kernel_size=3, padding=1)

if use_1x1conv:

self.conv3 = nn.Conv2d(input_channels, num_channels,

kernel_size=1, stride=strides)

else:

self.conv3 = None

self.bn1 = nn.BatchNorm2d(num_channels)

self.bn2 = nn.BatchNorm2d(num_channels)

self.relu = nn.ReLU(inplace=True)

def forward(self, X):

Y = F.relu(self.bn1(self.conv1(X)))

Y = self.bn2(self.conv2(Y))

if self.conv3:

X = self.conv3(X)

Y += X

return F.relu(Y)

'''resnet前面几层'''

b1 = nn.Sequential(nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3),

nn.BatchNorm2d(64), nn.ReLU(),

nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

def resnet_block(input_channels, num_channels, num_residuals,

first_block=False):

blk = []

for i in range(num_residuals):

if i == 0 and not first_block:

blk.append(Residual(input_channels, num_channels,

use_1x1conv=True, strides=2))

else:

blk.append(Residual(num_channels, num_channels))

return blk

b2 = nn.Sequential(*resnet_block(64, 64, 2, first_block=True))

b3 = nn.Sequential(*resnet_block(64, 128, 2))

b4 = nn.Sequential(*resnet_block(128, 256, 2))

b5 = nn.Sequential(*resnet_block(256, 512, 2))

net = nn.Sequential(b1, b2, b3, b4, b5,

nn.AdaptiveAvgPool2d((1,1)),

nn.Flatten(), nn.Linear(512, 10))

X = torch.rand(size=(1, 1, 224, 224))

for layer in net:

X = layer(X)

print(layer.__class__.__name__,'output shape:\t', X.shape)

lr, num_epochs, batch_size = 0.05, 10, 256

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, resize=96)

d2l.train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())

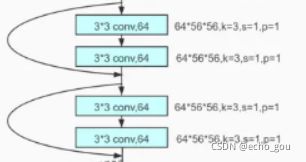

residual对应的是:

resnet_block对应的是这样的:

这四行代码就对应图中4个颜色

b2 = nn.Sequential(*resnet_block(64, 64, 2, first_block=True))

b3 = nn.Sequential(*resnet_block(64, 128, 2))

b4 = nn.Sequential(*resnet_block(128, 256, 2))

b5 = nn.Sequential(*resnet_block(256, 512, 2))参考:

ResNet详解——通俗易懂版_sunny_yeah_的博客-CSDN博客_resnet

ResNet结构解析及pytorch代码 - 知乎

resnet18 50网络结构以及pytorch实现代码 - 简书