Selenium: Automated Testing, and Scraping Quotes and JD.com Product Information

如愿

- 1. Selenium

- 1.1 Introduction

- 1.2 Download

- 2. Automated Testing

- 3. Scraping Quotes

- 4. Scraping JD.com Product Information

- 5. Summary

- 6. References

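1. Selenium

1.1 Introduction

- Selenium is a tool for testing web applications. Selenium tests run directly in the browser, just as a real user would operate it. Supported browsers include IE (7, 8, 9, 10, 11), Mozilla Firefox, Safari, Google Chrome, Opera, and Edge. Its main uses are compatibility testing (checking that an application works well across different browsers and operating systems) and functional testing (building regression tests that verify software functionality and user requirements). It also supports recording actions and automatically generating test scripts in languages such as .NET, Java, and Perl.

1.2 Download

- Selenium can be installed directly with pip install selenium or conda install selenium. To use it, you also need to download the driver for your browser. The Chrome driver can be downloaded from the address below; pick the release that matches your browser version.

Download: https://npm.taobao.org/mirrors/chromedriver/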

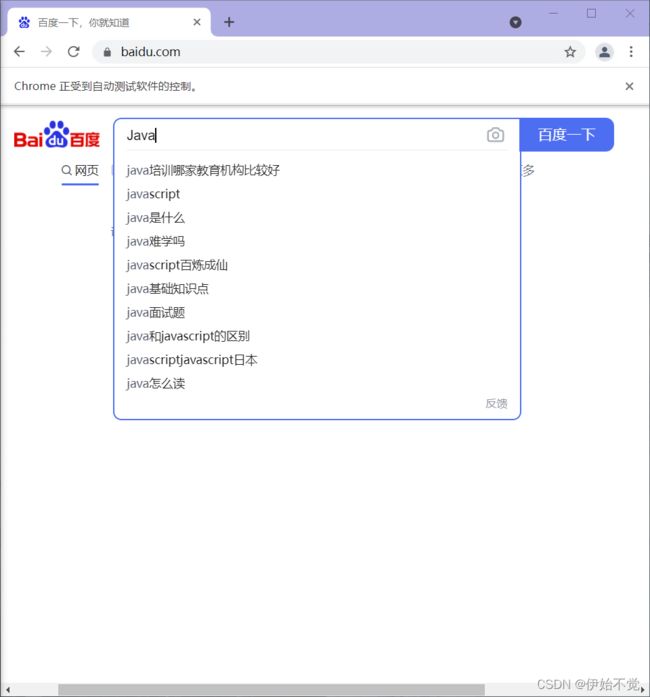

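2. Automated Testing

- Import the package and open the page. The driver is supposed to be added to the PATH environment variable, but that did not seem to have any effect here, so an absolute path is used instead.

from selenium import webdriver

driver = webdriver.Chrome('D:\\software\\chromedriver_win32\\chromedriver.exe')

# Open the page
driver.get("https://www.baidu.com/")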
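Passing the driver path as the first positional argument, as above, is the Selenium 3 style. In Selenium 4 the path is normally wrapped in a Service object, and the positional form was eventually dropped. A minimal sketch, assuming Selenium 4 is installed; the helper name make_chrome_driver is hypothetical, and the imports sit inside the function only so that the snippet still loads on a machine without Selenium:

```python
def make_chrome_driver(driver_path=None):
    """Create a Chrome driver, optionally from an explicit chromedriver path.

    Hypothetical helper for illustration; assumes Selenium 4.
    """
    # Imported lazily so that defining this function does not require Selenium.
    from selenium import webdriver
    from selenium.webdriver.chrome.service import Service

    if driver_path:
        # Selenium 4 style: wrap the executable path in a Service object.
        return webdriver.Chrome(service=Service(executable_path=driver_path))
    # No path given: let Selenium locate the driver itself (for example on PATH).
    return webdriver.Chrome()
```

With this helper, the call in the article would become make_chrome_driver('D:\\software\\chromedriver_win32\\chromedriver.exe').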

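- A Chrome window pops up.

- Press F12 to open the developer tools and click the element-picker icon in the top-left corner (it turns blue). Then click Baidu's search box, and the panel jumps to the HTML for that input box.

- The input's id is kw. Get the element with the driver's find_element_by_id and fill in a value with send_keys.

p_input = driver.find_element_by_id("kw")
p_input.send_keys('Java')

# Click the search button
p_btn = driver.find_element_by_id('su')
p_btn.click()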

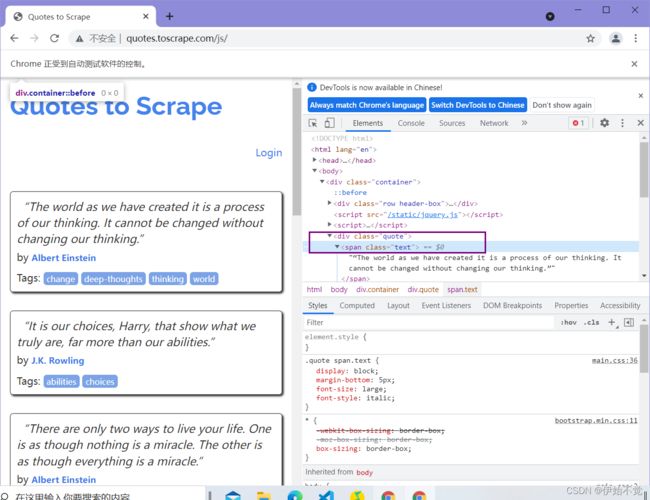

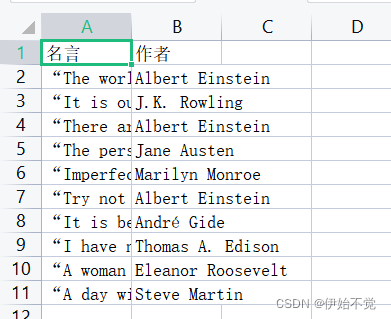
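The find_element_by_* helpers used above were deprecated in Selenium 4 and later removed, so on a recent install the same steps are written with find_element and By locators. A minimal sketch, assuming Selenium 4; the function name search_baidu and the 10-second timeout are hypothetical choices, and the explicit waits are an addition that the original script does not have:

```python
def search_baidu(driver, keyword, timeout=10):
    """Type `keyword` into Baidu's search box and click the search button."""
    # Imported lazily so that defining this function does not require Selenium.
    from selenium.webdriver.common.by import By
    from selenium.webdriver.support import expected_conditions as EC
    from selenium.webdriver.support.ui import WebDriverWait

    wait = WebDriverWait(driver, timeout)
    # Wait until the input with id "kw" is present, then type into it.
    box = wait.until(EC.presence_of_element_located((By.ID, "kw")))
    box.send_keys(keyword)
    # Wait until the button with id "su" is clickable, then click it.
    wait.until(EC.element_to_be_clickable((By.ID, "su"))).click()
```

After driver.get("https://www.baidu.com/"), calling search_baidu(driver, 'Java') performs the same search as the code above.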

3. Scraping Quotes

Task: scrape ten quotes from the specified website.

Website: http://quotes.toscrape.com/js/

- Import the packages and open the site

from bs4 import BeautifulSoup as bs
from selenium import webdriver
import csv
from selenium.webdriver.chrome.options import Options
from tqdm import tqdm  # Shows a progress bar in the terminal

driver = webdriver.Chrome('D:\\software\\chromedriver_win32\\chromedriver.exe')
driver.get('http://quotes.toscrape.com/js/')

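- Define the CSV header, the file path, and the list that holds the content

# CSV header
quote_head = ['名言', '作者']  # "quote", "author"

# Path and name of the CSV file
quote_path = '..\\source\\csv_file\\quote_csv.csv'

# List that holds the content
quote_content = []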

def write_csv(csv_head, csv_content, csv_path):
    '''
    function_name: write_csv
    parameters: csv_head, csv_content, csv_path
    csv_head: the CSV header row
    csv_content: the CSV rows; each row has as many columns as csv_head
    csv_path: the path of the CSV file
    '''
    with open(csv_path, 'w', newline='', encoding='utf-8') as file:
        fileWriter = csv.writer(file)
        fileWriter.writerow(csv_head)
        fileWriter.writerows(csv_content)
    print('爬取信息成功')  # "Scraped successfully"
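One thing to watch with write_csv: open(..., 'w') raises an error if the target folder does not exist, which is easy to hit with a relative path like the one used here. A minimal sketch, assuming it is acceptable to create the folder on the fly; write_csv_safely and read_csv_rows are hypothetical helpers, not part of the original script:

```python
import csv
from pathlib import Path


def write_csv_safely(csv_head, csv_content, csv_path):
    """Write a header row and data rows to csv_path, creating parent folders."""
    path = Path(csv_path)
    # Create the folder (and any missing parents) before opening the file.
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("w", newline="", encoding="utf-8") as file:
        writer = csv.writer(file)
        writer.writerow(csv_head)
        writer.writerows(csv_content)
    return path


def read_csv_rows(csv_path):
    """Read every row back as a list of strings, header included."""
    with open(csv_path, newline="", encoding="utf-8") as file:
        return list(csv.reader(file))
```

Reading the file back with read_csv_rows is a quick way to confirm that the number of columns in each row matches the header.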

- Scrape the information and save it

# find_elements_by_class_name returns a list of every element with that class.
# Each element in the list can then be searched further.
quote = driver.find_elements_by_class_name("quote")

# Collect the information in quote_content
for i in tqdm(range(len(quote))):
    quote_text = quote[i].find_element_by_class_name("text")
    quote_author = quote[i].find_element_by_class_name("author")
    temp = []
    temp.append(quote_text.text)
    temp.append(quote_author.text)
    quote_content.append(temp)

write_csv(quote_head, quote_content, quote_path)

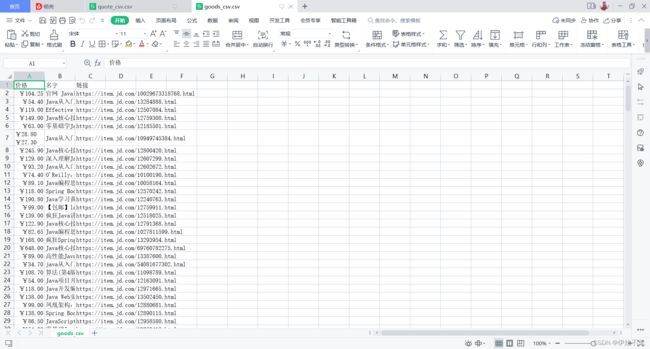
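Because find_elements_by_class_name returns a plain list, the loop can also iterate over the elements directly instead of indexing into them. A minimal sketch that uses the same Selenium 3 style calls as the article; FakeElement is a hypothetical stand-in so the snippet runs without a browser, and collect_quotes is an illustrative name:

```python
class FakeElement:
    """Hypothetical stand-in for a Selenium element, for illustration only."""

    def __init__(self, text="", children=None):
        self.text = text
        self._children = children or {}

    def find_element_by_class_name(self, name):
        return self._children[name]


def collect_quotes(quote_elements):
    """Return one [text, author] row per quote element."""
    rows = []
    for element in quote_elements:
        text = element.find_element_by_class_name("text").text
        author = element.find_element_by_class_name("author").text
        rows.append([text, author])
    return rows


# One fake quote block with a "text" child and an "author" child.
sample = [
    FakeElement(children={
        "text": FakeElement("An example quote."),
        "author": FakeElement("Example Author"),
    })
]
print(collect_quotes(sample))
```

With a real driver, the list returned by driver.find_elements_by_class_name("quote") would be passed in place of sample.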

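4. Scraping JD.com Product Information

- As before, import the packages and open JD.com, then sleep for 3 seconds so that the script behaves more like a person.

from selenium import webdriver
import time
import csv
from tqdm import tqdm  # Shows a progress bar in the terminal

driver = webdriver.Chrome('D:\\software\\chromedriver_win32\\chromedriver.exe')

# Load the page
driver.get("https://www.jd.com/")
time.sleep(3)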

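- Define the path for the scraped data, the list that holds it, and the number of items to scrape

# List that holds the book information
goods_info_list = []

# Scrape 200 books
goods_num = 200

# CSV header
goods_head = ['价格', '名字', '链接']  # "price", "name", "link"

# Path and name of the CSV file
goods_path = '..\\source\\csv_file\\goods_csv.csv'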

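- Open the developer tools and locate the search box. Its id is key.

- Get the input box, type the search term, then find the search button and click it

# Type Java into the input box
p_input = driver.find_element_by_id("key")
p_input.send_keys('Java')

# The button did not seem to be reachable by class name directly,
# so get the enclosing div first and then the button inside it
form_field = driver.find_element_by_class_name('form')
s_btn = form_field.find_element_by_tag_name('button')
s_btn.click()  # Click the button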

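- Getting the price after the search follows the same steps, so the details are skipped and only the code is given from here on. This function gets a product's price, name, and link.

# Get a product's price, name, and link
def get_prince_and_name(goods):
    # Locate the elements directly with CSS selectors
    # Price
    goods_price = goods.find_element_by_css_selector('div.p-price')
    # Name
    goods_name = goods.find_element_by_css_selector('div.p-name')
    # Link
    goods_href = goods.find_element_by_css_selector('div.p-img>a').get_property('href')
    return goods_price, goods_name, goods_href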

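- Define a function that scrolls to the bottom of the page. Scrolling to the bottom loads the next batch of results; clicking "next page" without scrolling first would skip that batch and land on the one after it. The function also sleeps for a moment, again to behave more like a person.

def drop_down(web_driver):
    # Move the scroll bar to the bottom of the page
    web_driver.execute_script('window.scrollTo(0, document.body.scrollHeight)')
    time.sleep(3)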
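A single scroll followed by a fixed sleep works when one scroll is enough. A variant is to keep scrolling until the page height stops changing. A minimal sketch under that assumption; scroll_until_stable, pause, and max_rounds are hypothetical names, and only execute_script is taken from the code above:

```python
import time


def scroll_until_stable(web_driver, pause=1.0, max_rounds=10):
    """Scroll to the bottom repeatedly until the page height stops changing."""
    get_height = "return document.body.scrollHeight"
    last_height = web_driver.execute_script(get_height)
    for _ in range(max_rounds):
        web_driver.execute_script("window.scrollTo(0, document.body.scrollHeight)")
        time.sleep(pause)  # Give lazily loaded items time to appear.
        new_height = web_driver.execute_script(get_height)
        if new_height == last_height:
            break  # The last scroll added no new content.
        last_height = new_height
    return last_height
```

The max_rounds cap keeps the loop from running indefinitely on a page that keeps growing.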

- Define a function that scrapes one page

# Scrape one page
def crawl_a_page(web_driver, goods_num):
    # Scroll down so the whole list is loaded, then get the book list
    drop_down(web_driver)
    goods_list = web_driver.find_elements_by_css_selector('div#J_goodsList>ul>li')
    # Get the price, name, and link of each book
    for i in tqdm(range(len(goods_list))):
        goods_num -= 1
        goods_price, goods_name, goods_href = get_prince_and_name(goods_list[i])
        goods = []
        goods.append(goods_price.text)
        goods.append(goods_name.text)
        goods.append(goods_href)
        goods_info_list.append(goods)
        if goods_num == 0:
            break
    return goods_num

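- Scrape the required number of items. The required 200 books make the run noticeably sluggish. write_csv is the function defined in section 3.

while goods_num != 0:
    goods_num = crawl_a_page(driver, goods_num)
    if goods_num != 0:
        # More items are needed, so go to the next page
        driver.find_element_by_class_name('pn-next').click()
        time.sleep(1)

write_csv(goods_head, goods_info_list, goods_path)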
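The stopping logic of this loop can be sketched without a browser. In this version the loop also ends when a page comes back empty, so it cannot spin forever if the selector stops matching. collect_until, fetch_page, and go_next are hypothetical names; the two callables stand in for scraping one page and clicking the pn-next button:

```python
def collect_until(limit, fetch_page, go_next):
    """Collect items page by page until `limit` items are gathered."""
    collected = []
    while len(collected) < limit:
        items = fetch_page()
        if not items:
            break  # Nothing on this page: stop instead of looping forever.
        remaining = limit - len(collected)
        collected.extend(items[:remaining])
        if len(collected) < limit:
            go_next()  # Only turn the page when more items are still needed.
    return collected


# Usage with three fake pages of three items each.
pages = iter([["a", "b", "c"], ["d", "e", "f"], ["g", "h", "i"]])
clicks = []
result = collect_until(
    limit=5,
    fetch_page=lambda: next(pages, []),
    go_next=lambda: clicks.append("next"),
)
print(result)       # ['a', 'b', 'c', 'd', 'e']
print(len(clicks))  # 1
```

Counting up to a limit, rather than counting a shared number down to zero, also keeps the function free of state that lives outside it.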

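5. Summary

- Selenium is really convenient, and a little CSS knowledge makes it even easier to use. Also, to be fair, writing ipynb files in VS Code is genuinely pleasant.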

6. References

python+selenium: scraping dynamically loaded (scroll-to-load) page content by scrolling down automatically

Locating elements with CSS in Selenium