Spark MLlib Linear Regression线性回归算法

1、Spark MLlib Linear Regression线性回归算法

1.1 线性回归算法

1.1.1 基础理论

在统计学中,线性回归(Linear Regression)是利用称为线性回归方程的最小平方函数对一个或多个自变量和因变量之间关系进行建模的一种回归分析。这种函数是一个或多个称为回归系数的模型参数的线性组合。

回归分析中,只包括一个自变量和一个因变量,且二者的关系可用一条直线近似表示,这种回归分析称为一元线性回归分析。如果回归分析中包括两个或两个以上的自变量,且因变量和自变量之间是线性关系,则称为多元线性回归分析。

下面我们来举例何为一元线性回归分析,为某地区的房屋面积(feet)、房间数、价格($)的一个数据集,在该数据集中,只有自变量面积(feet)、房间数,和一个因变量价格($),

分析得到的线性方程应如下所示:

因此,无论是一元线性方程还是多元线性方程,可统一写成如下的格式:

上式中x0=1,而求线性方程则演变成了求方程的参数ΘT。

线性回归假设特征和结果满足线性关系。其实线性关系的表达能力非常强大,每个特征对结果的影响强弱可以有前面的参数体现,而且每个特征变量可以首先映射到一个函数,然后再参与线性计算,这样就可以表达特征与结果之间的非线性关系。

1.1.2 梯度下降算法

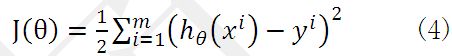

为了得到目标线性方程,我们只需确定公式(3)中的ΘT,同时为了确定所选定的的ΘT效果好坏,通常情况下,我们使用一个损失函数(loss function)或者说是错误函数(error function)来评估h(x)函数的好坏。该错误函数如公式(4)所示。前面乘上的1/2是为了在求导的时候,这个系数就不见了。

如何调整ΘT以使得J(Θ)取得最小值有很多方法,其中有完全用数学描述的最小二乘法(min square)和梯度下降法。

1.1.3 批量梯度下降算法

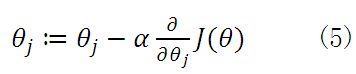

由之前所述,求ΘT的问题演变成了求J(Θ)的极小值问题,这里使用梯度下降法。而梯度下降法中的梯度方向由J(Θ)对Θ的偏导数确定,由于求的是极小值,因此梯度方向是偏导数的反方向。

公式(5)中α为学习速率,当α过大时,有可能越过最小值,而α当过小时,容易造成迭代次数较多,收敛速度较慢。假如数据集中只有一条样本,那么样本数量,所以公式(5)中

所以公式(5)就演变成:

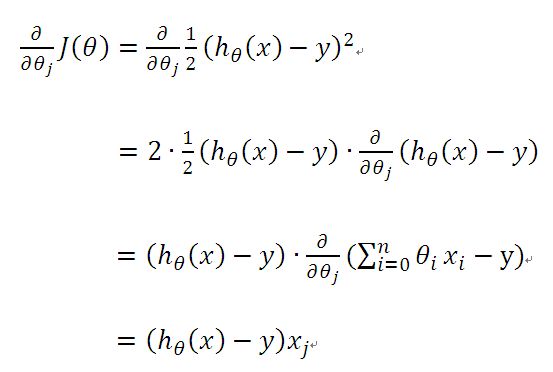

当样本数量m不为1时,将公式(5)中![]() 由公式(4)带入求偏导,那么每个参数沿梯度方向的变化值由公式(7)求得。

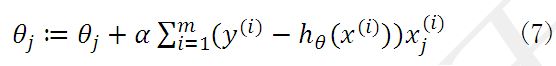

由公式(4)带入求偏导,那么每个参数沿梯度方向的变化值由公式(7)求得。

初始时ΘT可设为0,然后迭代使用公式(7)计算ΘT中的每个参数,直至收敛为止。由于每次迭代计算ΘT时,都使用了整个样本集,因此我们称该梯度下降算法为批量梯度下降算法(batch gradient descent)。

1.1.4 随机梯度下降算法

当样本集数据量m很大时,批量梯度下降算法每迭代一次的复杂度为O(mn),复杂度很高。因此,为了减少复杂度,当m很大时,我们更多时候使用随机梯度下降算法(stochastic gradient descent),算法如下所示:

即每读取一条样本,就迭代对ΘT进行更新,然后判断其是否收敛,若没收敛,则继续读取样本进行处理,如果所有样本都读取完毕了,则循环重新从头开始读取样本进行处理。

这样迭代一次的算法复杂度为O(n)。对于大数据集,很有可能只需读取一小部分数据,函数J(Θ)就收敛了。比如样本集数据量为100万,有可能读取几千条或几万条时,函数就达到了收敛值。所以当数据量很大时,更倾向于选择随机梯度下降算法。

但是,相较于批量梯度下降算法而言,随机梯度下降算法使得J(Θ)趋近于最小值的速度更快,但是有可能造成永远不可能收敛于最小值,有可能一直会在最小值周围震荡,但是实践中,大部分值都能够接近于最小值,效果也都还不错。

1.1.4 最小二乘法

将训练特征表示为X矩阵,结果表示成y向量,仍然是线性回归模型,误差函数不变。那么θ可以直接由下面公式得出

但此方法要求X是列满秩的,而且求矩阵的逆比较慢。

1.2 Spark Mllib Linear Regression源码分析

1.2.1 LinearRegressionWithSGD

线性回归算法的train方法,由LinearRegressionWithSGD类的object定义了train函数。

package org.apache.spark.mllib.regression

def train(

input: RDD[LabeledPoint],

numIterations: Int,

stepSize: Double,

miniBatchFraction: Double): LinearRegressionModel = {

new LinearRegressionWithSGD(stepSize, numIterations, miniBatchFraction).run(input)

}

Input为输入样本,numIterations为迭代次数,stepSize为步长,miniBatchFraction为迭代因子。

创建一个LinearRegressionWithSGD对象,初始化梯度下降算法。

Run方法来自于继承父类GeneralizedLinearAlgorithm,实现方法如下。

1.2.2 GeneralizedLinearAlgorithm

LinearRegressionWithSGD中run方法的实现。

package org.apache.spark.mllib.regression

/**

* Run the algorithm with the configured parameters on an input RDD

* of LabeledPoint entries starting from the initial weights provided.

*/

def run(input: RDD[LabeledPoint], initialWeights: Vector): M = {

// 特征维度赋值。

if (numFeatures < 0) {

numFeatures = input.map(_.features.size).first()

}

// 输入样本数据检测。

if (input.getStorageLevel == StorageLevel.NONE) {

logWarning("The input data is not directly cached, which may hurt performance if its"

+ " parent RDDs are also uncached.")

}

// 输入样本数据检测。

// Check the data properties before running the optimizer

if (validateData && !validators.forall(func => func(input))) {

thrownew SparkException("Input validation failed.")

}

val scaler = if (useFeatureScaling) {

new StandardScaler(withStd = true, withMean = false).fit(input.map(_.features))

} else {

null

}

// 输入样本数据处理,输出data(label, features)格式。

// addIntercept:是否增加θ0常数项,若增加,则增加x0=1项。

// Prepend an extra variable consisting of all 1.0's for the intercept.

// TODO: Apply feature scaling to the weight vector instead of input data.

val data =

if (addIntercept) {

if (useFeatureScaling) {

input.map(lp => (lp.label, appendBias(scaler.transform(lp.features)))).cache()

} else {

input.map(lp => (lp.label, appendBias(lp.features))).cache()

}

} else {

if (useFeatureScaling) {

input.map(lp => (lp.label, scaler.transform(lp.features))).cache()

} else {

input.map(lp => (lp.label, lp.features))

}

}

//初始化权重。

// addIntercept:是否增加θ0常数项,若增加,则权重增加θ0。

/**

* TODO: For better convergence, in logistic regression, the intercepts should be computed

* from the prior probability distribution of the outcomes; for linear regression,

* the intercept should be set as the average of response.

*/

val initialWeightsWithIntercept = if (addIntercept && numOfLinearPredictor == 1) {

appendBias(initialWeights)

} else {

/** If `numOfLinearPredictor > 1`, initialWeights already contains intercepts. */

initialWeights

}

//权重优化,进行梯度下降学习,返回最优权重。

val weightsWithIntercept = optimizer.optimize(data, initialWeightsWithIntercept)

val intercept = if (addIntercept && numOfLinearPredictor == 1) {

weightsWithIntercept(weightsWithIntercept.size - 1)

} else {

0.0

}

var weights = if (addIntercept && numOfLinearPredictor == 1) {

Vectors.dense(weightsWithIntercept.toArray.slice(0, weightsWithIntercept.size - 1))

} else {

weightsWithIntercept

}

createModel(weights, intercept)

}

其中optimizer.optimize(data, initialWeightsWithIntercept)是线性回归实现的核心。

oprimizer的类型为GradientDescent,optimize方法中主要调用GradientDescent伴生对象的runMiniBatchSGD方法,返回当前迭代产生的最优特征权重向量。

GradientDescentd对象中optimize实现方法如下。

1.2.3 GradientDescent

optimize实现方法如下。

package org.apache.spark.mllib.optimization

/**

* :: DeveloperApi ::

* Runs gradient descent on the given training data.

* @param data training data

* @param initialWeights initial weights

* @return solution vector

*/

@DeveloperApi

def optimize(data: RDD[(Double, Vector)], initialWeights: Vector): Vector = {

val (weights, _) = GradientDescent.runMiniBatchSGD(

data,

gradient,

updater,

stepSize,

numIterations,

regParam,

miniBatchFraction,

initialWeights)

weights

}

}

在optimize方法中,调用了GradientDescent.runMiniBatchSGD方法,其runMiniBatchSGD实现方法如下:

/**

* Run stochastic gradient descent (SGD) in parallel using mini batches.

* In each iteration, we sample a subset (fraction miniBatchFraction) of the total data

* in order to compute a gradient estimate.

* Sampling, and averaging the subgradients over this subset is performed using one standard

* spark map-reduce in each iteration.

*

* @param data - Input data for SGD. RDD of the set of data examples, each of

* the form (label, [feature values]).

* @param gradient - Gradient object (used to compute the gradient of the loss function of

* one single data example)

* @param updater - Updater function to actually perform a gradient step in a given direction.

* @param stepSize - initial step size for the first step

* @param numIterations - number of iterations that SGD should be run.

* @param regParam - regularization parameter

* @param miniBatchFraction - fraction of the input data set that should be used for

* one iteration of SGD. Default value 1.0.

*

* @return A tuple containing two elements. The first element is a column matrix containing

* weights for every feature, and the second element is an array containing the

* stochastic loss computed for every iteration.

*/

def runMiniBatchSGD(

data: RDD[(Double, Vector)],

gradient: Gradient,

updater: Updater,

stepSize: Double,

numIterations: Int,

regParam: Double,

miniBatchFraction: Double,

initialWeights: Vector): (Vector, Array[Double]) = {

//历史迭代误差数组

val stochasticLossHistory = new ArrayBuffer[Double](numIterations)

//样本数据检测,若为空,返回初始值。

val numExamples = data.count()

// if no data, return initial weights to avoid NaNs

if (numExamples == 0) {

logWarning("GradientDescent.runMiniBatchSGD returning initial weights, no data found")

return (initialWeights, stochasticLossHistory.toArray)

}

// miniBatchFraction值检测。

if (numExamples * miniBatchFraction < 1) {

logWarning("The miniBatchFraction is too small")

}

// weights权重初始化。

// Initialize weights as a column vector

var weights = Vectors.dense(initialWeights.toArray)

val n = weights.size

/**

* For the first iteration, the regVal will be initialized as sum of weight squares

* if it's L2 updater; for L1 updater, the same logic is followed.

*/

var regVal = updater.compute(

weights, Vectors.dense(new Array[Double](weights.size)), 0, 1, regParam)._2

// weights权重迭代计算。

for (i <- 1 to numIterations) {

val bcWeights = data.context.broadcast(weights)

// Sample a subset (fraction miniBatchFraction) of the total data

// compute and sum up the subgradients on this subset (this is one map-reduce)

// 采用treeAggregate的RDD方法,进行聚合计算,计算每个样本的权重向量、误差值,然后对所有样本权重向量及误差值进行累加。

val (gradientSum, lossSum, miniBatchSize) = data.sample(false, miniBatchFraction, 42 + i)

.treeAggregate((BDV.zeros[Double](n), 0.0, 0L))(

seqOp = (c, v) => {

// c: (grad, loss, count), v: (label, features)

val l = gradient.compute(v._2, v._1, bcWeights.value, Vectors.fromBreeze(c._1))

(c._1, c._2 + l, c._3 + 1)

},

combOp = (c1, c2) => {

// c: (grad, loss, count)

(c1._1 += c2._1, c1._2 + c2._2, c1._3 + c2._3)

})

// 保存本次迭代误差值,以及更新weights权重向量。

if (miniBatchSize > 0) {

/**

* NOTE(Xinghao): lossSum is computed using the weights from the previous iteration

* and regVal is the regularization value computed in the previous iteration as well.

*/

stochasticLossHistory.append(lossSum / miniBatchSize + regVal)

val update = updater.compute(

weights, Vectors.fromBreeze(gradientSum / miniBatchSize.toDouble), stepSize, i, regParam)

weights = update._1

regVal = update._2

} else {

logWarning(s"Iteration ($i/$numIterations). The size of sampled batch is zero")

}

}

logInfo("GradientDescent.runMiniBatchSGD finished. Last 10 stochastic losses %s".format(

stochasticLossHistory.takeRight(10).mkString(", ")))

(weights, stochasticLossHistory.toArray)

}

runMiniBatchSGD的输入、输出参数说明:

data 样本输入数据,格式 (label, [feature values])

gradient 梯度对象,用于对每个样本计算梯度及误差

updater 权重更新对象,用于每次更新权重

stepSize 初始步长

numIterations 迭代次数

regParam 正则化参数

miniBatchFraction 迭代因子

返回结果(Vector, Array[Double]),第一个为权重,每二个为每次迭代的误差值。

在MiniBatchSGD中主要实现对输入数据集进行迭代抽样,通过使用LeastSquaresGradient作为梯度下降算法,使用SimpleUpdater作为更新算法,不断对抽样数据集进行迭代计算从而找出最优的特征权重向量解。在LinearRegressionWithSGD中定义如下:

privateval gradient = new LeastSquaresGradient()

privateval updater = new SimpleUpdater()

overrideval optimizer = new GradientDescent(gradient, updater)

.setStepSize(stepSize)

.setNumIterations(numIterations)

.setMiniBatchFraction(miniBatchFraction)

runMiniBatchSGD方法中调用了gradient.compute、updater.compute两个方法,其实现方法如下。

1.2.4 gradient & updater

1)gradient

/**

* :: DeveloperApi ::

* Compute gradient and loss for a Least-squared loss function, as used in linear regression.

* This is correct for the averaged least squares loss function (mean squared error)

* L = 1/2n ||A weights-y||^2

* See also the documentation for the precise formulation.

*/

@DeveloperApi

class LeastSquaresGradient extends Gradient {

//计算当前计算对象的类标签与实际类标签值之差

//计算当前平方梯度下降值

//计算权重的更新值

//返回当前训练对象的特征权重向量和误差

overridedef compute(data: Vector, label: Double, weights: Vector): (Vector, Double) = {

val diff = dot(data, weights) - label

val loss = diff * diff / 2.0

val gradient = data.copy

scal(diff, gradient)

(gradient, loss)

}

overridedef compute(

data: Vector,

label: Double,

weights: Vector,

cumGradient: Vector): Double = {

val diff = dot(data, weights) - label

axpy(diff, data, cumGradient)

diff * diff / 2.0

}

}

2)updater

/**

* :: DeveloperApi ::

* A simple updater for gradient descent *without* any regularization.

* Uses a step-size decreasing with the square root of the number of iterations.

*/

//weihtsOld:上一次迭代计算后的特征权重向量

//gradient:本次迭代计算的特征权重向量

//stepSize:迭代步长

//iter:当前迭代次数

//regParam:回归参数

//以当前迭代次数的平方根的倒数作为本次迭代趋近(下降)的因子

//返回本次剃度下降后更新的特征权重向量

@DeveloperApi

class SimpleUpdater extends Updater {

overridedef compute(

weightsOld: Vector,

gradient: Vector,

stepSize: Double,

iter: Int,

regParam: Double): (Vector, Double) = {

val thisIterStepSize = stepSize / math.sqrt(iter)

val brzWeights: BV[Double] = weightsOld.toBreeze.toDenseVector

brzAxpy(-thisIterStepSize, gradient.toBreeze, brzWeights)

(Vectors.fromBreeze(brzWeights), 0)

}

}

1.3 Mllib Linear Regression实例

1、数据

数据格式为:标签, 特征1 特征2 特征3……

-0.4307829,-1.63735562648104 -2.00621178480549 -1.86242597251066 -1.02470580167082 -0.522940888712441 -0.863171185425945 -1.04215728919298 -0.864466507337306

-0.1625189,-1.98898046126935 -0.722008756122123 -0.787896192088153 -1.02470580167082 -0.522940888712441 -0.863171185425945 -1.04215728919298 -0.864466507337306

……

2、代码

//1 读取样本数据

valdata_path = "/user/tmp/lpsa.data"

valdata = sc.textFile(data_path)

valexamples = data.map { line =>

valparts = line.split(',')

LabeledPoint(parts(0).toDouble, Vectors.dense(parts(1).split(' ').map(_.toDouble)))

}.cache()

//2 样本数据划分训练样本与测试样本

valsplits = examples.randomSplit(Array(0.8, 0.2))

valtraining = splits(0).cache()

valtest = splits(1).cache()

valnumTraining = training.count()

valnumTest = test.count()

println(s"Training: $numTraining, test: $numTest.")

//3 新建线性回归模型,并设置训练参数

valnumIterations = 100

valstepSize = 1

valminiBatchFraction = 1.0

valmodel = LinearRegressionWithSGD.train(training, numIterations, stepSize, miniBatchFraction)

//4 对测试样本进行测试

valprediction = model.predict(test.map(_.features))

valpredictionAndLabel = prediction.zip(test.map(_.label))

//5 计算测试误差

valloss = predictionAndLabel.map {

case (p, l) =>

valerr = p - l

err * err

}.reduce(_ + _)

valrmse = math.sqrt(loss / numTest)

println(s"Test RMSE = $rmse.")