Scraping all information notices from the Chongqing Jiaotong University news site with a web crawler

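Contents

- I. Create an Anaconda virtual environment

- II. Scraping information with a crawler

  - (1) Scraping practice problem data from the Nanyang Institute of Technology ACM site (example)

  - (2) Scraping all information notices from the Chongqing Jiaotong University news site

- III. Summary

- IV. Reference links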

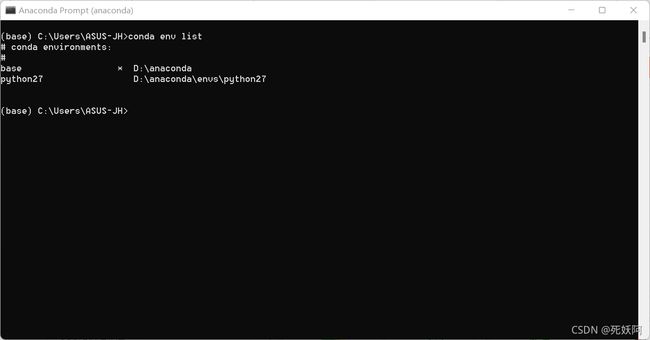

I. Create an Anaconda virtual environment

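1. Open Anaconda Prompt and create a virtual environment. Here pythonwork is the environment name and python=3.6 is the Python version to install; both can be changed as needed

conda create -n pythonwork python=3.6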

2. Activate the environment

activate pythonwork

3. In this virtual environment, install the required packages (requests, beautifulsoup4, and so on) with pip or conda.

conda install -n pythonwork requests

conda install -n pythonwork beautifulsoup4

conda install tqdm
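Before moving on, it can help to confirm that the packages are importable from the active environment. The following is a minimal sketch that uses only the standard library; the helper name `missing_packages` is hypothetical, and note that beautifulsoup4 is imported under the module name `bs4`.

```python
import importlib.util


def missing_packages(names):
    """Return the module names from `names` that cannot be found in this environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    # beautifulsoup4 installs a module called "bs4".
    required = ["requests", "bs4", "tqdm"]
    missing = missing_packages(required)
    print("missing:", missing if missing else "none")
```

If anything is reported as missing, install it into the environment and run the check again.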

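II. Scraping information with a crawler

(1) Scraping practice problem data from the Nanyang Institute of Technology ACM site (example)

1. Fetch and save the practice problem data from the Nanyang Institute of Technology ACM problem site (http://www.51mxd.cn/)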

# -*- coding: utf-8 -*-
"""
Created on Sat Nov 20 18:51:38 2021

@author: ASUS-JH
"""
import requests
from bs4 import BeautifulSoup
import csv
from tqdm import tqdm

# Send a browser-like User-Agent
Headers = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/95.0.4638.69 Safari/537.36 Edg/95.0.1020.44'
}

# CSV header row
cqjtu_head = ["Date", "Title"]

# Collected rows
cqjtu_infomation = []


# Fetch the news titles and dates from one listing page
def get_time_and_title(page_num, Headers):  # page number, request headers
    # Page 66 (the newest page) is served at xxtz.htm; older pages are numbered
    if page_num == 66:
        url = 'http://news.cqjtu.edu.cn/xxtz.htm'
    else:
        url = f'http://news.cqjtu.edu.cn/xxtz/{page_num}.htm'
    r = requests.get(url, headers=Headers)
    r.raise_for_status()
    r.encoding = "utf-8"
    # Select the date elements by class
    array = {
        'class': 'time',
    }
    # Select the title links by their target attribute
    title_array = {
        'target': '_blank'
    }
    soup = BeautifulSoup(r.text, 'html.parser')
    time = soup.find_all('div', array)
    title = soup.find_all('a', title_array)
    temp = []
    for i in range(0, len(time)):
        time_s = time[i].string
        # Strip surrounding newlines and spaces
        time_s = time_s.strip('\n ')
        temp.append(time_s)
        # The original script offsets the title index by one
        temp.append(title[i + 1].string)
        cqjtu_infomation.append(temp)
        temp = []

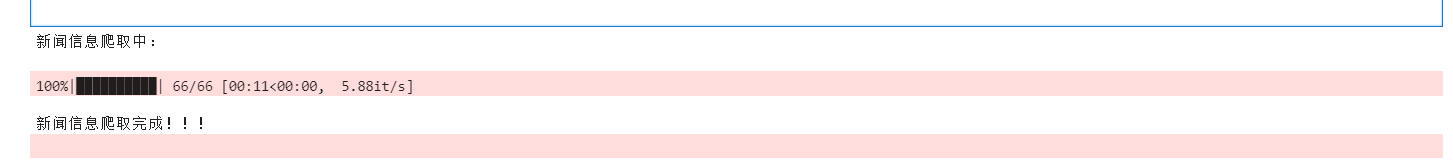

print('Scraping news information:\n')
# Walk the listing pages from 66 down to 1
for pages in tqdm(range(66, 0, -1)):
    get_time_and_title(pages, Headers)

# Save the news rows
with open('cqjtu_news.csv', 'w', newline='', encoding='utf-8') as file:
    fileWriter = csv.writer(file)
    fileWriter.writerow(cqjtu_head)
    fileWriter.writerows(cqjtu_infomation)

print('\nNews information scraped!!!')

2.运行

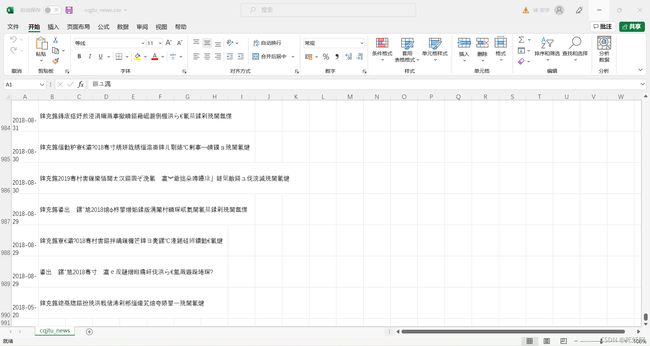

3.查看生成文件NYOJ_Subjects.csv(excel打开的)
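The listing script above walks the pages from 66 down to 1 and treats page 66 specially, because that page is served at `xxtz.htm` rather than under a numbered path. The rule can be isolated as a small sketch. The names `page_url`, `newest_page`, and `clean_text` are hypothetical, and the value 66 is simply the number used in the script, so it is an assumption that it still matches the site's page count.

```python
BASE = "http://news.cqjtu.edu.cn"


def page_url(page_num, newest_page=66):
    """Build the listing URL for one page, following the rule in the script above."""
    if page_num == newest_page:
        # The newest page has no number in its path.
        return f"{BASE}/xxtz.htm"
    return f"{BASE}/xxtz/{page_num}.htm"


def clean_text(text):
    """Remove leading and trailing newlines and spaces, as the script does for dates."""
    return text.strip("\n ")


if __name__ == "__main__":
    for n in (66, 65, 1):
        print(n, page_url(n))
```

Keeping the URL rule in one function makes it easier to adjust `newest_page` when the site adds more pages.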

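(2) Scraping all information notices from the Chongqing Jiaotong University news site

1. Scrape the publication date and title of every information notice from recent years on the Chongqing Jiaotong University news site (http://news.cqjtu.edu.cn/xxtz.htm)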

import requests

from bs4 import BeautifulSoup

import csv

from tqdm import tqdm

# 模拟浏览器访问

Headers = 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.25 Safari/537.36 Core/1.70.3741.400 QQBrowser/10.5.3863.400'

# 表头

csvHeaders = ['题号', '难度', '标题', '通过率', '通过数/总提交数']

# 题目数据

subjects = []

# 爬取题目

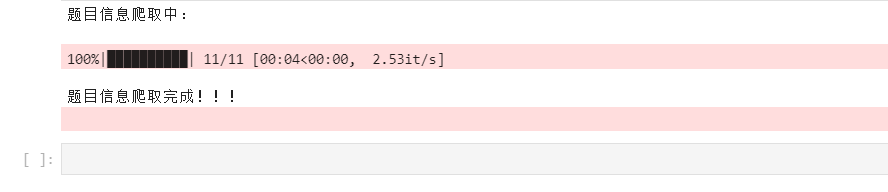

print('题目信息爬取中:\n')

for pages in tqdm(range(1, 11 + 1)):

r = requests.get(f'http://www.51mxd.cn/problemset.php-page={pages}.htm', Headers)

r.raise_for_status()

r.encoding = 'utf-8'

soup = BeautifulSoup(r.text, 'html5lib')

td = soup.find_all('td')

subject = []

for t in td:

if t.string is not None:

subject.append(t.string)

if len(subject) == 5:

subjects.append(subject)

subject = []

# 存放题目

with open('NYOJ_Subjects.csv', 'w', newline='') as file:

fileWriter = csv.writer(file)

fileWriter.writerow(csvHeaders)

fileWriter.writerows(subjects)

print('\n题目信息爬取完成!!!')
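The inner loop of the script above turns a flat sequence of table cells into rows of five columns, skipping cells whose text is `None`. That grouping step can be sketched on its own without any network access. The function name `group_cells` and the sample values are hypothetical.

```python
def group_cells(cells, width=5):
    """Group a flat list of cell strings into rows of `width`, skipping None cells.

    A trailing partial row is discarded, as in the script above.
    """
    rows = []
    row = []
    for cell in cells:
        if cell is None:
            continue
        row.append(cell)
        if len(row) == width:
            rows.append(row)
            row = []
    return rows


if __name__ == "__main__":
    # Made-up sample cells, not real data from the site.
    sample = ["1", "easy", "A+B", "50%", "5/10", None,
              "2", "hard", "Sort", "10%", "1/10", "leftover"]
    for r in group_cells(sample):
        print(r)
```

This approach assumes every table row on the page contributes exactly five non-empty cells; if the page layout differs, the rows would shift.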

2. Run the script

3. Open the generated file test.csv in Excel; the text appears garbled

4. Open test.csv in Notepad; there the text displays correctly

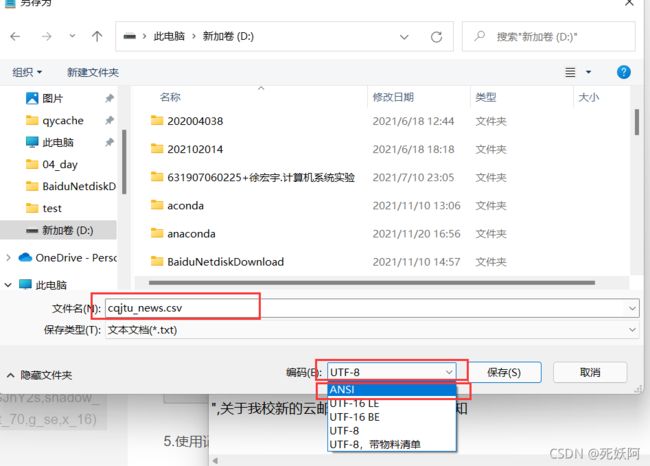

5. In Notepad, use Save As to convert the file from UTF-8 to ANSI encoding, then click Save, as shown below

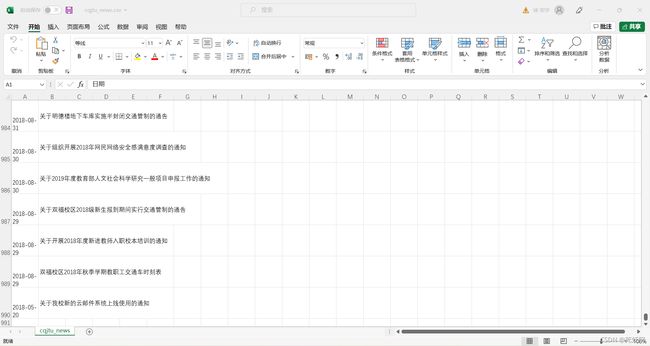

6. Open test.csv in Excel again

The scrape has now succeeded!
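The manual re-save in Notepad can often be avoided by writing the CSV with a byte order mark, which Excel generally uses to recognize UTF-8. The following is a minimal sketch of that alternative, not the method used in the steps above; the function name `write_csv_for_excel` is hypothetical.

```python
import csv


def write_csv_for_excel(path, header, rows):
    """Write rows to a CSV file encoded as UTF-8 with a BOM ("utf-8-sig")."""
    # newline="" lets the csv module control line endings itself.
    with open(path, "w", newline="", encoding="utf-8-sig") as f:
        writer = csv.writer(f)
        writer.writerow(header)
        writer.writerows(rows)
```

For example, calling `write_csv_for_excel("cqjtu_news.csv", cqjtu_head, cqjtu_infomation)` in place of the `with open(...)` block of the news script would be one way to apply it.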

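III. Summary

When scraping web pages, first study the page source and understand its structure. With that in place, a web crawler lets us collect the useful information quickly, which is very convenient.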
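As a rough illustration of studying a page's structure before writing selectors, the sketch below counts which tag and class combinations occur in a piece of HTML, using only the standard library. The class name `TagCounter`, the function `count_tags`, and the sample markup are all hypothetical; the sample is not copied from either site.

```python
from collections import Counter
from html.parser import HTMLParser


class TagCounter(HTMLParser):
    """Count (tag, class attribute) pairs seen in start tags."""

    def __init__(self):
        super().__init__()
        self.counts = Counter()

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs; class is None when absent.
        css_class = dict(attrs).get("class")
        self.counts[(tag, css_class)] += 1


def count_tags(html):
    """Return a Counter of (tag, class) pairs for the given HTML text."""
    parser = TagCounter()
    parser.feed(html)
    return parser.counts


if __name__ == "__main__":
    sample = ('<div class="time">2021-11-20</div>'
              '<a target="_blank" href="#">Notice</a>'
              '<div class="time">2021-11-19</div>')
    for key, n in count_tags(sample).most_common():
        print(key, n)
```

A combination that appears once per list entry, such as a `div` with a date-like class, is a reasonable candidate for a `find_all` selector.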

IV. Reference links

Scraping school notice information with a crawler (Python)