爬虫——大数据

1. 提取本地HTML中的数据

1. 新建index.html文件

Title

欢迎来到Python

小乔

小乔- 大乔

- 嫦娥

- 李白

- 上官婉儿

- 孙策

- 百里守约

- 百里玄策

这是div标签

被动:小乔释放技能命中敌人时,会增加25%移动速度,持续2秒

点击跳转百度

2. 读取HTML文件

需要安装lxml,在PyCharm中的Terminal中使用pip install lxml即可安装

from lxml import html

# 读取html文件

with open('./index.html','r',encoding='utf-8') as f:

html_data = f.read()

# 解析html文件,获得selector对象

selector = html.fromstring(html_data)

3. 使用xpath语法进行读取

常用节点选择工具

Chrome插件XPath Helper(下载crx扩展程序进行安装)

- 节点选择语法:

Xpath使用路径表达式来选取XML文档中的节点或者节点集。

- 表达式:描述

- nodename:选取此节点的所有子节点。

- /:从根节点选取。

- //:从匹配选择的当前节点选择文档中的节点,而不考虑它们的位置。

- .:选取当前节点

- ..:选取当前节点的父节点

- @:选取属性

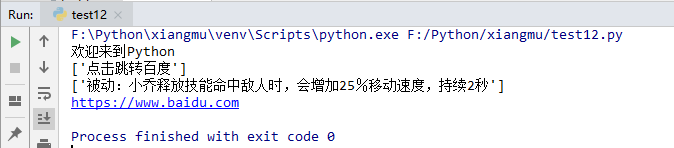

h1 = selector.xpath('/html/body/h1/text()')

print(h1[0])

# //可以代表从任意位置出发、

# 标签1[@属性=属性值]/标签2[@属性=属性值].../text()

a = selector.xpath('//div[@id="container"]/a/text()')

print(a)

#获取p标签的内容

b = selector.xpath('//div[@id="container"]/p/text()')

print(b)

# 获取属性

link = selector.xpath('//div[@id="container"]/a/@href')

print(link[0])

2. requests

需要安装requests,在PyCharm中的Terminal中使用pip install requests即可安装

1. 基本GET请求(headers参数和parmas参数)

response = requests.get(url)

- response的常用方法:

- response.text——获取str类型的响应

- response.content——获取bytes类型的响应

- response.status_code——获取状态码

- response.headers——获取响应头

- response.request——获取相应对应的请求

最基本的GET请求可以直接用get方法

- response.text和response.content的区别

- response.text

类型:str

解码类型:根据HTTP头部对应的编码作出有根据的推测,推测的文本编码

如何修改编码方式:response.encoding="gbk" - response.content

类型:bytes

解码类型:没有指定

如何修改编码方式:response.encoding="utf8"

- 添加header和查询参数

为什么请求需要带上header?

模拟浏览器,欺骗服务器,获取和浏览器一致的内容。headers的形式:字典 - 发送带参数的请求

什么叫做请求参数:

eg1:http://www/webkaka.com/tutorial/server/2015/021013/——不是

eg2:https://www/baidu.com/s?wd=python&c=b——是

# requests

# 导入

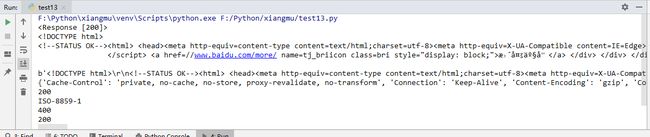

import requests

url = 'https://www.baidu.com'

# url = 'https://www.taobao.com'

# url = 'http://www.dangdang.com'

response = requests.get(url)

print(response)

# # 获取str类型的响应

print(response.text)

# 获取bytes类型的响应

print(response.content)

# 获取响应头

print(response.headers)

# 获取状态码

print(response.status_code)

print(response.encoding)

# 200 ok 404 500

# 没有添加头的知乎网站

resp = requests.get('https://www.zhihu.com/signup?next=%2F')

print(resp.status_code)

# 使用字典定义请求头

headers = {"User-Agent":"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/75.0.3770.142 Safari/537.36"}

resp = requests.get('https://www.zhihu.com/',headers = headers)

print(resp.status_code)

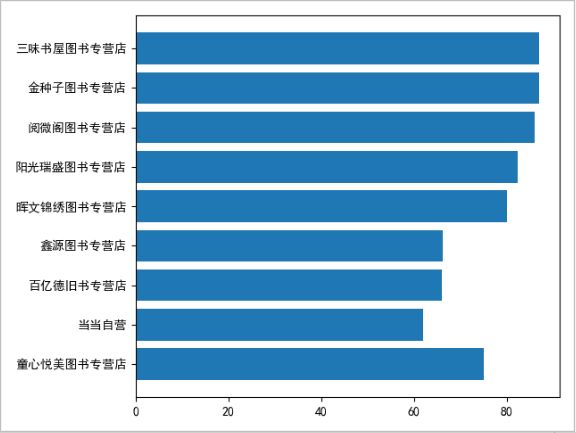

3. 爬虫当当网

1.步骤

- 读取'http://search.dangdang.com/?key={}&act=input',获取站点str类型的响应

- 将html页面写入本地的dangdang.html

- 提取目标站的信息

import requests

from lxml import html

import pandas as pd

from matplotlib import pyplot as plt

plt.rcParams["font.sans-serif"] = ['SimHei']

plt.rcParams['axes.unicode_minus'] = False

def spider_dangdang(isbn):

book_list = []

# 目标站点地址

url = 'http://search.dangdang.com/?key={}&act=input'.format(isbn)

# print(url)

# 获取站点str类型的响应

headers = {"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/75.0.3770.142 Safari/537.36"}

resp = requests.get(url, headers=headers)

html_data = resp.text

# 将html页面写入本地

# with open('dangdang.html', 'w', encoding='utf-8') as f:

# f.write(html_data)

# 提取目标站的信息

selector = html.fromstring(html_data)

ul_list = selector.xpath('//div[@id="search_nature_rg"]/ul/li')

print('您好,共有{}家店铺售卖此图书'.format(len(ul_list)))

# 遍历 ul_list

for li in ul_list:

# 图书名称

title = li.xpath('./a/@title')[0].strip()

# print(title)

# 图书购买链接

link = li.xpath('a/@href')[0]

# print(link)

# 图书价格

price = li.xpath('./p[@class="price"]/span[@class="search_now_price"]/text()')[0]

price = float(price.replace('¥',''))

# print(price)

# 图书卖家名称

store = li.xpath('./p[@class="search_shangjia"]/a/text()')

# if len(store) == 0:

# store = '当当自营'

# else:

# store = store[0]

store = '当当自营' if len(store) == 0 else store[0]

# print(store)

# 添加每一个商家的图书信息

book_list.append({

'title':title,

'price':price,

'link':link,

'store':store

})

# 按照价格进行排序

book_list.sort(key=lambda x:x['price'])

# 遍历booklist

for book in book_list:

print(book)

# 展示价格最低的前10家 柱状图

# 店铺的名称

top10_store = [book_list[i] for i in range(10)]

# x = []

# for store in top10_store:

# x.append(store['store'])

x = [x['store'] for x in top10_store]

print(x)

# 图书的价格

y = [x['price'] for x in top10_store]

print(y)

# plt.bar(x, y)

plt.barh(x, y)

plt.show()

# 存储成csv文件

df = pd.DataFrame(book_list)

df.to_csv('dangdang.csv')

spider_dangdang('9787115428028')

4. 重庆影讯

import requests

from lxml import html

import pandas as pd

from matplotlib import pyplot as plt

from wordcloud import WordCloud

plt.rcParams["font.sans-serif"] = ['SimHei']

plt.rcParams['axes.unicode_minus'] = False

cinemaList = []

import jieba

from random import randint

import string

def spider_cinema(isbn):

url = 'https://movie.douban.com/cinema/later/?key={}&act=input'.format(isbn)

# print(url)

# 获取站点str类型的响应

headers = {

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/75.0.3770.142 Safari/537.36"}

resp = requests.get(url, headers=headers)

html_data = resp.text

# 将html页面写入本地

# with open('chongqingcinema.html', 'w', encoding='utf-8') as f:

# f.write(html_data)

# 提取目标站的信息

selector = html.fromstring(html_data)

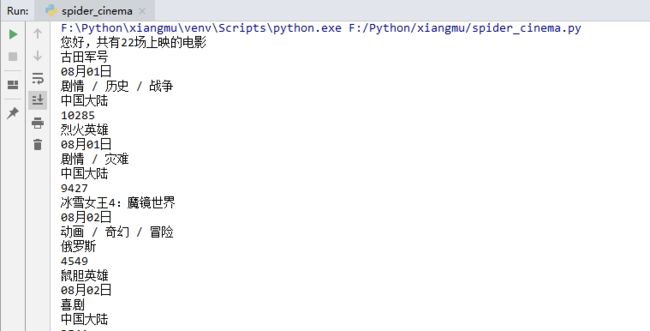

ul_list = selector.xpath('//div[@id="showing-soon"]/div')

print('您好,共有{}场上映的电影'.format(len(ul_list)))

# 遍历电影计算以下

for li in ul_list:

# 电影名

cinemaName = li.xpath('./div/h3/a/text()')[0]

print(cinemaName)

# 上映日期

release_date = li.xpath('./div/ul/li/text()')[0]

print(release_date)

# 类型

cinema_Type = li.xpath('./div/ul/li/text()')[1]

print(cinema_Type)

# 上映国家

release_Country = li.xpath('./div/ul/li/text()')[2]

print(release_Country)

# 想看人数

peoNum = li.xpath('./div/ul/li/span/text()')[0]

peoNum = str(peoNum).replace('人想看','')

peoNum = int(peoNum)

print(peoNum)

# 集合

cinemaList.append({

'cinemaName': cinemaName,

'release_date': release_date,

'cinema_Type': cinema_Type,

'release_Country': release_Country,

'peoNum':peoNum

})

# 根据想看人数排序

cinemaList.sort(key=lambda x: x['peoNum'],reverse=True)

for cinema in cinemaList:

print(cinema)

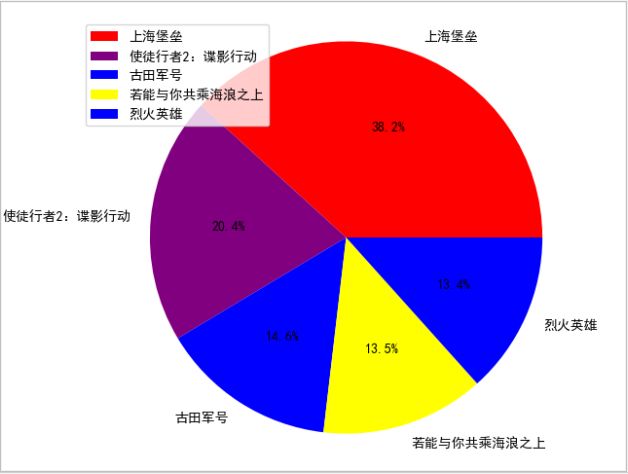

#排名前五的电影饼状图

colors = ['red', 'purple', 'blue', 'yellow','blue']

top5_cinema = [cinemaList[i] for i in range(5)]

countsF = [i['peoNum'] for i in top5_cinema]

labels = [i['cinemaName'] for i in top5_cinema]

# 距离圆心点距离

plt.pie(countsF,labels=labels,autopct='%1.1f%%',colors=colors)

plt.legend(loc=2)

plt.axis('equal')

plt.show()

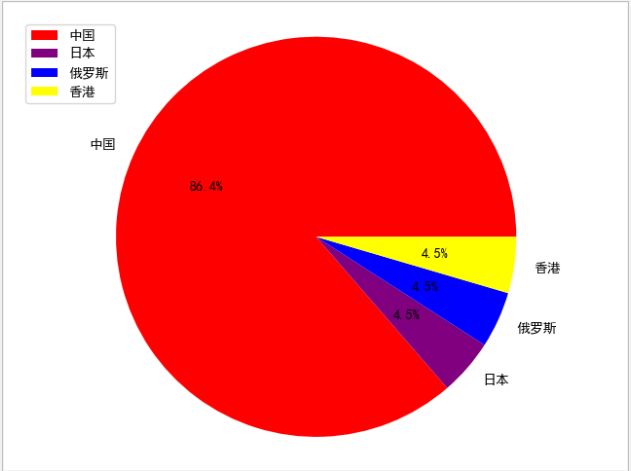

# 绘制即将上映电影国家的占比图(饼图)

counts = {}

coucounts = []

labels = []

total = [x['release_Country'] for x in cinemaList]

text = ''.join(total)

words_list = jieba.lcut(text)

print(words_list)

excludes = {"大陆"}

for word in words_list:

if len(word) <= 1:

continue

else:

counts[word] = counts.get(word, 0) + 1

print(counts)

for word in excludes:

del counts[word]

print(counts)

colors = ['red', 'purple', 'blue', 'yellow']

for x,v in counts.items():

print(x,v)

coucounts.append(v)

labels.append(x)

plt.pie(coucounts,labels=labels,autopct='%1.1f%%',colors=colors)

plt.legend(loc=2)

plt.axis('equal')

plt.show()

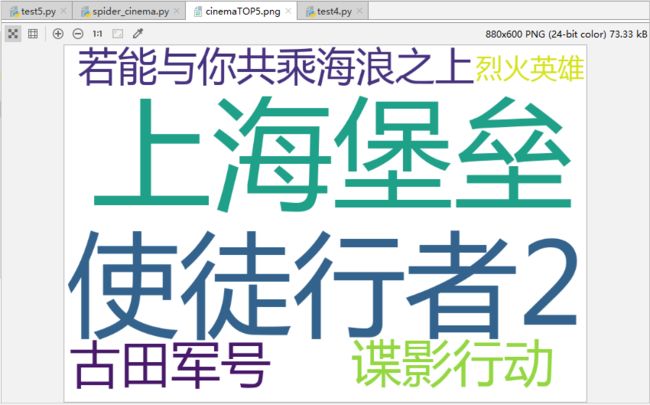

# 绘制top5最想看的电影cinemaTOP5.png

top5_cinema = [cinemaList[i] for i in range(5)]

x = [x['cinemaName'] for x in top5_cinema]

li = []

for i in x:

li.append(i)

text = ' '.join(li)

WordCloud(

font_path='msyh.ttc',

background_color='white',

width=880,

height=600,

# 两个相邻重复词之间的匹配

collocations=False

).generate(text).to_file('cinemaTOP5.png')

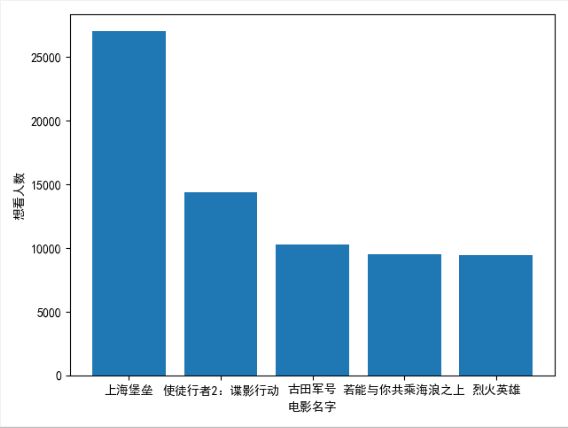

# 绘制top5最想看的电影柱状图

top5_cinema = [cinemaList[i] for i in range(5)]

x = [x['cinemaName'] for x in top5_cinema]

y = [y['peoNum'] for y in top5_cinema]

print(x)

print(y)

plt.xlabel('电影名字')

plt.ylabel('想看人数')

plt.bar(x,y)

plt.show()

spider_cinema('chongqing')

小乔

小乔