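PyTorch (on Colab): Cats vs. Dogs with VGG-Based Transfer Learning

Introduction

This experiment tackles the Dogs vs. Cats competition that Kaggle hosted in 2013: decide whether an input image shows a cat or a dog. It uses a VGG network pretrained on ImageNet. Because the original network outputs 1000 classes, we apply transfer learning and fine-tune it: the earlier layers are frozen and used as a feature extractor, and only the end of the classifier is changed (a new linear layer, which is the only part that is trained, followed by a LogSoftmax layer). The rough steps are: download the competition test set (2000 images), run the fine-tuned VGG model on it, write the predictions in the format the competition requires, and upload the result for scoring.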

https://god.yanxishe.com/41

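Downloading the dataset

The final goal is to classify the 2000 test images of the Yanxishe competition. To keep the experiment closer to realistic conditions, both our training set (20000 images) and our validation set (2000 images) come from the dataset provided on that site.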

https://static.leiphone.com/cat_dog.rar

Preparing the dataset directory layout

To make the dataset fit the existing code, we arrange the dataset directory in the following form:
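The code later in this post reads `./cat_dog/train`, `./cat_dog/valid` and `./cat_dog/test` with `datasets.ImageFolder`, which expects one subfolder per class, and it looks up test images under `test/raw_random/`. A minimal sketch for checking such a layout before training might look like this. The class folder names `cat` and `dog` are an assumption (the post only shows that cats map to 0 and dogs to 1), and `check_layout` is a hypothetical helper, not part of the original code.

```python
import os

# Assumed layout (the folder names "cat" and "dog" are illustrative):
# cat_dog/
#   train/cat/*.jpg   train/dog/*.jpg
#   valid/cat/*.jpg   valid/dog/*.jpg
#   test/raw_random/*.jpg   (unlabeled images in a single folder)
EXPECTED = {
    "train": ["cat", "dog"],
    "valid": ["cat", "dog"],
    "test": ["raw_random"],
}

def check_layout(root, expected=EXPECTED):
    """Return the expected subfolders that are missing under root."""
    missing = []
    for split, subfolders in expected.items():
        for name in subfolders:
            path = os.path.join(root, split, name)
            if not os.path.isdir(path):
                missing.append(path)
    return missing
```

Calling `check_layout('./cat_dog')` after extracting the archive returns an empty list when every expected folder is present.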

Running the PyTorch program on Colab

Colab runs a program cell by cell, so the code is presented here in the same segments.

import numpy as np
import matplotlib.pyplot as plt
import os
import torch
import torch.nn as nn
import torchvision
from torchvision import models, transforms, datasets
import time
import json

# Check whether a GPU device is available
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
print('Using gpu: %s ' % torch.cuda.is_available())

Extracting the dataset

Upload the dataset to Google Drive, then mount the Drive in Colab.

! unzip drive/MyDrive/app/cat_dog

Preprocessing the images

normalize = transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

vgg_format = transforms.Compose([
    transforms.CenterCrop(224),
    transforms.ToTensor(),
    normalize,
])

data_dir = './cat_dog'
data_test_dir = './cat_dog/test'

dsets = {x: datasets.ImageFolder(os.path.join(data_dir, x), vgg_format)
         for x in ['train', 'valid', 'test']}
dsets_test = {'test': datasets.ImageFolder(data_test_dir, vgg_format)}
dset_sizes = {x: len(dsets[x]) for x in ['train', 'valid', 'test']}
dset_classes = dsets['train'].classes

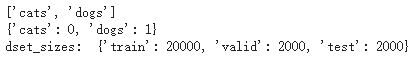

print(dsets['train'].classes)
print(dsets['train'].class_to_idx)
print('dset_sizes: ', dset_sizes)

loader_train = torch.utils.data.DataLoader(dsets['train'], batch_size=128, shuffle=True, num_workers=6)
loader_valid = torch.utils.data.DataLoader(dsets['valid'], batch_size=8, shuffle=False, num_workers=6)
loader_test = torch.utils.data.DataLoader(dsets_test['test'], batch_size=1, shuffle=False, num_workers=0)

In the dataset labels, cats are represented by 0 and dogs by 1.

The output shows the number of images in each dataset:

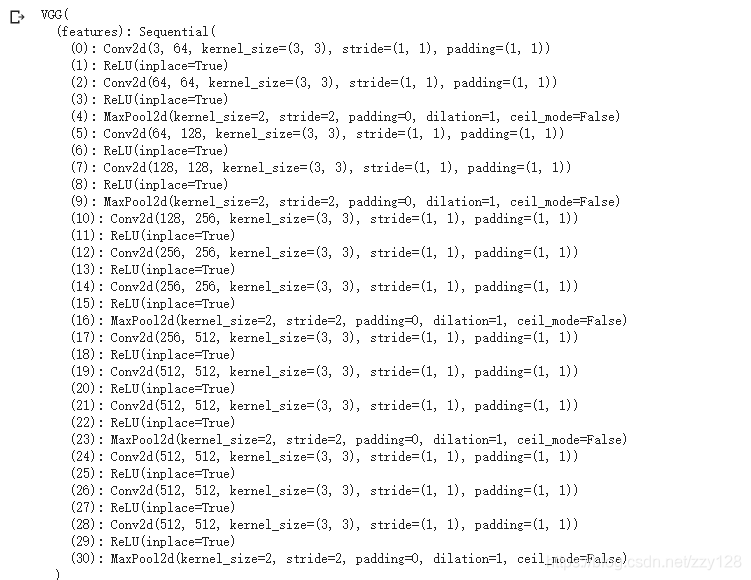
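How images get matched to labels follows from how `ImageFolder` works: it collects the class subfolder names, sorts them, and numbers them in that order, and every image then receives the index of the folder it sits in. The sketch below mimics that rule in plain Python so it runs without a dataset. `class_to_idx_from_names` is a hypothetical helper, and the folder names are assumptions.

```python
def class_to_idx_from_names(folder_names):
    """Mimic ImageFolder: sort class folder names, then number them from 0."""
    classes = sorted(folder_names)
    return {name: idx for idx, name in enumerate(classes)}

# Assumed folder names; "cat" sorts before "dog", so cat -> 0 and dog -> 1.
mapping = class_to_idx_from_names(["dog", "cat"])
print(mapping)  # {'cat': 0, 'dog': 1}
```

This is why the label assignment depends only on the folder names, not on the order in which the files were copied.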

Creating the VGG model

!wget https://s3.amazonaws.com/deep-learning-models/image-models/imagenet_class_index.json

model_vgg = models.vgg16(pretrained=True)
print(model_vgg)

# Replace the last layer and freeze the parameters of the earlier layers
model_vgg_new = model_vgg
for param in model_vgg_new.parameters():
    param.requires_grad = False
# The new layers are created after freezing, so only they stay trainable
model_vgg_new.classifier._modules['6'] = nn.Linear(4096, 2)
model_vgg_new.classifier._modules['7'] = torch.nn.LogSoftmax(dim=1)
model_vgg_new = model_vgg_new.to(device)
print(model_vgg_new.classifier)
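To see what freezing does without downloading the VGG weights, the following sketch applies the same freeze-then-replace pattern to a small stand-in network. The tiny `nn.Sequential` model and its layer sizes are assumptions chosen for illustration; only the pattern mirrors the code above.

```python
import torch.nn as nn

# Small stand-in for a pretrained network (sizes are illustrative only).
model = nn.Sequential(
    nn.Linear(16, 8),
    nn.ReLU(),
    nn.Linear(8, 4),  # plays the role of the original output layer
)

# Freeze every existing parameter.
for param in model.parameters():
    param.requires_grad = False

# Replace the last layer; a newly created layer is trainable by default.
model[2] = nn.Linear(8, 2)

trainable = sum(p.numel() for p in model.parameters() if p.requires_grad)
frozen = sum(p.numel() for p in model.parameters() if not p.requires_grad)
print("trainable:", trainable, "frozen:", frozen)
```

Counting parameters this way is a quick check that only the replaced layer will receive gradient updates.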

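Training the model

'''
Step 1: create the loss function and the optimizer.
The input to the loss function NLLLoss() is a vector of log-probabilities and a target label.
It does not compute the log-probabilities for us, so it suits networks whose last layer is log_softmax().
'''
criterion = nn.NLLLoss()

# Learning rate
lr = 0.001

# Adam optimizer; only the new last linear layer is updated
optimizer_vgg = torch.optim.Adam(model_vgg_new.classifier[6].parameters(), lr=lr)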
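The relationship between log-softmax and the negative log-likelihood loss can be worked through by hand for a single sample. The sketch below is plain Python rather than PyTorch, the logit values are made up, and `log_softmax` and `nll_loss` are illustrative helpers, not the library functions. Note that `nn.NLLLoss()` additionally averages over the batch by default.

```python
import math

def log_softmax(logits):
    """Numerically stable log-softmax of a list of raw scores."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_sum for x in logits]

def nll_loss(log_probs, target):
    """Negative log-likelihood: minus the log-probability of the target class."""
    return -log_probs[target]

logits = [2.0, 0.5]  # raw scores for [cat, dog] (made-up values)
log_probs = log_softmax(logits)
loss = nll_loss(log_probs, target=0)

# Cross-entropy computed directly from the logits gives the same number.
cross_entropy = -math.log(math.exp(logits[0]) / sum(math.exp(x) for x in logits))
print(round(loss, 4), round(cross_entropy, 4))
```

The two printed values agree, which is why a LogSoftmax output layer paired with NLLLoss behaves like cross-entropy on raw scores.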

'''
Step 2: train the model.
'''
def train_model(model, dataloader, size, epochs=1, optimizer=None):
    model.train()
    max_acc = 0
    for epoch in range(epochs):
        running_loss = 0.0
        running_corrects = 0
        count = 0
        for inputs, classes in dataloader:
            inputs = inputs.to(device)
            classes = classes.to(device)
            outputs = model(inputs)
            loss = criterion(outputs, classes)
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()
            _, preds = torch.max(outputs.detach(), 1)
            # Statistics: criterion returns the batch mean, so weight it by
            # the batch size before dividing by the dataset size below
            running_loss += loss.item() * inputs.size(0)
            running_corrects += torch.sum(preds == classes).item()
            count += len(inputs)
            print('Training: No. ', count, ' process ... total: ', size)
        epoch_loss = running_loss / size
        epoch_acc = running_corrects / size
        # Save the model whenever the training accuracy improves
        if epoch_acc > max_acc:
            max_acc = epoch_acc
            torch.save(model, 'drive/MyDrive/app/model_max_acc/models' + str(epoch) + str(epoch_acc) + '.pth')
        print('Loss: {:.4f} Acc: {:.4f}'.format(epoch_loss, epoch_acc))

# Train the model (the summary reports 30 epochs; raise epochs to reproduce that)
train_model(model_vgg_new, loader_train, size=dset_sizes['train'], epochs=1,
            optimizer=optimizer_vgg)

Validating the model

# Define the validation routine and run it
def valid_model(model, dataloader, size):
    model.eval()
    predictions = np.zeros(size)
    all_classes = np.zeros(size)
    all_proba = np.zeros((size, 2))  # holds the LogSoftmax outputs (log-probabilities)
    i = 0
    running_loss = 0.0
    running_corrects = 0
    # No gradients are needed during evaluation
    with torch.no_grad():
        for inputs, classes in dataloader:
            inputs = inputs.to(device)
            classes = classes.to(device)
            outputs = model(inputs)
            loss = criterion(outputs, classes)
            _, preds = torch.max(outputs, 1)
            # Statistics (loss weighted by batch size, as in training)
            running_loss += loss.item() * inputs.size(0)
            running_corrects += torch.sum(preds == classes).item()
            predictions[i:i+len(classes)] = preds.to('cpu').numpy()
            all_classes[i:i+len(classes)] = classes.to('cpu').numpy()
            all_proba[i:i+len(classes), :] = outputs.to('cpu').numpy()
            i += len(classes)
            print('Testing: No. ', i, ' process ... total: ', size)
    epoch_loss = running_loss / size
    epoch_acc = running_corrects / size
    print('Loss: {:.4f} Acc: {:.4f}'.format(epoch_loss, epoch_acc))
    return predictions, all_proba, all_classes

predictions, all_proba, all_classes = valid_model(model_vgg_new, loader_valid, size=dset_sizes['valid'])

Testing

# Load the saved model; the file name encodes epoch 29 and training accuracy 0.98285
model_vgg_new = torch.load('drive/MyDrive/app/model_max_acc/models290.98285.pth')
model_vgg_new = model_vgg_new.to(device)

def test_model(model, dataloader, size):
    model.eval()
    i = 0
    all_preds = {}
    with torch.no_grad():
        for inputs, classes in dataloader:
            inputs = inputs.to(device)
            outputs = model(inputs)
            _, preds = torch.max(outputs, 1)
            # batch_size is 1 and shuffle is off, so batch i is image i of the dataset
            key = dsets['test'].imgs[i][0]
            print(key)
            # Store a plain int; formatting the tensor itself would write "tensor(...)" to the CSV
            all_preds[key] = preds[0].item()
            i += 1
            print('Testing: No. ', i, ' process ... total: ', size)
    # Write one "index,label" row per test image; 'w' avoids duplicate rows on a rerun
    with open("./drive/MyDrive/app/test_result/result.csv", 'w') as f:
        for i in range(size):
            f.write("{},{}\n".format(i, all_preds["./cat_dog/test/raw_random/" + str(i) + ".jpg"]))

test_model(model_vgg_new, loader_test, size=dset_sizes['test'])

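Results

On the 2000 test images, the model distinguishes cats from dogs with 98.15% accuracy.

Summary

This post makes small adjustments to the code from the course lab, which raised the final accuracy by 2 percentage points. The main changes are:

1. Replaced SGD (stochastic gradient descent) with the Adam optimizer.

2. Trained for 30 epochs. The best model happened to appear in the very last epoch. Because my machine's compute is limited, I did not increase the number of epochs further; interested readers can try it themselves.

3. Set the training batch_size to 128.

During the experiment I became aware of the limits of my own understanding. Some concepts are still not fully clear to me, such as how images are matched to their labels. There are also things I wanted to implement but could not, such as using a larger learning rate early in training to speed it up and a smaller one later on. Criticism and corrections for any errors or possible improvements in this post are welcome.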
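For the learning-rate idea mentioned above (larger early, smaller later), PyTorch ships schedulers in `torch.optim.lr_scheduler`. The following is a minimal sketch using `StepLR`, not something that was run for this experiment: the stand-in model, the step size of 10 epochs, and the decay factor 0.1 are all assumptions chosen for illustration.

```python
import torch
import torch.nn as nn

model = nn.Linear(4, 2)  # stand-in for the trainable classifier layer
optimizer = torch.optim.Adam(model.parameters(), lr=0.001)
# Multiply the learning rate by 0.1 every 10 epochs (illustrative values).
scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=10, gamma=0.1)

lrs = []
for epoch in range(30):
    lrs.append(optimizer.param_groups[0]["lr"])
    # One dummy training step per epoch; a real loop would iterate over batches.
    loss = model(torch.randn(8, 4)).sum()
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
    scheduler.step()  # advance the schedule once per epoch

print(lrs[0], lrs[10], lrs[20])
```

In `train_model`, the equivalent change would be a single `scheduler.step()` call at the end of each epoch, after the inner loop over batches.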