[18] Deep Learning with PyTorch: Learning Rate Scheduling Strategies

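0. Previous posts

[1] Deep Learning with PyTorch: Tensor Definition and Creation

[2] Deep Learning with PyTorch: Tensor Operations (Concatenation, Splitting, Indexing, Transformation)

[3] Deep Learning with PyTorch: Tensor Math Operations

[4] Deep Learning with PyTorch: Linear Regression

[5] Deep Learning with PyTorch: Computational Graphs and the Dynamic Graph Mechanism

[6] Deep Learning with PyTorch: autograd and Logistic Regression

[7] Deep Learning with PyTorch: DataLoader and Dataset (with an RMB binary classification example)

[8] Deep Learning with PyTorch: Image Preprocessing with transforms

[9] Deep Learning with PyTorch: Image Augmentation with transforms (Cropping, Flipping, Rotation)

[10] Deep Learning with PyTorch: transforms Image Operations and Custom Methods

[11] Deep Learning with PyTorch: Model Creation and nn.Module

[12] Deep Learning with PyTorch: Model Containers and Building AlexNet

[13] Deep Learning with PyTorch: Convolution Layers (1D/2D/3D Convolution, nn.Conv2d, Transposed Convolution nn.ConvTranspose)

[14] Deep Learning with PyTorch: Pooling Layers, Linear Layers, Activation Layers

[15] Deep Learning with PyTorch: Weight Initialization

[16] Deep Learning with PyTorch: 18 Loss Functions

[17] Deep Learning with PyTorch: Optimizers

[18] Deep Learning with PyTorch: Learning Rate Scheduling Strategies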

Deep Learning with PyTorch: Learning Rate Scheduling Strategies

- 0. Previous posts
- 1. Learning rate adjustment
- 2. class _LRScheduler
- 3. Six learning rate scheduling strategies
  - 3.1 optim.lr_scheduler.StepLR(optimizer, step_size, gamma=0.1, last_epoch=-1)
  - 3.2 optim.lr_scheduler.MultiStepLR(optimizer, milestones, gamma=0.1, last_epoch=-1)
  - 3.3 optim.lr_scheduler.ExponentialLR(optimizer, gamma, last_epoch=-1)
  - 3.4 optim.lr_scheduler.CosineAnnealingLR(optimizer, T_max, eta_min=0, last_epoch=-1)
  - 3.5 optim.lr_scheduler.ReduceLROnPlateau(optimizer, mode='min', factor=0.1, patience=10, threshold=0.0001, threshold_mode='rel', cooldown=0, min_lr=0, eps=1e-08)
  - 3.6 optim.lr_scheduler.LambdaLR(optimizer, lr_lambda, last_epoch=-1)
- 4. Summary of learning rate scheduling strategies
- 5. Complete code

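1. Learning rate adjustment

2. class _LRScheduler

In this tutorial the learning rate is adjusted once per epoch: scheduler.step() is called after all iterations of an epoch have finished, not after every batch.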
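To make the per-epoch update concrete, here is a minimal pure-Python sketch of the pattern a scheduler follows. It is not PyTorch's _LRScheduler implementation: ToyOptimizer and ToyStepScheduler are hypothetical stand-ins written for illustration, and only the names base_lrs, last_epoch, step() and get_last_lr() are borrowed from the real class.

```python
class ToyOptimizer:
    """Hypothetical stand-in for an optimizer: it only stores parameter groups."""

    def __init__(self, lr):
        self.param_groups = [{"lr": lr}]


class ToyStepScheduler:
    """Simplified scheduler: multiply the LR by gamma every step_size epochs."""

    def __init__(self, optimizer, step_size, gamma=0.1):
        self.optimizer = optimizer
        self.step_size = step_size
        self.gamma = gamma
        # Remember the initial LR of every parameter group.
        self.base_lrs = [group["lr"] for group in optimizer.param_groups]
        self.last_epoch = 0

    def get_lr(self):
        # Closed-form LR for the current epoch counter.
        factor = self.gamma ** (self.last_epoch // self.step_size)
        return [base_lr * factor for base_lr in self.base_lrs]

    def step(self):
        # Called once per epoch: advance the counter and write the new LR
        # back into the optimizer's parameter groups.
        self.last_epoch += 1
        for group, lr in zip(self.optimizer.param_groups, self.get_lr()):
            group["lr"] = lr

    def get_last_lr(self):
        # LR values currently stored in the optimizer.
        return [group["lr"] for group in self.optimizer.param_groups]


optimizer = ToyOptimizer(lr=0.1)
scheduler = ToyStepScheduler(optimizer, step_size=2, gamma=0.5)

history = []
for epoch in range(6):
    history.append(scheduler.get_last_lr()[0])  # LR used during this epoch
    # ... the iterations of one epoch would run here ...
    scheduler.step()                            # one scheduler update per epoch

print(history)
```

The scheduler never touches the model weights. It only rewrites the lr entry of each parameter group, which the optimizer then reads on its next update.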

3. Six learning rate scheduling strategies

3.1 optim.lr_scheduler.StepLR(optimizer, step_size, gamma=0.1, last_epoch=-1)

optim.lr_scheduler.StepLR(optimizer, step_size, gamma=0.1, last_epoch=-1, verbose=False)

Code example:

    import torch
    import torch.optim as optim
    import matplotlib.pyplot as plt

    LR = 0.1
    iteration = 10
    max_epoch = 200

    # ------------------------------ fake data and optimizer ------------------------------
    weights = torch.randn((1), requires_grad=True)
    target = torch.zeros((1))
    optimizer = optim.SGD([weights], lr=LR, momentum=0.9)

    # ------------------------------ 1 Step LR ------------------------------
    flag = 0
    # flag = 1
    if flag:
        scheduler_lr = optim.lr_scheduler.StepLR(optimizer, step_size=50, gamma=0.1)  # set the LR decay policy

        lr_list, epoch_list = list(), list()
        for epoch in range(max_epoch):
            # get_last_lr() returns the LR currently in effect; calling get_lr() directly is deprecated
            lr_list.append(scheduler_lr.get_last_lr())
            epoch_list.append(epoch)

            for i in range(iteration):
                loss = torch.pow((weights - target), 2)
                loss.backward()

                optimizer.step()
                optimizer.zero_grad()

            scheduler_lr.step()  # update the learning rate once per epoch

        plt.plot(epoch_list, lr_list, label="Step LR Scheduler")
        plt.xlabel("Epoch")
        plt.ylabel("Learning rate")
        plt.legend()
        plt.show()

Official example:

    # Assuming optimizer uses lr = 0.05 for all groups
    # lr = 0.05     if epoch < 30
    # lr = 0.005    if 30 <= epoch < 60
    # lr = 0.0005   if 60 <= epoch < 90
    # ...
    scheduler = StepLR(optimizer, step_size=30, gamma=0.1)
    for epoch in range(100):
        train(...)
        validate(...)
        scheduler.step()
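The schedule that StepLR produces can also be written in closed form. The sketch below is plain Python rather than PyTorch code, and step_lr is a hypothetical helper name. It reproduces the learning rates listed in the comments of the official example, assuming the scheduler is stepped exactly once per epoch.

```python
def step_lr(base_lr, epoch, step_size, gamma=0.1):
    """Closed-form StepLR value: decay by gamma once every step_size epochs."""
    return base_lr * gamma ** (epoch // step_size)


# Settings from the official example: lr = 0.05, step_size = 30, gamma = 0.1
for epoch in (0, 29, 30, 59, 60, 89):
    print(epoch, step_lr(0.05, epoch, step_size=30))
```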

3.2 optim.lr_scheduler.MultiStepLR(optimizer, milestones, gamma=0.1, last_epoch=-1)

optim.lr_scheduler.MultiStepLR(optimizer, milestones, gamma=0.1, last_epoch=-1, verbose=False)

Code example:

    import torch
    import torch.optim as optim
    import matplotlib.pyplot as plt

    LR = 0.1
    iteration = 10
    max_epoch = 200

    # ------------------------------ fake data and optimizer ------------------------------
    weights = torch.randn((1), requires_grad=True)
    target = torch.zeros((1))
    optimizer = optim.SGD([weights], lr=LR, momentum=0.9)

    # ------------------------------ 2 Multi Step LR ------------------------------
    flag = 0
    # flag = 1
    if flag:
        milestones = [50, 125, 160]  # epochs at which the LR is multiplied by gamma
        scheduler_lr = optim.lr_scheduler.MultiStepLR(optimizer, milestones=milestones, gamma=0.1)

        lr_list, epoch_list = list(), list()
        for epoch in range(max_epoch):
            # get_last_lr() returns the LR currently in effect; calling get_lr() directly is deprecated
            lr_list.append(scheduler_lr.get_last_lr())
            epoch_list.append(epoch)

            for i in range(iteration):
                loss = torch.pow((weights - target), 2)
                loss.backward()

                optimizer.step()
                optimizer.zero_grad()

            scheduler_lr.step()

        plt.plot(epoch_list, lr_list, label="Multi Step LR Scheduler\nmilestones:{}".format(milestones))
        plt.xlabel("Epoch")
        plt.ylabel("Learning rate")
        plt.legend()
        plt.show()

Official example:

    # Assuming optimizer uses lr = 0.05 for all groups
    # lr = 0.05     if epoch < 30
    # lr = 0.005    if 30 <= epoch < 80
    # lr = 0.0005   if epoch >= 80
    scheduler = MultiStepLR(optimizer, milestones=[30,80], gamma=0.1)
    for epoch in range(100):
        train(...)
        validate(...)
        scheduler.step()
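MultiStepLR differs from StepLR only in that the decay points are listed explicitly. As a rough closed-form sketch in plain Python (multi_step_lr is a hypothetical helper, not a PyTorch function), the learning rate is the base value times gamma raised to the number of milestones already reached:

```python
from bisect import bisect_right


def multi_step_lr(base_lr, epoch, milestones, gamma=0.1):
    """Closed-form MultiStepLR value: one decay per milestone already reached."""
    # bisect_right counts how many (sorted) milestones are <= epoch.
    return base_lr * gamma ** bisect_right(sorted(milestones), epoch)


# Settings from the official example: lr = 0.05, milestones = [30, 80]
for epoch in (0, 29, 30, 79, 80, 99):
    print(epoch, multi_step_lr(0.05, epoch, milestones=[30, 80]))
```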

3.3 optim.lr_scheduler.ExponentialLR(optimizer, gamma, last_epoch=-1)

optim.lr_scheduler.ExponentialLR(optimizer, gamma, last_epoch=-1, verbose=False)

Code example:

    # Reuses the imports, LR, iteration, max_epoch, weights, target and optimizer defined in the StepLR example.
    # ------------------------------ 3 Exponential LR ------------------------------
    flag = 0
    # flag = 1
    if flag:
        gamma = 0.95  # the LR is multiplied by gamma after every epoch
        scheduler_lr = optim.lr_scheduler.ExponentialLR(optimizer, gamma=gamma)

        lr_list, epoch_list = list(), list()
        for epoch in range(max_epoch):
            # get_last_lr() returns the LR currently in effect; calling get_lr() directly is deprecated
            lr_list.append(scheduler_lr.get_last_lr())
            epoch_list.append(epoch)

            for i in range(iteration):
                loss = torch.pow((weights - target), 2)
                loss.backward()

                optimizer.step()
                optimizer.zero_grad()

            scheduler_lr.step()

        plt.plot(epoch_list, lr_list, label="Exponential LR Scheduler\ngamma:{}".format(gamma))
        plt.xlabel("Epoch")
        plt.ylabel("Learning rate")
        plt.legend()
        plt.show()
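For intuition about how fast a given gamma decays, the exponential schedule can be evaluated without PyTorch. The sketch below assumes one scheduler step per epoch. Both helper names are hypothetical.

```python
import math


def exponential_lr(base_lr, epoch, gamma):
    """Closed-form ExponentialLR value: base_lr * gamma ** epoch."""
    return base_lr * gamma ** epoch


def epochs_to_halve(gamma):
    """Number of epochs after which the LR has dropped to half (0 < gamma < 1)."""
    return math.log(0.5) / math.log(gamma)


print(exponential_lr(0.1, 10, gamma=0.95))  # LR after 10 epochs
print(epochs_to_halve(0.95))                # between 13 and 14 epochs for gamma = 0.95
```

A gamma that looks close to 1 still shrinks the learning rate quickly over a few hundred epochs, so it is worth checking the final value against the planned number of epochs.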

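3.4 optim.lr_scheduler.CosineAnnealingLR(optimizer, T_max, eta_min=0, last_epoch=-1)

optim.lr_scheduler.CosineAnnealingLR(optimizer, T_max, eta_min=0, last_epoch=-1, verbose=False)

T_max is the number of epochs over which the learning rate descends from its initial value to eta_min along the cosine curve, i.e. half of a full cosine period.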
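The role of T_max is easiest to see from the closed-form expression of cosine annealing. The sketch below is plain Python, cosine_annealing_lr is a hypothetical helper, and it assumes the scheduler is stepped once per epoch without restarts. With T_max = 50 the learning rate reaches eta_min at epoch 50 and climbs back to the initial value at epoch 100, which is why T_max is half of the full period.

```python
import math


def cosine_annealing_lr(base_lr, epoch, t_max, eta_min=0.0):
    """Closed-form cosine annealing value at a given epoch."""
    return eta_min + (base_lr - eta_min) * (1 + math.cos(math.pi * epoch / t_max)) / 2


# base_lr = 0.1 and T_max = 50, as in the tutorial's code example
for epoch in (0, 25, 50, 75, 100):
    print(epoch, round(cosine_annealing_lr(0.1, epoch, t_max=50), 6))
```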

Code example:

    # Reuses the imports, LR, iteration, max_epoch, weights, target and optimizer defined in the StepLR example.
    # ------------------------------ 4 Cosine Annealing LR ------------------------------
    flag = 0
    # flag = 1
    if flag:
        t_max = 50  # epochs needed to go from the initial LR down to eta_min
        scheduler_lr = optim.lr_scheduler.CosineAnnealingLR(optimizer, T_max=t_max, eta_min=0.)

        lr_list, epoch_list = list(), list()
        for epoch in range(max_epoch):
            # get_last_lr() returns the LR currently in effect; calling get_lr() directly is deprecated
            lr_list.append(scheduler_lr.get_last_lr())
            epoch_list.append(epoch)

            for i in range(iteration):
                loss = torch.pow((weights - target), 2)
                loss.backward()

                optimizer.step()
                optimizer.zero_grad()

            scheduler_lr.step()

        plt.plot(epoch_list, lr_list, label="CosineAnnealingLR Scheduler\nT_max:{}".format(t_max))
        plt.xlabel("Epoch")
        plt.ylabel("Learning rate")
        plt.legend()
        plt.show()

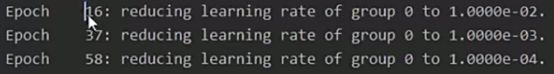

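3.5 optim.lr_scheduler.ReduceLROnPlateau(optimizer, mode='min', factor=0.1, patience=10, threshold=0.0001, threshold_mode='rel', cooldown=0, min_lr=0, eps=1e-08)

optim.lr_scheduler.ReduceLROnPlateau(optimizer, mode='min', factor=0.1, patience=10, threshold=0.0001, threshold_mode='rel', cooldown=0, min_lr=0, eps=1e-08, verbose=False)

![Learning rate scheduling strategies, image 13](http://img.e-com-net.com/image/info8/216acb8a5ba14ca28e455387593af72a.jpg)

(1) When mode is 'min', the learning rate is reduced once the monitored metric stops decreasing (for example, the loss); when mode is 'max', it is reduced once the metric stops increasing (for example, the accuracy).

(2) patience is the number of consecutive epochs without improvement that are tolerated before the learning rate is reduced.

(3) cooldown is the number of epochs to wait after a reduction before monitoring resumes.

(4) The monitored value must be passed in when stepping: scheduler_lr.step(loss_value).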
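How patience and cooldown interact can be traced with a small simulation. The class below is a simplified pure-Python model of the 'min' mode with a relative threshold, written for illustration only. ToyPlateauTracker is a hypothetical name, and details of the real scheduler such as eps, threshold_mode='abs' and per-group min_lr are left out.

```python
class ToyPlateauTracker:
    """Simplified model of plateau detection in 'min' mode (relative threshold)."""

    def __init__(self, lr, factor=0.1, patience=10, threshold=1e-4,
                 cooldown=0, min_lr=0.0):
        self.lr = lr
        self.factor = factor
        self.patience = patience
        self.threshold = threshold
        self.cooldown = cooldown
        self.min_lr = min_lr
        self.best = float("inf")
        self.num_bad_epochs = 0
        self.cooldown_counter = 0

    def step(self, metric):
        # An epoch counts as an improvement only if it beats the best value
        # by more than the relative threshold.
        if metric < self.best * (1 - self.threshold):
            self.best = metric
            self.num_bad_epochs = 0
        else:
            self.num_bad_epochs += 1

        # During cooldown, bad epochs are not counted.
        if self.cooldown_counter > 0:
            self.cooldown_counter -= 1
            self.num_bad_epochs = 0

        # More than `patience` bad epochs in a row: reduce the LR.
        if self.num_bad_epochs > self.patience:
            self.lr = max(self.lr * self.factor, self.min_lr)
            self.cooldown_counter = self.cooldown
            self.num_bad_epochs = 0
        return self.lr


tracker = ToyPlateauTracker(lr=0.1, patience=2, cooldown=1, min_lr=1e-4)
losses = [0.5, 0.4, 0.4, 0.4, 0.4, 0.4, 0.4, 0.4, 0.4]  # improves once, then stalls
lrs = [tracker.step(loss) for loss in losses]
print(lrs)
```

In this trace the first reduction happens on the fifth call, after three stalled epochs in a row (one more than patience). The epoch spent in cooldown is not counted, so the second reduction only happens on the ninth call.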

Code example:

    import torch
    import torch.optim as optim

    LR = 0.1
    iteration = 10
    max_epoch = 200

    # ------------------------------ fake data and optimizer ------------------------------
    weights = torch.randn((1), requires_grad=True)
    target = torch.zeros((1))
    optimizer = optim.SGD([weights], lr=LR, momentum=0.9)

    # ------------------------------ 5 Reduce LR On Plateau ------------------------------
    flag = 0
    # flag = 1
    if flag:
        loss_value = 0.5
        accuracy = 0.9

        factor = 0.1
        mode = "min"
        patience = 10
        cooldown = 10
        min_lr = 1e-4
        verbose = True  # prints a message on each reduction; deprecated in newer PyTorch releases

        scheduler_lr = optim.lr_scheduler.ReduceLROnPlateau(optimizer, factor=factor, mode=mode, patience=patience,
                                                            cooldown=cooldown, min_lr=min_lr, verbose=verbose)

        for epoch in range(max_epoch):
            for i in range(iteration):
                # train(...)
                optimizer.step()
                optimizer.zero_grad()

            if epoch == 5:
                loss_value = 0.4  # simulate a single improvement of the monitored loss

            scheduler_lr.step(loss_value)  # the monitored value must be passed in

Official example:

    optimizer = torch.optim.SGD(model.parameters(), lr=0.1, momentum=0.9)
    scheduler = ReduceLROnPlateau(optimizer, 'min')
    for epoch in range(10):
        train(...)
        val_loss = validate(...)
        # Note that step should be called after validate()
        scheduler.step(val_loss)

3.6 optim.lr_scheduler.LambdaLR(optimizer, lr_lambda, last_epoch=-1)

optim.lr_scheduler.LambdaLR(optimizer, lr_lambda, last_epoch=-1, verbose=False)

Code example:

    # Reuses the imports, iteration and max_epoch defined in the StepLR example.
    # ------------------------------ 6 lambda ------------------------------
    # flag = 0
    flag = 1
    if flag:
        lr_init = 0.1

        weights_1 = torch.randn((6, 3, 5, 5))
        weights_2 = torch.ones((5, 5))

        # Two parameter groups, each driven by its own lambda
        optimizer = optim.SGD([
            {'params': [weights_1]},
            {'params': [weights_2]}], lr=lr_init)

        lambda1 = lambda epoch: 0.1 ** (epoch // 20)  # // is integer division: decay by 0.1 every 20 epochs
        lambda2 = lambda epoch: 0.95 ** epoch

        scheduler = torch.optim.lr_scheduler.LambdaLR(optimizer, lr_lambda=[lambda1, lambda2])

        lr_list, epoch_list = list(), list()
        for epoch in range(max_epoch):
            for i in range(iteration):
                # train(...)
                optimizer.step()
                optimizer.zero_grad()

            scheduler.step()

            # get_last_lr() returns one LR per parameter group; calling get_lr() directly is deprecated
            lr_list.append(scheduler.get_last_lr())
            epoch_list.append(epoch)

            print('epoch:{:5d}, lr:{}'.format(epoch, scheduler.get_last_lr()))

        plt.plot(epoch_list, [i[0] for i in lr_list], label="lambda 1")
        plt.plot(epoch_list, [i[1] for i in lr_list], label="lambda 2")
        plt.xlabel("Epoch")
        plt.ylabel("Learning Rate")
        plt.title("LambdaLR")
        plt.legend()
        plt.show()

Official example:

    # Assuming optimizer has two groups.
    lambda1 = lambda epoch: epoch // 30
    lambda2 = lambda epoch: 0.95 ** epoch
    scheduler = LambdaLR(optimizer, lr_lambda=[lambda1, lambda2])
    for epoch in range(100):
        train(...)
        validate(...)
        scheduler.step()
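With LambdaLR the value returned by each function is a multiplicative factor applied to that group's initial learning rate, not to the previous epoch's value. The plain-Python sketch below illustrates this under the assumption of one scheduler step per epoch. lambda_lr is a hypothetical helper, and the two factor functions are the ones from the tutorial's code example.

```python
def lambda_lr(base_lrs, lr_lambdas, epoch):
    """LR of each parameter group: its base LR times its own factor function."""
    return [base_lr * fn(epoch) for base_lr, fn in zip(base_lrs, lr_lambdas)]


# Step decay for group 1, exponential decay for group 2
lambda1 = lambda epoch: 0.1 ** (epoch // 20)
lambda2 = lambda epoch: 0.95 ** epoch

for epoch in (0, 1, 20, 40):
    print(epoch, lambda_lr([0.1, 0.1], [lambda1, lambda2], epoch))
```

Because the factor multiplies the initial value, a function such as epoch // 30 evaluates to 0 for epochs 0 to 29 and therefore sets that group's learning rate to zero during those epochs.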

4. Summary of learning rate scheduling strategies

5. Complete code

create_scheduler.py

    # -*- coding: utf-8 -*-
    """
    # @file name  : train_lenet.py
    # @brief      : training an RMB banknote classification model
    """
    import os
    import random
    import numpy as np
    import torch
    import torch.nn as nn
    from torch.utils.data import DataLoader
    import torchvision.transforms as transforms
    import torch.optim as optim
    from PIL import Image
    from matplotlib import pyplot as plt
    from model.lenet import LeNet
    from tools.my_dataset import RMBDataset
    import torchvision

    def transform_invert(img_, transform_train):
        """
        Undo the transforms applied to the data
        :param img_: tensor
        :param transform_train: torchvision.transforms
        :return: PIL image
        """
        if 'Normalize' in str(transform_train):
            norm_transform = list(filter(lambda x: isinstance(x, transforms.Normalize), transform_train.transforms))
            mean = torch.tensor(norm_transform[0].mean, dtype=img_.dtype, device=img_.device)
            std = torch.tensor(norm_transform[0].std, dtype=img_.dtype, device=img_.device)
            img_.mul_(std[:, None, None]).add_(mean[:, None, None])

        img_ = img_.transpose(0, 2).transpose(0, 1)  # C*H*W --> H*W*C
        if 'ToTensor' in str(transform_train):
            img_ = np.array(img_) * 255

        if img_.shape[2] == 3:
            img_ = Image.fromarray(img_.astype('uint8')).convert('RGB')
        elif img_.shape[2] == 1:
            img_ = Image.fromarray(img_.astype('uint8').squeeze())
        else:
            raise Exception("Invalid img shape, expected 1 or 3 in axis 2, but got {}!".format(img_.shape[2]))

        return img_

    def set_seed(seed=1):
        random.seed(seed)
        np.random.seed(seed)
        torch.manual_seed(seed)
        torch.cuda.manual_seed(seed)


    set_seed()  # set the random seed
    rmb_label = {"1": 0, "100": 1}

    # hyperparameters
    MAX_EPOCH = 10
    BATCH_SIZE = 16
    LR = 0.01
    log_interval = 10
    val_interval = 1

    # ============================ step 1/5 data ============================

    split_dir = os.path.join("..", "..", "data", "rmb_split")
    train_dir = os.path.join(split_dir, "train")
    valid_dir = os.path.join(split_dir, "valid")

    norm_mean = [0.485, 0.456, 0.406]
    norm_std = [0.229, 0.224, 0.225]

    train_transform = transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.RandomCrop(32, padding=4),
        transforms.RandomGrayscale(p=0.8),
        transforms.ToTensor(),
        transforms.Normalize(norm_mean, norm_std),
    ])

    valid_transform = transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.ToTensor(),
        transforms.Normalize(norm_mean, norm_std),
    ])

    # build the dataset instances
    train_data = RMBDataset(data_dir=train_dir, transform=train_transform)
    valid_data = RMBDataset(data_dir=valid_dir, transform=valid_transform)

    # build the DataLoaders
    train_loader = DataLoader(dataset=train_data, batch_size=BATCH_SIZE, shuffle=True)
    valid_loader = DataLoader(dataset=valid_data, batch_size=BATCH_SIZE)

    # ============================ step 2/5 model ============================

    net = LeNet(classes=2)
    net.initialize_weights()

    # ============================ step 3/5 loss function ============================
    criterion = nn.CrossEntropyLoss()  # choose the loss function

    # ============================ step 4/5 optimizer ============================
    optimizer = optim.SGD(net.parameters(), lr=LR, momentum=0.9)  # choose the optimizer
    scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=10, gamma=0.1)  # set the LR decay policy

    # ============================ step 5/5 training ============================
    train_curve = list()
    valid_curve = list()

    for epoch in range(MAX_EPOCH):

        loss_mean = 0.
        correct = 0.
        total = 0.

        net.train()
        for i, data in enumerate(train_loader):

            # forward
            inputs, labels = data
            outputs = net(inputs)

            # backward
            optimizer.zero_grad()
            loss = criterion(outputs, labels)
            loss.backward()

            # update weights
            optimizer.step()

            # track classification statistics
            _, predicted = torch.max(outputs.data, 1)
            total += labels.size(0)
            correct += (predicted == labels).squeeze().sum().numpy()

            # print training information
            loss_mean += loss.item()
            train_curve.append(loss.item())
            if (i+1) % log_interval == 0:
                loss_mean = loss_mean / log_interval
                print("Training:Epoch[{:0>3}/{:0>3}] Iteration[{:0>3}/{:0>3}] Loss: {:.4f} Acc:{:.2%}".format(
                    epoch, MAX_EPOCH, i+1, len(train_loader), loss_mean, correct / total))
                loss_mean = 0.

        scheduler.step()  # update the learning rate once per epoch

        # validate the model
        if (epoch+1) % val_interval == 0:

            correct_val = 0.
            total_val = 0.
            loss_val = 0.
            net.eval()
            with torch.no_grad():
                for j, data in enumerate(valid_loader):
                    inputs, labels = data
                    outputs = net(inputs)
                    loss = criterion(outputs, labels)

                    _, predicted = torch.max(outputs.data, 1)
                    total_val += labels.size(0)
                    correct_val += (predicted == labels).squeeze().sum().numpy()

                    loss_val += loss.item()

                # average over batches so the value is comparable to the per-iteration training loss
                loss_val_mean = loss_val / len(valid_loader)
                valid_curve.append(loss_val_mean)
                # report validation accuracy (the original printed the training accuracy here)
                print("Valid:\t Epoch[{:0>3}/{:0>3}] Iteration[{:0>3}/{:0>3}] Loss: {:.4f} Acc:{:.2%}".format(
                    epoch, MAX_EPOCH, j+1, len(valid_loader), loss_val_mean, correct_val / total_val))

    train_x = range(len(train_curve))
    train_y = train_curve

    train_iters = len(train_loader)
    # valid_curve holds one loss per epoch, so convert its x positions to iteration counts
    valid_x = np.arange(1, len(valid_curve)+1) * train_iters*val_interval
    valid_y = valid_curve

    plt.plot(train_x, train_y, label='Train')
    plt.plot(valid_x, valid_y, label='Valid')

    plt.legend(loc='upper right')
    plt.ylabel('loss value')
    plt.xlabel('Iteration')
    plt.show()

    # ============================ inference ============================

    BASE_DIR = os.path.dirname(os.path.abspath(__file__))
    test_dir = os.path.join(BASE_DIR, "test_data")

    test_data = RMBDataset(data_dir=test_dir, transform=valid_transform)
    valid_loader = DataLoader(dataset=test_data, batch_size=1)

    for i, data in enumerate(valid_loader):
        # forward
        inputs, labels = data
        outputs = net(inputs)
        _, predicted = torch.max(outputs.data, 1)

        rmb = 1 if predicted.numpy()[0] == 0 else 100

        img_tensor = inputs[0, ...]  # C H W
        img = transform_invert(img_tensor, train_transform)
        plt.imshow(img)
        plt.title("LeNet got {} Yuan".format(rmb))
        plt.show()
        plt.pause(0.5)
        plt.close()

lr_decay_scheduler.py

    # -*- coding:utf-8 -*-
    """
    @file name  : lr_decay_scheduler.py
    @brief      : learning rate decay strategies
    """
    import torch
    import torch.optim as optim
    import numpy as np
    import matplotlib.pyplot as plt
    torch.manual_seed(1)

    LR = 0.1
    iteration = 10
    max_epoch = 200

    # ------------------------------ fake data and optimizer ------------------------------
    weights = torch.randn((1), requires_grad=True)
    target = torch.zeros((1))
    optimizer = optim.SGD([weights], lr=LR, momentum=0.9)

    # ------------------------------ 1 Step LR ------------------------------
    flag = 0
    # flag = 1
    if flag:
        scheduler_lr = optim.lr_scheduler.StepLR(optimizer, step_size=50, gamma=0.1)  # set the LR decay policy

        lr_list, epoch_list = list(), list()
        for epoch in range(max_epoch):
            # get_last_lr() returns the LR currently in effect; calling get_lr() directly is deprecated
            lr_list.append(scheduler_lr.get_last_lr())
            epoch_list.append(epoch)

            for i in range(iteration):
                loss = torch.pow((weights - target), 2)
                loss.backward()

                optimizer.step()
                optimizer.zero_grad()

            scheduler_lr.step()  # update the learning rate once per epoch

        plt.plot(epoch_list, lr_list, label="Step LR Scheduler")
        plt.xlabel("Epoch")
        plt.ylabel("Learning rate")
        plt.legend()
        plt.show()

    # ------------------------------ 2 Multi Step LR ------------------------------
    flag = 0
    # flag = 1
    if flag:
        milestones = [50, 125, 160]  # epochs at which the LR is multiplied by gamma
        scheduler_lr = optim.lr_scheduler.MultiStepLR(optimizer, milestones=milestones, gamma=0.1)

        lr_list, epoch_list = list(), list()
        for epoch in range(max_epoch):
            lr_list.append(scheduler_lr.get_last_lr())  # get_lr() is deprecated for external use
            epoch_list.append(epoch)

            for i in range(iteration):
                loss = torch.pow((weights - target), 2)
                loss.backward()

                optimizer.step()
                optimizer.zero_grad()

            scheduler_lr.step()

        plt.plot(epoch_list, lr_list, label="Multi Step LR Scheduler\nmilestones:{}".format(milestones))
        plt.xlabel("Epoch")
        plt.ylabel("Learning rate")
        plt.legend()
        plt.show()

    # ------------------------------ 3 Exponential LR ------------------------------
    flag = 0
    # flag = 1
    if flag:
        gamma = 0.95  # the LR is multiplied by gamma after every epoch
        scheduler_lr = optim.lr_scheduler.ExponentialLR(optimizer, gamma=gamma)

        lr_list, epoch_list = list(), list()
        for epoch in range(max_epoch):
            lr_list.append(scheduler_lr.get_last_lr())  # get_lr() is deprecated for external use
            epoch_list.append(epoch)

            for i in range(iteration):
                loss = torch.pow((weights - target), 2)
                loss.backward()

                optimizer.step()
                optimizer.zero_grad()

            scheduler_lr.step()

        plt.plot(epoch_list, lr_list, label="Exponential LR Scheduler\ngamma:{}".format(gamma))
        plt.xlabel("Epoch")
        plt.ylabel("Learning rate")
        plt.legend()
        plt.show()

    # ------------------------------ 4 Cosine Annealing LR ------------------------------
    flag = 0
    # flag = 1
    if flag:
        t_max = 50  # epochs needed to go from the initial LR down to eta_min
        scheduler_lr = optim.lr_scheduler.CosineAnnealingLR(optimizer, T_max=t_max, eta_min=0.)

        lr_list, epoch_list = list(), list()
        for epoch in range(max_epoch):
            lr_list.append(scheduler_lr.get_last_lr())  # get_lr() is deprecated for external use
            epoch_list.append(epoch)

            for i in range(iteration):
                loss = torch.pow((weights - target), 2)
                loss.backward()

                optimizer.step()
                optimizer.zero_grad()

            scheduler_lr.step()

        plt.plot(epoch_list, lr_list, label="CosineAnnealingLR Scheduler\nT_max:{}".format(t_max))
        plt.xlabel("Epoch")
        plt.ylabel("Learning rate")
        plt.legend()
        plt.show()

    # ------------------------------ 5 Reduce LR On Plateau ------------------------------
    flag = 0
    # flag = 1
    if flag:
        loss_value = 0.5
        accuracy = 0.9

        factor = 0.1
        mode = "min"
        patience = 10
        cooldown = 10
        min_lr = 1e-4
        verbose = True  # prints a message on each reduction; deprecated in newer PyTorch releases

        scheduler_lr = optim.lr_scheduler.ReduceLROnPlateau(optimizer, factor=factor, mode=mode, patience=patience,
                                                            cooldown=cooldown, min_lr=min_lr, verbose=verbose)

        for epoch in range(max_epoch):
            for i in range(iteration):
                # train(...)
                optimizer.step()
                optimizer.zero_grad()

            if epoch == 5:
                loss_value = 0.4  # simulate a single improvement of the monitored loss

            scheduler_lr.step(loss_value)  # the monitored value must be passed in

    # ------------------------------ 6 lambda ------------------------------
    # flag = 0
    flag = 1
    if flag:
        lr_init = 0.1

        weights_1 = torch.randn((6, 3, 5, 5))
        weights_2 = torch.ones((5, 5))

        # Two parameter groups, each driven by its own lambda
        optimizer = optim.SGD([
            {'params': [weights_1]},
            {'params': [weights_2]}], lr=lr_init)

        lambda1 = lambda epoch: 0.1 ** (epoch // 20)  # // is integer division: decay by 0.1 every 20 epochs
        lambda2 = lambda epoch: 0.95 ** epoch

        scheduler = torch.optim.lr_scheduler.LambdaLR(optimizer, lr_lambda=[lambda1, lambda2])

        lr_list, epoch_list = list(), list()
        for epoch in range(max_epoch):
            for i in range(iteration):
                # train(...)
                optimizer.step()
                optimizer.zero_grad()

            scheduler.step()

            # get_last_lr() returns one LR per parameter group; calling get_lr() directly is deprecated
            lr_list.append(scheduler.get_last_lr())
            epoch_list.append(epoch)

            print('epoch:{:5d}, lr:{}'.format(epoch, scheduler.get_last_lr()))

        plt.plot(epoch_list, [i[0] for i in lr_list], label="lambda 1")
        plt.plot(epoch_list, [i[1] for i in lr_list], label="lambda 2")
        plt.xlabel("Epoch")
        plt.ylabel("Learning Rate")
        plt.title("LambdaLR")
        plt.legend()
        plt.show()

![Learning rate scheduling strategies, image 1](http://img.e-com-net.com/image/info8/e7b0992dad2e4275b8df0b3d5b3d0e70.jpg)

![Learning rate scheduling strategies, image 2](http://img.e-com-net.com/image/info8/341397fae8e648b8a84f091f7c4d4257.jpg)

![Learning rate scheduling strategies, image 3](http://img.e-com-net.com/image/info8/89e2ae04c7d84bb5b780db4512f8c4b3.jpg)

![Learning rate scheduling strategies, image 4](http://img.e-com-net.com/image/info8/b0af2bdaeb684a758e2cbb83012cc9e2.jpg)

![Learning rate scheduling strategies, image 5](http://img.e-com-net.com/image/info8/d9368bc8093141309b160b6a35bc27f8.jpg)

![Learning rate scheduling strategies, image 6](http://img.e-com-net.com/image/info8/4d935f86809644e981269f436cfd11af.jpg)

![Learning rate scheduling strategies, image 7](http://img.e-com-net.com/image/info8/83e14709c23446e89044d9da45f051fa.jpg)

![Learning rate scheduling strategies, image 8](http://img.e-com-net.com/image/info8/af55448a6881428a9432d390a4bd9d5a.jpg)

![Learning rate scheduling strategies, image 9](http://img.e-com-net.com/image/info8/d44dab6f54f94405a33c63988e991b0a.jpg)

![Learning rate scheduling strategies, image 10](http://img.e-com-net.com/image/info8/0763c3f56d3f49acb90a19354d9a2d7d.jpg)

![Learning rate scheduling strategies, image 11](http://img.e-com-net.com/image/info8/edbcdd297ac54e3f98208a347e9e9ff2.jpg)

![Learning rate scheduling strategies, image 12](http://img.e-com-net.com/image/info8/dba5b0478baf44c3b08729748ab447ba.jpg)

![Learning rate scheduling strategies, image 14](http://img.e-com-net.com/image/info8/d5c50020a70747879862bc1be8d168ea.jpg)

![Learning rate scheduling strategies, image 15](http://img.e-com-net.com/image/info8/e2f1c63dacca4953874e298295549f6a.jpg)

![Learning rate scheduling strategies, image 16](http://img.e-com-net.com/image/info8/136a885b9b1342c299696af31bb3bb1c.jpg)