YOLOv5 deployment and inference with onnxruntime and OpenCV (cv2)

References: https://github.com/hpc203/yolov5-lite-onnxruntime

https://github.com/hpc203/yolov5-dnn-cpp-python

1. Loading a YOLOv5 ONNX model and running inference with onnxruntime

import argparse

import cv2
import numpy as np
import onnxruntime as ort

class yolov5_lite():
    def __init__(self, model_pb_path, label_path, confThreshold=0.5, nmsThreshold=0.5, objThreshold=0.5):
        so = ort.SessionOptions()
        so.log_severity_level = 3  # log errors and above only
        self.net = ort.InferenceSession(model_pb_path, so)
        # The label file holds one class name per line
        with open(label_path, 'r') as f:
            self.classes = [line.strip() for line in f]
        self.num_classes = len(self.classes)
        anchors = [[10, 13, 16, 30, 33, 23], [30, 61, 62, 45, 59, 119], [116, 90, 156, 198, 373, 326]]
        self.nl = len(anchors)  # number of detection levels
        self.na = len(anchors[0]) // 2  # anchors per level
        self.no = self.num_classes + 5  # values per anchor: x, y, w, h, objectness, class scores
        self.grid = [np.zeros(1)] * self.nl
        self.stride = np.array([8., 16., 32.])
        self.anchor_grid = np.asarray(anchors, dtype=np.float32).reshape(self.nl, -1, 2)
        self.confThreshold = confThreshold
        self.nmsThreshold = nmsThreshold
        self.objThreshold = objThreshold
        # (height, width) of the network input; assumes a static NCHW input shape
        self.input_shape = (self.net.get_inputs()[0].shape[2], self.net.get_inputs()[0].shape[3])

    def resize_image(self, srcimg, keep_ratio=True):
        top, left, newh, neww = 0, 0, self.input_shape[0], self.input_shape[1]
        if keep_ratio and srcimg.shape[0] != srcimg.shape[1]:
            hw_scale = srcimg.shape[0] / srcimg.shape[1]
            if hw_scale > 1:
                # Portrait image: fill the height, pad left and right
                newh, neww = self.input_shape[0], int(self.input_shape[1] / hw_scale)
                img = cv2.resize(srcimg, (neww, newh), interpolation=cv2.INTER_AREA)
                left = int((self.input_shape[1] - neww) * 0.5)
                img = cv2.copyMakeBorder(img, 0, 0, left, self.input_shape[1] - neww - left, cv2.BORDER_CONSTANT,
                                         value=0)  # add border
            else:
                # Landscape image: fill the width, pad top and bottom
                newh, neww = int(self.input_shape[0] * hw_scale), self.input_shape[1]
                img = cv2.resize(srcimg, (neww, newh), interpolation=cv2.INTER_AREA)
                top = int((self.input_shape[0] - newh) * 0.5)
                img = cv2.copyMakeBorder(img, top, self.input_shape[0] - newh - top, 0, 0, cv2.BORDER_CONSTANT, value=0)
        else:
            # cv2.resize expects (width, height), while input_shape is (height, width)
            img = cv2.resize(srcimg, (self.input_shape[1], self.input_shape[0]), interpolation=cv2.INTER_AREA)
        return img, newh, neww, top, left

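    def _make_grid(self, nx=20, ny=20):
        # Rows of (x, y) cell offsets in row-major order (y outer, x inner).
        # arange(nx) must come first so the grid is also correct when nx != ny.
        xv, yv = np.meshgrid(np.arange(nx), np.arange(ny))
        return np.stack((xv, yv), 2).reshape((-1, 2)).astype(np.float32)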

    def postprocess(self, frame, outs, pad_hw):
        newh, neww, padh, padw = pad_hw
        frameHeight = frame.shape[0]
        frameWidth = frame.shape[1]
        ratioh, ratiow = frameHeight / newh, frameWidth / neww
        # Scan through all the bounding boxes output from the network and keep only the
        # ones with high confidence scores. Assign the box's class label as the class with the highest score.
        classIds = []
        confidences = []
        boxes = []
        for detection in outs:
            scores = detection[5:]
            classId = np.argmax(scores)
            confidence = scores[classId]
            if confidence > self.confThreshold and detection[4] > self.objThreshold:
                # Remove the letterbox padding, then scale back to the source image
                center_x = int((detection[0] - padw) * ratiow)
                center_y = int((detection[1] - padh) * ratioh)
                width = int(detection[2] * ratiow)
                height = int(detection[3] * ratioh)
                left = int(center_x - width / 2)
                top = int(center_y - height / 2)
                classIds.append(classId)
                confidences.append(float(confidence))
                boxes.append([left, top, width, height])
        # Perform non maximum suppression to eliminate redundant overlapping boxes with
        # lower confidences.
        indices = cv2.dnn.NMSBoxes(boxes, confidences, self.confThreshold, self.nmsThreshold)
        # Depending on the OpenCV version, NMSBoxes returns an N x 1 array or a flat
        # array of indices; flattening handles both.
        for i in np.array(indices).flatten():
            box = boxes[i]
            left = box[0]
            top = box[1]
            width = box[2]
            height = box[3]
            frame = self.drawPred(frame, classIds[i], confidences[i], left, top, left + width, top + height)
        return frame

    def drawPred(self, frame, classId, conf, left, top, right, bottom):
        # Draw a bounding box.
        cv2.rectangle(frame, (left, top), (right, bottom), (0, 0, 255), thickness=4)
        label = '%.2f' % conf
        label = '%s:%s' % (self.classes[classId], label)
        # Display the label at the top of the bounding box
        labelSize, baseLine = cv2.getTextSize(label, cv2.FONT_HERSHEY_SIMPLEX, 0.5, 1)
        top = max(top, labelSize[1])
        # cv.rectangle(frame, (left, top - round(1.5 * labelSize[1])), (left + round(1.5 * labelSize[0]), top + baseLine), (255,255,255), cv.FILLED)
        cv2.putText(frame, label, (left, top - 10), cv2.FONT_HERSHEY_SIMPLEX, 1, (0, 255, 0), thickness=2)
        return frame

    def detect(self, srcimg):
        img, newh, neww, top, left = self.resize_image(srcimg)
        img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)
        img = img.astype(np.float32) / 255.0
        blob = np.expand_dims(np.transpose(img, (2, 0, 1)), axis=0)  # HWC -> NCHW
        outs = self.net.run(None, {self.net.get_inputs()[0].name: blob})[0].squeeze(axis=0)
        # Decode each detection level in place: grid offsets for x, y and anchors for w, h
        row_ind = 0
        for i in range(self.nl):
            h, w = int(self.input_shape[0] / self.stride[i]), int(self.input_shape[1] / self.stride[i])
            length = int(self.na * h * w)
            if self.grid[i].shape[2:4] != (h, w):
                self.grid[i] = self._make_grid(w, h)
            outs[row_ind:row_ind + length, 0:2] = (outs[row_ind:row_ind + length, 0:2] * 2. - 0.5 + np.tile(
                self.grid[i], (self.na, 1))) * int(self.stride[i])
            outs[row_ind:row_ind + length, 2:4] = (outs[row_ind:row_ind + length, 2:4] * 2) ** 2 * np.repeat(
                self.anchor_grid[i], h * w, axis=0)
            row_ind += length
        srcimg = self.postprocess(srcimg, outs, (newh, neww, top, left))
        # cv2.imwrite('result.jpg', srcimg)
        return srcimg

if __name__ == '__main__':
    parser = argparse.ArgumentParser()
    parser.add_argument('--imgpath', type=str, default=r'D:\cv\yolov5-lite-onnxruntime-main\imgs\bus.jpg', help="image path")
    parser.add_argument('--modelpath', type=str, default=r'D:\cv\yolov5-lite-onnxruntime-main\onnxmodel\v5lite-g.onnx', help="onnx filepath")
    # Raw string, so the backslashes in the Windows path are not read as escape sequences
    parser.add_argument('--classfile', type=str, default=r'D:\cv\yolov5-lite-onnxruntime-main\imgs\coco.names', help="classname filepath")
    parser.add_argument('--confThreshold', default=0.5, type=float, help='class confidence')
    parser.add_argument('--nmsThreshold', default=0.6, type=float, help='nms iou thresh')
    args = parser.parse_args()

    srcimg = cv2.imread(args.imgpath)
    if srcimg is None:  # cv2.imread returns None when the file cannot be read
        raise FileNotFoundError(args.imgpath)
    net = yolov5_lite(args.modelpath, args.classfile, confThreshold=args.confThreshold, nmsThreshold=args.nmsThreshold)
    srcimg = net.detect(srcimg)

    winName = 'Deep learning object detection in onnxruntime'
    cv2.namedWindow(winName, cv2.WINDOW_NORMAL)
    cv2.imshow(winName, srcimg)
    cv2.waitKey(0)
    cv2.destroyAllWindows()
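
The `resize_image` and `postprocess` methods above implement a letterbox resize and its inverse. The sketch below reproduces only that arithmetic in plain Python, without OpenCV, so the padding offsets and scale ratios can be checked by hand. The function names `letterbox_geometry` and `to_source_coords` are hypothetical helpers introduced for illustration; they are not part of the referenced repositories.

```python
def letterbox_geometry(src_h, src_w, dst_h, dst_w):
    """Return (new_h, new_w, top, left) for an aspect-preserving resize.

    Follows the arithmetic of resize_image: one side fills the target,
    the other is scaled by the same ratio and centered with padding.
    """
    new_h, new_w, top, left = dst_h, dst_w, 0, 0
    if src_h != src_w:
        hw_scale = src_h / src_w
        if hw_scale > 1:  # portrait: pad left and right
            new_w = int(dst_w / hw_scale)
            left = int((dst_w - new_w) * 0.5)
        else:  # landscape: pad top and bottom
            new_h = int(dst_h * hw_scale)
            top = int((dst_h - new_h) * 0.5)
    return new_h, new_w, top, left


def to_source_coords(cx, cy, w, h, geometry, src_h, src_w):
    """Map a center-format box from network-input pixels to source pixels.

    Returns [left, top, width, height], the format postprocess passes to NMS.
    """
    new_h, new_w, top, left = geometry
    ratio_h, ratio_w = src_h / new_h, src_w / new_w
    center_x = int((cx - left) * ratio_w)  # remove padding, then rescale
    center_y = int((cy - top) * ratio_h)
    width, height = int(w * ratio_w), int(h * ratio_h)
    return [int(center_x - width / 2), int(center_y - height / 2), width, height]


# A 1280 x 640 (height x width) portrait image fed to a 640 x 640 network
geometry = letterbox_geometry(1280, 640, 640, 640)
print(geometry)  # (640, 320, 0, 160)
print(to_source_coords(320, 320, 100, 200, geometry, 1280, 640))  # [220, 440, 200, 400]
```

In this example the image is shrunk by a factor of 2 and padded with 160 pixels on each side, so a box centered at x = 320 in network space lands at x = (320 - 160) * 2 = 320 in the source image.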

2. Loading a YOLOv5 ONNX model and running inference with cv2 (OpenCV dnn)

import argparse

import cv2
import numpy as np

class yolov5():
    def __init__(self, yolo_type, confThreshold=0.5, nmsThreshold=0.5, objThreshold=0.5):
        anchors = [[4,5, 8,10, 13,16], [23,29, 43,55, 73,105], [146,217, 231,300, 335,433]]
        num_classes = 1
        self.nl = len(anchors)  # number of detection levels
        self.na = len(anchors[0]) // 2  # anchors per level
        self.no = num_classes + 5 + 10  # box (4) + objectness (1) + 5 landmarks (10) + class score
        self.grid = [np.zeros(1)] * self.nl
        self.stride = np.array([8., 16., 32.])
        self.anchor_grid = np.asarray(anchors, dtype=np.float32).reshape(self.nl, -1, 2)
        self.inpWidth = 640
        self.inpHeight = 640
        # The model file is expected at '<yolo_type>-face.onnx' in the working directory
        self.net = cv2.dnn.readNet(yolo_type+'-face.onnx')
        self.confThreshold = confThreshold
        self.nmsThreshold = nmsThreshold
        self.objThreshold = objThreshold

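    def _make_grid(self, nx=20, ny=20):
        # Rows of (x, y) cell offsets in row-major order (y outer, x inner).
        # arange(nx) must come first so the grid is also correct when nx != ny.
        xv, yv = np.meshgrid(np.arange(nx), np.arange(ny))
        return np.stack((xv, yv), 2).reshape((-1, 2)).astype(np.float32)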

    def postprocess(self, frame, outs):
        frameHeight = frame.shape[0]
        frameWidth = frame.shape[1]
        ratioh, ratiow = frameHeight / self.inpHeight, frameWidth / self.inpWidth
        # Scan through all the bounding boxes output from the network and keep only the
        # ones with high confidence scores.
        confidences = []
        boxes = []
        landmarks = []
        for detection in outs:
            confidence = detection[15]
            # if confidence > self.confThreshold and detection[4] > self.objThreshold:
            if detection[4] > self.objThreshold:
                center_x = int(detection[0] * ratiow)
                center_y = int(detection[1] * ratioh)
                width = int(detection[2] * ratiow)
                height = int(detection[3] * ratioh)
                left = int(center_x - width / 2)
                top = int(center_y - height / 2)
                confidences.append(float(confidence))
                boxes.append([left, top, width, height])
                # Scale the five (x, y) landmark pairs back to the source image
                landmark = detection[5:15] * np.tile(np.float32([ratiow,ratioh]), 5)
                landmarks.append(landmark.astype(np.int32))
        # Perform non maximum suppression to eliminate redundant overlapping boxes with
        # lower confidences.
        indices = cv2.dnn.NMSBoxes(boxes, confidences, self.confThreshold, self.nmsThreshold)
        # Depending on the OpenCV version, NMSBoxes returns an N x 1 array or a flat
        # array of indices; flattening handles both.
        for i in np.array(indices).flatten():
            box = boxes[i]
            left = box[0]
            top = box[1]
            width = box[2]
            height = box[3]
            landmark = landmarks[i]
            frame = self.drawPred(frame, confidences[i], left, top, left + width, top + height, landmark)
        return frame

    def drawPred(self, frame, conf, left, top, right, bottom, landmark):
        # Draw a bounding box.
        cv2.rectangle(frame, (left, top), (right, bottom), (0, 0, 255), thickness=2)
        # label = '%.2f' % conf
        # Display the label at the top of the bounding box
        # labelSize, baseLine = cv2.getTextSize(label, cv2.FONT_HERSHEY_SIMPLEX, 0.5, 1)
        # top = max(top, labelSize[1])
        # cv2.putText(frame, label, (left, top - 10), cv2.FONT_HERSHEY_SIMPLEX, 1, (0, 255, 0), thickness=2)
        # Draw the five landmarks as filled dots; cast to plain ints for OpenCV
        for i in range(5):
            cv2.circle(frame, (int(landmark[i*2]), int(landmark[i*2+1])), 1, (0,255,0), thickness=-1)
        return frame

    def detect(self, srcimg):
        blob = cv2.dnn.blobFromImage(srcimg, 1 / 255.0, (self.inpWidth, self.inpHeight), [0, 0, 0], swapRB=True, crop=False)
        # Sets the input to the network
        self.net.setInput(blob)
        # Runs the forward pass to get output of the output layers
        outs = self.net.forward(self.net.getUnconnectedOutLayersNames())[0]

        # inference output
        # Sigmoid on box, objectness and class score; landmark columns stay raw
        outs[..., [0,1,2,3,4,15]] = 1 / (1 + np.exp(-outs[..., [0,1,2,3,4,15]]))
        row_ind = 0
        for i in range(self.nl):
            h, w = int(self.inpHeight/self.stride[i]), int(self.inpWidth/self.stride[i])
            length = int(self.na * h * w)
            if self.grid[i].shape[2:4] != (h,w):
                self.grid[i] = self._make_grid(w, h)

            g_i = np.tile(self.grid[i], (self.na, 1))
            a_g_i = np.repeat(self.anchor_grid[i], h * w, axis=0)
            outs[row_ind:row_ind + length, 0:2] = (outs[row_ind:row_ind + length, 0:2] * 2. - 0.5 + g_i) * int(self.stride[i])
            outs[row_ind:row_ind + length, 2:4] = (outs[row_ind:row_ind + length, 2:4] * 2) ** 2 * a_g_i

            outs[row_ind:row_ind + length, 5:7] = outs[row_ind:row_ind + length, 5:7] * a_g_i + g_i * int(self.stride[i])   # landmark x1 y1
            outs[row_ind:row_ind + length, 7:9] = outs[row_ind:row_ind + length, 7:9] * a_g_i + g_i * int(self.stride[i])   # landmark x2 y2
            outs[row_ind:row_ind + length, 9:11] = outs[row_ind:row_ind + length, 9:11] * a_g_i + g_i * int(self.stride[i])  # landmark x3 y3
            outs[row_ind:row_ind + length, 11:13] = outs[row_ind:row_ind + length, 11:13] * a_g_i + g_i * int(self.stride[i])  # landmark x4 y4
            outs[row_ind:row_ind + length, 13:15] = outs[row_ind:row_ind + length, 13:15] * a_g_i + g_i * int(self.stride[i])  # landmark x5 y5
            row_ind += length
        return outs

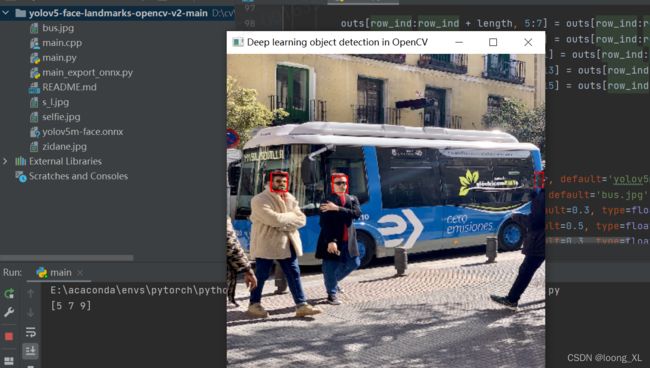
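
The decoding loop in `detect` above is easier to follow for a single anchor at a single grid cell. The following is a minimal sketch in plain Python of the same formulas: sigmoid on the box values, grid offset and stride for the center, anchor size for width and height, and the anchor-scaled offset for a landmark. The helper names `sigmoid` and `decode_cell` and the sample numbers are assumptions made for illustration only.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def decode_cell(raw_xywh, raw_landmark_xy, grid_xy, stride, anchor_wh):
    """Decode one anchor at one grid cell, following the formulas in detect()."""
    sx, sy, sw, sh = (sigmoid(v) for v in raw_xywh)
    gx, gy = grid_xy
    aw, ah = anchor_wh
    # Box center: sigmoid output mapped to (-0.5, 1.5) around the cell, in input pixels
    cx = (sx * 2.0 - 0.5 + gx) * stride
    cy = (sy * 2.0 - 0.5 + gy) * stride
    # Box size: between 0 and 4 times the anchor size
    w = (sw * 2.0) ** 2 * aw
    h = (sh * 2.0) ** 2 * ah
    # Landmark: raw value (no sigmoid) scaled by the anchor, offset by the cell origin
    lx = raw_landmark_xy[0] * aw + gx * stride
    ly = raw_landmark_xy[1] * ah + gy * stride
    return (cx, cy, w, h), (lx, ly)


# Cell (x=3, y=2) on the stride-8 level with the first anchor (4, 5)
box, landmark = decode_cell((0.0, 0.0, 0.0, 0.0), (0.5, -1.0), (3, 2), 8, (4, 5))
print(box)       # (28.0, 20.0, 4.0, 5.0)
print(landmark)  # (26.0, 11.0)
```

With all raw box values at 0 the sigmoid gives 0.5, so the center falls in the middle of the cell and the box is exactly the anchor size. Note that the onnxruntime script in section 1 applies the same center and size formulas without the sigmoid step, which suggests that model already outputs activated values.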

if __name__ == "__main__":
    parser = argparse.ArgumentParser()
    parser.add_argument('--yolo_type', type=str, default='yolov5m', choices=['yolov5s', 'yolov5m', 'yolov5l'], help="yolo type")
    parser.add_argument("--imgpath", type=str, default='bus.jpg', help="image path")
    parser.add_argument('--confThreshold', default=0.3, type=float, help='class confidence')
    parser.add_argument('--nmsThreshold', default=0.5, type=float, help='nms iou thresh')
    parser.add_argument('--objThreshold', default=0.3, type=float, help='object confidence')
    args = parser.parse_args()

    yolonet = yolov5(args.yolo_type, confThreshold=args.confThreshold, nmsThreshold=args.nmsThreshold, objThreshold=args.objThreshold)
    srcimg = cv2.imread(args.imgpath)
    if srcimg is None:  # cv2.imread returns None when the file cannot be read
        raise FileNotFoundError(args.imgpath)
    dets = yolonet.detect(srcimg)
    srcimg = yolonet.postprocess(srcimg, dets)

    winName = 'Deep learning object detection in OpenCV'
    cv2.namedWindow(winName, 0)
    cv2.imshow(winName, srcimg)
    cv2.waitKey(0)
    cv2.destroyAllWindows()
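
Both scripts hand the candidate boxes to `cv2.dnn.NMSBoxes`. As a rough illustration of what non-maximum suppression does, here is a plain-Python greedy version using the same `[left, top, width, height]` box format. It is a sketch of the general technique, not OpenCV's implementation; details such as tie handling may differ, and `iou` and `greedy_nms` are hypothetical names.

```python
def iou(a, b):
    """Intersection over union of two [left, top, width, height] boxes."""
    inter_w = max(0, min(a[0] + a[2], b[0] + b[2]) - max(a[0], b[0]))
    inter_h = max(0, min(a[1] + a[3], b[1] + b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = a[2] * a[3] + b[2] * b[3] - inter
    return inter / union if union > 0 else 0.0


def greedy_nms(boxes, scores, score_threshold, nms_threshold):
    """Return indices of kept boxes, highest score first."""
    # Drop low-scoring boxes, then visit the rest in descending score order
    order = sorted(
        (i for i, s in enumerate(scores) if s > score_threshold),
        key=lambda i: scores[i],
        reverse=True,
    )
    keep = []
    for i in order:
        # Keep a box only if it does not overlap too much with any kept box
        if all(iou(boxes[i], boxes[k]) <= nms_threshold for k in keep):
            keep.append(i)
    return keep


boxes = [[10, 10, 100, 100], [12, 12, 100, 100], [200, 200, 50, 50], [0, 0, 10, 10]]
scores = [0.9, 0.8, 0.7, 0.2]
print(greedy_nms(boxes, scores, score_threshold=0.3, nms_threshold=0.5))  # [0, 2]
```

Box 1 overlaps box 0 with an IoU of about 0.92 and is suppressed, box 3 falls below the score threshold, and boxes 0 and 2 survive.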