天池学习赛——街景字符编码识别(得分上0.93)

项目代码已上传至github需要的可以自行下载

目录

- 1 比赛介绍

- 2 解题思路

- 3 比赛数据集

- 4 模型训练

- 5 更改detect.py文件

- 6 上传文件

1 比赛介绍

项目链接:零基础入门CV - 街景字符编码识别

一、赛题数据

赛题来源自Google街景图像中的门牌号数据集(The Street View House Numbers Dataset, SVHN),并根据一定方式采样得到比赛数据集。

数据集报名后可见并可下载,该数据来自真实场景的门牌号。训练集数据包括3W张照片,验证集数据包括1W张照片,每张照片包括颜色图像和对应的编码类别和具体位置;为了保证比赛的公平性,测试集A包括4W张照片,测试集B包括4W张照片

需要注意的是本赛题需要选手识别图片中所有的字符,为了降低比赛难度,我们提供了训练集、验证集和测试集中字符的位置框。

所有的数据(训练集、验证集和测试集)的标注使用JSON格式,并使用文件名进行索引。如果一个文件中包括多个字符,则使用列表将字段进行组合。

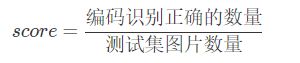

二、评测标准

评价标准为准确率,选手提交结果与实际图片的编码进行对比,以编码整体识别准确率为评价指标,结果越大越好,具体计算公式如下:

三、结果提交

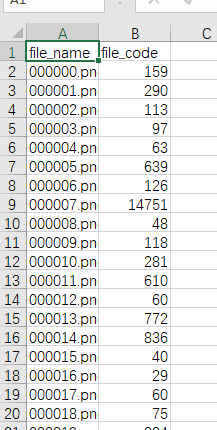

提交前请确保预测结果的格式与sample_submit.csv中的格式一致,以及提交文件后缀名为csv。

file_name, file_code

0010000.jpg,451

0010001.jpg,232

0010002.jpg,45

0010003.jpg,67

0010004.jpg,191

0010005.jpg,892

2 解题思路

从下载好的数据看,本题为数字识别,为识别类任务。这种任务的选择有很多,可以用cnn、VGG,具体的可以参看手写数字识别。

在参看大赛论坛后发现几个高分模型是使用yolo系列把识别汉字当作检测类别来做。

参考1:yolov5加全局nms 第八名方案分享

参考2:真正零基础,单模型非融合,上93的最简单技巧

参考3:街景字符识别

本题采用yolov5进行解题。

3 比赛数据集

下载比赛数据:

mchar_train.zip

mchar_train.json

mchar_val.zip

mchar_val.json

mchar_test_a.zip

mchar_sample_submit_A.csv

本次赛题已经将训练文件分为训练集与测试集,我们这里重新给它进行划分,首先将训练集与测试集所有图片放入all_images文件夹中(由于训练集与测试集中的图片名有重合,所以在整合时将测试集的图片名稍作更改加上前缀val,以防数据覆盖):

import os

import shutil

train_image_path = './data/mchar_train/'#下载好的存储路径

val_image_path = './data/mchar_val/'

dst_image_path = '../coco/all_images/'#文件整合后的位置

train_image_list = os.listdir(train_image_path)

val_image_list = os.listdir(val_image_path)

for img in train_image_list:

shutil.copy(train_image_path+img, dst_image_path+img)

for img in val_image_list:

shutil.copy(val_image_path+img, dst_image_path+'val_'+img)

将json类型的标签转为txt类型的标签并存放到all_labels文件夹中:

import os

import cv2

import json

train_image_path = './data/mchar_train/'#下载好的数据集位置

val_image_path = './data/mchar_val/'

train_annotation_path = './data/mchar_train.json'

val_annotation_path = './data/mchar_val.json'

train_data = json.load(open(train_annotation_path))

val_data = json.load(open(val_annotation_path))

label_path = '../coco/all_labels/'

for key in train_data:

f = open(label_path+key.replace('.png', '.txt'), 'w')

img = cv2.imread(train_image_path+key)

shape = img.shape

label = train_data[key]['label']

left = train_data[key]['left']

top = train_data[key]['top']

height = train_data[key]['height']

width = train_data[key]['width']

for i in range(len(label)):

x_center = 1.0 * (left[i]+width[i]/2) / shape[1]

y_center = 1.0 * (top[i]+height[i]/2) / shape[0]

w = 1.0 * width[i] / shape[1]

h = 1.0 * height[i] / shape[0]

# label, x_center, y_center, w, h

f.write(str(label[i]) + ' ' + str(x_center) + ' ' + str(y_center) + ' ' + str(w) + ' ' + str(h) + '\n')

f.close()

for key in val_data:

f = open(label_path+'val_'+key.replace('.png', '.txt'), 'w')

img = cv2.imread(val_image_path+key)

shape = img.shape

label = val_data[key]['label']

left = val_data[key]['left']

top = val_data[key]['top']

height = val_data[key]['height']

width = val_data[key]['width']

for i in range(len(label)):

x_center = 1.0 * (left[i]+width[i]/2) / shape[1]

y_center = 1.0 * (top[i]+height[i]/2) / shape[0]

w = 1.0 * width[i] / shape[1]

h = 1.0 * height[i] / shape[0]

# label, x_center, y_center, w, h

f.write(str(label[i]) + ' ' + str(x_center) + ' ' + str(y_center) + ' ' + str(w) + ' ' + str(h) + '\n')

f.close()

所有的训练文件准备完毕,接着参看《yolov5训练自己的数据集(一文搞定训练)》制作自己的数据集,里面包含数据集的重新划分的脚本,按照该博客更改自己的coco.yaml。

4 模型训练

采用yolov5x模型为预训练模型,设置epochs,batch_size,image-size(32的整数倍),进行训练。

可以自行调节训练参数,可以在选择分数不错的模型设为预训练模型进一步训练。

训练结束后我们使用输出的权重文件best.pt进行预测。

5 更改detect.py文件

本次需要提交csv格式的预测结果,我们更改原来的detect.py文件以满足我们的需求:参看《yolov5-detect.py解析与重写》

import argparse

import time

from pathlib import Path

import os

import cv2

import torch

import torch.backends.cudnn as cudnn

from numpy import random

from models.experimental import attempt_load

from utils.datasets import LoadStreams, LoadImages

from utils.general import check_img_size, check_requirements, check_imshow, non_max_suppression, apply_classifier, \

scale_coords, xyxy2xywh, strip_optimizer, set_logging, increment_path

from utils.plots import plot_one_box

from utils.torch_utils import select_device, load_classifier, time_synchronized

import pandas as pd

def detect(save_img=False):

source, weights, view_img, save_txt, imgsz = opt.source, opt.weights, opt.view_img, opt.save_txt, opt.img_size

save_img = not opt.nosave and not source.endswith('.txt') # save inference images

webcam = source.isnumeric() or source.endswith('.txt') or source.lower().startswith(

('rtsp://', 'rtmp://', 'http://'))

file_name = []

file_code = []

# result = dict()

# Directories

save_dir = Path(increment_path(Path(opt.project) / opt.name, exist_ok=opt.exist_ok)) # increment run

(save_dir if save_txt else save_dir).mkdir(parents=True, exist_ok=True) # make dir

# Initialize

set_logging()

device = select_device(opt.device)

half = device.type != 'cpu' # half precision only supported on CUDA

# Load model

model = attempt_load(weights, map_location=device) # load FP32 model

stride = int(model.stride.max()) # model stride

imgsz = check_img_size(imgsz, s=stride) # check img_size

if half:

model.half() # to FP16

# Second-stage classifier

classify = False

if classify:

modelc = load_classifier(name='resnet101', n=2) # initialize

modelc.load_state_dict(torch.load('weights/resnet101.pt', map_location=device)['model']).to(device).eval()

# Set Dataloader

vid_path, vid_writer = None, None

if webcam:

view_img = check_imshow()

cudnn.benchmark = True # set True to speed up constant image size inference

dataset = LoadStreams(source, img_size=imgsz, stride=stride)

else:

dataset = LoadImages(source, img_size=imgsz, stride=stride)

# Get names and colors

names = model.module.names if hasattr(model, 'module') else model.names

colors = [[random.randint(0, 255) for _ in range(3)] for _ in names]

# Run inference

if device.type != 'cpu':

model(torch.zeros(1, 3, imgsz, imgsz).to(device).type_as(next(model.parameters()))) # run once

t0 = time.time()

for path, img, im0s, vid_cap in dataset:

img = torch.from_numpy(img).to(device)

img = img.half() if half else img.float() # uint8 to fp16/32

img /= 255.0 # 0 - 255 to 0.0 - 1.0

if img.ndimension() == 3:

img = img.unsqueeze(0)

# Inference

t1 = time_synchronized()

pred = model(img, augment=opt.augment)[0]

# Apply NMS

pred = non_max_suppression(pred, opt.conf_thres, opt.iou_thres, classes=opt.classes, agnostic=opt.agnostic_nms)

t2 = time_synchronized()

# Apply Classifier

if classify:

pred = apply_classifier(pred, modelc, img, im0s)

# Process detections

for i, det in enumerate(pred): # detections per image

if webcam: # batch_size >= 1

p, s, im0, frame = path[i], '%g: ' % i, im0s[i].copy(), dataset.count

else:

p, s, im0, frame = path, '', im0s, getattr(dataset, 'frame', 0)

s += '%gx%g ' % img.shape[2:] # print string

gn = torch.tensor(im0.shape)[[1, 0, 1, 0]] # normalization gain whwh

if len(det):

# Rescale boxes from img_size to im0 size

det[:, :4] = scale_coords(img.shape[2:], det[:, :4], im0.shape).round()

# Print results

for c in det[:, -1].unique():

n = (det[:, -1] == c).sum() # detections per class

s += f"{n} {names[int(c)]}{'s' * (n > 1)}, " # add to string

# Write results

x_value = dict()

for *xyxy, conf, cls in reversed(det):

if save_txt: # Write to file

xywh = (xyxy2xywh(torch.tensor(xyxy).view(1, 4)) / gn).view(-1).tolist() # normalized xywh

cls = torch.tensor(cls).tolist()

x_value[xywh[0]] = int(cls)

# Print time (inference + NMS)

print(f'{s}Done. ({t2 - t1:.3f}s)')

file_name.append(os.path.split(path)[-1])

res = ''

for key in sorted(x_value):

res += str(x_value[key])

file_code.append(res)

save_csv_path=str(os.getcwd())+'\\'+str(save_dir)+'\\submission.csv'

print(save_csv_path)

sub = pd.DataFrame({"file_name": file_name, 'file_code': file_code})

sub.to_csv(save_csv_path, index=False)

print(f'Done. ({time.time() - t0:.3f}s)')

if __name__ == '__main__':

parser = argparse.ArgumentParser()

parser.add_argument('--weights', nargs='+', type=str, default='weights/best.pt', help='model.pt path(s)')

parser.add_argument('--source', type=str, default='data/mchar_test_a', help='source') # file/folder, 0 for webcam

parser.add_argument('--img-size', type=int, default=160, help='inference size (pixels)')

parser.add_argument('--conf-thres', type=float, default=0.25, help='object confidence threshold')

parser.add_argument('--iou-thres', type=float, default=0.45, help='IOU threshold for NMS')

parser.add_argument('--device', default='0', help='cuda device, i.e. 0 or 0,1,2,3 or cpu')

parser.add_argument('--view-img', action='store_true', help='display results')

parser.add_argument('--save-txt', action='store_true', help='save results to *.txt')

parser.add_argument('--save-conf', action='store_true', help='save confidences in --save-txt labels')

parser.add_argument('--nosave', action='store_true', help='do not save images/videos')

parser.add_argument('--classes', nargs='+', type=int, help='filter by class: --class 0, or --class 0 2 3')

parser.add_argument('--agnostic-nms', action='store_true', help='class-agnostic NMS')

parser.add_argument('--augment', action='store_true', help='augmented inference')

parser.add_argument('--update', action='store_true', help='update all models')

parser.add_argument('--project', default='runs/detect', help='save results to project/name')

parser.add_argument('--name', default='exp', help='save results to project/name')

parser.add_argument('--exist-ok', action='store_true', help='existing project/name ok, do not increment')

opt = parser.parse_args()

print(opt)

check_requirements(exclude=('pycocotools', 'thop'))

with torch.no_grad():

if opt.update: # update all models (to fix SourceChangeWarning)

for opt.weights in ['yolov5s.pt', 'yolov5m.pt', 'yolov5l.pt', 'yolov5x.pt']:

detect()

strip_optimizer(opt.weights)

else:

detect()

6 上传文件

目前得分0.933

展望:从nms和anchor上继续改进,加入多尺度多模型融合